About the Authors:

Charlotte Ledoux is a data and AI governance strategist who helps enterprise teams close the gap between governance frameworks and operational reality. She brings the practitioner voice to this piece: what trust looks like inside organizations, where AI breaks existing governance, and what boards are really asking.

Vivek Dubey is a data & AI advocate at Metadata Weekly and provides the structural argument: why fragmented governance stacks compound risk and what a unified control plane looks like in practice. Together, they make the case that governance foundations have not changed, but the risk surface has.

The trap: why organizations are fighting the wrong battle

Across most large organizations, data governance, AI governance, and information governance are being built in separate rooms, with separate frameworks, separate committees, and separate languages. The result is confusion: business leaders and boards struggle to understand which one owns risk, and none of them have a clear answer.

External pressure has spiked. CXO research now places AI governance among the top emerging board-level priorities, reshaping CIO agendas in real time. Boards are signing off on systems that can draft, decide, and act, including agents that trigger real-world outcomes autonomously. They want visibility, control, and accountability, and they want it now.

This is not abstract. Forrester projects AI governance software alone will reach $15.8 billion by 2030, capturing 7 percent of total AI software spending. A market is forming around a problem most organizations have not yet structured internally.

The instinctive response has been to stand up AI governance as a separate track. In practice, that deepens the split instead of resolving it.

The “data governance vs AI governance” framing is a false battle. The foundations are the same, but the risk surface has expanded, especially into agents and autonomous action. The sustainable response is not a new silo. It is to extend and converge existing governance into one active control plane that governs how information and intelligence are used end to end.

Governance is a trust engine, not a paperwork function

Let’s be honest. If you ask most teams what data governance looks like, they’ll describe the same thing: a 40-page policy deck nobody reads, a steering committee that meets quarterly to approve things that already happened, and an access request process that makes you want to scream into the void.

I’ve been there. My clients have been there. And when governance shows up as a layer of friction, of course people build workarounds. Of course the business says “we don’t need more rules.”

But here’s the thing.

Boards and regulators don’t care about your policy deck. They don’t ask “do you have a governance framework?” They ask: “Can we trust the information behind this decision? Can we show how it was made and what constraints applied?”

I’ll say it plainly: Data Governance is not a library of rules. It’s an operating system for trust.

Rules sit on a shelf. An operating system runs in the background, making sure things work, that the right people have the right access, that data is what it claims to be, that decisions can be traced back to their source.

Think about it like a house (my favorite analogy, you know me by now). The policy deck is the house rules taped to the fridge. The operating system is the plumbing that alerts you when something’s wrong, the locks on the doors, and everything else that quietly keeps things working. One is a piece of paper. The other keeps the house standing.

The trust foundation you already have

If you’ve been doing data governance for a while, you’ve probably already built more trust infrastructure than you realize:

- Data quality: making sure the numbers people see are actually correct
- Privacy: controlling who sees what, and proving it to regulators
- Lineage: tracing where data came from and how it was transformed
- Access control: ensuring the right people (and only the right people) can reach sensitive data

This is your trust foundation. It’s not glamorous, but it’s real. Every time a CFO trusts a financial report, every time a compliance team passes an audit, every time a product manager makes a decision based on a dashboard, that trust was built on your work.

But here’s the thing: The game has changed, and that foundation is now under pressure.

Until now, decisions were made by people looking at dashboards, reading reports, reviewing spreadsheets. Governance could afford to operate in the background because a human was always at the end of the chain, applying judgment before anything happened.

AI changes that equation. Because when an agent executes (places the order, sends the email, triggers the workflow), that’s not a trust question anymore. That’s a trust crisis, if governance hasn’t kept up.

What AI changes: new risk surfaces, very little time

AI is no longer a pilot project buried in the innovation team’s backlog. Mayfield’s 2026 CXO Network Survey of 266 Fortune 50–Global 2000 technology leaders found that 42% already have AI agents in production, with 72% either in production or actively piloting. Gartner predicts that by 2027, half of all business decisions will be augmented or automated by AI agents.

So the trust foundation we just talked about — quality, privacy, lineage, access — is still essential. But it was designed for a world where humans query data and make decisions. AI introduces entirely new risk surfaces that your existing governance framework was never built to handle.

Let me give you an example.

A mid-sized European retailer has solid data governance. They’ve invested in it seriously. Data quality scores are tracked. Privacy policies are enforced. Access controls are tight. Lineage is documented in their catalog. Their CDO is proud.

They deploy an AI agent to handle dynamic pricing for their e-commerce platform. The data feeding the model is clean — inventory levels, competitor pricing, demand signals — all governed, all documented.

But:

- The pricing model was fine-tuned by a vendor using a training set that included US market data. The retailer sells in the EU. Nobody reviewed the training data composition. The model has a built-in bias toward aggressive discounting patterns that don’t match their margin strategy.
- The agent uses RAG to pull in “pricing guidelines” from the internal wiki. But the retrieval window also grabs a draft document: a scenario analysis for a potential Black Friday promotion that was never approved. The agent treats the draft as policy.
- The agent runs in human-on-the-loop mode. A pricing analyst is supposed to review changes daily. But the agent makes 400 price adjustments overnight (a Sunday night), and the analyst doesn’t check the dashboard until Monday at 10am.

By Monday morning: 400 products have been repriced using a biased model, informed by unapproved promotional guidelines, with no human review. Customer complaints are already coming in. The margin impact is six figures. The compliance team wants to know how this happened. The board wants to know who’s accountable.

And the data? The data was fine. Every single data quality check passed. Every access policy was respected. The lineage was clean.

But the model supply chain wasn’t governed. The context assembly wasn’t governed. The agent’s action surface wasn’t governed.
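To make two of those gaps concrete, here is a minimal Python sketch of what governing context assembly and the action surface could look like. It is an illustration under stated assumptions, not the retailer's system: the `Document` status labels, the `approved_only` filter, the `ActionBudget` class, and the limit of 50 unreviewed actions are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    title: str
    status: str  # hypothetical lifecycle label, e.g. "approved" or "draft"


def approved_only(retrieved: list[Document]) -> list[Document]:
    """Context assembly control: drop anything that is not approved."""
    return [doc for doc in retrieved if doc.status == "approved"]


class ActionBudget:
    """Action surface control: cap autonomous actions between human reviews."""

    def __init__(self, max_unreviewed: int) -> None:
        self.max_unreviewed = max_unreviewed
        self.unreviewed = 0

    def allow(self) -> bool:
        """Count the action and return True unless the budget is exhausted."""
        if self.unreviewed >= self.max_unreviewed:
            return False
        self.unreviewed += 1
        return True

    def record_review(self) -> None:
        """A human sign-off resets the counter."""
        self.unreviewed = 0


# What the retrieval step returned in the story: one policy, one draft.
retrieved = [
    Document("Pricing guidelines", "approved"),
    Document("Black Friday scenario analysis", "draft"),
]
context = approved_only(retrieved)

# 400 overnight adjustments against an assumed limit of 50 per review cycle.
budget = ActionBudget(max_unreviewed=50)
applied = sum(1 for _ in range(400) if budget.allow())
held = 400 - applied

print([doc.title for doc in context], applied, held)
# prints: ['Pricing guidelines'] 50 350
```

With controls like these, the draft never reaches the agent as policy, and most of the overnight changes wait for the analyst instead of going live. The third gap, the vendor's training data, needs a review step that a few lines of code cannot stand in for.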

The shift from “AI that informs” to “AI that acts” is the single biggest governance challenge most organizations aren’t ready for.

AI governance is data governance

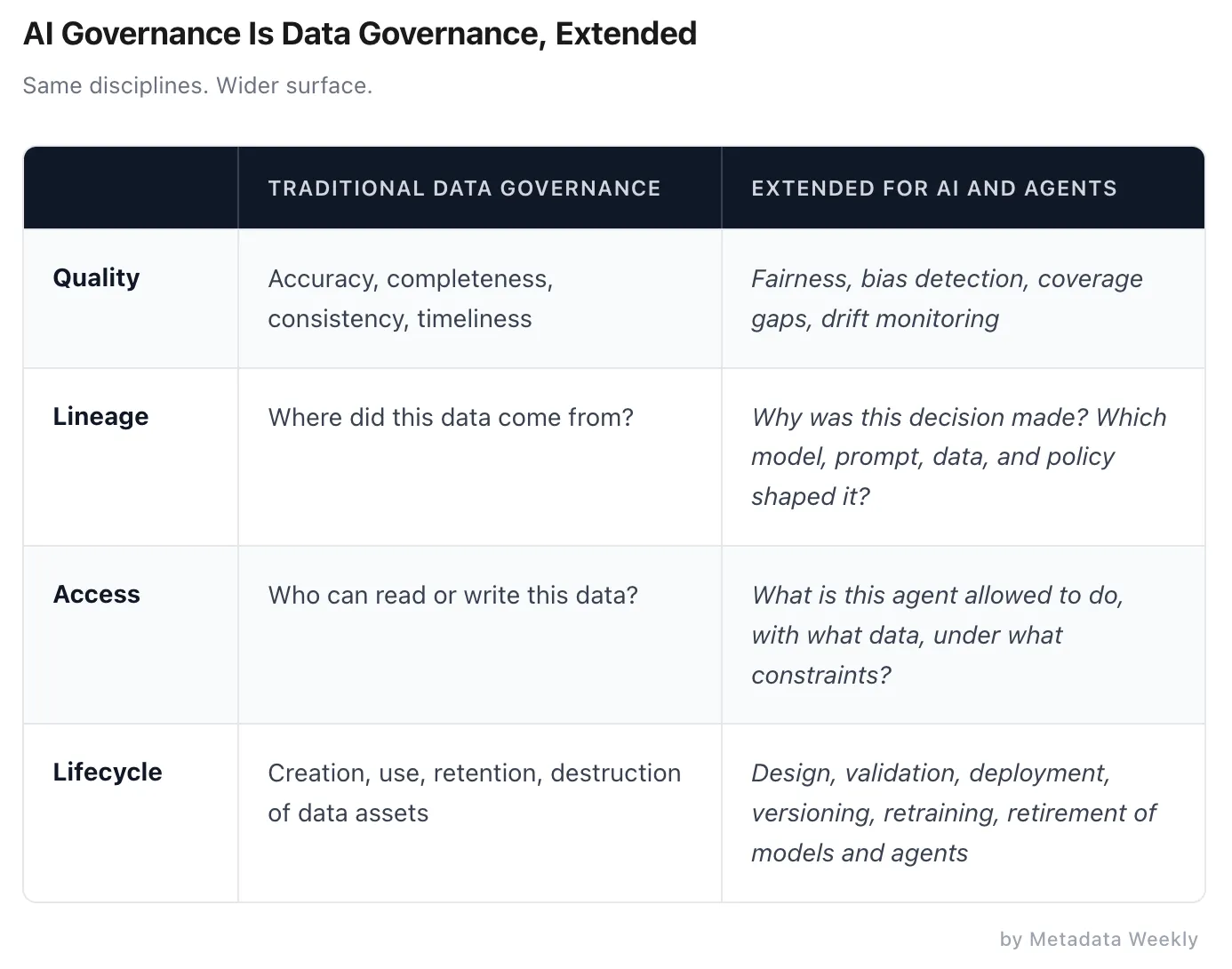

Once you map the AI risk surface, one thing becomes clear: the risks are new, but the governance disciplines needed to manage them are not. Is the data accurate enough for the decision? Do we have the right to use it this way? What happens downstream if it is wrong?

We have been asking these questions for years. AI governance extends them into models, prompts, and automated decisions.

The table makes a simple point: every row is the same governance discipline, pointed at a wider surface. Data governance teams are already equipped for the left column. The right column is where they need to be invited into the AI loop.

This is not just our argument. Gartner recommends mapping AI governance into the same foundational pillars used for data and analytics governance, precisely to avoid duplication and gaps. The convergence case is being made by the industry’s leading analyst firms, not just by practitioners.

As JPMorgan Chase’s Naren Chittar puts it:

“Governance must exist in every layer of the stack, from models and data to applications and interfaces, while enabling safe self-serve environments where employees can build and deploy their own agents.”

Most AI governance failures are not exotic model failures. They are old governance failures that AI has made visible. You cannot effectively govern AI if your data governance is weak. And any data governance program that ignores how data is consumed by models and agents will be obsolete quickly.

The anti-pattern: fragmented stacks, compounding risk

If converging data and AI governance is the goal, it is worth being honest about where most organizations are today. Most do not have a single team, budget, or operating model that spans data and AI governance. The retailer story earlier showed what fragmentation looks like in a single use case. Across an enterprise, it looks like this.

Data governance runs in one stack: catalog, glossary, lineage, access policies. AI governance forms in another: model registry, risk committee, red-teaming guidelines. Security sits on the side. Information governance lives with legal. Each team can show its own policies and metrics. From a board or regulator’s perspective, that is not enough.

Fragmentation produces four problems:

Inconsistent definitions. “High risk customer” means one thing in the warehouse, something else in the CRM, and a third thing in an AI risk register.

Policy gaps. Masking rules enforced in the warehouse may not apply in a retrieval pipeline or chatbot context.

Broken explainability. When an incident occurs, no one can produce a clean end-to-end story. This is worst with agents: an agent pulls data from one governed system, reasons through an ungoverned model, and triggers an action in a third. No single governance stack sees the full chain.

Slow scaling. Every new AI use case triggers fresh negotiations between data, AI, security, and compliance teams. Little is reusable, so scaling becomes a slow process.

The pattern here is not “governance is missing.” It is that governance exists in every lane but connects across none of them. That is where confidence breaks, with regulators, customers, and boards.

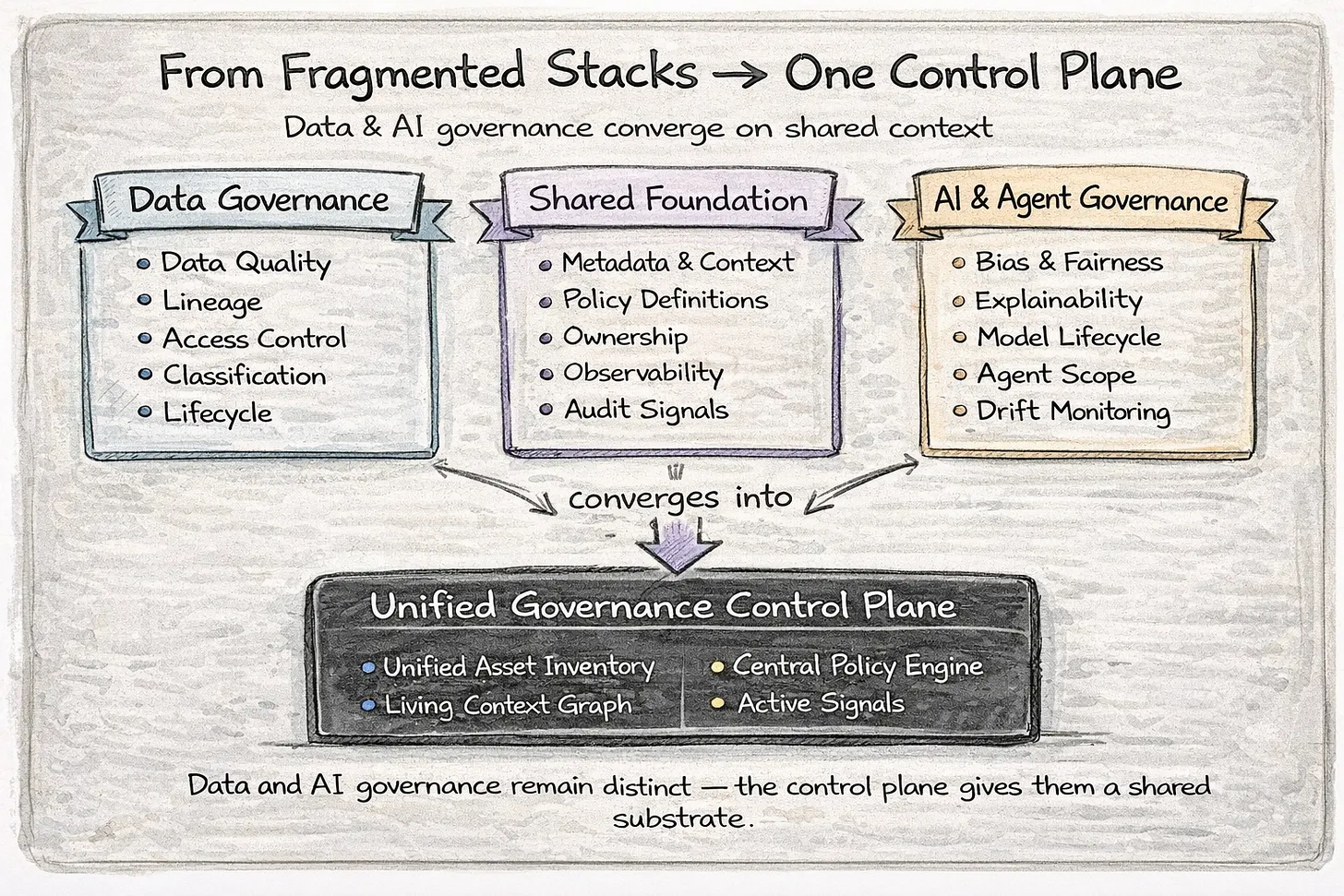

One governance control plane, built on context

The answer is not another governance silo bolted on top of a fragmented stack. It is convergence, built from shared context.

Organizations need a unified governance control plane: a horizontal layer that understands data, information, and AI assets, knows how they relate, encodes policy once, and enforces it everywhere work happens.

Unified Governance Control Plane | Source: Author

This works because the control plane maintains a living graph of context, the relationships between assets, policies, owners, and the decisions they feed. It is not a static catalog that indexes assets in isolation. It is an active map of how information and intelligence flow through the organization.

In practice, new models or agents are discovered and linked into lineage automatically. When a policy tightens, controls update across the warehouse, the AI application, and the agent layer, not just in a document. When a steward updates a metric definition, that change propagates to every experience that exposes it.
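As a rough illustration of "encode policy once, enforce it everywhere", the sketch below models the context graph as assets connected by lineage edges and attaches a policy to a source asset so that it reaches every downstream consumer. It is a toy under stated assumptions: `ContextGraph`, its methods, the asset names, and the `mask_email` policy label are all hypothetical, and a real control plane would enforce the policy in each system rather than just record it.

```python
from collections import defaultdict, deque


class ContextGraph:
    """Toy context graph: assets are nodes, lineage edges point downstream."""

    def __init__(self) -> None:
        self.downstream: dict[str, set[str]] = defaultdict(set)
        self.policies: dict[str, set[str]] = defaultdict(set)

    def link(self, source: str, consumer: str) -> None:
        """Record that `consumer` reads from `source`."""
        self.downstream[source].add(consumer)

    def reachable_from(self, asset: str) -> set[str]:
        """Breadth-first walk over everything downstream of `asset`."""
        seen: set[str] = set()
        queue = deque([asset])
        while queue:
            current = queue.popleft()
            for consumer in self.downstream.get(current, ()):
                if consumer not in seen:
                    seen.add(consumer)
                    queue.append(consumer)
        return seen

    def attach_policy(self, asset: str, policy: str) -> set[str]:
        """Attach a policy once; record it on the asset and all consumers."""
        affected = {asset} | self.reachable_from(asset)
        for node in affected:
            self.policies[node].add(policy)
        return affected


graph = ContextGraph()
graph.link("warehouse.customers", "pricing_model")
graph.link("warehouse.customers", "support_chatbot_index")
graph.link("pricing_model", "pricing_agent")

affected = graph.attach_policy("warehouse.customers", "mask_email")
print(sorted(affected))
# prints: ['pricing_agent', 'pricing_model', 'support_chatbot_index', 'warehouse.customers']
```

The point of the design is that the steward touches one node. The graph, not a person, works out that the model, the chatbot index, and the agent are also in scope.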

The convergence works because each discipline retains its specialization while sharing one foundation.

- Data Governance teams own AI-ready data practices: labeling, lineage, observability, and quality baselines.
- AI Governance teams plug into that same foundation for model-specific controls: validation, bias mitigation, lifecycle gates.

Neither team loses its identity. Both gain a shared substrate that makes their work connect instead of collide.

Each governed AI use case becomes a reusable context product, not a bespoke compliance exercise. Patterns from the first agent can be reused for the next hundred.

The distinction that matters is architectural. The specific implementation will vary by stack and maturity, but the principle is consistent: shared context as the foundation, not disconnected tools stitched together as an afterthought.

Organizations can keep layering governance tools on top of disconnected systems, adding catalogs here, policy engines there, model registries somewhere else, and stitching them together after the fact. Or they can build from a foundation of shared context where data governance, AI governance, and security operate on the same map. One approach scales. The other compounds governance debt at exactly the moment AI is accelerating it.

Questions leaders should be asking

Boards and executives need sharper questions, not more frameworks.

Ownership. Who is accountable for AI governance today, and how does that connect to data governance and security? One system or separate empires?

Decision risk. For our highest-stakes decisions, can we name the data and models that influence them and reconstruct those decisions when challenged? Who is responsible for the model results and impacts?

AI security. Where do models and agents touch sensitive data? How are we detecting and controlling shadow AI in practice? Are we protected against prompt injections?

Architecture. Are we converging toward a single governance control plane, or adding more parallel stacks? When we change a policy, how many systems have to be updated by hand?
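The decision-risk question is easier to answer if every automated decision leaves behind a record of what influenced it. The sketch below shows one possible shape for such a record in Python. The `DecisionRecord` class, its field names, and the sample values are assumptions made for illustration, not a standard schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionRecord:
    """Hypothetical audit record: what influenced one automated decision."""

    decision_id: str
    action: str
    model_version: str
    data_assets: tuple[str, ...]
    context_documents: tuple[str, ...]
    policy_versions: tuple[str, ...]
    accountable_owner: str
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        """Serialize for an append-only audit log."""
        return json.dumps(asdict(self), sort_keys=True)


record = DecisionRecord(
    decision_id="price-change-0001",
    action="set_price",
    model_version="pricing-model-v7",  # illustrative value
    data_assets=("warehouse.inventory", "warehouse.competitor_prices"),
    context_documents=("Pricing guidelines",),
    policy_versions=("pricing-policy-v3",),
    accountable_owner="pricing-analytics-lead",
)

# Reconstructing the decision later is a lookup, not an investigation.
restored = json.loads(record.to_json())
print(restored["model_version"], restored["data_assets"])
# prints: pricing-model-v7 ['warehouse.inventory', 'warehouse.competitor_prices']
```

If a record like this cannot be produced for a high-stakes decision, that gap is itself the answer to the question.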

Closing: convergence or confusion

This year’s Gartner D&A Summit 2026 agenda told everyone where the conversation has moved:

- A unified “Data and AI Governance” track sat alongside a dedicated AI track, with sessions asking how organizations should govern agents.
- The opening keynote framed the challenge as navigating AI on the data and analytics journey to value, not as a standalone governance problem.
- CXO surveys showed boards treating AI governance as a top emerging priority while enterprises moved into production faster than frameworks could follow.

That context makes the choice stark. Organizations that converge governance now – with one set of principles, one shared understanding of risk, and one active control plane spanning data, models, and decisions – will move faster with fewer surprises.

Organizations that let governance fragment will spend the next 18 months stitching together conflicting policies, reconciling audit trails across disconnected tools, and explaining to boards why the AI program that was supposed to create advantage became a compliance liability.

The question was never data governance versus AI governance. It was always whether you are building one thing or three.

References

Mayfield, The Agentic Enterprise in 2026

Forrester, AI Governance Market Forecast

Forrester, Enterprise AI Governance Survey

Gartner, D&A Governance Framework

Gartner, Data and Analytics Summit 2026 session agenda

NIST AI Risk Management Framework

NIST AI 100-1

EU AI Act

OECD AI Principles

Forrester blog: https://www.forrester.com/blogs/ai-governance-software-spend-will-see-30-cagr-from-2024-to-2030/

Forrester report: https://www.forrester.com/report/global-commercial-ai-software-governance-market-forecast-2024-to-2030/RES181688

Author Connect

Explore her newsletter - Data Governance Playbook

Thanks for reading Metadata Weekly!

The Insight Index: Your Weekly Data & AI Digest

Top resources, social posts, and recommended reads, carefully curated for you.

- Your Data Agents Need Context - Jason Cui and Jennifer Li
- From Words to Wisdom: How to Structure Knowledge for AI - Bruno Fievet
- Managing Enterprise Context in the AI Era; A Practitioner’s Perspective - Karthik Ravindran
- Unified Context-Intent Embeddings for Scalable Text-to-SQL - Keqiang Li, Bin Yang
- Analytics Engineering’s Unfinished Work - Tim Castillo
- OpenAI’s Frontier Proves Context Matters. But It Won’t Solve It. - Prukalpa

That’s all for this edition. Stay curious, keep exploring, and see you all in the next one!

About Context & Chaos

Context & Chaos isn’t just a newsletter. It’s a shared community space where practitioners, builders, and thinkers come together to share stories, lessons, and ideas about what truly matters in the world of data and AI: context engineering, governance, architecture, discovery, and the human side of doing meaningful work.

Our goal is simple: to create a space that cuts through the noise and celebrates the people behind the amazing things that are happening in the data & AI domain.

Whether you’re solving messy problems, experimenting with AI, or figuring out how to make data more human, Context & Chaos is your place to learn, reflect, and connect.

Got something on your mind? We’d love to hear from you. Hit Reply!

Originally published in the Context & Chaos newsletter on Substack.
