I don’t know how many of you have watched Inside Out as an adult. Both parts. I love them. Pixar has a way of naming things the rest of us feel but can’t quite articulate, and the thing Inside Out named, the thing that stayed with me long after the credits, is this: all emotions are valid. Joy spends the entire first film trying to keep Sadness out of the control room. She sidelines her, works around her, shuts her out. And it makes everything worse. The breakthrough doesn’t come from managing emotions better. It comes from finally understanding what each one is trying to tell you.

I’ve been thinking about that a lot lately. Because over the past few months, working at the intersection of AI and enterprise data, I’ve watched the same pattern play out in team after team. People who genuinely want to use AI, who believe in it, and who have everything they need to get started. And yet something stops them.

The finger often gets pointed at the technology. But the technical barriers have largely fallen. What remains are the human ones. And they have names.

The problem that was never really a technology problem

For as long as I’ve worked in data, the metadata supply problem has been identical across organizations. You know which assets matter. You know which tables your analysts rely on, which dashboards leadership checks every Monday. You also know that almost none of them is properly documented: no descriptions, no context, no record of what they mean or how they’re used.

So teams do what they’ve always done. Ask the stewards, schedule the sessions, build the templates, follow up. Months pass. The documentation that does come in is uneven: some assets lovingly described, most still blank. The people who were supposed to write it had other priorities. Documentation was the thing they were supposed to do on top of their real work.

For most organizations, meaningful metadata enrichment has taken 9 to 12 months, if it ever happens at all. Not because people didn’t care, but because they were being asked to do something at a scale and consistency that humans were never built for. The context existed in SQL, query logs, and the heads of analysts who’d been with the data for years. The bottleneck was always the pipeline to surface it.

What changed recently is that AI can now read everything already living in your data environment — your SQL logic, lineage, existing annotations, query patterns — and synthesize it into rich contextual metadata at scale. Not replacing what your team built, but amplifying it across every asset at once, including the ones that never made it to the top of anyone’s queue.

That capability shift is real. But the teams I’ve watched aren’t slowing down because the technology isn’t ready. They’re slowing down because of something else entirely.

Over the past year, I’ve worked closely with enterprise data and governance teams going through structured AI enrichment sprints: teams that came in skeptical, teams that came in enthusiastic, and teams that came in with firm positions about what they would and wouldn’t allow. What I observed across all of them is that the blockers were rarely technical. They were emotional. And the emotions were consistent enough to name.

The three emotions AI deployment actually surfaces

These aren’t feelings to fix or suppress. They’re signals that show up because you care about quality, you’ve built things that matter, and you take your work seriously.

Trust: “How can AI generate better descriptions than analysts who’ve spent years with this data?”

This one makes complete sense. Your analysts know things no model could: the history, the politics, the edge cases that never made it into any documentation. The tribal knowledge that lives in people, not systems.

But that framing misses what’s actually happening. AI isn’t competing with your analysts’ knowledge. It’s reading everything that knowledge has already produced: the SQL they wrote, the lineage they built, the queries they ran thousands of times, and synthesizing it across every asset simultaneously. It doesn’t replace the expert. It scales what the expert already created.

The fastest path past this emotion isn’t persuasion. It’s evidence. Show someone the output on data they know well. The trust question tends to answer itself.

One technical program manager at a freight analytics company described what happened when her team ran AI enrichment on their first set of assets:

“I expected boilerplate. Instead, it inferred business context I never explicitly provided, correctly describing how an asset fit into our customer journey just from column names and lineage. That’s when I realized this was knowledge synthesis, not just documentation.”

One team I worked with systematically rated every piece of AI-generated output across tens of thousands of asset descriptions. Seventy percent were immediately rollout-ready. A subset were flagged by the team as the standard they’d want human-written descriptions to meet going forward. They came in skeptical. The output changed the conversation.

A data governance lead at a European investment bank put it this way:

“We were stunned by the quality. How could it create such high-quality context from lineage, from SQL logic, from dbt logic? It really shows how much business information is hidden in metadata that we simply cannot see with human eyes. Agents pick it up, consume it all, and surface it in an organized way.”

Trust is earned through evidence, not persuasion.

Fear: “If I roll this out and something isn’t accurate, I’ll lose credibility with my team.”

This one I felt personally. When I was building an internal AI agent to surface account health signals for a field team, I was genuinely afraid to show it to anyone. The output was useful, and I knew it. But instead of rolling it out, I quietly pulled two colleagues aside and asked for private feedback first. I didn’t want to be the person who shipped something broken.

One of them said something I’ve thought about almost every day since:

“Nandini, you don’t know that you’re competing with us not having anything.”

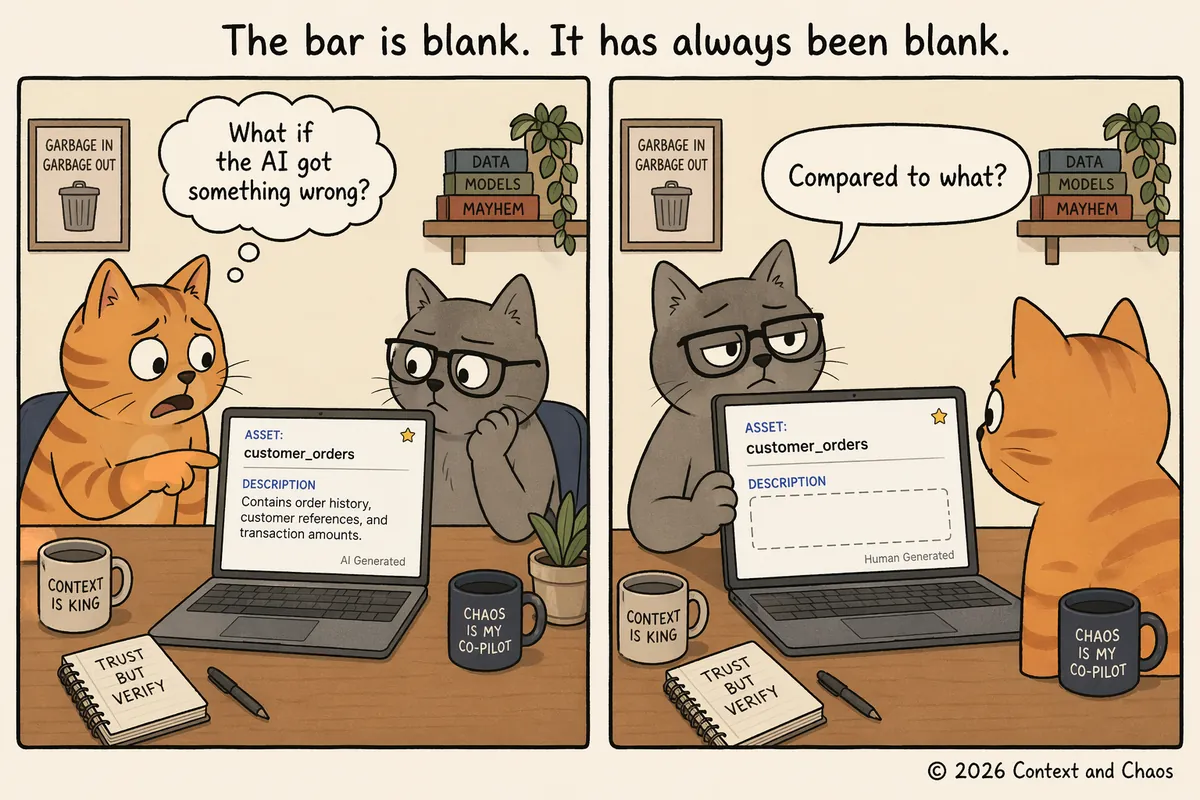

That was the whole reframe. We weren’t comparing AI output to perfect human documentation. We were comparing it to blank. And blank is a very low bar to clear. Most data environments aren’t choosing between AI-generated descriptions and carefully written human ones. They’re choosing between AI-generated descriptions and nothing: no context, no lineage explanation, no indication of what an asset is for or who uses it. The imperfect description almost always beats the absent one.

© 2026 Context and Chaos

The downside of rolling out AI-generated context, clearly labeled as such, is that some descriptions will be imperfect. The downside of not rolling out is that your users keep navigating blank assets with nothing to go on. The teams that moved fastest were the ones who stopped asking permission and started showing results. The fear of the conversation, it turned out, was consistently bigger than the conversation itself.

“Allow yourself to trust the process. If you do that a bit earlier, it could be surprising for you.”

Control: “I need to review and verify every single description before anything goes live.”

This is the most understandable emotion of the three, and the hardest to let go of. When you’ve spent years caring about the quality of your data, the instinct to review everything before it reaches users isn’t obstruction. It’s integrity.

The reframe that works: stop asking “how do I verify a million descriptions” and start asking “how do I build a system where quality is maintained at scale?” You move from reviewer to architect. The unit of work changes. Suddenly the thing that felt impossible becomes a design task instead of a manual one.
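To make "reviewer to architect" concrete, here is a minimal sketch of what such a system might look like. It is illustrative only: the `Description` type, the `confidence` score, and the `threshold` and `sample_rate` values are all assumptions, not part of any particular product. The idea is that low-confidence descriptions go to a human review queue, the rest are published, and a small random sample of the published ones is pulled for spot checks.

```python
import random
from dataclasses import dataclass


@dataclass
class Description:
    asset: str         # asset name (hypothetical)
    text: str          # AI-generated description
    confidence: float  # assumed quality score in [0, 1]


def route(descriptions, threshold=0.8, sample_rate=0.05, seed=0):
    """Split descriptions into publish and review lists, plus a spot-check sample."""
    # Anything at or above the threshold is published; the rest waits for a human.
    publish = [d for d in descriptions if d.confidence >= threshold]
    review = [d for d in descriptions if d.confidence < threshold]
    # Pull a small random slice of the published set for ongoing spot checks.
    k = max(1, round(len(publish) * sample_rate)) if publish else 0
    spot_check = random.Random(seed).sample(publish, k)
    return publish, review, spot_check


if __name__ == "__main__":
    # Made-up batch: 100 assets with scores 0.00, 0.01, ..., 0.99.
    batch = [Description(f"table_{i}", "placeholder text", i / 100) for i in range(100)]
    publish, review, spot_check = route(batch, threshold=0.75, sample_rate=0.2)
    print(len(publish), len(review), len(spot_check))
```

The design choice is the point: the human effort goes into setting the threshold and the sample rate and reading the spot checks, not into reading every description.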

One team I watched started a sprint with a firm position: they wouldn’t release AI-generated content to their users and weren’t going to run it in production. By the end, they had enriched over 25,000 assets and were among the loudest advocates for AI-generated context in the group. The product didn’t change. Their frame did, shaped by watching peers they respected go through the same journey and come out with more confidence than they’d walked in with.

A senior data governance manager described the shift afterward:

“With single-digit hours of effort from our team, we were able to accomplish work that would have taken months for a larger team to finish. And realistically, we likely would not have ever started it.”

But the bigger shift wasn’t just what they built. When teams brought this context back to their wider organizations, the conversations changed entirely. They weren’t talking about documentation anymore. They were being asked: how do we make this the nervous system of our organization? What does our context layer strategy look like at scale? How do we feed this into the agents our engineering team is building?

The governance teams and stewards who had spent years being asked to document things found themselves being asked to architect something. That’s the agency that was always waiting on the other side of control: not just doing less manual work, but stepping into a more strategic role at exactly the moment it matters most.

Give up control. Retain agency. The goal isn’t to review everything. It’s to build a system you trust, and then use the time it frees to do the work only you can do.

What scale actually looks like when teams push through

Across enterprise teams working through these emotions in structured enrichment sprints, the output is not marginal. It’s discontinuous. The descriptions enriched, the SQL intelligence surfaced, the README updates generated: the volume is not what the same teams would have produced in a few extra months. It’s what they would never have produced at all. Not because no one cared, but because the manual model had a ceiling.

AI didn’t replace the humans. It removed the ceiling on what the humans could accomplish.

One governance lead put it this way:

“I was intimidated about this whole initiative. I wasn’t sure if it was feasible or if we’d have something presentable by end of year. This gave us a huge leap forward. The AI just really enables us to do things we wouldn’t be able to do otherwise.”

Another described their team’s attitude changing “instantly” the moment they saw what SQL intelligence surfaced about how their data was actually being used. They came in treating AI enrichment as just another tool. They left treating it as infrastructure.

The fourth emotion nobody names: identity

There’s one more thing underneath all three of these that rarely gets said out loud. Data stewards, governance leads, the people who have spent years building context into systems that were never designed to reward that work: when AI can do the production work at scale, what is their role?

When AI takes on the documentation, the descriptions, the SQL intelligence synthesis, two things happen simultaneously. The pressure to personally document everything lifts. And what gets built becomes the foundation not just for human users but for every AI agent the organization runs. One shared context layer serves both. The governance work stops being a background maintenance task and becomes the infrastructure the company’s AI strategy runs on.

In practice, this means the person who used to spend their time chasing stewards for descriptions is now the person deciding which assets the AI prioritizes, how quality thresholds get set, and which context the organization’s agents are allowed to trust. That’s not a smaller job. It’s a more consequential one.

One governance lead described the shift plainly:

“I spent years building context that sat in a catalog nobody opened. Now I’m being asked to make sure every AI agent we run can access and trust it. That’s not a footnote to my job anymore. That is my job.”

The people who have been building context layers for years, often without recognition or the right tools, are exactly the people positioned to lead what comes next. Not in spite of AI. Because of it.

Joy spent the whole first film trying to keep the difficult emotions out of the control room. The breakthrough came when she finally understood what they were trying to tell her. Trust was telling you the expertise already exists and needs scaling. Fear was telling you to compare yourself to blank, not to perfect. Control was telling you to become the architect, not the auditor. Identity was telling you that the work you have been doing for years is about to matter more than it ever has.

The context layer your organization builds now isn’t just documentation. It’s the infrastructure every AI agent you run will depend on. The people who build it well are not being replaced by the technology. They’re becoming indispensable to it.

This is an adapted version of a piece I originally wrote.

Note: Views expressed are those of the contributor. All submissions are vetted for quality and relevance. Context and Chaos is information-first: no promotions, paid or otherwise.

About Context & Chaos

Context & Chaos isn’t just a newsletter. It’s a shared community space where practitioners, builders, and thinkers come together to share stories, lessons, and ideas about what truly matters in the world of data and AI: context engineering, governance, architecture, discovery, and the human side of doing meaningful work.

Our goal is simple: to create a space that cuts through the noise and celebrates the people behind the amazing things happening in the data and AI domain.

Whether you’re solving messy problems, experimenting with AI, or figuring out how to make data more human, Context & Chaos is your place to learn, reflect, and connect.

Got something on your mind? We’d love to hear from you.
