Every few months, the tech headlines follow a predictable script: a new AI model is out and, surprise surprise, it has topped the leaderboards. Think of Gemini 3.1 Pro hitting 94% on PhD-level science reasoning tests, or GPT-5.4 Pro solving one of FrontierMath’s Open Problems, which human mathematicians had not yet solved. In fact, AI models are doing so well that we have to keep developing harder benchmarks.

At the same time, the cost of frontier-level inference dropped nearly 300x in just two years. Just last month, Gartner predicted that by 2030, LLMs will become up to 100x more cost efficient than 2022 models and that running inference on a model with 1 trillion parameters will cost 90% less. In short, machine intelligence is becoming more powerful, more abundant, and cheaper than it has ever been.

And yet, in practice, AI feels particularly stupid. MIT found that 95% of generative AI pilot programs fail, and IDC reported that 88% of AI POCs never make it to production.

Models are getting smarter, options seem limitless, prices are falling, and yet most AI projects never make it out of the pilot stage. We’ve rebuilt our junkyard engine into a racecar turbine, but the car is still stuck in the driveway.

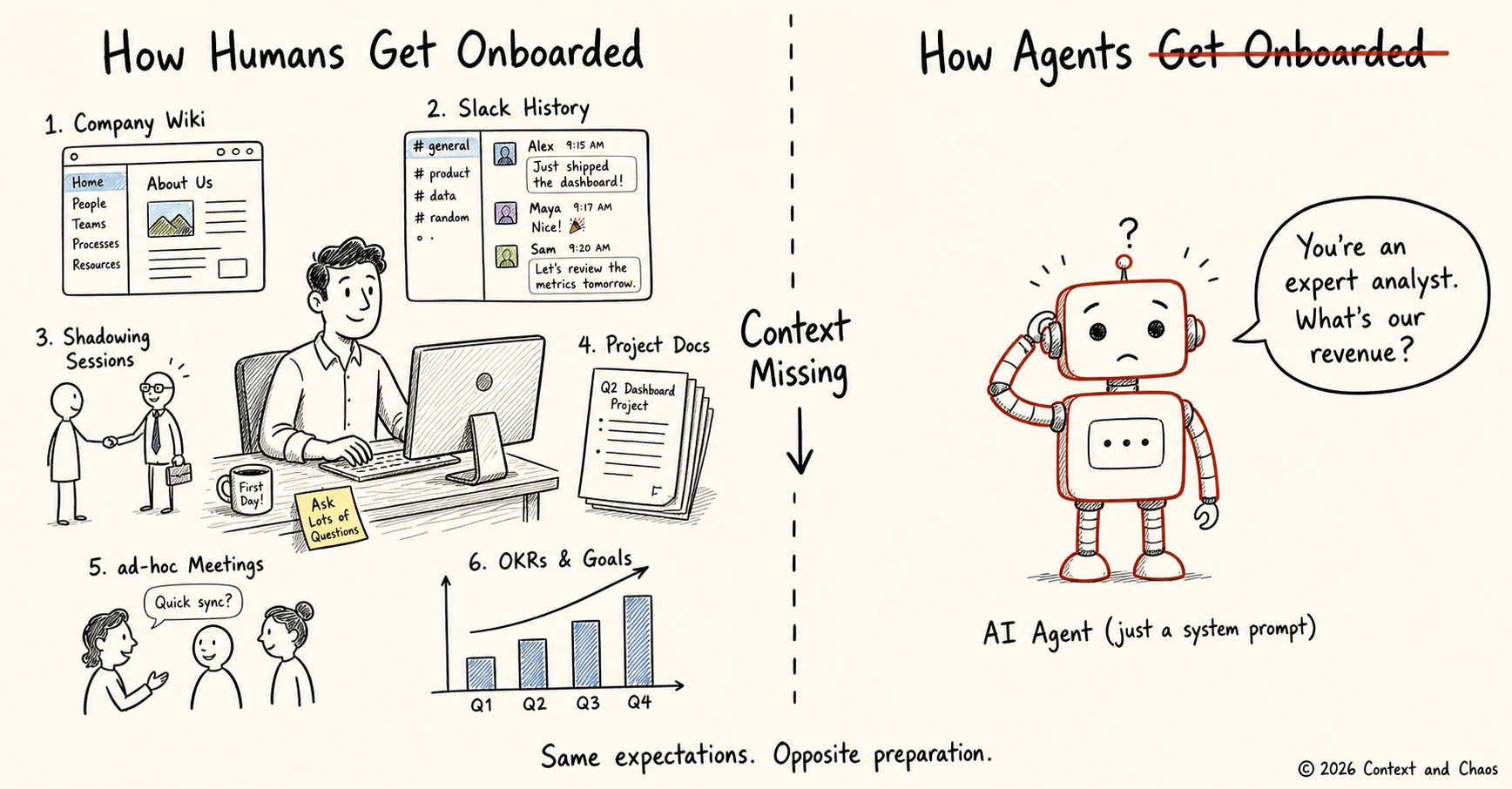

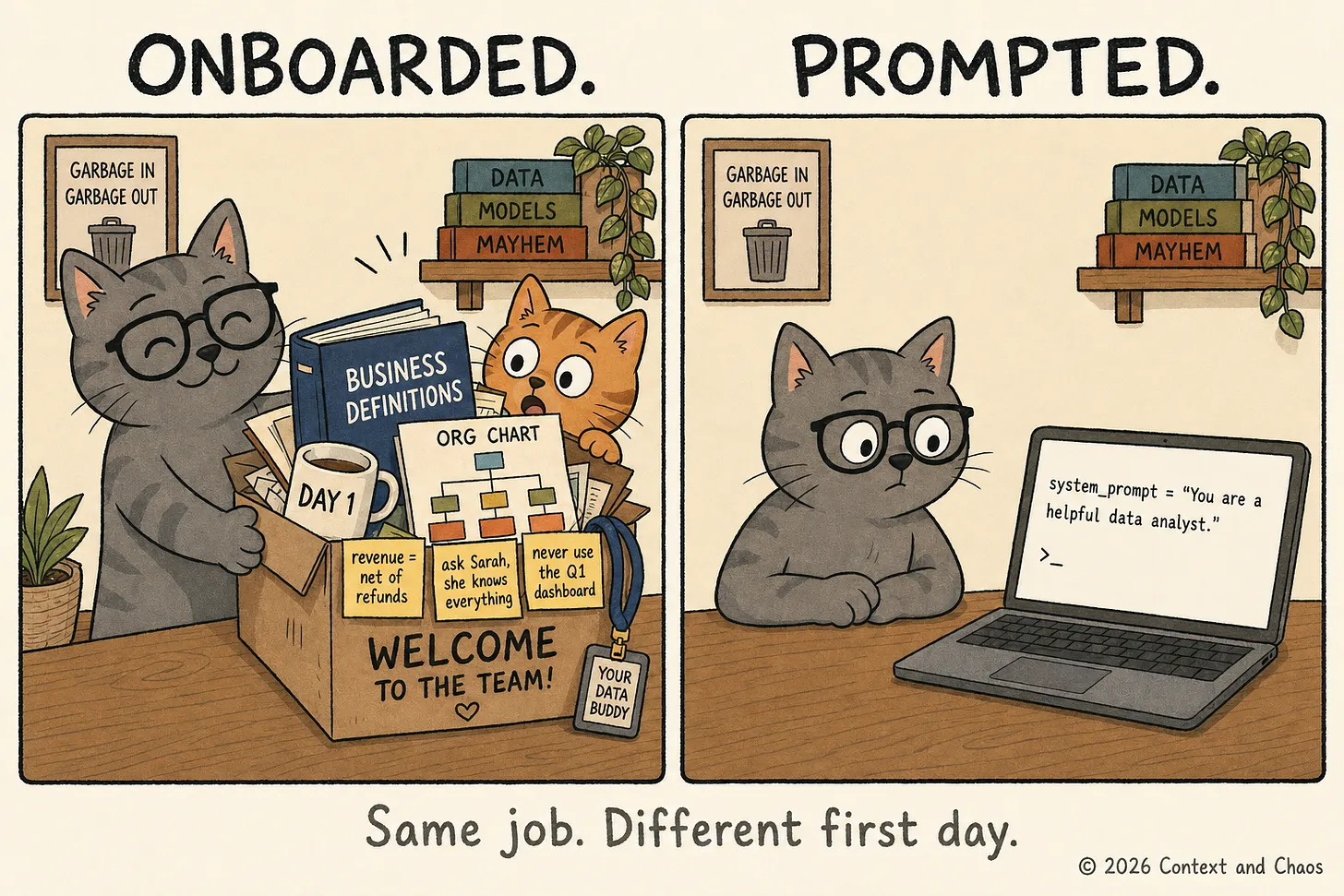

We don’t understand what intelligence actually means. We treat model intelligence like many people treat SAT scores — a narrow benchmark that people interpret as a broad measure of intelligence and real-world success. But just like the person with the highest SAT score won’t necessarily be the best employee (especially without onboarding, mentorship, and business context), even the “smartest” AI can’t deliver business value without the systems, knowledge, and feedback loops that provide context. In an era where intelligence is becoming commodified, the ultimate competitive advantage won’t come from a higher benchmark score. It will come from mastering the most valuable asset in the AI era: context.

The next frontier of AI: contextual intelligence

The entire model race has been built around optimizing raw reasoning capability: pattern recognition, logical inference, code generation, language comprehension. It’s what we think of as intelligence in AI today. It’s certainly important, and it’s increasingly a commodity, but intelligence in a vacuum is only so helpful.

What we actually care about is whether the AI did the right thing in a specific situation. This is contextual intelligence — the ability to navigate, internalize, and apply an organization’s specific situational knowledge to an AI’s reasoning process. This context includes organizational definitions, domain rules, historical precedents, user intent, environmental constraints, institutional memory, and the tacit knowledge that lives in every situation, organization, and moment.

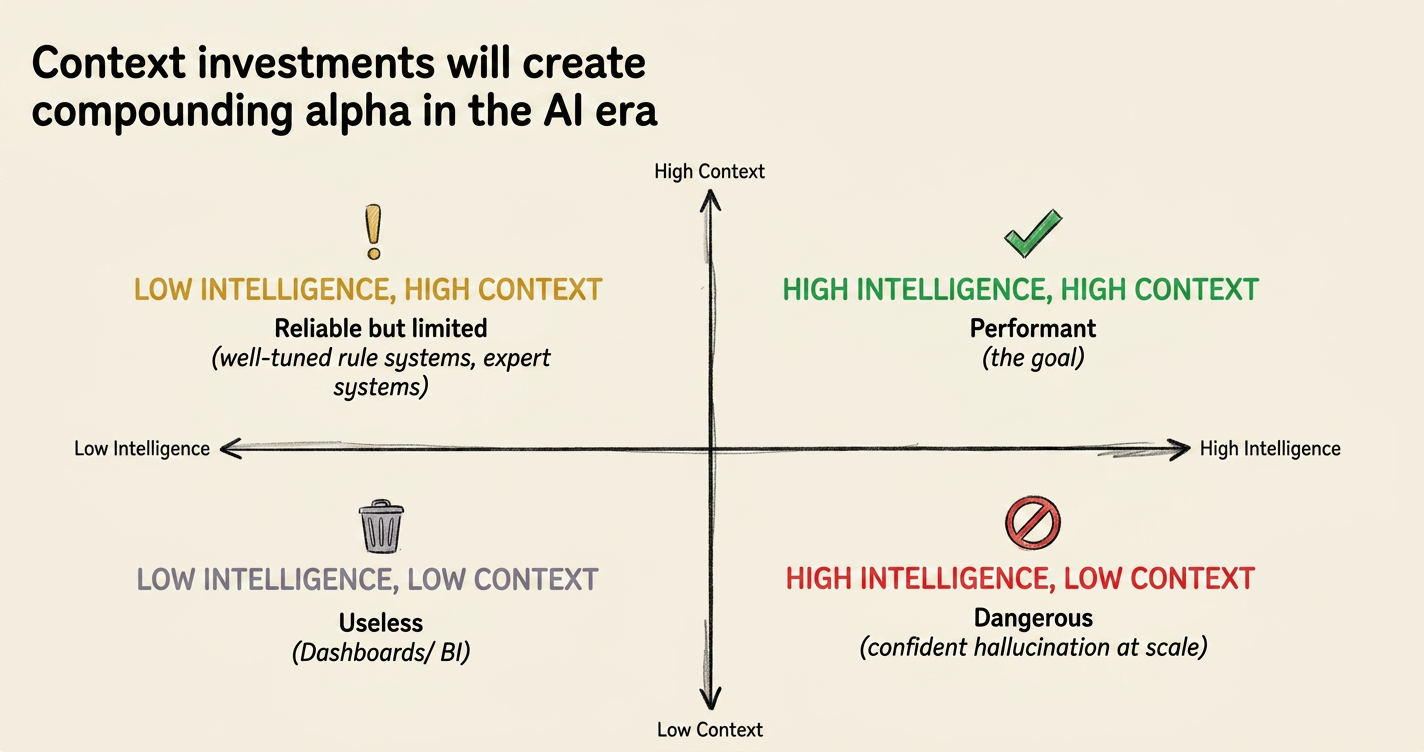

Performance is a function of both of these factors: intelligence applied to some specific context.

This function is multiplicative, not additive. That means that if your context is zero, your performance is zero. Even the world’s most capable model can’t make a trustworthy decision if it doesn’t know your company’s risk scoring convention or which data source is the “source of truth” this week.

Performance is a function of Intelligence x Context | Source: Context & Chaos

This also means that pairing high intelligence with the wrong context leads to negative performance. A smarter model operating on incorrect definitions, wrong governance rules, or outdated institutional knowledge produces more elaborate, more persuasive, more dangerous errors. It hallucinates more convincingly, not less.
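As a toy illustration of the multiplicative framing above, the sketch below treats performance as the product of two numbers. The scales are assumptions made purely for illustration, not something the framework specifies: `intelligence` is a score from 0 to 1, and `context` runs from -1 (confidently wrong context) through 0 (no context) to 1 (accurate context). The function name and the scenario values are hypothetical.

```python
def performance(intelligence: float, context: float) -> float:
    """Toy model: performance = intelligence x context.

    Assumed scales (illustrative only):
      intelligence in [0, 1]
      context in [-1, 1], where negative means wrong or outdated context
    """
    if not 0.0 <= intelligence <= 1.0:
        raise ValueError("intelligence must be in [0, 1]")
    if not -1.0 <= context <= 1.0:
        raise ValueError("context must be in [-1, 1]")
    return intelligence * context


# Hypothetical scenarios; the numbers are made up to show the shape of the idea.
scenarios = {
    "frontier model, no context": performance(0.95, 0.0),
    "frontier model, wrong context": performance(0.95, -0.5),
    "mid-tier model, good context": performance(0.70, 0.9),
}

for name, score in scenarios.items():
    print(f"{name}: {score:+.2f}")
```

Under these assumptions, zero context zeroes out the result no matter how capable the model is, and with wrong context a more capable model scores lower than a less capable one.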

The AI industry is currently behaving as though intelligence is the hard frontier and context is an easy implementation detail for the customer to figure out. The truth is the exact opposite. Building the reasoning engine is a problem being solved at breakneck speed by well-funded labs. Building the contextual basis for that reasoning is the real unsolved frontier. And without a corresponding improvement in context, enterprise AI will remain stalled.

Contextual intelligence isn’t anything new

There are two ways to think of something being important. The first is to say that it’s a necessary input, like a car needing gas. The second is more fundamental, like saying that walking needs gravity. There’s just no way around it.

When I say that intelligence needs context, I don’t just mean that context matters. I mean that context is what makes intelligence possible for enterprise AI. This isn’t a hot new take but an old insight we’ve forgotten from decades of cognitive science research.

In 1987, Lucy Suchman studied how people used Xerox copiers and discovered something the engineering community hated: people improvise rather than execute plans. The “intelligent” help system on the copier failed because it only saw button presses; it couldn’t see the situation the user was actually navigating. Much of agentic AI rests on the same assumption as that help system: give an LLM a prompt or a “workflow” and it will execute it perfectly. But real-world performance requires continuous adaptation to context that can’t be scripted in advance.

In 1979, James Gibson introduced the idea of “affordances”: a chair “affords” sitting to a human but not to a fish. The usefulness isn’t in the chair itself, but in the relationship between the object and whoever encounters it. Similarly, “intelligence” isn’t an inherent property of AI models. They can be brilliant at solving one problem but hallucinate wildly on another. All that changes is the context, not the underlying model.

In 1980, brothers Stuart and Hubert Dreyfus proposed their five-stage model of skill acquisition: novice, advanced beginner, competent, proficient, expert. Novices follow context-free rules like “shift gears at 3,000 RPM.” Experts perceive a situation and respond intuitively. The implicit promise of AGI is that with enough compute and data, you get general intelligence that works everywhere. The Dreyfus model says the opposite: the more intelligent a system becomes, the more context-sensitive its intelligence should be, not less. More context isn’t automatically better either, as research on context rot in LLMs illustrates. Our race toward Artificial General Intelligence is building a world of perpetual novices rather than useful models for enterprise.

This understanding of expertise as a “situated” skill is echoed by Edwin Hutchins’ theory of distributed cognition from the 1990s. After studying navigation teams on naval vessels, he realized that no single person “knows” how to pilot a massive ship — the intelligence is distributed across people, tools, maps, and procedures. This flips our current AI race on its head: from “how smart is the model?” to “how intelligent is the system?” A brilliant model in a poorly configured system produces worse outcomes than a mediocre model in a well-configured one.

Even AI heavyweights seem to be coming to this conclusion. In 2026, Yann LeCun left Meta after twelve years to launch AMI Labs, raising $1.03 billion — the largest European seed round ever — to build “world models” that understand physics rather than predicting text. His billion-dollar bet: intelligence without contextual representation is a dead end.

Today’s intelligence gap in practice

While this theoretical backing may feel abstract, the reality is anything but. When you look at the 95% of AI pilots that fail to reach production, the post-mortem is rarely about a lack of reasoning power. It’s almost always a context collapse.

In practice, this collapse usually looks like one of three failure scenarios playing out in organizations every day:

The Connectivity Failure. An AI agent is asked to analyze a “customer journey,” but the organization’s data is a fragmented map of different systems. The customer is “John Doe” in the CRM, “J. Doe” in the billing system, and “User_882” in the support logs. The model reasons perfectly on the data it can see but is functionally blindfolded.

The Semantic Failure. A model is asked to calculate “Quarterly Revenue.” It finds the data, but it doesn’t know that for the Sales team, revenue means bookings, while for Finance, it means recognized cash. The model picks one, applies it confidently, and produces a report that is technically sophisticated but functionally useless — lacking the “local dictionary” required to translate raw intelligence into organizational truth.

The Institutional Knowledge Failure. A compliance agent flags a transaction as a violation because it strictly follows the written manual. What it doesn’t know is that the compliance lead issued a memo six months ago noting a permanent exception for this specific entity type. That knowledge lives in a buried PDF and a veteran analyst’s memory. The AI produces a review that is technically correct but still wrong for the business.

In all three cases, the failure has nothing to do with the model’s parameters. Upgrading from GPT-4 to GPT-5 or switching from Claude to Gemini fixes exactly zero of these problems. These are context failures, not reasoning failures.
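The Semantic Failure can be made concrete with a small sketch. Everything here is hypothetical: the `Deal` record, the `REVENUE_DEFINITIONS` registry, and the sample figures are invented to show how one metric name can resolve to two different numbers, and how a system might refuse to guess when it doesn’t know who is asking.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Deal:
    booked: float      # contract value signed in the quarter
    recognized: float  # amount Finance counts as recognized in the quarter


# Hypothetical "local dictionary": each team's definition of the same metric.
REVENUE_DEFINITIONS = {
    "sales": lambda deal: deal.booked,
    "finance": lambda deal: deal.recognized,
}


def quarterly_revenue(deals: list[Deal], team: str) -> float:
    """Sum revenue using the definition that belongs to the asking team."""
    if team not in REVENUE_DEFINITIONS:
        # Refuse to guess: an unknown audience is a missing-context error.
        raise ValueError(f"no revenue definition registered for team {team!r}")
    definition = REVENUE_DEFINITIONS[team]
    return sum(definition(deal) for deal in deals)


# Made-up sample data.
deals = [
    Deal(booked=120_000.0, recognized=30_000.0),
    Deal(booked=80_000.0, recognized=80_000.0),
]

print("Sales view:  ", quarterly_revenue(deals, "sales"))
print("Finance view:", quarterly_revenue(deals, "finance"))
```

The same rows produce two defensible answers. The arithmetic is trivial; knowing which definition applies is the part no model upgrade supplies.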

The worst part is that these failures compound. If an AI agent has an 85% success rate at each step of a ten-step workflow (and let’s be honest, that’s a high bar), the odds of the entire chain succeeding are only about 20%, assuming the steps fail independently. Failures cascade, and hallucinations emerge from a model trying to reason through a contextual void.
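The compounding arithmetic is easy to check. The sketch below assumes each step succeeds or fails independently of the others, which is a simplification; the function name is illustrative.

```python
def chain_success_probability(step_success_rate: float, steps: int) -> float:
    """Probability that every step in a chain succeeds.

    Assumes steps are independent, so the per-step rates simply multiply.
    """
    if not 0.0 <= step_success_rate <= 1.0:
        raise ValueError("step_success_rate must be in [0, 1]")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return step_success_rate ** steps


for rate in (0.85, 0.95, 0.99):
    p = chain_success_probability(rate, 10)
    print(f"{rate:.0%} per step over 10 steps -> {p:.1%} end to end")
```

At 85% per step, ten steps succeed end to end about 19.7% of the time. Even at 95% per step, the chain only succeeds roughly 60% of the time.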

OpenAI discovered this for themselves. When they built an internal data agent, they learned they couldn’t just point the model at their databases and hit go. They needed to build six layers of context: table usage and schema, human annotations, code-derived definitions, institutional knowledge from Slack and Docs, memory from corrections, and runtime context from live queries.
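One way to picture those six layers is as a single structure that an agent assembles before it reasons. The sketch below is not OpenAI’s implementation; the class, field names, and sample notes are hypothetical, chosen only to mirror the six layers listed above and to make gaps visible.

```python
from dataclasses import dataclass, field, fields


@dataclass
class ContextLayers:
    """One field per layer named in the text; contents are free-form notes."""

    schema_and_usage: list[str] = field(default_factory=list)
    human_annotations: list[str] = field(default_factory=list)
    code_derived_definitions: list[str] = field(default_factory=list)
    institutional_knowledge: list[str] = field(default_factory=list)
    correction_memory: list[str] = field(default_factory=list)
    runtime_context: list[str] = field(default_factory=list)

    def missing(self) -> list[str]:
        """Names of layers that have no content yet."""
        return [layer.name for layer in fields(self) if not getattr(self, layer.name)]

    def render(self) -> str:
        """Render the non-empty layers as a plain-text preamble for a prompt."""
        sections = []
        for layer in fields(self):
            notes = getattr(self, layer.name)
            if notes:
                title = layer.name.replace("_", " ").title()
                bullets = "\n".join(f"- {note}" for note in notes)
                sections.append(f"## {title}\n{bullets}")
        return "\n\n".join(sections)


# Made-up example content.
ctx = ContextLayers(
    schema_and_usage=["orders.amount is stored in cents"],
    institutional_knowledge=["Finance reports revenue as recognized, not booked"],
)

print("Missing layers:", ctx.missing())
print(ctx.render())
```

Making the empty layers explicit is the point of the design: an agent that can see what it doesn’t know can ask or abstain instead of reasoning through the void.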

What this means for the enterprise

We are witnessing a massive shift in the AI stack. As models compete on benchmarks and price, the cost of reasoning continues to collapse. No company can build a moat on AI alone: you can’t build a durable competitive advantage on a resource that gets exponentially cheaper every year and is available to all of your competitors off the shelf.

On the other side, context is non-commoditizable. Your organization’s understanding of its own data, its unique semantic definitions, and its historical “why” can’t be trained into a generic model. While intelligence converges, context compounds.

If this holds, three things change for every leader trying to navigate this landscape:

The frontier shifts. The most important question in AI is no longer “How do we build a more powerful model?” It’s “How do we represent and maintain organizational context?” After decades spent building the science of data engineering, we now need to build the science of context engineering — creating systems that can capture the tacit knowledge in an experienced analyst’s head and translate it into a structured world model that a machine can actually navigate.

The strategic asset shifts. In an era of abundant intelligence, the only durable moat is your organization’s “world model.” The organizations that invest in building and governing their context infrastructure are building a massive compounding advantage. The tenth AI agent you deploy will inherit the context built for the first nine. In this world, the marginal cost of deploying AI goes down as collective accuracy goes up.

The measure of progress shifts. AI companies need to stop obsessing over MMLU scores and leaderboard rankings. Those numbers measure a model’s potential in a vacuum but say nothing about its performance in reality. Instead, we need situated evaluation: a measure of how the entire system performs a specific task within your actual environment, using your actual, messy data.

Investing in Context will Create Compounding Alpha in the AI Era | Source: Author

Conclusion: the great inversion

For the last few years, the “hard problem” was building a machine that could reason. The model was the center of discourse, while giving it whatever infrastructure or information it needed to succeed was an afterthought.

Now, as AI models improve at an astonishing rate, the hard and easy problems have switched places. Building the reasoning engine, once the pinnacle of human engineering, is being handled at scale by a handful of labs. Building the context to make reasoning useful? That’s the new frontier. Unlike launching a new model, this can’t be solved once and shipped globally. It must be solved for every organization, every domain, and every evolving situation.

The companies that will win the next decade won’t be the ones with the largest compute clusters or the most expensive model subscriptions. They will be the ones that have mastered situated intelligence: the ones that have figured out how to capture their tacit knowledge, resolve their semantic conflicts, and map their unique data landscapes.

The Cats of Context & Chaos

Upgrading the Model vs Fixing the Context | Source: Context & Chaos

Thanks for reading Context and Chaos!

About Context & Chaos

Context & Chaos isn’t just a newsletter. It’s a shared community space where practitioners, builders, and thinkers come together to share stories, lessons, and ideas about what truly matters in the world of data and AI: context engineering, governance, architecture, discovery, and the human side of doing meaningful work.

Our goal is simple: to create a space that cuts through the noise and celebrates the people behind the amazing things happening in the data & AI domain.

Whether you’re solving messy problems, experimenting with AI, or figuring out how to make data more human, Context & Chaos is your place to learn, reflect, and connect.

Got something on your mind? We’d love to hear from you. Hit Reply!