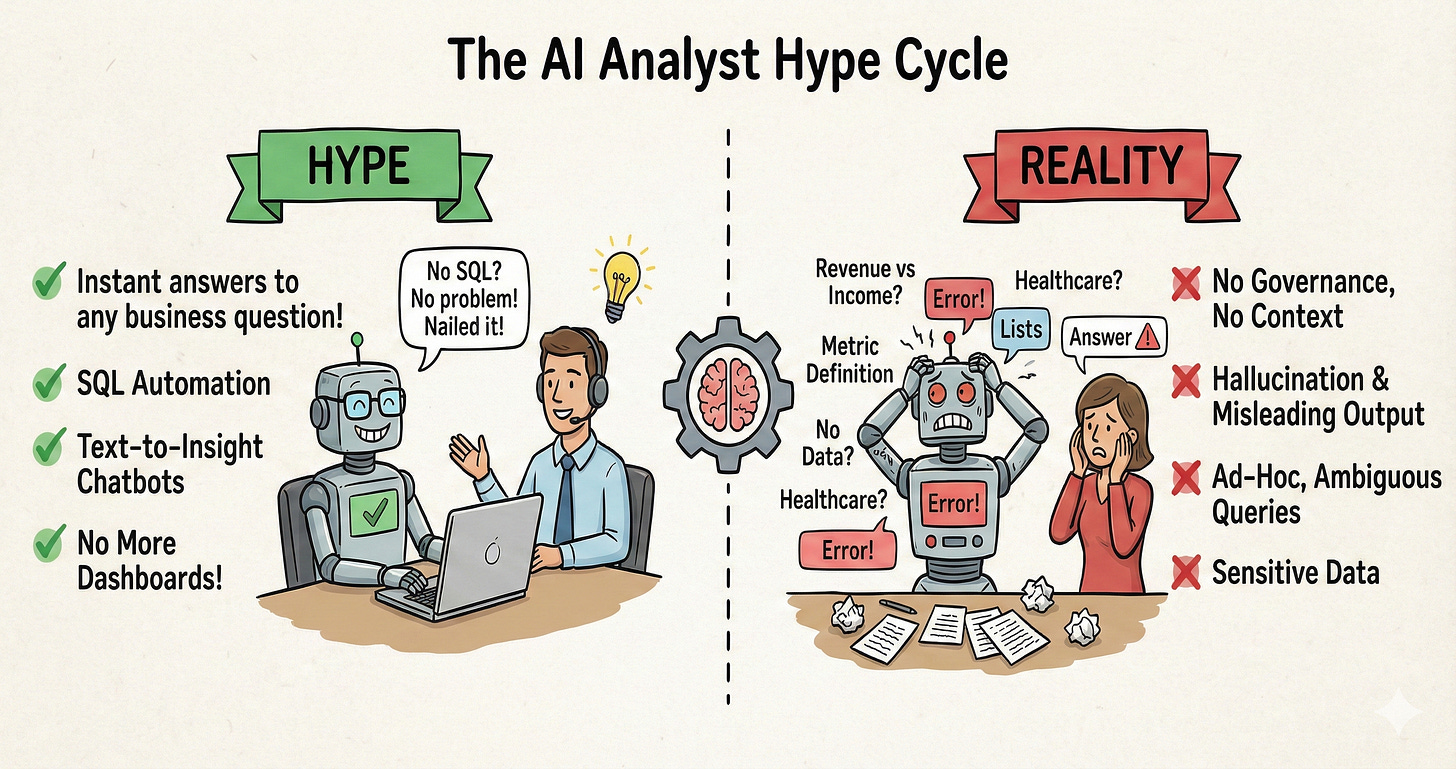

When GPT-3 hit the scene, the software world got flipped on its head. Suddenly, things that seemed impossible — using AI to generate SQL, turning business questions into instant answers, finally making data accessible to everyone — felt within reach.

The market opportunity was massive. Nearly every business intelligence vendor saw both the threat and the opportunity, pivoting hard and shipping AI features at unprecedented rates. Startups rushed in. Venture capital followed. The demos were magical.

Two and a half years later, those demos are still magical and polished — but there are far more of them than production deployments. The gap between what AI analysts promise and what they actually deliver in enterprise environments isn’t closing as fast as anyone expected.

So where are we stuck? What does success actually look like today? And where do we go from here?

AI Analysts: Hype Vs Reality | Source: Author

Why dashboards exist (and why that matters)

Before we talk about replacing dashboards, it’s worth understanding why they exist in the first place.

Dashboards aren’t just a user interface choice. They’re a governance mechanism. Data teams use them to “freeze” metric definitions in place, preventing business users from accidentally generating misleading numbers. When a dashboard shows “Monthly Recurring Revenue,” everyone sees the same number, calculated the same way, every time.

This solved a real problem. Give business users direct database access, and they’ll join tables incorrectly, filter data inconsistently, and create fifteen different definitions of the same metric. Dashboards were the guardrails.
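To make the join problem concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The `orders` and `shipments` tables and their values are hypothetical, invented purely for illustration. It shows how a reasonable-looking join to a one-to-many table inflates a revenue total without raising any error.

```python
import sqlite3

# Hypothetical schema: one order can have several shipment rows.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE orders (order_id INTEGER PRIMARY KEY, amount REAL);
    CREATE TABLE shipments (shipment_id INTEGER PRIMARY KEY, order_id INTEGER);
    INSERT INTO orders VALUES (1, 100.0), (2, 50.0);
    -- Order 1 shipped in two parcels, so it has two shipment rows.
    INSERT INTO shipments VALUES (10, 1), (11, 1), (12, 2);
""")

# Governed definition: sum the amount straight from the orders table.
correct_revenue = conn.execute(
    "SELECT SUM(amount) FROM orders"
).fetchone()[0]

# Plausible mistake: joining to shipments before summing repeats
# order 1 once per shipment row, so its amount is counted twice.
inflated_revenue = conn.execute("""
    SELECT SUM(o.amount)
    FROM orders o
    JOIN shipments s ON s.order_id = o.order_id
""").fetchone()[0]

print(correct_revenue, inflated_revenue)
```

Both queries run cleanly and both return a number labeled "revenue", which is exactly why this class of error is hard to spot without a fixed definition.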

Platforms like Looker tried to evolve this with LookML, giving business users more freedom to explore data while keeping them within safe boundaries defined by the data team. Other tools followed similar patterns. The principle remained: technical teams define what metrics mean, and business users work within those constraints.

But dashboards have obvious limitations. They’re rigid. They pile up until no one can find what they need. The UX is clunky for people who spend 90% of their time in Slack, email, or CRM tools — and don’t have bandwidth to learn another platform, especially when those metrics keep changing anyway.

A chat interface that could simply answer questions in natural language felt like the obvious next step. Just ask “What was revenue last quarter by region?” and get an answer. No hunting through folders. No learning query builders.

The problem everyone overlooked: AI analysts need the same governance that dashboards provided. Actually, they need more of it.

When you look at a dashboard, you can see the filters, the date ranges, the calculation logic. When an AI generates an answer, all that context is hidden behind a natural-language interface that sounds authoritative, even when it’s completely wrong.

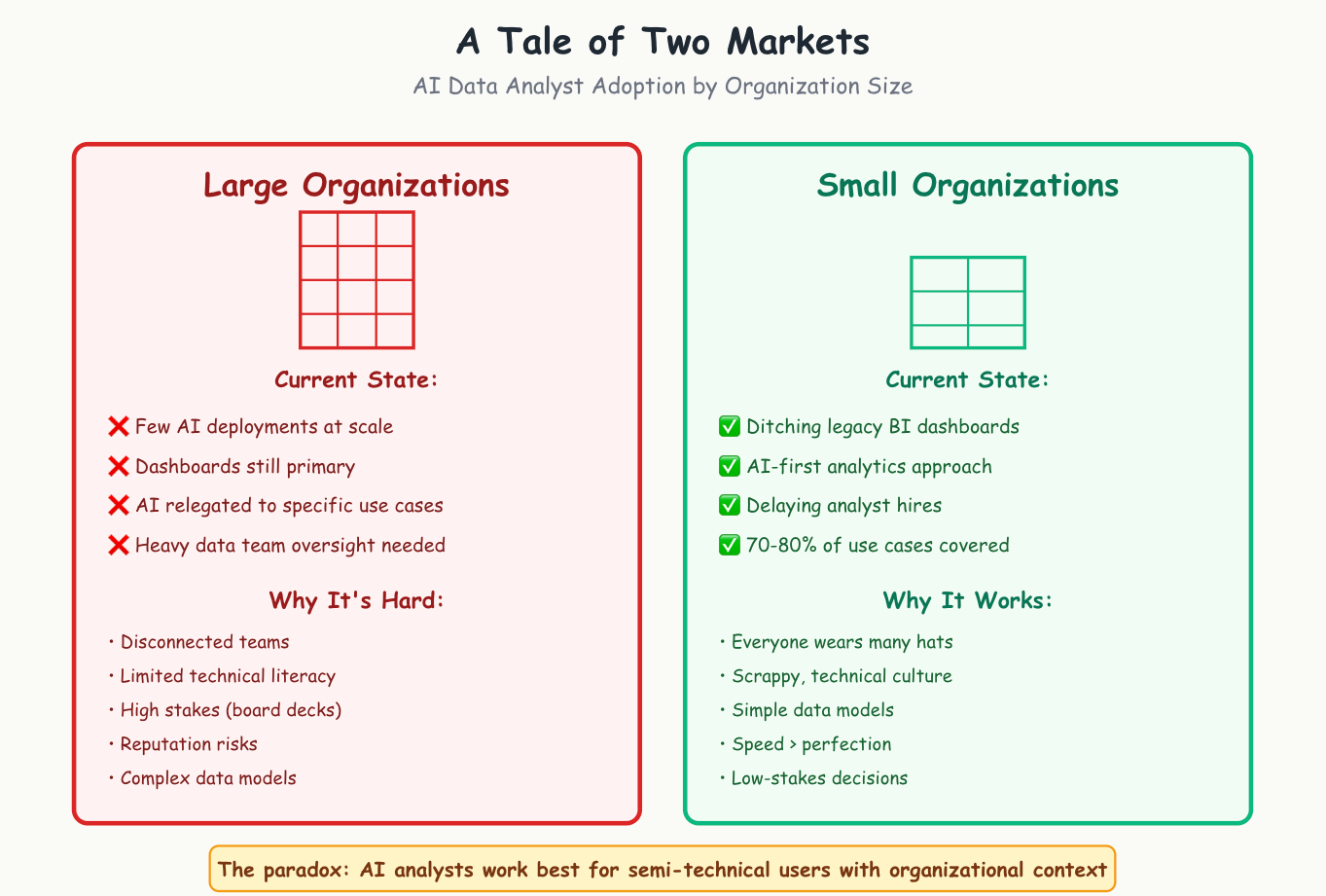

The current state: a tale of two markets

After working with thousands of teams and talking to many more evaluating their options, here’s where things actually stand:

Large organizations: Very few have successfully rolled out AI that can answer arbitrary business questions from non-technical users. Dashboards remain the primary interface. AI features exist, but are relegated to specific use cases or require heavy data team oversight.

Small organizations: Many are completely ditching legacy BI dashboards. Some are even delaying data analyst hires in favor of AI analysts.

Even in organizations abandoning dashboards, AI analysts are most useful in the hands of semi-technical users. This seems paradoxical until you consider the organizational context.

A large public company has massive teams, often disconnected from each other and from the underlying data. Many employees have limited technical literacy and no way to validate whether an AI’s answer makes sense. The stakes are high. Bad data in a board presentation can end careers. The data team’s reputation depends on accuracy.

Contrast this with a 20-person growth startup where every customer success manager is scrappy enough to dig into data when needed. The data model is simple enough to hold in your head. Everyone has context about what numbers should roughly look like. Using some data, even imperfect data generated by AI, is better than having no data at all.

The Current State by Organization Size | Source: Author

At an early stage of organizational maturity, speed matters more than perfection.

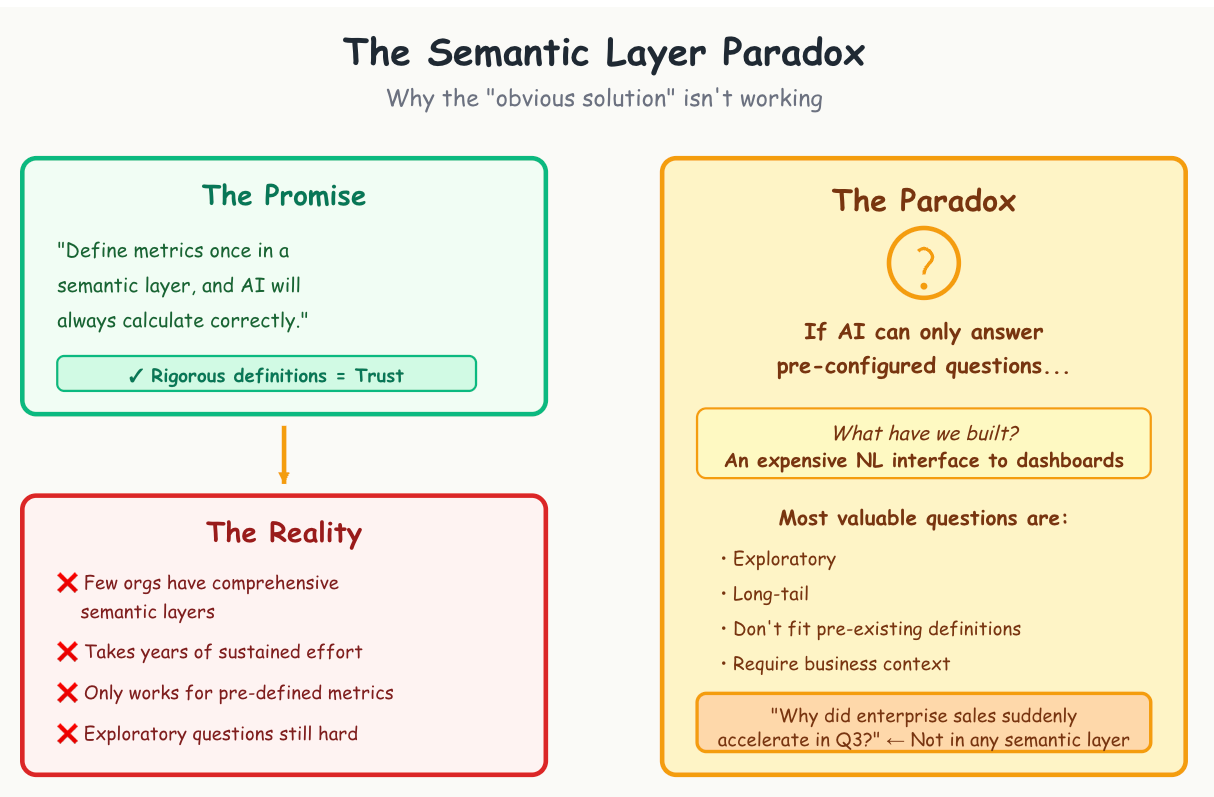

The semantic layer solution that isn’t

Ask how to bridge this gap (how to make AI analysts trustworthy enough for large enterprises) and everyone points to the same answer: semantic layers.

The logic is sound. If data teams rigorously define each metric in a structured format, and AI uses those specific definitions to answer questions, then AI can be deployed with confidence. Define “revenue” once, properly, and the AI will always calculate it correctly.
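As a rough sketch of that idea, and not a depiction of any particular vendor's semantic layer, the snippet below defines a metric once and compiles every request from that single definition. The `Metric` class, the `METRICS` registry, the `compile_metric` function, and the `orders` table with its columns are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    """A single, governed definition of a business metric (hypothetical shape)."""
    name: str
    table: str
    expression: str                      # SQL aggregate, written once by the data team
    filters: tuple[str, ...] = ()        # conditions that always apply
    dimensions: tuple[str, ...] = ()     # columns users may group by


# Hypothetical registry: "revenue" is defined exactly once.
METRICS = {
    "revenue": Metric(
        name="revenue",
        table="orders",
        expression="SUM(amount)",
        filters=("status = 'completed'",),
        dimensions=("region", "quarter"),
    ),
}


def compile_metric(metric_name: str, group_by: str) -> str:
    """Build SQL from the registered definition instead of free-form generation."""
    metric = METRICS[metric_name]  # raises KeyError for metrics nobody has defined
    if group_by not in metric.dimensions:
        raise ValueError(f"{group_by!r} is not an approved dimension for {metric_name!r}")
    where = " AND ".join(metric.filters) or "1 = 1"
    return (
        f"SELECT {group_by}, {metric.expression} AS {metric.name} "
        f"FROM {metric.table} WHERE {where} GROUP BY {group_by}"
    )


print(compile_metric("revenue", "region"))
```

In this sketch the model's job shrinks to picking a metric name and a dimension, and the calculation itself never varies. The flip side is visible too: a request for a metric or dimension that is not in the registry simply fails.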

There’s just one problem: semantic layers remain elusive for all but the most well-resourced organizations.

I’ve talked to data leaders at some of the most respected, tech-forward companies in Silicon Valley. I can count on one hand the number who told me they’ve successfully implemented comprehensive semantic layers. And these are organizations with large, sophisticated data teams. The ones who succeeded invested years of sustained effort.

For everyone else, semantic layers are aspirational. They exist in roadmap slides, not in production.

But even if we solved the implementation challenge, there’s a deeper paradox lurking underneath.

If AI can only answer questions that have been pre-configured by the data team in a semantic layer, what have we actually built? An expensive natural-language interface to existing dashboards. The AI can slice and dice pre-defined metrics in creative ways, but it can’t help with genuinely exploratory questions — the nebulous, long-tail queries that don’t fit neatly into pre-existing definitions.

The Semantic Layer Solution That Isn’t | Source: Author

From our experience, most ad hoc or exploratory questions exist precisely to probe that long tail. “Why did enterprise sales suddenly accelerate in Q3?” doesn’t map to any pre-built metric. But it’s often the most valuable question to answer.

What we actually need

The solution isn’t traditional semantic layers. It isn’t rigid YAML or JSON files that require engineering effort to update and that no human actually reads.

We need something closer to living documentation. AI context that’s human-readable, so data teams can actually maintain it, and AI-maintainable, so it can evolve based on user feedback and interactions. Call it an AI context graph, call it dynamic semantic layers, call it whatever you want. The key is that it needs to be fundamentally different from what exists today.

The context layer needs to capture not just metric definitions, but business logic, edge cases, known data quality issues, and the ambiguities that exist in real-world data. It needs to help AI say “I’m not confident about this answer because…” rather than hallucinating precision.
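One way to picture such an entry is sketched below. This is an assumption about what a context record could look like, not a description of an existing product: `ContextEntry`, `frame_answer`, and the example metric text are all hypothetical. The point is that caveats live next to the definition in plain language, and the answer surfaces them instead of presenting a bare number.

```python
from dataclasses import dataclass, field


@dataclass
class ContextEntry:
    """Human-readable context for one metric (hypothetical structure)."""
    metric: str
    definition: str
    edge_cases: list[str] = field(default_factory=list)
    known_issues: list[str] = field(default_factory=list)


def frame_answer(entry: ContextEntry, value: str) -> str:
    """Attach any recorded caveats to the answer rather than hiding them."""
    base = f"{entry.metric}: {value} ({entry.definition})"
    caveats = entry.known_issues + entry.edge_cases
    if not caveats:
        return base
    return f"{base}. I'm not confident about this answer because: " + "; ".join(caveats)


# Hypothetical entry a data team might maintain in plain language.
mrr = ContextEntry(
    metric="Monthly Recurring Revenue",
    definition="sum of active subscription fees, normalized to one month",
    edge_cases=["annual plans are divided by 12"],
    known_issues=["billing data for the current month is incomplete until close"],
)

print(frame_answer(mrr, "$1.2M"))
```

Because the entry is ordinary text in ordinary fields, a data team can review it and a feedback loop could append to it, which is the maintainability property described above.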

This is infrastructure work. It’s not sexy. It doesn’t make for exciting demo videos. But it’s the actual bottleneck that’s blocking AI in production.

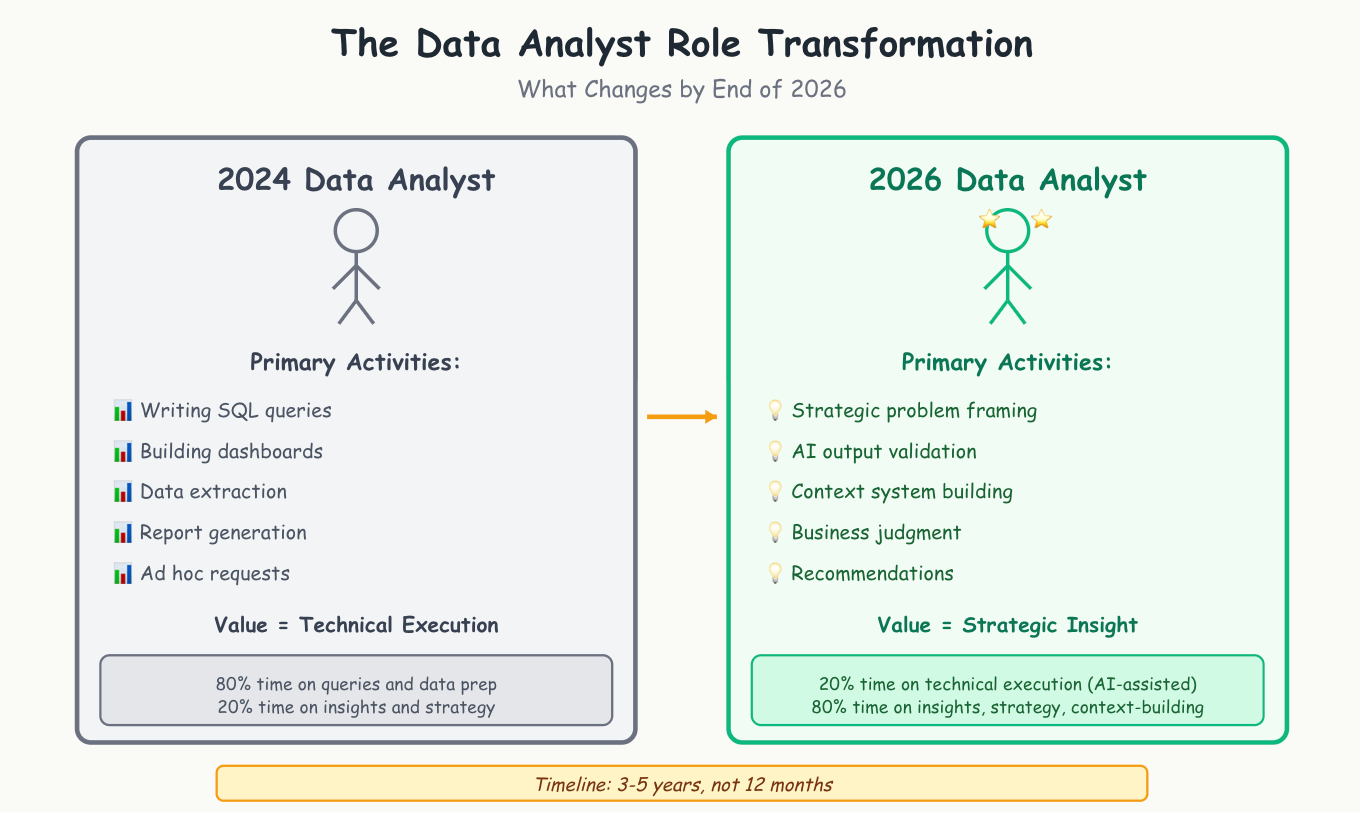

What 2026 will actually look like

Here are my predictions for where we’ll be by the end of 2026:

Dashboards don’t die. There’s enduring value in being able to return to a report week after week and know the numbers are measured consistently. Core business metrics — the ones that go into board decks and strategic planning — will stay in dashboard form for the foreseeable future.

Small and medium organizations lead the charge. We’re already seeing this. These teams are rushing to AI-first analytics solutions for most ad hoc and exploratory questions. Dashboards don’t fully disappear, but we’ll see a surge in adoption of tools that replace 70–80% of dashboard use cases. Teams that previously couldn’t justify hiring data analysts will get access to insights they never had before.

The data analyst role transforms completely. It’s hard to imagine data analyst and data scientist roles looking the same by the end of 2026 as they did in 2024. AI will elevate analysts who are close to the business and provide strategic insight and recommendations. It will replace those whose primary value was writing SQL queries and sending reports without strategic context.

The analysts who thrive will be those who can translate business problems into the right questions, validate AI output, build the context systems that make AI useful, and provide the judgment and recommendations that AI cannot.

The Data Analyst Role Transformation | Source: Author

But this transformation takes 3–5 years, not 12 months. The infrastructure for trustworthy AI analytics doesn’t exist yet. Building it requires solving hard problems around data governance, context management, and organizational change that won’t be solved by the next model release.

What this means for data teams

If you’re a data leader trying to navigate this landscape, here’s what actually matters:

Invest in context and documentation now. However you formalize it — whether through comprehensive data dictionaries, well-maintained wiki pages, or actual semantic layer implementations — having clear, accessible documentation of what your metrics mean is the foundation everything else builds on. AI doesn’t eliminate this need. It amplifies it.

Start with narrow, high-value use cases. Don’t try to replace your entire BI stack overnight. Find specific workflows where AI assistance provides clear value. Maybe it’s helping analysts write SQL faster, or generating natural-language explanations of dashboard metrics for executives. Build confidence through small wins.

Focus on augmentation before replacement. The teams seeing success are using AI to make their data teams more productive, not to eliminate them. AI-assisted analytics works today. Fully autonomous AI analysts for non-technical users remain challenging.

Prepare your organization for the shift. The data analyst role is changing. Start having conversations now about what skills matter in an AI-assisted world. Business acumen, communication, strategic thinking, and context-building become more valuable. Pure technical execution becomes less differentiating.

The gap between demos and deployment is real. But it’s not permanent. The teams that invest in the right infrastructure now — the unsexy work of building context systems and governance frameworks — will be the ones who successfully deploy AI analysts at scale.

The question isn’t whether AI will transform how we work with data. It’s whether we’re building the foundation that makes that transformation actually work.

Thanks for reading Context & Chaos!

The Insight Index: Your Weekly Data & AI Digest

Top resources and recommended reads, carefully curated for you.

- The Analytical Skills No One Teaches You — SeattleDataGuy and Olga Berezovsky
- Data Contracts: A Missed Opportunity — Ananth Packkildurai
- Ontologies, Context Graphs, and Semantic Layers: What AI Actually Needs in 2026 — Jessica Talisman
- Collaborative Intelligence — Aatish Nayak
- Why We’ve Tried to Replace Data Analytics Developers Every Decade Since 1974 — Mark Rittman
- Data is Your Only Moat — Vikram Sreekanti and Joseph E. Gonzalez
- Why the “Semantic Layer” Isn’t Enough for AI — Juha Korpela
- Why Lineage and Quality Metrics Are the Foundation of Context Graphs — Eric Simon
- Context Management for Deep Agents — Chester Curme and Mason Daugherty
- Why AI’s Productivity Promise Falls Apart Without Human Expertise — Brent Dykes

That’s all for this edition. Stay curious, keep exploring, and see you all in the next one!

About Context & Chaos

Context & Chaos isn’t just a newsletter. It’s a shared community space where practitioners, builders, and thinkers come together to share stories, lessons, and ideas about what truly matters in the world of data and AI: context engineering, governance, architecture, discovery, and the human side of doing meaningful work.

Our goal is simple: to create a space that cuts through the noise and celebrates the people behind the amazing things happening in the data and AI domain.

Whether you’re solving messy problems, experimenting with AI, or figuring out how to make data more human, Context & Chaos is your place to learn, reflect, and connect.

Got something on your mind? We’d love to hear from you.

Originally published on the Context & Chaos Substack newsletter.
