The most common question surfacing in practitioner communities right now isn’t which model to use or how to structure the prompt. It’s a quieter, more uncomfortable question: why does our agent keep getting things wrong that we didn’t even think to put in the prompt?

The answer, across the post-mortems and the Slack threads and the write-ups being shared on LinkedIn, is consistent. The agent isn’t hallucinating. It’s operating without the organizational context that any human in the same role would have absorbed in their first month.

The numbers confirm what practitioners already feel. McKinsey’s most recent State of AI found only 39% of organizations attributing any EBIT impact to AI at all, with most reporting it accounts for less than 5% of enterprise EBIT. Gartner predicted that 30% of generative AI projects would be abandoned after proof of concept by the end of 2025, citing poor data quality, inadequate risk controls, and unclear business value. BCG surveyed 1,250 firms and found that 60% are reaping hardly any material value from their AI investments. And according to Gartner’s Predicts 2026 on data and analytics, the agent deployment wave is accelerating regardless: hundreds of agents per enterprise over the next twelve months, in some cases thousands.

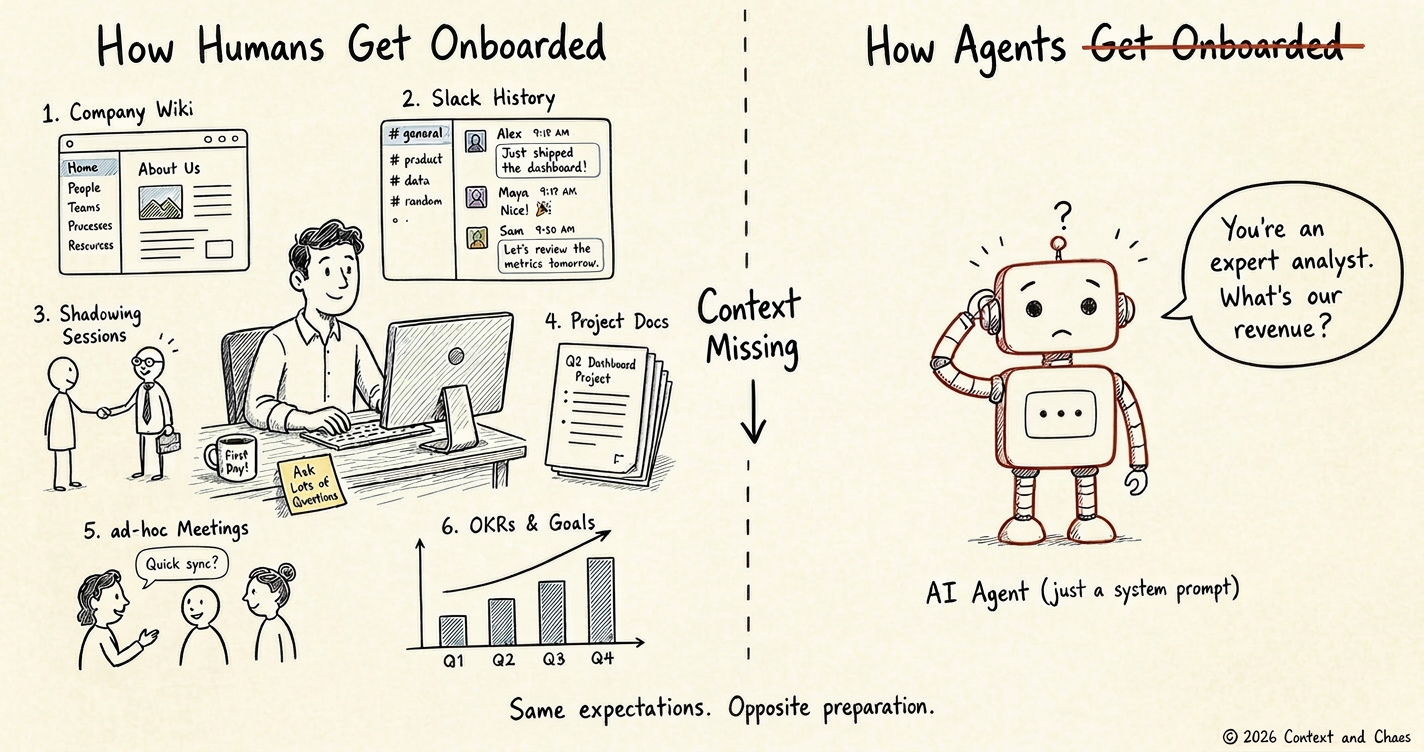

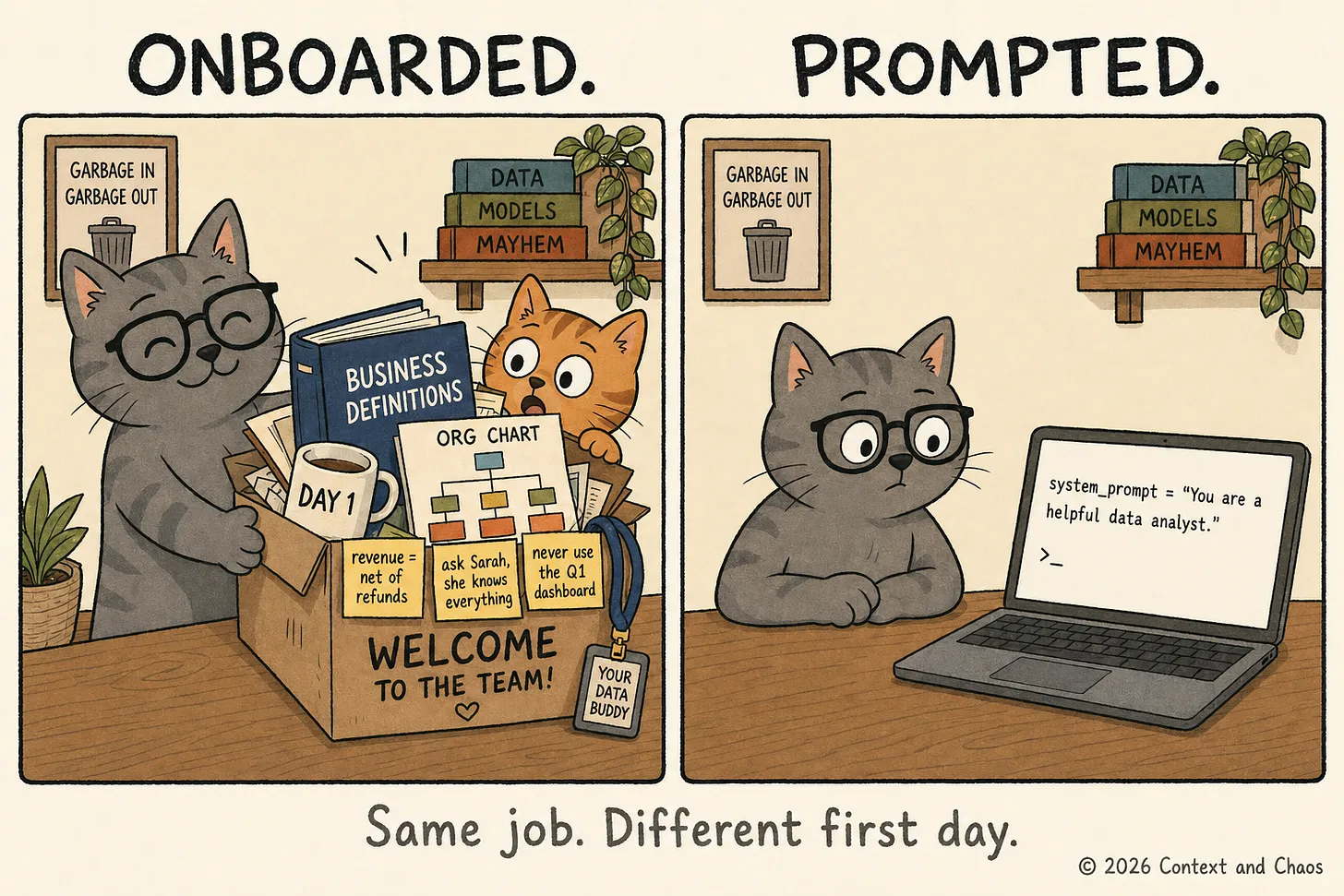

Now compare what those agents are getting at deployment to what the average new hire gets in their first week.

A new human hire gets walked through the data sources and told which one to trust for what. Use the warehouse for historical. Never the operational system for anything going into a report. The Q1 dashboard is broken, don’t touch it. They get the business definitions: what “customer” means here, why “revenue” in Finance doesn’t match “revenue” in Sales, why churn is calculated three different ways across three dashboards and which one the CFO actually uses. They hear the unwritten rules in hallways and in meetings: when to escalate, when to bend the policy, when to absolutely not. They make mistakes in their first ninety days and their manager corrects them, and the correction sticks.

The simplest way to think about it:

“A human hire gets six months of structured exposure to the business before they’re trusted to act on their own. An AI agent gets a prompt.”

This is the gap. Not whether AI works. Not whether the model is smart enough. We have spent fifty years building onboarding infrastructure for human employees and roughly six months pretending agents don’t need any.

What context failure actually looks like in agent deployments

The pattern shows up the same way across most of the public stories practitioners are sharing.

A finance analyst agent at a Fortune 500 has been pulling the wrong revenue number into executive dashboards for weeks. Not a hallucination. Not a model failure. The agent picked a legacy table that nobody had told it to avoid, did perfect math on it, and produced a number that flowed all the way up to the CEO. By the time someone caught the mistake, the wrong figure had been quoted in three meetings.

A customer support agent starts processing refunds for serial complainers because nobody encoded the eighteen-prior-complaints rule that veteran reps know by instinct.

A compliance agent flags a transaction as a violation, strictly following the written policy. It does not know that the compliance lead issued a memo six months ago noting a permanent exception for that specific entity type. The agent’s review is technically correct. It is also wrong for the business.

These aren’t edge cases. They’re the median outcome of agent deployments that skip the contextual layer. Three patterns surface again and again in the practitioner discussion:

The connectivity gap. The agent finds the customer represented as three different IDs across three different systems, with nothing telling it that they are the same customer. The model reasons perfectly on the data it can see. It is functionally blindfolded.

The semantic gap. The agent calculates quarterly revenue, finds the data, and doesn’t know that for one team revenue means recognized cash and for another it means closed-won bookings. It picks one, applies it confidently, and produces a report that is technically sophisticated and operationally useless.

The institutional knowledge gap. The agent follows the written policy and misses the unwritten exception that lives in a buried PDF or a senior employee’s head. The output is technically correct and still wrong for the business.

In all three cases, upgrading from one frontier model to the next fixes nothing. These aren’t reasoning problems. They’re context problems.
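To make the semantic gap concrete, here is a minimal Python sketch of the revenue example. The `Deal` record, the two definition functions, the `REVENUE_DEFINITIONS` registry, and the sample figures are all hypothetical, invented for illustration. The sketch shows the same data producing two different "revenue" numbers, and one possible guard: refusing to answer until the caller says whose definition applies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Deal:
    amount: float            # contract value
    closed_won: bool         # has Sales marked the deal closed-won?
    cash_recognized: float   # cash recognized so far


def bookings(deals):
    """Revenue as closed-won bookings (hypothetical Sales definition)."""
    return sum(d.amount for d in deals if d.closed_won)


def recognized(deals):
    """Revenue as recognized cash (hypothetical Finance definition)."""
    return sum(d.cash_recognized for d in deals)


# Hypothetical registry: one metric name, one definition per team.
REVENUE_DEFINITIONS = {"sales": bookings, "finance": recognized}


def revenue(deals, team=None):
    """Refuse to guess: the caller must say whose definition it wants."""
    if team not in REVENUE_DEFINITIONS:
        raise LookupError(
            f"'revenue' is ambiguous; choose one of {sorted(REVENUE_DEFINITIONS)}"
        )
    return REVENUE_DEFINITIONS[team](deals)


# Made-up sample data.
DEALS = [
    Deal(amount=100_000, closed_won=True, cash_recognized=25_000),
    Deal(amount=50_000, closed_won=True, cash_recognized=50_000),
    Deal(amount=80_000, closed_won=False, cash_recognized=0),
]

print(revenue(DEALS, team="sales"))    # 150000
print(revenue(DEALS, team="finance"))  # 75000
```

Both numbers are computed correctly. Only the context says which one belongs in the report, which is why a better model alone does not close the gap.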

Three moves the fastest teams are making

The instinct when teams recognize the onboarding gap is to start writing things down. Document the tables. Define the metrics. Catalog the exceptions. Build the briefing document.

That instinct is right. The execution is what fails.

Building proper context documentation for a single production use case is a months-long project in most organizations. Define every metric. Debate it in committee. Ship one agent. Then do it again for the next one. Multiply that by hundreds and the math collapses. Most of the documentation that gets written goes stale before the next agent ships.

The teams making real progress have replaced this model with something different.

Move 1: Run a context audit before every agent deployment

Before deploying the next agent, map what it would need to know. Which data sources does it touch? Which business definitions apply? What exceptions exist that aren’t in any documentation? The teams moving fastest run a pre-deployment context review the same way engineering teams run a security review. It adds days to the front end. It eliminates weeks of silent failure in production.
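One way to picture a context audit is as a checklist that blocks deployment while any of the three questions above is unanswered. The sketch below is an assumption about how such a check could be structured, not a description of any team's actual tooling. The `ContextAudit` class, its field names, and the sample table names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ContextAudit:
    """Hypothetical pre-deployment checklist for one agent."""
    agent: str
    # source name -> guidance on what it can be trusted for (None = unknown)
    data_sources: dict = field(default_factory=dict)
    # business term -> agreed definition (None = not yet defined)
    definitions: dict = field(default_factory=dict)
    # unwritten rules collected from the people who do the job today
    exceptions: list = field(default_factory=list)
    exceptions_reviewed: bool = False

    def gaps(self):
        """List everything the agent would have to guess at."""
        found = []
        for source, guidance in self.data_sources.items():
            if not guidance:
                found.append(f"data source '{source}' has no trust guidance")
        for term, definition in self.definitions.items():
            if not definition:
                found.append(f"term '{term}' has no agreed definition")
        if not self.exceptions_reviewed:
            found.append("unwritten exceptions have not been reviewed with a process owner")
        return found

    def ready(self):
        """Deployable only when no gaps remain."""
        return not self.gaps()


audit = ContextAudit(
    agent="finance-analyst",
    data_sources={
        "warehouse.revenue": "use for historical reporting",
        "legacy.revenue_v1": None,  # nobody has said whether to avoid it
    },
    definitions={"revenue": "recognized cash (Finance)", "churn": None},
)

for gap in audit.gaps():
    print(gap)
print("ready to deploy:", audit.ready())
```

In this made-up example the audit surfaces the legacy table before the agent ever queries it, which is the point of doing the review up front.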

Move 2: Use AI to draft the onboarding package; use humans to certify it

The first agent the fastest-moving teams deploy isn’t the one running analytics or processing invoices. It’s the one that learns the business. It reads the data estate, synthesizes undocumented lineage, infers relationships between tables nobody has formally defined, drafts metric definitions, flags conflicts, and surfaces gaps where documentation simply doesn’t exist. Then it hands the output to humans to certify, correct, and govern.

The distinction matters: the agent isn’t deciding what the business means. It’s drafting what it has observed and presenting it for human review, the same way a junior analyst hands a first draft to their manager. The agent does the cataloging, the structuring, the consistency-checking, the parts that took data teams years and produced incomplete results anyway. Humans do the governing, the certifying, the deciding what counts as truth.
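The draft-then-certify split can be sketched as a small state model in which an agent may only create drafts and only a named human reviewer can promote one. This is an illustrative assumption about how the workflow might be encoded. `ContextEntry`, `Status`, `draft`, `certify`, and `usable_by_agents` are hypothetical names, and the sample entries are made up.

```python
from dataclasses import dataclass, replace
from enum import Enum
from typing import Optional


class Status(Enum):
    DRAFT = "draft"
    CERTIFIED = "certified"


@dataclass(frozen=True)
class ContextEntry:
    term: str
    definition: str
    evidence: str                      # what the drafting agent observed
    status: Status = Status.DRAFT
    certified_by: Optional[str] = None


def draft(term, definition, evidence):
    """What the drafting agent is allowed to do: propose, never certify."""
    return ContextEntry(term=term, definition=definition, evidence=evidence)


def certify(entry, reviewer, corrected_definition=None):
    """What only a human does: accept the draft, optionally correcting it."""
    if not reviewer:
        raise ValueError("certification requires a named human reviewer")
    return replace(
        entry,
        definition=corrected_definition or entry.definition,
        status=Status.CERTIFIED,
        certified_by=reviewer,
    )


def usable_by_agents(entries):
    """Downstream agents see certified entries only."""
    return [e for e in entries if e.status is Status.CERTIFIED]


proposed = draft(
    term="churn",
    definition="customers with no order in 90 days",
    evidence="inferred from three dashboards with conflicting filters",
)
approved = certify(
    proposed,
    reviewer="finance-lead",
    corrected_definition="customers with no paid invoice in 90 days",
)

print([e.term for e in usable_by_agents([proposed, approved])])  # ['churn']
print(approved.certified_by)  # finance-lead
```

Keeping the entries immutable means a correction produces a new record and leaves the original draft unchanged, so the record of what the agent proposed and what the human decided stays intact.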

Move 3: Version the context layer like code

The teams further along on this describe treating organizational context the same way great engineering teams treat their codebase. Shared. Versioned. Reviewed before it goes into production. Improved every time someone touches it. The definitions certified for the first agent become the foundation the second agent starts from on day one. The exceptions caught in production for one workflow become the rules baked into every workflow that follows. The corrections that would have lived in a Slack thread and been forgotten now live in a structured layer that every future agent inherits.
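As a rough illustration of "versioned like code," the sketch below keeps an append-only history of context versions. Each change needs a reviewer and produces a new read-only version that inherits everything before it. The `ContextLayer` class, its method names, and the rule that the reviewer must differ from the author are assumptions made for this example. In practice a team might simply keep the definitions in a Git repository.

```python
from dataclasses import dataclass
from types import MappingProxyType
from typing import Mapping


@dataclass(frozen=True)
class ContextVersion:
    number: int
    entries: Mapping[str, str]  # read-only view of the context at this version
    author: str
    reviewer: str
    message: str


class ContextLayer:
    """Hypothetical append-only store of reviewed context versions."""

    def __init__(self):
        empty = MappingProxyType({})
        self._history = [ContextVersion(0, empty, "system", "system", "empty layer")]

    @property
    def head(self):
        return self._history[-1]

    def at(self, number):
        """Let an agent pin the exact version it was deployed against."""
        return self._history[number]

    def commit(self, changes, author, reviewer, message):
        # Assumed review rule: someone other than the author must sign off.
        if not reviewer or reviewer == author:
            raise PermissionError("changes need a reviewer other than the author")
        merged = dict(self.head.entries)  # new version inherits the old one
        merged.update(changes)
        version = ContextVersion(
            self.head.number + 1, MappingProxyType(merged), author, reviewer, message
        )
        self._history.append(version)
        return version


layer = ContextLayer()
layer.commit(
    {"revenue": "recognized cash"},
    author="agent-1-team", reviewer="finance-lead",
    message="definition certified for the first agent",
)
layer.commit(
    {"legacy.revenue_v1": "do not use for reporting"},
    author="on-call-analyst", reviewer="data-lead",
    message="exception caught in production",
)

print(layer.head.number)           # 2
print(sorted(layer.head.entries))  # ['legacy.revenue_v1', 'revenue']
```

A second agent reading `layer.head` starts with both the certified definition and the production-caught exception, while `layer.at(1)` still shows what the first agent knew on its first day.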

Published case studies on this approach describe teams producing roughly three times more structured business context in two weeks than the same teams had produced manually in the prior year. Not because they weren’t trying before. Because the manual model has a ceiling and an AI-assisted model doesn’t.

This is what compounding looks like in practice. The tenth agent takes a fraction of the time the first one took, and starts from a significantly more accurate picture of the business. The teams that skip onboarding for the first three agents don’t save time. They build three siloed pilots that each assume a slightly different version of the business, and redo the work for every agent that follows.

The question worth asking

The question “is our model good enough?” is a dead end because it implies a single bar to clear. Frontier model capability is increasing too fast for that question to stay answered. The more useful question is: what does this agent know about our business, and how does it know it?

If the answer is “the prompt,” what’s been deployed isn’t an agent. It’s a very expensive guess.

Thanks for reading Context & Chaos!

About Context & Chaos

Context & Chaos isn’t just a newsletter. It’s a shared community space where practitioners, builders, and thinkers come together to share stories, lessons, and ideas about what truly matters in the world of data and AI: context engineering, governance, architecture, discovery, and the human side of doing meaningful work.

Our goal is simple: to create a space that cuts through the noise and celebrates the people behind the amazing things happening in the data and AI domain.

Whether you’re solving messy problems, experimenting with AI, or figuring out how to make data more human, Context & Chaos is your place to learn, reflect, and connect.

Got something on your mind? We’d love to hear from you. Hit Reply!
