Before you read further, here are six questions worth keeping on hand for conversations at Gartner D&A. Pick any three and see where the dialogue goes.

- How are you ensuring models and agents see enough business context to make reliable decisions, and who owns that context supply chain in your organization?
- What is one term or metric your leadership team still debates, and how many AI use cases depend on that term today?
- Where have you moved governance in your AI lifecycle?
  - A) Earlier, before models and agents reach production?
  - B) Or are you still governing agents only after they have already acted?
- For your biggest AI initiative, how clear is the line between who owns the business outcome and who owns ongoing cost and drift? Are those the same person?
- For the AI-assisted decision that carries the most risk in your organization, who is accountable if it goes wrong? And do they actually have the authority to pause or shut it down?
- What is the hardest behavior change you are asking of teams this year, and which legacy process is most likely to pull you back?

These are not hypothetical questions. They come from patterns we see consistently in conversations with data leaders: the same gaps surfacing across industries, roles, and maturity levels. Missing context. Unresolved semantics. Governance that arrives too late. Ownership that is unclear. Change management that is underfunded. The sections below unpack each of those gaps, not as abstract problems, but as starting points for the conversations worth having at the summit.

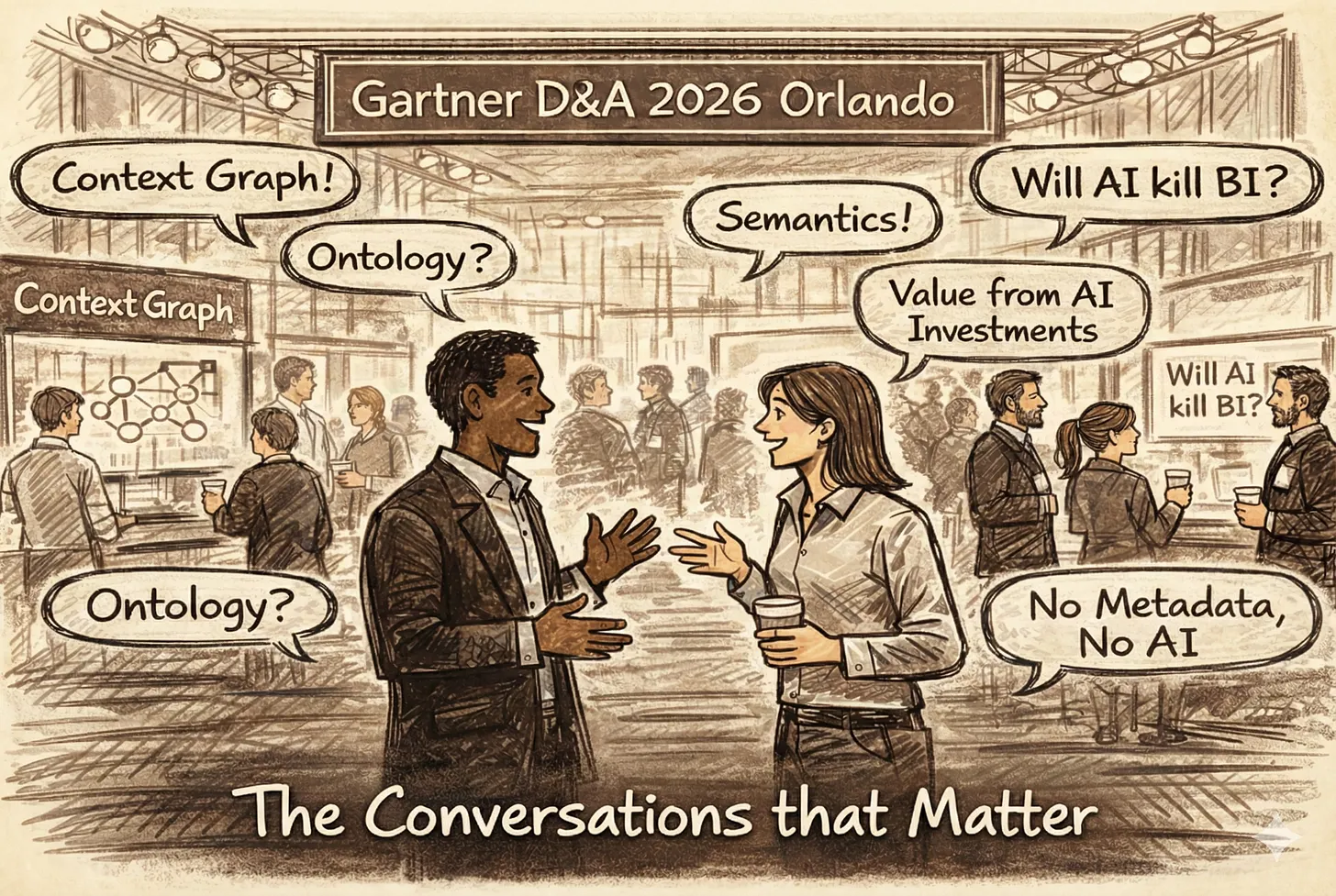

Gartner D&A | Source: Author


The context gap is the root problem

The question we keep hearing from data leaders is some version of: how do we know our AI systems have enough context to make reliable decisions? The honest answer, for most organizations, is: we don’t. That is not a tooling problem. It is a context problem.

Recent summits have shifted from generic “data quality” to AI-ready data, with active metadata positioned as the foundation of an AI trust stack. The shift is overdue.

Gartner surveys show that most organizations still rely on manual metadata inventories, and only a small fraction use machine learning to analyze metadata or power recommendations. In practice, models making consequential decisions such as pricing, triage, or risk scoring operate with partial context at best.

When context is missing, failure follows a predictable pattern. A model optimizes for a revenue metric that finance defines one way and sales defines another. An assistant treats data from an asset that has not been certified in years as authoritative. A governance rule exists on paper, but there is no traceable link from the policy to the pipelines, models, or prompts that should honor it.

The pattern is worse with agents. They don’t just surface a wrong answer. They act on it. Consider an agent tasked with customer retention. It pulls a churn definition from one system, applies a discount policy from another, and triggers a personalized offer, all without a human reviewing the chain. When the definition and the policy are misaligned, the agent does not produce a conflicting dashboard; it gives away margin on the wrong customers. By the time the mismatch surfaces, the actions have already compounded.

The instinct is to layer more policy tools on top of existing systems. But if those tools cannot see the same context, they produce more rules without more alignment. Disconnected catalogs and policy engines cannot reconcile what was never connected underneath.

Gartner analysts now describe metadata as the matchmaker between user questions and data, especially for unstructured and AI workloads. When that matchmaker lacks visibility, mismatches and wrong answers are almost guaranteed. Solving this is less about buying another AI platform and more about investing in a context layer: a live graph that knows how questions, people, policies, data assets, and models connect.

The context layer becomes the routing brain between every AI question and the right, governed data. It is powered by active metadata, not spreadsheets and tribal knowledge.
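To make the idea of a context layer more concrete, here is a minimal sketch in Python. It assumes the graph is a plain adjacency map whose edges point from a node to whatever depends on it. The class `ContextGraph`, its methods, and every node name are hypothetical; a real context layer would be populated from active metadata rather than wired by hand. The sketch surfaces two of the failure patterns described above: a consumer that no policy can be traced to, and an agent fed by an asset that is no longer certified.

```python
from collections import defaultdict, deque

# Node kinds that consume context and therefore need governance coverage.
CONSUMER_KINDS = {"model", "prompt", "agent"}


class ContextGraph:
    """A toy context graph: edges point from a node to what depends on it."""

    def __init__(self):
        self.edges = defaultdict(set)  # node -> set of downstream nodes
        self.kind = {}                 # node -> "policy" | "asset" | "model" | "prompt" | "agent"
        self.certified = {}            # asset -> whether it is currently certified

    def add_node(self, name, kind, certified=True):
        self.kind[name] = kind
        if kind == "asset":
            self.certified[name] = certified

    def link(self, upstream, downstream):
        self.edges[upstream].add(downstream)

    def reachable(self, start):
        """Breadth-first search: every node downstream of `start` (excluding start)."""
        seen, queue = set(), deque([start])
        while queue:
            node = queue.popleft()
            for nxt in self.edges.get(node, ()):
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append(nxt)
        return seen

    def governed_by(self, policy):
        """Everything a policy can be traced to: data, models, prompts, agents."""
        return self.reachable(policy)

    def ungoverned(self):
        """Consumers with no traceable path from any policy."""
        covered = set()
        for node, kind in self.kind.items():
            if kind == "policy":
                covered |= self.reachable(node)
        return sorted(n for n, k in self.kind.items()
                      if k in CONSUMER_KINDS and n not in covered)

    def context_gaps(self):
        """(uncertified asset, consumer) pairs: consumers fed by stale data."""
        gaps = []
        for asset, ok in self.certified.items():
            if not ok:
                gaps += [(asset, n) for n in self.reachable(asset)
                         if self.kind.get(n) in CONSUMER_KINDS]
        return sorted(gaps)


# Illustrative wiring; every node name here is hypothetical.
g = ContextGraph()
g.add_node("pii_policy", "policy")
g.add_node("crm.customers", "asset")
g.add_node("legacy.churn_scores", "asset", certified=False)
g.add_node("sales.pipeline", "asset")
g.add_node("churn_model", "model")
g.add_node("retention_agent", "agent")
g.add_node("pricing_prompt", "prompt")
g.link("pii_policy", "crm.customers")
g.link("crm.customers", "churn_model")
g.link("churn_model", "retention_agent")
g.link("legacy.churn_scores", "retention_agent")
g.link("sales.pipeline", "pricing_prompt")

print(sorted(g.governed_by("pii_policy")))  # ['churn_model', 'crm.customers', 'retention_agent']
print(g.ungoverned())                       # ['pricing_prompt']
print(g.context_gaps())                     # [('legacy.churn_scores', 'retention_agent')]
```

Because each check is a traversal over the same graph, policy coverage and certification gaps come from one shared view instead of from separate tools that each hold a partial picture.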

The real question for leaders is not which AI tool is smartest. It is:

- What is our operating model for context?
- Who maintains the context graph beneath every AI interaction?
- How would we detect a context failure before it becomes a reputational or regulatory incident?

Until these answers are clear, conversations about AI accuracy remain downstream of a context gap.

Semantic debt compounds the gap

Ask any two business units to define ‘customer’ and you will get two different answers. Data leaders know this. What they are starting to realize is how much more dangerous that ambiguity becomes when AI acts on it.

Most enterprises carry semantic debt: teams define “customer,” “active account,” or “churn” differently. Previously, this surfaced as conflicting dashboards. In an AI world, it surfaces as inconsistent answers, recommendations, and policy behavior across applications.

When an agent acts on a disputed definition, semantic debt stops being an analytics nuisance and becomes real-time decision inconsistency. A dashboard shows a wrong number. An agent makes a wrong decision and executes it.

Many organizations respond with documentation. They publish glossaries, send updates, and move on. The result is static documentation, not alignment.

A more realistic approach is to treat semantics like an API contract:

- Clear ownership and change processes for each key definition.
- Automated propagation of changes into schemas, metrics stores, policies, and prompts.
- Monitoring for semantic regressions, not just technical ones.

If someone quietly redefines “churn” in a feature pipeline, you should know before AI acts on it.
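One way to act on “treat semantics like an API contract” is to register each key definition with an owner and a version, then compare every consumer against it. The sketch below assumes definitions can be expressed as text (for example, a SQL predicate) and fingerprinted. `SemanticContract`, `REGISTRY`, and `check_consumer` are illustrative names, not an existing library, and the churn rule itself is made up.

```python
import hashlib
from dataclasses import dataclass


def fingerprint(expression: str) -> str:
    """Hash a definition after collapsing whitespace only, so reformatting
    does not raise an alert but any change to logic or literals does."""
    normalized = " ".join(expression.split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()[:12]


@dataclass(frozen=True)
class SemanticContract:
    term: str
    owner: str        # who approves changes to this definition
    version: int
    expression: str   # canonical definition, e.g. a SQL predicate


# Hypothetical registry; in practice this would live in a catalog or metrics store.
REGISTRY = {
    "churn": SemanticContract(
        term="churn",
        owner="finance-analytics",
        version=3,
        expression="days_since_last_order > 90 AND plan_status = 'cancelled'",
    ),
}


def check_consumer(consumer: str, term: str, expression: str) -> list:
    """Return semantic-regression findings for one consumer of a term."""
    contract = REGISTRY.get(term)
    if contract is None:
        return [f"{consumer}: '{term}' has no registered contract or owner"]
    if fingerprint(expression) != fingerprint(contract.expression):
        return [f"{consumer}: '{term}' diverges from v{contract.version} "
                f"(owner: {contract.owner})"]
    return []


# A feature pipeline that quietly changed the churn window from 90 to 60 days.
findings = check_consumer(
    "feature_pipeline.churn_label", "churn",
    "days_since_last_order > 60 AND plan_status = 'cancelled'",
)
print(findings)
```

Normalizing only whitespace is deliberate: lowercasing would hide a change to a string literal such as `'cancelled'`. A check like this could run in CI for pipelines, metric stores, and prompt templates, so the finding reaches the contract owner before an agent consumes the new definition.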

Each well-governed semantic domain (a metric, a customer definition, a policy) becomes a reusable context product that models and agents can consume with confidence. Without that productization, every AI use case reinvents its own understanding of the business from scratch, and every agent carries its own version of the truth.

Gartner sessions on catalog best practices and AI-ready data increasingly treat clear semantics as a precondition. The message is right. Execution remains uneven.

A practical exercise for the Summit: identify one high-stakes term your organization still debates. Then trace where that term appears across features, retrieval rules, prompts, guardrails, and dashboards. The number of touchpoints will reveal the urgency of the debt.
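The touchpoint exercise can start as simple counting. This sketch assumes a hypothetical inventory of artifacts together with the text they contain; in practice the same information would come from lineage and catalog metadata, since plain string matching misses renamed columns and can produce false positives.

```python
import re
from collections import Counter

# Hypothetical inventory of (artifact type, artifact name, text it contains).
# A real trace would come from lineage and catalog metadata, not string search.
INVENTORY = [
    ("feature", "churn_label_v2", "label = 1 if days_inactive > 60 else 0  # churn"),
    ("feature", "tenure_bucket", "tenure in months, bucketed"),
    ("retrieval_rule", "kb_filter", "exclude churned accounts from upsell articles"),
    ("prompt", "retention_agent_system", "If the customer is likely to churn, offer a discount."),
    ("guardrail", "discount_cap", "max discount 20% unless churn risk is high"),
    ("dashboard", "exec_kpis", "Monthly Churn Rate (finance definition)"),
    ("dashboard", "sales_weekly", "Churn (sales definition: no order in 30 days)"),
]


def touchpoints(term, inventory):
    """Count artifacts per type whose text mentions the term or a variant (e.g. 'churned')."""
    pattern = re.compile(rf"\b{re.escape(term)}\w*", re.IGNORECASE)
    return Counter(kind for kind, _name, text in inventory if pattern.search(text))


hits = touchpoints("churn", INVENTORY)
print(dict(hits), "total:", sum(hits.values()))
```

In this made-up inventory, one term appears in six artifacts across five types, and the two dashboards already disagree on what it means: six places a redefinition has to reach.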

Governance cannot be retrofitted onto AI

One of the most honest conversations happening among data leaders right now is about timing: when does governance actually show up in your AI lifecycle? For most, the answer is uncomfortably late.

Most AI initiatives still follow the older pattern: governance arrives only after political commitment is high, so it is cast as an obstruction rather than a design partner. Controls are waived or exceptions proliferate. This is governance by incident.

For traditional analytics, this was painful but survivable. For AI (opaque models, external APIs, unstructured data, and automated actions), retrofitting governance expands the risk surface. The more AI governance drifts from existing data and analytics governance practices, the more fragmented controls become.

Governance by design requires a shared context layer underneath, not a collection of disconnected catalogs and policy engines stitched together after the fact. If governance tools cannot trace from a policy to the data, model, prompt, and action it should cover, governance remains aspirational regardless of how many policies are written.

Three entry points:

Use case intake. Before any build begins, complete a lightweight checklist that defines allowed and off-limits use cases, data sensitivity, and the initial risk level.

Data readiness assessments. Before funding a use case, assess whether lineage, quality, and semantic clarity are sufficient. Readiness means the relevant context products exist: definitions are resolved, lineage is traceable, policies are linked. If the metadata is incomplete, the use case is not ready.

Model and agent design templates. Standardize checkpoints at the design stage, such as ownership, applicable policies, rollback plans, and evidence logging. For agents, the template must also capture scope of allowed actions, whether the design is human-in-the-loop (a person approves before the agent acts) or human-on-the-loop (the agent acts and a person monitors after), and what kill-switch or rollback mechanism exists. An agent that can modify records or trigger workflows needs governance that covers what it can do, not just what data it can see.
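The three entry points can be expressed as a design-stage check that runs before funding or build. The sketch below is one assumption about how such a template might be encoded, not a standard. The `AgentDesign` fields mirror the checkpoints named above (owner, applicable policies, readiness, evidence logging, oversight mode, kill switch, rollback plan), and the set of state-changing action names is hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional, Set


class Oversight(Enum):
    HUMAN_IN_THE_LOOP = "in"   # a person approves before the agent acts
    HUMAN_ON_THE_LOOP = "on"   # the agent acts; a person monitors afterwards


# Hypothetical action names that change state in other systems.
STATE_CHANGING = {"modify_record", "trigger_workflow", "send_offer"}


@dataclass
class AgentDesign:
    name: str
    owner: Optional[str]
    policies: List[str]
    allowed_actions: Set[str]
    oversight: Optional[Oversight]    # None means nobody decided explicitly
    rollback_plan: Optional[str]
    kill_switch: bool
    evidence_logging: bool
    definitions_resolved: bool        # readiness: context products exist
    lineage_traceable: bool


def review(d: AgentDesign) -> List[str]:
    """Design-stage checks; an empty list means the design may proceed."""
    issues = []
    if not d.owner:
        issues.append("no accountable owner")
    if not d.policies:
        issues.append("no applicable policies linked")
    if not (d.definitions_resolved and d.lineage_traceable):
        issues.append("not ready: unresolved definitions or untraceable lineage")
    if not d.evidence_logging:
        issues.append("evidence logging disabled")
    if d.oversight is None:
        issues.append("oversight mode not chosen (in-the-loop vs on-the-loop)")
    if d.allowed_actions & STATE_CHANGING:
        # Agents that act need governance over what they can do, not just what they see.
        if not d.kill_switch:
            issues.append("can change records or trigger workflows but has no kill switch")
        if not d.rollback_plan:
            issues.append("can change records or trigger workflows but has no rollback plan")
    return issues


design = AgentDesign(
    name="retention_agent", owner="cx-operations", policies=["discount_policy"],
    allowed_actions={"read_crm", "send_offer"}, oversight=None, rollback_plan=None,
    kill_switch=True, evidence_logging=True,
    definitions_resolved=False, lineage_traceable=True,
)
for issue in review(design):
    print("-", issue)
```

Treating an unset oversight mode as a finding, rather than silently defaulting to one, forces the in-the-loop versus on-the-loop decision to be made on purpose.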

The goal is not bureaucracy. It is replacing governance by incident with governance by design. If the governance team first hears about a use case in a post-mortem, the organization is already behind.

Someone has to own outcome, value, and drift

Walk the expo floor and you will hear plenty of launch stories. The question worth asking peers over coffee is different: who owns that model in year two?

AI does not end at go-live. Models drift. Prompts evolve. Regulations change. Costs and risks are continuous. Yet only about a third of data leaders systematically track ROI. And for agentic AI, the most valuable outcomes (incidents avoided, escalations prevented, manual rework eliminated) are invisible to traditional ROI frameworks. As AI becomes a board-level line item, that gap becomes unsustainable.

Someone must own three things:

1. Outcomes. Who owns the business metric the AI system is designed to influence? If the model disappeared tomorrow, whose OKRs would suffer?

2. Value. Who proves AI usage generates tangible value and decides whether to scale or retire a use case? For agents, this means defining value broadly enough to capture what did not happen: the incidents avoided, the escalations prevented, the hours of manual reconciliation that quietly disappeared.

3. Drift and lifecycle. Who funds and runs ongoing monitoring for drift, fairness reassessment, prompt updates, and retraining?
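A lightweight way to start is an ownership register that makes missing roles visible across the AI portfolio. This is a minimal sketch with hypothetical system and team names; it only checks that a name is recorded for each of the three roles, not that the person has the authority or budget to act on it.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Ownership:
    system: str
    outcome_owner: Optional[str]  # owns the business metric the system should move
    value_owner: Optional[str]    # proves value; decides to scale or retire
    drift_owner: Optional[str]    # funds and runs monitoring, retraining, prompt updates


def accountability_gaps(portfolio: List[Ownership]) -> Dict[str, List[str]]:
    """Map each system to the ownership roles nobody holds."""
    gaps = {}
    for item in portfolio:
        roles = {"outcome": item.outcome_owner, "value": item.value_owner,
                 "drift": item.drift_owner}
        missing = [role for role, who in roles.items() if not who]
        if missing:
            gaps[item.system] = missing
    return gaps


portfolio = [
    Ownership("pricing_model", "vp-revenue", "vp-revenue", "ml-platform"),
    Ownership("support_agent", "cx-director", None, None),
]
print(accountability_gaps(portfolio))  # {'support_agent': ['value', 'drift']}
```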

Without clear owners for all three, decision accountability breaks down. “Human in the loop” often masks this: the distinction between human-in-the-loop (a person approves before the agent acts) and human-on-the-loop (the agent acts and a person monitors after) matters enormously. Many organizations have quietly moved to on-the-loop without explicitly deciding to. That is an unacknowledged governance gap.

Gartner increasingly frames governance as a growth engine tied to trusted AI and business impact. Ownership makes that framing operational.

The change management risk nobody budgets for

If you ask leaders who have actually scaled governance what surprised them most, the answer is rarely about technology. It is about change management.

Success stories share a pattern. Governance is reframed as a business enabler. Domain ownership and automation are embedded. Councils and sponsors have authority. Adoption follows.

What is less visible is the risk of legacy lock-in:

- AI introduced into approval flows designed for static reporting.
- Modern AI expectations layered onto fragmented tool stacks with fragmented context. When each tool maintains its own partial view of assets, definitions, and policies, change management becomes a game of manual synchronization across systems that do not share a common understanding.
- Teams asked to adopt new behaviors without aligned incentives or capacity.

The bottleneck has shifted from infrastructure to behavior. Customers increasingly ask not just for connectors and lineage, but for success plans, change frameworks, and transformation scorecards that demonstrate value.

Three questions deserve reflection:

- Are you prepared to retire approval committees that slow decisions but add little scrutiny?
- Are domain owners and stewards given time and authority to manage AI risk, not just definitions?
- Are you investing as much in training and communication as in platform licenses?

Technology will improve. Without parallel investment in change, organizations risk recreating yesterday’s governance failures on tomorrow’s AI platforms.

Make the hallway conversations count

Gartner D&A will have no shortage of sessions on AI-ready data, active metadata, and governance structures. Many are worth your time.

The real value, however, lies in whether leaders engage honestly with hard questions. Not tool questions. Ownership questions. Context questions. Questions about what they are willing to change.

If those hallway conversations shift toward context, semantics, ownership, and change, the tools and architectures will follow. The organizations that win this wave will not be the ones with the flashiest agents, but the ones that quietly build a context layer and a governance-by-design habit beneath every AI interaction.

If the questions in this article resonated, these sessions will push the conversation further:

On the context gap:

- **How to Build the Context Layer for Reliable AI Agents**: Andrés García-Rodeja (Wed March 11, 10:30 AM)
- **Using Active Metadata to Support Data Agents for AI**: Mark Beyer (Mon March 9, 4:30 PM)
- **Cargill’s Reusable Framework for Evaluating Active Metadata Platforms**: Kirsten Walter (Tue March 10, 3:00 PM)

On semantic debt and data readiness:

- **AI-Ready Data: Lessons Learned Become Practices to Follow**: Roxane Edjlali (Mon March 9, 12:45 PM)
- **Best Practices for Implementing and Maintaining a Data Catalog**: Thornton (TJ) Craig (Tue March 10, 12:30 PM)

On governance convergence:

- **Crossroads Debate: Data & Analytics Governance vs. AI Governance**: Andrew White vs. Lauren Kornutick, CIPM (Mon March 9, 2:30 PM)
- **Trust as the New Currency: A Paradigm Shift in Governance**: Guido De Simoni (Tue March 10, 10:30 AM)

On ownership and scaling AI:

- **How Is Agentic AI Impacting and Disrupting Your Data Management Discipline — Now?**: Ehtisham Zaidi (Mon March 9, 4:30 PM)
- **PPG’s Formula: Scaling Data Literacy With AI**: Robert Howden & Austin Kronz (Mon March 9, 2:05 PM)

Thanks for reading Metadata Weekly!

The Insight Index: Your Weekly Data & AI Digest

Top resources, social posts, and recommended reads, carefully curated for you.

- **Something Big Is Happening** by Matt Shumer
- **The 2028 Global Intelligence Crisis** by Citrini & Alap Shah
- **Data and AI Pulse Check: Gartner 2025 D&A Summit Reflections** by Sanjeev Mohan
- **Data Engineering After AI** by Ananth Packkildurai
- **Five Levels Between Chaos and (Almost) AI-Ready Data** by Jean-Georges Perrin
- **Data Governance Operating Model Blueprint** by John Wernfeldt
- **Why the Smartest People in Tech Are Quietly Panicking Right Now** by Shane Collins
- **The Context Problem Nobody’s Fixing in Talk to Data Initiatives (NL> SQL)** by Manoj Shanmugasundaram

That’s all for this edition. Stay curious, keep exploring, and see you all in the next one!

About Context & Chaos

Context & Chaos isn’t just a newsletter. It’s a shared community space where practitioners, builders, and thinkers come together to share stories, lessons, and ideas about what truly matters in the world of data and AI: context engineering, governance, architecture, discovery, and the human side of doing meaningful work.

Our goal is simple: to create a space that cuts through the noise and celebrates the people behind the amazing things happening in data and AI.

Whether you’re solving messy problems, experimenting with AI, or figuring out how to make data more human, Context & Chaos is your place to learn, reflect, and connect.

Got something on your mind? We’d love to hear from you. Hit Reply!

Originally published in the Context & Chaos newsletter on Substack.
