I’ve been making one argument everywhere I go this year. At data conferences, at AI summits, at internal briefings with CDOs and CIOs. The argument is always the same and the rooms are always divided. Half the people nod, half the people cross their arms, and at some point in the middle something shifts.

The Knowledge Graph Conference felt like the right place to make this argument in full, because the knowledge graph community has been passionate about this problem since before it was cool.

KGC 2026 | Source: Author & KGC

Since I gave this talk at the Knowledge Graph Conference last week, a few people have asked me to share the deck. I admired what Jessica Talisman did with her KGC talk this year, which was to publish the deck and walk through the thinking behind it, so I’m borrowing her format, with credit.

Here’s my full deck: “The Context Layer: Knowledge Graph’s Second Act.” I’m walking through my thoughts, section by section. Feel free to take it, forward it, or even use it to make the same argument in your own organization.

My diagnosis: The skeptics are right about AI

I opened by giving the skeptics the floor.

“AI is not really changing that much.”

“It’s all just hype.”

“Things are still the same as before.”

“AI investments haven’t had much returns.”

Skepticism on AI | Source: Author

I put these lines on this slide, unattributed. In most rooms, a few people quietly relax. Finally, someone is saying what they’ve been thinking.

And they’re not wrong, which is the point of the next slide.

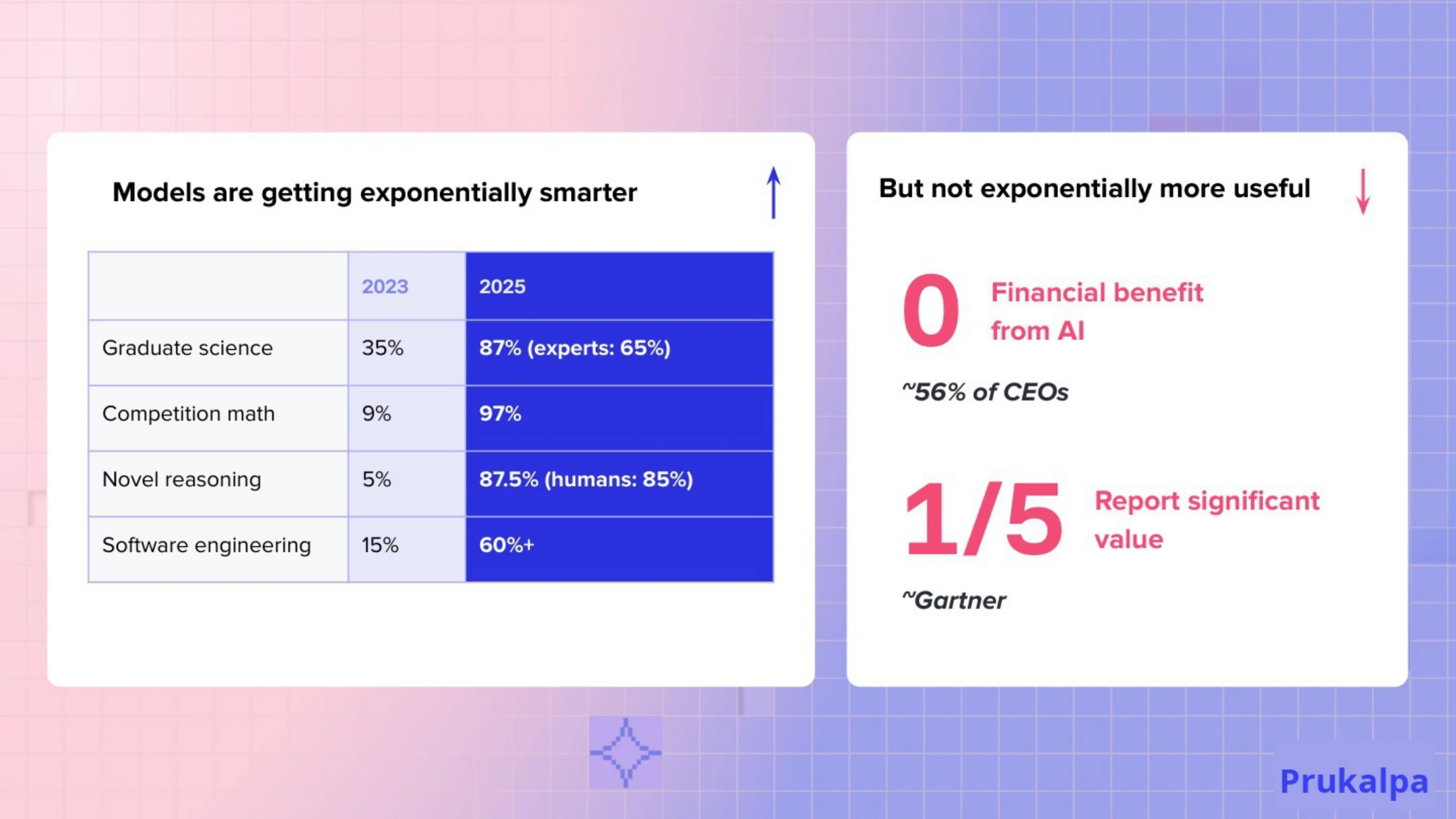

Bar exam performance: 2023, models were failing. 2025, top 1%. Novel reasoning benchmarks: 5% to 87.5%. Graduate science exams: from 35% to 87%, against a domain expert baseline of 65%. These are not incremental gains. These are step-changes happening in eighteen-month windows.

And yet: 56% of CEOs report zero financial benefit from AI. Only one in five reports significant value. METR’s randomized controlled trial found that experienced developers using AI tools were 19% slower, not faster, despite believing they were 20% faster.

Both things are true. The capability is real. The returns are not materializing. That is not a contradiction. It is a diagnosis waiting to be made.

Slide 4 named it: Models are getting exponentially smarter, but not exponentially more useful.

That gap was what the rest of the talk was about.

Performance = Intelligence × Context

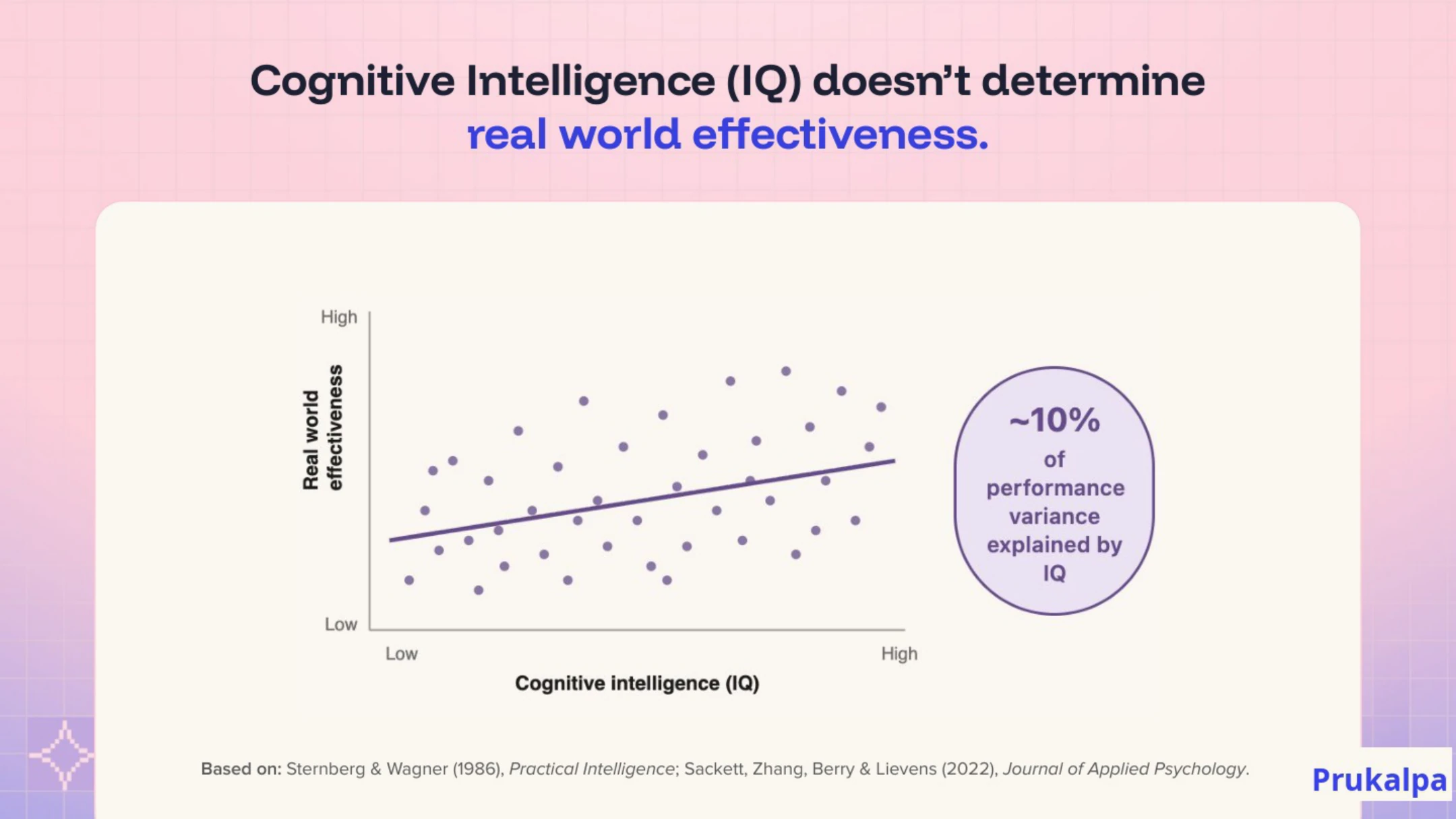

I started this section with a piece of cognitive science that most AI strategists have never thought about. Cognitive intelligence, the thing IQ tests measure, accounts for roughly 10% of the variance in real-world job performance. Only 10%.

Think about your best employee. Does she have the highest IQ in the room? Almost never. She is the person who has accumulated the most context about how things actually work here: your specific customers, your specific edge cases, your specific “for this client, we do it differently.” That is performance, not raw intelligence.

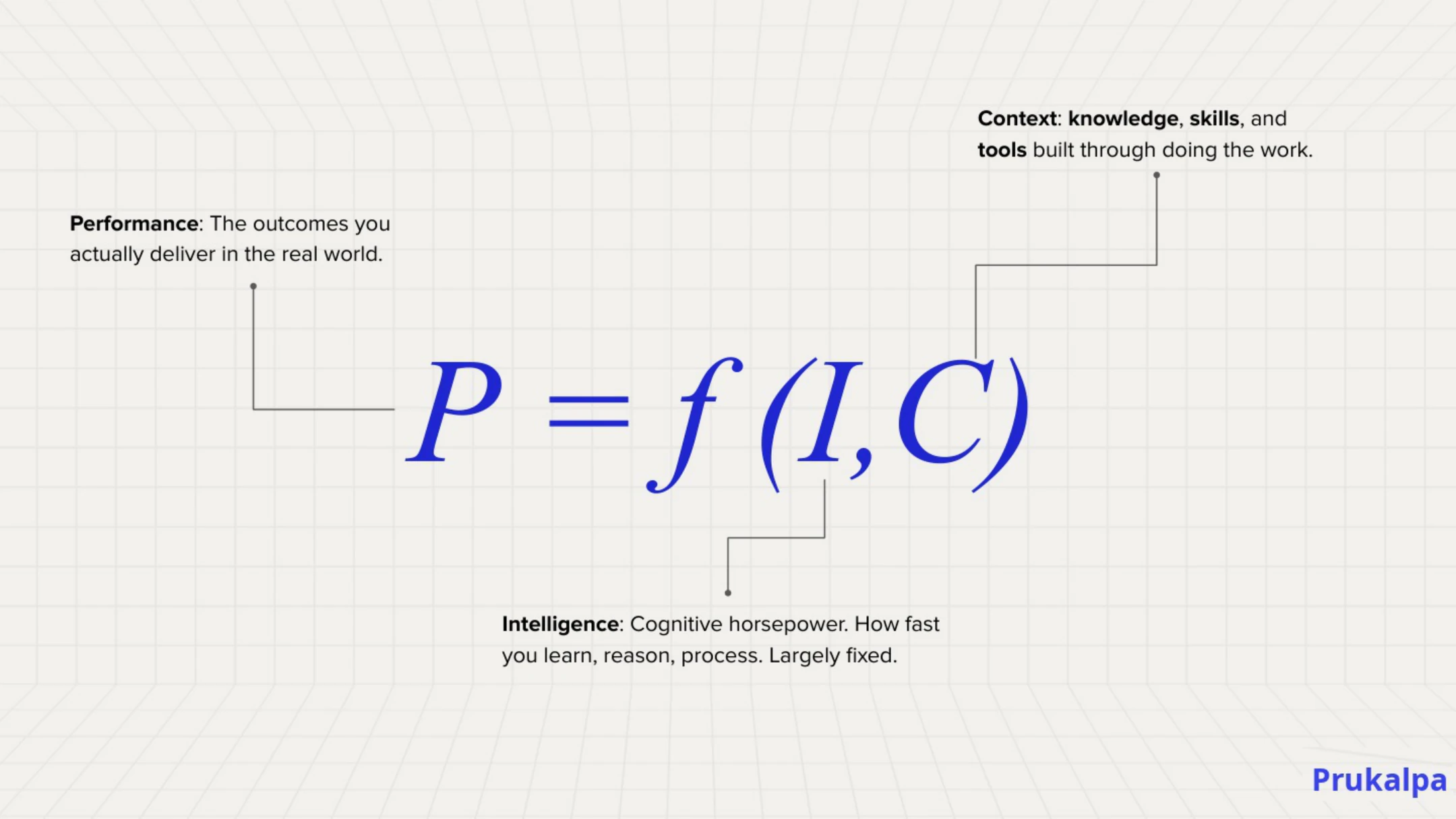

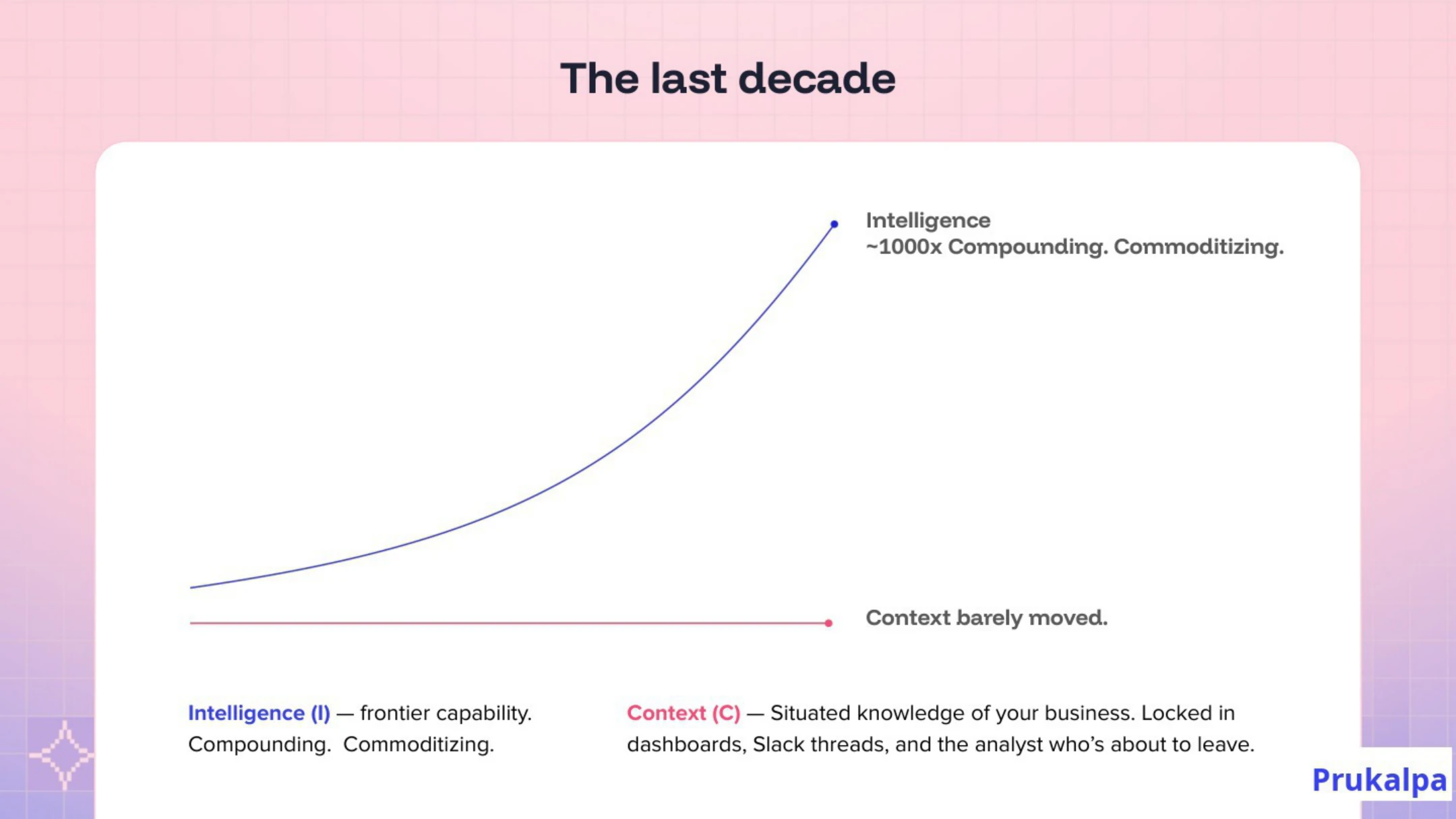

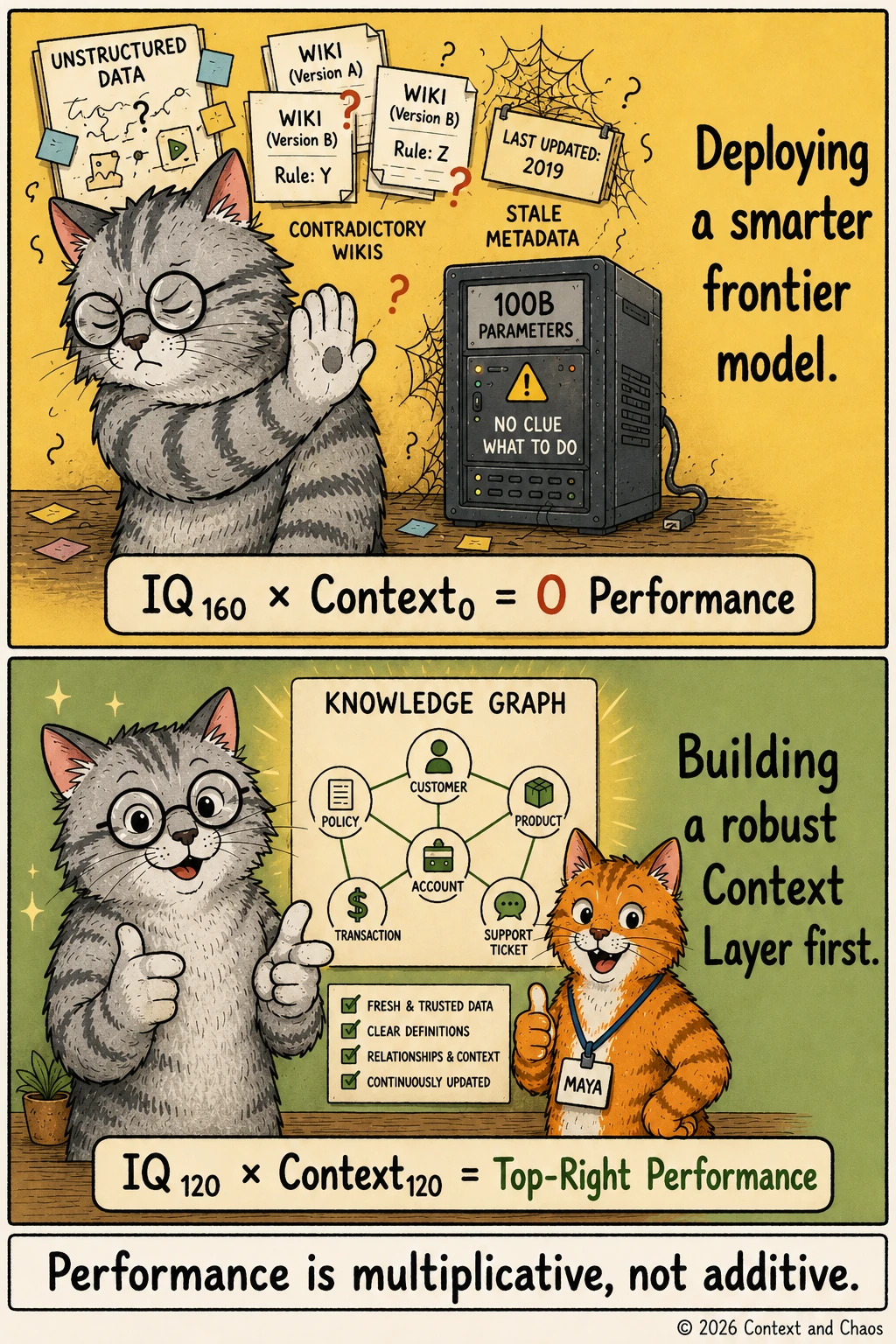

Slide 6 translated this into a formula:

Performance = Intelligence × Context

It is multiplicative for a reason. A context score of zero makes performance zero, regardless of how capable the model is. And a smarter model operating on fractured context does not produce fewer errors. It produces more elaborate, more persuasive, more dangerous errors.
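To make the difference between a product and a sum concrete, here is a toy comparison. It assumes, purely for illustration, that intelligence and context can each be scored on a 0-to-1 scale; the scale, the scenario values, and the additive alternative are hypothetical and exist only to contrast with the slide’s formula.

```python
# Toy comparison of "Performance = Intelligence × Context" with an additive model.
# Assumption: both factors are scored on a hypothetical 0-to-1 scale.


def multiplicative(intelligence: float, context: float) -> float:
    """Performance as the product of the two factors, as in the slide's formula."""
    return intelligence * context


def additive(intelligence: float, context: float) -> float:
    """A simple average of the two factors, shown only for contrast."""
    return (intelligence + context) / 2


# Hypothetical scenarios: (intelligence score, context score).
scenarios = {
    "frontier model, no context": (0.95, 0.0),
    "frontier model, rich context": (0.95, 0.9),
    "modest model, rich context": (0.5, 0.9),
}

for name, (i, c) in scenarios.items():
    print(f"{name}: product={multiplicative(i, c):.3f}, average={additive(i, c):.3f}")
```

Under the average, the capable model with zero context still earns nearly half marks; under the product it scores exactly zero, which is the point the formula is making.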

Slide 7 showed what happened over the last decade. Intelligence advanced by roughly three orders of magnitude, from GPT-2 to the frontier today. Context barely moved. It remained locked in dashboards, employee knowledge, and the heads of people who have been at the company long enough to know where the bodies are buried.

You can buy intelligence at API prices. You cannot buy context at any price.

Slide 8 named the next frontier: contextual intelligence. Intelligence that is not contextual and practical is not really intelligence at all. I have started saying this twice in talks because it does not land the first time. Raw capability is table stakes. What enterprises are actually missing is the other variable in the formula.

What it takes to be effective: Meet Maya, briefly

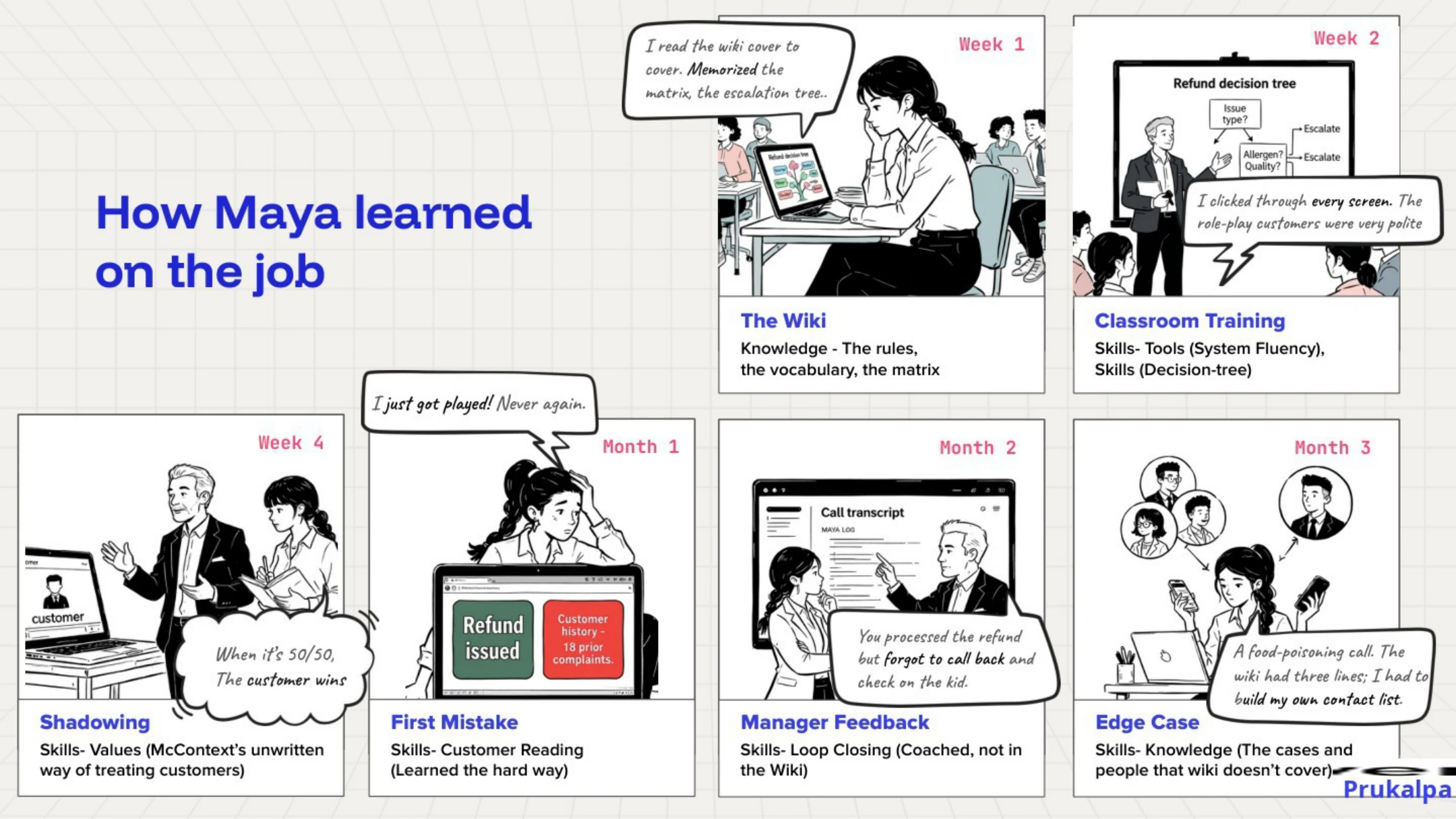

Maya is the through-line of the talk. She is a customer support rep at a burger chain in Chicago, and the deck spends thirteen slides on her because slide 22 only lands if the audience has first lived through how she became effective. The slides are worth reading on their own. For our purposes here, the compressed version is this.

Maya takes a call from an angry mother whose child has just had an allergen exposure from an order that was marked allergen-free in the app. The mother is furious. The child is fine. Two items from the order are also missing. In ninety seconds, Maya resolves the entire situation, de-escalates a customer who came in ready to post on social media, and ends the call with a five-star review.

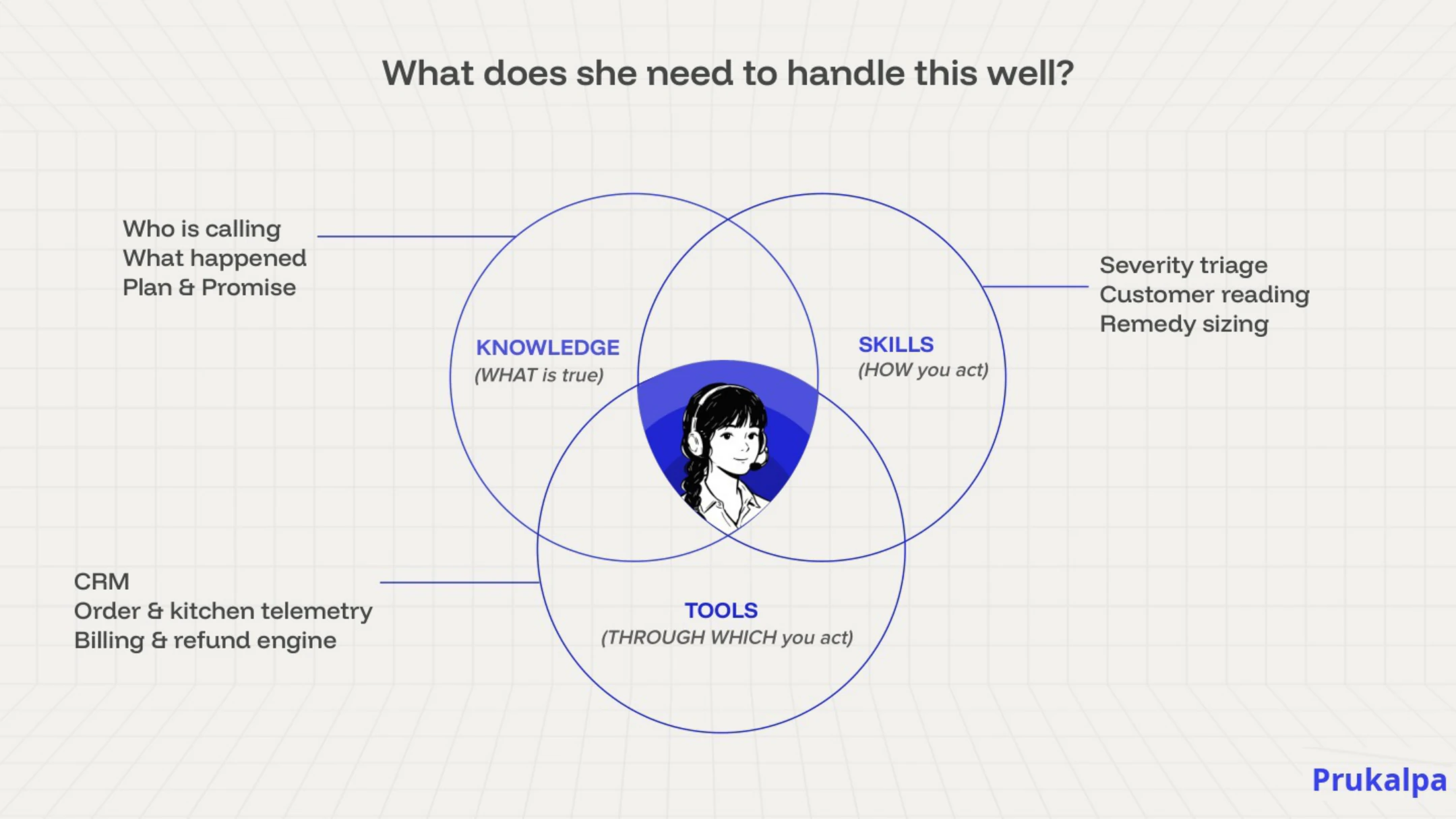

The question that matters is how she got there. She needed four things to handle that call.

- Knowledge of what is true: the allergen policy, the refund procedure, the escalation criteria.

- Skills for how to act: de-escalation technique, tone calibration, the judgment to know when an apology is enough and when it is not.

- Tools through which to act: the CRM, the order system, the refund workflow.

- Context about the specific situation: a first-time customer or someone who has complained seventeen times; a pattern at this location or a one-off; a mother who wants money back or a mother who wants to know this will not happen again.

She did not arrive with that capability. She built it over four months, through a sequence every organization manages intuitively for human employees and almost never thinks about for AI agents. Documentation. Classroom training. Shadowing a senior rep. Making mistakes under supervision. Getting feedback on the edge cases she got wrong. Slowly building the pattern recognition that tells her when a situation is routine and when it is not. By month four, Maya is the best rep on the floor. Not because she got smarter. Because she built context.

AI today is Maya on Day 1

Everything before this slide was prologue.

AI today is Maya on Day 1.

Every AI agent your organization has deployed (the chat assistant, the data analyst, the document processor) arrived with extraordinary capability and no context. It knows everything in general and nothing about your business specifically.

It has never shadowed Jamie, the senior rep Maya learned from. It does not know the “50-50, customer always wins” rule. It has not made the refund mistake that taught Maya to check customer history first. It has never been corrected, never been coached, never accumulated the institutional knowledge that lives in no wiki, no escalation tree, no onboarding document.

It is Day 1. Every single time.

This is the slide where the room usually goes quiet. People realize they have been asking the wrong question: “Is this model good enough?” The real question is “Have we given it anything to work with?”

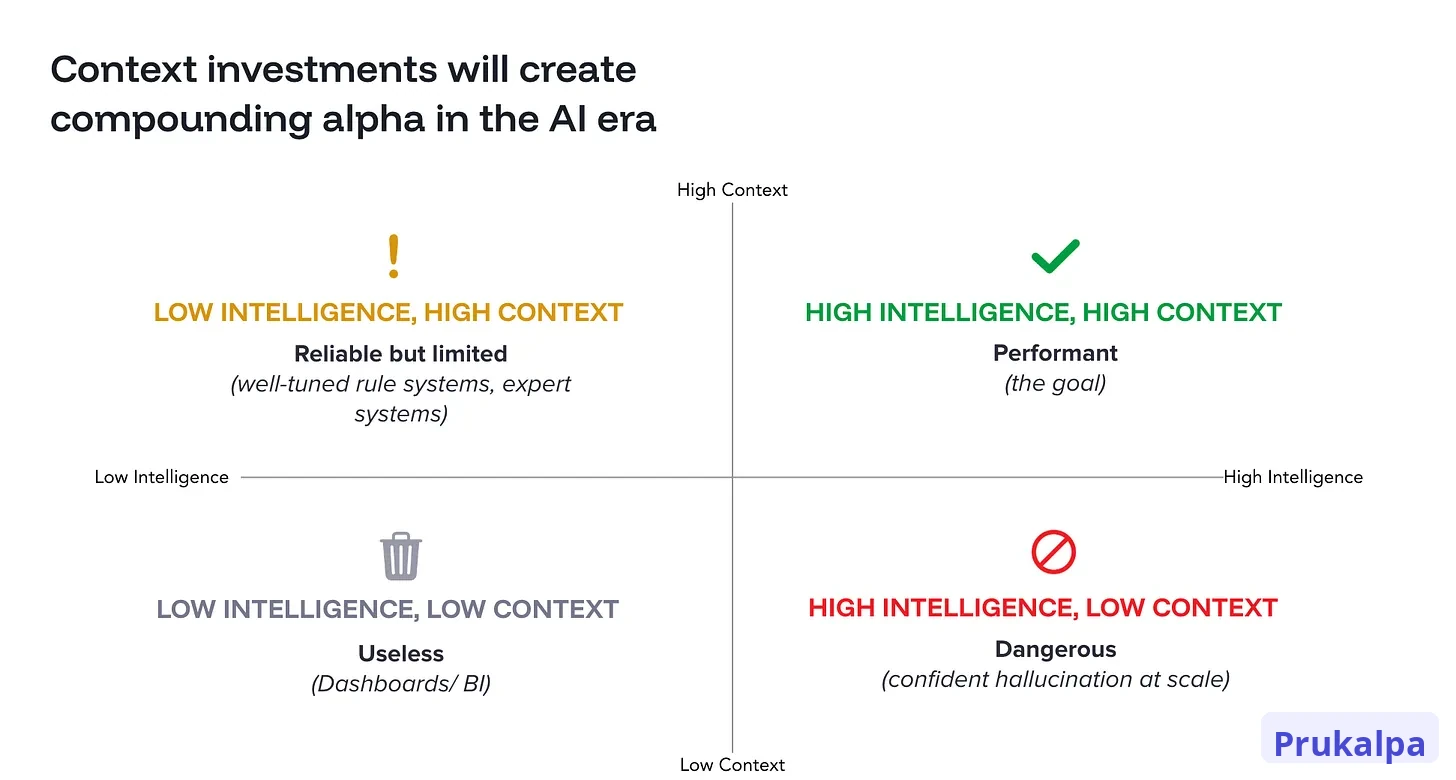

Quadrant: The four squares of failure modes

Note: I’ve added one slide here from an earlier version of this talk I gave at another conference, because it is the cleanest diagnostic in the argument and I thought this slide deserved a second life.

If “AI is Maya on Day 1” named the diagnosis, the quadrant names the failure modes. The rest of the argument lands harder once you can see the four squares.

Two axes: intelligence on one, context on the other. Let’s walk through the four squares from the bottom-left corner, where every enterprise started, around to the top-right corner, where we are trying to get.

Bottom-left: Low Intelligence, Low Context. Useless. This is the dashboard era. Static reports, fixed queries, no reasoning, no awareness of the business beyond what was hard-coded into the schema. Most enterprise BI lives here. It is reliable in the sense that it does not surprise you, and useless in the sense that it cannot answer any question it was not built to answer.

Top-left: Low Intelligence, High Context. Reliable but limited. This is the world of well-tuned rule systems and classical expert systems. The rules encode the business correctly. The system applies them consistently. It cannot reason outside the rules, which means every new situation requires a human to extend the system. It’s useful for narrow domains, but does not scale to the breadth of work a modern enterprise needs to automate.

Bottom-right: High Intelligence, Low Context. Dangerous. This is where most AI agents in production live today. The model is capable, but it has no idea what “active customer” means in your company, which definition of revenue the finance team uses, or which exceptions override the official refund policy. The output is confident, articulate, and frequently wrong. The danger is not that the agent fails visibly. The danger is that it fails persuasively. Confident hallucination at scale is the failure mode that turns AI from an investment into a liability.

Top-right: High Intelligence, High Context. Performant. This is the goal. The agent has the reasoning capability of a frontier model and the situated knowledge of an experienced employee. It knows the rules. It knows the exceptions to the rules. It knows when the situation calls for the exception. This is the quadrant where AI investment finally compounds, and it is the quadrant almost nobody is in yet.

Most enterprises are not stuck in the bottom-right square because their models are not smart enough. The models are plenty smart. They are stuck because they have invested in one axis for a decade and ignored the other. The fix is not a better model. The fix is the other axis.

What the context layer actually is

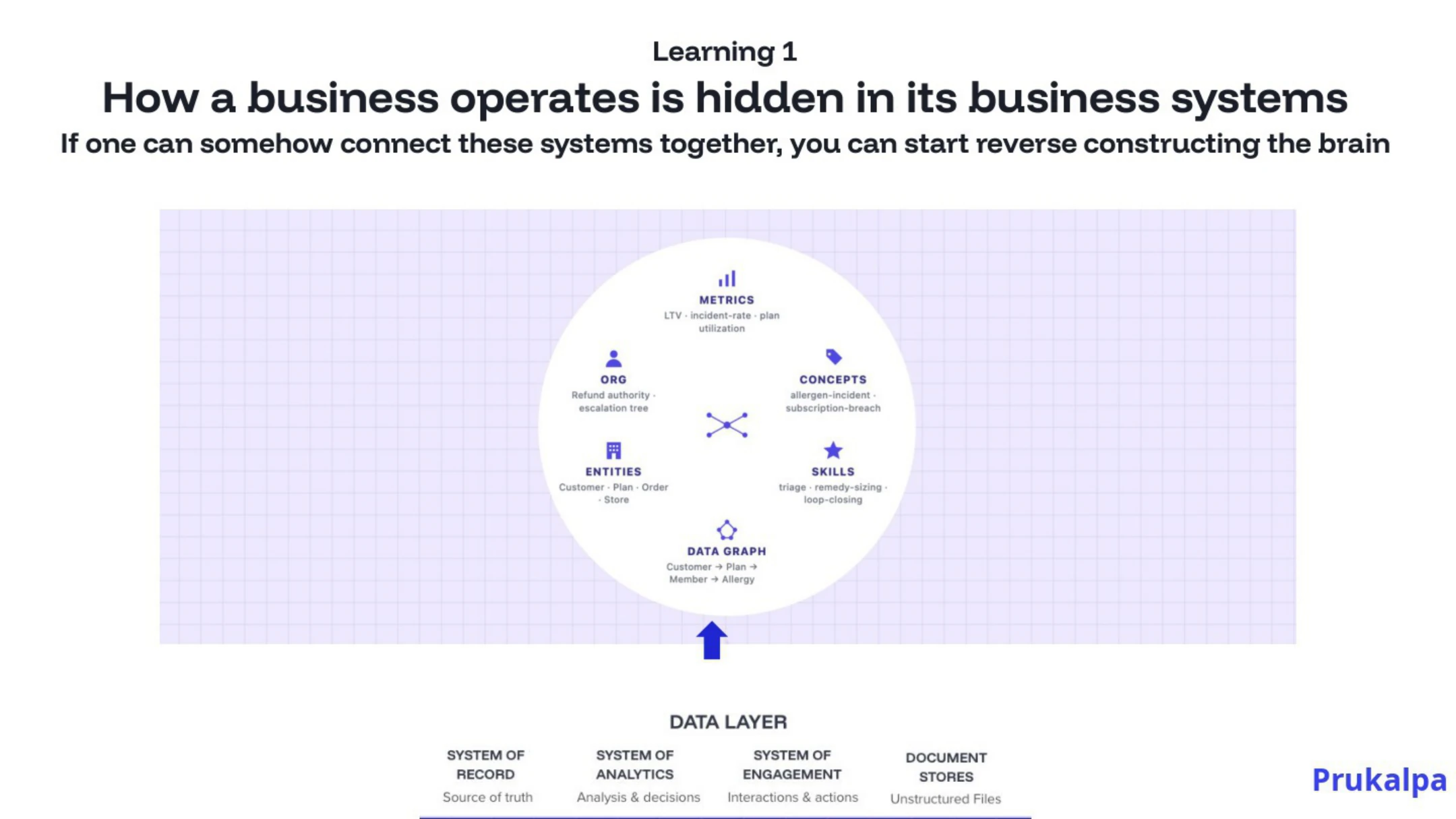

The context layer is the organization’s living brain. It is the layer that sits between your data estate and your AI agents, encoding everything Maya accumulated over four months: metrics and their definitions, concepts and the rules that govern them, entities and how they relate across systems, skills and the judgment calls embedded in them, and the data graphs that connect all of it.

Concretely: the terminology layer defines what “qualified lead” means in this organization, for this AI agent. Entity resolution recognizes that the “customer” in one system, the “account” in the data warehouse, and the “contact” in the CRM are the same entity. The business rules capture the edge cases and exceptions that took the human team three years to encode and that no new hire learns on day one.

This is not theoretical. The organizations doing this well have already built versions of it. Most have not, which is why their agents are still on Day 1.
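To make the entity-resolution piece less abstract, here is a minimal sketch of grouping the “customer”, “account”, and “contact” records described above into one entity. The system names, record fields, and IDs are hypothetical, and matching on a normalized email address is a deliberately naive rule chosen for illustration; real entity resolution usually weighs several signals.

```python
from dataclasses import dataclass, field


@dataclass
class Record:
    system: str      # hypothetical source system name
    kind: str        # what that system calls the thing: "customer", "account", "contact"
    record_id: str
    email: str


@dataclass
class Entity:
    key: str
    records: list[Record] = field(default_factory=list)


def normalize_email(email: str) -> str:
    """Trim whitespace and lowercase, so trivially different spellings match."""
    return email.strip().lower()


def resolve(records: list[Record]) -> dict[str, Entity]:
    """Group records from different systems into one entity per normalized email.

    Deliberately naive: a single matching key, no fuzzy matching, no conflict handling.
    """
    entities: dict[str, Entity] = {}
    for rec in records:
        key = normalize_email(rec.email)
        entities.setdefault(key, Entity(key)).records.append(rec)
    return entities


# Hypothetical records for the same person as seen by three systems.
records = [
    Record("support", "customer", "C-17", "Dana@Example.com"),
    Record("warehouse", "account", "ACC-9001", " dana@example.com"),
    Record("crm", "contact", "CT-442", "dana@example.com"),
]

for key, entity in resolve(records).items():
    print(key, [(r.system, r.kind, r.record_id) for r in entity.records])
```

The three records collapse into a single entity keyed by `dana@example.com`. Agents downstream can then reason about one person instead of three unrelated rows.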

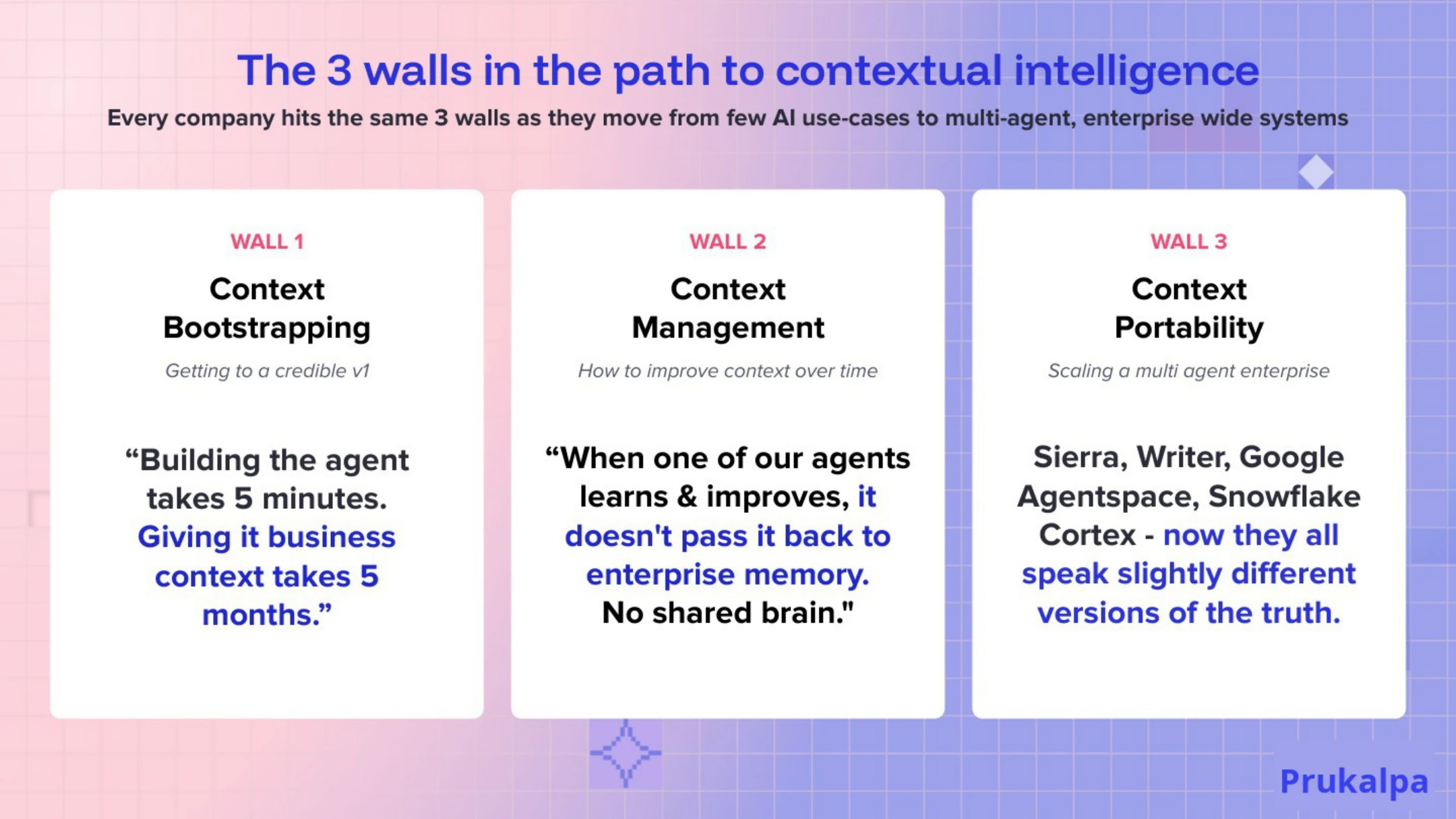

The three walls every enterprise hits

This is the slide CDOs and CIOs tend to photograph.

These are the three walls every enterprise hits when they start scaling AI agents. They’re organizational problems, not model problems.

Wall 1: Building the agent takes five minutes. Adding business context takes five months.

Everyone can spin up an agent in an afternoon. The capability has been democratized, but the context infrastructure has not. The five-month clock starts the moment you realize the agent does not know what your organization means by “revenue” or “customer” or “resolved.” From there, the work is hand-mapping forty columns to a semantic model, writing definitions for what “active customer” means when the marketing team and the finance team disagree, and encoding the three exceptions to the refund policy that everyone in support knows but nobody wrote down.

Wall 2: Agents do not share their learnings.

When Agent 1 gets corrected, Agent 2 does not know about it. Unless you have built the infrastructure for corrections to propagate, every agent you deploy starts from zero, not just on Day 1 but forever. A single agent may have memory, but a group of agents has amnesia. The tenth agent knows no more about your specific business than the first one did. This is the compounding tax on every organization that has skipped the context layer.
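One way to picture the missing infrastructure is a correction store that every agent reads before acting, so a correction given to one agent is visible to all of them. This is a minimal in-memory sketch; `CorrectionStore`, `Agent`, the agent names, and keying corrections by topic are hypothetical choices for illustration, and a production version would need persistence, human review, and access control.

```python
from dataclasses import dataclass, field


@dataclass
class CorrectionStore:
    """Shared, in-memory store of human corrections, keyed by topic (hypothetical design)."""
    corrections: dict[str, list[str]] = field(default_factory=dict)

    def add(self, topic: str, correction: str) -> None:
        self.corrections.setdefault(topic, []).append(correction)

    def lookup(self, topic: str) -> list[str]:
        # Return a copy so callers cannot mutate the shared list by accident.
        return list(self.corrections.get(topic, []))


@dataclass
class Agent:
    name: str
    store: CorrectionStore  # every agent points at the same shared store

    def receive_correction(self, topic: str, correction: str) -> None:
        # The correction is written to the shared store, not to this agent's private memory.
        self.store.add(topic, correction)

    def context_for(self, topic: str) -> list[str]:
        # Before acting, pull everything any agent has been taught about this topic.
        return self.store.lookup(topic)


shared = CorrectionStore()
agent_1 = Agent("refund-agent", shared)
agent_2 = Agent("billing-agent", shared)

agent_1.receive_correction("refunds", "Check customer history before issuing a refund.")
print(agent_2.context_for("refunds"))
```

The design choice that matters is where the correction is written. If it lands in one agent’s memory, you get the amnesia described above; if it lands in a shared layer, the second agent starts where the first one left off.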

Wall 3: Multi-agent semantic conflicts.

When multiple agents work the same domain, they will inevitably interpret ambiguous concepts differently. One agent’s “revenue” is closed-won; another’s is recognized. In isolation, both produce plausible outputs. At the seams, when they hand off to each other or when a human tries to reconcile their outputs, the conflict surfaces. Confidently, persuasively, and wrongly. Your finance team spends its week reconciling the variance instead of doing finance.
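A simple guard is to have every agent bind shared terms to definitions in one canonical registry and to flag any binding that disagrees before outputs cross a seam. The sketch below is hypothetical throughout: the agent names, the registry contents, and treating a definition as a plain string. It only detects the conflict; which definition belongs in the registry is a decision for the finance team, not the code.

```python
# Hypothetical canonical registry: one agreed definition per shared term.
CANONICAL = {
    "revenue": "recognized revenue",
}

# What each agent currently uses for the same term (illustrative values).
agent_bindings = {
    "sales-forecast-agent": {"revenue": "closed-won bookings"},
    "finance-close-agent": {"revenue": "recognized revenue"},
}


def find_conflicts(
    canonical: dict[str, str], bindings: dict[str, dict[str, str]]
) -> list[tuple[str, str, str, str]]:
    """Return (agent, term, agent_definition, canonical_definition) for every mismatch.

    Terms missing from the registry are reported with "<undefined>" as the canonical value.
    """
    conflicts = []
    for agent, terms in bindings.items():
        for term, definition in terms.items():
            expected = canonical.get(term, "<undefined>")
            if definition != expected:
                conflicts.append((agent, term, definition, expected))
    return conflicts


for agent, term, used, expected in find_conflicts(CANONICAL, agent_bindings):
    print(f"{agent}: '{term}' means '{used}', registry says '{expected}'")
```

Catching the mismatch at bind time is cheaper than discovering it as a variance in the month-end numbers.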

These three failure modes appear in every enterprise deployment I have watched across industries. They are the pattern, not edge cases.

Four things that compound when you get past the walls

Four things have become clear from my time watching enterprises build context infrastructure at scale. Let’s take them in order, because each one builds on the last.

1. Business operations are already encoded in your systems. The way your organization actually works is legible in your data, if you read it the right way. The lineage in your warehouse encodes which tables feed which reports, and therefore which definitions are load-bearing. The SQL your analysts wrote encodes the joins, the filters, and the business logic that produces every number on every dashboard. The descriptions your governance team curated encode the semantic intent of each field, even where the coverage is uneven. Modern AI can read all of that, synthesize across it, and produce a first draft of your business’s semantic map in hours, not months. The same context work that used to take five months can be bootstrapped in a week and refined by your domain experts in their own language, on the terms they care about. That changes the unit economics of context from artisanal to industrial.

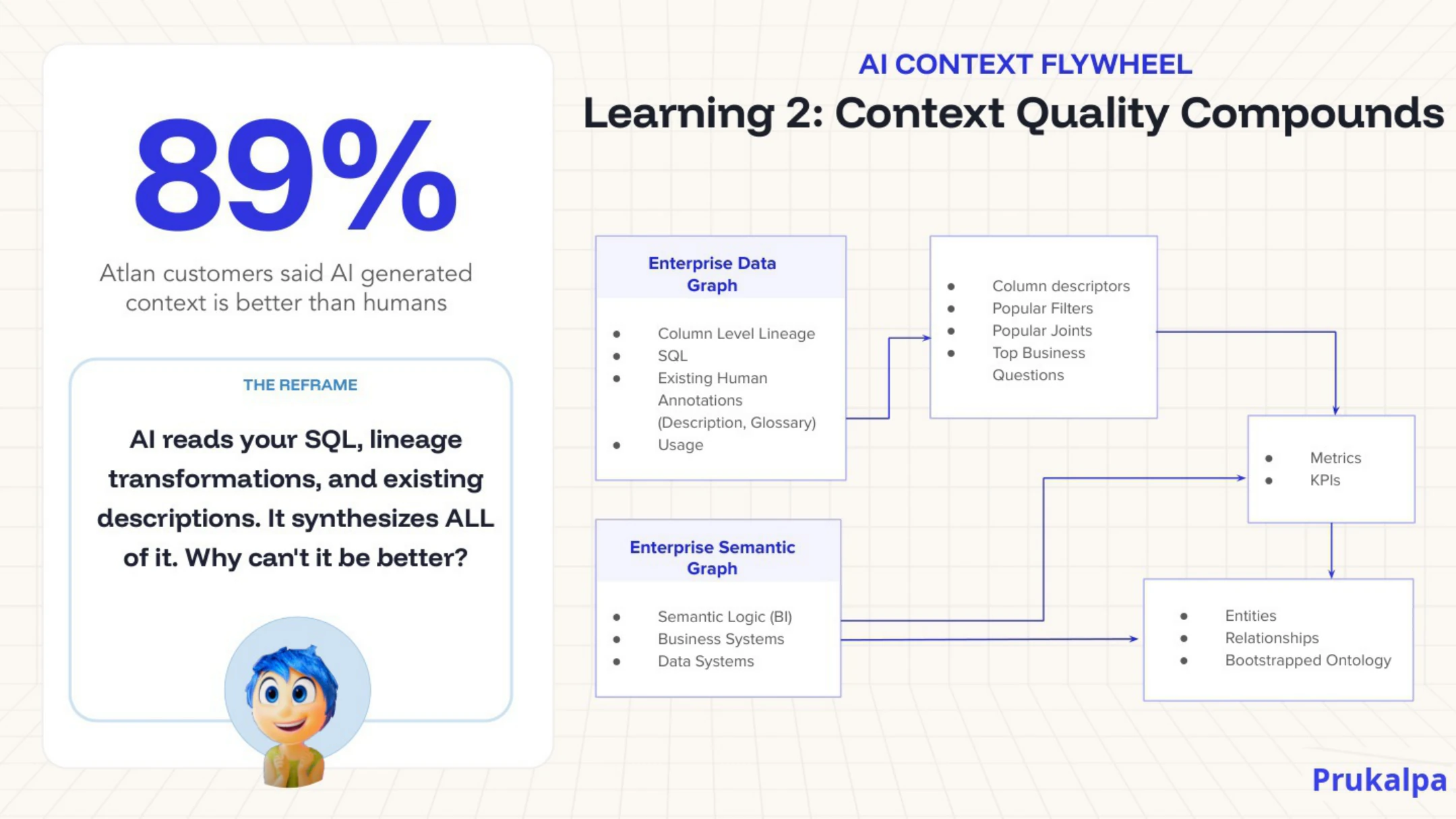

2. Context quality compounds. The first pass is rough. Each refinement is informed by the last. When AI generates a draft definition and a human refines it, the next definition is generated against the refined version, and the quality curve climbs. The data is solid: AI-generated context, validated by humans, ends up rated higher quality than human-authored context done from scratch, because the AI sees the whole graph while the human only sees the field. This is a flywheel, not a one-time project. The enterprises that recognized this early are now meaningfully ahead of the ones that did not.

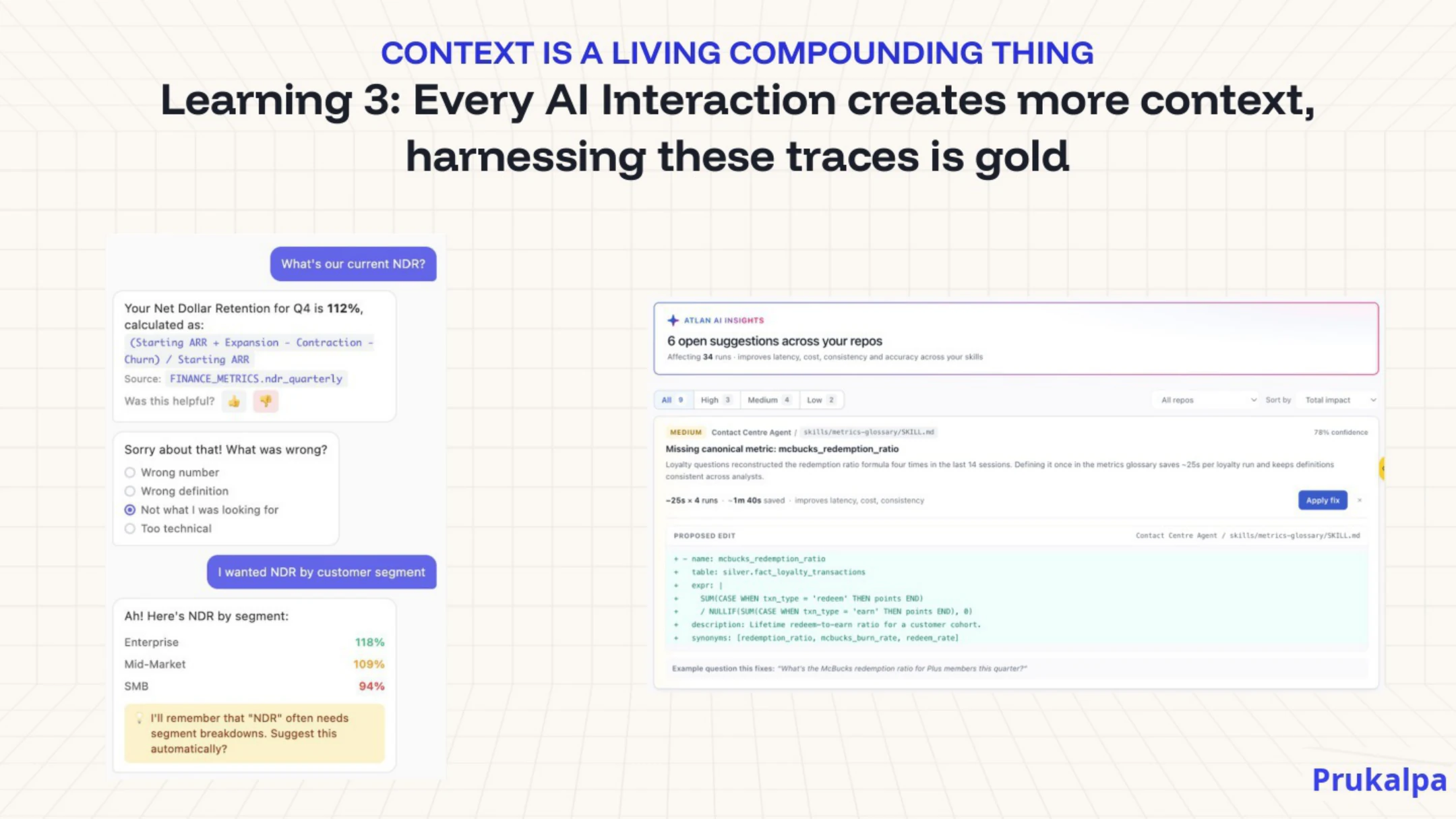

3. Interactions generate context, and the traces are gold. Every time an agent operates in production and a human reviews the output, that interaction is signal. The correction is data. The approval is data. The “that’s not how we do it here” is data. Most organizations are discarding this signal. The ones making real progress are capturing it. The enterprises that capture traces and feed them back into the context layer are building a moat that nobody outside the company can replicate.

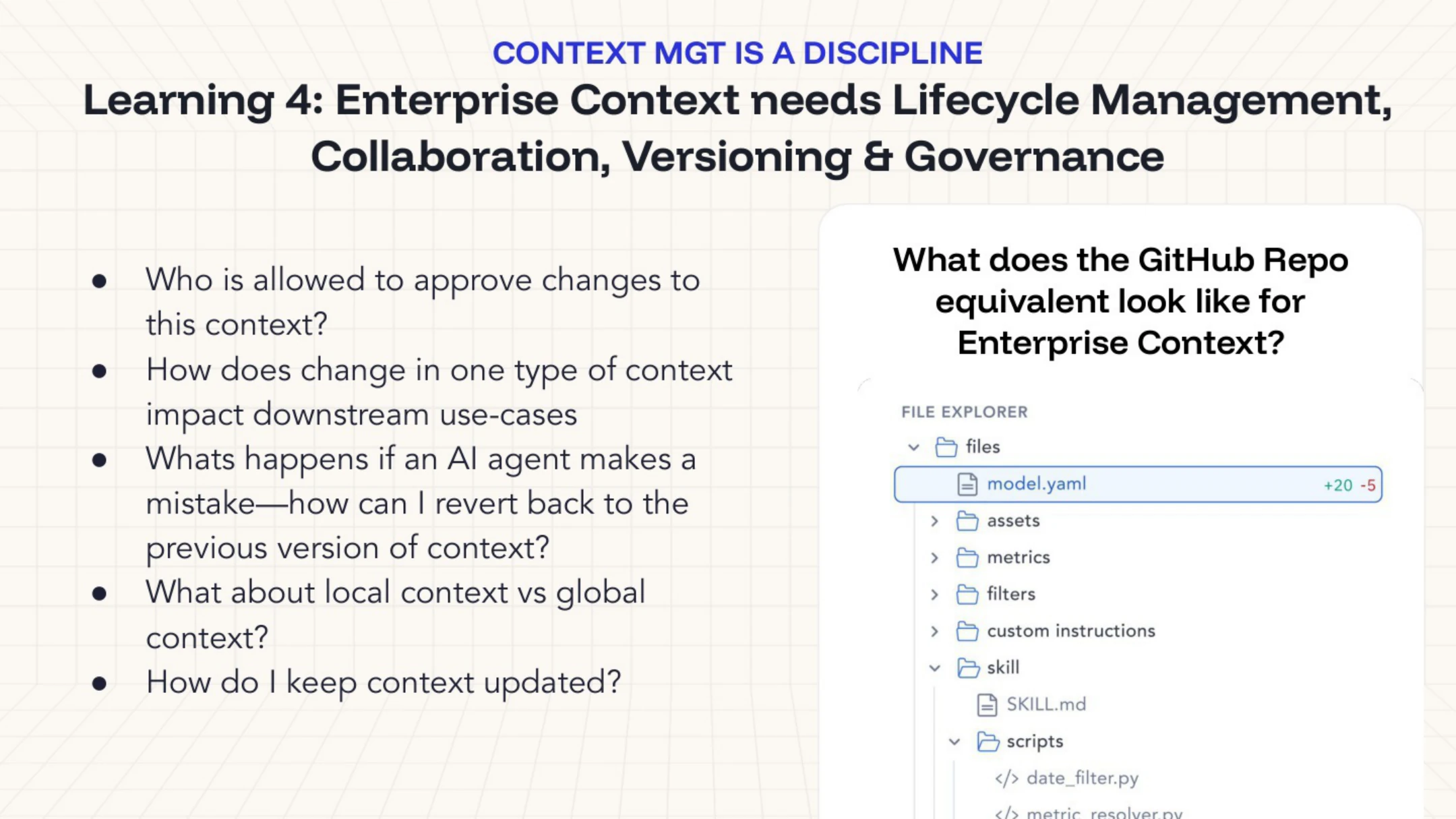

4. Enterprise context requires lifecycle management. Context decays. Your business changes. A metric definition updated in a team wiki, a product line restructured, a compliance carve-out quietly expired. The context layer needs to be maintained the way a codebase is maintained. Versioned, tested, governed. If the definition of “active customer” changes, who approves it? What downstream agents need to be notified? How do you roll back if the new definition turns out to be wrong? If you cannot answer those questions today, you are not yet running context as infrastructure. You are running it the way every company ran code before version control, which is to say, on hope.
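The three questions above (who approves a change, which agents need to know, how to roll back) map naturally onto a small versioned registry. This is a minimal in-memory sketch under stated assumptions: `DefinitionRegistry`, the subscriber model, the `outbox` list standing in for a real notification channel, and the example definitions of “active customer” are all hypothetical. A real system would persist history and route approvals through an actual review workflow.

```python
from dataclasses import dataclass, field


@dataclass
class DefinitionVersion:
    version: int
    text: str
    approved_by: str


@dataclass
class DefinitionRegistry:
    """Hypothetical versioned registry for business definitions."""
    history: dict[str, list[DefinitionVersion]] = field(default_factory=dict)
    subscribers: dict[str, set[str]] = field(default_factory=dict)
    outbox: list[str] = field(default_factory=list)  # stand-in for real notifications

    def subscribe(self, term: str, agent: str) -> None:
        """Register an agent that depends on this term."""
        self.subscribers.setdefault(term, set()).add(agent)

    def publish(self, term: str, text: str, approved_by: str) -> DefinitionVersion:
        """Append a new approved version and notify every dependent agent."""
        versions = self.history.setdefault(term, [])
        new = DefinitionVersion(len(versions) + 1, text, approved_by)
        versions.append(new)
        self._notify(term, new)
        return new

    def current(self, term: str) -> DefinitionVersion:
        return self.history[term][-1]

    def rollback(self, term: str, approved_by: str) -> DefinitionVersion:
        """Re-publish the second-to-last version as a new version; history is never deleted."""
        versions = self.history[term]
        if len(versions) < 2:
            raise ValueError(f"no earlier version of {term!r} to roll back to")
        return self.publish(term, versions[-2].text, approved_by)

    def _notify(self, term: str, version: DefinitionVersion) -> None:
        for agent in sorted(self.subscribers.get(term, set())):
            self.outbox.append(f"{agent}: '{term}' is now v{version.version}")


registry = DefinitionRegistry()
registry.subscribe("active customer", "churn-agent")
registry.subscribe("active customer", "support-agent")
registry.publish("active customer", "Ordered in the last 90 days", approved_by="finance-lead")
registry.publish("active customer", "Ordered in the last 30 days", approved_by="marketing-lead")
registry.rollback("active customer", approved_by="finance-lead")
print(registry.current("active customer"))
print(registry.outbox)
```

Rolling back by publishing a new version, rather than deleting the bad one, keeps the full history auditable, much like a revert commit in git. This simple version always restores the second-to-last entry, so a second rollback would re-apply the change that was undone.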

Context is the new content

I ended with a bet.

Context will become as foundational as content was during the internet era.

In the early 2000s, every organization scrambled to get its content online. At first it felt optional, then it felt urgent, and then it became table stakes. You simply could not operate without a web presence, a content strategy, a digital footprint.

Context is following the same curve. Right now, it feels optional. Within three years, it will feel urgent. Within five, you will not be able to run an enterprise AI strategy without it.

Context is the new content | Source: Author

The knowledge graph community has been doing this work for decades: quietly building our understanding of ontologies, taxonomies, semantic models, controlled vocabularies, entity resolution, and lineage. The discipline of asking what things actually mean, who decides, and how that meaning travels across systems. This was unglamorous work that got cut from budgets or rolled into data engineering or pushed into governance teams that were treated as cost centers. But this community kept doing it because they knew it mattered.

The Knowledge Graph Conference Session | Source: Author

Now the rest of the field is arriving at conclusions this community reached years ago. “Memory” papers are rediscovering typed relations. “Context engineering” is reinventing things that have names in W3C standards. Every frontier lab is, in some form, building toward what knowledge graph practitioners have been telling enterprises to build all along.

Because the systems being built today have orders of magnitude more reach and consequence, this discipline is more important than ever before. The fundamental decisions about what “customer” means, what “revenue” means, what a “decision” means, are about to be made for every enterprise in the world. They will be made either by the people who have spent their careers thinking carefully about meaning, or by the people who are just now discovering its value. The first outcome is much better.

My hope for this community is that it refuses to become a historical footnote. Rather than disappearing into the AI noise, I want the knowledge graph community to lead the conversation and take a central role in shaping our AI-forward future. The room at KGC was full of people who have done the difficult, unglamorous work to make today possible. After quietly building powerful foundations of meaning, this community is best positioned to lead the way to successful enterprise AI.

The Cats of Context & Chaos

Thanks for reading Context and Chaos!

About Context & Chaos

Context & Chaos isn’t just a newsletter. It’s a shared community space where practitioners, builders, and thinkers come together to share stories, lessons, and ideas about what truly matters in the world of data and AI: context engineering, governance, architecture, discovery, and the human side of doing meaningful work.

Our goal is simple: to create a space that cuts through the noise and celebrates the people behind the amazing things that are happening in the data & AI domain.

Whether you’re solving messy problems, experimenting with AI, or figuring out how to make data more human, Context & Chaos is your place to learn, reflect, and connect.

Got something on your mind? We’d love to hear from you. Hit Reply!
