Make your data AI-ready.

Your AI-ready catalog, live for your humans and AI in 2 weeks.

Going from zero data context to a catalog your team trusts takes years of manual work. Context Agents do it in weeks. Previous cohorts generated 2M+ metadata updates, saving over 210,000 hours of manual work.

210,000+ hours of manual work. Done in two weeks.

Context is the hardest part

You've been here. Your sources are connected. Your governance policies are set. But building the actual context — accurate descriptions, metrics glossary, business lineage, quality signals — is the work that stalls every rollout. It depends on the 3–4 people who truly understand the data, and they're too busy running pipelines. Context Agents change the math.

It takes forever

Manually documenting thousands of assets takes 12–18 months. And by the time you finish, half of it is already stale. The work never ends.

It depends on too few people

The knowledge lives in the heads of 3–4 people. They're the only ones who know what tbl_cx_v3_final actually means, and they're too busy to write it all down.

Descriptions alone aren't enough

Even when assets are documented, people still don't trust them. They need a metrics glossary, quality signals, business lineage, and freshness: context that goes far beyond a one-line description.

It never stays current

Every week, new tables and dashboards appear. Schemas change. Teams rename things. The context you painstakingly built drifts out of date faster than anyone can maintain it.

Watch Context Agents work

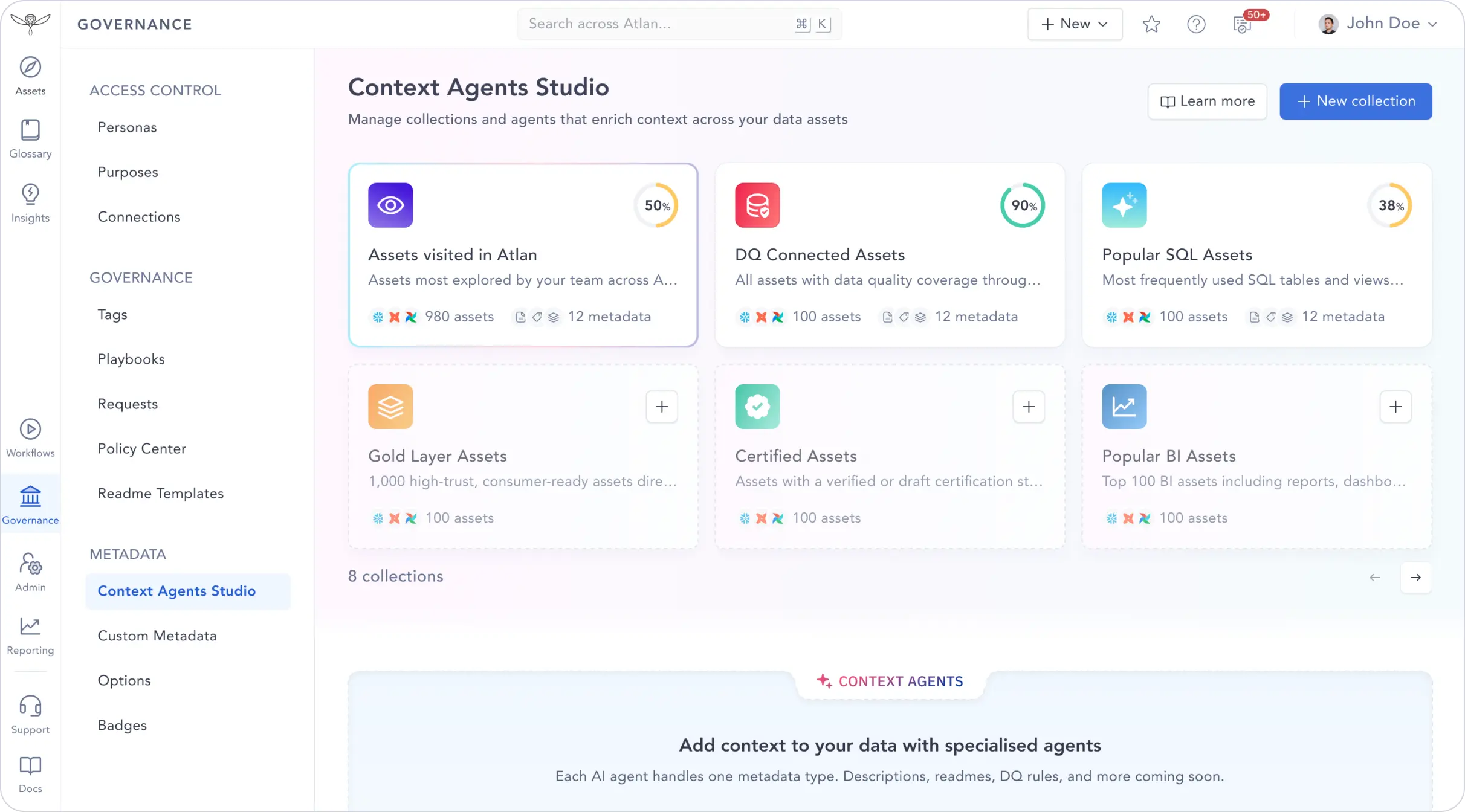

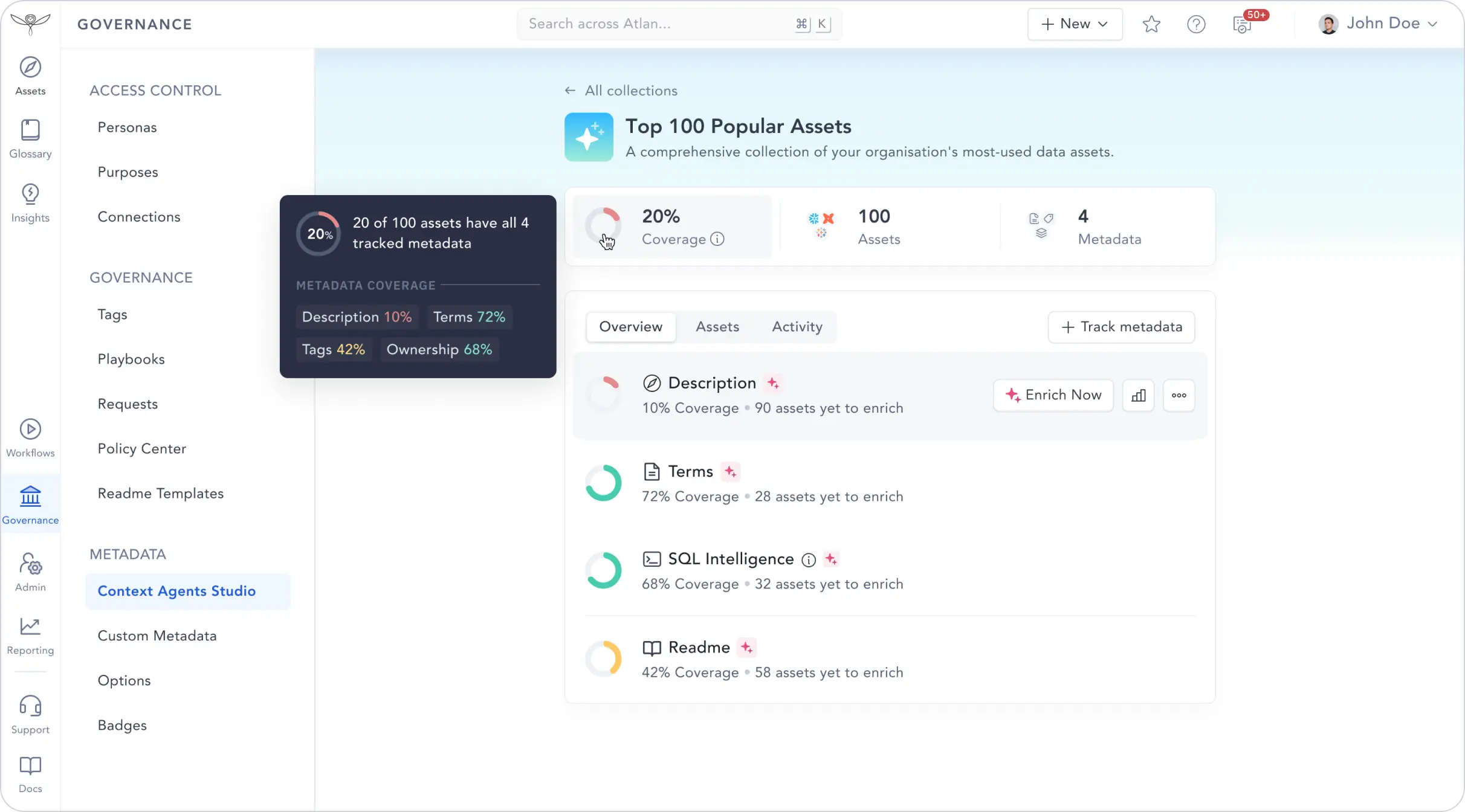

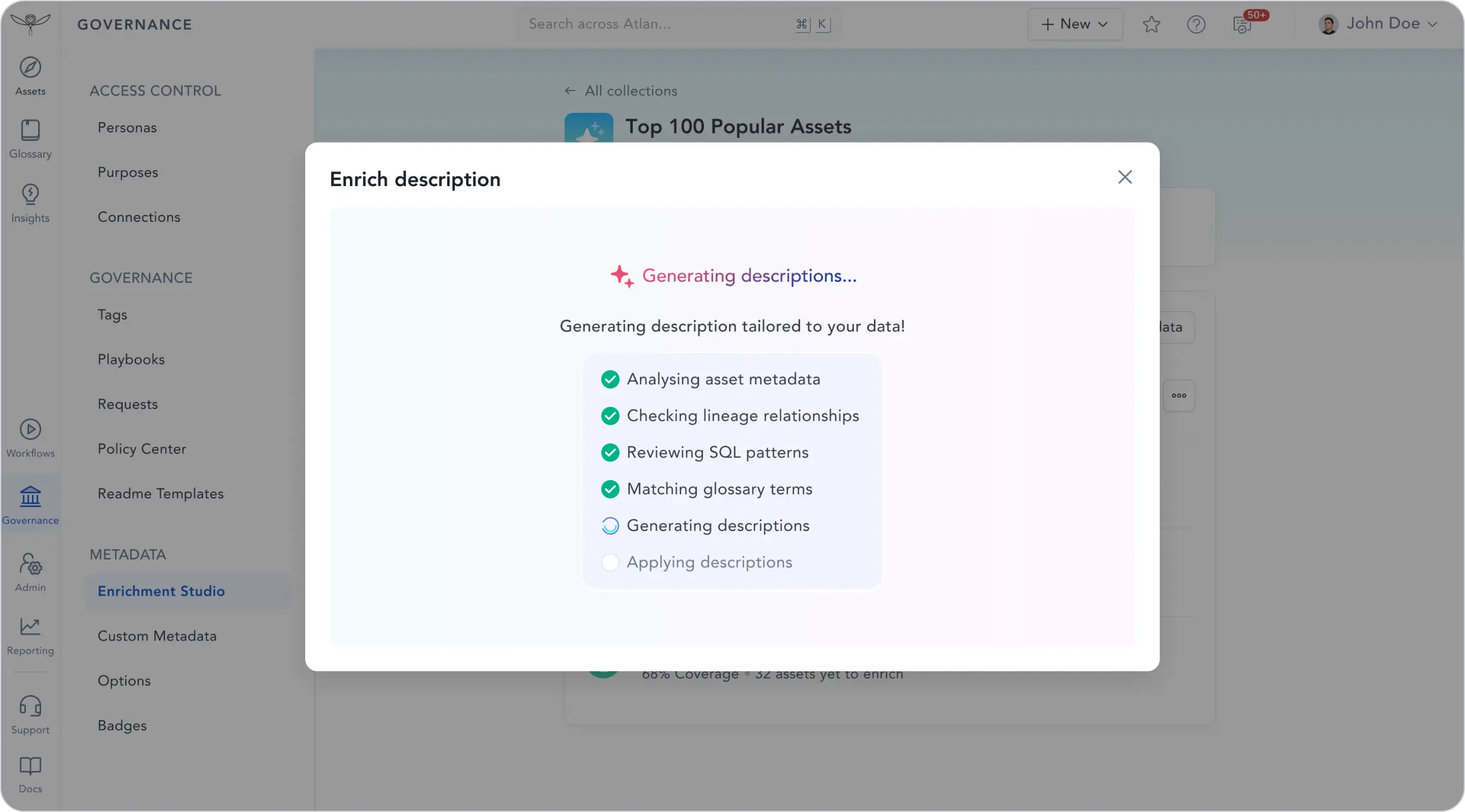

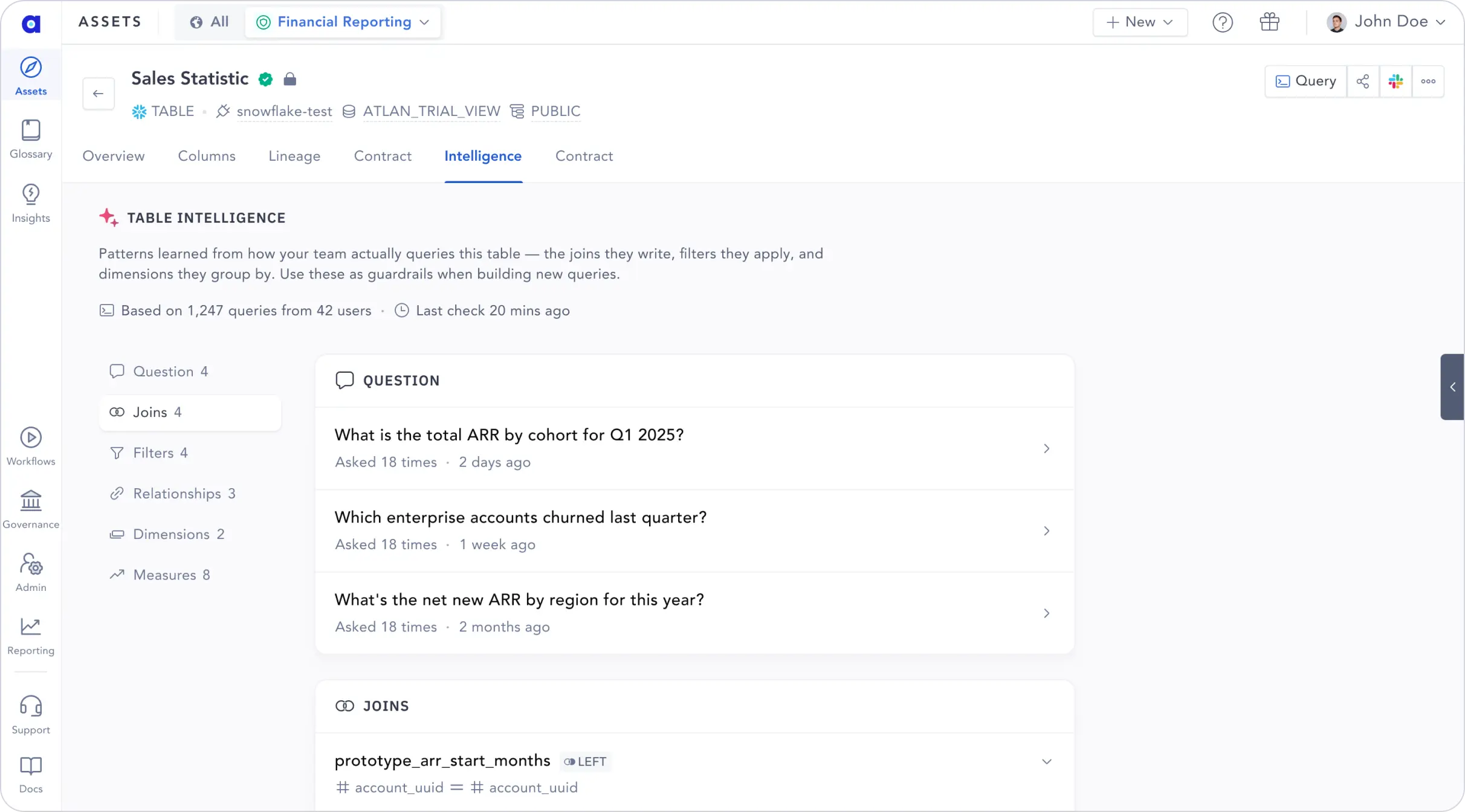

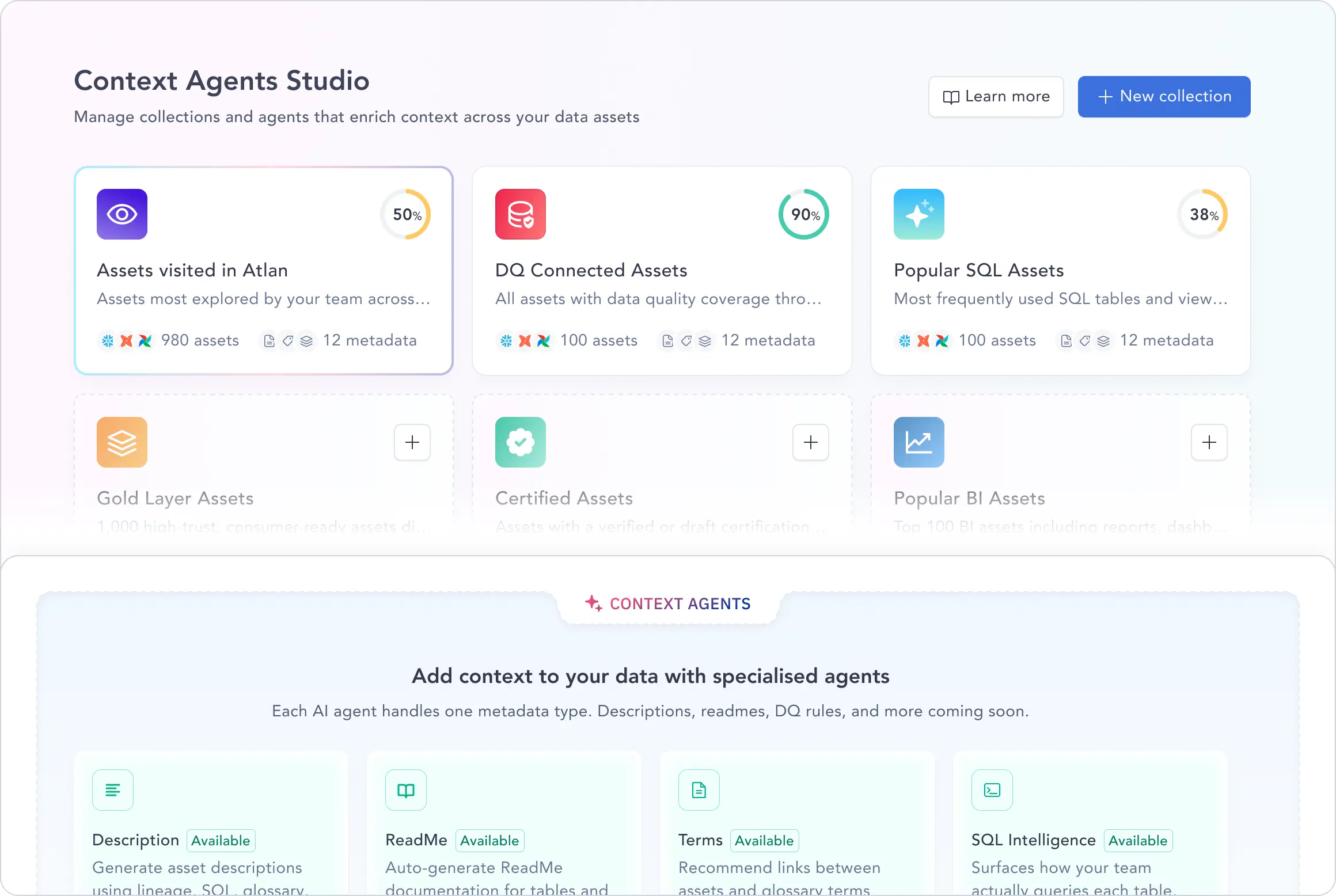

Product screenshots: Context Agents enriching assets, popular resources, and the enrichment studio in Atlan.

What teams learned

AI identified obscure airport codes and zip-level distance calculations from just column names. Who knew that AEX was an airport? 9 out of 10 quality across tens of thousands of assets. The estimates from our stewards were six months — this gave us a huge leap forward.

The context was accurate, and it identified relationships and connections between assets that usually take a lot of time to build manually. That happened almost instantly. That's easily more than two weeks of focused work, assuming this was the only thing on my plate, which it never is.

80 to 90% accuracy on descriptions at the table level. Our biggest takeaway was the mindset shift — from perfection to iterative MVP. It significantly reduced manual work and freed up time for our stewards to focus on higher-value work.

Instead of asking new data owners to fill in blanks — which is overwhelming — it's now: hey, take a look at this, does this make sense? The READMEs especially — I don't think a person would or could put that much information in themselves.

Get a head start on AI-ready data

We're investing 200,000 Flexi Credits in your team to generate AI-ready data you can roll out to your humans and AI agents. You keep everything that gets built — it's yours from day one.

Your 2-week head start

- 200,000 Flexi Credits to run Context Agents across your priority assets, at no cost during the program

- Your catalog enriched with descriptions, READMEs, and SQL intelligence generated across your highest-impact assets

- A catalog you can roll out to your people, with context built in weeks so your team has something worth trusting from day one

- AI-ready data: the same context serves humans and AI agents. Both benefit from what you build

- Your Atlan account team works with you throughout: no new contacts, no handoffs

- AI-Ready Progress Report: a concrete artifact to show leadership what you accomplished

Three commitments

- Commit to rollout. If we get your context right, you roll this out to your end users. We're not building a sandbox. We're building a catalog people will actually open every day. The goal is real adoption.

- Commit to measuring value. Help us measure the real business impact: time saved, adoption rates, trust scores, tickets deflected. We want to prove this works, together. Your results become the case study, shared at Atlan Activate with your team in the spotlight.

- Commit to thinking differently. AI is changing the ground rules of data governance. We intend to approach it differently, rethinking what AI should do and what humans should do in this new world. There's a lot to figure out, and we can't do it alone. We want to push the boundary of what's possible with AI, and we need partners who will push with us.

Zero data context to rollout-ready in 2 weeks

Assess

Day 1: Context Agents audit your data estate. We score your catalog's completeness and identify the highest-impact gaps.

Connect

Day 1–2: Plug in your sources (warehouse, BI, dbt, pipelines). Agents begin reading across your entire data estate.

Enrich

Day 2–12: Context Agents generate descriptions, READMEs, a metrics glossary, linked assets, domain tags, synonyms, and SQL intelligence.

Roll Out

Day 12–14: Your enriched catalog goes live to your organization. Your data governance team continues certifying assets. AI-ready context is activated via API and MCP. Measure the delta.

Join hundreds of teams already building AI-ready data

Teams in our previous cohorts generated 2M+ metadata updates, the equivalent of 210,000+ hours of manual work. This is the final cohort. Same program, same support: your last chance to join.

The practical stuff

Do we need to be an existing Atlan customer?

Yes. Context Agents enrich your Atlan catalog directly. You need an active Atlan instance with sources connected.

What data sources do Context Agents support?

Any source connected to Atlan, including Snowflake, Databricks, BigQuery, Redshift, dbt, Tableau, Looker, Power BI, and more. Agents read across your entire connected estate.

Does our data leave our environment?

Context Agents work within your Atlan instance and its connected metadata. Your actual data stays in your warehouse. We enrich metadata (descriptions, READMEs, metrics glossary, linked assets, domain tags, synonyms), not the underlying data itself.

What happens after the two weeks?

You keep everything. All AI-generated context stays on your assets permanently. Context Agent Studio is a paid feature; if you want to keep running agents, your account team can help you choose the right Flexi Credits tier. Credits from the accelerator expire at the end of the program. Talk to your account team for details.

How much time does our team need to invest?

2–3 hours per week from a point of contact on your data team. Their job is to review what Context Agents generate and make governance decisions, not to write documentation.

Which assets get enriched?

Context Agents don't enrich everything blindly. We start with your most popular and most useful assets. You can also choose specific assets to prioritize. The goal is impact, not coverage for coverage's sake.

What if the AI-generated context isn't good enough?

That's what the program is for. Your team reviews and refines. In practice, teams in our previous cohorts saw 80–90% accuracy on the first pass. The 10–20% that needs human input is exactly where your stewards add value.

What does the case study commitment involve?

We co-create it with you. Atlan's team handles the writing, design, and production. You review and approve before anything goes public. We make you look good. That's the whole point.

AI is changing the ground rules

We get it. The first reaction is skepticism. Ours was too. AI is rewriting what's possible in data governance, and we needed to start thinking very differently before we could build this. Here's what we worked through, and what every team works through when they first see it.

"Can AI really generate better descriptions than the people who built the data?"

AI knows the real implementation: every join written against it, every filter applied, every dashboard built on top. Humans know the business intent. The combination is more powerful than either alone. Use human judgment to review what AI drafted, not to start from a blank page.

"Shouldn't our stewards be the ones writing the definitions?"

Writing descriptions is data entry, not governance. Your stewards were hired for certification and judgment. AI pre-populates from real usage. Stewards approve, edit, or reject. The interface is a queue, not a blank form. Their time goes to the decisions that actually matter.

"How can AI know what our data actually means to our business?"

The best context is not what people say in meetings. It is what they do. Context Agents read behavioral signals (real queries, real joins, real dashboards), not hypothetical definitions. Usage patterns don't lie.

"Won't AI-generated descriptions become outdated as our data changes?"

Human-written metadata goes stale the moment a pipeline changes. AI re-runs against the latest source of truth. The thing that feels most reliable is actually the least reliable. The thing that feels risky is actually more durable.

"This sounds too good. What's the catch?"

The catch is that it requires a different mindset. You have to be willing to let AI do the first pass and trust the process of human review, not insist on humans writing everything from scratch. Teams that make that shift see results in days. Teams that don't will stay stuck in the 18-month backlog.

Make your data ready.

For your humans. For your AI agents.

2 weeks. 200,000 free Flexi Credits. Build AI-ready data and see the impact for yourself.

- 200,000 Flexi Credits — free for the program

- Dedicated Atlan team for the full two weeks

- AI-Ready Progress Report for your leadership

- Join the Context Pioneers community

Register Now

All three commitments are required to register. We'll respond within 2 business days. Credits expire at the end of the 2-week program.

Register by June 5 · Program starts June 8 · Credits expire at program end
