A context layer for AI is the governed infrastructure that delivers business meaning, lineage, and policy to AI agents at the moment they reason. Gartner predicts that more than 40% of agentic AI projects will be canceled by the end of 2027, citing escalating costs, unclear business value, and inadequate risk controls.

Underneath all three is the same root: agents that cannot share governed context cannot be costed, valued, or risk-managed at scale. McKinsey research finds the same gap from the demand side: only 1 percent of executives describe their AI rollouts as mature.

Many enterprises are now running agents across Snowflake Cortex, Databricks Genie, Claude, and Agentspace. Ask sales and finance for “the revenue number” and humans give two different answers. Now we have handed that same fragmented context to AI agents, and agents do not call a colleague to double-check. Every new agent makes the problem worse, unless the context underneath is shared, governed, and active.

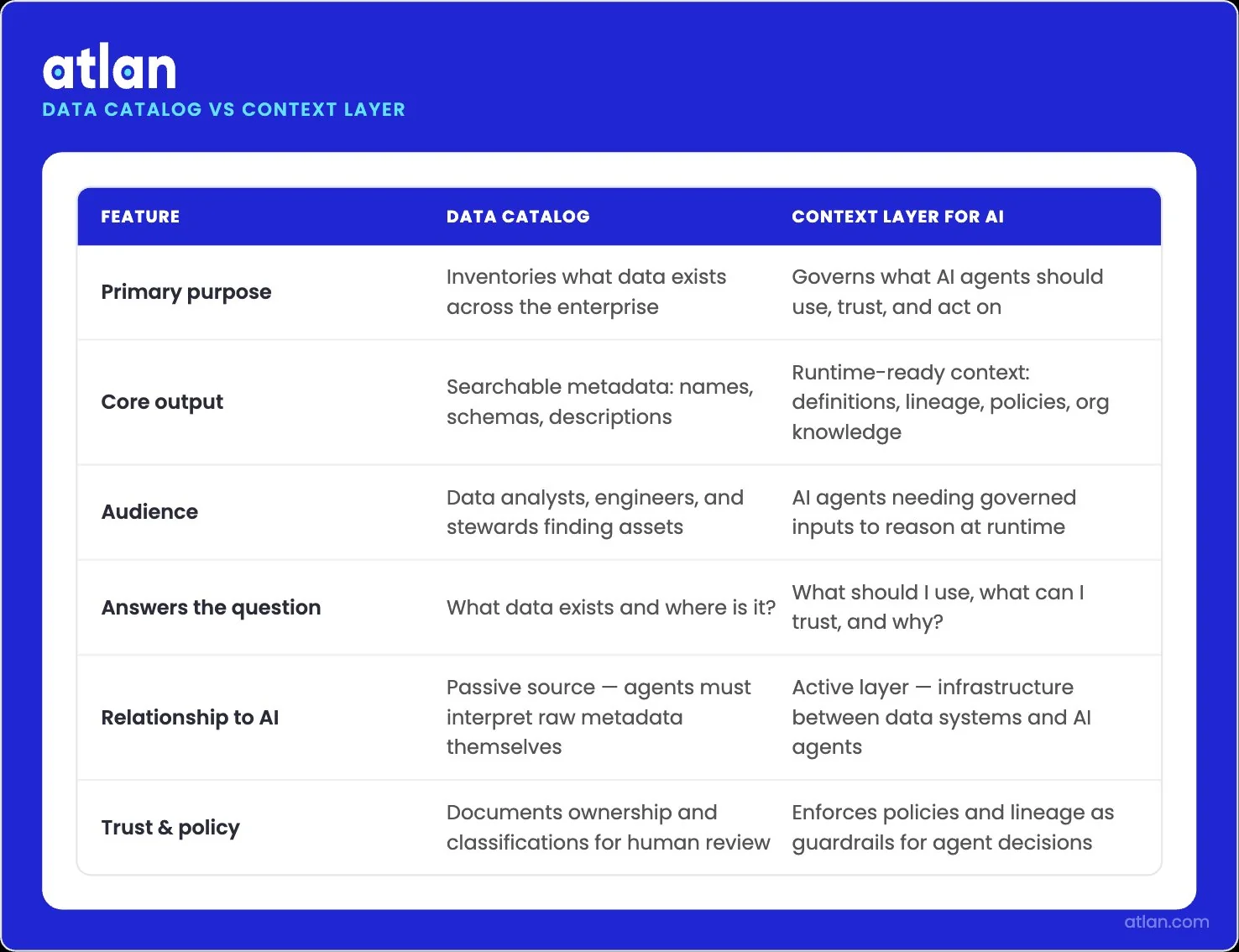

A context layer tells AI agents what to use, trust, and why; not just what exists. Image by Atlan.

Quick facts about a context layer for AI

| What it is | Where it sits | What it delivers | Who builds it | Why it matters now |
|---|---|---|---|---|
| Governed infrastructure between data systems and AI agents | Above warehouses, BI tools, and SaaS apps; below agent platforms | Meaning, lineage, policy, and organizational knowledge at runtime | Joint: data engineering, governance, and AI platform teams | Most agentic AI projects stall in testing without it; the gap is context, not model capability |

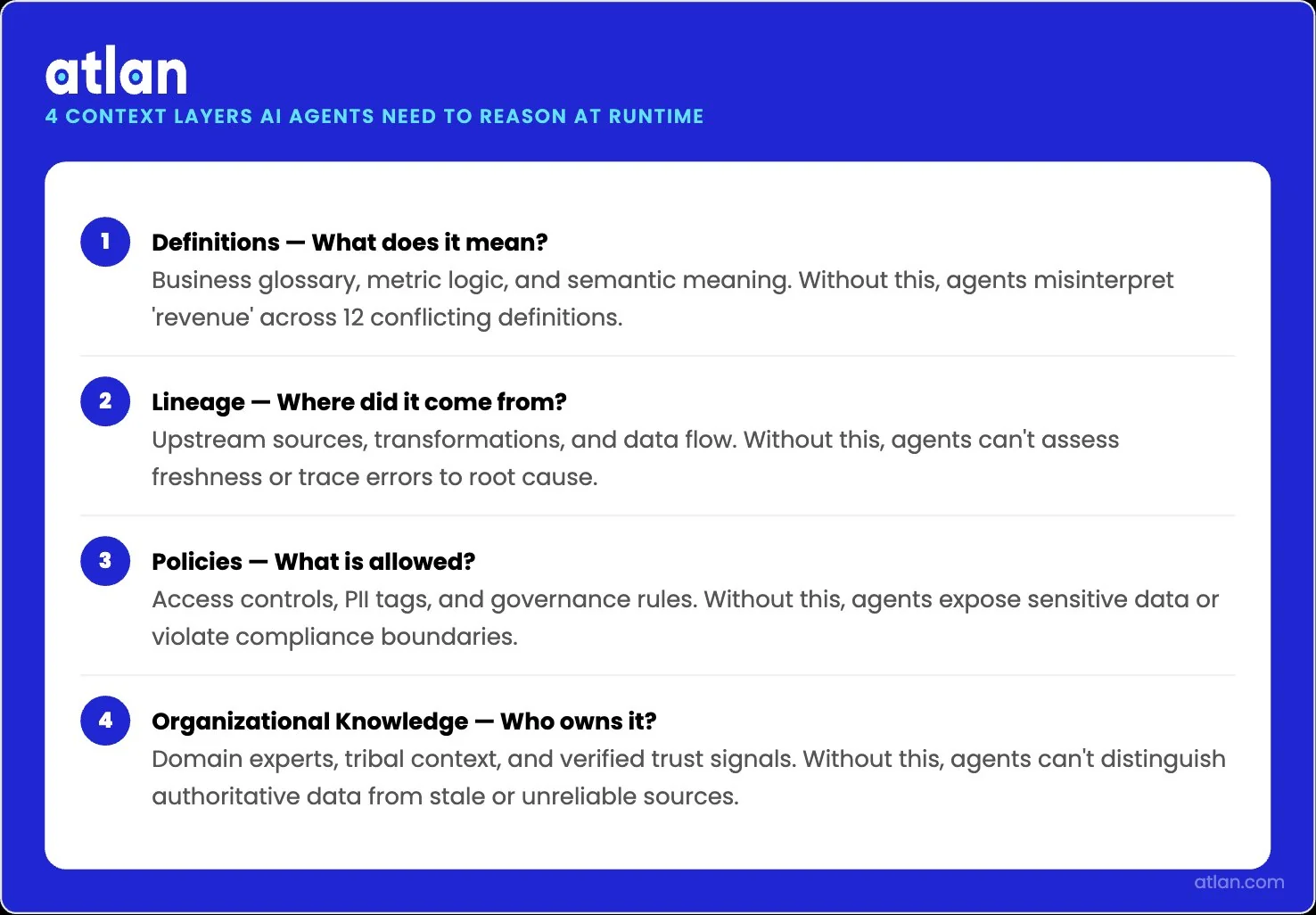

Why context, not model intelligence, is the bottleneck for enterprise AI

Enterprise AI stalls before production because the same business question crosses four distinct layers of context, and each layer has its own failure mode. A human analyst resolves all four in five minutes of tribal knowledge. AI agents have none of it. Across 690,000+ Context Agent descriptions on Atlan, the failure pattern is consistent: agents fail at the layers they cannot see.

| Layer | The question it answers | What breaks without it |
|---|---|---|
| Data context | Which of 50+ tables should I query? | Agent queries the wrong source |
| Meaning context | Does “top 10” mean by views, watch time, or revenue? | Agent answers the wrong question |
| Knowledge context | Is “political” a genre tag or a free-text keyword? | Agent uses tribal knowledge it does not have |
| User context | Marketing wants views; editorial wants watch time | Generic answers that serve no one |

One question, four layers, multiplied by every agent in production. A context layer for AI exists to give every agent the same answer to all four, every time. Atlan’s WTF is the Context Layer series goes even deeper into this emerging topic.

Why context, not model intelligence, is the bottleneck for enterprise AI. Image by Atlan.

The three walls every enterprise hits when building AI context

Every enterprise building agentic AI hits three walls in the same order. Most are stuck at Wall 1.

Wall 1: context bootstrapping

Building the agent takes five minutes. Giving it business context takes five months. Reaching the 70 to 80 percent accuracy that earns user trust requires synonyms, business logic, and edge cases nobody has written down.

Wall 2: testing hell

You know you need 70 to 80 percent accuracy, but against which questions, and how do you know when you are there? Teams get stuck for months on spot checks and intuition because there is no definition of done. Imagine a typical Wall 2 scenario: a team deploys 1,000 Genie rooms in a month, then watches 90 percent get abandoned within four weeks because the business does not trust the answers.
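
One way to give Wall 2 a definition of done is to score the agent against a fixed set of certified answers and compare the result to the 70 to 80 percent bar. The sketch below is a minimal illustration, not Atlan's tooling: `EvalCase`, `accuracy`, `is_done`, the 0.75 threshold, and the toy questions and answers are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class EvalCase:
    question: str
    certified_answer: str  # hypothetical: signed off by a named business owner


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so formatting noise is not scored."""
    return " ".join(text.lower().split())


def accuracy(cases: Sequence[EvalCase], agent: Callable[[str], str]) -> float:
    """Fraction of cases where the agent's answer matches the certified one."""
    if not cases:
        raise ValueError("eval set is empty")
    hits = sum(
        normalize(agent(c.question)) == normalize(c.certified_answer)
        for c in cases
    )
    return hits / len(cases)


def is_done(cases: Sequence[EvalCase], agent: Callable[[str], str],
            threshold: float = 0.75) -> bool:
    """'Done' here means meeting an agreed accuracy bar (assumed 0.75)."""
    return accuracy(cases, agent) >= threshold


# Toy eval set and a stand-in agent; every value is illustrative only.
CASES = [
    EvalCase("Which table holds bookings?", "finance.bookings_v2"),
    EvalCase("Who owns the churn metric?", "Revenue Operations"),
    EvalCase("When does the fiscal year start?", "February 1"),
    EvalCase("Does 'top 10' rank by views or watch time?", "watch time"),
]

CANNED = {
    CASES[0].question: "finance.bookings_v2",
    CASES[1].question: "Customer Success",   # deliberately wrong
    CASES[2].question: "February  1",        # extra space is normalized away
    CASES[3].question: "Watch time",
}


def toy_agent(question: str) -> str:
    return CANNED[question]


score = accuracy(CASES, toy_agent)
print(f"accuracy={score:.2f}, done={is_done(CASES, toy_agent)}")
```

Exact string matching is the crudest possible grader. A real eval would likely need per-question comparison rules, but the point stands: the question set and the threshold are written down before testing starts.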

Wall 3: the scaling trap

Agent one works. Now you need ten. Use cases never live in silos: a website agent needs marketing content, support docs, product specs, and pricing. Without shared context, every new agent is a new build. Effort grows linearly with agent count, and improvements never compound.

Five approaches to AI context, and what each one gets wrong

Most “context layers” being pitched today are one slice of the four-layer problem above. Each approach solves something real, and each one stops where its capabilities end.

| Approach | What it solves | What it misses | When it’s enough | When it isn’t |
|---|---|---|---|---|
| Semantic layer alone | Consistent metric definitions for BI | Lineage, governance, runtime delivery to non-BI agents | Single-team analytics, dashboard-only use | Agents reason across systems, not only BI |
| Knowledge graph only | Entity relationships and ontology | Active updates, policy enforcement, agent-platform delivery | Research-grade modeling | Production agents that need real-time context |
| Prompt engineering and RAG | Retrieval over unstructured text | Governed meaning, lineage, conflict resolution | Single-domain Q&A | Multi-agent enterprise with cross-system reasoning |
| Consulting framework | Strategic roadmap and alignment | Working software, runtime delivery | Pre-investment planning | Past planning into production |
| Static catalog (no event bus, no agent write-back, no runtime delivery surface) | Centralized inventory of metadata | Real-time push to agents; bidirectional write-back; runtime delivery via MCP or APIs | Documentation and human discovery | When metadata needs to drive agent decisions at runtime |

The static catalog hides in plain sight. Most catalogs treat metadata as documentation. A context layer for AI requires three things they lack: an event bus that pushes changes when systems shift, runtime delivery to agents via MCP or APIs, and bidirectional write-back so agents can record observations. Without those, active metadata is just a label.
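
The three missing pieces (change push, runtime delivery, write-back) can be sketched in a few lines. This is a toy in-memory model, not a description of any real catalog or of Atlan's implementation: `ContextStore`, its method names, the topic name, and the sample definition are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class ContextStore:
    """Hypothetical in-memory stand-in for an active context layer."""
    definitions: dict = field(default_factory=dict)
    observations: list = field(default_factory=list)
    _subscribers: dict = field(default_factory=lambda: defaultdict(list))

    def subscribe(self, topic, callback):
        """Event bus: register a callback to be told when a topic changes."""
        self._subscribers[topic].append(callback)

    def publish_change(self, topic, key, value):
        """Record the change, then push it to every subscriber of the topic."""
        self.definitions[key] = value
        for callback in self._subscribers[topic]:
            callback(key, value)

    def get_context(self, key):
        """Runtime delivery: an agent asks for context at decision time."""
        return self.definitions.get(key)

    def write_back(self, agent_id, key, note):
        """Write-back: an agent records an observation about the context."""
        self.observations.append({"agent": agent_id, "key": key, "note": note})


store = ContextStore()
agent_cache = {}

# The agent keeps a local copy that is updated by push, not by polling.
store.subscribe("glossary", lambda key, value: agent_cache.update({key: value}))

store.publish_change(
    "glossary", "active_customer",
    "customer with a paid invoice in the last 90 days",
)
store.write_back("support-agent", "active_customer",
                 "definition excludes trial accounts")

print(agent_cache["active_customer"])
print(store.observations)
```

A static catalog in this model would have only `definitions` and `get_context`: nothing reaches the agent unless the agent remembers to ask, and nothing the agent learns flows back.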

What does a working context layer for AI actually require?

A context layer for AI works when five properties hold simultaneously. Drop any one and you collapse back into one of the partial approaches above. The five properties of an active context layer are Unified, Governed, Synchronized, Active, and Agent-Ready. The framework is Atlan’s, but the underlying standards and protocols (Iceberg, MCP, A2A) are open, and anyone can implement them. Each property has a primary wall it removes and a primary layer of context it covers.

| Property | Primary wall it removes | Primary layer of context it serves |
|---|---|---|
| Unified | Wall 1: Bootstrapping (one source for every agent) | Data context (which source to query) |
| Governed | Wall 2: Testing hell (defining “done” for context) | Knowledge context (tribal rules, certified definitions) |
| Synchronized | Wall 2: Testing hell (state freshness across systems) | All four layers, kept current |
| Active | Static-catalog failure mode (runtime delivery to agents) | Meaning context (the right answer at the right moment) |
| Agent-Ready | Wall 3: Scaling trap (corrections propagate across agents) | User context (delivered to the right surface for the right role) |

1. Unified: one source for every agent

Every agent reads the same governed map across data systems, BI tools, and operational apps. Atlan’s Enterprise Data Graph builds this with 100+ native connectors and column-level lineage reverse-engineered from SQL and pipeline code. That removes Wall 1.

2. Governed: humans on the loop, not in the way

AI scales the work; humans certify the hard calls. When the system finds three definitions of net revenue retention, it surfaces all three with evidence and routes the conflict to a named owner. The resolution becomes canonical for every downstream agent. Column-level lineage makes the trace explainable for audit and risk.
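
The net revenue retention example can be modeled as a small workflow: detect terms with more than one distinct definition, attach a named owner, and record the owner's choice as canonical. The sketch below is illustrative only. The class names, source names, formulas, and owner address are invented for the example and do not reflect how Atlan stores or routes conflicts.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Candidate:
    source: str   # system where the definition was found (hypothetical names)
    formula: str  # the definition as written in that system


@dataclass
class Conflict:
    term: str
    owner: str               # named human who decides
    candidates: list
    canonical: Optional[Candidate] = None

    def resolve(self, source: str) -> Candidate:
        """The owner picks one candidate; it becomes the canonical definition."""
        for candidate in self.candidates:
            if candidate.source == source:
                self.canonical = candidate
                return candidate
        raise KeyError(f"no candidate from source {source!r}")


def find_conflicts(definitions: dict, owners: dict) -> list:
    """Return a Conflict for each term with more than one distinct formula."""
    conflicts = []
    for term, candidates in definitions.items():
        if len({c.formula for c in candidates}) > 1:
            owner = owners.get(term, "unassigned")
            conflicts.append(Conflict(term, owner, list(candidates)))
    return conflicts


definitions = {
    "net revenue retention": [
        Candidate("finance_model", "(start_mrr + expansion - churn) / start_mrr"),
        Candidate("sales_dashboard", "(start_mrr + expansion) / start_mrr"),
        Candidate("cs_report", "renewed_mrr / start_mrr"),
    ],
    "arr": [Candidate("finance_model", "mrr * 12")],
}
owners = {"net revenue retention": "finance-owner@example.com"}

conflicts = find_conflicts(definitions, owners)
for conflict in conflicts:
    print(conflict.term, "->", conflict.owner, f"({len(conflict.candidates)} candidates)")

# The owner certifies one definition once; downstream agents read `canonical`.
conflicts[0].resolve("finance_model")
print(conflicts[0].canonical.formula)
```

The design choice worth noting is that discovery is automated while the decision is not: `find_conflicts` never picks a winner.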

3. Synchronized: context that reflects current system state

State freshness. Schema changes, lineage updates, ownership shifts, and policy revisions show up without manual sync. The Context Lakehouse is Iceberg-native and BYOC, so context lives on your compute and time-travels for audit. That closes Wall 2: testing converges when context is verifiably current.
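
"Verifiably current" can be made concrete as a lag check: for each asset, compare when it last changed upstream with when the context layer last synced it. The sketch below assumes both timestamps are available as dictionaries and uses a five-minute tolerance. The function name, asset names, timestamps, and tolerance are assumptions, not part of any product.

```python
from datetime import datetime, timedelta, timezone


def stale_assets(last_changed: dict, last_synced: dict,
                 max_lag: timedelta = timedelta(minutes=5)) -> list:
    """Return assets whose context trails the upstream change by more than max_lag.

    An asset that has never been synced counts as stale.
    """
    stale = []
    for asset, changed_at in last_changed.items():
        synced_at = last_synced.get(asset)
        if synced_at is None or changed_at - synced_at > max_lag:
            stale.append(asset)
    return sorted(stale)


# Illustrative timestamps only.
t0 = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)

last_changed = {
    "orders.schema": t0,                          # changed at 12:00
    "revenue.dashboard": t0,                      # changed at 12:00
    "pii.policy": t0 + timedelta(minutes=30),     # changed at 12:30
}
last_synced = {
    "orders.schema": t0 + timedelta(minutes=1),   # synced after the change
    "revenue.dashboard": t0 - timedelta(hours=2), # last synced two hours earlier
    # "pii.policy" has never been synced
}

print(stale_assets(last_changed, last_synced))
```

A check like this is what turns "synchronized" from a claim into something a test suite can assert before an eval run starts.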

4. Active: context that ships to agents at runtime

Behavior, not state. A context layer is active when it pushes governed context to agents at the moment they reason. Atlan’s Context Engineering Studio bootstraps, simulates, and delivers context through MCP at decision time.

5. Agent-Ready: open delivery to any agent platform

Atlan’s MCP server delivers governed context to Snowflake Cortex, Databricks Genie, Anthropic Claude, Google Agentspace, OpenAI, and LangGraph. A2A handles bidirectional signals; SQL and APIs cover the rest. That closes Wall 3: corrections propagate across every agent, so agent ten starts smarter than agent one.
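
For readers unfamiliar with MCP, a tool invocation is a JSON-RPC 2.0 request with the method `tools/call`, carrying a tool name and its arguments. The sketch below only builds such a request; it does not contact any server. The tool name `lookup_definition` and its argument are hypothetical and are not taken from Atlan's MCP server, whose actual tools this article does not list.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests carry an id so replies can be matched


def build_tool_call(tool_name: str, arguments: dict) -> dict:
    """Build an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool and argument, for illustration only.
request = build_tool_call("lookup_definition", {"term": "net revenue retention"})
wire = json.dumps(request)
print(wire)
```

Because the message shape is the same for every MCP client, one governed definition can be requested identically from any agent platform that speaks the protocol, which is the practical meaning of "open delivery".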

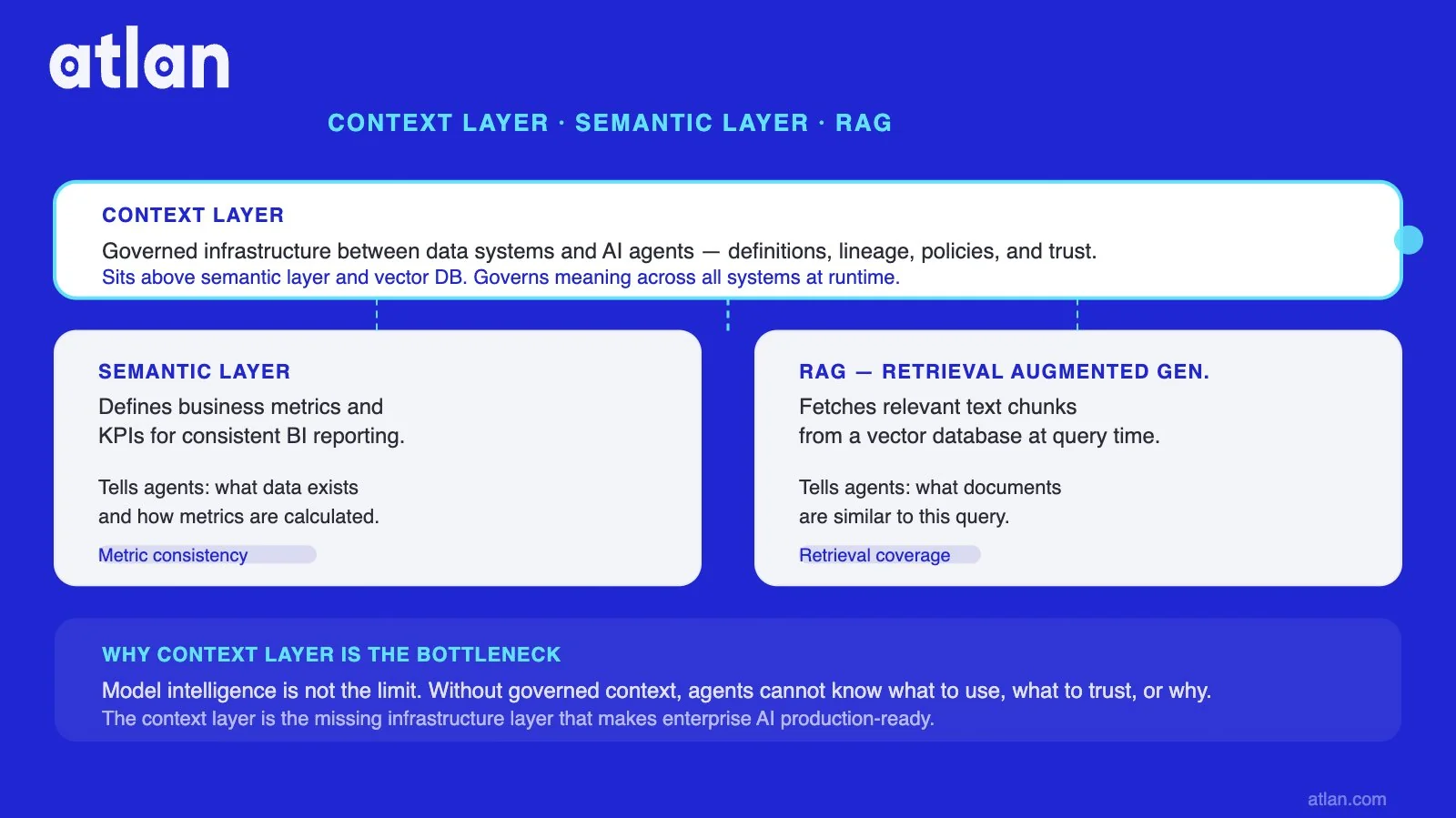

How does a context layer for AI compare to a semantic layer or vector database?

These three are complements, not alternatives. A context layer for AI sits above the semantic layer and the vector database, and only it governs meaning across systems.

| | Context layer for AI | Semantic layer | Vector database / RAG |
|---|---|---|---|
| What it does | Delivers governed meaning, lineage, and policy to agents at runtime | Defines metrics for BI tools | Retrieves text chunks by similarity |
| Scope | All systems, all agents | Analytics and BI tools | Unstructured corpus |
| Governance | Human on the loop, lineage, policy | Metric definitions only | None (retrieval only) |
| What breaks if you only have this | n/a (this is the layer) | Agents cannot reason across systems | No governed meaning, no lineage |

For a deeper side-by-side, see context layer vs. semantic layer.

Three complements: context layer governs meaning across all enterprise AI systems. Image by Atlan.

How to audit your stack against the five properties

A self-assessment. Answer no to any one and you have a partial approach.

- Unified. Do all your AI agents read from one governed map of your data systems?

- Governed. When two systems disagree on a definition, does a named human owner reconcile it, and does that resolution propagate to every downstream agent automatically?

- Synchronized. When a schema, dashboard, or policy changes upstream, do dependent agents see the change within minutes, or does it require a manual sync?

- Active. Do agents reason on context delivered to them at runtime, or only on metadata they remember to query?

- Agent-Ready. Can the same governed context reach Snowflake Cortex, Databricks Genie, Anthropic Claude, and any future agent platform without rebuilding integrations?

Two or more nos and the gap is exactly why agentic AI is not yet in production.
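
The checklist above reduces to a small scoring function. This is a sketch of the article's own rule (any "no" means a partial approach, two or more suggest a production blocker); the function name, verdict strings, and sample answers are assumptions.

```python
PROPERTIES = ("Unified", "Governed", "Synchronized", "Active", "Agent-Ready")


def audit(answers: dict) -> tuple:
    """Return (gaps, verdict) for yes/no answers keyed by property name."""
    missing = [p for p in PROPERTIES if p not in answers]
    if missing:
        raise ValueError(f"unanswered properties: {missing}")

    gaps = [p for p in PROPERTIES if not answers[p]]
    if not gaps:
        verdict = "all five properties hold"
    elif len(gaps) == 1:
        verdict = "partial approach"
    else:
        verdict = "partial approach; likely blocking production"
    return gaps, verdict


# Illustrative answers for a stack with a governed catalog but no runtime push.
answers = {
    "Unified": True,
    "Governed": True,
    "Synchronized": False,
    "Active": False,
    "Agent-Ready": True,
}

gaps, verdict = audit(answers)
print(gaps, "->", verdict)
```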

How Atlan delivers a context layer for AI

Atlan delivers the five properties as a single pipeline: UNIFY → ENGINEER → SHIP, on the Context Lakehouse foundation. The platform serves 400+ global customers representing over $10T in market value and handles 14 billion API calls monthly.

UNIFY → Enterprise Data Graph. 100+ native connectors capture metadata across your stack with column-level lineage reverse-engineered from SQL and pipeline code. Context Agents compound on the graph: descriptions improve metrics, metrics improve ontology. See the Enterprise Context Layer hub.

ENGINEER → Context Engineering Studio. Bootstrap context repos from existing data graphs. Simulate against real business questions. Deploy one Context Repo across Cortex, Genie, Claude, and Agentspace. Atlan’s Context Agents have generated more than 690,000 descriptions across 50+ enterprise customers, with 87% rated on par or better than human writing.

SHIP → Any agent platform. Atlan’s MCP server delivers governed context to Snowflake Cortex, Databricks Genie, Anthropic Claude, Google Agentspace, OpenAI, and LangGraph.

"Atlan stands out in its focus to deliver AI-native governance through context-based ecosystem partnerships, agentic stewardship and orchestration of enterprise agentic systems."

Gartner, Magic Quadrant for Data & Analytics Governance Platforms 2026

Forrester reached the same conclusion from a different angle, calling Atlan a top choice for organizations seeking a modern, AI-native governance platform that blends intelligent automation with deep integration in The Forrester Wave: Data Governance Solutions, Q3 2025.

Customer stories: Workday and CME Group

Two examples of context-layer foundations already powering enterprise AI in production:

Grounded an agentic platform in shared, governed enterprise context delivered via MCP

Workday partnered with Atlan AI Labs to ground their agentic platform in shared, governed enterprise context delivered via MCP. Joe DosSantos, VP Enterprise Data and Analytics, explained, "Atlan captures Workday's shared language to be leveraged by AI via its MCP server. As part of Atlan's AI labs, we're co-building the semantic layer that AI needs." Agents now reason on the same business definitions humans already standardized, instead of rebuilding context per agent.

Joe DosSantos, VP Enterprise Data & Analytics

Workday

Cataloged 18 million assets and 1,300 glossary terms across Oracle, BigQuery, and Looker in year one

CME Group built foundational L1-L2 scale across on-premises Oracle, BigQuery, and Looker, establishing the substrate later AI use cases compound on. Kiran Panja, Managing Director, shared, "Within the first year after that we cataloged over 18 million assets, defined more than 1,300 glossary terms. Atlan had lineage across our on-prem Oracle databases, BigQuery, and Looker."

Kiran Panja, Managing Director

CME Group

What does a context layer for AI need to do to actually work?

Relabeling a catalog or tightening a RAG pipeline does not solve the three walls. Five properties define a context layer for AI that actually works.

- Active beats passive. Catalogs that document metadata fail when agents need it to drive decisions in real time. Activation is the dividing line.

- Five properties, not four. Unified, Governed, Synchronized, Active, and Agent-Ready. Drop any and you collapse into a partial approach.

- Context, not model size, is the moat. Model intelligence is commoditizing. Governed semantics, lineage, and policy are your enterprise IP.

- Open delivery is non-negotiable. A context layer that runs on one vendor’s compute and ships to one vendor’s agents is a silo. MCP, A2A, SQL, and APIs are the cost of entry.

If your agents are disagreeing, hallucinating, or failing to scale, the gap is almost never the model. It is the layer underneath.

FAQs about the context layer for AI

How is a context layer for AI different from a semantic layer?

A semantic layer defines metrics for BI tools. A context layer for AI delivers governed business definitions, data provenance, and access rules across all systems and ships them to any agent. The semantic layer is one component of a context layer; a context layer covers analytics, operational systems, and unstructured knowledge.

How is a context layer for AI different from a vector database or RAG pipeline?

Vector databases retrieve text chunks by similarity. RAG pipelines ground prompts in retrieved text. Neither governs the meaning of the data being retrieved. A context layer for AI sits above retrieval and tells the agent what to trust, whose definitions to use, and which lineage path the data took.

Who owns the context layer: data engineering, the platform team, or AI?

The context layer is built jointly. Data engineering owns connections and lineage. Governance owns policy and stewardship. The AI or platform team owns delivery surfaces such as MCP and APIs. The CDO is accountable for outcomes. Without joint ownership, a context layer becomes another stalled cross-functional initiative.

Can you build a context layer on top of an existing data catalog?

Sometimes. If the catalog has active metadata, lineage, and open delivery protocols, it is the foundation you activate. If it is a static inventory that updates manually, you need to add the active and agent-ready layers separately. Most enterprises start by activating the catalog they already have.

What is active metadata, and why does an AI context layer need it?

Active metadata flips metadata from documentation to signal. Instead of waiting to be queried, it pushes lineage updates, quality scores, and policy changes to the systems where decisions happen. AI agents need it because their context window resets every interaction; static metadata cannot keep up.

How do you measure if a context layer for AI is working?

Three dimensions. Accuracy: whether agent answers match certified answers on a domain-specific eval set, with 70 to 80 percent as the typical first bar. Coverage: percentage of business definitions, metrics, and policies represented across priority domains. Reuse: whether the same context backs at least three agents, so each new one inherits the work.
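
Coverage and reuse are simple set calculations once the inputs are written down. The sketch below assumes you can list the terms a priority domain requires, the terms already documented, and which agents read each context repo. All names and sample values are hypothetical.

```python
def coverage(required_terms, documented_terms) -> float:
    """Share of required definitions, metrics, and policies that are documented."""
    required = set(required_terms)
    if not required:
        raise ValueError("no required terms listed")
    return len(required & set(documented_terms)) / len(required)


def reuse(context_to_agents: dict, min_agents: int = 3) -> dict:
    """For each context repo, whether at least `min_agents` distinct agents use it."""
    return {
        context: len(set(agents)) >= min_agents
        for context, agents in context_to_agents.items()
    }


# Illustrative inputs only.
required = ["net revenue retention", "active customer", "churn", "pii policy"]
documented = ["net revenue retention", "churn", "pii policy", "arr"]

usage = {
    "finance-context": ["cortex-agent", "genie-room", "claude-assistant"],
    "support-context": ["support-bot", "support-bot"],
}

print(f"coverage={coverage(required, documented):.0%}")
print(reuse(usage))
```

Terms documented but not required (such as `arr` here) do not raise coverage, which keeps the metric tied to the priority domains rather than to catalog size.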

How does a context layer handle conflicting definitions across agents?

With humans on the loop. When the system finds three definitions of net revenue retention, it surfaces all three with evidence and pushes the conflict to a human owner. The human resolution becomes canonical for every downstream agent. AI scales the discovery; humans decide the canonical answer once.

Is a context layer a product or an architectural pattern?

Both. The pattern is open and vendor-independent: a governed layer between data systems and agents. Several vendors offer products that fill the pattern. The architecturally important point is that the pattern requires the five properties to function. A product missing any one does not deliver a context layer for AI.