Yes, enterprises need a context layer. AI agents without a governed context layer between them and the data estate routinely hallucinate, misinterpret business metrics, and violate governance rules in production. The context layer is the infrastructure that prevents this by giving agents the same grounded, certified understanding of data that a good analyst would already carry. Atlan implements this as a live metadata layer that sits between the data estate and any AI system querying it.

Context layer key facts and takeaways for enterprises

| Question | Answer |
|---|---|
| What is a context layer | Governed infrastructure that gives AI systems the metadata, business definitions, lineage, and policies a human analyst would already know |
| Why enterprises need it | AI agents without context hallucinate, misinterpret metrics, and violate governance rules |
| Key metric | Properly grounded AI reaches 94–99% accuracy vs. 10–31% without context (Moveworks/Promethium research, cited by Atlan) |
| Implementation time | 60–90 days for initial production deployment, with continuous enrichment afterward |
| Common misconception | “It’s just a rebranded semantic layer or data catalog.” It isn’t, though it builds on both |
| What it replaces | Per-agent prompt engineering, siloed RAG stacks, and brittle one-off integrations. See our full enterprise context layer guide. |

What questions are enterprises asking in 2026 around the context layer?

The MIT NANDA State of AI in Business 2025 report found that 95% of enterprise GenAI pilots deliver no measurable P&L impact, even as organizations spent $30–40B on them (MIT NANDA). MIT researchers did not call it a model problem. They called it a learning gap. Systems that do not retain feedback, adapt to context, or improve over time stall at the same wall.

Gartner’s own data tells a parallel story. In a May 2025 survey of 506 CIOs, 72% reported their organizations are breaking even or losing money on AI investments. At the same time, only 37% of organizations are confident in their AI data management practices (as referenced in Atlan’s Gartner D&A Summit 2026 recap), which suggests most teams do not realize they are not ready.

This is the uncomfortable math every CDO is walking into: a large gap between AI investment and AI value, and a larger gap between readiness and awareness of readiness.

The context layer is the infrastructure response to these AI-readiness questions, which are themselves still evolving.

What is a context layer?

A context layer sits between your enterprise data platforms and your AI systems. It carries four things that raw data alone never carries: business meaning, trust signals, governance, and memory.

Here’s a close look at them:

- Business meaning: what your company calls “revenue,” “active customer,” or “churned account,” and how that differs from a textbook definition

- Trust signals: lineage, quality scores, certifications, freshness, and ownership for every asset an agent might touch

- Governance: policies, sensitivity classifications, access rules, and approval workflows, enforced at the moment the agent queries

- Memory: decision traces, past corrections, and the institutional know-how that used to live in tribal knowledge
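
As a rough illustration of how those four elements might travel together, here is a minimal Python sketch. The `ContextRecord` class, its field names, and the `usable_by` check are all hypothetical, invented for this example; they are not Atlan's data model or any vendor's API.

```python
from dataclasses import dataclass, field


@dataclass
class ContextRecord:
    """Hypothetical bundle of context for a single data asset."""
    asset: str
    # Business meaning: the company-specific definition of the term.
    business_definition: str
    # Trust signals: ownership, certification, freshness.
    owner: str
    certified: bool
    days_since_refresh: int
    # Governance: sensitivity classification and the roles allowed to read.
    sensitivity: str
    allowed_roles: set[str] = field(default_factory=set)
    # Memory: past corrections an agent should not have to relearn.
    corrections: list[str] = field(default_factory=list)


def usable_by(record: ContextRecord, role: str, max_staleness_days: int = 7) -> bool:
    """Return True only if the asset is certified, fresh, and readable by this role."""
    return (
        record.certified
        and record.days_since_refresh <= max_staleness_days
        and role in record.allowed_roles
    )


revenue = ContextRecord(
    asset="finance.revenue_daily",
    business_definition="Recognized revenue net of refunds, in USD",
    owner="finance-data",
    certified=True,
    days_since_refresh=1,
    sensitivity="internal",
    allowed_roles={"finance_agent", "sales_agent"},
    corrections=["Exclude intercompany transfers"],
)

print(usable_by(revenue, "finance_agent"))  # True
print(usable_by(revenue, "support_agent"))  # False
```

The point of the sketch is that the definition, the trust signals, the policy, and the memory sit on one record, so an agent cannot read the definition without also seeing whether it is allowed to use it.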

Gartner defines context engineering as designing and structuring the relevant data, workflows, and environment so AI systems can understand intent, make better decisions, and deliver enterprise-aligned outcomes without relying on manual prompts. A context layer is where that design work is stored, versioned, and served.

“In a world where everyone has access to the same intelligence, what differentiates one company from another? It’s about who you are as a company. It’s about how you do business. That’s context. In this new world, context will be the IP for companies. Intelligence won’t.”

— Prukalpa Sankar, Co-founder, Atlan

Why AI agents break without a context layer

AI systems fail in production because they cannot see the business. A revenue agent built at Workday hit that wall early. Joe DosSantos, VP of Enterprise Data & Analytics, Workday, said, “We built a revenue analysis agent, and it couldn’t answer one question. We started to realize we were missing this translation layer. We had no way to interpret human language against the structure of the data.”

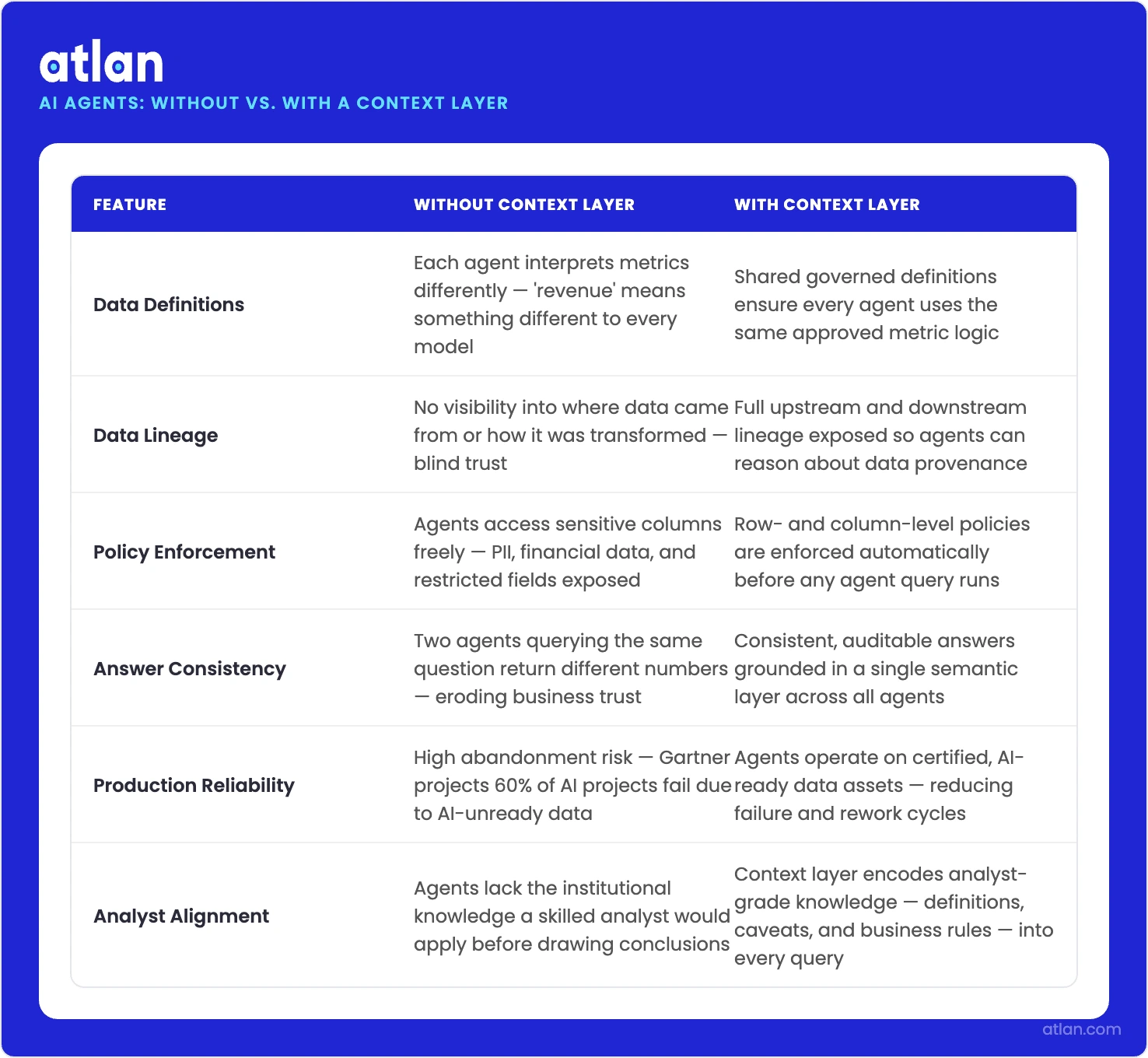

A context layer gives every AI agent the governed definitions, lineage, and policies a good analyst already knows. Source: Atlan.

| Failure mode | What it looks like in production |
|---|---|
| Ambiguous definitions | Marketing’s “active customer” differs from Sales’. The agent picks one and presents it as the truth. |
| Missing lineage | The agent pulls a number from a dashboard that was deprecated two quarters ago. Nobody told the agent. |
| Governance blind spots | A customer-support agent answers a compliance-sensitive question because nothing in its path flagged the data as restricted. |
| No memory | The same question asked on Monday and Thursday produces two different answers. Neither knows the other exists. |
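
The first three failure modes in the table can be pictured as checks that run before an agent answers. The sketch below is illustrative only: `Asset`, `preflight`, and the sample data are hypothetical names made up for this example, and the fourth failure mode (no memory) is left out because it needs state across sessions.

```python
from dataclasses import dataclass


@dataclass
class Asset:
    name: str
    deprecated: bool
    restricted: bool


def preflight(term: str, definitions: dict[str, list[str]], asset: Asset,
              caller_cleared: bool) -> list[str]:
    """Collect reasons an agent should stop and escalate instead of answering."""
    problems = []
    # Ambiguous definitions: more than one team-specific meaning exists.
    if len(definitions.get(term, [])) > 1:
        problems.append(f"ambiguous definition for '{term}'")
    # Missing lineage: the source asset has been deprecated.
    if asset.deprecated:
        problems.append(f"{asset.name} is deprecated")
    # Governance blind spot: restricted data and the caller is not cleared.
    if asset.restricted and not caller_cleared:
        problems.append(f"{asset.name} is restricted")
    return problems


definitions = {
    "active customer": ["logged in within 30 days", "has an open contract"],
}
dashboard = Asset(name="q1_sales_dashboard", deprecated=True, restricted=False)

issues = preflight("active customer", definitions, dashboard, caller_cleared=False)
for issue in issues:
    print(issue)
```

An empty list means none of these three checks fired; it does not by itself mean the answer is correct.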

Atlan’s own State of Enterprise Data & AI 2025 report surveyed 550+ data leaders and found that 84% are investing in AI, only 17% are successfully scaling it, and 49% attribute the failure to insufficient business context.

The numbers line up cleanly with MIT’s diagnosis. The fix is not a bigger model. The fix is the layer underneath it.

How does the failure pattern change with agentic AI?

Single-query AI was forgiving. A chatbot produces one answer, a user reviews it, and the session ends. If the context was thin, the damage was contained. Agentic AI is not forgiving. Agents now execute multi-step workflows, hand off tasks to other agents, and maintain state across sessions. The context must persist across every step, survive every handoff, and remain governed throughout.

The architectural requirement shifts in three directions:

- Persistent memory: context does not reset after each query; it compounds as the agent works through a task

- Session state: agents remember what they have seen, what they have decided, and what they were told is out of scope

- Multi-agent coordination: a sales agent and a finance agent working on the same account must share the same definition of “revenue” or the workflow breaks
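
A toy Python sketch of these three requirements follows. `SharedContext` and `Agent` are hypothetical classes written for this illustration, with an in-memory dictionary standing in for whatever governed store a real deployment would use.

```python
class SharedContext:
    """Hypothetical in-memory stand-in for a governed, shared context store."""

    def __init__(self, definitions: dict[str, str]) -> None:
        self._definitions = dict(definitions)
        self._sessions: dict[str, list[str]] = {}

    def define(self, term: str) -> str:
        # Every agent resolves a term through the same lookup.
        return self._definitions[term]

    def remember(self, session_id: str, note: str) -> None:
        # Persistent memory: notes accumulate instead of resetting per query.
        self._sessions.setdefault(session_id, []).append(note)

    def recall(self, session_id: str) -> list[str]:
        # Session state: return a copy of what has been seen and decided so far.
        return list(self._sessions.get(session_id, []))


class Agent:
    def __init__(self, name: str, context: SharedContext) -> None:
        self.name = name
        self.context = context

    def step(self, session_id: str, term: str) -> str:
        definition = self.context.define(term)
        self.context.remember(session_id, f"{self.name}: {term} = {definition}")
        return definition


shared = SharedContext({"revenue": "recognized revenue net of refunds"})
sales = Agent("sales_agent", shared)
finance = Agent("finance_agent", shared)

# Multi-agent coordination: both agents resolve "revenue" identically.
print(sales.step("acct-42", "revenue") == finance.step("acct-42", "revenue"))  # True
print(len(shared.recall("acct-42")))  # 2
```

Because both agents hold a reference to the same store, a handoff between them carries the session trace with it rather than starting from an empty context.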

By 2030, Gartner expects 50% of AI agent deployment failures to trace back to insufficient runtime governance enforcement (Gartner Newsroom, Mar 11, 2026).

What did the Snowflake experiment observe?

One of the few published engineering studies on context layers comes from Snowflake. Their engineering team ran a controlled internal experiment comparing a data agent operating on raw schemas against the same agent operating through an ontology layer that carried business semantics and relationships.

The results were sharp:

- Answer accuracy: improved by roughly 20 percentage points

- Tool calls per query: reduced by about 39%

- End-to-end latency: improved by roughly 20%

The agent stopped guessing. It stopped making extra calls to figure out what the column meant or whether the table was authoritative. A context layer answered those questions before the query ran.

This is a single-source data point from a vendor with an interest in the outcome, so it should be read with that in mind.

What do MIT’s findings actually say about context?

The “95% of GenAI pilots fail” figure has become the most-cited statistic in the context-layer conversation. It usually gets used to argue that enterprises need to buy a context platform.

That reading skips past what the MIT NANDA report actually recommends. The same study found that purchasing AI tools from specialized vendors and building partnerships succeeds about 67% of the time, while internal builds succeed at one-third of that rate (MIT NANDA via Fortune). The report also points to vertical workflow embedding, line-manager empowerment, and starting with back-office automation as the shared characteristics of the enterprises that actually succeed.

The honest summary of the MIT data is narrower than the vendor interpretation. Context matters, and most enterprises are bad at it. The fix is a combination of governed infrastructure and workflow discipline, not any single product purchase.

Andy Chen, an engineer at Abnormal Security, demonstrated a working in-house context layer built in roughly 1,000 lines of Python with 20 parallel agents running over two days. It’s not the only path, but it is a real one, and it shows that some of the work is genuinely buildable.

What enterprises actually need is a layer that governs, versions, and shares context across agents, without rebuilding every time a new workflow launches. Whether that layer is bought, built, or composed depends on scale and existing investment.

Is there a vocabulary problem around the term “context layer” in 2026?

A CDO walking into the context-layer conversation will hear the term used in five different ways:

- Governance infrastructure between data and AI: The framing used by analyst firms and most enterprise platforms

- Decision-trace graph inside vertical agents: Foundation Capital’s and Glean’s usage, where context lives inside a specific workflow agent

- Semantic layer plus governance plus lineage: The enterprise data catalog view, extended for AI

- The information environment passed into an LLM prompt: The “context engineering” meaning from the developer side

- An in-house markdown repo of how the company operates: The practitioner’s view from engineers building small-scale versions in-house

People tend to argue about these definitions without noticing that they’re not synonyms. Before your team commits a budget line, it is worth writing down which of these meanings applies to your use case.

The choice between “we need a governed enterprise layer” and “we need decision traces inside our sales agent” leads to completely different architectures, so make it deliberately.

The cost of not building context infrastructure shows up as project abandonment on one side and regulatory exposure on the other. Organizations are shifting more of their budgets into AI infrastructure to address it.

Deloitte’s State of AI in the Enterprise 2026 survey of 3,235 senior leaders adds a sharper detail. Only one in five companies has a mature governance model for autonomous AI agents, and 86% expect AI infrastructure budgets to more than triple in the next three years.

How to build a working context layer

A context layer that does its job has six components. It includes an enterprise data graph, business glossary, active metadata, context products, governance workflows, and an open delivery interface. Most platforms implement some of them. Full coverage is rare.

- An enterprise data graph that connects tables, dashboards, metrics, business terms, owners, and policies into one queryable structure

- A business glossary that links plain-English terms to technical assets, so “active customer” resolves to a specific SQL definition

- Active metadata that updates in near real time as lineage, quality, and policies change, rather than sitting as static documentation

- Context products that package context into bounded, versioned bundles for a specific domain or agent, similar in spirit to Git repos for code

- Governance workflows that enforce policies at query time, including certification, sensitivity classification, and approval routing

- An open delivery interface (typically MCP, plus SQL and APIs) so any agent platform can read from the same context without bespoke integration

For teams already running dbt, Snowflake, or Databricks, the delivery interface matters most. A context layer that speaks MCP and SQL plugs into existing pipelines without rewriting them. That is the difference between an architecture that ships and one that stalls in integration review.

The test for whether your organization has a context layer is simple. Is every AI agent drinking from the same well? If two agents owned by two teams can produce two different answers to the same governed question, you have context islands, not a context layer.
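
One way to picture that test is to compare how each agent resolves the same governed term. The sketch below is a simplified illustration: `find_context_islands`, the agent names, and the SQL strings are hypothetical, and the whitespace-only normalization is deliberately crude.

```python
def find_context_islands(
    agent_definitions: dict[str, dict[str, str]],
) -> dict[str, set[str]]:
    """Return terms that resolve to more than one SQL definition across agents."""
    seen: dict[str, set[str]] = {}
    for definitions in agent_definitions.values():
        for term, sql in definitions.items():
            # Crude normalization: collapse whitespace so formatting
            # differences alone do not count as a disagreement.
            seen.setdefault(term, set()).add(" ".join(sql.split()))
    return {term: variants for term, variants in seen.items() if len(variants) > 1}


agents = {
    "sales_agent": {
        "active customer": "SELECT id FROM customers WHERE last_login >= CURRENT_DATE - 30",
    },
    "finance_agent": {
        "active customer": "SELECT id FROM customers WHERE contract_status = 'open'",
    },
}

islands = find_context_islands(agents)
print(sorted(islands))  # ['active customer']
```

Any term that appears in the result has at least two competing definitions, which is the "context islands" condition described above.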

How does MCP change the delivery problem?

Model Context Protocol (MCP) has emerged as the standard interface for delivering governed context to AI assistants at query time. MCP usage has scaled quickly, with 97M+ monthly SDK downloads as of early 2026 and adoption across Anthropic, OpenAI, Google, and Microsoft.

For a CDO, MCP matters for two concrete reasons:

- Governance follows the agent: when your AI assistants (Claude, Copilot, Cursor, or similar tools) query through MCP, they read from the same governed context layer your data team manages. Access controls, sensitivity tags, and policies travel with the query.

- Integration overhead drops: instead of bespoke connectors per AI tool, a native MCP server exposes the context once, and any MCP-compatible agent can consume it.
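
The idea behind both points can be sketched in plain Python: one entry point serves every agent, and the access policy is applied inside that entry point rather than inside each agent. This is not the MCP SDK or Atlan's MCP server; `get_context`, `ContextEntry`, and the sample terms and roles are assumptions made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContextEntry:
    definition: str
    sensitivity: str  # "public" or "restricted" in this sketch


CONTEXT = {
    "revenue": ContextEntry("Recognized revenue net of refunds", "public"),
    "customer_tax_id": ContextEntry("Customer tax identifier", "restricted"),
}

# Hypothetical set of callers cleared to read restricted context.
CLEARED_FOR_RESTRICTED = {"compliance_agent"}


def get_context(term: str, caller: str) -> dict:
    """Single entry point for all agents; policy is enforced here, not per agent."""
    entry = CONTEXT.get(term)
    if entry is None:
        return {"term": term, "status": "unknown"}
    if entry.sensitivity == "restricted" and caller not in CLEARED_FOR_RESTRICTED:
        # The sensitivity tag travels with the response even when access is denied.
        return {"term": term, "status": "denied", "sensitivity": entry.sensitivity}
    return {
        "term": term,
        "status": "ok",
        "definition": entry.definition,
        "sensitivity": entry.sensitivity,
    }


for caller in ("support_agent", "compliance_agent"):
    print(caller, get_context("customer_tax_id", caller)["status"])
```

Adding a third agent requires no new connector in this sketch; it simply calls the same function and is subject to the same policy.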

Sridher Arumugham, Chief Data and AI Officer at DigiKey, described the practical effect this way: “Atlan is much more than a catalog of catalogs. It’s more of a context operating system. Atlan enabled us to easily activate metadata for everything from discovery in the marketplace to AI governance to data quality to an MCP server delivering context to AI models.”

Workday’s Joe DosSantos made a similar point from a different angle. “As part of Atlan’s AI Labs, we’re co-building the semantic layers that AI needs with new constructs like context products that can start with an end user’s prompt and include them in the development process. All of the work that we did to get to a shared language amongst people at Workday can be leveraged by AI via Atlan’s MCP server.”

In both cases, the value is the same: context built once, consumed everywhere.

The case for a unified context layer

A single vendor’s retrieval layer is not a unified context layer. It covers one tool’s context. Unification means something stricter: cross-stack, multi-agent, and human-facing. One governed layer that serves analysts querying dashboards and agents executing workflows.

Prukalpa has been explicit about the risk of getting this wrong. “My Sales agent has context. My Customer Success agent has something else. These things are not talking to each other. I have agent sprawl. I have context sprawl.”

At Atlan Activate 2026, Atlan positioned itself as the unified context layer for humans and AI agents. It’s the governed layer that sits across warehouses, BI tools, SaaS apps, and agent platforms, accessible through MCP, A2A, SQL, and APIs. The broader principle behind the announcement is worth separating from any vendor claim. Unification matters because your governance team should own one layer, not six.

A fragmented context layer rebuilds the data silo problem of the last decade, with higher stakes.

What are the signals that tell you the investment case for a context layer has arrived?

Not every enterprise needs a full context layer on day one. The following signals usually mean the decision has landed on your desk, whether you wanted it or not:

- AI pilots reach demo and then stall when moving to production

- Different teams get different answers to the same question from different agents

- Compliance and security teams are pushing back on AI deployments because policies don’t travel with the data

- Agents are being built on top of raw schemas, with prompt engineering doing the work that a context layer should do

- You have more than one AI use case in production, or are planning to, and each one has its own private context store

If three or more of these apply to your organization, the cost of continuing without a context layer is likely already higher than the cost of building one.
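
The three-or-more rule of thumb can be written down as a short self-assessment. The signal identifiers and the `investment_case_arrived` helper below are hypothetical labels chosen for this sketch, paraphrasing the five signals listed above.

```python
SIGNALS = (
    "pilots_stall_before_production",
    "teams_get_conflicting_answers",
    "compliance_pushback",
    "agents_on_raw_schemas",
    "private_context_store_per_use_case",
)


def investment_case_arrived(observed: set[str], threshold: int = 3) -> bool:
    """Apply the rule of thumb: True when at least `threshold` signals are present."""
    unknown = observed - set(SIGNALS)
    if unknown:
        raise ValueError(f"unknown signals: {sorted(unknown)}")
    return len(observed) >= threshold


observed = {
    "pilots_stall_before_production",
    "compliance_pushback",
    "agents_on_raw_schemas",
}
print(investment_case_arrived(observed))  # True
```

The threshold is a parameter because the cutoff is a heuristic; a team with one severe signal, such as regulatory exposure, may reasonably act sooner.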

How Atlan solves for your context layer gap

Atlan is the context layer for AI, built on three capabilities that map directly to the six building blocks above:

- Enterprise Data Graph: metadata and lineage ingested from 80+ connectors into a unified, queryable graph. Field-level examples: `asset.owner`, `asset.description`, `column.lineage`.

- Context Engineering Studio: AI-assisted workflow for building, testing, and deploying context products with versioning, evals, and lifecycle management. Field-level examples: `context_product.version`, `context_product.status`.

- Metadata Lakehouse: Iceberg-native storage that makes governance rules, sensitivity classifications, and access policies machine-readable and enforceable at runtime. Field-level examples: `policy.classification`, `asset.sensitivity_label`.

Atlan has been named a Leader in the 2026 Gartner Magic Quadrant for Data & Analytics Governance. Here’s what Atlan’s customers have observed and documented so far:

- Mastercard cataloged 18M+ assets and 1,300+ glossary terms in year one, building a reusable context foundation across their exchange.

- Workday reported a 5x improvement in AI analyst response accuracy after grounding agents in shared context.

- DigiKey unified six systems and over 1M assets during a supply-chain resilience initiative, supported by more than 1,000 standardized glossary terms.

If you want to see the context layer live, you can watch the context studio demo.

FAQs around the need for a context layer

What is a context layer in enterprise AI?

A context layer is governed infrastructure that sits between enterprise data platforms and AI systems. It supplies business definitions, lineage, quality signals, governance policies, and organizational memory so AI agents reason over the enterprise as it actually works, not just over raw schemas.

Why do AI agents need a context layer?

Agents without context hallucinate, misinterpret metrics, and violate governance rules. A context layer gives every agent access to the same definitions, the same policies, and the same audit trail. Gartner projects that 50% of AI agent deployment failures by 2030 will trace back to insufficient runtime governance enforcement.

What’s the difference between a semantic layer and a context layer?

A semantic layer defines what metrics mean, primarily for BI. A context layer is broader. It includes semantic definitions plus governance, lineage, decision traces, and runtime policy enforcement for AI agents. Semantic layers answer “what is this metric?” Context layers answer who owns it, whether the agent can use it right now, and whether anything has changed since yesterday.

How long does it take to implement a context layer?

Initial deployment typically takes 60–90 days for the first use case. Enterprises that treat context as infrastructure rather than a one-time documentation project see the biggest gains over 12–18 months, as more agents and teams consume the same layer.

Who owns the context layer inside the organization?

The emerging answer is shared ownership. Data teams own the infrastructure, governance teams own the policy layer, and business domain owners certify the meaning inside each domain. CDOs increasingly own the overall program because context sits at the intersection of data, AI, and governance.

The verdict: Do enterprises need a context layer?

The question “do enterprises need a context layer?” stopped being theoretical somewhere in late 2025. It is now a budget-cycle question with measurable consequences on both sides of the decision.

The data is consistent across analyst firms, academic research, and vendor engineering blogs. Enterprise AI investment is rising. Enterprise AI value is not rising at the same rate. The gap is context. The infrastructure response is a governed, shared, active context layer that every agent and every human can read from, versioned and portable enough to outlive any single model or vendor cycle.

For most enterprises in 2026, the real question is no longer whether to build one. It is who owns it, how fast it gets to production, and whether it is unified from the start.