Fivetran’s 2026 benchmark of more than 500 senior data leaders found enterprises now run an average of 328 pipelines and spend 53% of data-engineering time on pipeline maintenance. A unified context layer replaces that fragmentation with a single governed substrate across four dimensions: cross-stack, multi-agent, human-plus-AI, and governed.

Two different products go by this name. Some vendors use the term “unified context layer” for a retrieval product that unifies data access for AI models inside their own platform. The enterprise governance view uses the same phrase for cross-stack infrastructure that governs context for every agent, every human, and every system in the company.

This article is about the second kind.

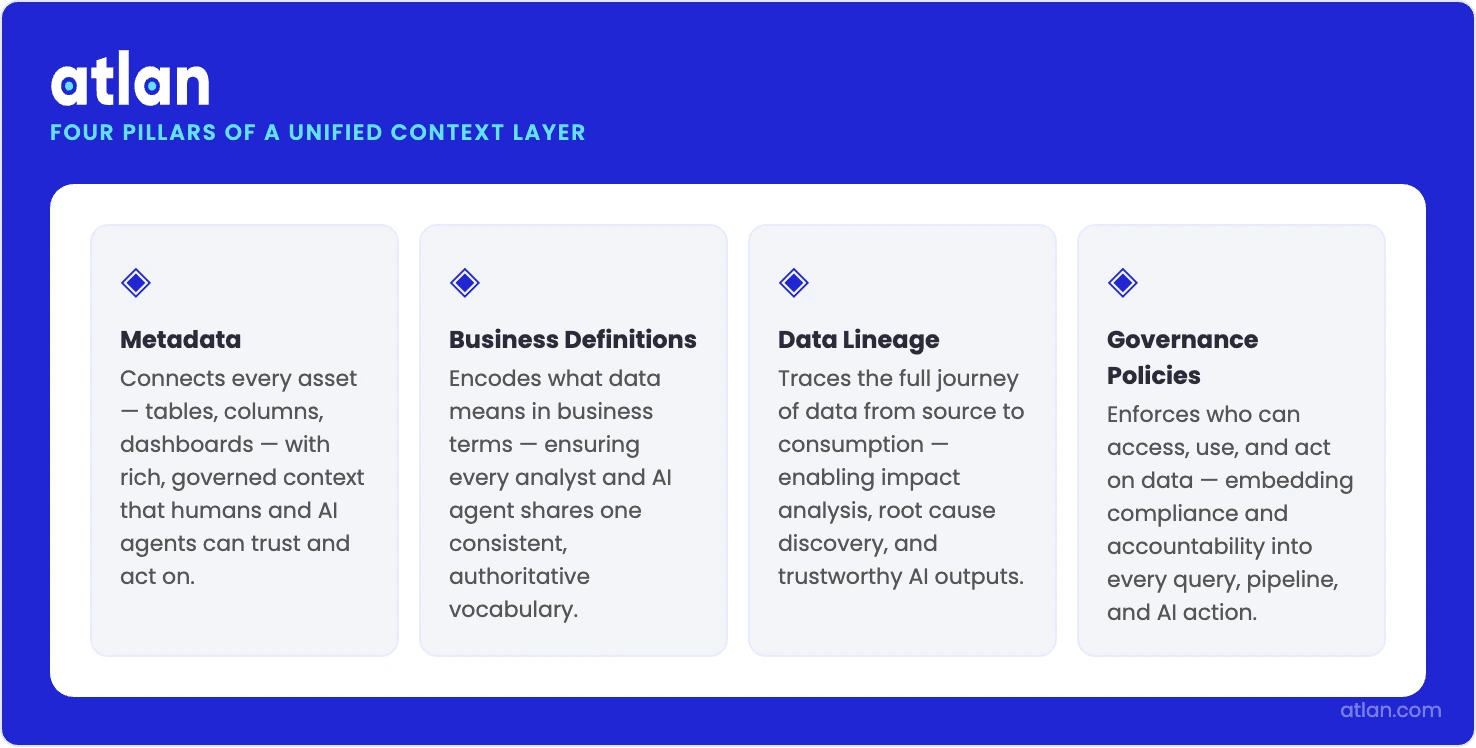

The governed infrastructure connecting metadata, definitions, lineage, and policies for every user and AI agent. Source: Atlan.

What is a unified context layer in practice?

A unified context layer is governed infrastructure that integrates metadata, business definitions, data lineage, and governance policies into a single layer. Humans use it through a catalog. AI agents use it through APIs. Both get context shaped by the same rules. For the foundational primer, start with context layer 101.

Quick facts about a unified context layer

| Attribute | Detail |
|---|---|
| What it is | Governed infrastructure that connects metadata, lineage, and policies into one layer for humans and AI agents |
| Key benefit | Ends context fragmentation; every agent and analyst pulls from the same governed source |
| What unified means | Cross-stack, multi-agent, human and AI, and governed, all four at once, not just retrieval |
| Core architecture | Context graph, active metadata management, and a context product lifecycle |
| Who owns it | The CDO, Chief Data and AI Officer, or Head of Data Platform, as a centralized owner rather than individual engineering or product teams |

Andreessen Horowitz partners Jason Cui and Jennifer Li noted in March 2026 that the industry has settled on many names for the same idea: “context OS, context engine, contextual data layer, ontology, and more.” The goal behind all of them is the same: to tie together messy enterprise data and package it so agents can use it.

A retrieval layer can live inside a unified context layer, but it is not one by itself. Neither is a RAG pipeline. A context layer for enterprise AI earns the word “unified” only when one governed substrate serves every agent and every analyst across every tool.

What separates fragmented from unified context architecture?

In a fragmented world, each AI agent or business tool holds its own context store. Vector databases sit separately. Metadata catalogs don’t speak to each other. Governance rules change system by system. A unified context layer replaces the entire pattern with a single governed substrate where every agent pulls from the same lineage, glossary, and policy enforcement.

| Dimension | Fragmented approach | Unified context layer |
|---|---|---|
| Data sources | Each agent accesses separately | One governed layer for all agents |
| Governance | Per-system, inconsistent | Centralized, policy-enforced |
| Lineage | None or per-tool | Full cross-stack lineage |
| Context versioning | Manual or none | Context-as-code, versioned |
| Human and AI access | Separate systems | One layer serves both |
| Time-to-deployment | Weeks per agent | Reuse context products across agents |

Picture a CDO’s reality. Fifteen AI agents. Six context stores. Each store carries a slightly different definition of the same business term. The finance copilot calculates “revenue” one way; the BI reporting agent calculates it another. The company now has a governance failure, not a data quality failure. You can clean every underlying table and still not fix a definitional fork that sits above them.
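To make the definitional fork concrete, here is a minimal Python sketch, assuming each context store can be reduced to a mapping from business term to a definition string. The store names and SQL expressions are hypothetical. The function groups definitions by term and reports every term whose stores disagree.

```python
from collections import defaultdict

# Hypothetical per-agent context stores: business term -> definition (a SQL expression).
STORES = {
    "finance_copilot": {"revenue": "SUM(invoice_amount) - SUM(refunds)"},
    "bi_reporting_agent": {"revenue": "SUM(order_total)"},
    "sales_assistant": {"revenue": "SUM(invoice_amount) - SUM(refunds)"},
}


def find_definitional_forks(stores: dict[str, dict[str, str]]) -> dict[str, dict[str, list[str]]]:
    """Return terms defined in more than one way, mapped to definition -> stores using it."""
    by_term = defaultdict(lambda: defaultdict(list))
    for store_name, glossary in stores.items():
        for term, definition in glossary.items():
            # Normalize whitespace and case so trivial formatting differences are not flagged.
            key = " ".join(definition.split()).lower()
            by_term[term][key].append(store_name)
    return {
        term: {d: sorted(names) for d, names in defs.items()}
        for term, defs in by_term.items()
        if len(defs) > 1
    }


forks = find_definitional_forks(STORES)
for term, variants in forks.items():
    print(f"{term}: {len(variants)} competing definitions")
    for definition, owners in variants.items():
        print(f"  {definition!r} <- {owners}")
```

Against the sample stores, the check reports `revenue` with two competing definitions. Detection only surfaces the fork; in a unified layer the fix is a single governed glossary entry rather than hand reconciliation across stores.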

Unification changes this in three ways.

- Policies are published once and apply everywhere.

- Lineage reaches the column level across supported systems, so teams can trace AI outputs back to their source columns.

- New agents ship in days because context products are reusable infrastructure, not one-off setup work.

With 53% of data-engineering time already going to maintaining an average of 328 pipelines (per the Fivetran benchmark above), fragmented context piles onto that workload and makes it worse with every new agent.

What “unified” actually means: 4 dimensions of unification

Four specific dimensions define the word: cross-stack coverage, multi-agent access, shared human and AI governance, and policy-enforced context with versioning. Hit one or two, and you have a useful retrieval tool. Hit all four, and you have governance infrastructure. The label holds only when all four dimensions are present at the same time.

What does cross-stack unification mean in practice?

Cross-stack unification means the context layer pulls metadata from every tool in the stack, not just from the retrieval index of a single AI app. The warehouse, the BI tool, the data catalog, and the transformation pipeline all contribute to one shared metadata foundation governed by one set of policies. That foundation is the substrate on which a context graph sits.

- Connects metadata across the warehouse, BI, transformation, catalog, and ML tools.

- Ends semantic drift when different tools define the same entity differently.

- Lets an AI agent walk the lineage from a metric back to its source column across every system it touched.
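A minimal sketch of that lineage walk, assuming the shared graph is stored as an adjacency map from each node to its upstream nodes. Node IDs such as `looker.metric.net_revenue` are hypothetical, and the traversal is plain breadth-first search, not any vendor’s lineage API.

```python
from collections import deque

# Hypothetical cross-stack lineage: each node maps to the upstream nodes it is derived from.
UPSTREAM = {
    "looker.metric.net_revenue": ["dbt.model.fct_revenue.net_amount"],
    "dbt.model.fct_revenue.net_amount": [
        "snowflake.raw.invoices.amount",
        "snowflake.raw.refunds.amount",
    ],
    "snowflake.raw.invoices.amount": ["erp.invoices.amount"],
    "snowflake.raw.refunds.amount": ["erp.refunds.amount"],
}


def trace_to_sources(node: str, upstream: dict[str, list[str]]) -> list[str]:
    """Breadth-first walk upstream; return the root columns (nodes with no parents)."""
    seen = {node}
    queue = deque([node])
    roots = []
    while queue:
        current = queue.popleft()
        parents = upstream.get(current, [])
        if not parents:
            roots.append(current)
        for parent in parents:
            if parent not in seen:  # guard against cycles and shared ancestors
                seen.add(parent)
                queue.append(parent)
    return roots


print(trace_to_sources("looker.metric.net_revenue", UPSTREAM))
```

Because every tool contributes to one graph, a single traversal crosses from Looker through dbt and Snowflake to the source system. With per-tool lineage, the walk would stop at each tool boundary.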

Think of a CDO at a financial services firm running Snowflake, dbt, Looker, Collibra, and three AI copilots. Cross-stack unification means one definition of “revenue” governs every output across all four tools and all three copilots, not a subtly different version living in each one. Our breakdown of context layer vs. semantic layer walks through why this is an architectural choice, not just a UX one.

How do context products work across multiple agents?

Multi-agent unification means every AI agent in the enterprise pulls context from the same governed layer. Context products, curated and versioned packages of metadata and business definitions, get built once and served to whichever agents need them.

- Build context products once, govern them centrally, and deploy them to any requesting agent.

- Conflicting definitions of the same business entity across agents become structurally much harder, because agents draw from the same governed definitions.

- Model Context Protocol (MCP) and similar standards deliver context at request time.

For the discipline behind this, learn more about what context engineering is.
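The build-once, serve-many pattern for context products can be sketched as a small versioned registry. This is a minimal illustration under stated assumptions: `ContextProduct` and `ContextRegistry` are hypothetical names, and a real deployment would serve products over an API or MCP rather than in-process.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ContextProduct:
    """A curated, versioned package of business definitions (hypothetical shape)."""
    name: str
    version: int
    definitions: tuple  # (term, definition) pairs, kept immutable


@dataclass
class ContextRegistry:
    """A single governed registry that every agent reads from."""
    versions: dict = field(default_factory=dict)   # product name -> [ContextProduct, ...]
    consumers: dict = field(default_factory=dict)  # product name -> set of agent names

    def publish(self, name: str, definitions: dict) -> ContextProduct:
        history = self.versions.setdefault(name, [])
        product = ContextProduct(name, len(history) + 1, tuple(sorted(definitions.items())))
        history.append(product)
        return product

    def serve(self, name: str, agent: str) -> ContextProduct:
        """Return the latest published version and record which agent consumed it."""
        self.consumers.setdefault(name, set()).add(agent)
        return self.versions[name][-1]


registry = ContextRegistry()
registry.publish("finance_core", {"revenue": "SUM(invoice_amount) - SUM(refunds)"})

# Two different agents reuse the same product; nothing is rebuilt per agent.
a = registry.serve("finance_core", agent="finance_copilot")
b = registry.serve("finance_core", agent="regulatory_reporting_agent")
print(a is b, a.version, sorted(registry.consumers["finance_core"]))
```

Tracking consumers alongside versions is a deliberate choice in this sketch: it tells the governing team which agents a definition change will reach before it is published.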

How does one layer govern both analysts and AI agents?

Human and AI unification means analysts and agents draw from the same governed metadata substrate. When an analyst searches the catalog and an agent answers a business question, both are looking through the same policy-shaped lens. The shadow-governance problem goes away.

- Analysts reach context through the catalog UI. Agents reach it through APIs or MCP. Both hit the same underlying layer.

- Policy changes apply to humans and agents at the same moment, so you maintain rules once, not twice.

- The audit trail covers human queries and agent queries in one lineage record.
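A minimal sketch of one layer serving both audiences, assuming the catalog UI and the agent API call the same hypothetical `fetch_context` function. The roles, assets, and principal names are illustrative. With one policy table and one audit log, a policy change reaches analysts and agents at once, and both kinds of access land in the same record.

```python
from datetime import datetime, timezone

# One policy table: asset -> roles allowed to read it (hypothetical roles and assets).
POLICIES = {"customers.email": {"privacy_officer"}, "orders.total": {"analyst", "agent"}}
AUDIT_LOG: list[dict] = []  # a single trail for human and agent access alike


def fetch_context(asset: str, principal: str, role: str, channel: str) -> bool:
    """Shared entry point for the catalog UI (channel='ui') and agents (channel='api')."""
    allowed = role in POLICIES.get(asset, set())
    AUDIT_LOG.append({
        "at": datetime.now(timezone.utc).isoformat(),
        "asset": asset,
        "principal": principal,
        "channel": channel,
        "allowed": allowed,
    })
    return allowed


fetch_context("orders.total", "maria", "analyst", "ui")
fetch_context("orders.total", "finance_copilot", "agent", "api")

# Revoking agent access is one edit, and it applies to every agent immediately.
POLICIES["orders.total"].discard("agent")
fetch_context("orders.total", "finance_copilot", "agent", "api")

for entry in AUDIT_LOG:
    print(entry["channel"], entry["principal"], entry["allowed"])
```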

What does governed context-as-code enable?

Governed unification treats context as a managed product: you promote it through environments and keep it behind access control and audit. Context-as-code brings software engineering discipline to metadata and business definitions. Column-level lineage traces every piece of context back to its origin. Policies fire when context is requested, not in a report the next morning.

- Context products move through dev, staging, and production like any software artifact.

- Column-level lineage makes AI outputs traceable to their source columns across the governed stack.

- Policies fire at context delivery time, not after the fact.

- Rollback, testing, and change review become routine, not exceptional.
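Here is a minimal sketch of that lifecycle, assuming each environment simply pins a version of one context product. The `ContextPipeline` class and its methods are hypothetical illustrations, not a product API, and the `approved` flag stands in for a real change-review step.

```python
ENVIRONMENTS = ("dev", "staging", "production")


class ContextPipeline:
    """Tracks which version of a context product each environment runs (hypothetical)."""

    def __init__(self) -> None:
        self.versions: list[dict] = []           # version history; index = version - 1
        self.pinned: dict[str, int] = {}         # environment -> pinned version number
        self.history: dict[str, list[int]] = {}  # environment -> previously pinned versions

    def commit(self, definitions: dict) -> int:
        """Record a new version and pin it to dev."""
        self.versions.append(dict(definitions))
        self._pin("dev", len(self.versions))
        return len(self.versions)

    def promote(self, source: str, approved: bool) -> None:
        """Move the source environment's version one step toward production after review."""
        if not approved:
            raise PermissionError("change review required before promotion")
        position = ENVIRONMENTS.index(source)
        if position == len(ENVIRONMENTS) - 1:
            raise ValueError("production is the last environment")
        self._pin(ENVIRONMENTS[position + 1], self.pinned[source])

    def rollback(self, env: str) -> int:
        """Re-pin the environment to the version it ran before its latest change."""
        self.pinned[env] = self.history[env].pop()
        return self.pinned[env]

    def diff(self, old: int, new: int) -> dict:
        """Show each term whose definition differs between two versions."""
        a, b = self.versions[old - 1], self.versions[new - 1]
        return {k: (a.get(k), b.get(k)) for k in sorted(a.keys() | b.keys()) if a.get(k) != b.get(k)}

    def _pin(self, env: str, version: int) -> None:
        if env in self.pinned:
            self.history.setdefault(env, []).append(self.pinned[env])
        self.pinned[env] = version


pipe = ContextPipeline()
pipe.commit({"active_customer": "ordered in last 90 days"})
pipe.promote("dev", approved=True)
pipe.promote("staging", approved=True)
pipe.commit({"active_customer": "ordered in last 30 days"})
pipe.promote("dev", approved=True)
pipe.promote("staging", approved=True)
print(pipe.diff(1, 2))
print(pipe.pinned["production"], pipe.rollback("production"))
```

Pinning versions per environment, rather than copying definitions into each one, is what keeps the diff and rollback cheap in this sketch: both are lookups against a single history.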

Imagine a new regulation changes how customer data can be used. Under governed unification, the CDO’s team publishes one policy update, and it applies to every agent, in every environment, with a full audit trail. Under fragmentation, the same change takes manual updates across six separate stores and a spreadsheet to track progress.

Ownership questions come next, and we cover them in our guide to context layer ownership.

Why fragmented context fails in production

Fragmented context fails in production because governance gaps compound as you scale. Each new agent adds a new store. Each store adds its own definitions, its own lineage gaps, and its own access rules. The symptoms are familiar: AI outputs that contradict each other, audit trails you cannot reconstruct, and a maintenance bill that grows with every agent you deploy.

Four failure modes show up over and over:

- Semantic inconsistency at scale: When 15 agents each define “active customer” differently, no report or AI output is trustworthy without manual reconciliation. That is a governance break, not a data-quality one.

- Ungovernable audit trails: Regulators want explainability for AI outputs. Stanford’s 2026 AI Index recorded 362 AI incidents in 2025, up from 233 in 2024. Fragmented stores cannot produce a unified audit record.

- Maintenance debt that compounds: Adding an agent means building a new store, duplicating definitions, and keeping them in sync by hand. At any real scale, this is unsustainable.

- Shadow governance risk: Context built outside the governed catalog becomes a shadow context. The CDO cannot see it. Policies cannot touch it. Lineage cannot audit it.
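The shadow-context failure mode can be checked mechanically. A minimal sketch, assuming you can inventory each agent’s context sources and list the asset IDs registered in the governed catalog (every agent name and URI below is hypothetical): anything an agent reads that the catalog does not know about is shadow context.

```python
# Hypothetical inventory of where each agent actually pulls context from.
AGENT_SOURCES = {
    "finance_copilot": {"catalog://glossary/revenue", "s3://team-a/prompts/revenue.md"},
    "support_bot": {"catalog://glossary/active_customer", "vectordb://support-index"},
}
# Asset IDs registered (and therefore governed) in the catalog.
CATALOG = {"catalog://glossary/revenue", "catalog://glossary/active_customer"}


def find_shadow_context(agent_sources: dict[str, set[str]], catalog: set[str]) -> dict[str, list[str]]:
    """Map each agent to the sources it uses that the governed catalog cannot see."""
    report = {}
    for agent, sources in agent_sources.items():
        ungoverned = sorted(sources - catalog)
        if ungoverned:
            report[agent] = ungoverned
    return report


print(find_shadow_context(AGENT_SOURCES, CATALOG))
```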

The data may be perfectly correct. It is the context sitting above the data that is ungovernable. This is a fresh category of infrastructure risk, one that did not exist before enterprise AI went to production, and it is the trigger that drives CDOs toward a dedicated context layer for enterprise AI.

For a CFO or COO, the cost shows up in three numbers: the engineering hours lost to per-agent context builds, the time-to-deployment penalty on every new AI use case, and the audit exposure that grows with each ungoverned store.

How do you evaluate vendors for a unified context layer?

When you evaluate a unified context layer vendor, focus on governance completeness, not retrieval performance. Most vendors will sell you on query latency and vector accuracy. A CDO needs to know something different: does the platform govern context across the whole stack, does it serve humans and agents from the same layer, and does it enforce policies at delivery time? Five questions separate real unification from marketing unification.

Q1. Does it serve both humans and AI agents from one governed layer?

- Look for: one metadata layer with UI access for analysts and API or MCP access for agents. Not two systems that sync on a cron.

- “Good” looks like: policy changes hit human and agent access at the same moment. One audit trail covers both. There is no separate “AI catalog” running alongside the human one.

- Most vendors claim: “Our platform supports AI agents.” Check whether that is a bolt-on RAG layer or the same governed layer analysts use.

Q2. Does it have cross-stack context, not just one tool’s retrieval layer?

- Look for: native integrations with warehouse, BI, transformation, catalog, and ML platforms. Not scheduled metadata syncs.

- “Good” looks like: column-level metadata from dbt, Snowflake, Looker, and Databricks visible in one traversable context graph. Lineage crosses system boundaries.

- Most vendors claim: “100+ integrations.” Check whether they contribute to a single unified graph or just appear as assets side by side.

Q3. Does it version and test context products like code?

- Look for: context-as-code practices, version history, environment promotion, and rollback.

- “Good” looks like: a context product change goes through review before it reaches production. You can see a diff of exactly what changed. Rollback takes one click, not three days of recovery.

- Most vendors claim: “Export and import of metadata.” That is not the same as versioned, reviewable context products.

Q4. Does it have column-level lineage, not just access control?

- Look for: lineage that traces an AI output back to a source column, not a table or a dataset.

- “Good” looks like: an AI-generated number can be traced from metric to column to transformation to source table to source system. End-to-end provenance.

- Most vendors claim: “Data lineage.” Check the granularity. Table-level lineage is not enough when a regulator asks which field produced a given output.

Q5. Does it enforce governance policies at context delivery time?

- Look for: policies that fire when context is requested. Not batch audits overnight.

- “Good” looks like: an agent asking for restricted data gets blocked or masked at request time, not flagged in tomorrow’s report.

- Most vendors claim: “Governance built in.” Check whether that means enforcement at delivery time or post-hoc monitoring. For more on the enforcement side, see our AI governance overview.
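To show what enforcement at delivery time means for Q5, here is a minimal sketch, assuming columns carry classification tags and a policy maps each tag to an action per requester role. The tags, roles, and `deliver_context` function are hypothetical. The policy runs inside the request path, so the agent never receives the raw value.

```python
# Hypothetical policy: classification tag -> role -> action ("allow", "mask", "block").
POLICY = {
    "pii": {"privacy_officer": "allow", "analyst": "mask", "agent": "mask"},
    "restricted": {"privacy_officer": "allow", "analyst": "block", "agent": "block"},
}
COLUMN_TAGS = {"email": "pii", "salary": "restricted", "region": None}


def deliver_context(row: dict, role: str) -> dict:
    """Apply policy per column at request time; untagged columns pass through."""
    delivered = {}
    for column, value in row.items():
        tag = COLUMN_TAGS.get(column)
        # Roles missing from a tag's policy default to "block" (fail closed).
        action = "allow" if tag is None else POLICY[tag].get(role, "block")
        if action == "allow":
            delivered[column] = value
        elif action == "mask":
            delivered[column] = "***"
        # "block": the column is omitted entirely
    return delivered


row = {"email": "ana@example.com", "salary": 120000, "region": "EMEA"}
print(deliver_context(row, "agent"))
print(deliver_context(row, "privacy_officer"))
```

Post-hoc monitoring would log the same request and flag it the next day; here the masked or missing column is the only thing the agent ever sees.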

How Atlan built the unified context layer for enterprise AI

Enterprise AI governance needs infrastructure that ties catalog metadata, column-level lineage, business glossaries, and quality scores into one governed layer usable by humans and agents alike. Atlan ships this as the unified context layer: a governed substrate where context products get built, versioned, and deployed to every agent in the enterprise from one place.

As AI scales inside a large organization, a structural context problem shows up quickly. Fifteen agents across different business units. Six context stores, each owned by a different team, each with its own definition of the same entity. No single place where the CDO can govern any of it. New agents take weeks to bring online because context configuration doesn’t travel between them. Audit trails are incomplete by design.

What most organizations really need is a way to govern context for AI agents using the same infrastructure they already use to govern context for human analysts.

Approach

Atlan’s architecture wires catalog metadata, column-level lineage, business glossary, and data quality scores into a cross-stack metadata lakehouse. The metadata graph it holds is the governed substrate. Every AI agent that asks for context draws from it under the same policies governing human analyst access. Metadata from Snowflake, dbt, Looker, and Databricks lives in one traversable structure, not in six siloed catalogs. For the architectural reasoning, see our deep dive on context graph vs. ontology.

The Context Engineering Studio gives data and AI teams a place to build context products, version them, and promote them through environments, just as developers ship code. A context product built for the finance copilot can be reused for the regulatory reporting agent without a rebuild.

Active metadata management keeps context current with the live data. Rename a column, and the graph updates. The change then propagates to every agent drawing from that context product.
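A minimal sketch of that propagation, assuming metadata changes arrive as events and each context product records which columns it references. The column names, product names, and the `on_column_renamed` handler are hypothetical. The design choice it illustrates is that agents subscribe to context products, not to raw columns, so one graph update reaches all of them.

```python
from collections import defaultdict

# Context product -> columns it references (hypothetical).
PRODUCT_COLUMNS = {
    "finance_core": {"fct_revenue.net_amount", "dim_customer.region"},
    "regulatory_reporting": {"fct_revenue.net_amount"},
}
# Context product -> agents subscribed to it (hypothetical).
SUBSCRIBERS = {
    "finance_core": ["finance_copilot"],
    "regulatory_reporting": ["regulatory_reporting_agent"],
}
notifications = defaultdict(list)  # agent -> messages it has been sent


def on_column_renamed(old: str, new: str) -> list[str]:
    """Update every product that references the column and notify its agents."""
    touched = []
    for product, columns in PRODUCT_COLUMNS.items():
        if old in columns:
            columns.discard(old)
            columns.add(new)
            touched.append(product)
            for agent in SUBSCRIBERS.get(product, []):
                notifications[agent].append(f"{product}: {old} -> {new}")
    return touched


print(on_column_renamed("fct_revenue.net_amount", "fct_revenue.net_revenue_usd"))
print(dict(notifications))
```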

The operational payoff shows up quickly. New agents ship in days, not weeks, because they draw on existing context products rather than requiring a custom store. Ownership moves from engineering heroics to governed infrastructure that the CDO can see, audit, and evolve.

Sridher Arumugham, CDAO at DigiKey, offered the one-line framing of what unification actually delivers: “Atlan is much more than a catalog of catalogs. It’s more of a context operating system.”

The unified context layer is the next phase of Atlan’s governance platform, extending governed context from human analysts to AI agents without rebuilding the infrastructure underneath.

Frequently asked questions about unified context layers

What is a unified context layer for enterprise AI?

A unified context layer for enterprise AI is governed infrastructure that connects data catalog metadata, business definitions, data lineage, and governance policies into one layer accessible by AI agents and human analysts alike. It replaces fragmented stores (separate vector databases, disconnected catalogs, per-system governance) with one source of governed context for the whole enterprise.

Context layer vs. unified context layer: what is the difference?

A context layer provides context to AI systems. Usually, that means a retrieval index or metadata store serving one application or team. A unified context layer goes wider. It is cross-stack and multi-agent, serves every agent and every human from one governed substrate, enforces consistent policies across every access point, and maintains full lineage across every tool in the stack.

How does a unified context layer work?

It connects metadata from every tool in the stack into one governed context graph. Humans reach that graph through a catalog interface. Agents access it via APIs or protocols such as MCP. Governance, versioning, and lineage tracking cover all access points simultaneously.

What does “unified” mean in the unified context layer?

It covers four dimensions. Cross-stack (one layer for every tool). Multi-agent (one layer for every agent). Human plus AI (one governed layer for analysts and agents both). Governed (policy-enforced, lineage-tracked, versioned like code). A context layer that only covers one or two dimensions is not unified, even if the vendor uses the word.

How does a unified context layer support AI agents?

It delivers governed, consistent context at request time through APIs or MCP-style protocols. Agents pull from context products, curated packages of metadata and definitions, instead of building their own stores. Because every agent draws from the same governed layer, context stays consistent across agents, and one set of governance policies covers them all.

What vendors provide a unified context layer?

Vendors fall into two camps. Some sell a product called the unified context layer, focused on retrieval-layer unification for AI models inside their platform. Atlan sells a unified context layer as enterprise governance infrastructure, built on an active metadata management platform and covering the cross-stack, multi-agent, and human-plus-AI use cases. One is a retrieval product. The other is governance infrastructure.

How do you move from a fragmented to a unified context layer?

Three steps work well. First, audit your existing context stores and map the lineage gaps. Second, set up a governed context graph that connects metadata across every tool in the stack. Third, migrate per-agent stores to reusable context products governed by that central layer, starting with the agents that share the most business entities.
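The ordering rule in the third step can be sketched in a few lines. This assumes the step-one audit produces, for each agent, the set of business entities its private store defines; the agent and entity names are hypothetical. Entities used by more than one agent count as shared, and agents covering the most shared entities migrate first.

```python
from collections import Counter

# Output of the step-one audit: agent -> business entities its private store defines (hypothetical).
AUDIT = {
    "finance_copilot": {"revenue", "customer", "invoice"},
    "bi_reporting_agent": {"revenue", "customer", "region"},
    "support_bot": {"customer", "ticket"},
    "hr_assistant": {"employee"},
}


def migration_order(audit: dict[str, set[str]]) -> list[tuple[str, int]]:
    """Rank agents by how many of their entities are shared with at least one other agent."""
    usage = Counter(entity for entities in audit.values() for entity in entities)
    shared = {entity for entity, count in usage.items() if count > 1}
    scores = {agent: len(entities & shared) for agent, entities in audit.items()}
    # Highest overlap first; ties broken alphabetically for a stable order.
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))


print(migration_order(AUDIT))
```

In this sample, `revenue` and `customer` are the shared entities, so the two agents defining both migrate first and `hr_assistant`, which shares nothing, goes last.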

The infrastructure shift: from context chaos to governed context

Unified context layers mark an infrastructure shift in enterprise AI governance. The move is from per-agent configuration maintained by individual engineering teams to governed context products maintained by one platform and accessible to every agent and analyst in the enterprise. That is what makes AI governance auditable, scalable, and ownable.

The fragmented era gave us shadow governance, semantic inconsistency, and audit trails no one could reconstruct. The unified era turns context governance into enterprise infrastructure. The CDO owns it, governs it, and audits it. It no longer lives in scattered Slack threads and team wikis.