Most AI agent failures are not model failures. They are context failures. The metadata, definitions, ownership, and lineage that agents need to act correctly are missing, stale, or ungoverned. Atlan’s context layer closes this vacuum: it surfaces what data means, who owns it, and what rules govern its use, making all of it queryable by AI agents at inference time from a single certified source.

A context vacuum is the condition where AI agents and data workflows operate without the metadata they need to produce reliable outputs. Gartner predicts more than 40% of agentic AI projects will be canceled by the end of 2027 (Gartner, June 2025). The failure is rarely the model. It is the missing context, including definitions, ownership, lineage, freshness signals, and business rules, that AI agents need to produce outputs humans can trust.

| Quick Fact | Detail |
|---|---|
| What it is | The condition where AI agents and data systems operate without the metadata (definitions, lineage, ownership, freshness) needed to produce reliable outputs |
| Who it affects | Data teams building AI agents, ML engineers, CDOs managing AI readiness, AI platform teams in production |
| Primary cause | Fragmented data catalogs, manual context assembly, no active metadata propagation to the systems that need it at query time |
| Business impact | AI hallucinations, failed production deployments, inconsistent metrics across teams, unauditable data decisions |
| How to detect it | Use the Context Vacuum Scorecard in this article: 10 binary questions; score 0-3 indicates a critical vacuum |
| How to fix it | Build an enterprise context layer with active metadata, lineage, and unified business definitions delivered to agents at query time |

Your team spent six weeks building a LangGraph agent to answer analyst questions about product revenue. In the demo, it is flawless. It pulls from Snowflake, references correct metric definitions, and surfaces the right owner when data looks off. Then you push it to production. Within three days, it starts returning wrong numbers. The metric “ARR” means one thing in Snowflake and something different in Salesforce. The dbt model the agent is reading from was updated two days ago with no lineage signal to the agent. The governance policy restricting access to EMEA revenue data was never communicated to the LLM context window. Nobody flagged it. The agent answered anyway. That six-week sprint just created a liability instead of a capability.

This is what a context vacuum looks like in production.

The cost of a context vacuum used to be confused analysts and inconsistent dashboards. With AI agents running in production, the cost is decisions made at machine speed on false premises, at enterprise scale, with no human in the loop to catch them.

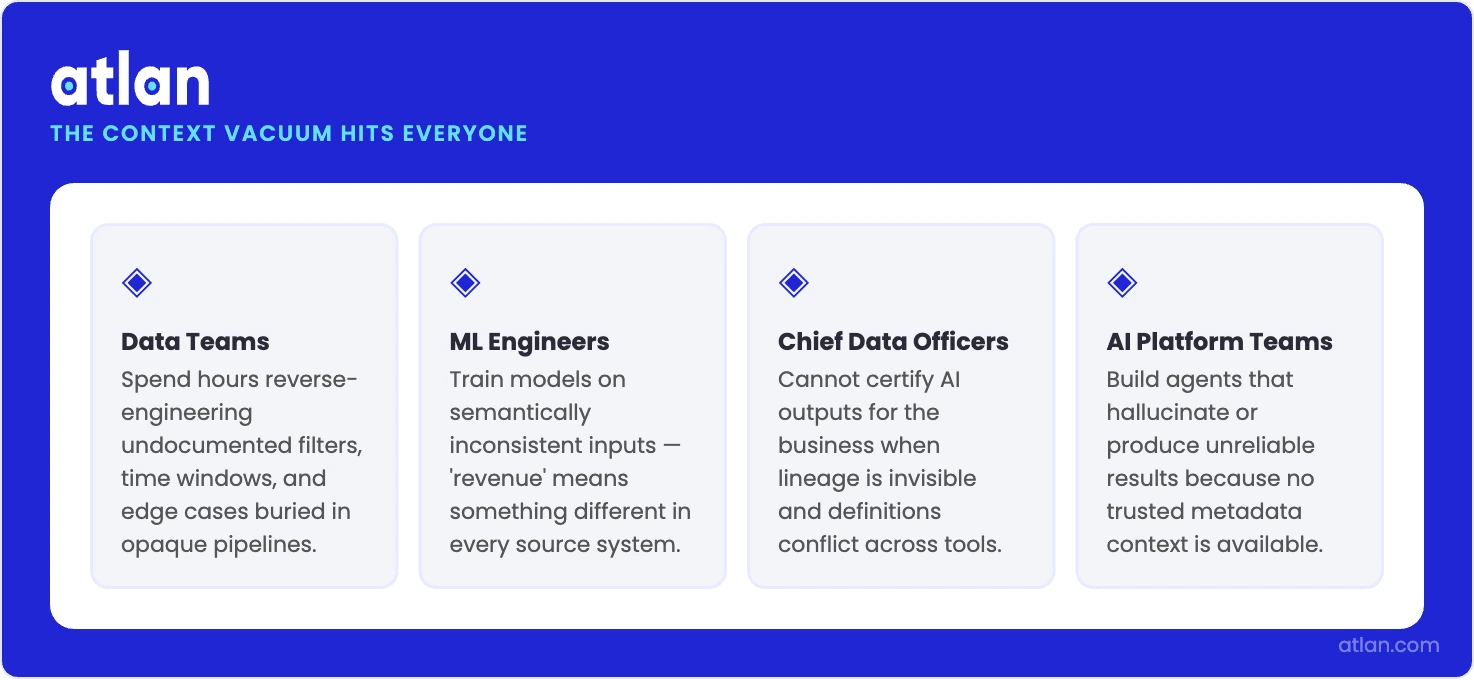

The context vacuum hits every team that depends on data and AI to make decisions. Source: Atlan.

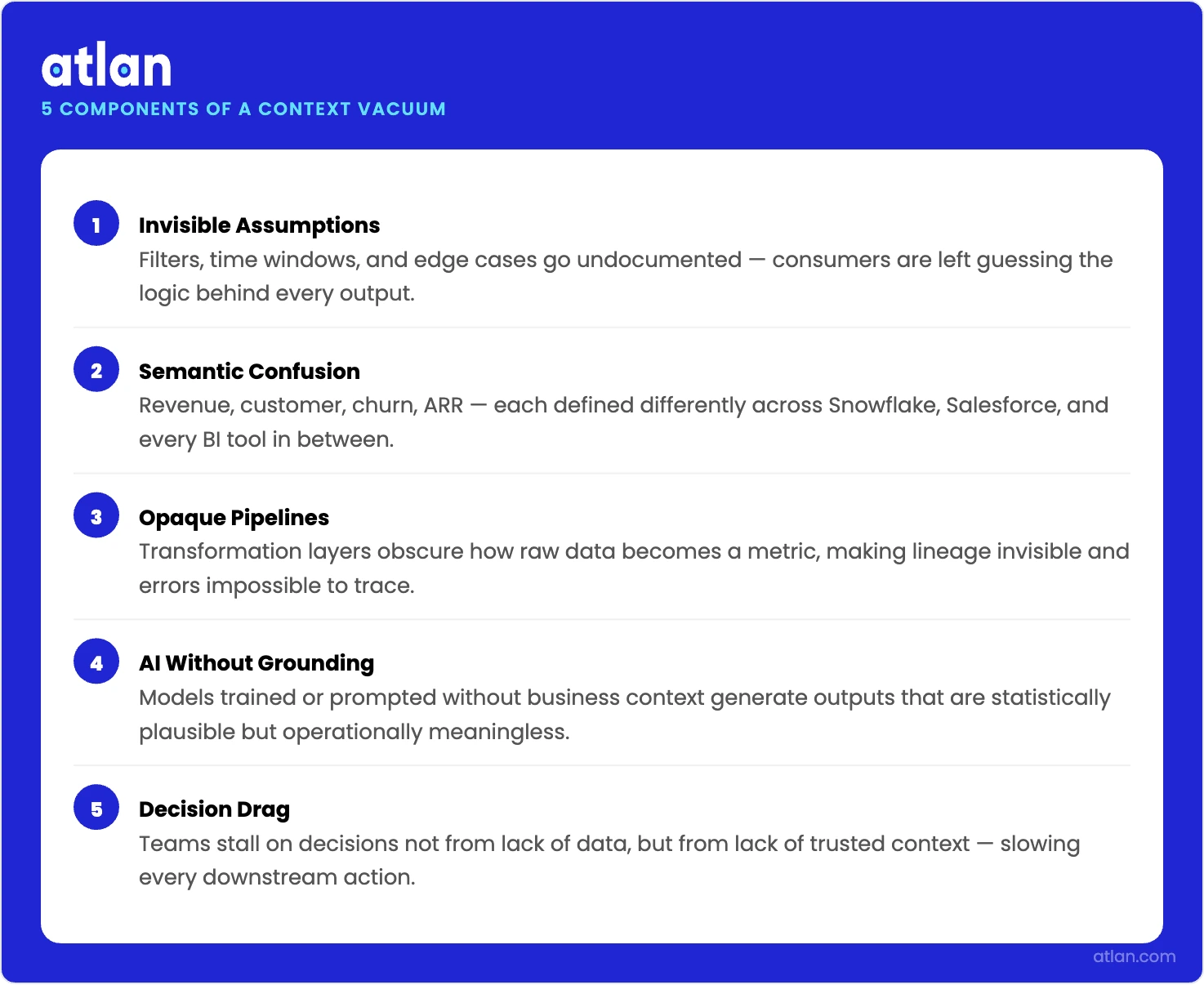

The five structural components of a context vacuum

A context vacuum has five structural components that show up in a predictable sequence.

Invisible assumptions come first: the filters, time windows, and edge cases behind a dataset that nobody documented, leaving consumers of data and AI outputs to guess at the logic behind what they see. Semantic confusion follows: core business terms like revenue, customer, churn, and ARR carry distinct definitions across Snowflake, Salesforce, and every BI tool in between, each set by a different team at a different time. Opaque pipelines make the problem worse: transformation layers may be technically correct, but the original business intent is never recorded anywhere. By the time an LLM encounters this environment, it has no grounding. It produces fluent, confident answers from incomplete inputs with no mechanism to flag what it does not know. The downstream result is decision drag: meetings that stall on “which number is right?” instead of acting on what the data shows.

Five structural failure modes that drain meaning from data and AI systems. Source: Atlan.

Why context vacuums happen in modern data and AI

Context vacuums form when teams scale tooling faster than shared understanding. The pattern repeats across organizations of every size, and it accelerates as AI adoption outpaces the governance infrastructure needed to support it.

Fragmented knowledge across tools and time

Context lives scattered across Confluence pages, Jira tickets, Slack threads, and stale slide decks. Each tool captures a fragment of the truth. No layer connects those fragments to the AI agents and BI tools that need them when a query runs. When a new analyst or a new agent needs to understand a dataset, they either find a human to ask or they proceed without context.

Metric sprawl and semantic drift

As teams grow, similar metrics acquire different logic. Revenue in the data warehouse reflects recognized revenue. Revenue in the CRM reflects booked ARR. Revenue in the BI tool for the board deck reflects a third definition that accounts for specific segment exclusions agreed upon in a Slack thread two years ago. These differences are invisible until someone compares numbers across systems.

Pipeline complexity hides intent

Modern data stacks introduce transformation layers through dbt, Spark, and orchestration tools where the technical logic is correct but the original business intent is never recorded. A column named net_revenue_adjusted passes every data quality check and still means something different to Finance than it does to Sales.

LLM adoption outpaces governance

AI assistants and AI agents are deployed into environments where governance has not caught up. The agent accesses what it can reach. It answers based on what it finds. When the metadata an agent needs (lineage, ownership, access policies, freshness signals) is not connected to the system it is querying, the agent fills the gap with inference. That inference is the hallucination.

Symptoms of a context vacuum

Context vacuums announce themselves through friction. The signals appear in different forms depending on where in the stack the vacuum exists.

Analytics and BI teams get different numbers from the same data

Multiple dashboards answer the same business question with different numbers. Meetings open with “which number is right?” before any analysis can begin. The same metric has three definitions depending on which team you ask. Analysts spend the first half of every sprint validating data rather than analyzing it. Shadow spreadsheets proliferate because people trust their own calculations more than shared assets. These are all symptoms of a context vacuum at the analytics layer.

AI copilots lose user trust after the first few weeks

Users describe the AI as sounding smart but not trustworthy. The agent returns an answer that looks correct but cannot be traced back to a source. When users probe for methodology, the agent either fabricates a plausible explanation or surfaces a definition that does not match what the data team uses. Adoption stalls after the first few weeks because users stop relying on the AI for anything consequential. That abandonment pattern is a context vacuum revealing itself. The five structural failure modes behind these symptoms follow a predictable pattern across AI deployments.

Governance audits become multi-week reconstruction exercises

A routine data access request triggers a week-long email chain because no one can quickly confirm who owns the dataset, whether it contains PII, or what downstream systems depend on it. An audit reveals that three AI tools consumed a dataset after it was reclassified as restricted, but no alert was ever sent. A governance policy change in one system never reached the AI agents consuming data covered by that policy. EMEA-specific regulations were documented somewhere, but not in a location the LLM context window can reach.

The context vacuum scorecard

Answer yes or no to each question about your current data and AI environment.

- Does your AI agent know when a dbt model it depends on has changed?

- Can your agent access consistent definitions for core metrics (revenue, churn, ARR) across Snowflake AND Databricks?

- Does your data catalog automatically update when upstream source tables change?

- Can you trace any AI output back to its source data in under 10 minutes?

- Do all agents in your stack share the same business glossary, or does each have its own context?

- Are PII and governance policies automatically communicated to agent pipelines when they change?

- Does your agent know who owns the data it is querying and whether that owner has validated it?

- Can agents access metadata about data freshness (when was this last updated?) before acting?

- Are semantic definitions versioned, so agents can reason about historical metric definitions?

- If you added a new data platform tomorrow, would agents on the new platform have the same context as agents on existing platforms?

Scoring:

- 0–3 yes: Your agents are operating blind. This is a critical context vacuum: do not scale any AI deployment until the infrastructure gaps are addressed.

- 4–6 yes: Partial vacuum. You have some context in place, but the gaps are creating production failures. The work is connecting what already exists to the systems that consume it.

- 7–10 yes: You have the infrastructure for reliable agent outputs. The next step is making that context active and current rather than static.
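The scoring rule above is simple enough to automate. The following is a minimal Python sketch, not an official tool: the function name `score_context_vacuum` and the band label `"context-ready"` for the 7–10 range are assumptions introduced here for illustration.

```python
from typing import Sequence


def score_context_vacuum(answers: Sequence[bool]) -> tuple[int, str]:
    """Count the 'yes' answers to the 10 scorecard questions and map them to a band."""
    if len(answers) != 10:
        raise ValueError("the scorecard has exactly 10 questions")
    yes_count = sum(1 for answer in answers if answer)
    if yes_count <= 3:
        band = "critical vacuum"   # 0-3 yes
    elif yes_count <= 6:
        band = "partial vacuum"    # 4-6 yes
    else:
        band = "context-ready"     # 7-10 yes (label is this sketch's shorthand)
    return yes_count, band


# Example: yes to the first five questions, no to the rest.
print(score_context_vacuum([True] * 5 + [False] * 5))
```

Keeping the answers as a plain list of booleans makes it easy to re-run the scorecard per domain or per platform and compare the results over time.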

Real-world examples of context vacuum

The pattern most teams recognize first is the demo-to-production gap. An AI assistant performs well in a controlled demo because the context was manually assembled: the right definitions were provided, the right time windows were set, the right dataset was selected. In production, that manual assembly does not scale. The agent encounters a metric it has seen before but in a different context, a dataset it was not given explicit instructions about, a governance policy it was never told about. The output degrades. Hallucinations increase. Users lose trust.

The second pattern is the cross-system definition problem. If your team is manually reconciling what “ARR” means between Snowflake and Salesforce before every agent run, you have a context vacuum. If your BI tool and your AI copilot are pulling from the same warehouse but surfacing different numbers because each was configured with different metric logic, you have a context vacuum. If a governance policy change in your catalog does not automatically propagate to the AI tools consuming data governed by that policy, you have a context vacuum. The manual workarounds that hold these systems together are the vacuum itself.

Business impact of a context vacuum

Decision quality and speed

Teams operating in a context vacuum spend real time reconciling definitions and re-running analyses before any decision can reach the agenda. When reconciliation fails, decisions are delayed or made on wrong premises. Neither shows up as a line item in sprint planning, but both show up in outcomes.

Trust and adoption

When people cannot explain where a number came from, they stop using it. Shadow spreadsheets replace shared dashboards. Analysts duplicate work because they do not trust the canonical source. AI tools get abandoned after the first high-profile failure. The trust deficit compounds: the less the shared infrastructure is used, the less it is maintained, and the less it is maintained, the less trustworthy it becomes.

Operational and financial risk

Upstream changes in source systems silently break downstream AI outputs and KPIs. The breakage is often discovered only after a stakeholder flags an anomaly or after a decision has already been made and acted on. Gartner predicts more than 40% of agentic AI projects will be canceled by the end of 2027 due to escalating costs, unclear ROI, and inadequate risk controls. Missing context is a primary driver of those risk-control failures.

Compliance and audit exposure

AI data governance requires documented evidence of where data came from, who approved its use, and what controls were in place when an AI system made a decision. Organizations operating in a context vacuum cannot produce that evidence quickly. What should be a straightforward audit response becomes a multi-week reconstruction exercise that exposes gaps that were always present but never documented.

How can you close a context vacuum?

Define a context checklist for high-stakes datasets and AI-consumed metrics: definition, owner, source systems, data lineage, freshness expectation, caveats, and applicable governance policies. This is not documentation for its own sake. It is the minimum information an AI agent needs to use an asset reliably. Assign clear owners and data stewards for each asset so there is an explicit human accountable for keeping context current. Teams assessing their baseline should start with an AI readiness evaluation to identify which assets are already context-ready and which carry the highest vacuum risk.
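One way to make the checklist enforceable is to model it as a record and report which fields are still empty. This is a minimal Python sketch under stated assumptions: the class `AssetContext`, its field names, and the helper `missing_context` are hypothetical and do not correspond to any catalog's schema.

```python
from dataclasses import dataclass, field, fields


@dataclass
class AssetContext:
    """Minimum context for one dataset or metric (hypothetical field names)."""
    name: str
    definition: str = ""
    owner: str = ""
    source_systems: list[str] = field(default_factory=list)
    lineage: list[str] = field(default_factory=list)  # upstream asset names
    freshness_expectation: str = ""                   # e.g. "daily by 06:00 UTC"
    caveats: list[str] = field(default_factory=list)
    governance_policies: list[str] = field(default_factory=list)


def missing_context(asset: AssetContext) -> list[str]:
    """Return checklist fields that are still empty for this asset.

    Empty means "not yet documented" in this sketch; record an explicit
    entry such as "none known" when a field genuinely has nothing to report.
    """
    return [
        f.name for f in fields(asset)
        if f.name != "name" and not getattr(asset, f.name)
    ]


arr = AssetContext(name="arr", definition="annual recurring revenue", owner="finance")
print(missing_context(arr))
```

A check like this can run in CI or a scheduled job so that an asset is not exposed to agents until its list of missing fields is empty.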

Build and operationalize a shared business glossary

Define core business terms in plain language with calculation rules, approved variants, and which systems each definition applies to. The business glossary must be connected to the tools that consume it, not stored in a separate Confluence page that agents cannot reach. When “ARR” has one definition in the glossary and a different one in the warehouse, the glossary is not being used. The active metadata layer must deliver glossary definitions to agents and BI tools when they run, not store them somewhere they cannot access. A well-structured data catalog is the foundation that makes this delivery possible, connecting definitions, ownership, and lineage in a single queryable layer.
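A glossary that records which systems each approved variant applies to can be queried by system, so an agent never has to guess between definitions. The following is a minimal Python sketch, assuming a hypothetical in-memory glossary; `TermVariant`, `GLOSSARY`, `resolve`, the system names, and the calculation strings are all illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TermVariant:
    term: str
    definition: str
    calculation: str         # plain-language calculation rule
    systems: frozenset[str]  # systems this approved variant applies to


# Illustrative entries only; real definitions come from the glossary owners.
GLOSSARY = [
    TermVariant("revenue", "recognized revenue",
                "sum of revenue recognized in the period", frozenset({"warehouse"})),
    TermVariant("revenue", "booked ARR",
                "annualized value of booked contracts", frozenset({"crm"})),
]


def resolve(glossary: list[TermVariant], term: str, system: str) -> TermVariant:
    """Return the single approved variant of `term` for `system`, or fail loudly."""
    matches = [v for v in glossary if v.term == term and system in v.systems]
    if len(matches) != 1:
        # Zero or several matches means the glossary cannot ground the agent.
        raise LookupError(f"no single approved definition of {term!r} for {system!r}")
    return matches[0]


print(resolve(GLOSSARY, "revenue", "crm").definition)
```

Raising an error when no single variant matches is a deliberate choice in this sketch: a missing or ambiguous definition should stop the agent rather than let it fill the gap with inference.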

Lineage should factor into change management

Before any significant transformation change is deployed, require a lineage check: what AI agents, dashboards, and downstream pipelines depend on the affected model? Column-level lineage must be documented and accessible to the tools analysts and AI agents use daily. When a dbt model changes, every agent consuming that model should receive a signal. This is not achievable with static documentation. It requires active metadata infrastructure.
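At its core, a lineage impact check is a graph traversal: start from the changed model and collect everything downstream. The following is a minimal Python sketch, assuming lineage is already available as a mapping from each asset to its direct consumers; the asset names and the function `downstream_dependents` are hypothetical.

```python
from collections import deque

# Hypothetical lineage: each asset maps to the assets that consume it directly.
LINEAGE = {
    "stg_orders": ["fct_revenue"],
    "fct_revenue": ["revenue_dashboard", "analyst_agent"],
    "revenue_dashboard": [],
    "analyst_agent": [],
}


def downstream_dependents(lineage: dict[str, list[str]], changed: str) -> list[str]:
    """Breadth-first walk returning every asset downstream of `changed`."""
    seen = {changed}
    queue = deque([changed])
    impacted = []
    while queue:
        node = queue.popleft()
        for consumer in lineage.get(node, []):
            if consumer not in seen:  # guards against cycles and duplicates
                seen.add(consumer)
                impacted.append(consumer)
                queue.append(consumer)
    return impacted


print(downstream_dependents(LINEAGE, "stg_orders"))
```

The returned list is the set of agents, dashboards, and pipelines that would need a signal before the change ships.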

What is the 30-60 day sequence for closing a context vacuum?

- Week 1: Identify your five most-used KPIs and five datasets consumed by AI tools or executive dashboards. Assign temporary owners where ownership is unclear.

- Weeks 2–3: Document minimum context for each: definition, owner, source system, freshness expectation, caveats. Link each asset to related dashboards and pipelines.

- Weeks 4–6: Implement a lightweight change management process for critical metrics: propose, review, approve, announce, version. Require a lineage impact check before any significant transformation change.

- Ongoing: Track the number of clarification questions, duplicated dashboards, and metric-related incidents. Review monthly and expand to the next highest-impact domain.

Context vacuum in AI agent deployments

The arrival of agentic AI infrastructure has changed what a context vacuum costs. An analyst operating in a context vacuum loses time. An AI agent in a context vacuum produces wrong outputs faster than any human can catch them, and nothing flags that something is wrong.

Two infrastructure protocols now define how context reaches agents at the architectural level. MCP (Model Context Protocol) allows AI tools to access external context sources, including data catalogs, business glossaries, and lineage graphs, when a query runs rather than at setup time. When an organization’s metadata infrastructure is MCP-compatible, agents can pull active context (current definitions, current ownership, current freshness signals) directly from the catalog without manual assembly. This is the architectural difference between a context vacuum and a context-ready deployment.

A2A (Agent-to-Agent protocol) introduces a second context risk that most organizations have not yet addressed. When AI agents communicate with each other to complete multi-step tasks, each agent brings its own context window to the interaction. If those context windows contain inconsistent definitions, conflicting business rules, or outdated metadata, the agents will produce outputs that are internally consistent but factually wrong. Agent-to-agent hallucination chains are a new failure mode that emerges directly from context vacuum conditions at the protocol level.
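One inexpensive guard against this failure mode is to compare the definitions each agent carries before a multi-agent task starts. The following is a minimal Python sketch, not part of the A2A protocol itself: it assumes each agent's context can be exported as a plain mapping from term to definition, and the function `conflicting_terms` and the agent names are hypothetical.

```python
def conflicting_terms(
    agent_contexts: dict[str, dict[str, str]],
) -> dict[str, dict[str, str]]:
    """Return terms that two or more agents define differently.

    Input maps agent name -> {term: definition}.
    Output maps each conflicting term -> {agent name: definition}.
    """
    by_term: dict[str, dict[str, str]] = {}
    for agent, context in agent_contexts.items():
        for term, definition in context.items():
            by_term.setdefault(term, {})[agent] = definition
    return {
        term: definitions
        for term, definitions in by_term.items()
        if len(set(definitions.values())) > 1
    }


contexts = {
    "forecast_agent": {"arr": "booked ARR", "churn": "logo churn"},
    "reporting_agent": {"arr": "recognized revenue", "churn": "logo churn"},
}
print(conflicting_terms(contexts))
```

An exact string comparison is the simplest possible test; comparing version identifiers from a shared glossary would be more robust when definitions are versioned.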

Closing a context vacuum in an agentic AI environment requires metadata infrastructure that is not just documented but active: delivering definitions, lineage, ownership, and governance signals to agents continuously, propagating changes automatically, and remaining accessible through the protocols agents use to reason and communicate. A 2025 ModelOp benchmark found that 58% of enterprises cite fragmented governance systems as the top obstacle to AI deployment, which is exactly the infrastructure condition context vacuums produce.

How Atlan closes the context vacuum

Most AI and data teams don’t have a metadata problem. They have a metadata delivery problem. Definitions exist somewhere: scattered across Confluence, glossary tools, and slide decks. Lineage is partially documented. Ownership is on record. None of it reaches the agent when it needs to act, because the metadata infrastructure is passive: it stores information, it does not push it.

Atlan’s enterprise context layer changes the delivery model. Rather than requiring manual context assembly before each agent run, it connects technical metadata (schemas, lineage, usage signals) with business context (ownership, definitions, governance policies) and propagates that combination automatically. When a dbt model is updated, lineage signals update across connected downstream agents and dashboards; teams see the impact before a change ships, not only after something breaks. When a governance policy changes, integrated data platforms receive the update through Atlan’s active metadata propagation. The business glossary is accessible through Atlan’s MCP server at the moment agents need it, rather than sitting in a system they cannot reach.

The results are measurable. Kiwi.com reports a 53% reduction in central engineering workload for data documentation and governance as context assembly shifted from manual to automated. AI agent deployments that previously stalled at POC reach production because the demo-to-production degradation was caused by a context vacuum, and that vacuum has been closed. Data decisions become auditable because the metadata trail from output to source is maintained automatically, not reconstructed after the fact.

Build a context layer for your AI deployments.

Talk to the Atlan team

FAQs about context vacuum

How do I know if my team has a context vacuum?

The fastest diagnostic is the Context Vacuum Scorecard in this article. As a quick test, ask whether your AI agents can answer three questions without a human lookup: When was this data last refreshed? Who owns it? What does this metric mean for this specific team or use case? If any of those requires a human, you have at least a partial context vacuum.
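The three-question test can be expressed as a check over whatever metadata the agent can actually reach. This is a minimal Python sketch; the metadata keys `last_refreshed`, `owner`, and `definition` and the function `needs_human_lookup` are assumptions made for illustration, not the schema of any particular catalog.

```python
from datetime import datetime, timezone


def needs_human_lookup(metadata: dict) -> list[str]:
    """Return the quick-test questions the reachable metadata cannot answer."""
    checks = {
        "When was this data last refreshed?": metadata.get("last_refreshed"),
        "Who owns it?": metadata.get("owner"),
        "What does this metric mean?": metadata.get("definition"),
    }
    # A missing or empty value means a human would have to be asked.
    return [question for question, value in checks.items() if not value]


reachable = {
    "last_refreshed": datetime(2025, 6, 1, tzinfo=timezone.utc),
    "owner": "",  # ownership was never recorded
    "definition": "booked ARR",
}
print(needs_human_lookup(reachable))
```

Any non-empty result indicates at least a partial context vacuum for that asset.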

What causes a context vacuum in data teams?

Context vacuums form when metadata lives in disconnected systems (definitions in Confluence, ownership in Jira, lineage in a catalog, freshness in monitoring tools) and no active layer delivers that metadata to the AI agents and BI tools that need it when a query runs. The problem compounds as AI adoption accelerates faster than governance infrastructure matures.

How does a context vacuum affect AI agent performance?

AI agents operating in a context vacuum produce hallucinated outputs, fail to detect data staleness, and apply inconsistent definitions across queries. In production, this manifests as incorrect summaries, wrong metric values, and governance violations. Enterprise AI deployments that score 0–3 on the Context Vacuum Scorecard typically fail to scale beyond proof-of-concept.

What is the difference between a context vacuum and a data quality problem?

Data quality problems are about the accuracy of values: wrong numbers, duplicates, nulls. A context vacuum is about the accuracy of meaning: missing definitions, unknown ownership, absent lineage, stale business rules. Both cause bad decisions, but a context vacuum persists even when the underlying data is perfectly accurate. It is a metadata problem, not a data problem.

How does a context layer close a context vacuum?

A context layer is an active metadata infrastructure that delivers definitions, lineage, ownership, and freshness signals to AI agents and data tools as they query data, rather than storing metadata passively in a catalog. Closing a context vacuum requires connecting metadata to the systems consuming it: LLMs, BI tools, pipelines, and governance workflows, automatically and continuously.

Agents run at machine speed. The infrastructure has to keep up.

Context vacuum conditions are not a new problem. What is new is that agents act before anyone pauses to check. The organizations closing the vacuum are not waiting for the model to improve. They are building the infrastructure that gives the model something accurate to work with.