What does the EU AI Act risk classification framework mean for enterprise AI teams?

The AI Act, which entered into force on August 1, 2024, structures obligations around four risk tiers for trustworthy AI in the EU.

Understanding which tier your AI systems fall into determines every compliance decision that follows. The four tiers, from most to least restricted:

- Prohibited (Article 5): Systems that present an unacceptable threat to safety or fundamental rights, banned outright. Examples include social scoring systems, real-time remote biometric identification in publicly accessible spaces for law enforcement (with narrow exceptions), and AI that exploits vulnerabilities linked to age, disability, or social or economic situation.

- High-risk (Articles 8-15): Systems operating in sensitive domains where errors can harm health, safety, or fundamental rights. This is the category where the bulk of compliance obligations apply.

- Transparency risk (Article 50): Systems like chatbots and deepfake generators that carry disclosure obligations, so users know they are interacting with AI or viewing AI-generated content.

- Minimal to no risk: Most commercial AI applications fall here. No specific obligations apply, though voluntary codes of conduct exist.

What counts as high-risk?

AI systems used in the areas listed in Annex III are considered high-risk, unless a system does not pose a significant risk of harm to health, safety, or fundamental rights, for example because it does not materially influence the outcome of decision making (Article 6(3)). The eight areas are:

- Biometrics: Remote biometric identification systems and AI used for biometric categorization of natural persons.

- Critical infrastructure: AI used as a safety component in managing and operating critical digital infrastructure, road traffic, or the supply of water, gas, heating, or electricity.

- Education and vocational training: Systems that determine access to educational institutions or evaluate and assess students.

- Employment: AI used in recruitment, candidate screening, performance monitoring, and task allocation.

- Access to essential services: AI used to evaluate eligibility for public assistance benefits and healthcare services, assess creditworthiness, price life and health insurance, dispatch emergency first response services, or triage emergency healthcare patients.

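- Law enforcement: AI used to assess the risk of a person becoming a victim of crime, evaluate the reliability of evidence, assess the risk of offending or reoffending, or profile people in the course of criminal investigations.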

- Administration of justice and democratic processes: AI assisting judicial authorities in researching and interpreting facts and the law and in applying the law to facts, and AI intended to influence the outcome of elections or referendums.

- Migration: AI used for asylum, border control, or visa processing decisions.

The first practical compliance step is a thorough, documented portfolio assessment against Annex III, and against Annex I for AI embedded in regulated products such as medical devices and machinery.
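A portfolio assessment can start as a simple inventory pass. The sketch below is a minimal, hypothetical triage helper: the `AISystem` record, the domain tags, and the tier labels are assumptions made for illustration, and flagging a system as a high-risk candidate is only a prompt for legal review, not a classification under the Act.

```python
from dataclasses import dataclass, field

# Hypothetical domain tags for the eight Annex III areas.
ANNEX_III_AREAS = {
    "biometrics",
    "critical_infrastructure",
    "education",
    "employment",
    "essential_services",
    "law_enforcement",
    "migration",
    "justice_and_democracy",
}


@dataclass
class AISystem:
    """One entry in a hypothetical AI system inventory."""
    name: str
    domains: set[str] = field(default_factory=set)
    interacts_with_people: bool = False  # e.g. chatbots, generated content
    regulated_product: bool = False      # e.g. medical device (Annex I route)


def triage(system: AISystem) -> str:
    """Return a provisional tier label that routes the system to the right review.

    Screening heuristic only: final determination needs legal review,
    including the Article 6(3) derogation and the Article 5 prohibitions.
    """
    if system.regulated_product or system.domains & ANNEX_III_AREAS:
        return "high_risk_candidate"
    if system.interacts_with_people:
        return "transparency"
    return "minimal"


portfolio = [
    AISystem("cv-screener", {"employment"}),
    AISystem("support-chatbot", interacts_with_people=True),
    AISystem("demand-forecast"),
]
for s in portfolio:
    print(f"{s.name}: {triage(s)}")
```

The order of checks matters: a chatbot used for candidate screening is routed to high-risk review first, because transparency obligations do not replace the high-risk ones. Prohibited practices are deliberately left out; screening for Article 5 needs its own explicit review.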

What does the compliance timeline look like?

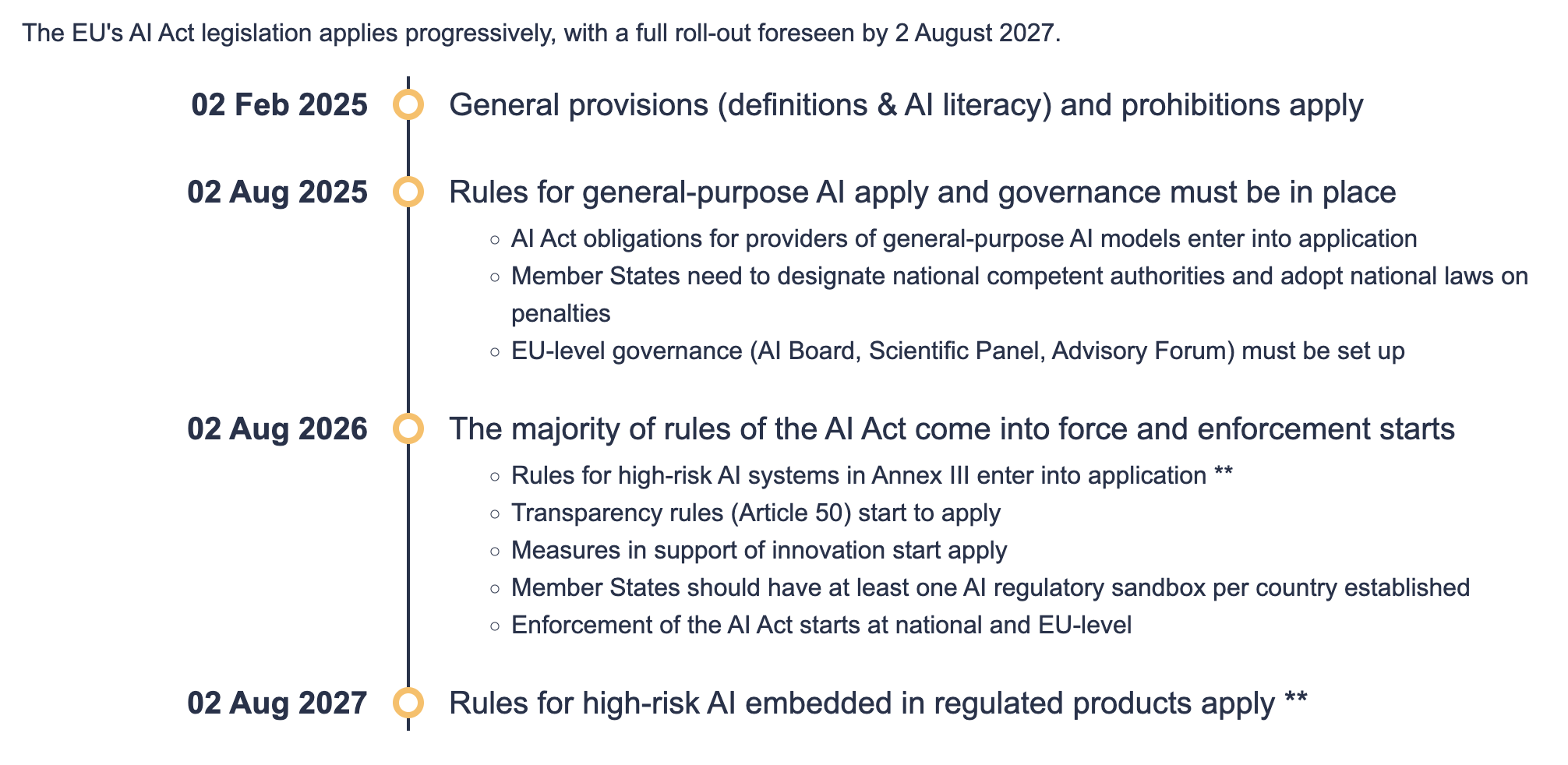

Permalink to “What does the compliance timeline look like?”The AI Act entered into force on August 1, 2024. For most enterprise AI teams, August 2026 is the operative deadline.

EU AI Act timeline. Source: AI Act Service Desk

Exceptions include:

- Prohibited AI practices and AI literacy obligations have applied since February 2, 2025.

- The governance rules and obligations for general-purpose AI models became applicable on August 2, 2025.

- High-risk AI systems embedded in regulated products (such as medical devices and machinery) have an extended transition period until August 2, 2027.
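To check which obligations already apply on a given date, the milestones can be encoded as data. This is a minimal sketch; the labels are informal shorthand for the dates summarized in this section, not legal text.

```python
from datetime import date
from typing import Optional

# Application dates summarized in this section (labels are informal shorthand).
MILESTONES = [
    (date(2024, 8, 1), "AI Act enters into force"),
    (date(2025, 2, 2), "Prohibited practices and AI literacy obligations"),
    (date(2025, 8, 2), "Governance rules and GPAI model obligations"),
    (date(2026, 8, 2), "Most remaining obligations, including Annex III high-risk systems"),
    (date(2027, 8, 2), "High-risk AI embedded in regulated products"),
]


def in_effect(on: date) -> list[str]:
    """Return every milestone whose application date is on or before `on`."""
    return [label for start, label in MILESTONES if start <= on]


def next_milestone(on: date) -> Optional[tuple[date, str]]:
    """Return the first milestone strictly after `on`, or None if all have passed."""
    upcoming = [(start, label) for start, label in MILESTONES if start > on]
    return upcoming[0] if upcoming else None


print(in_effect(date(2026, 1, 15)))
print(next_milestone(date(2026, 1, 15)))
```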

Why are the data governance requirements for EU AI Act compliance a context infrastructure problem?

Article 10 of the EU AI Act is a mandate for governed context infrastructure, not just data quality.

While the article requires training, validation, and testing data sets to be relevant, sufficiently representative, and, to the best extent possible, free of errors and complete in view of the intended purpose, it also demands: “Training, validation and testing data sets shall be subject to data governance and management practices appropriate for the intended purpose of the high-risk AI system.”

Each element of these requirements maps directly to a context layer capability:

- Relevance and representativeness: Requires documented canonical definitions of what constitutes a valid dataset for each use case — a business glossary function with canonical terms, owners, and review dates.

- Error-free and complete: Requires continuous data quality monitoring and lineage documentation showing where data originated and how it was processed.

- Bias detection and mitigation: Requires documented governance practices with records of bias checks performed and corrective actions taken.

- Data collection processes and origin: Requires data lineage at the asset level, including the original purpose of collection.

- Data governance and management practices: The operational infrastructure that governs what data an AI system can use, how that data is tracked, and what records exist when something goes wrong.
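One way to make this mapping operational is to treat each requirement as a piece of evidence that a dataset's governance record must carry. The sketch is hypothetical: the `DatasetGovernanceRecord` fields and the gap labels are illustrative assumptions, not terms defined in the Act.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class DatasetGovernanceRecord:
    """Hypothetical governance metadata for one training, validation, or test dataset."""
    name: str
    glossary_term: Optional[str] = None         # canonical definition of a valid dataset
    glossary_reviewed: Optional[date] = None    # last review date of that definition
    quality_checks_passing: bool = False        # latest quality monitoring result
    upstream_sources: list[str] = field(default_factory=list)  # lineage to origin
    collection_purpose: Optional[str] = None    # original purpose of collection
    bias_checks: list[str] = field(default_factory=list)       # bias checks performed


def missing_article_10_evidence(rec: DatasetGovernanceRecord) -> list[str]:
    """List evidence gaps, following the requirement-to-capability mapping above."""
    gaps = []
    if not (rec.glossary_term and rec.glossary_reviewed):
        gaps.append("relevance/representativeness: no reviewed canonical definition")
    if not rec.quality_checks_passing:
        gaps.append("error-free and complete: quality checks failing or absent")
    if not rec.bias_checks:
        gaps.append("bias: no recorded bias checks")
    if not (rec.upstream_sources and rec.collection_purpose):
        gaps.append("origin: lineage or collection purpose undocumented")
    return gaps


rec = DatasetGovernanceRecord(
    name="loan_applications_2024",
    glossary_term="Eligible loan application",
    glossary_reviewed=date(2025, 3, 1),
    upstream_sources=["crm.applications"],
)
for gap in missing_article_10_evidence(rec):
    print(gap)
```

Returning the gaps as a list, rather than a pass/fail flag, gives reviewers a concrete work queue per dataset.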

Where most teams are exposed

Teams that have invested in context infrastructure (governed metadata, lineage graphs, active data quality monitoring, canonical business definitions) are already partially compliant with Article 10.

Teams that haven’t are facing two problems simultaneously: they need to build the compliance evidence, and they need to build the underlying infrastructure first. Compliance cannot be retrofitted onto ungoverned context.

A sovereign context layer provides the operational workspace where teams document data lineage, maintain canonical definitions, run quality checks, and record the governance decisions that Article 10 requires as evidence.

Why do EU AI Act’s record-keeping requirements need more than inference monitoring?

Article 12 of the EU AI Act is a mandate for continuous, system-generated audit infrastructure. While it calls for high-risk AI systems to automatically record events over their lifetime, it also demands: “Logging capabilities shall enable the recording of events relevant for: identifying situations that may result in the high-risk AI system presenting a risk; facilitating post-market monitoring; and monitoring the operation of high-risk AI systems.”

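Drawing on Article 12(3), which sets minimum log contents for remote biometric identification systems, a practical logging scope covers: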

- Session records: The start and end date and time of each use.

- Configuration records: System state and model version at the time of each decision.

- Reference system records: The reference database against which input data has been checked by the system.

- Input system records: The input data for which the search has led to a match.

- Human intervention and ownership records: The identification of people involved in the verification of the results.
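A log entry covering these record types can be emitted as structured JSON at the end of each use. This is a minimal sketch, assuming a hypothetical `UsageLogRecord` schema with field names of our own choosing; Article 12 does not prescribe a format, and the identifiers in the example are made up.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class UsageLogRecord:
    """Hypothetical log entry covering the record types listed above."""
    session_start: datetime
    session_end: datetime
    model_version: str                 # configuration record
    reference_database: str            # database the input was checked against
    matched_input_ids: list[str] = field(default_factory=list)
    verified_by: list[str] = field(default_factory=list)  # people who verified results

    def to_json(self) -> str:
        data = asdict(self)
        # ISO 8601 timestamps keep the log sortable and unambiguous.
        data["session_start"] = self.session_start.isoformat()
        data["session_end"] = self.session_end.isoformat()
        return json.dumps(data, sort_keys=True)


record = UsageLogRecord(
    session_start=datetime(2026, 9, 1, 9, 0, tzinfo=timezone.utc),
    session_end=datetime(2026, 9, 1, 9, 4, tzinfo=timezone.utc),
    model_version="matcher-2.3.1",
    reference_database="watchlist_v42",
    matched_input_ids=["img-8812"],
    verified_by=["analyst.k.meyer", "analyst.j.ortiz"],
)
print(record.to_json())
```

The example lists two verifiers because, for remote biometric identification, Article 14(5) requires a match to be separately verified and confirmed by at least two natural persons before anyone acts on it.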

How standard inference logs fall short

Standard application logs and inference monitoring tools record what the model produced and how long it took. They don’t record what context the model operated on, what business definitions it applied, what data lineage backed those definitions, or what governance policies were active at decision time.

Why decision traces are the architectural answer

Decision traces capture how and why an AI system reached a decision: the reasoning path, the policies applied, the precedents referenced, and the data sources accessed at each step.

This connects regulatory requirements with a queryable organizational memory, rather than static audit documents. Unlike flat log files, decision traces are:

- Queryable: Regulators and auditors can retrieve the exact decision context for any flagged output without manual reconstruction.

- Structured: Each trace follows a consistent schema that maps to Article 12’s requirements for input data, database references, and human verification.

- Linked to lineage: Each data element in the trace connects back through the lineage graph to its source, so provenance is automatic rather than asserted.
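To illustrate what "queryable" and "linked to lineage" mean together, the sketch below stores traces keyed by output ID and walks a lineage graph back to source systems. The in-memory dictionaries, asset names, and `DecisionTrace` fields are hypothetical stand-ins for whatever trace store and lineage graph a team actually runs.

```python
from dataclasses import dataclass


@dataclass
class DecisionTrace:
    output_id: str
    policies: list[str]      # governance policies active at decision time
    assets_read: list[str]   # data assets the agent accessed


# Hypothetical lineage graph: asset -> upstream assets it was derived from.
LINEAGE = {
    "features.credit_profile": ["warehouse.customers", "warehouse.payments"],
    "warehouse.customers": ["crm.accounts"],
    "warehouse.payments": ["billing.transactions"],
}

TRACES = {
    "rec-001": DecisionTrace("rec-001", ["pii-masking-v3"], ["features.credit_profile"]),
}


def sources_of(asset: str) -> set[str]:
    """Walk the lineage graph upstream and return the root source assets."""
    upstream = LINEAGE.get(asset, [])
    if not upstream:
        return {asset}  # no parents recorded: treat as an origin system
    roots: set[str] = set()
    for parent in upstream:
        roots |= sources_of(parent)
    return roots


def provenance(output_id: str) -> dict[str, set[str]]:
    """Retrieve a trace by output ID and resolve each accessed asset to its sources."""
    trace = TRACES[output_id]
    return {asset: sources_of(asset) for asset in trace.assets_read}


print(provenance("rec-001"))
```

The recursion assumes the lineage graph is acyclic, which holds for typical derivation graphs; a production version would also guard against cycles and cache resolved paths.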

Why third-party AI deployments don’t transfer your compliance obligation

Article 26 of the EU AI Act makes the deployer obligation explicit, and purchasing a third-party AI platform doesn’t satisfy it.

A significant portion of enterprise AI deployment in 2026 runs on third-party platforms: Salesforce Agentforce, Google Agentspace, AWS Bedrock, Microsoft Copilot Studio. The assumption that compliance transfers with the contract is incorrect.

Article 26 requires deployers of high-risk systems to maintain logs themselves: “Keep the logs automatically generated by that high-risk AI system to the extent such logs are under their control… for a period that is appropriate to the intended purpose of the high-risk AI system, of at least six months.”
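The six-month floor is easy to get wrong when logs are purged after a fixed number of days. The sketch below computes the earliest date a deployer-held log could be deleted; it is a hypothetical helper that enforces only the Article 26 minimum, and longer periods required by the system's intended purpose or by other Union or national law would need to be layered on top.

```python
from calendar import monthrange
from datetime import date

MIN_RETENTION_MONTHS = 6  # Article 26 floor; the appropriate period may be longer


def add_months(d: date, months: int) -> date:
    """Add calendar months, clamping the day to the target month's length."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    day = min(d.day, monthrange(year, month)[1])
    return date(year, month, day)


def earliest_deletion(log_date: date, retention_months: int = MIN_RETENTION_MONTHS) -> date:
    """Earliest date a log may be purged, never shorter than the six-month minimum."""
    return add_months(log_date, max(retention_months, MIN_RETENTION_MONTHS))


print(earliest_deletion(date(2026, 8, 31)))     # 2027-02-28 (clamped to month end)
print(earliest_deletion(date(2026, 9, 1), 12))  # 2027-09-01 (longer, purpose-based period)
```

Counting in calendar months rather than days avoids purging a log a day or two early when the six months span short months.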

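Article 26 also requires deployers to assign human oversight to people with the necessary competence, training, and authority, regardless of which platform the agent runs on. To the extent the deployer exercises control over the input data, it must also ensure that data is relevant and sufficiently representative for the system's intended purpose.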

The context layer as evidence trail

When Salesforce Agentforce makes a credit recommendation, or an AWS Bedrock-powered agent screens a job application, the compliance question is not whether Salesforce or AWS is compliant. The question is whether your organization can demonstrate that the context those agents operated on was governed, representative, unbiased, and auditable.

A sovereign, vendor-agnostic context layer provides this evidence trail. It enforces access policies, ensures third-party agents access only governed and policy-compliant data, and generates automatic records of what was accessed and when.
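The enforcement pattern can be pictured as a gateway between any agent, first-party or third-party, and the data it requests. The sketch is hypothetical: the policy table, sensitivity labels, catalog entries, and `ContextGateway` class are illustrative and do not reflect any vendor's API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical policy: which sensitivity classes each use case may read.
POLICY = {
    "credit_recommendation": {"public", "internal", "financial"},
    "job_screening": {"public", "internal", "candidate"},
}

# Hypothetical catalog: asset -> (sensitivity class, governed?)
CATALOG = {
    "finance.credit_scores": ("financial", True),
    "hr.employee_health": ("health", True),
    "sandbox.scratch_table": ("internal", False),
}


@dataclass
class ContextGateway:
    access_log: list[dict] = field(default_factory=list)

    def request(self, agent: str, use_case: str, asset: str) -> bool:
        """Allow only governed assets permitted for the use case; log every attempt."""
        sensitivity, governed = CATALOG.get(asset, ("unknown", False))
        allowed = governed and sensitivity in POLICY.get(use_case, set())
        self.access_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "use_case": use_case,
            "asset": asset,
            "allowed": allowed,
        })
        return allowed


gw = ContextGateway()
gw.request("vendor-agent-1", "credit_recommendation", "finance.credit_scores")  # allowed
gw.request("vendor-agent-1", "job_screening", "hr.employee_health")            # denied: health data
gw.request("vendor-agent-1", "credit_recommendation", "sandbox.scratch_table")  # denied: ungoverned
```

Denied requests are logged too: a record of what an agent tried and failed to access is part of the evidence trail.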

How to comply with the EU AI Act: The practical context architecture that enterprise teams need

EU AI Act compliance needs a technical architecture that governs what AI systems know, what they are permitted to do, and what record exists of every decision they made.

This architecture has three core components:

- A governed context layer: Handles data quality monitoring, canonical definitions, column-level lineage, and bias documentation.

- An AI control plane: Oversees access control and guardrails that govern what agents are permitted to do.

- Decision traces: Generate automatic audit trails capturing core entities involved, context used, outcome, and provenance (full chain of custody).

Governed context: Satisfying Article 10

The context layer is where Article 10 compliance starts. It encompasses:

- Canonical business definitions: A managed business glossary with ownership, last-reviewed dates, and approval workflows. This satisfies Article 10’s requirement for documented data governance practices.

- Data lineage: End-to-end traceability from source system to AI retrieval pipeline. This satisfies both Article 10’s data origin requirements and Article 12’s requirement to record the reference database against which inputs were checked.

- Data quality monitoring: Continuous quality signals (completeness, freshness, accuracy, consistency) with documented thresholds and violation records. This demonstrates the error-free and complete standard Article 10 requires.

- Bias documentation: Records of bias detection methods applied, findings, and corrective actions taken.
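Quality signals only become evidence when the thresholds and the violations are recorded. A minimal sketch, assuming hypothetical threshold values and a dataset passed as a list of row dictionaries; it covers two of the four signals listed above, completeness and freshness, and leaves accuracy, consistency, and bias checks out.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass(frozen=True)
class Violation:
    """A recorded threshold breach, kept as audit evidence."""
    dataset: str
    check: str
    observed: float
    threshold: float
    recorded_at: datetime


def check_quality(
    dataset: str,
    rows: list[dict],
    required_columns: list[str],
    last_loaded: datetime,
    min_completeness: float = 0.98,  # hypothetical documented threshold
    max_age_hours: float = 24.0,     # hypothetical freshness threshold
    now: Optional[datetime] = None,
) -> list[Violation]:
    """Run completeness and freshness checks; return one Violation per breached threshold."""
    now = now or datetime.now(timezone.utc)
    violations = []

    # Completeness: share of required cells that are populated.
    cells = len(rows) * len(required_columns)
    filled = sum(1 for row in rows for col in required_columns if row.get(col) is not None)
    completeness = filled / cells if cells else 0.0
    if completeness < min_completeness:
        violations.append(Violation(dataset, "completeness", completeness, min_completeness, now))

    # Freshness: hours since the dataset was last loaded.
    age_hours = (now - last_loaded).total_seconds() / 3600
    if age_hours > max_age_hours:
        violations.append(Violation(dataset, "freshness_hours", age_hours, max_age_hours, now))

    return violations


now = datetime(2026, 9, 1, 12, 0, tzinfo=timezone.utc)
rows = [{"income": 52000, "age": 41}, {"income": None, "age": 35}]
found = check_quality("loan_applications", rows, ["income", "age"],
                      last_loaded=now - timedelta(hours=30), now=now)
for v in found:
    print(v.check, round(v.observed, 2), v.threshold)
```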

Without governed context, nothing downstream can satisfy the regulation’s requirements, because there is no documented evidence of what the AI knew or where that knowledge came from.

AI control plane for access control and guardrails: Satisfying Article 14

The AI control plane governs what agents are permitted to do, enforcing the human oversight requirements of Article 14 through:

- Access controls: Policy enforcement restricting agent access to specific datasets based on role, use case, and sensitivity classification.

- Guardrails: Constraints on agent behavior, such as requiring human review when confidence falls below a defined threshold or blocking certain classes of automated decisions.

- Human oversight mechanisms: Structured workflows that surface high-stakes decisions for human review, with records of who reviewed, what they decided, and why.

Providers must design their high-risk AI systems to allow deployers to implement human oversight. The AI control plane is where that design requirement becomes operational.
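The guardrail and review-workflow pattern can be sketched as a routing function plus a review record. Everything here is hypothetical: the 0.85 confidence threshold, the decision classes that always require a human, and the record fields are placeholders a team would set in its own policy. The sketch routes low-confidence and blocked-class decisions to a person.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

CONFIDENCE_THRESHOLD = 0.85                                 # hypothetical policy value
ALWAYS_HUMAN = {"loan_denial", "candidate_rejection"}       # never fully automated


@dataclass
class ReviewRecord:
    """Who reviewed a decision, what they decided, and why."""
    decision_id: str
    reviewer: str
    verdict: str       # e.g. "approved", "overridden"
    rationale: str
    reviewed_at: datetime


def route(decision_class: str, confidence: float) -> str:
    """Decide whether an agent's proposed decision may execute automatically."""
    if decision_class in ALWAYS_HUMAN:
        return "human_review"
    if confidence < CONFIDENCE_THRESHOLD:
        return "human_review"
    return "auto"


def record_review(decision_id: str, reviewer: str, verdict: str, rationale: str) -> ReviewRecord:
    """Capture the reviewer's decision with a UTC timestamp."""
    return ReviewRecord(decision_id, reviewer, verdict, rationale, datetime.now(timezone.utc))


print(route("loan_approval", 0.93))  # auto
print(route("loan_approval", 0.61))  # human_review
print(route("loan_denial", 0.99))    # human_review
review = record_review("dec-314", "credit.officer.7", "overridden", "Income documents outdated")
```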

Decision traces: Satisfying Article 12

Decision traces are the automatic logging capability the context layer generates for every agent action. A well-structured decision trace captures:

- Core entities: The data objects and assets the decision was built on, with lineage links.

- Context: The business definitions, quality states, and governance rules active at decision time.

- Outcome: The specific action or recommendation the agent produced.

- Provenance: The full chain of custody from data source through retrieval pipeline to decision output.
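A trace with these four parts can be represented as a small record. The sketch below assumes hypothetical field names and example values; it serializes the trace to JSON so it can be stored and queried, and rejects traces with an empty part rather than writing incomplete evidence.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class TraceRecord:
    core_entities: list[str]  # data assets the decision was built on
    context: dict             # definitions, quality states, rules active at decision time
    outcome: str              # action or recommendation produced
    provenance: list[str]     # ordered chain: source -> pipeline -> output

    def validate(self) -> None:
        """Raise ValueError if any of the four parts is empty."""
        for name, value in asdict(self).items():
            if not value:
                raise ValueError(f"decision trace is missing '{name}'")

    def to_json(self) -> str:
        self.validate()
        return json.dumps(asdict(self), sort_keys=True)


trace = TraceRecord(
    core_entities=["features.credit_profile"],
    context={
        "glossary": {"active_customer": "v7"},
        "quality": {"features.credit_profile": "passing"},
        "policies": ["pii-masking-v3"],
    },
    outcome="recommend: approve credit line increase",
    provenance=["crm.accounts", "warehouse.customers", "features.credit_profile",
                "retrieval:rag-pipeline-2", "agent:credit-advisor"],
)
print(trace.to_json())
```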

Architectural considerations for EU AI Act compliance: At a glance

| EU AI Act requirement | Technical component |
|---|---|
| Article 10: Data quality and governance | Governed context layer (lineage, glossary, quality monitoring) |
| Article 10: Bias documentation | Context layer bias records and quality signals |
| Article 12: Automatic event logging | Decision traces (queryable, structured, auto-generated) |
| Article 13: Transparency for deployers | Decision trace schema linked to lineage graph |
| Article 14: Human oversight | AI control plane (access controls, guardrails, review workflows) |
| Deployer obligation: Context accountability | Context layer access logs and governance records |

How Atlan operationalizes the context architecture needed for EU AI Act compliance

Atlan operationalizes each layer of this architecture:

- The Context Engineering Studio is where teams bootstrap canonical definitions, document lineage, record governance decisions, and generate decision traces.

- The Context Lakehouse maintains all information on primary producers and consumers of context across the full data estate.

- AI Governance acts as a single source of truth for all AI models and applications, auto-discovering deployed systems and enforcing access controls.

- The Data Quality Studio maintains continuous data quality signals and bias documentation.

Moving forward with EU AI Act compliance

Meeting the EU AI Act’s compliance checklist requires redesigning your infrastructure. Governing the context your AI operates on, generating automatic and queryable decision traces, and enforcing access control through a structured AI control plane: these are the technical building blocks the Act is describing, even if it does not use those terms.

Teams that have invested in context governance have a head start. Teams that have not should treat the compliance deadline as the forcing function it is — not a documentation sprint, but a mandate to build the infrastructure that makes high-risk AI trustworthy by design.

Atlan’s sovereign, vendor-agnostic enterprise context layer provides the foundation to make compliance demonstrable rather than merely claimed.

FAQs about EU AI Act compliance

1. What is the EU AI Act and who does it apply to?

The EU AI Act is a risk-based legal framework governing AI systems used in the European Union. It applies to providers and deployers regardless of where they are based, as long as the AI system’s output is used within the EU. The bulk of obligations fall on providers and deployers of high-risk AI systems as defined in Annex III and Annex I of the regulation.

2. When does EU AI Act compliance become mandatory?

Core compliance obligations for most high-risk AI systems apply from August 2, 2026. Prohibited practices have been banned since February 2, 2025. Governance rules for general-purpose AI models have applied since August 2, 2025. High-risk AI systems embedded in regulated products such as medical devices have an extended deadline of August 2, 2027.

3. What makes an AI system “high-risk” under the EU AI Act?

An AI system is classified as high-risk if it is used in one of the eight areas listed in Annex III: biometrics, critical infrastructure, education, employment, access to essential services (including credit scoring and life and health insurance pricing), law enforcement, migration, and administration of justice and democratic processes. Systems used as safety components in regulated products under Annex I, such as medical devices, are also high-risk. The determining factor is not sector but function: if the system materially influences a consequential decision about a person, it likely qualifies.

4. Does using a third-party AI platform mean my organization is automatically compliant?

No. The deployer obligations under the EU AI Act remain with your organization regardless of which platform you use. You must be able to demonstrate that the context the agent operated on was governed and auditable, that human oversight was in place, and that you kept the automatically generated logs under your control, as Article 26 requires. The platform vendor’s compliance certification does not extend to the data governance practices of organizations deploying on top of that platform.

5. What do Article 12 logging requirements mean in practice for AI teams?

Article 12 requires high-risk AI systems to automatically generate event logs over their full operational lifetime. These logs must allow reconstruction of any decision the system made: what data was used, which database was referenced, what the outcome was, and who verified it. Manually assembled logs, PDF audit trails, and inference-layer monitoring tools do not satisfy these requirements. The logging must be system-generated and retrievable on demand.

6. What is the difference between an inference log and a decision trace for compliance purposes?

An inference log records what a model produced, how quickly, and at what cost. A decision trace captures the reasoning layer: what data the agent accessed, what business definitions applied, what governance policies were active, and what the agent decided and why. Article 12 requires both dimensions, but most teams only have inference logs. Decision traces are what close that gap.

7. What are the penalties for EU AI Act non-compliance?

Fines for violations involving prohibited AI practices can reach 35 million euros or 7% of global annual turnover, whichever is higher. Violations of obligations for high-risk AI systems can result in fines of up to 15 million euros or 3% of global annual turnover, whichever is higher. Supplying incorrect, incomplete, or misleading information to notified bodies or national competent authorities carries fines of up to 7.5 million euros or 1% of global annual turnover, whichever is higher.
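Because the cap is the higher of a fixed amount and a share of turnover, it scales with company size. A minimal sketch of that arithmetic using the three fine levels described here; it computes only the statutory maximum (actual fines are set case by case, and the Act applies a "whichever is lower" rule to SMEs and start-ups, which this sketch ignores). The tier keys are hypothetical labels.

```python
# Fixed caps (EUR) and turnover percentages for the three fine levels.
FINE_TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "high_risk_obligation": (15_000_000, 3),
    "incorrect_information": (7_500_000, 1),
}


def max_fine(tier: str, global_turnover_eur: int) -> int:
    """Upper bound of the fine: fixed amount or turnover share, whichever is higher."""
    fixed_cap, percent = FINE_TIERS[tier]
    # Integer arithmetic avoids floating-point rounding on large turnovers.
    return max(fixed_cap, global_turnover_eur * percent // 100)


print(max_fine("prohibited_practice", 2_000_000_000))  # 140000000: 7% exceeds EUR 35M
print(max_fine("high_risk_obligation", 200_000_000))   # 15000000: exceeds 3% (EUR 6M)
```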