Every CDO says they’re building toward being AI-native. But almost none can define what that actually looks like, let alone where they are on the journey.

That’s a definitional problem, not a leadership one. “AI-native” has become an arbitrary destination without coordinates. Organizations announce AI programs, hire CAIOs, and deploy agents, then watch the results erode. As accuracy drops, teams lose confidence. Pilots that worked in controlled settings break in production. Something is off, but it’s hard to put a finger on exactly what, let alone figure out how to start fixing it.

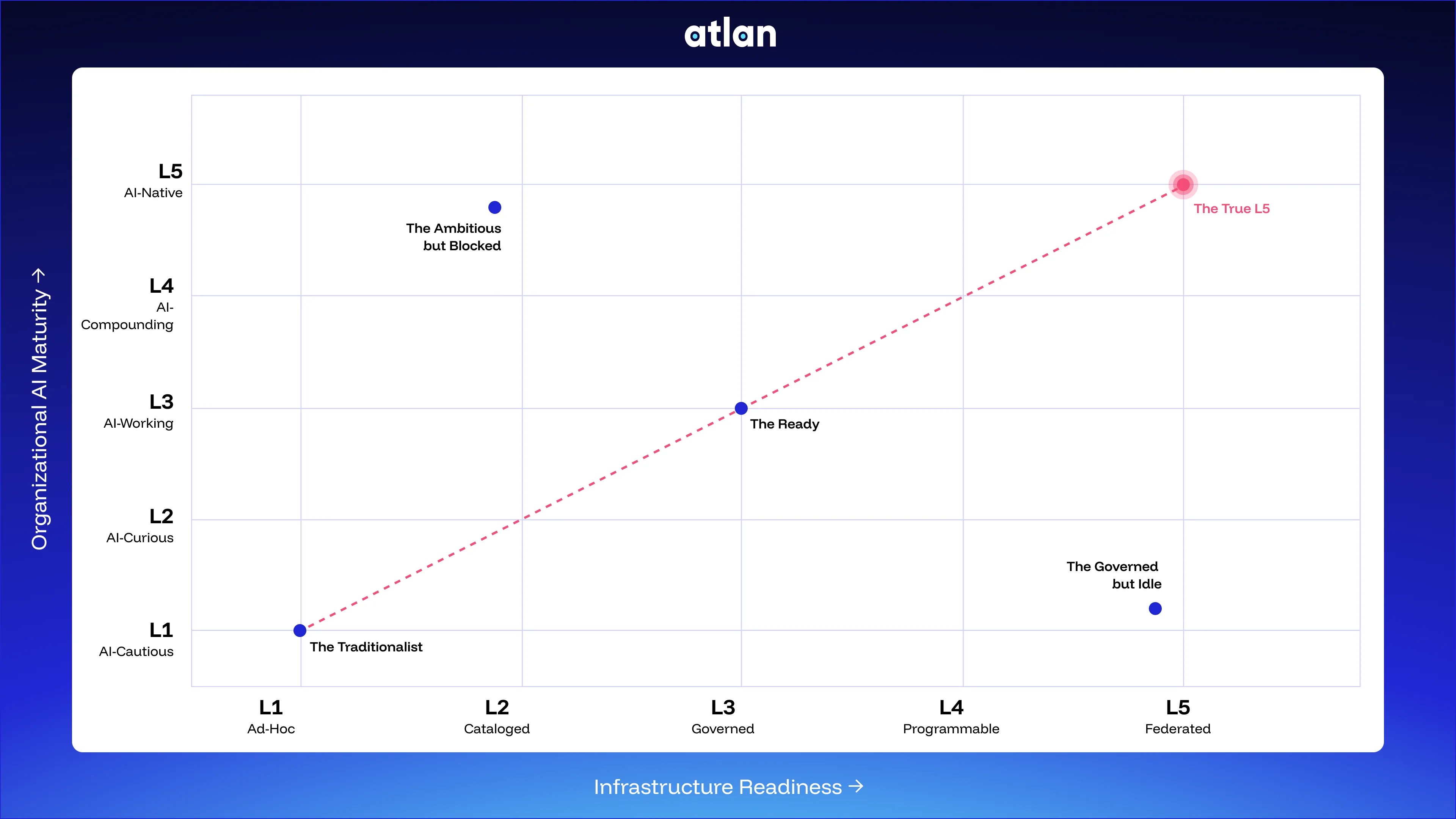

AI maturity isn’t one-dimensional. It has two axes, and you need to understand where you fall on both.

The two axes of AI maturity

Most frameworks treat AI readiness as a single spectrum: you’re either ahead or behind. But organizational ambitions and infrastructure capabilities are independent variables that can diverge significantly.

A company can have a CAIO, a board mandate, and a dozen deployed agents, while still running on infrastructure built in 2019. A company can have 18 million cataloged assets and rigorous governance, but no AI mandate to activate any of it.

You need to answer two questions to truly understand where you fall on the AI maturity matrix: Where is your organization on the AI maturity curve, and where is your data infrastructure?

Axis 1: Organizational AI Maturity

How AI-native is the company as an organization? This encompasses its strategy, staffing, and what it’s actually shipping.

| Level | Label | What it looks like |
|---|---|---|
| L1 | AI-Cautious | No AI roadmap. Traditional analytics-first. Status quo is comfortable. |
| L2 | AI-Curious | ChatGPT Enterprise active. Pilots underway. No agentic use cases in production. |
| L3 | AI-Working | One to three agents in production. An AI leader emerging. Stuck in testing. |
| L4 | AI-Compounding | Ten or more agents live, hundreds in development. CAIO or equivalent. Board-level mandate. |
| L5 | AI-Native | AI is the business model. Not “uses AI”; the product is AI. |

Axis 2: Infrastructure Readiness

How AI-ready is the data estate? This involves governance, lineage, semantic clarity, and how context reaches agents.

| Level | Label | What it looks like |
|---|---|---|
| L1 | Ad hoc | No catalog. Manual pipelines. No governance. |
| L2 | Cataloged | Centralized storage. Basic catalog. Limited discoverability. |
| L3 | Governed | Governed metadata, automated lineage, semantic definitions. Minimum viable for production AI. |
| L4 | Programmable | Policy-aware pipelines. Column-level access control. Real-time context APIs. MCP delivery. |
| L5 | Federated | Multi-agent orchestration. Federated metadata across domains. Automated freshness SLAs. Context that compounds over time. |

The aligned journey looks like this: L1/L1 → L2/L2 → L3/L3 → L4/L4 → L5/L5. Each axis reinforces the other. But almost no organization follows that path.

The archetypes: Where most organizations actually land

| Archetype | Org level | Infra level | The tell | The risk |
|---|---|---|---|---|
| The Traditionalist | L1 | L1 | No AI roadmap, no modern stack | Talent attrition as competitors pull practitioners |
| The Ambitious but Blocked | L4–5 | L1–2 | “We’ve killed three agent projects” | Agent failures erode program credibility |
| The Governed but Idle | L1–2 | L4–5 | “Our catalog exists; no one uses it” | Infrastructure depreciates without activation |
| The Ready | L3 | L3 | “Our first agent took nine months” | Stuck in testing, can’t scale |
| The True L5 | L5 | L5 | AI is the product | N/A: this is the destination |
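To make the table concrete, here is a minimal Python sketch that maps a pair of levels to an archetype. The function name `classify_archetype` is hypothetical, and returning `None` for pairs the table doesn’t name (such as L2/L3) is an assumption of this sketch, not part of the framework.

```python
from typing import Optional


def classify_archetype(org_level: int, infra_level: int) -> Optional[str]:
    """Map (org level, infra level) to an archetype from the table.

    Returns None for combinations the table does not name; that
    fallback is an assumption of this sketch.
    """
    for level in (org_level, infra_level):
        if not 1 <= level <= 5:
            raise ValueError("levels must be between 1 and 5")

    if org_level == 1 and infra_level == 1:
        return "The Traditionalist"
    if org_level >= 4 and infra_level <= 2:
        return "The Ambitious but Blocked"
    if org_level <= 2 and infra_level >= 4:
        return "The Governed but Idle"
    if org_level == 3 and infra_level == 3:
        return "The Ready"
    if org_level == 5 and infra_level == 5:
        return "The True L5"
    return None
```

For example, `classify_archetype(4, 1)` returns `"The Ambitious but Blocked"`, while an unnamed pair such as `classify_archetype(2, 3)` returns `None`.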

The Traditionalist (L1 org / L1 infra)

No AI strategy. No modern infrastructure. Legacy analytics, manual reporting, governance-by-spreadsheet. This doesn’t mean the organization is complacent. It’s just as possible that the data team hasn’t had upward support to modernize, or that the board simply hasn’t asked yet.

The risk is twofold: being at a competitive disadvantage and becoming a talent desert. As AI-working organizations pull data practitioners with modern mandates and tooling, L1 organizations find that recruiting becomes harder every quarter.

The Ambitious but Blocked (L4–5 org / L1–2 infra)

Big AI mandate. CAIO hired. Board-level pressure. Agents shipped. Results that are inconsistent, and getting worse.

For these companies, the infrastructure can’t support what the organization wants to build. Agents return different answers to the same question depending on which data they happened to query. Every new agent requires rebuilding context from scratch. Different teams have different definitions of the same metric, and agents have no way to arbitrate.

The tell: “We’ve had to kill three agent projects because we couldn’t trust the outputs.” Or: “Every agent team is manually wiring their own context.”

This archetype generates the most public announcements and the most private frustration. Published research puts AI accuracy without proper context grounding at 10–31%. With governed context in place, that number jumps to 94–99%. That gap is an infrastructure problem.

The Governed but Idle (L1–2 org / L4–5 infra)

These companies have world-class data governance, millions of cataloged assets, column-level lineage, and certified definitions across the enterprise. But agentic use cases? Nonexistent.

The governance investment was built for compliance, regulatory reporting, or legacy data quality programs, not AI. The infrastructure is mature, but the org mandate isn’t.

The tell: “We have a full data catalog, our teams just don’t use it.” Or: “Governance is strong, but we don’t have an AI strategy yet.”

This is expensive: governed data that no agent uses is just documentation, and the catalog depreciates without activation.

The Ready (L3 org / L3 infra)

This is the most common position for enterprises investing in a context layer. They have a modern data stack, some agents in production, an AI leader, and the minimum viable foundation for AI: governed metadata and automated lineage. But something is still blocking scale.

Agents that worked in demos and pilots keep breaking in production. Different teams get different answers to the same business question. Standing up a new agentic use case requires months of context work before the team can even start evaluating accuracy. This is the context bootstrapping problem that keeps teams from even reaching the starting line.

The tell: “It took nine months to build the context for our first agent. We don’t know how to scale that.”

One public media company reached exactly this wall: nine months of manual context work for an AI accuracy rate of 10%. But after building a proper context layer and running five iterations, they hit 90%. All that changed was the context infrastructure, not the underlying model.

The True L5 (L5 org / L5 infra)

For L5 organizations, AI is the business model and the product is the agent. Their infrastructure is built to sustain that at scale.

These teams treat context as a first-class asset: governed, federated, machine-readable, and compounding. Every new agent starts smarter than the last because context propagates from shared infrastructure. The context layer is core infrastructure, the same way the database is.

The competitive moat isn’t the model, because models commoditize. The moat is the context the organization has built and governed over time. That intelligence compounds in ways that other organizations can’t replicate.

The key insight: Most organizations are off-diagonal

Pull too far ahead on the organizational axis without the infrastructure to match, and you burn credibility. Agents fail, AI programs get walked back, and trust in the whole initiative collapses.

Pull too far ahead on the infrastructure axis without the org mandate to activate it, and you burn budget. The governance investment sits, and the catalog becomes a graveyard of documented assets no one queries.

Getting to L5 isn’t about advancing on one axis as fast as possible. It’s about staying close to the diagonal: advancing both, roughly in parallel.

Organizations that recognize they’re off-diagonal in either direction and correct for it move faster than organizations that keep doubling down on the axis where they’re already ahead. The key is to periodically review where the company is, and take intentional steps to fix misalignments.
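One way to make that periodic review mechanical is to measure the gap between the two axes. The sketch below is illustrative only: the function name `next_investment` and the `tolerance` of one level (a reading of “close to the diagonal”) are assumptions, not thresholds defined by the framework.

```python
def next_investment(org_level: int, infra_level: int, tolerance: int = 1) -> str:
    """Suggest which axis to invest in next.

    A positive gap means the organization is ahead of its infrastructure;
    a negative gap means the infrastructure is ahead of the organization.
    `tolerance` is an assumed threshold for "close to the diagonal".
    """
    gap = org_level - infra_level
    if gap > tolerance:
        return "infrastructure"  # org-ahead: agents outrun the data estate
    if gap < -tolerance:
        return "organization"    # infra-ahead: governance without a mandate
    return "both"                # near the diagonal: advance in parallel
```

Under these assumptions, an L4 org on L1 infrastructure gets `"infrastructure"`, an L2 org on L5 infrastructure gets `"organization"`, and an L3/L3 organization gets `"both"`.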

How to move up the diagonal

Phase 1: L1–2 → L3. Build the foundation.

Document assets across your data estate automatically, not manually. Establish column-level lineage to source. Define business semantics, like what “revenue” means in your organization. Make sure your definitions are clear and enforced everywhere — this is where data quality standards become load-bearing.
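As a rough illustration of why enforced definitions matter, the Python sketch below flags terms that different teams define differently. The tuple layout, the team names, and the function `find_conflicting_definitions` are all hypothetical, and comparing normalized expression strings is a deliberate simplification.

```python
from collections import defaultdict


def find_conflicting_definitions(definitions):
    """Return terms whose definitions differ across teams.

    `definitions` is an iterable of (team, term, expression) tuples.
    Expressions are compared after lowercasing and collapsing whitespace,
    a simplification that only catches textual differences.
    """
    by_term = defaultdict(dict)
    for team, term, expression in definitions:
        normalized = " ".join(expression.lower().split())
        by_term[term.lower()][team] = normalized

    # Keep only the terms that have more than one distinct definition.
    return {
        term: teams
        for term, teams in by_term.items()
        if len(set(teams.values())) > 1
    }


definitions = [
    ("finance", "revenue", "SUM(invoice_amount)"),
    ("sales", "revenue", "SUM(booking_amount)"),
    ("finance", "churn", "lost / total"),
    ("ops", "churn", "Lost /  total"),
]
conflicts = find_conflicting_definitions(definitions)
```

Here `conflicts` contains only `"revenue"`, because the two “churn” expressions differ only in case and spacing.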

On the org side, identify one AI leader with budget, ship one agent into production, and start learning what breaks at scale.

Phase 2: L3 → L4. Make context programmable.

Move from documented metadata to delivered metadata. Context should flow to agents through APIs, MCP, and SQL, not through manual handoffs or one-off engineering work. Implement attribute-based access control so agents can query context at scale without working around governance.
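A minimal sketch of attribute-based access control for agents might look like the following. The attribute names (`domain`, `clearance`, `allowed_domains`, `sensitivity`) and the policy itself are assumptions chosen for illustration; a real deployment would define its own attributes and rules.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class AgentAttributes:
    domain: str      # hypothetical: business domain the agent serves
    clearance: int   # hypothetical: higher means more sensitive access


@dataclass(frozen=True)
class AssetAttributes:
    name: str
    allowed_domains: FrozenSet[str]
    sensitivity: int


def can_read(agent: AgentAttributes, asset: AssetAttributes) -> bool:
    """Allow access only when both attribute checks pass."""
    return (
        agent.domain in asset.allowed_domains
        and agent.clearance >= asset.sensitivity
    )


def visible_context(agent: AgentAttributes, assets: List[AssetAttributes]) -> List[str]:
    """Return the names of the assets this agent may query."""
    return [asset.name for asset in assets if can_read(agent, asset)]
```

The point of the design is that the decision depends on attributes of the agent and the asset rather than on a per-agent allow list, so adding a new agent does not require new grants.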

Automate freshness tracking so agents know when data was last verified. Build a Context Engineering practice that gives someone ownership over the layer between your data estate and your agents.

On the org side, get three to five agents into production. At this point, the AI leader should be elevated to a CAIO or VP-equivalent role, legitimizing the context layer so that it’s a line item, not a project.

“Atlan captures Workday’s shared language to be leveraged by AI via its MCP server. As part of Atlan’s AI Labs, we’re co-building the semantic layer that AI needs.” — Joe DosSantos, VP Enterprise Data and Analytics, Workday

Phase 3: L4 → L5. Scale to autonomous.

Federate context across domains. Product, marketing, finance, and operations each need governed context that’s also portable, not siloed per team. Enabling agent-to-agent context sharing also ensures that context is propagated across agent boundaries, powering multi-agent orchestration.

Automate freshness SLAs to avoid agent failure when data drifts. The infrastructure should ensure that stale context doesn’t reach agents. Corrections propagate and each agent run makes the next one smarter, creating a compounding effect.
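The freshness gate described above can be sketched in a few lines of Python. The `ContextItem` fields and the per-item `max_age` SLA are hypothetical; the sketch assumes each piece of context records when it was last verified.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class ContextItem:
    name: str
    last_verified: datetime  # timezone-aware timestamp of last verification
    max_age: timedelta       # hypothetical per-item freshness SLA


def is_fresh(item: ContextItem, now: datetime) -> bool:
    """An item is fresh if it was verified within its SLA window."""
    return now - item.last_verified <= item.max_age


def split_by_freshness(
    items: List[ContextItem], now: Optional[datetime] = None
) -> Tuple[List[ContextItem], List[ContextItem]]:
    """Separate context that may reach agents from context that needs re-verification."""
    now = now or datetime.now(timezone.utc)
    fresh = [item for item in items if is_fresh(item, now)]
    stale = [item for item in items if not is_fresh(item, now)]
    return fresh, stale
```

Only the `fresh` list would be served to agents; the `stale` list is a natural work queue for re-verification.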

“With Atlan, we cataloged over 18 million assets and 1,300-plus glossary terms in our first year, so teams can trust and reuse context across the exchange.” — Kiran Panja, Managing Director, CME Group

On the org side, make AI a board-level metric and adopt an AI-first product strategy. The question shouldn’t be whether to use AI, but what to build next.

Where the context layer fits

The context layer is what moves organizations up the diagonal. It’s the active infrastructure between your data estate and your AI agents where context is governed, enriched, tested, and shipped to any agent platform.

For L3/L3 organizations stuck in testing, a context layer turns governed metadata into programmable context that agents can consume at scale, through MCP, API, or SQL. Accuracy goes from untrustworthy to production-grade.

For organizations running org-ahead, the context layer allows the infrastructure to catch up to the ambition without slowing the AI program down.

For organizations running infra-ahead, it activates what’s already there. Governed metadata that’s been documented for compliance can be reverse-engineered into context that agents can actually use.

If you have agents in production — or agents planned — a context layer should be non-negotiable. The question is how long you’re willing to keep building without it.

If you’re at L3 or L4 and want to understand exactly where your organization sits, talk to our team.

What reaching AI-native status requires

L5 isn’t the most ambitious AI announcement. It’s not the most agents in flight. It isn’t even the most advanced model.

It’s the organization where AI and infrastructure grow together. It’s where each new agent makes the next one smarter, where context compounds instead of fragmenting into silos, and where the infrastructure that supports agents is treated as seriously as the strategy that mandates them.

Most organizations are off-diagonal. The ones making real progress toward being AI-native are those that have figured out that the path runs straight up the middle, with neither axis too far ahead of the other.

The architecture underneath your agents matters as much as the agents themselves. Aligning it with your organizational maturity and readiness creates the engine you need to reach AI-native status.