An AI registry is the central record of every AI system your company runs. It covers trained models, bought-in agents, internal copilots, and the AI features your SaaS vendors activated last quarter. For each item, it records who owns the system, what data feeds it, how risky it is, and where it stands with regulators. The stakes are already visible: IBM’s 2025 Cost of a Data Breach Report found that 1 in 5 organizations has experienced a breach linked to shadow AI, meaning systems that never appeared on any list.

Without an AI registry, the gap shows up in ordinary meetings. Picture a routine governance review. Midway through, the CDO asks how many AI agents the company is running. Security guesses one number. Data engineering offers a different one. Legal stays quiet because no one ever gave legal a list. Three people, three answers, none of them defensible.

An AI registry prevents that from happening again. It puts every AI system in one place, with the owner, the risk tier, and the regulatory status attached to each entry.

AI registry quick facts

| Attribute | Detail |
|---|---|
| What it is | A live catalog of all AI models, agents, and use cases across an enterprise |
| Key benefit | One place to find an AI system’s owner, risk classification, and compliance status |
| Best for | Enterprises with multiple AI deployments preparing for EU AI Act, NIST RMF, or ISO/IEC 42001 |
| Core components | Model metadata, ownership, risk tier, data inputs, deployment environment, audit history |
| Who uses it | CISOs, Chief Compliance Officers, Heads of AI Governance, data governance teams, legal, and risk |
| Regulatory alignment | EU AI Act (Articles 12, 49, 71), NIST AI RMF (GOVERN, MAP), ISO/IEC 42001 |

What does an AI registry do?

An AI registry is a continuously updated record of all AI systems within an enterprise. It covers models, agents, and use cases. Each entry includes the owner, risk classification, data lineage, and compliance status. Governance teams use the registry to answer audit questions and to demonstrate compliance with the EU AI Act and alignment with the NIST AI Risk Management Framework.

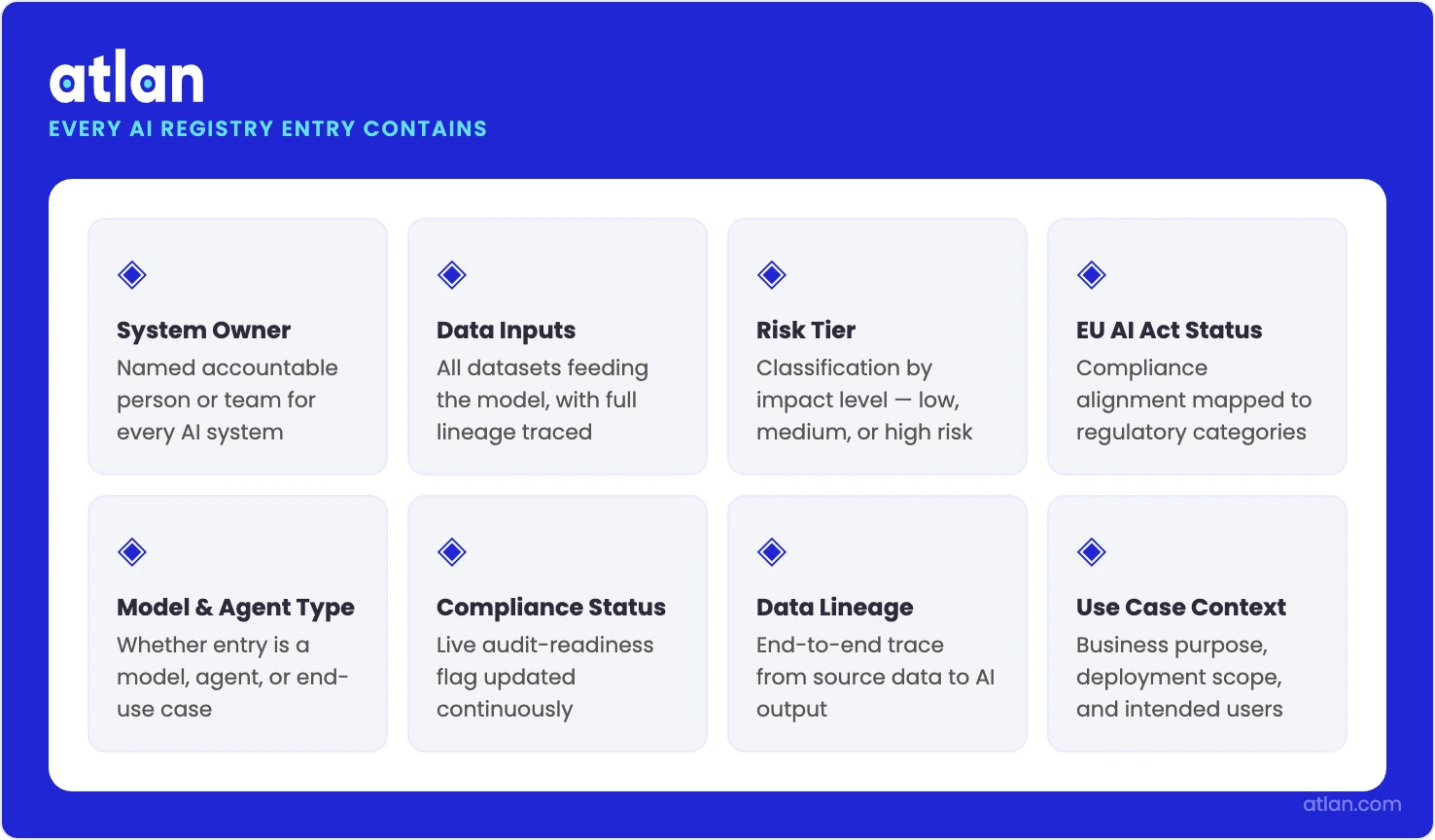

The central record of every AI system, model, and agent your company runs. Source: Atlan.

An AI registry:

- Records every AI model, agent, and automated use case the enterprise has deployed

- Tracks ownership, risk class, data inputs, and regulatory status for each system

- Produces audit-ready answers when regulators or internal auditors come asking

- Surfaces shadow AI by flagging systems with no documented owner or risk review

- Connects each AI entry to the data it consumes through column-level lineage

The same IBM report adds another data point: 97% of the organizations that experienced an AI-related security incident lacked proper AI access controls. The registry exists to close that gap.

A working registry answers three questions a board or regulator can ask on a random Wednesday. What AI are we running? Who owns each piece? What risk does each one carry? Without a registry, those answers are scattered across engineering tickets, vendor contracts, and Slack threads that were never built for compliance.
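Once the registry is structured data rather than scattered notes, each of those three questions becomes a simple query. The sketch below is a minimal illustration, assuming a hypothetical in-memory `RegistryEntry` with only four fields; a real registry would live in a database or catalog and carry the full schema. It also includes the shadow-AI check: any entry missing an owner or a risk review is surfaced for triage.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

@dataclass
class RegistryEntry:
    system_id: str
    kind: str                  # "model", "agent", "use_case", or "vendor_feature"
    owner: Optional[str]       # None means no documented owner
    risk_tier: Optional[str]   # None means the system has never had a risk review

def what_are_we_running(registry: List[RegistryEntry]) -> Dict[str, List[str]]:
    """Question 1: every AI system on record, grouped by kind."""
    by_kind: Dict[str, List[str]] = {}
    for entry in registry:
        by_kind.setdefault(entry.kind, []).append(entry.system_id)
    return by_kind

def who_owns(registry: List[RegistryEntry]) -> Dict[str, Optional[str]]:
    """Question 2: the documented owner of each system (None if undocumented)."""
    return {e.system_id: e.owner for e in registry}

def what_risk(registry: List[RegistryEntry]) -> Dict[str, Optional[str]]:
    """Question 3: the risk tier of each system (None if never reviewed)."""
    return {e.system_id: e.risk_tier for e in registry}

def shadow_ai_candidates(registry: List[RegistryEntry]) -> List[str]:
    """Systems with no documented owner or no risk review: triage these first."""
    return [e.system_id for e in registry if e.owner is None or e.risk_tier is None]

registry = [
    RegistryEntry("churn-model", "model", "data-science@example.com", "minimal"),
    RegistryEntry("support-agent", "agent", None, "limited"),
    RegistryEntry("crm-ai-summaries", "vendor_feature", "sales-ops@example.com", None),
]
print(what_are_we_running(registry))
print(shadow_ai_candidates(registry))  # ['support-agent', 'crm-ai-summaries']
```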

A spreadsheet built once and forgotten will not survive 2026. Gartner expects more than 40% of enterprises to face security or compliance incidents linked to unauthorized shadow AI by 2030. Static lists go stale in weeks. The registries that hold up are the ones plugged into the same observability and metadata systems that already show what is running in production.

AI registry vs. model registry vs. agent registry: understanding the difference

Three different things share this name. Confusing them is one of the more expensive mistakes in AI governance.

A model registry is what data scientists use to determine which version of a model is in production. It holds training metrics, deployment lineage, and the rest of the standard MLOps furniture.

An agent registry is newer ground. It records autonomous agents, the permissions they hold, and the actions they may take without human sign-off.

The enterprise AI registry sits on top of both of those. It carries the business context, risk classification, and regulatory paperwork that compliance, legal, and governance teams need across the entire AI portfolio.

A useful test is to walk a CDO, an ML platform lead, and a CISO into the same procurement meeting. Ask each one to describe their ideal AI registry. All three will use the phrase. None of them will be picturing the same product. The CDO has a unified inventory built on the data catalog in mind. The ML platform lead is thinking of a versioned model artifact store wired into MLflow. The CISO sees an asset inventory built from network telemetry, OAuth scans, and browser monitoring. Nobody is wrong on their own terms. The trouble starts when the company tries to buy “an AI registry” without working out which of the three it actually needs.

The table makes the differences concrete.

| Dimension | AI model registry | AI agent registry | Enterprise AI registry |
|---|---|---|---|
| Scope | ML and DL models with versioned artifacts | Autonomous software agents and their decision logic | All AI systems: models, agents, use cases, and workflows |
| Primary user | Data scientists, ML engineers, MLOps teams | Platform engineers, AI product managers | CISOs, Compliance Officers, CDOs, and AI Governance leads |
| What it tracks | Model version, metrics, training data, lineage | Agent identity, permissions, data access, action scope | Ownership, risk tier, data inputs, regulatory classification |
| Governance level | Technical (experiment tracking, deployment gates) | Operational (runtime behavior, access controls) | Enterprise (risk, compliance, audit, policy enforcement) |
| Regulatory relevance | Indirect; supports training data documentation | Moderate; an agent's EU AI Act risk tier depends on its use case | Direct; maps to EU AI Act Annex IV, NIST GOVERN, ISO 42001 |
| Example tools | MLflow, Weights & Biases, SageMaker Model Registry | Emerging category, often custom-built or platform-specific | Atlan AI Governance, ServiceNow, purpose-built catalogs |

The terminology overlap creates governance failures. Teams with a mature MLOps stack often assume their model registry already covers compliance. It does not. A model registry can answer “which version of this is in production?” It cannot tell you who approved the model, what personal data it touches, or whether it qualifies as high-risk under the EU AI Act. Those answers live in the enterprise registry.

Agentic AI makes this picture messier. Autonomous agents are spreading through customer service, marketing, and internal tooling, and the questions you ask about an agent differ from the ones you ask about a model. The right move is to plan for an enterprise registry that pulls records from both the model registry and the agent tracking system. Three disconnected silos will not survive the first audit.
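A minimal sketch of that consolidation, assuming both sources have already been exported to plain dictionaries keyed by a shared `id`. No MLflow or agent-platform API is called here; the record shapes and `merge_sources` are hypothetical. The design choice worth noting is the split of responsibility: technical metadata is refreshed from the source registries on every run, while governance fields such as owner and risk tier live only in the enterprise registry and are never overwritten by a sync.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

@dataclass
class EnterpriseEntry:
    system_id: str
    source: str                      # upstream registry the technical record came from
    technical: dict                  # technical metadata copied from that source
    owner: Optional[str] = None      # governance field, maintained in the enterprise registry
    risk_tier: Optional[str] = None  # governance field, maintained in the enterprise registry

def merge_sources(model_records: List[dict], agent_records: List[dict],
                  existing: Dict[str, EnterpriseEntry]) -> Dict[str, EnterpriseEntry]:
    """Upsert records exported from a model registry and an agent registry.

    Technical metadata is refreshed from the source on every run; governance
    fields already recorded in the enterprise registry are carried over.
    """
    merged = dict(existing)
    for source, records in (("model_registry", model_records),
                            ("agent_registry", agent_records)):
        for rec in records:
            sid = rec["id"]
            prior = merged.get(sid)
            merged[sid] = EnterpriseEntry(
                system_id=sid,
                source=source,
                technical={k: v for k, v in rec.items() if k != "id"},
                owner=prior.owner if prior else None,
                risk_tier=prior.risk_tier if prior else None,
            )
    return merged

models = [{"id": "churn-model", "version": "3.2.0", "stage": "Production"}]
agents = [{"id": "support-agent", "permissions": ["crm:read", "email:send"]}]
existing = {"churn-model": EnterpriseEntry("churn-model", "model_registry", {}, "ds-team", "minimal")}

registry = merge_sources(models, agents, existing)
print(registry["churn-model"].technical["version"], registry["churn-model"].owner)  # 3.2.0 ds-team
print(registry["support-agent"].owner)  # None: needs an owner before review
```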

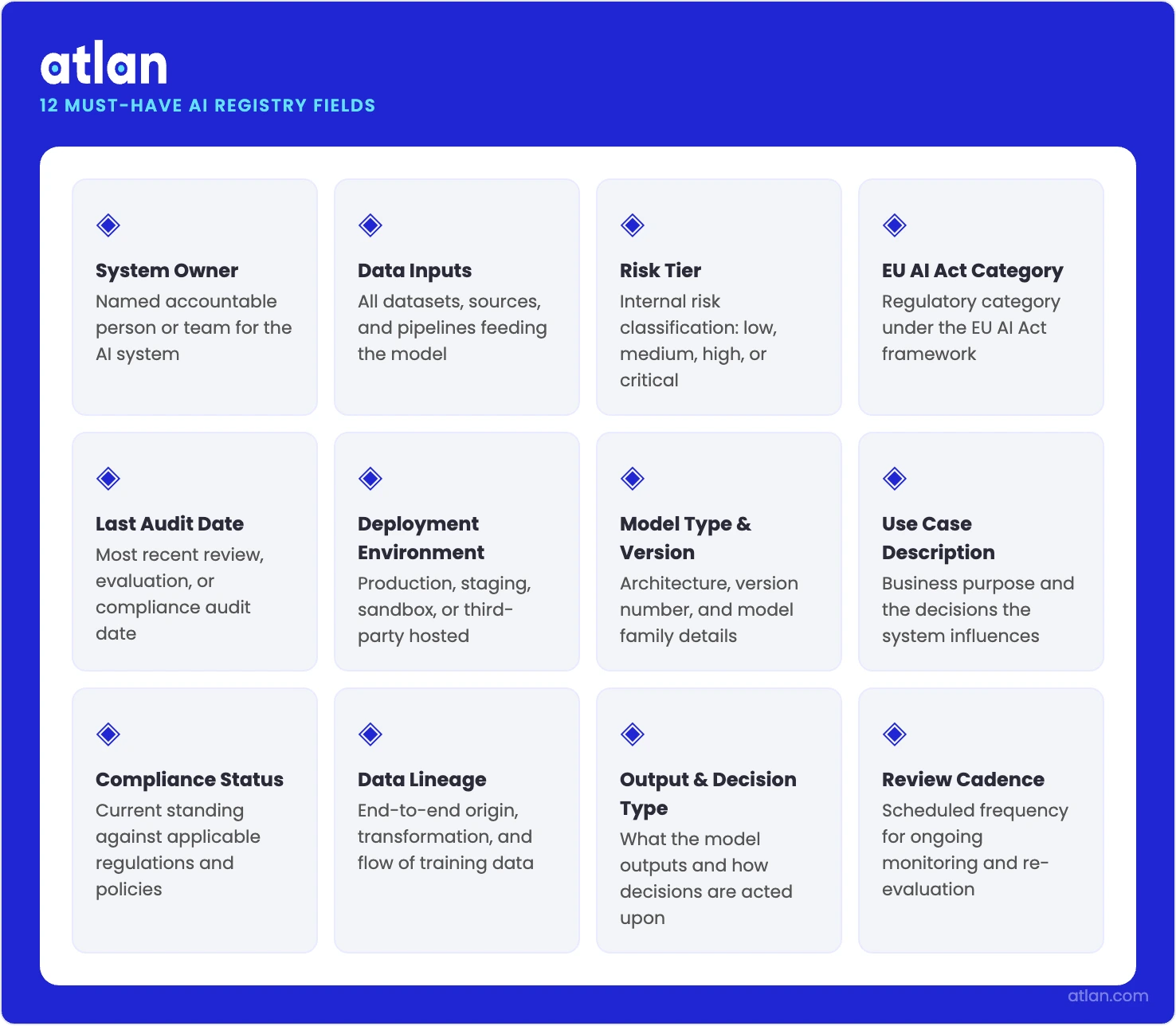

What an AI registry should contain: the 12-field metadata schema

At minimum, a complete AI registry entry needs twelve fields: system owner, data inputs, risk tier, EU AI Act category, last audit date, deployment environment, model version, training data sources, intended use case, output actions, incident history, and any regulatory exemptions claimed.

The 12 essential metadata fields every AI registry should contain. Source: Atlan.

EU AI Act Article 12 also requires high-risk systems to support automatic event logging across their full lifetime. That phrase, sitting in the legal text, rules out a static spreadsheet as a compliant control.

EU AI Act Annex IV sets out the technical documentation that a high-risk system must maintain when it goes on the EU market. The list includes a general system description, design specifications, training data, monitoring measures, and performance metrics. A well-built schema turns each documentation requirement into a structured field that can be queried later.

The 12 fields below cover the core EU AI Act questions, and the schema can be extended for NIST AI RMF and ISO/IEC 42001.

- model_owner: The accountable person or team. Maps to the EU AI Act Article 16 obligations for providers.

- data_inputs: Data sources and categories consumed at inference time, with personal data flagged separately.

- risk_tier: One of unacceptable, high, limited, or minimal risk under the EU AI Act risk framework.

- eu_act_category: The specific Annex III category if the system is high-risk (employment, biometrics, critical infrastructure, and so on).

- last_audit_date: When the most recent governance review happened. Drives automated re-review triggers.

- deployment_environment: Production, staging, or experimental, plus the geographic region for jurisdictional scoping.

- model_version: Current production version, linked back to the model registry artifact for full version history.

- training_data_sources: Datasets used for training and fine-tuning, with provenance, licensing, and bias assessment status.

- intended_use_case: The documented purpose and approved use boundaries, with out-of-scope usage flagged.

- output_actions: What the system actually does. Distinguishes advisory output from automated or binding decisions.

- incident_history: Adverse events, near-misses, bias reports, and remediation actions taken.

- regulatory_exemptions: Any exemptions claimed (research, national security, and so on) with supporting documentation.
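One way to pin these fields down is a typed schema that rejects malformed entries at write time. The sketch below is illustrative, not a compliance artifact: the class and enum names are hypothetical, the `AnnexIIIArea` values paraphrase the eight high-risk areas listed in Annex III, and it assumes high-risk status arrives through Annex III. Systems that are high-risk under Article 6(1) as safety components of regulated products would need another value.

```python
from dataclasses import dataclass, field
from datetime import date
from enum import Enum
from typing import List, Optional

class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"

class AnnexIIIArea(Enum):
    # Paraphrased labels for the eight high-risk areas in EU AI Act Annex III.
    BIOMETRICS = "biometrics"
    CRITICAL_INFRASTRUCTURE = "critical_infrastructure"
    EDUCATION = "education_and_vocational_training"
    EMPLOYMENT = "employment_and_workers_management"
    ESSENTIAL_SERVICES = "access_to_essential_services_and_benefits"
    LAW_ENFORCEMENT = "law_enforcement"
    MIGRATION = "migration_asylum_and_border_control"
    JUSTICE_AND_DEMOCRACY = "administration_of_justice_and_democratic_processes"

OUTPUT_ACTIONS = {"advisory", "automated", "binding"}

@dataclass
class AIRegistryEntry:
    model_owner: str
    data_inputs: List[str]                    # data catalog asset IDs, never free text
    risk_tier: RiskTier
    eu_act_category: Optional[AnnexIIIArea]   # required for HIGH in this sketch
    last_audit_date: Optional[date]           # None until the first governance review
    deployment_environment: str               # e.g. "production:eu-west-1"
    model_version: str                        # links to the model registry artifact
    training_data_sources: List[str]
    intended_use_case: str
    output_actions: str                       # one of OUTPUT_ACTIONS
    incident_history: List[str] = field(default_factory=list)
    regulatory_exemptions: List[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Reject entries that would leave an auditor's question unanswerable.
        if not self.model_owner:
            raise ValueError("every entry needs an accountable owner")
        if self.risk_tier is RiskTier.HIGH and self.eu_act_category is None:
            raise ValueError("high-risk entries need an Annex III category")
        if self.output_actions not in OUTPUT_ACTIONS:
            raise ValueError(f"output_actions must be one of {sorted(OUTPUT_ACTIONS)}")

entry = AIRegistryEntry(
    model_owner="talent-analytics@example.com",
    data_inputs=["snowflake.prod.hr.applicants.cv_text"],
    risk_tier=RiskTier.HIGH,
    eu_act_category=AnnexIIIArea.EMPLOYMENT,
    last_audit_date=date(2025, 4, 1),
    deployment_environment="production:eu-west-1",
    model_version="2.1.0",
    training_data_sources=["snowflake.prod.hr.historic_hires"],
    intended_use_case="Rank applications for recruiter review",
    output_actions="advisory",
)
print(entry.risk_tier.value, entry.eu_act_category.value)
```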

The schema choices made in the first month are the hardest to walk back later. Risk tier and EU Act category fields are the most sensitive. They must follow the classification logic in the EU AI Act Articles 6 and 7 verbatim. A simplified internal rubric will fail the first audit it meets.

The data_inputs field carries similar weight. Auditors now expect machine-readable references to the data catalog rather than free-text descriptions. That way, column-level lineage queries can verify what each AI system actually consumes.
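As a rough illustration of that difference, a check like the one below rejects free-text inputs and references that do not resolve in the catalog. The five-part `source.database.schema.table.column` ID format, the catalog contents, and `check_data_inputs` are all assumptions for the example; substitute whatever identifiers your catalog actually issues.

```python
import re
from typing import Iterable, List, Set

# Hypothetical catalog ID format: "<source>.<database>.<schema>.<table>.<column>".
CATALOG_ID = re.compile(r"^[a-z0-9_]+(\.[a-z0-9_]+){4}$")

def check_data_inputs(data_inputs: Iterable[str], catalog_ids: Set[str]) -> List[str]:
    """Return a list of problems; an empty list means every input resolves in the catalog."""
    problems = []
    for ref in data_inputs:
        if not CATALOG_ID.match(ref):
            problems.append(f"free text, not a catalog reference: {ref!r}")
        elif ref not in catalog_ids:
            problems.append(f"unknown catalog asset: {ref!r}")
    return problems

catalog = {
    "snowflake.prod.sales.transactions.amount",
    "snowflake.prod.sales.transactions.customer_id",
}
result = check_data_inputs(
    ["snowflake.prod.sales.transactions.amount", "customer transaction data"], catalog)
print(result)  # ["free text, not a catalog reference: 'customer transaction data'"]
```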

A precedent worth studying

Five years before most enterprises began building AI registries, Amsterdam and Helsinki launched public algorithm registers documenting the AI systems the cities operate. Each entry covers the data behind the system, the human oversight in place, and the risks managed. The Dutch national government has since extended the model into a country-wide Algorithm Register for high-risk systems. Nine European cities then agreed on a shared open data schema for their registers. Enterprise teams designing schemas from scratch can save themselves weeks of work by reading what these governments have already published.

What makes an AI registry essential in 2026?

The EU AI Act's high-risk obligations carry hard legal deadlines. NIST AI RMF alignment and ISO/IEC 42001 certification are voluntary, but customers and procurement teams increasingly ask for them. Stack those pressures on top of AI sprawl, and exposure rises fast. Organizations without a maintained registry risk fines for record-keeping failures.

Gartner surveyed 302 cybersecurity leaders between March and May 2025. Of those, 69% either suspect or have direct evidence that employees are using prohibited public GenAI tools. Almost none of those tools have ever been through a formal AI governance review.

The result is a category of shadow agents: autonomous systems handling enterprise data with no documented owner, no risk assessment, and no audit trail that anyone can produce on demand.

When an AI-driven incident triggers a regulatory inquiry, the first thing investigators ask for is the AI inventory. A company that cannot produce one is now defending two failures: the original incident and the missing governance documentation that should have caught it.

EU AI Act, NIST AI RMF, and ISO/IEC 42001: what each requires

The EU AI Act requires providers of high-risk AI systems to do three things. They must maintain technical documentation per Annex IV. They must register those systems in the EU database (Article 49 sets the obligation; Article 71 establishes the database). They must complete a conformity assessment before the system is placed on the market or put into service.

Article 12 sits underneath all of that. It requires high-risk systems to be technically capable of automatic event logging across their full lifetime. No manual spreadsheet meets that standard.

The deadlines are now close. Core obligations land on August 2, 2026. The deadline for AI embedded in regulated products extends to August 2, 2027. Article 99 sets fines for record-keeping violations at up to €15 million or 3% of total worldwide annual turnover, whichever is larger. Get more details on what the EU AI Act means for you.
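The “whichever is larger” clause means the fixed amount sets the ceiling for smaller companies, and the turnover percentage takes over above €500 million in annual turnover (3% of €500 million is €15 million). A small sketch of that arithmetic follows. It computes only the statutory maximum for this tier, not a likely fine, and the function name is hypothetical. Turnover here means total worldwide annual turnover for the preceding financial year; the SME branch reflects Article 99(6), which applies the lower of the two figures to SMEs and start-ups.

```python
def max_fine_eur(turnover_eur: int, is_sme: bool = False) -> int:
    """Ceiling of an Article 99 fine in the EUR 15 million / 3% of turnover tier.

    Large undertakings face whichever amount is higher; for SMEs and start-ups,
    Article 99(6) caps the fine at whichever is lower.
    """
    fixed_cap = 15_000_000
    turnover_cap = turnover_eur * 3 // 100  # integer arithmetic avoids float rounding
    return min(fixed_cap, turnover_cap) if is_sme else max(fixed_cap, turnover_cap)

print(max_fine_eur(200_000_000))               # 15000000: the fixed amount is higher
print(max_fine_eur(2_000_000_000))             # 60000000: 3% of turnover is higher
print(max_fine_eur(200_000_000, is_sme=True))  # 6000000: SMEs get the lower figure
```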

The NIST AI Risk Management Framework breaks AI risk management into four functions: GOVERN, MAP, MEASURE, and MANAGE. Its GOVERN function includes a subcategory (GOVERN 1.6) calling for mechanisms to inventory AI systems, a precondition for everything else.

ISO/IEC 42001 is the international standard for AI management systems. It requires organizations to define the context of their AI use and document AI objectives. Like NIST, it depends on having a real registry to begin with.

These frameworks stack on top of each other. They do not replace one another. A multinational typically has to satisfy EU AI Act obligations on its EU-market deployments, NIST alignment as a US federal contractor or customer trust signal, and ISO/IEC 42001 certification as an emerging procurement requirement.

A well-structured AI registry serves as the documentation spine for all three. Building three separate tracking systems is the slower and far more expensive path.

How an AI registry connects to your data governance infrastructure

An AI registry cannot float free of the data infrastructure on which the AI systems depend. It has to be wired into the data catalog, the lineage graphs, and the active metadata systems that already track which data feeds each AI model. Without that connection, risk assessments are guesses dressed up as records.

Most first-generation AI registries are spreadsheets. The data_inputs column might say something like “customer transaction data.” That phrase will not survive an audit.

The follow-up questions are the ones that matter. Which tables hold that data? Which columns? What is the retention policy? Is any of it covered by GDPR? Without a connection to a live catalog and column-level lineage, none of those questions has an answer.

The registry has to sit on top of the data infrastructure. It cannot float above it as a document.

A registry tied into active metadata management behaves differently. It picks up new AI deployments as they appear. It refreshes data_inputs fields when pipeline schemas change. It reflags risk tiers when an AI system starts touching newly sensitive data. The quarterly clean-up exercise turns into a live governance instrument.
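The difference is that updates are driven by metadata events instead of calendar reminders. The sketch below assumes a hypothetical event feed with three event types (`ai_deployment_detected`, `schema_changed`, `tag_added`) and hypothetical sensitivity tag names; it does not reflect any particular product's event format. Rather than rewriting risk tiers automatically, it sets a `needs_review` flag so that a human confirms any reclassification.

```python
from dataclasses import dataclass, field
from typing import Dict, Set

SENSITIVE_TAGS = {"pii", "health", "biometric"}  # hypothetical catalog tag names

@dataclass
class Entry:
    system_id: str
    data_inputs: Set[str] = field(default_factory=set)
    risk_tier: str = "unreviewed"
    needs_review: bool = False

def handle_event(registry: Dict[str, Entry], event: dict) -> None:
    """Apply one metadata event (hypothetical event shapes) to the registry in place."""
    kind = event["type"]
    if kind == "ai_deployment_detected":
        # New deployment: add a stub entry so it is visible before anyone files paperwork.
        registry.setdefault(event["system_id"], Entry(event["system_id"], needs_review=True))
    elif kind == "schema_changed":
        # Upstream asset renamed or replaced: repoint data_inputs and request re-review.
        old, new = event["old_asset"], event["new_asset"]
        for entry in registry.values():
            if old in entry.data_inputs:
                entry.data_inputs = (entry.data_inputs - {old}) | {new}
                entry.needs_review = True
    elif kind == "tag_added" and event["tag"] in SENSITIVE_TAGS:
        # Asset newly tagged sensitive: every AI system consuming it goes back to review.
        for entry in registry.values():
            if event["asset"] in entry.data_inputs:
                entry.needs_review = True

registry = {
    "churn-model": Entry("churn-model", {"crm.customers.email"}, "minimal"),
    "forecast": Entry("forecast", {"erp.orders.qty_v1"}, "minimal"),
}
handle_event(registry, {"type": "tag_added", "asset": "crm.customers.email", "tag": "pii"})
handle_event(registry, {"type": "schema_changed",
                        "old_asset": "erp.orders.qty_v1", "new_asset": "erp.orders.qty_v2"})
handle_event(registry, {"type": "ai_deployment_detected", "system_id": "new-copilot"})
print(sorted(sid for sid, e in registry.items() if e.needs_review))
# ['churn-model', 'forecast', 'new-copilot']
```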

The metadata foundation: why registry accuracy requires data lineage

Column-level lineage is the technical floor that makes accurate AI registries possible. It traces exactly which columns of which tables flow into a given model.

With that lineage in place, a governance team can answer three questions in seconds. Is this model consuming PII? Are those columns under data residency restrictions? Have any upstream schema changes altered the model’s input profile since its last review? Take the lineage away, and the data_inputs field is back to guesswork.
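Those three questions reduce to a walk up the lineage graph from a model's input columns, followed by a lookup of catalog metadata for every column reached. The sketch below uses a hypothetical in-memory lineage map and hypothetical column metadata (PII flag, residency region, last schema change); in practice both would come from the catalog's lineage and metadata services.

```python
from collections import deque
from datetime import date
from typing import Dict, Iterable, List, Set

# Hypothetical column-level lineage: each column maps to the columns it is derived from.
UPSTREAM: Dict[str, List[str]] = {
    "features.churn.tenure_days": ["raw.crm.customers.signup_date"],
    "features.churn.spend_90d": ["raw.billing.invoices.amount", "raw.crm.customers.customer_id"],
}
# Hypothetical catalog metadata for source columns.
COLUMN_META = {
    "raw.crm.customers.signup_date": {"pii": False, "residency": "eu", "schema_changed": date(2025, 3, 1)},
    "raw.crm.customers.customer_id": {"pii": True, "residency": "eu", "schema_changed": date(2024, 11, 2)},
    "raw.billing.invoices.amount": {"pii": False, "residency": None, "schema_changed": date(2025, 6, 9)},
}

def upstream_columns(model_inputs: Iterable[str]) -> Set[str]:
    """Breadth-first walk from a model's input columns to every upstream column."""
    seen: Set[str] = set()
    queue = deque(model_inputs)
    while queue:
        col = queue.popleft()
        if col in seen:
            continue
        seen.add(col)
        queue.extend(UPSTREAM.get(col, []))
    return seen

def review_questions(model_inputs: Iterable[str], last_review: date) -> Dict[str, List[str]]:
    """Answer the three review questions for one model."""
    meta = {c: COLUMN_META[c] for c in upstream_columns(model_inputs) if c in COLUMN_META}
    return {
        "consumes_pii": sorted(c for c, m in meta.items() if m["pii"]),
        "residency_restricted": sorted(c for c, m in meta.items() if m["residency"]),
        "changed_since_review": sorted(c for c, m in meta.items() if m["schema_changed"] > last_review),
    }

answers = review_questions(["features.churn.tenure_days", "features.churn.spend_90d"],
                           last_review=date(2025, 1, 15))
for question, columns in answers.items():
    print(question, columns)
```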

The enterprise context layer takes this one step further. It builds a semantic map of how data assets, business concepts, and AI systems relate to each other.

When an AI model’s context layer entry is linked to its registry record, governance teams get a single view of the system. The view shows the model, what it does, the data behind it, who owns it, and its risk tier. The view is what makes a registry useful in practice.

How to build an enterprise AI registry in 4 phases

Building an enterprise AI registry breaks down into four phases. Each phase has a clear job, and skipping any of them leaves the others weaker. The four jobs are: find everything that is already running, define the schema against EU AI Act Annex IV and NIST AI RMF, wire the registry into data infrastructure so lineage and ownership stay current, and stand up a governance workflow for intake, risk review, and continuous monitoring.

Phase 1: AI inventory audit, discover everything, including shadow AI

Find every AI system currently deployed across the organization. That includes vendor-supplied AI features, embedded ML models, internal agents, and AI capabilities baked into SaaS platforms.

Who owns this? AI Governance lead, CISO, and department heads.

Example: Run automated discovery against data pipelines, API logs, and cloud configurations to surface AI-related endpoints and model-serving infrastructure. Cross-reference procurement records for vendor AI addendums. Any system without a documented owner gets flagged as a shadow agent and routed to immediate triage.
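The reconciliation step can be sketched as a comparison between what discovery found and what the registry already knows. In this hypothetical example, `discovered` stands in for the output of pipeline, API-log, and cloud-configuration scans, and `vendor_ai_addendums` for procurement records; the scanners themselves are out of scope. Anything missing from the registry, or present without an owner, is flagged as a shadow agent.

```python
from typing import Dict, List, Optional, Set

def reconcile(discovered: Dict[str, str],
              registry: Dict[str, Optional[str]],
              vendor_ai_addendums: Set[str]) -> List[dict]:
    """Return triage items for discovered endpoints that lack a documented owner.

    discovered: {endpoint_id: scan that found it}
    registry: {endpoint_id: owner, or None if no owner is documented}
    vendor_ai_addendums: endpoint_ids covered by procurement records
    """
    triage = []
    for endpoint, found_by in sorted(discovered.items()):
        if registry.get(endpoint) is None:  # unregistered, or registered with no owner
            triage.append({
                "endpoint": endpoint,
                "found_by": found_by,
                "status": "shadow_agent",
                "procurement_record": endpoint in vendor_ai_addendums,
            })
    return triage

discovered = {
    "llm-gateway.internal": "api_logs",
    "sagemaker:churn-endpoint": "cloud_config",
    "hr-suite:resume-ranker": "vendor_scan",
}
registry = {"sagemaker:churn-endpoint": "ml-platform@example.com", "hr-suite:resume-ranker": None}
triage = reconcile(discovered, registry, vendor_ai_addendums={"hr-suite:resume-ranker"})
for item in triage:
    print(item["endpoint"], item["found_by"], item["procurement_record"])
```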

Practitioners disagree about how this discovery should run. Tim Morris, Chief Security Advisor at Tanium, told CIO.com that security teams should be aware of a new AI app or browser plugin the moment it appears in the environment.

Others take a hybrid path: employees log the AI tools they use in a self-service registry that is updated quarterly, and network scans and API monitoring then validate the entries.

No single approach is the only right one. The shared lesson is that questionnaire-only discovery misses a large slice of what is running. The biggest blind spot is the AI features quietly embedded inside marketing automation platforms, HR tools, and customer service software.

The audit produces a raw inventory. The list is unverified, with whatever metadata is available at the time of discovery. It becomes the intake queue for Phase 2.

Aim for completeness here, not accuracy. A complete list can be enriched later. A list that is missing systems cannot be fixed by polishing the entries that did make it in.

Phase 2: Define the registry schema and align it with the EU AI Act and NIST RMF

Set the metadata schema that will govern every entry going forward, using the 12-field structure or something similar. Map each field to the EU AI Act or NIST RMF requirement it satisfies.

Who owns this? Compliance, data governance, and AI governance.

Example: Pin down risk_tier classification criteria against the EU AI Act Article 6 before any system gets classified. Set the eu_act_category lookup values to the exact Annex III categories rather than internal labels. Decide on last_audit_date refresh cadences, for example, high-risk reviewed every 90 days, limited-risk once a year. Make data_inputs a reference to data catalog asset IDs, never free text.
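Once last_audit_date is a real date field, the refresh cadence is simple date arithmetic. A minimal sketch using the example cadences above (90 days for high-risk, and a year approximated as 365 days for limited-risk); the function names are hypothetical, tiers without a cadence are skipped, and entries that have never been audited are treated as overdue.

```python
from datetime import date, timedelta
from typing import Dict, List, Optional, Tuple

# Example cadences from the text; other tiers have no cadence in this sketch.
REVIEW_INTERVAL: Dict[str, timedelta] = {
    "high": timedelta(days=90),
    "limited": timedelta(days=365),
}

def next_review_due(risk_tier: str, last_audit_date: date) -> Optional[date]:
    """Date the next review is due, or None if the tier has no cadence."""
    interval = REVIEW_INTERVAL.get(risk_tier)
    return last_audit_date + interval if interval is not None else None

def overdue(entries: List[Tuple[str, str, Optional[date]]], today: date) -> List[str]:
    """Return system_ids that were never audited or whose next review date has passed."""
    late = []
    for system_id, tier, last_audit in entries:
        if last_audit is None:
            late.append(system_id)
            continue
        due = next_review_due(tier, last_audit)
        if due is not None and due < today:
            late.append(system_id)
    return late

entries = [
    ("resume-ranker", "high", date(2025, 1, 10)),
    ("faq-bot", "limited", date(2025, 1, 10)),
    ("new-agent", "high", None),
]
print(next_review_due("high", date(2025, 1, 10)))  # 2025-04-10
print(overdue(entries, today=date(2025, 5, 1)))     # ['resume-ranker', 'new-agent']
```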

The schema choices made here are the most expensive ones to undo. Spending two or three weeks on schema governance pays back later. That work should include a legal review of how the EU AI Act categories map to your specific deployments. The investment saves months of rework when the first regulatory inquiry comes through.

Phase 3: Integrate with data infrastructure, lineage, quality, and ownership

Connect the registry to the data catalog and lineage system. The goal is for data_inputs, model_owner, and risk_tier to update from authoritative sources, not from someone retyping fields by hand.

Who owns this? Data engineering and data governance.

Example: Bind each data_inputs entry to specific data catalog asset IDs. Set up column-level lineage so upstream schema changes throw alerts to the registry. Resolve model_owner against the HR directory so ownership updates immediately when someone changes roles. Apply risk_tier scoring rules that auto-escalate when sensitive data signals appear in the catalog.
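Owner resolution can be sketched as a lookup against a directory snapshot. The directory shape, the email addresses, and the fallback rule (reassign to the departed owner's manager and flag the entry for confirmation) are hypothetical choices for illustration; a real integration would read from your HR system or identity provider.

```python
from typing import Dict, List, Optional, Tuple

def resolve_owners(registry: Dict[str, str],
                   directory: Dict[str, dict]) -> Tuple[Dict[str, Optional[str]], List[str]]:
    """Check each model_owner against a directory snapshot.

    registry: {system_id: owner_email}
    directory: {email: {"active": bool, "manager": email or None}}
    Returns the updated owner map and the system_ids that need an owner confirmed.
    """
    updated: Dict[str, Optional[str]] = {}
    flagged: List[str] = []
    for system_id, owner in registry.items():
        person = directory.get(owner)
        if person is not None and person["active"]:
            updated[system_id] = owner
            continue
        # Owner left or is unknown: fall back to the last known manager, if any, and flag.
        updated[system_id] = person["manager"] if person is not None else None
        flagged.append(system_id)
    return updated, flagged

registry = {
    "churn-model": "ana@example.com",
    "faq-bot": "raj@example.com",
    "old-copilot": "ghost@example.com",
}
directory = {
    "ana@example.com": {"active": True, "manager": "li@example.com"},
    "raj@example.com": {"active": False, "manager": "li@example.com"},
}
owners, needs_confirmation = resolve_owners(registry, directory)
print(owners)
print(needs_confirmation)  # ['faq-bot', 'old-copilot']
```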

Whether the registry stays accurate beyond its first month is decided here. Teams that skip the integration step generally watch their accuracy fall apart within a quarter.

The registry needs to behave like a dependent system that breaks loudly when its sources change. It cannot survive as a static document that gets refreshed if anyone remembers to.

Phase 4: Governance workflow, intake, risk review, monitoring

Set up mandatory intake processes that block any new AI system from reaching production without registration. Add continuous monitoring for unregistered deployments. Define escalation paths for high-risk classifications and audit triggers.

Who owns this? AI governance, CISO office, legal, and compliance.

Example: Route every new AI deployment request through a structured intake form that pre-populates the registry. Run automated risk scoring against EU AI Act classification logic, with high-risk candidates escalated to a human reviewer before any approval. Scan monthly for AI endpoints not present in the registry. Audit compliance with last_audit_date across active entries every quarter.
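The gate and the escalation path fit in a few lines. The sketch below is deliberately simplified and hypothetical: `prescreen` only flags candidates based on a declared Annex III area or whether the system interacts with people, and it never assigns a final tier. The legal classification under Article 6, including its exceptions, stays with the human reviewer, which is why a high-risk candidate remains blocked until a review is recorded as approved.

```python
from typing import Dict

# Hypothetical short labels for the eight Annex III high-risk areas.
ANNEX_III_AREAS = {
    "biometrics", "critical_infrastructure", "education", "employment",
    "essential_services", "law_enforcement", "migration", "justice_and_democracy",
}

def prescreen(intake: dict) -> str:
    """Rough triage of an intake form; routes work to humans, never sets a final tier."""
    if intake.get("annex_iii_area") in ANNEX_III_AREAS:
        return "high_risk_candidate"
    if intake.get("interacts_with_people"):
        return "limited_risk_candidate"
    return "minimal_risk_candidate"

def deployment_gate(system_id: str, registry: Dict[str, dict], reviews: Dict[str, str]) -> str:
    """Allow production only for registered systems; high-risk candidates need human approval."""
    intake = registry.get(system_id)
    if intake is None:
        return "blocked: not registered"
    if prescreen(intake) == "high_risk_candidate" and reviews.get(system_id) != "approved":
        return "blocked: awaiting human risk review"
    return "allowed"

registry = {
    "resume-ranker": {"annex_iii_area": "employment"},
    "faq-bot": {"interacts_with_people": True},
}
for system in ("resume-ranker", "faq-bot", "shadow-tool"):
    print(system, deployment_gate(system, registry, reviews={}))
print(deployment_gate("resume-ranker", registry, reviews={"resume-ranker": "approved"}))  # allowed
```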

The workflow is what turns the registry from documentation into a control. Once registration becomes a prerequisite for production access, the registry grows alongside the AI portfolio rather than chasing it.

That single change does more to stop shadow AI proliferation than any tool. It works because it makes unregistered deployment operationally hard, not just discouraged in principle.

The cost of skipping this step is on the books. EU AI Act Article 99 sets fines for record-keeping violations at up to €15 million or 3% of total worldwide annual turnover, whichever is higher. A shadow agent that never made it into the registry is the exact gap that triggers those penalties when an incident surfaces.

How Atlan builds a live AI registry on your existing data infrastructure

Atlan maintains an AI registry by wiring structured intake, active metadata discovery, column-level lineage, and automated risk scoring directly into existing data infrastructure, so the registry stays accurate without manual reconciliation. Static tools and spreadsheets degrade within weeks as deployments shift underneath them. A registry with live connections to the data infrastructure stays current on its own and removes the need for compliance teams to rebuild it before every audit.

Manual registry maintenance is the default state at most enterprises, and it fails on a predictable schedule. According to IBM’s 2025 Cost of a Data Breach Report, 63% of breached organizations either have no AI governance policy or are still drafting one. Only 34% of those with a policy in place run regular audits for unsanctioned AI.

The root cause is policies that exist only on paper: they sit within a control framework that no one references and are never tied to enforcement or to accountability for actual violations.

AI models ship faster than the governance reviews scheduled to catch them. Vendors push AI feature updates that quietly alter data access patterns, while shadow agents accumulate in the gaps between review cycles. By the time an audit begins, the registry shows the AI posture from three months ago.

Atlan’s AI Governance capabilities address this through four connected functions.

- Structured intake forms enforce registration at the deployment request stage. They capture model_owner, risk_tier, data_inputs, and eu_act_category before any system reaches production.

- Active metadata discovery scans the data environment continuously. It surfaces AI systems that bypassed intake, addressing shadow AI at the infrastructure level rather than through periodic questionnaires.

- Column-level lineage refreshes data_inputs fields automatically as upstream pipelines change. Compliance teams no longer have to reconcile the registry by hand.

- Configurable risk scoring runs the EU AI Act classification logic over each entry. It flags high-risk systems for human review, so compliance teams do not have to evaluate every deployment individually.

Atlan was named a Leader in the 2026 Gartner® Magic Quadrant™ for Data & Analytics Governance Platforms. See the Atlan AI Governance Studio demo for a walkthrough.

With Atlan, new AI deployments show up in the registry on their own, whether they came through formal intake or were caught by metadata discovery.

Audit readiness becomes continuous. The pre-audit sprint goes away. Ownership can be resolved against your identity directory and source systems, so model_owner records stay accurate as the organization changes shape.

The registry stops being a parallel system that has to be reconciled against the data infrastructure. It becomes a governance view of the data infrastructure itself.

FAQs about AI registries

What is an AI registry in governance?

In an AI governance program, the registry is the central record of every AI model, agent, and use case the organization runs. Each entry holds ownership, risk classification, data inputs, deployment status, and regulatory compliance for that system. Governance teams use the registry to assign accountability, run review workflows, respond to audits, and prove EU AI Act compliance when regulators come asking.

What is the difference between an AI registry and an AI model registry?

A model registry is an MLOps tool. Data scientists and ML engineers use it to track model versions, training metrics, and deployment artifacts. The enterprise AI registry is a different kind of system. It covers every AI system the company runs, including models, agents, and vendor-supplied AI. It also carries the business context, risk classification, ownership, and regulatory metadata that compliance teams need. The model registry feeds the enterprise registry, which is the layer that regulators care about.

What should an AI registry include?

A complete entry should cover the system owner, data inputs, risk tier, EU AI Act category, last audit date, deployment environment, model version, training data provenance, intended use case, output actions, incident history, and any regulatory exemptions claimed. For high-risk AI systems under the EU AI Act, Annex IV adds further mandatory technical documentation that the registry must capture for the conformity assessment to hold up.

Is an AI registry required by the EU AI Act?

The EU AI Act does not use the phrase “AI registry,” but the documentation and registration obligations it creates amount to the same thing. Article 12 requires high-risk systems to support automatic event logging across their full lifetime. Article 49 requires providers of high-risk systems to register them in the EU database under Article 71 before placing them on the market. Annex IV specifies the technical documentation content, which maps directly onto a well-built registry schema.

What is the difference between an AI registry and an AI inventory?

An AI inventory is the starting point. It is the list pulled together during a discovery exercise, often as a one-off spreadsheet ahead of an audit. An AI registry is the governed version of that list. It has structured fields, ownership workflows, risk scoring, and live connections to the data infrastructure underneath. The inventory feeds the registry. A registry that loses its governance workflows decays back into an inventory within a few weeks.

How do you build an AI agent registry?

Start with a discovery audit that catches every deployed agent, including the shadow agents running outside formal channels. Define a schema with fields for agent identity, data access scope, decision authority, risk tier, and regulatory classification. Connect the schema to the data infrastructure so inputs and outputs are traceable through lineage. Stand up an intake workflow that requires registration before any agent reaches production. The four steps mirror the enterprise registry build, with extra emphasis on permissions and decision authority because agents act on their own.
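For agents, the extra fields earn their keep at runtime. A minimal sketch, assuming a hypothetical `AgentEntry` record and a hypothetical `authorize` check that an agent platform would call before each action: an agent may act only if it has had a risk review, the action is in its registered scope, and the data asset is within its registered access. The rule that advisory agents may only `recommend` is also a hypothetical policy choice.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AgentEntry:
    agent_id: str
    owner: str
    data_access_scope: frozenset              # catalog asset IDs the agent may touch
    decision_authority: str                   # "advisory" or "autonomous"
    allowed_actions: frozenset = frozenset()  # registered action scope
    risk_tier: str = "unreviewed"

def authorize(agent: AgentEntry, action: str, asset: str) -> bool:
    """Permit an action only for reviewed agents acting inside their registered scope."""
    if agent.risk_tier == "unreviewed":
        return False
    if agent.decision_authority == "advisory" and action != "recommend":
        return False  # hypothetical rule: advisory agents may only recommend
    return action in agent.allowed_actions and asset in agent.data_access_scope

support = AgentEntry("support-agent", "cx-platform@example.com", frozenset({"crm.tickets"}),
                     "autonomous", frozenset({"ticket:update"}), "limited")
drafter = AgentEntry("draft-bot", "marketing@example.com", frozenset({"cms.pages"}),
                     "advisory", frozenset({"recommend", "page:publish"}), "minimal")
print(authorize(support, "ticket:update", "crm.tickets"))    # True
print(authorize(support, "refund:issue", "billing.refunds"))  # False
print(authorize(drafter, "page:publish", "cms.pages"))        # False
```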

An AI registry without live data infrastructure is an audit liability

An AI registry is the operational floor that makes enterprise AI governance possible. Without one, every question about risk, ownership, and compliance turns into a manual investigation across systems that were never built to answer it.

A registry tied into live data infrastructure changes the picture. It connects to active metadata, lineage, and risk scoring. AI governance stops being a sprint before each audit and becomes a continuous operational discipline. Any organization deploying AI at scale needs a registry that grows alongside its AI portfolio.