Active metadata management is the continuous, automated collection of metadata signals that keep AI agents and data catalogs accurate in real time. The enterprise metadata market is projected to reach $12.89 billion in 2026 (The Business Research Company, Enterprise Metadata Management Global Market Report, 2026). Unlike a catalog feature configured after the fact, active metadata is the prerequisite infrastructure for trustworthy data and AI.

A data engineer modifies a dbt model at 9 a.m. By 9:04, active metadata has detected the schema change, propagated it to every downstream dashboard, updated the affected business glossary entries, and surfaced a quality flag for the data team, without a single manual intervention. Without active metadata, that same change sits undocumented until someone notices a broken report, an AI agent returns a confidently wrong answer, or a governance audit reveals a gap.

Quick Facts

| Aspect | Details |
|---|---|
| What it is | Continuously updated metadata that reflects the real-time state of your data, not a static snapshot |
| Key benefit | AI agents and data teams operate on current, accurate context rather than stale catalog entries |
| Who uses it | Data engineers, data architects, CDOs, AI platform teams |
| Replaces | Manual catalog curation, scheduled batch metadata updates, spreadsheet-based data dictionaries |
| Complementary tools | dbt, Apache Atlas, Collibra alternative |
| Key metric | 53% reduction in manual data management work (Kiwi.com, Atlan customer outcomes) |

How does active metadata work?

Active metadata management continuously watches how teams query tables, join columns, use documentation, and encounter quality issues. Those signals power automatic classification, smarter recommendations, and stronger governance, all without manual setup for each new data asset.

How the loop works in practice:

Metadata is captured continuously from across the stack. When a data engineer updates a Snowflake table, schemas sync to BI tools, lineage refreshes in the catalog, downstream users are notified in Slack, and data quality checks trigger automatically. Context stays current everywhere teams work.

As teams query tables, join columns, and collaborate, the system captures usage frequency, access patterns, and popularity trends. Machine learning uses these signals to auto-classify sensitive data, recommend relevant datasets, and surface potential issues before they become incidents.

Active metadata does not stop at insight. It takes action. Pipelines can pause when quality thresholds fail, unused assets can be archived to reduce cost, and access policies can update automatically as classifications change.

The result is a metadata layer that acts on your stack, not just documents it.
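The capture, learn, and act loop described above can be sketched as a small in-memory event bus. This is a minimal illustration under stated assumptions, not Atlan's implementation: `MetadataBus`, `MetadataEvent`, the handler names, and the 0.95 pass-rate threshold are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class MetadataEvent:
    asset: str                      # e.g. "sales.orders"
    kind: str                       # e.g. "schema_change" or "quality_check"
    payload: dict = field(default_factory=dict)


class MetadataBus:
    """Tiny in-memory bus: handlers subscribe to one event kind."""

    def __init__(self) -> None:
        self._handlers: dict[str, list[Callable[[MetadataEvent], str]]] = {}

    def subscribe(self, kind: str, handler: Callable[[MetadataEvent], str]) -> None:
        self._handlers.setdefault(kind, []).append(handler)

    def publish(self, event: MetadataEvent) -> list[str]:
        # Every handler registered for this kind reacts immediately.
        return [handler(event) for handler in self._handlers.get(event.kind, [])]


QUALITY_THRESHOLD = 0.95  # hypothetical minimum pass rate


def notify_downstream(event: MetadataEvent) -> str:
    return f"notify: {event.asset} schema changed"


def gate_pipeline(event: MetadataEvent) -> str:
    # Pause downstream pipelines when the quality check falls below threshold.
    action = "pause" if event.payload["pass_rate"] < QUALITY_THRESHOLD else "continue"
    return f"{action}: pipelines reading {event.asset}"


bus = MetadataBus()
bus.subscribe("schema_change", notify_downstream)
bus.subscribe("quality_check", gate_pipeline)

actions = bus.publish(MetadataEvent("sales.orders", "quality_check", {"pass_rate": 0.91}))
print(actions)
```

The point of the design is that reactions are triggered by the event itself rather than by a scheduled crawl, which is the distinction the section draws between acting on the stack and documenting it.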

What are the four key functions of active metadata?

Active metadata platforms share four fundamental attributes that distinguish them from traditional catalogs. Understanding these characteristics helps you evaluate whether a solution truly activates metadata or simply aggregates it.

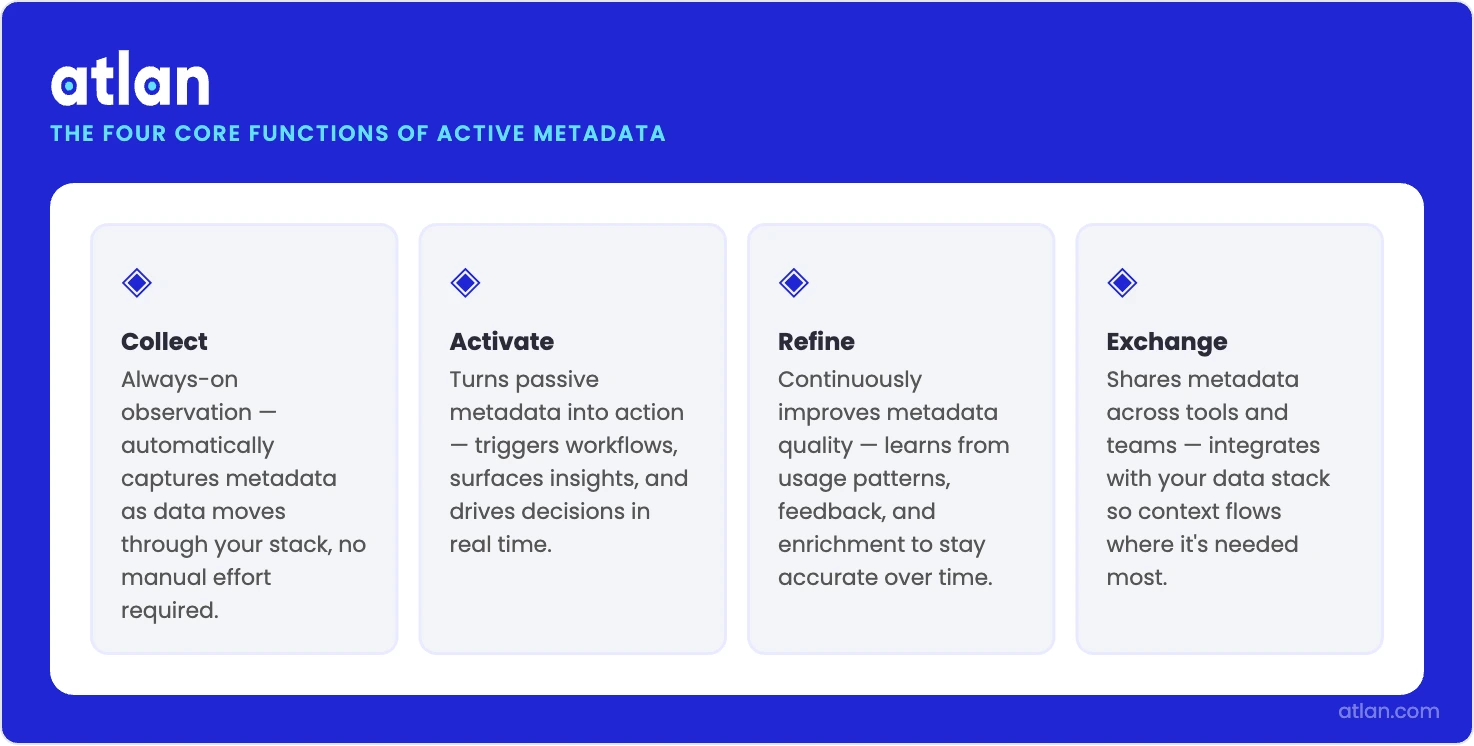

Atlan organizes active metadata capability into four core functions: Collect, Activate, Refine, Exchange.

Collection and enrichment

It starts with always-on observation. As data moves through your stack, the system quietly watches. Query logs, lineage events, usage patterns, transformation logic, and team conversations are captured in real time. There is no manual documentation and no lag from batch updates. Your view of the data estate stays aligned with how the data is actually being used.

Raw signals are then connected and interpreted. Machine learning links metadata streams, classifies sensitive columns, infers business meaning from usage, and spots early signs of quality degradation. With every query and interaction, recommendations sharpen, documentation improves, and anomalies surface sooner.

Action and exchange

Intelligence turns into action. When a critical upstream table changes, active metadata does not just flag it. It alerts impacted dashboard owners, opens remediation tickets, and can stop downstream pipelines before bad data spreads. What once took hours of coordination now happens in seconds, automatically.

Everything is held together by an open, API-driven architecture. Metadata flows both ways between systems. Lineage appears inside BI tools, quality scores show up in query editors, and business definitions surface in Slack. Context meets teams where they work. Metadata stops being a passive record and starts running the stack.
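To make "alerts impacted dashboard owners" concrete, here is a rough sketch of downstream impact analysis as a breadth-first walk over a lineage graph. The graph, the asset names, and the owner mapping are invented for illustration; a real platform would read them from its lineage store.

```python
from collections import deque

# Hypothetical lineage: each asset maps to the assets that read from it.
LINEAGE = {
    "raw.orders": ["stg.orders"],
    "stg.orders": ["mart.revenue", "mart.customers"],
    "mart.revenue": ["dash.finance"],
    "mart.customers": [],
    "dash.finance": [],
}

# Hypothetical ownership records.
OWNERS = {
    "mart.revenue": "analytics",
    "mart.customers": "analytics",
    "dash.finance": "finance-team",
}


def downstream_assets(graph: dict[str, list[str]], changed: str) -> list[str]:
    """Return every asset reachable downstream of `changed`, in BFS order."""
    visited = {changed}
    impacted: list[str] = []
    queue = deque([changed])
    while queue:
        node = queue.popleft()
        for child in graph.get(node, []):
            if child not in visited:
                visited.add(child)
                impacted.append(child)
                queue.append(child)
    return impacted


impacted = downstream_assets(LINEAGE, "stg.orders")
owners_to_alert = sorted({OWNERS[a] for a in impacted if a in OWNERS})
print(impacted)
print(owners_to_alert)
```

Running the walk from `stg.orders` yields the two marts and the finance dashboard, so both the `analytics` and `finance-team` owners would be alerted.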

How Atlan's four functions distinguish active metadata from passive aggregation. Source: Atlan.

Active metadata vs. passive metadata

| Dimension | Passive Metadata | Active Metadata |
|---|---|---|
| Core role | Static documentation | Living intelligence layer |
| How metadata is captured | Manually documented or batch crawled | Automatically collected in real time |
| Where it lives | Separate catalog users must visit | Embedded in BI tools, editors, and collaboration apps |
| Update frequency | Periodic and reactive | Continuous and proactive |
| Interaction with systems | Describes data only | Observes, learns, and acts on data behavior |
| Response to change | Discovered after failures occur | Detected instantly and addressed automatically |
| Primary outcome | Awareness | Automation and prevention |

- Passive metadata tells you what changed after something breaks.

- Active metadata detects the change, understands the impact through lineage, and alerts the right people before issues spread.

Passive metadata records the state of your data. Active metadata runs your data operations.

Six high-impact use cases for metadata activation

Active metadata enables automation across governance, operations, and user experience. These use cases demonstrate measurable value for different stakeholders.

How does active metadata automate compliance and data security?

An active metadata-driven stack can automatically identify and tag PII and sensitive data across your systems, then propagate security classifications through column-level lineage. In Atlan, teams typically combine rule-based playbooks, tag propagation, and connected data-quality or classification tools so that when a new table is flagged as containing credit card numbers, encryption policies apply, access is restricted based on roles, and interactions are logged for audit trails with minimal manual intervention.
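Propagating a classification through column-level lineage can be sketched as a simple fixed-point loop over lineage edges. This is an illustrative example only: the column names, the `PCI` tag, and the `propagate_tags` helper are assumptions, and it does not represent how Atlan's tag propagation is implemented.

```python
# Hypothetical column-level lineage edges: (source column, derived column).
COLUMN_LINEAGE = [
    ("raw.payments.card_number", "stg.payments.card_number"),
    ("stg.payments.card_number", "mart.billing.card_last4"),
    ("raw.payments.amount", "mart.billing.total"),
]


def propagate_tags(
    edges: list[tuple[str, str]], seed_tags: dict[str, set[str]]
) -> dict[str, set[str]]:
    """Copy each source column's tags to its derived columns until nothing changes."""
    tags = {column: set(column_tags) for column, column_tags in seed_tags.items()}
    changed = True
    while changed:
        changed = False
        for source, derived in edges:
            missing = tags.get(source, set()) - tags.get(derived, set())
            if missing:
                tags.setdefault(derived, set()).update(missing)
                changed = True
    return tags


# A classifier (or a person) tags one raw column; lineage does the rest.
tags = propagate_tags(COLUMN_LINEAGE, {"raw.payments.card_number": {"PCI"}})
for column in sorted(tags):
    print(column, sorted(tags[column]))
```

Only columns derived from the tagged source inherit the classification; `mart.billing.total`, which descends from `amount`, stays untagged.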

How does active metadata enable intelligent cost optimization?

Track asset popularity and usage patterns to identify waste. Active metadata monitors which Snowflake tables, BigQuery datasets, and Looker dashboards actually get used. Automatically archive assets unused for 60 days and deprecate those idle for 90 days. Organizations using this approach commonly report 15–30% annual reductions in cloud data warehouse spending by eliminating redundant storage and processing for stale assets.
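The idle-time rule above can be expressed as a small function. The sketch below takes the 60-day and 90-day thresholds from the text; the function name, the asset names, and the last-used dates are made up for the example.

```python
from datetime import date

ARCHIVE_AFTER_DAYS = 60     # threshold from the text
DEPRECATE_AFTER_DAYS = 90   # threshold from the text


def lifecycle_action(last_used: date, today: date) -> str:
    """Map an asset's idle time to the lifecycle action described above."""
    idle_days = (today - last_used).days
    if idle_days >= DEPRECATE_AFTER_DAYS:
        return "deprecate"
    if idle_days >= ARCHIVE_AFTER_DAYS:
        return "archive"
    return "keep"


today = date(2025, 6, 30)
last_used = {                       # hypothetical usage metadata
    "looker.weekly_kpis": date(2025, 6, 1),
    "bq.tmp_campaign_2024": date(2025, 4, 20),
    "snowflake.legacy_orders": date(2025, 1, 15),
}
plan = {asset: lifecycle_action(used, today) for asset, used in last_used.items()}
print(plan)
```

Keeping the thresholds as named constants makes it easy to tune the policy per environment without touching the logic.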

How does active metadata accelerate root cause analysis?

When reports break or dashboards show unexpected numbers, active metadata’s automated lineage traces the issue from symptom to source in minutes rather than days. The platform highlights exactly which upstream transformation changed, who made the modification, and which other assets may be affected. Teams using active lineage report 50–70% faster incident resolution compared to manual investigation, with Gartner (2023) predicting active metadata can reduce manual data remediation effort by up to 60%.
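Tracing from symptom to source amounts to walking lineage upstream and checking which ancestors changed recently. The following is a simplified sketch: the upstream graph, the change log, and the "most recent change wins" heuristic are assumptions for illustration, not a description of any specific product's logic.

```python
from typing import Optional

# Hypothetical lineage, pointing upstream: asset -> assets it reads from.
UPSTREAM = {
    "dash.finance": ["mart.revenue"],
    "mart.revenue": ["stg.orders", "stg.refunds"],
    "stg.orders": ["raw.orders"],
    "stg.refunds": [],
    "raw.orders": [],
}

# Hypothetical change log: asset -> (ISO timestamp, author).
CHANGES = {
    "stg.orders": ("2025-03-04T09:00", "dana"),
    "raw.orders": ("2025-02-11T14:30", "lee"),
}


def ancestors(graph: dict[str, list[str]], asset: str) -> set[str]:
    """Collect every upstream asset reachable from `asset`."""
    found: set[str] = set()
    stack = [asset]
    while stack:
        for parent in graph.get(stack.pop(), []):
            if parent not in found:
                found.add(parent)
                stack.append(parent)
    return found


def likely_root_cause(graph, changes, broken_asset: str) -> Optional[dict]:
    """Pick the most recently changed upstream asset as the first suspect."""
    candidates = [
        (changes[a][0], a, changes[a][1])
        for a in ancestors(graph, broken_asset)
        if a in changes
    ]
    if not candidates:
        return None
    changed_at, asset, author = max(candidates)  # ISO timestamps sort as text
    return {"asset": asset, "changed_at": changed_at, "changed_by": author}


suspect = likely_root_cause(UPSTREAM, CHANGES, "dash.finance")
print(suspect)
```

In this toy data the broken finance dashboard points back to the change `dana` made to `stg.orders`, which is the kind of "what changed and who changed it" answer the paragraph describes.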

How does active metadata enable self-service data discovery?

Business users find trusted, relevant data without engineering support. Active metadata surfaces the most popular datasets for specific use cases, displays quality scores and freshness indicators, and recommends related assets based on what similar users accessed. This reduces time-to-insight for analysts while commonly decreasing data discovery requests to engineering teams by 30–50% (Atlan customer data, 2025).

How does active metadata support proactive data quality monitoring?

Rather than discovering quality issues when reports fail, active metadata continuously monitors completeness, accuracy, and consistency metrics. The system alerts data owners when anomalies appear (sudden spikes in null values, unexpected schema drift, or broken freshness SLAs). Early detection stops bad data before it reaches production dashboards and causes downstream failures.
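Two of the anomalies mentioned above, null-value spikes and broken freshness SLAs, can be checked with very little code. The sketch below is illustrative: the function names, the 3x spike factor, the 1% baseline floor, and the six-hour SLA are assumptions rather than recommended defaults.

```python
from datetime import datetime, timedelta
from statistics import mean


def null_rate_spike(
    history: list[float], current: float, factor: float = 3.0, floor: float = 0.01
) -> bool:
    """Flag a spike when the current null rate exceeds `factor` x the recent average."""
    baseline = max(mean(history), floor)  # floor avoids alerting on near-zero baselines
    return current > factor * baseline


def freshness_breached(last_loaded: datetime, now: datetime, sla: timedelta) -> bool:
    """True when the asset has not been refreshed within its SLA window."""
    return now - last_loaded > sla


def quality_alerts(asset: str, history, current, last_loaded, now, sla) -> list[str]:
    alerts = []
    if null_rate_spike(history, current):
        alerts.append(f"{asset}: null rate spiked to {current:.0%}")
    if freshness_breached(last_loaded, now, sla):
        alerts.append(f"{asset}: freshness SLA of {sla} breached")
    return alerts


alerts = quality_alerts(
    "mart.revenue",
    history=[0.01, 0.02, 0.015],
    current=0.20,
    last_loaded=datetime(2025, 3, 4, 2, 0),
    now=datetime(2025, 3, 4, 9, 0),
    sla=timedelta(hours=6),
)
print(alerts)
```

Comparing against a rolling baseline rather than a fixed limit is one simple way to catch "sudden" changes; production systems typically use more robust statistics.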

How does active metadata support AI governance and model context?

Active metadata provides the semantic layer that makes AI initiatives viable. It automatically documents which data sources feed which models, tracks data lineage for explainability requirements, and enforces access policies on training data. As organizations deploy more AI agents and LLM-powered applications, active metadata keeps these systems grounded in contextually appropriate, governed data, reducing the risk that they hallucinate or expose sensitive information.

How active metadata powers AI agents

Active metadata is the signal layer that keeps AI agent context accurate. When a dbt model is modified, active metadata propagates the change through the catalog, to connected agents, and to downstream consumers, without human intervention. Agents querying recognized_revenue_q4 after the model update receive the current definition, not the stale one.

AI agents do not operate on raw data. They operate on the metadata layer that describes data: what a table means, who owns it, how fresh it is, what downstream systems depend on it. When that layer is passive (updated by hand, on a schedule, or not at all) the agent’s understanding of the data environment is a snapshot of the past. This is the structural cause of context rot and context starvation: two of the six failure modes that break production AI agents.

Active metadata changes this. When a dbt model is modified and a downstream dashboard breaks, an active metadata system detects the lineage event within minutes, propagates the schema change to every dependent system, updates the business glossary entry automatically, and (if an AI agent is querying that data) surfaces the change as a context update before the agent runs its next task. The agent does not act on stale information because the metadata layer never goes stale.

This matters because the failure mode for AI agents in data environments is not model quality. It is context quality. A well-trained model querying an undocumented column returns a confidently wrong answer. A well-trained model querying a column with accurate, active metadata (ownership, usage history, quality score, lineage) produces a useful one. When the data governance layer is active, the model’s context is governed by default.

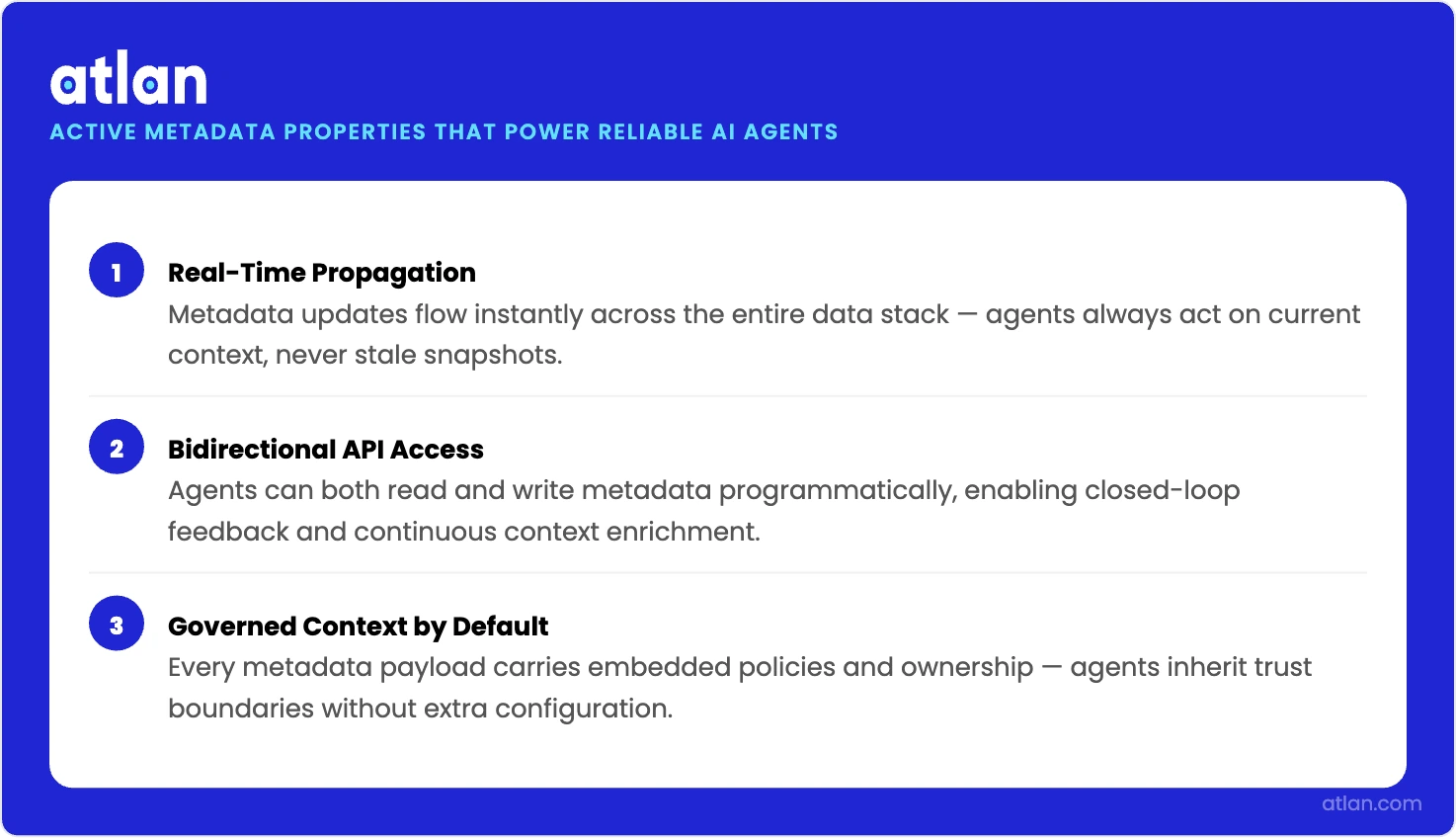

Three properties of active metadata are specifically relevant to AI agent reliability:

Real-time propagation

Schema changes, ownership transfers, and quality flag updates flow to every connected system as they happen, not on the next scheduled crawl. Agents always query the current state.

Bidirectional API access

Active metadata is readable by agents and writable by agents. An agent that discovers a data quality issue can flag it back to the metadata layer, creating a feedback loop that improves the entire system.
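The read-and-write-back loop can be pictured with a toy in-memory store. Everything in this sketch is hypothetical (`MetadataStore`, `AssetMetadata`, the method names, and the flag text); it stands in for whatever API a real metadata platform exposes.

```python
from dataclasses import dataclass, field


@dataclass
class AssetMetadata:
    name: str
    description: str
    quality_flags: list[str] = field(default_factory=list)


class MetadataStore:
    """In-memory stand-in for a metadata layer that agents can read and write."""

    def __init__(self) -> None:
        self._assets: dict[str, AssetMetadata] = {}

    def upsert(self, asset: AssetMetadata) -> None:
        self._assets[asset.name] = asset

    def read(self, name: str) -> AssetMetadata:
        return self._assets[name]

    def flag(self, name: str, issue: str) -> None:
        # Write path: an agent records an observation for later readers.
        self._assets[name].quality_flags.append(issue)


store = MetadataStore()
store.upsert(
    AssetMetadata("recognized_revenue_q4", "Q4 revenue recognized under the current dbt model")
)

# Read path: the agent pulls current context before answering a question.
context = store.read("recognized_revenue_q4")

# Write path: the agent notices a problem and flags it back.
store.flag("recognized_revenue_q4", "agent: null rate above expected range")

# The next reader (human or agent) sees the flag alongside the definition.
print(store.read("recognized_revenue_q4").quality_flags)
```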

Governed context

Classification labels, access permissions, and compliance tags are embedded in the metadata itself. Agents that consume active metadata inherit governance automatically.
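One way to picture "inheriting governance" is a filter that only exposes columns whose classification tags are covered by the agent's clearance. The column tags, role names, and `visible_columns` helper below are invented for illustration.

```python
# Hypothetical classification tags carried in the metadata layer.
COLUMN_TAGS = {
    "customer_email": {"PII"},
    "order_total": set(),
    "card_number": {"PII", "PCI"},
}

# Hypothetical clearances: which tags each agent role may see.
ROLE_CLEARANCE = {
    "analytics_agent": set(),
    "support_agent": {"PII"},
}


def visible_columns(column_tags: dict[str, set[str]], clearance: set[str]) -> list[str]:
    """A column is visible only if every tag on it is covered by the clearance."""
    return sorted(col for col, tags in column_tags.items() if tags <= clearance)


for role, clearance in ROLE_CLEARANCE.items():
    print(role, visible_columns(COLUMN_TAGS, clearance))
```

Because the check reads tags from the metadata rather than from agent-specific rules, a newly applied classification restricts every consuming agent without any per-agent change.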

How active metadata makes AI agents reliable, current, and policy-aware. Source: Atlan.

Active metadata is one component of a broader enterprise context layer: the infrastructure stack that gives AI agents trusted, governed, real-time access to enterprise knowledge. AI-ready data programs run on active metadata infrastructure. Those still on static documentation are running on a snapshot that ages the moment someone updates a schema.

How to evaluate an active metadata platform

Not all metadata management tools truly activate metadata. Platforms that deliver these benefits share specific architectural components.

The foundation is a metadata lakehouse: a scalable repository that stores technical, operational, business, and collaboration metadata in both raw and processed forms. Modern platforms use open standards like Apache Iceberg so metadata stays accessible and interoperable, not locked in proprietary formats.

From there, bidirectional API connectivity is what makes metadata actually flow. Deep integrations with warehouses (Snowflake, Databricks, BigQuery), transformation tools (dbt), BI platforms (Looker, Tableau), and collaboration tools (Slack, Jira) create the continuous feedback loops that power automation. Connectors that only pull metadata on a schedule won’t cut it; they need to push context back out, embedding lineage, quality scores, and business definitions directly in the tools teams use.

Intelligent automation engines sit on top of that connectivity. Machine learning classifies columns, generates recommendations, and surfaces anomalies. The better platforms let you codify your own governance logic through rule-based playbooks rather than relying entirely on vendor defaults.

Two other capabilities separate strong platforms from weak ones: where metadata surfaces, and how observable the metadata layer itself is. Look for embedded collaboration interfaces that bring context into operational tools (BI, Slack, query editors) without requiring users to context-switch, and for observability features that monitor metadata completeness and alert when documentation is missing or automated processes fail.
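Observability of the metadata layer itself can start with something as simple as a completeness score. The sketch below is an assumption-laden example: the required fields, the asset records, and the helper names are hypothetical.

```python
REQUIRED_FIELDS = ("description", "owner")  # hypothetical documentation requirements


def is_complete(asset: dict) -> bool:
    return all(asset.get(field_name) for field_name in REQUIRED_FIELDS)


def completeness(assets: list[dict]) -> float:
    """Share of assets that have every required field filled in."""
    if not assets:
        return 1.0
    return sum(is_complete(a) for a in assets) / len(assets)


def missing_docs(assets: list[dict]) -> list[str]:
    """Names of assets that should trigger a 'documentation missing' alert."""
    return [a["name"] for a in assets if not is_complete(a)]


catalog = [
    {"name": "mart.revenue", "description": "Recognized revenue by day", "owner": "analytics"},
    {"name": "mart.customers", "description": "One row per customer", "owner": "analytics"},
    {"name": "stg.orders", "description": "", "owner": "data-eng"},
    {"name": "dash.finance", "description": "Finance KPIs", "owner": "finance-team"},
]
print(completeness(catalog))
print(missing_docs(catalog))
```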

Prioritize openness, automation depth, and proven integrations over feature checklists. The goal is a system that makes metadata flow, not another tool that aggregates it into a new silo.

How to get started with active metadata management

Active metadata works best when rolled out in focused, measurable phases.

- Start with high-impact problems. Pick one to three high-impact problems like faster impact analysis, better data discovery, or automated compliance. Choose areas where success is easy to measure.

- Audit your current metadata state. Assess what you already have across warehouses, orchestration tools, BI, and docs. This sets a realistic baseline.

- Evaluate platforms on integration depth. Look for native integrations, bidirectional metadata flow, and open standards to avoid lock-in.

- Automate metadata collection first. Connect core systems like your warehouse and BI tools. Enable automated lineage and usage tracking.

- Build targeted automations. Start small with workflows like PII tagging, policy enforcement, or unused asset cleanup.

- Embed metadata into team workflows. Surface context directly in Slack, BI tools, and query editors so teams see value without extra effort.

- Measure impact and expand. Track time saved, risk reduced, or costs optimized, then scale to new use cases.

Most teams see initial value within three to six months, with impact compounding as adoption grows.

Real stories from real customers

How did Tide achieve GDPR readiness with active metadata?

Tide, a UK digital bank serving nearly 500,000 small business customers, needed to strengthen GDPR compliance as they scaled rapidly. Their original process for identifying and tagging personally identifiable information would have required 50 days of manual effort (half a day per schema across 100 schemas), carrying high risk of human error and inconsistency.

After implementing Atlan, Tide’s data and legal teams collaborated to define personally identifiable information standards and documented them in Atlan as their source of truth. Using Atlan’s Playbooks feature, they implemented rule-based automations to identify, tag, and classify personal data across their entire data estate. What would have taken 50 days of manual work was accomplished in just 5 hours. The team now maintains continuous compliance monitoring and can respond to data subject requests with confidence.

"Okay, our source of truth for personal data is Atlan. We were blessed by Legal. Everyone, from now on, can start to understand personal data."

Michal Szymanski, Data Governance Manager

Tide

How does Nasdaq use active metadata to evangelize their data strategy?

Nasdaq uses Atlan’s active metadata capabilities to embed data context directly into business intelligence tools and collaboration platforms. By making metadata flow to where work happens, rather than requiring users to visit a separate catalog, they’ve accelerated data democratization and governance adoption across their global organization.

"Active metadata allows us to push context into every tool our teams use, from Tableau to Slack. That embedded collaboration drives adoption in ways a standalone catalog never could."

Data Platform Team

Nasdaq

How Atlan activates metadata

Atlan turns metadata into a shared, always-current operational layer across the data stack through a purpose-built architectural approach.

At the foundation sits a metadata lakehouse: a scalable, unified repository built on open standards (Apache Iceberg) that stores technical, operational, business, and social metadata in both raw and processed forms. This is not a proprietary silo; it is an open substrate that any connected system can read from or write to.

Bidirectional connectors sit between the lakehouse and every tool in your stack. Atlan maintains deep integrations with warehouses (Snowflake, Databricks, BigQuery), transformation layers (dbt), BI platforms (Looker, Tableau, Power BI), and collaboration tools (Slack, Jira). These connectors do not simply pull metadata on a schedule; they push context back out, embedding lineage, quality scores, and business definitions directly into the tools teams already use.

Event-driven propagation ties the system together. When a schema changes, an ownership record updates, or a quality threshold is breached, Atlan detects the event and propagates the downstream effects within minutes, not on the next nightly crawl. This keeps catalog entries aligned with reality.

An open API layer exposes all of this to AI agents and automated workflows. Agents can query the current state of any asset, write observations back to the metadata layer, and trigger governance actions programmatically. The result is metadata infrastructure that participates in agentic workflows rather than passively serving them.

FAQs about active metadata

What is active metadata and how does it differ from passive metadata?

Active metadata is a dynamic approach to metadata management where metadata is continuously collected, analyzed, and orchestrated to drive real-time insights, automation, and decisioning across data tools and workflows. Traditional metadata catalogs document data assets on a scheduled batch cycle; active metadata adds continuous analysis, alerts, recommendations, and workflow integrations so teams can act on metadata signals in near real time rather than discovering problems after failures occur.

What does implementation require?

Active metadata requires API-driven integrations with your data stack, typically your data warehouse (Snowflake, Databricks, BigQuery), transformation layer (dbt), BI platform, and orchestration tools. Most modern platforms offer pre-built connectors for common tools. You will also need an architecture that supports bidirectional metadata flow and real-time updates rather than batch-only processing. Initial implementation typically takes four to eight weeks for core integrations and first use cases, with full value realization over three to six months as teams adopt the platform and additional automations are built.

What is the business case for active metadata?

Organizations see ROI through faster root cause analysis (50–70% improvement), lower storage and compute costs (15–30% reduction from eliminating unused assets), and faster compliance processes (40–50% time savings). Gartner (2023) reports active metadata can reduce time to deliver new data assets by up to 70%. For AI initiatives specifically, active metadata documents data lineage for model training datasets, enforces access policies on governed data, and creates the semantic context that reduces hallucinations in agentic workflows, directly linking metadata infrastructure to AI reliability outcomes.

Does Atlan auto-detect PII or data issues?

Atlan propagates tags via hierarchy and lineage and can automate enrichment with workflows, but it does not auto-detect PII by itself; organizations typically connect data-quality tools and use Atlan’s AI-suggested rules and automations to operationalize quality and governance signals.

What happens to AI agents when active metadata is absent?

Without active metadata, agents encounter one of three failure patterns at production scale. Schema changes propagate without context updates, so agents query deprecated columns and return confidently wrong answers. Model updates modify the meaning of a metric without notifying consuming agents, so outputs are mathematically correct but business-wrong. Access policy changes in one system never reach agents querying governed data in another. Each failure is invisible until a stakeholder flags a wrong number or an audit surfaces a compliance gap, and the root cause is always the same: the metadata layer was not active.

How often does active metadata update?

Active metadata updates continuously, not on a scheduled batch cycle. When a schema changes, an ownership record is modified, or a data quality threshold is breached, the metadata layer reflects it within minutes across every connected system. The update frequency is event-driven, not time-driven, which is what distinguishes active metadata from traditional catalog crawlers that run nightly or weekly.

Active metadata is the prerequisite for AI that doesn’t hallucinate

AI that hallucinates in production almost always has a metadata problem underneath it: a stale definition, an undocumented column, an access policy that never propagated. Active metadata closes those gaps at the infrastructure level. Organizations that treat metadata as an operational signal rather than static documentation give their AI agents, governance programs, and data teams the accurate, current context they need to produce reliable outcomes. The alternative is catching failures after they reach a stakeholder dashboard.