Healthcare produces more decision-relevant data per person than almost any other domain. Every encounter, lab result, prescription, claim, and social determinant is a potential AI input. But the richest data for AI is also the most regulated. Resolving that compliance paradox is an architectural challenge — one that separates organizations that move from pilot to production from those that stay in perpetual evaluation mode.

Why is healthcare AI’s most compelling and most constrained domain?

McKinsey points out that AI agents can be thought of as virtual workers that can operate independently “once they’re given a specific goal, details on what tasks to perform and how, additional contexts to consider, guardrails to work within, and existing online tools to help them implement tasks.”

Top use cases for AI agents in healthcare include:

- Predicting readmissions

- Closing care gaps

- Speeding up prior authorization

- Reducing documentation burden

- Reconciling medications across care settings

The opportunity is not hypothetical. In a 2021 article, McKinsey noted that administrative spending accounted for a quarter of the $4 trillion spent on healthcare annually in the United States.

According to JAMA (Journal of the American Medical Association) Network, healthcare organizations can save $210 billion annually by tackling manual, inefficient workflows. These include patient admission and discharge planning, nonstandardized submission processes for prior authorization forms, and disconnected tools and systems.

“A multiagent system could collaborate with call center representatives at providers and payers by retrieving answers and completing tasks like scheduling and sending documents.”

This is already happening in production. Microsoft introduced Dragon Copilot for nurses at Microsoft Ignite 2025, an ambient clinical assistant that captures and generates documentation from clinician-patient encounters.

The benefits are numerous, but so are the constraints — the biggest being data privacy compliance.

HIPAA’s Privacy Rule governs every disclosure and use of PHI. The 21st Century Cures Act mandates interoperability while prohibiting information blocking. State laws in California, Texas, and New York layer additional controls on top.

This creates the compliance paradox:

- The richest data for AI is clinical data: Diagnosis history, lab trends, social risk scores, care plan details.

- That same data is the most restricted: Governed at field, role, and use-case level by overlapping regulatory frameworks.

- Most AI pipeline architectures were not designed with HIPAA in mind: Chunk, embed, retrieve, and generate patterns were built for enterprise search, not PHI governance.

Healthcare organizations must treat compliance as an architectural requirement from day one, and embed it within the context fed to AI agents.

How do AI agents work in healthcare and where is PHI exposed?

Healthcare AI agents follow the same perceive-reason-act loop as enterprise agents in other domains. Each step carries compliance obligations that general architectures ignore:

- Perceive: The agent begins by perceiving context from connected systems (EMR, CRM, payer feed, etc.). Each retrieval decision is a potential HIPAA exposure if access controls are not enforced at the retrieval layer.

- Reason: The agent then reasons over retrieved data. This is where hallucination risk becomes consequential: an agent reasoning over stale data (a care plan updated 18 hours ago that the agent has not seen) produces potentially harmful recommendations.

- Act: The agent acts by triggering a downstream workflow, such as flagging a patient for outreach or surfacing a coding suggestion. HIPAA’s Security Rule requires audit controls over every PHI access event, including records of who accessed what, when, and for what purpose.

Traditional data governance addressed one attack surface: restrict who can query which tables. Today, PHI is exposed at three distinct layers that classical governance does not address.

PHI attack surface 1: Training data

When fine-tuning a model on clinical notes, PHI can become embedded in model weights. LLMs can reproduce verbatim text from training data under adversarial prompting. A model fine-tuned on an EHR corpus without rigorous de-identification is a latent PHI liability, regardless of access controls on the source tables.

PHI attack surface 2: Retrieval windows

In RAG-based clinical agents, the retrieval step surfaces PHI into the model’s context window. HIPAA’s minimum necessary standard (45 CFR 164.502(b)) requires that PHI access be limited to what is needed for a specific task. An agent scheduling a follow-up appointment might pull the full patient chart when all it needs is the patient’s name, provider, and preferred time slot. Row-level security does not solve this: the agent retrieves far more than the task requires.
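As a minimal sketch of task-scoped retrieval, the snippet below projects a patient record down to the fields a given task is allowed to receive. The task name, the field names, and the `TASK_FIELDS` allowlist are hypothetical illustrations; they are not drawn from HIPAA or from any specific product.

```python
# Hypothetical allowlist: which fields each task may receive.
TASK_FIELDS = {
    "schedule_follow_up": {"patient_name", "provider", "preferred_time_slot"},
}


def minimum_necessary(record: dict, task: str) -> dict:
    """Return only the fields allowlisted for the task; unknown tasks get nothing."""
    allowed = TASK_FIELDS.get(task, set())
    return {name: value for name, value in record.items() if name in allowed}


# Illustrative chart with made-up values.
chart = {
    "patient_name": "Jane Doe",
    "provider": "Dr. Lee",
    "preferred_time_slot": "Tue 09:00",
    "diagnosis_history": ["E11.9"],
    "lab_trends": {"hba1c": [7.1, 7.4, 7.9]},
}

# The scheduling agent sees three fields, not the full chart.
scheduling_context = minimum_necessary(chart, "schedule_follow_up")
```

Defaulting unknown tasks to an empty result is a deliberate choice in this sketch: a task that has not been reviewed receives no PHI rather than everything.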

PHI attack surface 3: Model output

Even when retrieval is controlled, LLMs can reproduce PHI in generated responses when those facts appear in the context window. A model summarizing highest-risk patients may surface individually identifiable information, even when the requesting coordinator is authorized only for de-identified population-level views.

Healthcare AI governance must address all three attack surfaces by enforcing policies at the context delivery layer. PHI sensitivity tags propagate downstream via lineage automatically, so access restrictions travel to every agent pipeline querying that field without being reimplemented per agent.

The vector database erasure problem

Many RAG-based agent architectures embed patient data in vector databases for semantic retrieval. This creates a GDPR Article 17 and HIPAA right-of-access challenge: when a patient requests deletion or data access, the organization must identify and act on every record, including records encoded as numerical representations in vector indices.

Standard vector databases do not support targeted deletion without a complete provenance map. Maintaining that map requires treating vector indices as governed data assets, with every embedding build logged back to the source PHI record.
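One way to picture such a provenance map is a small in-memory index from source record IDs to the vector IDs built from them. This is a sketch under stated assumptions: the `EmbeddingProvenance` class, its method names, and the ID formats are hypothetical, and a real deployment would persist the map and call the vector store's own delete operation with the returned IDs.

```python
from collections import defaultdict


class EmbeddingProvenance:
    """Maps source record IDs to the vector IDs built from them (illustrative only)."""

    def __init__(self) -> None:
        self._by_source: dict[str, set[str]] = defaultdict(set)

    def log_build(self, source_record_id: str, vector_id: str) -> None:
        """Record that a vector was built from a source PHI record."""
        self._by_source[source_record_id].add(vector_id)

    def vectors_for(self, source_record_id: str) -> set[str]:
        """Vectors that an access or deletion request for this record must cover."""
        return set(self._by_source.get(source_record_id, set()))

    def forget(self, source_record_id: str) -> set[str]:
        """Drop the mapping and return the vector IDs to delete from the index."""
        return self._by_source.pop(source_record_id, set())


provenance = EmbeddingProvenance()
provenance.log_build("patient-123/note-1", "vec-a")
provenance.log_build("patient-123/note-1", "vec-b")
provenance.log_build("patient-456/note-9", "vec-c")

# A deletion request for one source record yields exactly its vectors.
to_delete = provenance.forget("patient-123/note-1")
```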

The explainability problem causing a trust gap

Consider two versions of a readmission risk alert:

Version A: “This patient has a readmission risk of 73%.”

Version B: “Readmission risk flagged at 73%, based on: HbA1c trending upward across three encounters, missed follow-up within 60 days, and social risk score of 4 for transportation barriers, classified using Hopkins criteria for diabetic complication risk.”

Version B is the only version a clinician will use to change a care decision. Without the reasoning chain, the recommendation cannot be trusted or acted upon.

An enterprise AI operating layer improves explainability by linking every agent recommendation to the clinical data products, definitions, and policies that produced it, so care coordinators can interrogate the full reasoning chain.

What does a governed AI agent architecture look like? 4 fundamental layers to implement.

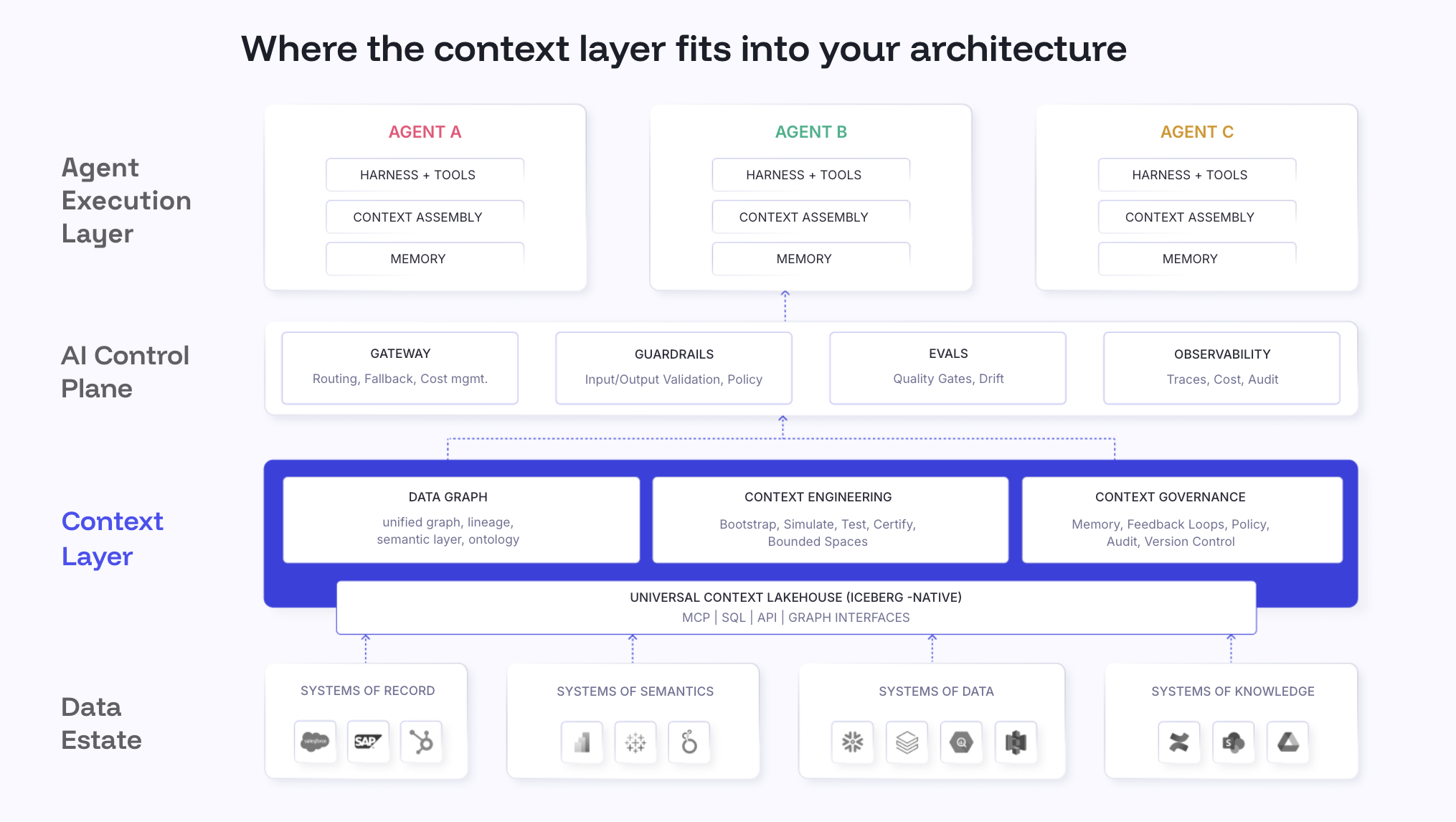

The architecture that moves healthcare AI agents from pilot to production has four layers. Each layer resolves one or more of the three PHI attack surfaces described above.

Layer 1: PHI classification and sensitivity propagation

Before any data enters an AI pipeline, every field that constitutes PHI must be identified and tagged. This requires automated classification that propagates downstream through cross-system, column-level lineage.

When a new column is added to the EMR feed, it needs to be evaluated and tagged before any agent can query it. When a field is tagged as PHI, access restrictions travel automatically to every downstream agent pipeline querying that field via lineage propagation — without manual re-tagging at each integration point.
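Tag propagation over lineage can be sketched as a breadth-first walk of a column-level lineage graph. The `LINEAGE` mapping and the column names below are invented for illustration; a real lineage graph would come from the metadata platform rather than a hard-coded dictionary.

```python
from collections import deque

# Hypothetical column-level lineage: upstream column -> downstream columns.
LINEAGE = {
    "emr.patients.ssn": ["warehouse.dim_patient.ssn"],
    "warehouse.dim_patient.ssn": ["mart.outreach.patient_key"],
    "emr.visits.room_number": ["warehouse.fact_visit.room_number"],
}


def propagate_tag(source_column: str, lineage: dict[str, list[str]]) -> set[str]:
    """Return the source column plus every column reachable downstream of it."""
    tagged = {source_column}
    queue = deque([source_column])
    while queue:
        column = queue.popleft()
        for downstream in lineage.get(column, []):
            if downstream not in tagged:  # guard against cycles and repeats
                tagged.add(downstream)
                queue.append(downstream)
    return tagged


# Tagging one EMR column as PHI marks everything derived from it.
phi_columns = propagate_tag("emr.patients.ssn", LINEAGE)
```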

Layer 2: Minimum necessary enforcement at the context delivery layer

The minimum necessary standard cannot be enforced inside each individual agent. That approach requires reimplementing access logic for every use case, role, and data source. A centralized context delivery layer evaluates role, use case, and consent before deciding what PHI the agent receives.

Atlan’s MCP server operates as this context delivery layer. Care coordinators receive de-identified cohort views by default. Individual PHI is surfaced only when the access policy permits it for that specific role and task combination.
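The decision logic described above, de-identified by default with individual PHI only for a permitted role and task, might look roughly like the following. This is not Atlan's MCP server API; `AccessRequest`, `INDIVIDUAL_PHI_ALLOWED`, `resolve_view`, and the role and use-case names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    role: str
    use_case: str
    patient_consented: bool


# Hypothetical policy: (role, use case) pairs allowed to see individual PHI.
INDIVIDUAL_PHI_ALLOWED = {("care_coordinator", "patient_outreach")}


def resolve_view(request: AccessRequest) -> str:
    """Default to a de-identified cohort view; escalate only if policy and consent allow."""
    permitted = (request.role, request.use_case) in INDIVIDUAL_PHI_ALLOWED
    if permitted and request.patient_consented:
        return "individual_phi"
    return "deidentified_cohort"


# Same role, different task: only the outreach task receives individual PHI.
population_view = resolve_view(AccessRequest("care_coordinator", "population_report", True))
outreach_view = resolve_view(AccessRequest("care_coordinator", "patient_outreach", True))
```

Keeping this check in one place means a new agent inherits the policy instead of reimplementing it.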

Layer 3: Data freshness thresholds

Clinical data changes continuously. A care plan updated this morning invalidates a care gap recommendation generated last night. Agents that act on outdated clinical state can generate outreach for patients who have already received the recommended intervention, or produce risk stratification based on test results that have since been superseded.

Each clinical data source must carry a last-updated timestamp that agents check before retrieval. For time-sensitive workflows such as care gap closure and medication reconciliation, sources that fall outside the recency threshold get flagged before recommendations are generated.
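A recency check of this kind can be sketched in a few lines. The workflow names and threshold values in `FRESHNESS_THRESHOLDS` are assumptions chosen for illustration, not recommended clinical settings.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical recency thresholds per workflow.
FRESHNESS_THRESHOLDS = {
    "care_gap_closure": timedelta(hours=12),
    "medication_reconciliation": timedelta(hours=1),
}


def is_fresh(last_updated: datetime, workflow: str, now: datetime) -> bool:
    """True if the source was updated within the workflow's recency threshold."""
    return now - last_updated <= FRESHNESS_THRESHOLDS[workflow]


# Fixed clock so the example is reproducible.
now = datetime(2026, 1, 15, 12, 0, tzinfo=timezone.utc)
care_plan_updated = now - timedelta(hours=18)

# An 18-hour-old source exceeds the 12-hour care gap threshold and gets flagged.
stale_for_care_gaps = not is_fresh(care_plan_updated, "care_gap_closure", now)
```

Passing `now` in explicitly, instead of reading the clock inside the function, keeps the check easy to test.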

Layer 4: Decision traces for clinical audit and adoption

Every agent action must be linked to the clinical data products, definitions, and policies that produced it, a practice known as decision tracing. A context layer like Atlan links every agent recommendation back to the governed context graph that produced it, so care coordinators can act on recommendations they can interrogate. For a deeper look at how this architecture connects EMR, claims, CRM, and population health data into a governed context graph, see Context Layer for Healthcare AI.
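To make the idea of a decision trace concrete, here is one possible shape for a trace record, serialized to JSON for an audit log. The `DecisionTrace` fields and every identifier in the example (data product names, the definition name, the policy name) are hypothetical and do not reflect any product's actual schema.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class DecisionTrace:
    recommendation: str
    data_products: list[str]
    definitions: list[str]
    policies: list[str]
    evidence: list[str] = field(default_factory=list)


# All identifiers below are made up for illustration.
trace = DecisionTrace(
    recommendation="Readmission risk flagged at 73%",
    data_products=["emr.lab_results", "population_health.social_risk"],
    definitions=["readmission_risk_v2"],
    policies=["care_coordinator.patient_outreach"],
    evidence=[
        "HbA1c trending upward across three encounters",
        "Missed follow-up within 60 days",
    ],
)

# Serialize the trace so it can be stored alongside the recommendation.
trace_json = json.dumps(asdict(trace), indent=2)
```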

How Atlan supports healthcare AI agents in production

Atlan operates as the governed context layer between clinical data systems and the AI agents querying them.

Enterprise data graph: The clinical knowledge foundation

The Enterprise Data Graph is a unified, traversable map of every clinical data asset across the health system: EMR tables, claims fields, population health metrics, lab result schemas, care plan definitions, and the lineage connecting them.

It handles automated PHI propagation, relationship traversal, and decision traceability to build a foundation that makes minimum necessary enforcement possible at scale.

Context Lakehouse: Open, queryable, and time-travel compliant

The Context Lakehouse stores all clinical metadata in Apache Iceberg format, giving it properties that directly address healthcare compliance requirements: time travel, point-in-time retrieval, vector-native search, and an open-by-design setup.

For healthcare organizations managing GDPR deletion requests against vector indices, the Lakehouse’s provenance model tracks which source records contributed to which embeddings.

Context Engineering Studio: Build, test, and validate clinical context before agents reach production

The Context Engineering Studio is the workspace where clinical informatics teams define, test, and continuously refine the governed context for AI agents in healthcare. It provides context repos, automated evals, domain-scoped resolution, and other capabilities that help close the trust gap and improve the enterprise context layer over time.

MCP server: Minimum necessary enforcement at the delivery point

Atlan’s MCP server is the protocol layer through which any MCP-compatible agent accesses governed clinical context. It is where HIPAA’s minimum necessary standard is enforced in practice.

Before any context is delivered to an agent, the MCP server checks three things in a single call:

- What this asset means in the clinical ontology

- Whether it is trusted and current against the configured freshness threshold for this workflow

- Which policies apply to the requesting role and use case

This MCP server works with any agent framework — clinical agents built on custom infrastructure, general-purpose models like Claude or GPT-4, and platform agents like Snowflake Cortex.

AI governance: Closing the loop between data governance and agent governance

Atlan’s AI governance auto-discovers every AI agent deployed across the health system and maintains auditable records of what each agent queried, what it changed, and under what policy it acted.

With the AI asset registry, you get a governed, queryable inventory of every model, agent, prompt, and workflow in production, with lineage connecting each one to the clinical data products it consumes and the decisions it drives. Each registry entry carries PHI classifications, the access policies in effect at each interaction, and a full decision audit trail.

Moving forward with AI agents in healthcare

Successful AI agent deployments in healthcare require governance infrastructure from the beginning, treating PHI policy, data freshness, and decision traceability as first-class architecture decisions.

Start with operational agents — revenue cycle, coding, prior authorization — where compliance approvals move faster and ROI is measurable.

Next, build governance infrastructure: PHI classification, access controls at the context layer, freshness thresholds, and audit logs. Use that governance baseline to enable clinical agents: diagnostic support, care plan assistance, and risk stratification.

The compliance paradox resolves architecturally. The richest data for AI and the most restricted data are the same data. Organizations that build the context layer before the agent are the ones that cross from pilot to production.

FAQs about AI agents in healthcare

1. What is an AI agent in healthcare?

An AI agent in healthcare is an autonomous or semi-autonomous software system that perceives data from clinical and administrative systems, reasons over that data, and takes action, such as drafting a clinical note, flagging a care gap, or submitting a prior authorization. Unlike chatbots that respond to single prompts, healthcare AI agents execute multi-step workflows across connected systems including EMRs, claims platforms, and population health databases.

2. Are AI agents in healthcare HIPAA compliant?

They can be, but compliance is not automatic. HIPAA applies to any system that accesses, uses, or discloses PHI, including AI agents. Key obligations include minimum necessary access controls, audit trail logging of every PHI access event, and business associate agreements with every AI vendor handling PHI. Existing Privacy and Security Rule requirements apply fully to agentic systems.

3. What is the minimum necessary standard and why does it matter for AI agents?

The minimum necessary standard (45 CFR §164.502(b)) requires PHI access to be limited to what is needed for a specific task. An agent scheduling a follow-up appointment should not retrieve the full patient clinical history. Most AI agents are designed to retrieve broad context for better performance, which creates direct tension with this requirement. Enforcing minimum necessary at a centralized context delivery layer is the most practical architectural approach at scale.

4. Why is explainability so important for clinical AI agents specifically?

Clinicians are accountable for every care decision they make. An AI recommendation without a traceable reasoning chain cannot be verified, challenged, or incorporated into clinical judgment. Explainability in healthcare AI is an adoption requirement: the reasoning chain (which data sources were used, how current they were, and what clinical framework was applied) must be surfaced at the point of recommendation.

5. What is the vector database erasure problem in healthcare AI?

When patient data is embedded in vector databases for RAG-based agents, PHI is encoded as numerical representations. When a patient requests deletion under GDPR Article 17 or access under HIPAA, the organization must identify and act on every record, including those embedded in vector indices. Standard vector databases do not support targeted deletion without a complete provenance map of which source records contributed to which embeddings.

6. What is the difference between operational and clinical AI agents in healthcare?

Operational agents handle administrative workflows such as billing, coding, prior authorization, and scheduling, where errors affect efficiency and revenue but not direct patient outcomes. Clinical agents handle or inform care decisions such as diagnostic support, medication reconciliation, and risk stratification, where errors can affect patient safety. Most healthcare organizations deploy operational agents first, then use that governance foundation to enable clinical agents.

Sources

1. AI Agents in Healthcare, McKinsey

2. Administrative Simplification, McKinsey

3. Healthcare Workflow Savings, JAMA

4. Dragon Copilot for Nurses, Microsoft

5. HIPAA Privacy Rule, HHS

6. 21st Century Cures Act, HealthIT.gov

7. HIPAA Security Rule, HHS

8. Minimum Necessary Standard, HHS

9. Right to Erasure, GDPR

10. Right of Access, HIPAA