What happens when five coordinated AI agents make hundreds of decisions per hour against live data, and the governance committee designed to approve them meets once a month? That mismatch is where most enterprise AI governance programs break down. Only 14% of enterprises enforce AI governance enterprise-wide (ModelOp, 2025).

In 2023, governance committees at large enterprises began running into the same problem. A use case intake form would arrive. A cross-functional review would be scheduled. Sign-off would be issued weeks later. The process had worked well enough when each AI deployment was a discrete, human-supervised model. Then agentic AI entered production. A single orchestration layer running five coordinated agents makes hundreds of decisions per hour against live data. No committee designed for one approval per model could govern that. The old operating model did not need an update. It needed a replacement.

| Quick Facts | |
|---|---|
| What it is | A structured operating model that converts AI governance policy into automated technical controls, with defined roles, decision rights, and enforcement mechanisms |
| Key components | Decision rights, RACI accountability, escalation paths, enforcement mechanisms, review cadence |
| Who needs it | CAIOs, CDOs, AI Platform Teams at enterprises with 3+ AI systems in production or approaching regulatory compliance requirements |
| Infrastructure requirement | Active metadata layer, lineage tracking, access controls at inference time, policy propagation across all data platforms |
| Maturity signal | Only 14% of enterprises enforce AI governance enterprise-wide (ModelOp, 2025) |
| Related standards | EU AI Act, NIST AI RMF, ISO/IEC 42001 |

What is an AI governance operating model and how does it differ from a framework?

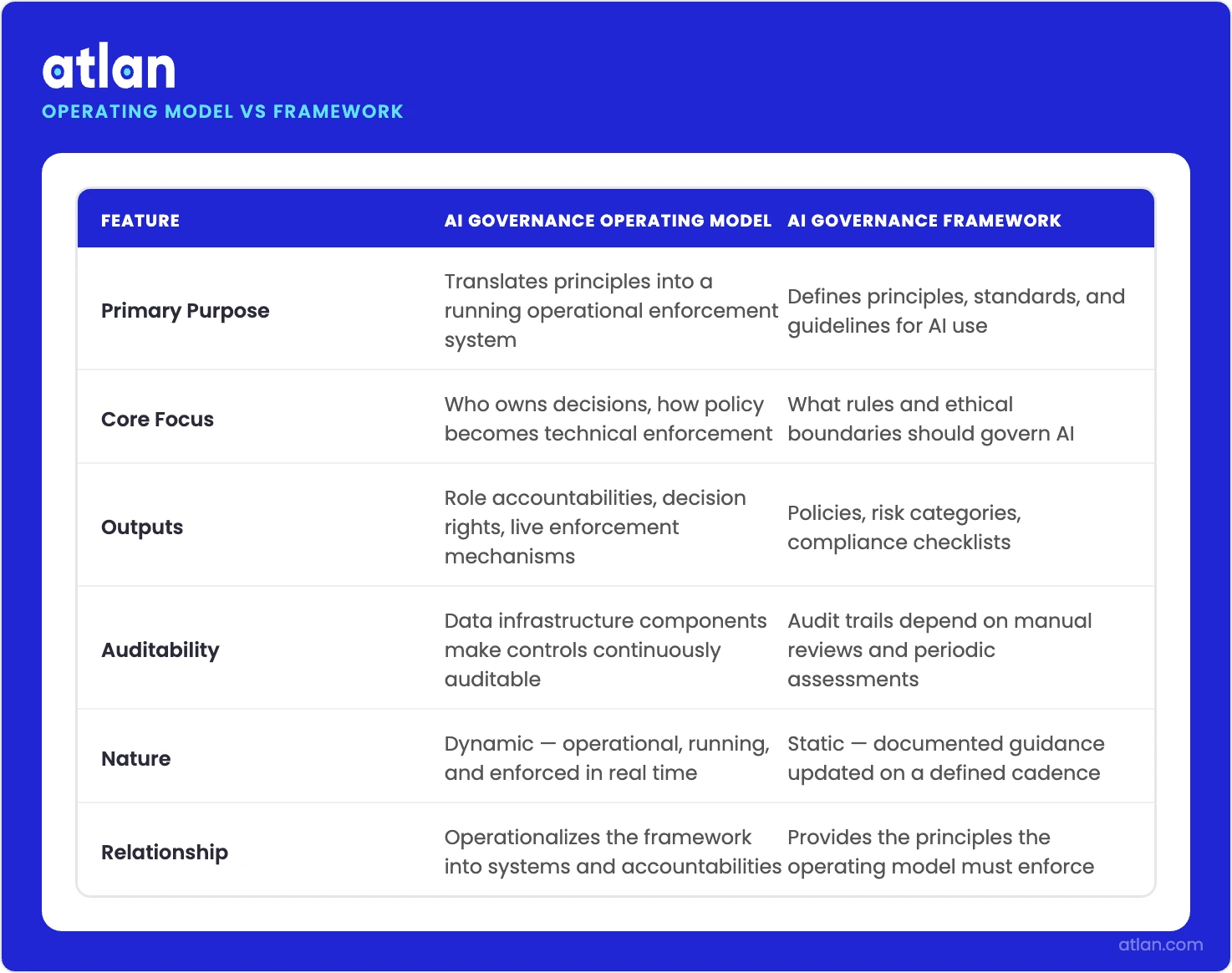

An AI governance operating model is the organizational and technical machinery that makes a governance framework operational. Where a framework defines what AI governance should achieve, including principles, standards, and regulatory requirements, the operating model defines who does what, who decides what, and how policy becomes a running technical constraint on AI system behavior.

Concretely, it comprises the organizational structure, role accountabilities, decision rights, and enforcement mechanisms that translate AI governance principles into a running operational system. It specifies who approves AI use cases, who monitors model behavior, who owns the data controls that enforce policy, and how governance incidents are escalated and resolved.

An operating model has five structural components, each covered in detail later in this article: decision rights that specify who decides what; a RACI accountability matrix; escalation paths for policy violations; enforcement mechanisms embedded in the data infrastructure; and a review cadence that matches AI deployment velocity. These five components are what convert a governance framework from policy to practice.

An AI governance framework documents the principles, regulatory standards, and prohibited use cases that govern AI system behavior. Standards like NIST AI RMF, EU AI Act, and ISO 42001 provide the regulatory scaffolding a framework translates into organizational policy. What a framework does not prescribe is who enforces those principles, through what technical mechanisms, or at what cadence. That is the operating model’s job.

Organizations adopt governance frameworks in response to regulatory pressure or board mandates. A framework can be documented in weeks: assemble a cross-functional team, reference NIST AI RMF or EU AI Act requirements, publish a policy document. An operating model requires organizational design work that frameworks do not prescribe: role definition, RACI construction, data infrastructure integration. McKinsey found fewer than one-third of organizations have begun scaling AI across the enterprise, which means most have documented policy that is not operationally enforced. That gap is the operating model problem.

How an operating model differs from a framework in AI governance. Source: Atlan.

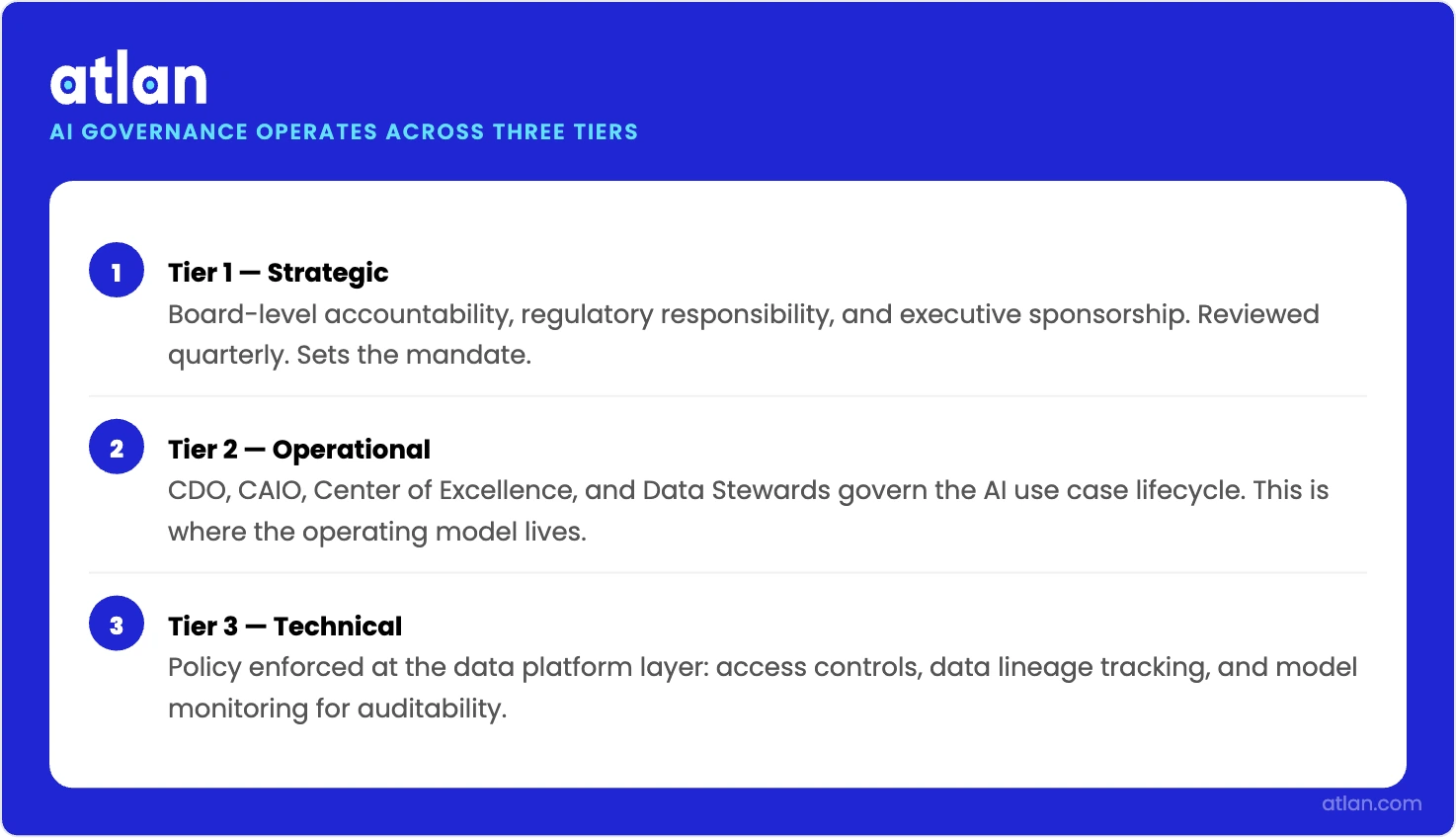

The three tiers of an AI governance operating model

AI governance operates across three tiers. Tier 1 (strategic) establishes board-level accountability, regulatory responsibility, and executive sponsorship, reviewed quarterly. Tier 2 (operational) is where the operating model lives: CDO, CAIO, CoE, and Data Stewards govern the AI use case lifecycle. Tier 3 (technical) enforces policy at the data platform level: access controls, lineage, and model monitoring.

| Tier | Primary Roles | Primary Accountability | Cadence |
|---|---|---|---|
| Tier 1: Strategic | Board, C-Suite, CRO | AI risk oversight, regulatory accountability | Quarterly |
| Tier 2: Operational | CDO, CAIO, CoE, Data Stewards | AI use case lifecycle governance | Monthly |
| Tier 3: Technical | AI Platform Team, data platform | Access controls, lineage, model monitoring | Continuous |

The board and executive leadership own AI risk oversight at the strategic tier. This includes regulatory accountability under EU AI Act requirements for high-risk AI systems, executive sponsorship for the governance program, and risk tolerance thresholds the operational tier enforces. The CDO and CAIO report upward into this tier on governance posture and regulatory compliance status. EU AI Act enforcement for high-risk AI systems begins August 2026, which has moved this tier’s review cadence from annual to quarterly for most large enterprises.

Tier 2 is where the operating model is built, maintained, and executed. The CDO and CAIO split accountability at a specific boundary: the CDO owns the data layer (lineage, access controls, metadata policy), and the CAIO owns the AI use case lifecycle (intake, approval, monitoring, decommission). A GenAI Center of Excellence operating at this tier coordinates cross-functional reviews, manages the governance calendar, and arbitrates disputes at the CDO/CAIO boundary. The most common source of governance dysfunction at this tier is an undefined CDO/CAIO boundary that creates ownership gaps at the enforcement intersection. 44% of enterprises say their AI governance process is too slow (ModelOp, 2025). The operational tier is where that bottleneck lives.

At Tier 3, the AI Platform Team owns the enforcement work: data access controls that govern what AI models and agents can query, lineage tracking across training and inference cycles, metadata tagging that classifies assets with governance-relevant attributes, and model monitoring for drift and policy violations in production. Most operating model content treats this tier as an afterthought. That is a mistake. Tiers 1 and 2 are aspirational without a functioning Tier 3 because the enforcement backbone is the data platform, not the governance committee. See the data governance operating model for the data layer that makes Tier 3 operational.

From board accountability to technical enforcement: how AI governance is structured and owned. Source: Atlan.

The five structural components of an AI governance operating model

An operating model that is missing any one of its five structural components will fail under agentic AI conditions, not because the policy is wrong, but because the operational or technical mechanism to enforce it is absent. 58% of enterprises cite fragmented systems as a top obstacle to AI governance adoption (ModelOp, 2025). Fragmentation is usually a structural component failure, not a policy failure.

How do decision rights prevent AI governance dysfunction?

Decision rights specify which governance decisions are centralized (CDO/CAIO authority), which are delegated (business unit AI leads), and which require cross-functional sign-off from Legal, Compliance, and the Chief Risk Officer. A decision rights map prevents governance dysfunction by establishing ownership before a conflict arises. Without defined decision rights, the RACI becomes ambiguous: accountability rows in a RACI matrix are only as meaningful as the underlying authority to act. For model governance specifically, the model lifecycle provides the clearest ownership boundaries: intake through decommission, with the CDO/CAIO split at the deployment approval gate.

How does the RACI accountability matrix assign ownership?

The RACI maps every governance activity across five roles: CDO, CAIO, Data Steward, AI Platform Team, and Business Owner. Each governance activity has exactly one Accountable role (with one documented exception for the operating model’s own review), the person answerable when governance breaks down, and one or more Responsible roles who execute the work. Data Stewards function as operational enforcers in this model, not consultants. The RACI is a governance artifact: it should be documented, versioned, and reviewed on the same cadence as the operating model itself. The full AI governance RACI for these five roles is in the section below.

What do escalation paths govern in an AI operating model?

When a model produces output outside policy bounds, or when an agent accesses a restricted data asset, the escalation path defines what happens next. A three-level escalation works for most enterprises: automated flag at the data layer, Data Steward review within 24 hours, CDO/CAIO decision on halt or remediation. Under agentic AI conditions, the first level must be automated. A policy violation triggers an access halt or output block, not a manual ticket. Human review enters at Level 2, after automated controls have already acted.
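The three-level path described above can be sketched as a small state machine. This is a minimal illustration, not a reference implementation: the names `Level`, `Violation`, `handle_violation`, and `escalate` are hypothetical, and the rule that an unresolved steward review moves to Level 3 is an assumption about how the handoff might be triggered.

```python
from dataclasses import dataclass
from enum import Enum


class Level(Enum):
    AUTOMATED_BLOCK = 1      # data layer halts access or blocks output
    STEWARD_REVIEW = 2       # Data Steward review within 24 hours
    EXECUTIVE_DECISION = 3   # CDO/CAIO decide on halt or remediation


@dataclass
class Violation:
    agent_id: str
    asset: str
    blocked: bool = False
    level: Level = Level.AUTOMATED_BLOCK


def handle_violation(agent_id: str, asset: str) -> Violation:
    """Level 1: block first, then hand the incident to human review."""
    violation = Violation(agent_id=agent_id, asset=asset)
    violation.blocked = True                # automated control acts before any human
    violation.level = Level.STEWARD_REVIEW  # human review starts after the block
    return violation


def escalate(violation: Violation, steward_resolved: bool) -> Violation:
    """Level 2 to Level 3 when the steward cannot resolve the incident (assumed rule)."""
    if not steward_resolved:
        violation.level = Level.EXECUTIVE_DECISION
    return violation
```

The design point is the ordering: the block happens inside `handle_violation` before any reviewer is involved, so human latency never determines whether the restricted access succeeds.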

How do enforcement mechanisms convert policy into automated controls?

Enforcement is not a process. It is a technical capability embedded in the data infrastructure: access controls, metadata policies, and lineage monitoring. A governance policy that is documented but not reflected in a data access control is a suggestion, not a constraint. The data layer section below covers this in full. See data governance for AI for the data layer requirements that underpin enforcement. Refer also to AI governance platforms and AI governance tools for the tooling layer.

Why does governance require a structured review cadence?

A governance program running quarterly reviews on monthly AI deployments is structurally outdated before the first agentic workload enters production. The working model for most enterprises: continuous monitoring at the Tier 3 data layer (automated, real-time), monthly operational review by the CoE (use cases, policy updates, escalation retrospectives), and quarterly strategic review by the board and C-suite (risk posture, regulatory alignment, operating model health). As deployment velocity increases under agentic AI conditions, the continuous layer absorbs more of the governance workload, reducing the volume hitting the monthly and quarterly review cycles.

AI governance RACI: who owns what across CDO, CAIO, and AI platform teams

In an AI governance RACI, the CAIO holds accountability for the AI use case lifecycle: intake, approval, monitoring, and decommission. The CDO holds accountability for the data layer: access controls, lineage, and metadata policy. Data Stewards are responsible for day-to-day enforcement. The AI Platform Team owns technical enforcement controls. Business Owners hold responsibility for use case approval.

How to read the AI governance RACI

R (Responsible) = does the work. A (Accountable) = answerable when governance fails. C (Consulted) = provides input before the decision. I (Informed) = notified after the decision. Every row must have exactly one A, except Row 10, where CDO and CAIO share accountability for the operating model’s own governance review. This is a documented design decision: both roles must co-own the operating model’s evolution, so dual-A on that row is intentional, not an error. Business Owner holds R on use case approval. Governance does not own the business case; the business does.

| Governance activity | CDO | CAIO | Data Steward | AI Platform Team | Business Owner |
|---|---|---|---|---|---|
| Define acceptable AI use policies | A | C | C | I | R |
| Maintain business glossary and semantic definitions | R | I | A | C | I |
| Approve AI use cases for production deployment | C | A | I | R | I |
| Enforce data access policies for agent pipelines | A | I | R | R | I |
| Monitor model outputs for drift and accuracy | I | A | C | R | I |
| Audit agent decisions for compliance | A | C | R | I | I |
| Respond to governance incidents | A | R | C | R | I |
| Manage model lifecycle (versioning, retirement) | I | A | I | R | C |
| Enforce EU AI Act / NIST RMF controls | A | C | R | R | I |
| Conduct governance model review and update | A/C | A/C | R | I | C |

Row 10 note: CDO and CAIO intentionally share accountability for governance model review. Both roles must co-own the operating model’s evolution.
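Because the RACI is meant to be a versioned artifact, its structural rules can be checked mechanically. The sketch below transcribes the matrix as data and verifies the rule that every row has exactly one A, with the shared-accountability row as the only exception. The names `ROLES`, `RACI`, `SHARED_A_ALLOWED`, and `validate` are hypothetical, and the additional check that every row has at least one R is an assumption drawn from the RACI description earlier in the article.

```python
ROLES = ["CDO", "CAIO", "Data Steward", "AI Platform Team", "Business Owner"]

# Cell values transcribed from the table, in ROLES order.
RACI = {
    "Define acceptable AI use policies": ["A", "C", "C", "I", "R"],
    "Maintain business glossary and semantic definitions": ["R", "I", "A", "C", "I"],
    "Approve AI use cases for production deployment": ["C", "A", "I", "R", "I"],
    "Enforce data access policies for agent pipelines": ["A", "I", "R", "R", "I"],
    "Monitor model outputs for drift and accuracy": ["I", "A", "C", "R", "I"],
    "Audit agent decisions for compliance": ["A", "C", "R", "I", "I"],
    "Respond to governance incidents": ["A", "R", "C", "R", "I"],
    "Manage model lifecycle (versioning, retirement)": ["I", "A", "I", "R", "C"],
    "Enforce EU AI Act / NIST RMF controls": ["A", "C", "R", "R", "I"],
    "Conduct governance model review and update": ["A/C", "A/C", "R", "I", "C"],
}

# The one row where two Accountable roles are an intentional design decision.
SHARED_A_ALLOWED = {"Conduct governance model review and update"}


def roles_with(letter, cells):
    """Return the roles whose cell contains the given RACI letter."""
    return [role for role, cell in zip(ROLES, cells) if letter in cell.split("/")]


def validate(raci):
    """Return a list of rule violations; an empty list means the matrix is well-formed."""
    errors = []
    for activity, cells in raci.items():
        expected = 2 if activity in SHARED_A_ALLOWED else 1
        accountable = roles_with("A", cells)
        if len(accountable) != expected:
            errors.append(f"{activity}: expected {expected} A, found {len(accountable)}")
        if not roles_with("R", cells):
            errors.append(f"{activity}: no Responsible role")
    return errors
```

Running `validate(RACI)` on each revision of the matrix is one way to keep an accidental second A, or a row with no owner, from slipping in during an update.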

Why agentic AI requires a new operating model, not just an update

Agentic AI systems make thousands of autonomous decisions per day on live data, far exceeding human review capacity. Legacy AI governance, designed for one committee approval per model, cannot operate at agent velocity. The operating model must shift from pre-deployment approval processes to continuous, automated enforcement at the data layer, with escalation paths triggered by automated policy violation detection.

Gartner predicts more than 40% of agentic AI projects will be canceled by the end of 2027 due to escalating costs, unclear ROI, and inadequate risk controls (Gartner, June 2025). The inadequate risk controls component is an operating model failure. The controls exist in policy. They are not embedded in the execution infrastructure.

How governance breaks at agent velocity

To illustrate the scale: a LangGraph-based agent orchestrating five sub-agents, each querying 12 data assets per decision cycle at 800 decisions per hour, would generate approximately 48,000 data access events per hour (5 × 12 × 800). This is illustrative math, but representative of real agentic deployment patterns. The governance committee that takes 6-18 months per use case (ModelOp, 2025) cannot review even a fraction of those events. 80% of enterprises have more than 50 GenAI use cases in the pipeline, yet most have only a handful in production. That gap is not a development problem. It is a governance velocity problem. The operating model cannot keep pace with deployment if it relies on human review at every decision point.
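The illustrative figure can be reproduced in a few lines. This sketch assumes the reading under which the numbers multiply out: each of the five sub-agents runs 800 decision cycles per hour and touches 12 data assets per cycle. The daily total is an extrapolation assuming the pipeline runs around the clock.

```python
SUB_AGENTS = 5
ASSETS_PER_DECISION = 12
DECISIONS_PER_HOUR = 800  # per sub-agent (assumption behind the 48,000 figure)

events_per_hour = SUB_AGENTS * ASSETS_PER_DECISION * DECISIONS_PER_HOUR
events_per_day = events_per_hour * 24  # assumes continuous operation

print(events_per_hour)  # 48000
print(events_per_day)   # 1152000
```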

What the operating model actually needs to change

Enforcement must shift from pre-deployment approval to continuous data layer monitoring. Policy enforcement cannot wait for a governance committee review; it must be embedded in the access control layer that triggers on every data query. Escalation paths must be automated as well. A policy violation cannot generate a manual review ticket at agent velocity. It must trigger an automatic access halt, with human review entering at the point where automated controls have already blocked the action. And audit trails must track agent-specific decisions, not just model-level deployments. Lineage must capture what each agent action touched, including which data assets, which model version, and at what timestamp, because without that granularity, post-incident governance analysis is retrospective and incomplete.

The data layer as the enforcement foundation for AI governance

The data layer is where AI governance policy becomes enforceable or remains aspirational. Metadata tags that classify data assets as restricted only enforce governance when they trigger access control decisions. Data lineage that traces what every AI model and agent accessed is the technical audit trail governance accountability requires. Without data layer enforcement, governance is documented but not running.

The data governance for AI layer and the AI governance operating model are not parallel systems. They are the same system. Policy documentation lives at Tier 2. Enforcement lives at Tier 3. When the two tiers are not integrated, governance is a reporting exercise, not a control function. See also data governance solutions for the infrastructure layer.

Static metadata describes. Active metadata enforces.

When a metadata tag classifies an asset as PII-restricted-to-approved-models-only, that classification is governance-relevant only if it triggers an access control decision at the moment an AI system queries the asset. Active metadata connects the policy layer to the enforcement layer automatically, without requiring a manual review step between the policy and the enforcement action. This is the mechanism that makes Tier 2 governance decisions enforceable at Tier 3 in real time.
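A minimal sketch of the difference: the tag below does nothing on its own, and becomes "active" only because the query path consults it. Everything here is hypothetical and for illustration only, including `Asset`, `APPROVED_MODELS`, `can_query`, and the model identifiers; it is not the API of any particular catalog or platform.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Asset:
    name: str
    tags: frozenset = field(default_factory=frozenset)


# Hypothetical policy table: restrictive tag -> model IDs allowed to query it.
APPROVED_MODELS = {
    "PII-restricted-to-approved-models-only": {"churn-model-v3"},
}


def can_query(model_id: str, asset: Asset) -> bool:
    """Evaluate the asset's tags at query time; deny if any restrictive tag excludes the model."""
    for tag in asset.tags:
        allowed = APPROVED_MODELS.get(tag)
        if allowed is not None and model_id not in allowed:
            return False
    return True
```

For example, `can_query("marketing-agent", Asset("customer_email", frozenset({"PII-restricted-to-approved-models-only"})))` returns `False`, while the same call for `"churn-model-v3"` returns `True`. Changing the tag or the policy table changes the outcome of the next query with no manual step in between.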

Lineage as the audit trail for governance accountability

When a model produces a biased output, or when a regulatory auditor under EU AI Act requests proof of technical documentation and oversight controls, lineage is what traces which data assets were used in training and inference, which agents accessed what, and when. Lineage is the technical foundation for NIST AI RMF’s Measure and Manage functions. Without complete lineage, governance audits are retrospective, incomplete, and legally exposed. For agentic AI specifically, lineage must capture agent-level actions, not just model-level deployments, because agents access data dynamically at runtime, not just at training time.
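One way to picture agent-level lineage is an append-only log with one record per data access, carrying the fields named above: which agent, which model version, which assets, and when. The sketch below is illustrative only; `LineageEvent`, `record_access`, and `assets_touched_by` are hypothetical names, and a Python list stands in for whatever durable store a real deployment would use.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class LineageEvent:
    agent_id: str
    model_version: str
    assets: tuple
    phase: str       # "training" or "inference"
    timestamp: str   # ISO 8601, UTC


def record_access(log, agent_id, model_version, assets, phase="inference"):
    """Append one lineage record per agent action to an append-only log."""
    event = LineageEvent(
        agent_id=agent_id,
        model_version=model_version,
        assets=tuple(assets),
        phase=phase,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )
    log.append(json.dumps(asdict(event)))
    return event


def assets_touched_by(log, agent_id):
    """Audit query: every asset a given agent accessed, across all recorded actions."""
    touched = set()
    for line in log:
        entry = json.loads(line)
        if entry["agent_id"] == agent_id:
            touched.update(entry["assets"])
    return touched
```

Recording at the granularity of the individual action is what lets the audit question "which agents accessed what, and when" be answered by a query rather than by reconstruction.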

Access controls as first-line defense

Data access policy determines what AI systems, including agents operating as part of multi-agent pipelines, can query. An access control that blocks an agent from querying restricted PII is governance enforcement at the moment it matters: before the action, not after the audit. Governance operating models that do not integrate with data platform access controls are relying on manual processes for enforcement. Under agentic AI conditions, manual enforcement cannot operate at the required velocity. The data governance AI multiplier analysis shows how data governance becomes the prerequisite for AI governance across the enterprise.

How Atlan operationalizes the AI governance enforcement layer

Atlan operationalizes AI governance at the data layer by connecting policy documentation to active enforcement infrastructure. The platform provides data lineage tracking that traces every model and agent action to its source data assets, active metadata controls that enforce access policies automatically, and AI use case governance workflows that embed approval and audit into the operating model. The full capability set is at Atlan’s AI governance capabilities.

Data lineage for agent audit trails

Atlan’s field-level lineage tracks which version of an upstream data asset an agent used in a specific decision cycle, verified against the lineage graph. In practice: when a retention decision agent queries customer_churn_score, Atlan records which version of that field was accessed, which upstream pipeline produced it, and at what timestamp, creating the agent-level audit trail that EU AI Act technical documentation requirements and NIST AI RMF Manage function controls demand. This is distinct from model-level lineage: agent lineage captures runtime data access, not just training provenance.

Active metadata for real-time policy enforcement

Metadata tags in Atlan function as policy enforcement triggers, not just descriptors. When pii_flag = true is set on a field, Atlan propagates an access restriction to all agent pipelines querying that field within seconds. The policy change becomes a running technical constraint without requiring a manual access control update. The connection between the metadata policy layer and the enforcement layer is automated. When a governance team updates a data classification, every downstream AI pipeline inherits that constraint in real time.
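The general idea of downstream inheritance can be sketched as a traversal of a lineage graph. This is a generic illustration of tag propagation, not a description of how Atlan implements it: the `DOWNSTREAM` graph, the asset and pipeline names, and `propagate_restriction` are all hypothetical.

```python
from collections import deque

# Hypothetical lineage graph: asset -> direct downstream consumers.
DOWNSTREAM = {
    "crm.email": ["features.contact_vector"],
    "features.contact_vector": ["pipeline.retention_agent", "pipeline.support_agent"],
    "sales.region": ["pipeline.forecast_agent"],
}


def propagate_restriction(source, downstream):
    """Breadth-first walk: everything reachable from the flagged field inherits the restriction."""
    restricted = {source}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for child in downstream.get(node, []):
            if child not in restricted:  # guards against revisiting shared or cyclic nodes
                restricted.add(child)
                queue.append(child)
    return restricted
```

Flagging `crm.email` restricts the derived feature and both pipelines that consume it, while `pipeline.forecast_agent`, which has no lineage path from the flagged field, is untouched.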

AI use case governance workflows

The model_approval_status field in Atlan’s AI use case governance capability gates production deployment: agents query this field before executing against a new model version, and deployment is blocked if the status is not set to approved. This embeds the CDO/CAIO approval workflow from the RACI directly into the execution infrastructure. Governance checkpoints run inside the operating model rather than as a separate process layer alongside it. Compliance metadata management provides additional detail on how metadata governance integrates with regulatory compliance controls.
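The gating pattern itself is simple to sketch. The example below reuses the `model_approval_status` field name from the text, but everything else is an assumption for illustration: `MODEL_REGISTRY` is an in-memory stand-in for a real metadata lookup, and `DeploymentBlocked` and `require_approved` are hypothetical names, not part of any vendor API.

```python
class DeploymentBlocked(Exception):
    """Raised when a model version has not been approved for production."""


# Hypothetical in-memory registry standing in for a metadata lookup.
MODEL_REGISTRY = {
    ("churn-model", "v3"): {"model_approval_status": "approved"},
    ("churn-model", "v4"): {"model_approval_status": "pending"},
}


def require_approved(model, version, registry=MODEL_REGISTRY):
    """Fail closed: anything other than an explicit 'approved' status blocks execution."""
    record = registry.get((model, version))
    status = record["model_approval_status"] if record else "unknown"
    if status != "approved":
        raise DeploymentBlocked(f"{model}:{version} status is {status!r}")
    return True
```

The choice to treat a missing record as `"unknown"` and block it is deliberate in this sketch: a gate that defaults to allow would let unregistered model versions bypass the approval workflow entirely.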

Atlan connects governance policy to data layer enforcement across AI use cases and agentic pipelines.

Regulatory anchoring: how the operating model maps to NIST AI RMF, EU AI Act, and ISO 42001

NIST AI RMF’s four functions, Govern, Map, Measure, and Manage, map directly to AI governance operating model components. Govern establishes organizational accountability and risk tolerance (Tier 1 and 2). Map identifies use case risk profiles (CoE intake). Measure evaluates risk (model monitoring). Manage applies controls (data layer enforcement). The operating model is how the framework runs in practice.

NIST AI RMF: the Govern function as operating model anchor

The Govern function establishes organizational policies, accountability, and AI risk tolerance thresholds. In the three-tier operating model, the Govern function spans Tiers 1 and 2: the board and executive leadership set the risk tolerance thresholds, the CDO and CAIO own organizational accountability, and the CoE owns the risk tolerance assessment process for each use case intake. The Map function maps to CoE intake and classification. The Measure function maps to Tier 3 model monitoring. The Manage function maps to the data layer enforcement mechanisms: access controls, metadata policies, and lineage-backed audit trails. The operating model is the organizational implementation of the NIST AI RMF. See Gartner AI governance guidance for independent validation of this mapping. External reference: NIST AI RMF 1.0.

EU AI Act: August 2026 enforcement creates operating model urgency

High-risk AI systems under EU AI Act require technical documentation, human oversight mechanisms, and record-keeping capabilities. Each requirement maps to a specific operating model component: data lineage provides the technical documentation of what data each AI system used and how; escalation paths provide the human oversight mechanism that can halt or override AI system outputs; audit trails provide the record-keeping the regulation mandates. August 2026 is not a future consideration for enterprises with high-risk AI systems in production. It is the current operating model design constraint. Responsible AI principles are the layer where regulatory requirements translate into organizational policy. External reference: EU AI Act.

ISO 42001: the AI management system standard

ISO 42001 provides the management system structure for AI governance, including organizational context, leadership accountability, risk assessment processes, and continual improvement requirements. The operating model is the organizational implementation of ISO 42001: roles map to the RACI, controls map to the enforcement mechanisms, and the review cadence maps to the continual improvement requirement. ISO 42001 certification requires an operating model that demonstrates governance controls are running, not just documented. External reference: ISO 42001.

FAQs about AI governance operating models

How do you know in practice whether you have a framework or an operating model?

The practical test: if your AI governance lives in a document but does not automatically block a restricted data query or generate an audit trail when an agent accesses PII, you have a framework, not an operating model. An operating model runs continuously at the data layer. Access controls fire on every query, lineage records every agent action, and escalation paths trigger automatically on policy violations, without requiring a human to initiate any of it. A framework without an operating model is a policy document. Most organizations have governance frameworks; fewer have governance operating models that enforce policy automatically.

Who owns AI governance, the CDO or the CAIO?

Both, with distinct boundaries. The CDO owns the data layer: data quality, lineage, access controls, and metadata policy governing what AI systems can access. The CAIO owns the AI use case lifecycle: intake, approval, monitoring, and decommission. The enforcement intersection, data access decisions for AI systems, is co-owned. Governance dysfunction most often occurs at this boundary when it is not explicitly defined in the RACI.

How do you build an AI governance operating model from scratch, and where does a GenAI center of excellence fit?

Start with the three-tier model: define strategic accountability (board and C-suite), operational accountability (CDO, CAIO, CoE, Data Stewards), and technical enforcement (data platform controls). Then build the RACI matrix mapping each governance activity to each role. Define escalation paths for governance incidents. Embed enforcement in the data layer: access controls, metadata policies, and lineage tracking, so governance runs automatically rather than requiring manual review at each step.

A GenAI Center of Excellence (CoE) is the Tier 2 operational governance body that coordinates AI use case intake, cross-functional reviews, and governance calendar management. It sits between the executive tier and the technical enforcement tier. The CoE governs the process: which use cases get approved, under what conditions, and what monitoring requirements apply. The data platform enforces the decisions the CoE makes. When building from scratch, stand up the CoE as the operational hub before defining the RACI, because the CoE structure will determine how accountability rows are assigned.

How does agentic AI change the AI governance operating model and what breaks without those changes?

Agentic AI systems make thousands of autonomous decisions per day, far exceeding the review capacity of human-governed approval workflows. The operating model must shift from pre-deployment approval to continuous enforcement at the data layer: automated policy enforcement on every agent action. Escalation paths must be automated; audit trails must track agent-level decisions, not just model-level deployments. The governance cadence must match the deployment velocity.

When governance is documented but not enforced at the data layer, agentic AI systems can query restricted data assets, produce outputs that violate policy, and create audit gaps that are impossible to reconstruct retrospectively. A fragmented governance stack, where policy documentation, access controls, and model monitoring run in separate systems without integration, cannot enforce consistent governance at agent velocity. The result is governance-in-name-only: policies exist on paper, enforcement does not exist in practice.

When governance runs at the data layer, it runs continuously

The enterprises that will govern AI effectively in the agentic era are not the ones with the most elaborate governance frameworks. They are the ones where governance enforcement is embedded in the data infrastructure, where metadata, lineage, and access controls run continuously, automatically, and at the speed of AI deployment.

August 2026 is when EU AI Act enforcement for high-risk AI systems moves from regulatory preparation to legal obligation. For enterprises still at governance maturity Level 2, with policies documented but not technically enforced, that deadline is not a compliance milestone. It is a structural gap that an operating model, not a framework, can close. The five structural components covered here are not aspirational. They are the minimum viable governance architecture for an agentic AI production environment.

The CDO and CAIO who get this right stop managing a governance process that runs alongside AI deployments. They manage a data layer that enforces governance inside those deployments, at every data access event. Most enterprises are not there yet. Closing the distance between where they are and where that model operates is exactly the work the five components in this article are designed to do.