AI governance principles ensure the responsible, ethical, and effective deployment of AI. While AI systems can drive innovation and growth, they also pose risks, such as biases, unexpected outputs, and confidentiality breaches.

With AI governance principles as guidelines, organizations can tackle AI risks, build transparency, and ensure regulatory compliance.

This article will define AI governance principles, explore their key components, and discuss how to realize them within organizations.

What are AI governance principles?

AI governance principles are a set of guidelines designed to ensure the ethical, transparent, and accountable use of AI.

The goal of AI governance is to minimize risks while maximizing business benefits. AI governance principles are the guidelines that help put AI governance into practice.

Because AI systems are still evolving, the principles and regulations surrounding them are also in flux, and different organizations interpret AI governance principles differently.

In its AI Risk Management Framework, the National Institute of Standards and Technology (NIST) describes trustworthy AI systems as “valid and reliable, safe, secure and resilient, accountable and transparent, explainable and interpretable, privacy-enhanced, and fair with harmful bias managed.”

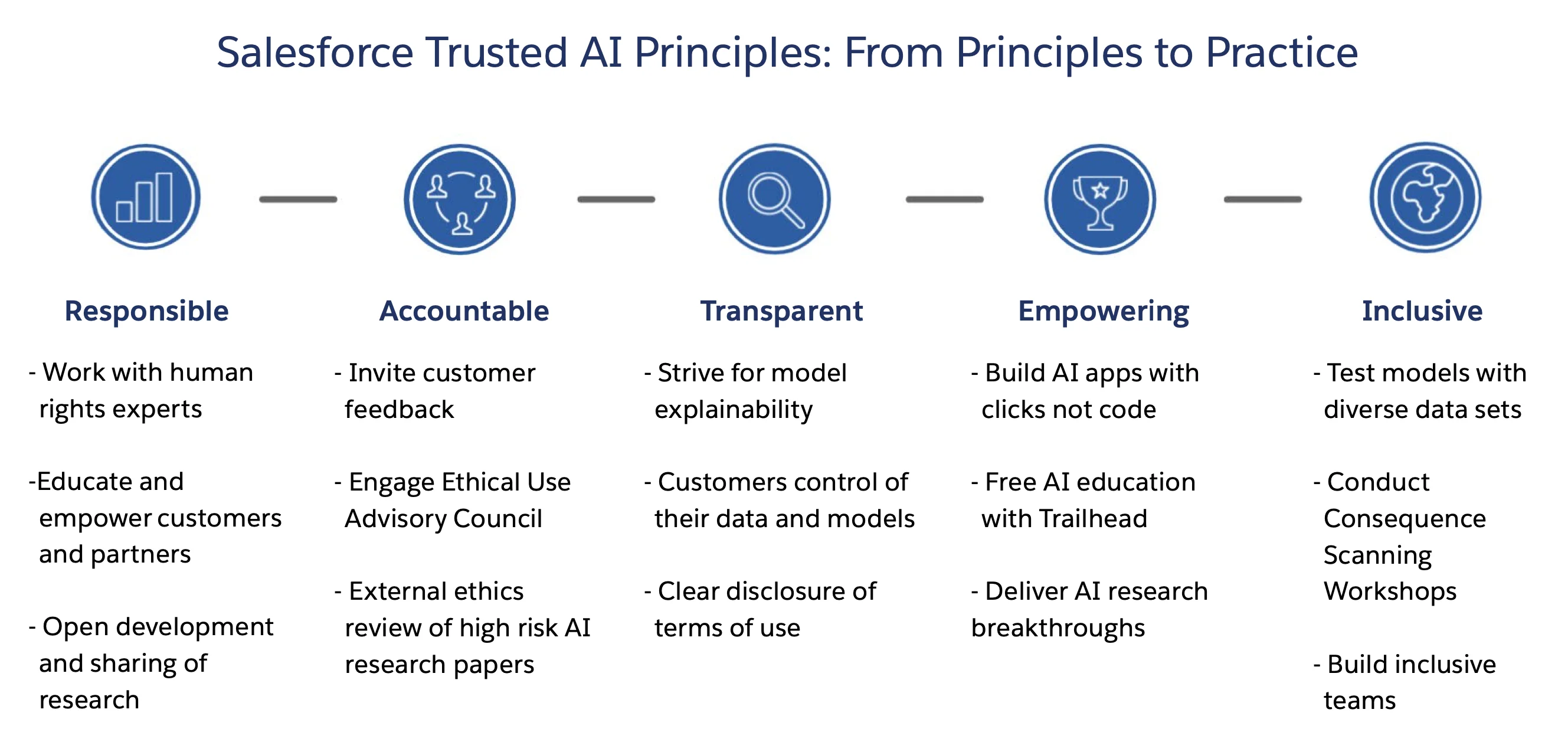

Salesforce, meanwhile, calls for AI systems that are responsible, accountable, transparent, empowering, and inclusive.

AI governance principles for Salesforce - Source: Salesforce AI Research.

S&P Global's AI governance principles, in turn, include transparency and explainability, ethical and responsible use, accountability, privacy, security, reliability, and human centrism and oversight.

Fundamental principles for AI governance - Source: S&P Global.

6 AI governance principles to follow

Six principles recur across these interpretations:

- Transparency: Clear and open communication about how AI systems operate and make decisions, explaining the logic and reasoning behind AI-driven outcomes and underlying algorithms

- Accountability: Clear lines of responsibility for the outcomes produced by AI systems, ensuring continuous monitoring of AI deployments and their associated risks

- Fairness: AI systems operate without bias and do not discriminate against any individual or group. IBM calls this bias control: rigorously examining training data so that real-world biases are not embedded in AI algorithms and decisions remain fair and unbiased

- Reliability: AI systems are consistent and dependable, with organizations testing them for “safety, security, and effectiveness across their entire lifecycles”

- Privacy and security: Protect data used and generated by AI systems from unauthorized access and breaches with granular data protection measures

- Responsible use: Deploy AI technologies in a manner that is ethical and aligned with societal values; IBM advocates understanding “the societal implications of AI, not just the technological and financial aspects”
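One way to make IBM's “bias control” concrete is to compare outcome rates across groups in the training data before any model is trained. The sketch below is a minimal illustration, not a complete fairness audit: it assumes the training data is a list of labeled records, and the field names, sample records, and 0.8 threshold (borrowed from the “four-fifths rule” heuristic used in US employment-selection guidelines) are all hypothetical choices.

```python
from collections import defaultdict


def positive_rate_by_group(records, group_key, label_key):
    """Share of records with a positive label (1) within each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for row in records:
        group = row[group_key]
        totals[group] += 1
        positives[group] += int(row[label_key] == 1)
    return {g: positives[g] / totals[g] for g in totals}


def disparate_impact_ratio(rates):
    """Lowest group rate divided by highest group rate (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0


# Hypothetical training records for a loan-approval model.
training_data = [
    {"group": "A", "approved": 1},
    {"group": "A", "approved": 1},
    {"group": "A", "approved": 0},
    {"group": "A", "approved": 1},
    {"group": "B", "approved": 1},
    {"group": "B", "approved": 0},
    {"group": "B", "approved": 0},
    {"group": "B", "approved": 0},
]

rates = positive_rate_by_group(training_data, "group", "approved")
ratio = disparate_impact_ratio(rates)

# Assumed threshold: flag the data for human review below the four-fifths mark.
if ratio < 0.8:
    print(f"Review training data: disparate impact ratio {ratio:.2f}", rates)
```

A low ratio does not prove the data is unfair; it is a signal that the data needs human review before it feeds an AI system.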

Realizing AI governance principles: 4 actions to consider

To effectively implement these AI governance principles, organizations can adopt the following four action points:

- Translate diversity into a common language

- Adapt governance to people, not people to governance

- Embed governance as code, so that it isn’t lost in documentation

- Evolve governance continuously

Let’s explore the specifics of each action point.

Translate diversity into a common language

Data governance relies on information flowing seamlessly across teams. If different groups speak different “languages” when discussing data, it becomes harder to uphold AI governance principles such as transparency, accountability, and reliability.

So, establish a common language that works for both technical and non-technical users. Data products can help here: curated, reusable, reproducible data assets trusted by the diverse people who work with data.
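As a rough illustration, a data product can carry its own shared vocabulary, so the same asset reads correctly for engineers (physical column names) and business users (agreed glossary terms). The `DataProduct` and `GlossaryTerm` classes, field names, and sample definitions below are hypothetical and do not reflect any particular catalog's API.

```python
from dataclasses import dataclass, field


@dataclass
class GlossaryTerm:
    name: str        # business-friendly name that non-technical users see
    definition: str  # meaning agreed across teams


@dataclass
class DataProduct:
    name: str
    owner: str
    # Maps physical column names to shared glossary terms.
    columns: dict[str, GlossaryTerm] = field(default_factory=dict)

    def describe(self) -> list[str]:
        """Render each mapped column in the shared business vocabulary."""
        return [
            f"{col} -> {term.name}: {term.definition}"
            for col, term in self.columns.items()
        ]

    def undefined_columns(self, physical_columns: list[str]) -> list[str]:
        """Columns present in the table but missing from the shared vocabulary."""
        return [c for c in physical_columns if c not in self.columns]


active_customer = GlossaryTerm(
    "Active customer", "Customer with at least one purchase in the last 90 days"
)
product = DataProduct(
    name="customer_360",
    owner="growth-analytics",
    columns={"is_active_90d": active_customer},
)

print("\n".join(product.describe()))
print(product.undefined_columns(["customer_id", "is_active_90d"]))  # ['customer_id']
```

Flagging undefined columns gives teams a concrete list of terms they still need to agree on before the data product is shared more widely.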

Adapt governance to people, not people to governance

For AI governance to be flexible, adaptable, and enforceable, it must fit the unique needs and processes of different teams.

Rather than imposing a rigid, one-size-fits-all approach, governance should adapt to the organization’s structure, whether centralized, decentralized, or federated. This adaptability keeps governance processes practical and effective across different contexts.
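A minimal sketch of this idea, assuming governance rules can be expressed as a set of named checks: the same mechanism serves centralized, decentralized, and federated structures, and only the rule for combining central and domain checks changes. The `required_checks` function and the check names are hypothetical.

```python
from enum import Enum


class OperatingModel(Enum):
    CENTRALIZED = "centralized"
    DECENTRALIZED = "decentralized"
    FEDERATED = "federated"


def required_checks(model: OperatingModel, central: set[str], domain: set[str]) -> set[str]:
    """Return the governance checks a team must pass under a given operating model."""
    if model is OperatingModel.CENTRALIZED:
        # One central team defines every check.
        return set(central)
    if model is OperatingModel.DECENTRALIZED:
        # Each domain team owns its own checks.
        return set(domain)
    # Federated: central checks form a baseline that domains cannot remove,
    # but domains can add checks that fit their own processes.
    return central | domain


central_baseline = {"pii_scan", "model_card"}
marketing_checks = {"consent_check"}

for model in OperatingModel:
    print(model.value, sorted(required_checks(model, central_baseline, marketing_checks)))
```

In the federated case, a domain such as marketing keeps the organization-wide checks while adding its own consent check, which is one way governance can fit a team's processes without fragmenting.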

Embed governance as code, not as documentation

Governance policies often get lost in static documents that are rarely referenced.

The most effective way to implement AI governance is to integrate it into the actual code and processes across diverse personas, tools, and outcomes, for example through data contracts, metadata tags, and governance policies embedded directly into workflows.
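To make this concrete, here is a minimal sketch of a data contract with metadata tags, enforced in code that a pipeline could run (for example, as a CI step) rather than described in a document. The `ColumnContract` class, the `"pii"` tag, the `allow_pii` flag, and the sample rows are illustrative assumptions, not any specific tool's format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ColumnContract:
    name: str
    dtype: type
    nullable: bool = False
    tags: frozenset[str] = frozenset()  # metadata tags such as "pii"


# Hypothetical data contract for a table that feeds an AI model.
CONTRACT = [
    ColumnContract("customer_id", str),
    ColumnContract("email", str, tags=frozenset({"pii"})),
    ColumnContract("lifetime_value", float, nullable=True),
]


def validate(rows: list[dict], contract: list[ColumnContract], allow_pii: bool) -> list[str]:
    """Return contract violations so a pipeline can block bad data automatically."""
    violations = []
    for col in contract:
        # Tag-based policy: PII columns may only flow where PII is allowed.
        if "pii" in col.tags and not allow_pii:
            violations.append(f"{col.name}: PII not allowed in this pipeline")
        # Schema rules: required columns must be present with the agreed type.
        for i, row in enumerate(rows):
            value = row.get(col.name)
            if value is None:
                if not col.nullable:
                    violations.append(f"row {i}: {col.name} is required")
            elif not isinstance(value, col.dtype):
                violations.append(f"row {i}: {col.name} should be {col.dtype.__name__}")
    return violations


rows = [
    {"customer_id": "c-1", "email": "a@example.com", "lifetime_value": 120.0},
    {"customer_id": None, "email": "b@example.com", "lifetime_value": "n/a"},
]

for problem in validate(rows, CONTRACT, allow_pii=False):
    print(problem)
```

Because the policy lives next to the data it governs, a change to the contract is reviewed and versioned like any other code change instead of drifting out of sync with a document.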

Evolve governance continuously

The data landscape is ever-changing, with new technologies and use cases emerging regularly. AI governance must evolve along with these changes to stay relevant.

By staying open and extensible to change, AI governance can serve as a foundation for today’s AI initiatives and future ones.

Read more → Active data and AI governance manifesto

Summing up

AI governance principles are critical for the responsible and effective deployment of AI technologies. Prioritizing AI governance is vital for enterprises to build trust, ensure compliance, and enhance the overall effectiveness of their AI initiatives.

The four action points mentioned above provide practical ways to embed these principles into your organization’s workflows. As AI continues to evolve, staying committed to these principles and action points will be essential for ethical, sustainable, and responsible AI deployment.