Build Your AI Context Stack

Get the blueprint for implementing context graphs across your enterprise. This guide walks through the four-layer architecture from metadata foundation to agent orchestration, with practical implementation steps for 2026.

Get the Stack GuideContext layer for Databricks: At a glance

Permalink to “Context layer for Databricks: At a glance”| Context dimension | Native Databricks capability | What it covers | Where it ends |

|---|---|---|---|

| Technical and governance metadata | Unity Catalog | Objects, schemas, tags, classifications, policies, access controls | Metadata for assets outside Databricks |

| Lineage | Unity Catalog automated lineage | Column-level provenance for SQL, Python, R, Scala notebooks and pipelines | External pipeline lineage (Tableau, Salesforce, dbt Cloud) |

| Semantic definitions | Unity Catalog Business Semantics | Governed metrics, dimensions, agent metadata | Semantics defined in BI tools, dbt, spreadsheets |

| Quality signals | Data Quality Monitoring | Anomaly detection, profiling, drift, certifications | Quality signals from non-Databricks upstream sources |

| Organizational knowledge | AI-generated docs, ownership, domains, tags, certifications | Descriptions, owners, usage, endorsements | Decisions, approvals, and tribal context outside Databricks |

| AI control | Unity AI Gateway | Access, guardrails, cost, MCP governance | Agents and prompts built outside the gateway |

| Conversational access | Genie Spaces, Agent Mode | Natural language queries over governed data | Federated reasoning across non-Databricks systems |

| Agent platform | Agent Bricks | Knowledge Assistant, Document Intelligence, Supervisor Agent | Shared agent memory across non-Databricks agents |

| Tracing and evaluation | MLflow | OpenTelemetry traces, evals, production monitoring | Context-layer monitoring (drift, definition staleness, lineage gaps) |

What native context layer capabilities does Databricks provide?

Permalink to “What native context layer capabilities does Databricks provide?”Databricks has built a deeply integrated set of features forming the native context layer for agents operating on lakehouse data. The components break into two groups:

- Layers of context that describe data (metadata, lineage, semantics, quality, organizational knowledge).

- Platforms that consume that context (AI Gateway, Genie, Agent Bricks, MLflow).

Metadata and lineage (Unity Catalog)

Permalink to “Metadata and lineage (Unity Catalog)”Unity Catalog is the structural foundation for context inside Databricks, capturing the metadata that describes objects, schemas, and relationships agents need to reason on any asset.

Unity Catalog automatically captures end-to-end column-level lineage across SQL, Python, R, and Scala for notebooks, workflows, dashboards, and model pipelines — what agents and stewards rely on for impact analysis, troubleshooting, and AI audits.

Three boundaries matter when planning agent workloads:

- Native scope: Column-level lineage is captured for assets governed by Unity Catalog, including managed and external Delta and Iceberg tables produced by dbt, Lakeflow, Workflows, or Model Serving.

- External sources via Lakehouse Federation: SQL sources like MySQL, PostgreSQL, Salesforce, Snowflake, and Redshift can be federated into Unity Catalog, but federation pushes queries down rather than ingesting full operational lineage from those systems.

- Outside the lakehouse: Pipelines that flow through Tableau dashboards, Salesforce automations, or external dbt Cloud projects do not appear natively in Unity Catalog lineage.

Semantic definitions (Unity Catalog Business Semantics)

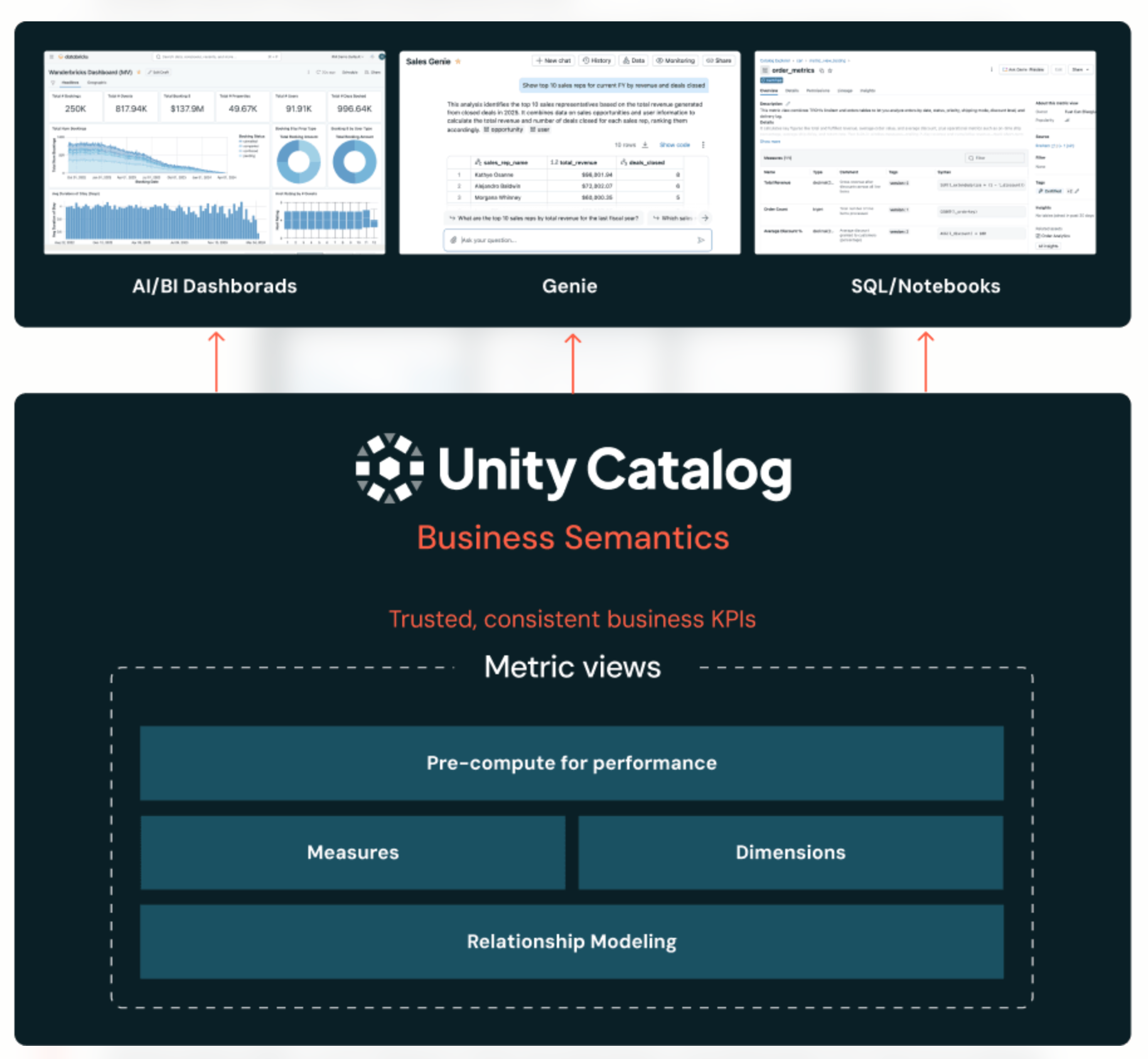

Permalink to “Semantic definitions (Unity Catalog Business Semantics)”Unity Catalog Business Semantics, generally available in 2026, centralizes metric and KPI definitions at the data layer. It has two integrated components:

- Metric Views: Reusable SQL objects that separate measure definitions from dimension groupings, so a metric is defined once and queried across any dimension at runtime.

- Agent metadata: Synonyms, display names, LLM instructions, certifications, and formatting rules that help AI tools interpret data in business terms.

Unity Catalog Business Semantics — Source: Databricks

Integrating Unity Catalog Business Semantics with an enterprise context layer like Atlan builds a single, trusted view of metrics and ties them to lineage, owners, business definitions, and more. This speeds up troubleshooting, decision-making, and AI deployments at scale.

Quality signals

Permalink to “Quality signals”Databricks Data Quality Monitoring (formerly Lakehouse Monitoring) is built directly into Unity Catalog. It provides the freshness, completeness, and reliability indicators agents use to assess whether the data they are about to act on is trustworthy.

This covers anomaly detection and data profiling, while surfacing intelligent signals (certifications, deprecation tags, etc.) in Unity Catalog.

Organizational knowledge

Permalink to “Organizational knowledge”Organizational context — the soft layer giving data its business meaning — is collected through Catalog Explorer and surfaced wherever users author queries. This includes AI-generated descriptions, ownership and usage insights, and certifications, all living inside Databricks.

Unity AI Gateway

Permalink to “Unity AI Gateway”Unity AI Gateway is the centralized control plane for AI access, standardizing connectivity to any LLM, applying guardrails, enforcing rate limits, capturing cost and payload data, and governing MCP server connections.

Genie Spaces

Permalink to “Genie Spaces”Genie is Databricks’ natural language interface to structured data, consuming Unity Catalog metadata to answer business questions in plain English using table descriptions, verified answers, and trusted metric definitions. Agent Mode extends this to multi-step reasoning over governed lakehouse data.

Agent Bricks

Permalink to “Agent Bricks”Agent Bricks is Databricks’ platform for building and governing enterprise agents, using Unity Catalog metadata — schema, business definitions, lineage, permissions, and data quality signals — to improve agent reasoning and action.

MLflow

Permalink to “MLflow”MLflow handles tracing, evaluation, and production monitoring. Traces follow the OpenTelemetry format and integrate with AI Gateway logs, giving engineering, FinOps, and security teams a unified view of agent behavior, cost, and quality.

CIO Guide to Context Graphs

For data leaders evaluating where to start, Atlan's CIO guide to context graphs walks through a practical four-layer architecture from metadata foundation to agent orchestration.

Get the CIO GuideThe Databricks agent context layer: Where it ends

Permalink to “The Databricks agent context layer: Where it ends”The native stack is robust inside Databricks, but it has clear boundaries. Outside of the Databricks perimeter, several gaps become critical as teams scale from pilots to production:

-

Cross-platform lineage: Unity Catalog lineage tracks movement within Databricks. It doesn’t natively trace dependencies that flow through Snowflake, Redshift, dbt Cloud, Tableau, Power BI, Looker, or Salesforce.

-

Enterprise knowledge graph: Databricks has no native construct for connecting assets, people, processes, and policies into a queryable graph that agents can traverse.

-

Multi-agent context sharing: Supervisor Agent orchestrates agents inside Databricks, but agents on other platforms cannot share a common semantic memory with Databricks agents.

-

Bidirectional governance propagation: Definitions and policies in Unity Catalog don’t automatically flow into BI tools or external catalogs, and external semantic changes don’t land in Unity Catalog.

-

No active ontology spanning systems: Native semantics describe Databricks objects but don’t maintain a living model of how the enterprise works as a whole.

What are the challenges with using only the Databricks native context features?

Permalink to “What are the challenges with using only the Databricks native context features?”Most data estates have multiple platforms, and not just Databricks. Relying on Databricks alone can create challenges, such as:

-

Definition drift: A metric defined in Unity Catalog Business Semantics may be calculated differently in a Tableau dashboard or Salesforce report, and agents pulling from each will produce conflicting answers.

-

Stale or partial lineage: When pipelines cross from Salesforce to Snowflake to Databricks to a BI dashboard, native lineage breaks at each platform boundary.

-

Trapped semantics: Business semantics created in Databricks cannot govern non-Databricks BI tools, data science notebooks, or external SaaS reports.

-

Compliance blind spots: GDPR, CCPA, and the EU AI Act require auditable context across the full data path, and a Databricks-only audit trail leaves gaps.

-

Agent fragmentation: Enterprises typically run agents in Slack, ServiceNow, Salesforce, and custom apps alongside Databricks, each with separate memory and no shared semantic foundation.

How can enterprises close the context gap for multi-agent systems across platforms?

Permalink to “How can enterprises close the context gap for multi-agent systems across platforms?”Closing the context gap for multi-agent systems requires treating context as cross-platform infrastructure built on four pillars.

1. Enterprise-wide context lakehouse

Permalink to “1. Enterprise-wide context lakehouse”A context lakehouse is a single, open, queryable store of metadata, lineage, semantics, governance rules, and decision traces that spans Databricks plus every other system the enterprise uses. It is the foundation on which reliable, low-hallucination agents are built.

2. Context engineering

Permalink to “2. Context engineering”Context engineering is the discipline of deliberately designing the context agents receive, rather than letting them inherit whatever metadata happens to be available. It involves bootstrapping, testing, deploying, and maintaining context with human-in-the-loop review and automated evals.

3. Context assimilation and sync

Permalink to “3. Context assimilation and sync”Context assimilation pulls metadata from every platform into a unified model, resolving conflicts and maintaining a live, synchronized view that reflects schema changes, ownership updates, and policy amendments in near real-time.

4. Governance and lineage propagation bidirectionally

Permalink to “4. Governance and lineage propagation bidirectionally”Policies, classifications, and metric definitions flow both ways between Databricks and external systems. Bidirectional propagation creates an auditable chain from raw source to AI decision, which is exactly what compliance and risk teams need to demonstrate responsible AI in regulated environments. This treats Databricks as one critical node in a wider context graph rather than the entire graph.

Inside Atlan AI Labs & The 5x Accuracy Factor

Learn how context engineering drove 5x AI accuracy in real customer systems. Explore real experiments, quantifiable results, and a repeatable playbook for closing the gap between AI demos and production-ready systems.

Download EbookHow does Atlan bring the enterprise context layer to Databricks?

Permalink to “How does Atlan bring the enterprise context layer to Databricks?”Atlan operates as the enterprise context layer that sits alongside Databricks, federating semantics, lineage, and governance across the full data estate — designed to amplify Databricks, not replace its native components.

Unifying Databricks semantics into the enterprise data graph

Permalink to “Unifying Databricks semantics into the enterprise data graph”Atlan ingests Unity Catalog assets, Metric Views, agent metadata, and lineage through native connectors and unifies them with metadata from every other system in the stack. The result is a single enterprise data graph where a Databricks metric can be linked to the Tableau dashboard that consumes it, the dbt model upstream of it, and the Salesforce field that originally seeded it.

“Metrics are the pulse of every enterprise’s Data & AI platform. By bringing UC Metrics into Atlan’s Context Graph — with lineage, business context, and zero additional permissions — our customers gain operational intelligence that was previously out of reach. This is a meaningful step toward AI-ready data at scale.”

— Chandru, Product Leader, Atlan

Cross-platform context for Databricks agents

Permalink to “Cross-platform context for Databricks agents”Agents built on Agent Bricks can call Atlan through MCP to retrieve context that originates outside Databricks, such as Snowflake table definitions, BI dashboard ownership, or governance policies enforced on a SaaS system.

Context Engineering Studio for agent context

Permalink to “Context Engineering Studio for agent context”Atlan’s Context Engineering Studio is the workspace where you can bootstrap, test, and certify context. It combines specialist AI agents that pre-populate definitions from existing metadata, human review for edge cases, automated evals, and a versioned Context Repo that any agent reads through MCP, including Databricks Genie Spaces and Agent Bricks supervisor agents.

MCP-ready context delivery

Permalink to “MCP-ready context delivery”Atlan’s MCP server exposes the context layer to any MCP-compatible agent. Databricks agents can discover assets, traverse cross-platform lineage, read glossary definitions, and verify governance policies in a single call.

Governance and AI graph

Permalink to “Governance and AI graph”Atlan’s AI Governance auto-discovers AI models and agents across the enterprise, including those running on Agent Bricks, classifies them against compliance frameworks, and maintains auditable records of every action.

The same lineage graph and policy layer cover Databricks and non-Databricks workloads.

Knowledge graph and active ontology

Permalink to “Knowledge graph and active ontology”The knowledge graph captures business concepts, policies, owners, and decision traces, while the active ontology keeps a living model that continuously updates as systems evolve — giving agents a structured map of relationships across Databricks and beyond.

Real stories from real customers building enterprise context layers with Atlan

Permalink to “Real stories from real customers building enterprise context layers with Atlan”"As part of Atlan's AI Labs, we're co-building the semantic layers that AI needs with new constructs like context products that can start with an end user's prompt and include them in the development process. All of the work that we did to get to a shared language amongst people at Workday can be leveraged by AI via Atlan's MCP server."

— Joe DosSantos, VP Enterprise Data & Analytics, Workday

"Nasdaq adopted Atlan as their 'window to their modernizing data stack' and a vessel for maturing data governance. The implementation of Atlan has also led to a common understanding of data across Nasdaq, improved stakeholder sentiment, and boosted executive confidence in the data strategy. This is like having Google for our data."

— Michael Weiss, Product Manager, Nasdaq

"Within the first year after that we cataloged over 18 million assets, defined more than 1300 glossary terms. Atlan had lineage across our on-prem Oracle databases, BigQuery, and Looker."

— Kiran Panja, Managing Director, Cloud & Data Engineering, CME Group

Next steps: Building an enterprise context layer for Databricks

Permalink to “Next steps: Building an enterprise context layer for Databricks”The native Databricks stack solves context inside the lakehouse well. The harder problem, keeping semantics, lineage, and governance consistent across every platform an enterprise runs, requires a context layer above any single vendor. Pair Unity Catalog and Agent Bricks with an enterprise context layer like Atlan, and Databricks agents become reliable, governed participants in cross-platform workflows.

FAQs about context layer for Databricks

Permalink to “FAQs about context layer for Databricks”1. What is the difference between Unity Catalog and an enterprise context layer?

Permalink to “1. What is the difference between Unity Catalog and an enterprise context layer?”Unity Catalog governs data and AI assets that live inside Databricks, including tables, models, and semantic definitions. An enterprise context layer federates metadata, semantics, lineage, and governance across every system in the stack — including Databricks plus warehouses, BI tools, and SaaS apps — so agents reason on a unified view rather than a single platform’s slice.

2. Do I still need Atlan if I use Unity Catalog Business Semantics?

Permalink to “2. Do I still need Atlan if I use Unity Catalog Business Semantics?”Yes, if your data and decision-making span more than Databricks. Unity Catalog Business Semantics governs metrics defined inside Databricks, but most enterprises also have metric logic embedded in dbt, Tableau, Looker, Salesforce reports, and spreadsheets. An enterprise context layer reconciles those definitions and exposes a single source of truth to agents and humans.

3. How does Atlan integrate with Agent Bricks?

Permalink to “3. How does Atlan integrate with Agent Bricks?”Atlan exposes context to Agent Bricks through MCP, which the Unity AI Gateway already governs. Supervisor agents and custom agents can call Atlan to retrieve cross-platform metadata, lineage, and policy context, then act on Databricks data with that broader context grounded in their reasoning loop.

4. Does Databricks already have a knowledge graph?

Permalink to “4. Does Databricks already have a knowledge graph?”Databricks provides asset graphs, lineage, and semantic relationships inside Unity Catalog, which serve graph-like purposes for lakehouse content. A full enterprise knowledge graph also covers business concepts, policies, ownership, decision traces, and assets outside Databricks, which is what an enterprise context layer is designed to provide.

5. Can Databricks agents share memory with non-Databricks agents?

Permalink to “5. Can Databricks agents share memory with non-Databricks agents?”Not natively. Agent Bricks coordinates agents inside its supervisor framework, but cross-platform memory sharing requires a shared context layer that all agents read and write through standard interfaces like MCP. This is the architecture pattern an enterprise context layer is built to support.

6. What is the fastest way to start extending the Databricks context layer?

Permalink to “6. What is the fastest way to start extending the Databricks context layer?”Begin with the highest-friction context gap your agents face, which is usually metric reconciliation between Databricks Business Semantics and an external BI tool, or lineage that breaks at the Snowflake-to-Databricks boundary. Connect both systems through an enterprise context layer, run a context engineering pass, and route agents to the unified source through MCP.