The central question of enterprise AI right now is disarmingly simple: how do you create data that’s useful, reliable, and valuable enough that someone can confidently build an agentic application on top of it?

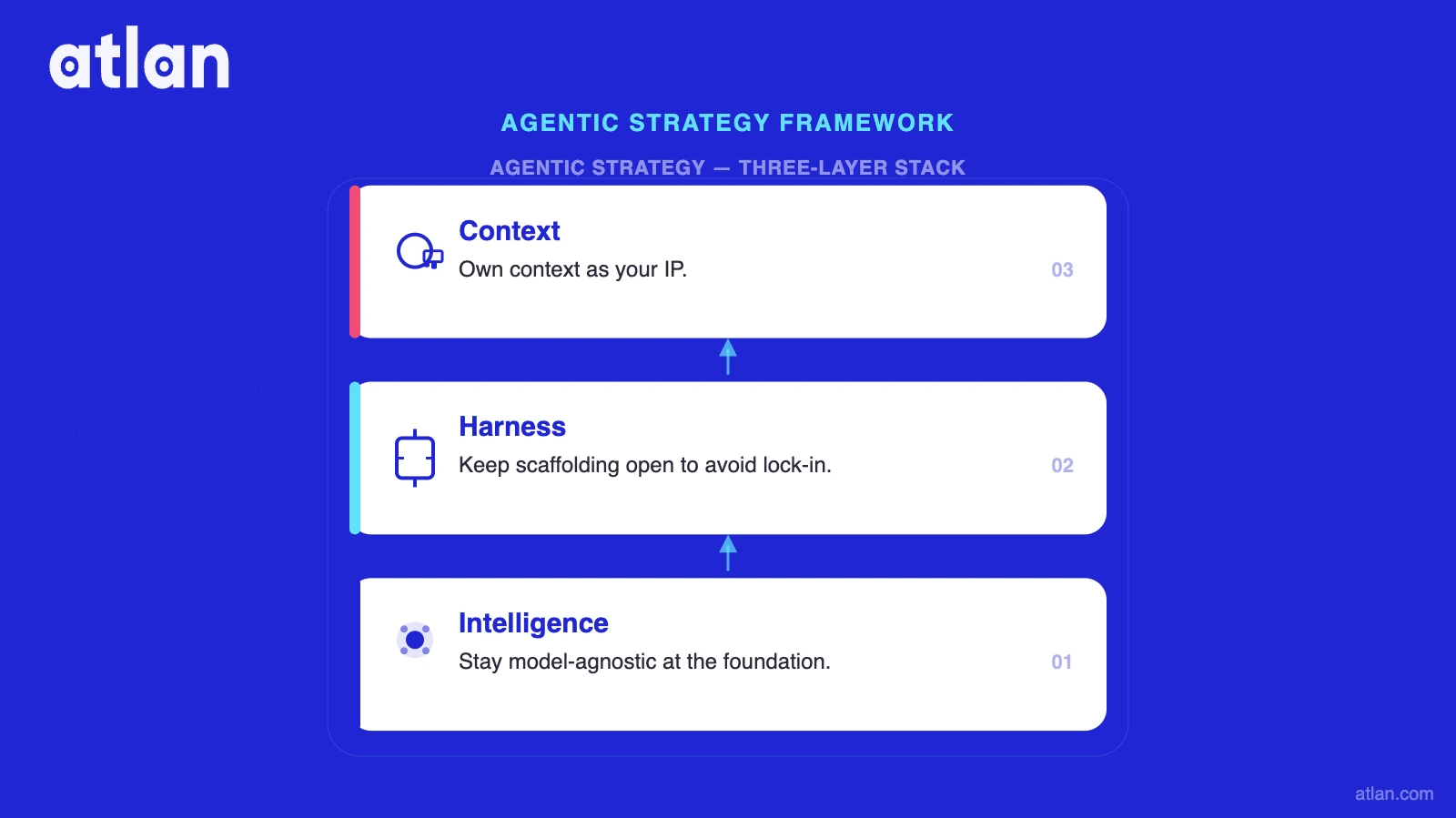

Answering it requires a clean mental model of the stack you’re building on. Every agentic strategy is really three decisions layered on top of each other: intelligence, harness, and context.

Three decisions define every agentic strategy: intelligence, harness, and context. Source: Atlan.

Most enterprises are quietly making two of the three wrong.

Intelligence: The model layer

Start by focusing on the model layer. You may be tempted to pick a favorite and standardize on it. Don’t.

Models are changing faster than any standardization cycle can keep up with. Your use cases will be multi-model whether you plan for it or not.

Inside Atlan’s own SDLC, we use OpenAI models for code review and Claude Opus for writing code. The best model for reasoning is rarely the best model for review, or for cheap classification, or for summarization. Every serious hyperscaler now ships a model garden (Google’s Vertex AI, Amazon Bedrock, Microsoft’s Azure AI Foundry) because even they’ve accepted that no single model wins.

The implication for an architecture: stay model-agnostic at the foundation. Anything that forces a single-model assumption upstream is a liability the first time a better model ships, which will be about six weeks from now.
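One way to picture "model-agnostic at the foundation" is a thin routing layer between tasks and models. The sketch below is illustrative only: `ModelRouter`, `stub_writer`, `stub_reviewer`, and the task names are hypothetical, and the stubs stand in for whatever provider clients you would actually wrap.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# A model is anything that maps a prompt to a completion. Real provider
# clients would be wrapped to fit this signature (hypothetical adapters).
ModelFn = Callable[[str], str]


@dataclass
class ModelRouter:
    """Maps task names to models so callers never name a provider directly."""

    routes: Dict[str, ModelFn]

    def run(self, task: str, prompt: str) -> str:
        # Fail loudly if a task has no model assigned yet.
        if task not in self.routes:
            raise KeyError(f"no model registered for task {task!r}")
        return self.routes[task](prompt)

    def swap(self, task: str, model: ModelFn) -> None:
        """Point a task at a different model without touching callers."""
        self.routes[task] = model


# Stub models standing in for real provider calls (assumptions, not real APIs).
def stub_writer(prompt: str) -> str:
    return f"[writer] {prompt}"


def stub_reviewer(prompt: str) -> str:
    return f"[reviewer] {prompt}"


router = ModelRouter(
    routes={"write_code": stub_writer, "review_code": stub_reviewer}
)
```

Callers ask for a task, such as `router.run("review_code", diff_text)`, and never mention a vendor. When a better model ships, a single `swap` call changes the assignment and nothing upstream has to move.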

Harness: The lock-in is moving up the stack

The model providers can see their own commoditization happening in real time. So the lock-in strategy has shifted one layer up, into what we call the harness: the scaffolding that wraps around the model and turns it into a working agent.

This is why Anthropic wants every developer on Claude Code. It’s why OpenAI is pushing Codex so hard.

The harness looks like a developer convenience, and it is, but it’s also the mechanism by which your cost curve and your model choice quietly get yoked together. Lock into the harness and you’ve locked into the model it’s optimized for, and the pricing that comes with it.

Context: The one thing you can actually own

In a world of agentic applications, your company will own neither the model nor the harness. That’s the hard truth. That leaves exactly one layer in the stack that can be your IP: context.

Context is the business information embedded in your data, metadata, lineage, and glossaries. It’s the undocumented knowledge sitting in a thousand Snowflake tables and S3 buckets that nobody can fully map. It’s the thing that makes a generic reasoning model useful for your specific company. And it’s the thing that, if you hand it over to a model provider, you will never get back.

This is why the sharpest enterprise AI teams have been starting with governance. The policy is usually some version of: no model trains on our data, full stop. That’s a good floor that can keep your context yours. But context isn’t just something to protect; it’s something to build.

Why context engineering needs automation

Context engineering is the hard (but increasingly necessary) part of enterprise AI. It’s also the part that can’t be solved by throwing humans at it.

Palantir learned this lesson pre-AI, selling large armies of Forward Deployed Software Engineers to do context engineering by hand. That model doesn’t survive the agentic era. A modern enterprise will build hundreds of agents across different platforms, low-code and high-code, bought and built, and every one of them will need to retrieve the right context at runtime. You cannot staff that at scale.

This is where Atlan sits. We’ve built Context Agents that harness the business information already buried in your data estate. They automatically generate glossaries and taxonomies and write well-researched readmes in unstructured form, so that any agent can find the right tables and know whether to trust the data. We’ve put it all in an open format (Iceberg) with multiple interfaces on top (CLI, API, SQL, vector, semantic APIs) so the agents you build in whatever framework, in whatever year, can plug in.

Think of it as the backend server for your agents. Every agent is an application, and every application needs a server before it hits the reasoning model. The difference here is that the server is yours.
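To make the "backend server" idea concrete, here is a minimal sketch of an agent fetching context before it calls any reasoning model. Everything in it is an assumption for illustration: `TableContext`, `ContextStore`, `build_prompt`, the table names, and the naive keyword match are hypothetical and do not represent Atlan's actual interfaces.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass(frozen=True)
class TableContext:
    """One piece of context: a table, its readme, and a trust flag."""

    name: str
    readme: str
    trusted: bool


def _words(text: str) -> Set[str]:
    # Lowercase tokens with trailing punctuation stripped.
    return {w.strip("?.,").lower() for w in text.split()}


class ContextStore:
    """Hypothetical in-memory stand-in for a company-owned context layer."""

    def __init__(self, entries: List[TableContext]) -> None:
        self._entries = list(entries)

    def search(self, question: str, trusted_only: bool = True) -> List[TableContext]:
        # Naive keyword overlap; a real system might use SQL or vector search.
        terms = _words(question)
        hits = []
        for entry in self._entries:
            if trusted_only and not entry.trusted:
                continue
            if terms & _words(entry.readme):
                hits.append(entry)
        return hits


def build_prompt(store: ContextStore, question: str) -> str:
    """Assemble the prompt any model would receive, context first."""
    hits = store.search(question)
    context = "\n".join(f"- {t.name}: {t.readme}" for t in hits)
    return f"Context:\n{context}\n\nQuestion: {question}"


store = ContextStore(
    [
        TableContext("analytics.churn_monthly", "Monthly customer churn by segment", True),
        TableContext("scratch.churn_tmp", "Unreviewed churn experiment", False),
        TableContext("finance.invoices", "Invoice line items by customer", True),
    ]
)
prompt = build_prompt(store, "What was churn last quarter?")
```

The lookup happens in a store you control, and only the assembled prompt reaches the model. Swapping the model or the agent framework leaves the store and its trust flags untouched.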

Over time, context agents won’t just fix metadata. They’ll start fixing the data itself, because they’ll be the first system that actually sees where agents are breaking and why.

The biggest risk in the agentic era: Not building a context moat

The real challenge for most data platform leaders is getting a complex, multi-divisional business to fund a platform whose ROI shows up later, and often for someone else’s use case. It’s a familiar enterprise problem, and it’s acute right now because the AI landscape rewards the companies that move before the business case is airtight.

The biggest risk in the agentic era isn’t picking the wrong model. Models will keep improving. It isn’t picking the wrong harness either. Those will keep opening up. The biggest risk is not taking the risk, and letting someone else’s three-person startup with a GCP account build the context moat that should have been yours.

Get the intelligence layer right by staying open. Get the harness layer right by staying open. And then get serious about the one layer that’s actually yours: context.