If you’re evaluating Informatica EDC alternatives, you’re probably running into one of three friction points: metadata that lags 6–24 hours behind cloud stack changes, IPU pricing that’s hard to forecast as volumes grow, or a catalog that only your data stewards actually use. This guide compares nine alternatives on architecture, lineage depth, pricing and fit.

Quick Comparison: 9 Informatica EDC Alternatives at a Glance

| Alternative | Best For | Architecture | Lineage Depth | Pricing Model | Setup Time |
|---|---|---|---|---|---|
| Atlan | Cloud-native Snowflake / Databricks / dbt stacks | Active metadata | Automated column-level, 200+ connectors | Per data asset | Weeks |
| Alation | Large enterprises with mature BI and SQL-heavy teams | Search-driven, behavioral analytics | Strong, BI-connector depth | Per user | Months |
| Collibra | Regulated industries (financial, healthcare) | Policy & workflow engine | Strong | Enterprise contract | 12–24 weeks |
| Microsoft Purview | Azure-first organizations | Native Azure integration | Solid within Microsoft ecosystem | Azure consumption | Days to weeks |
| Databricks Unity Catalog | Teams running primarily on Databricks | Native lakehouse | Zero-latency within Databricks | Bundled with Databricks | Hours |
| DataHub (OSS) | Engineering-led teams who self-host | API-first, open-source | Strong, extensible | Free + infra cost | 2–3 months |
| Apache Atlas | Existing Hadoop / HDP infrastructure | Hadoop native | HDFS / HBase focused | Free + support cost | Variable |
| erwin Data Intelligence | Teams with existing erwin modeling investments | Modeling-integrated | Automated from data model | Enterprise contract | Variable |
| Octopai | Mid-market needing fast lineage without heavy setup | Agentless | BI-focused automated lineage | Mid-market | 2–4 weeks |

What is the difference between Informatica EDC and Informatica PowerCenter?

Informatica sells multiple distinct product lines: ETL and integration tools (PowerCenter, IICS) and data catalog and governance tools (EDC, CDGC). A common mistake in comparison articles is conflating these categories, recommending data pipeline tools to teams that actually need a metadata catalog. This section clarifies what you should be comparing.

Informatica PowerCenter and Informatica Intelligent Cloud Services (IICS) are data integration products. They extract, transform and load data between systems. Informatica Enterprise Data Catalog (EDC) and Cloud Data Governance and Catalog (CDGC) are metadata management products. They index, classify and govern data assets across your stack. These are fundamentally different product categories solving different problems. When a listicle recommends Talend or Fivetran as an “Informatica alternative,” it’s comparing integration tools, not catalog tools. If your pain point is metadata discovery, lineage visibility or governance enforcement, those recommendations are irrelevant.

If your data team struggles to find trusted data assets, trace lineage for compliance audits or enforce governance policies at scale, you are evaluating the right category. The alternatives in this guide address those specific problems. If your primary need is replacing batch ETL pipelines or modernizing data ingestion from mainframes to cloud, this is not the right guide. Informatica PowerCenter alternatives are a separate evaluation with a different set of criteria and competitors.

Why are data teams reconsidering Informatica EDC in 2026?

Three factors are driving teams away from Informatica EDC: scheduled crawls that lag 6–24 hours behind cloud schema changes, IPU-based pricing that becomes unpredictable as metadata volumes grow, and an interface built for specialist administrators rather than broad team adoption. The Salesforce acquisition adds a fourth concern: roadmap neutrality.

If your infrastructure is shifting toward Snowflake, Databricks or dbt, you’re probably running into the same three problems: architecture limitations in cloud environments, pricing unpredictability and low catalog adoption outside specialist teams. Each makes the others worse.

Why do Informatica EDC’s scheduled crawls fail in cloud-native environments?

Informatica EDC was built for on-premises data environments where metadata changed on predictable schedules. Its core architecture relies on scheduled crawls that scan data sources at configured intervals, then update the catalog in batch. If your team runs on Snowflake, Databricks or dbt, where schemas evolve continuously and pipelines deploy 10–50 times per day, this creates a persistent gap between the actual state of data and what the catalog reflects. Catalog entries can lag behind real data changes by 6–24 hours. When analysts query the catalog and find stale metadata, they stop trusting it, and an untrusted catalog is functionally equivalent to no catalog at all.
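To make the lag concrete, here is a minimal sketch of the arithmetic behind a fixed-interval crawl. It assumes deployments are spread evenly across the day, which is a simplification for illustration; the function name and the returned keys are hypothetical and not taken from any Informatica documentation.

```python
def crawl_staleness(crawl_interval_hours: float, deploys_per_day: int) -> dict:
    """Estimate catalog staleness for a crawl that runs on a fixed interval.

    Assumes deployments are spread evenly over 24 hours (an illustrative
    simplification, not a measured distribution).
    """
    if crawl_interval_hours <= 0:
        raise ValueError("crawl_interval_hours must be positive")
    # A change made right after a crawl waits a full interval; one made
    # right before the next crawl waits almost nothing. The mean is half.
    worst_case_lag = crawl_interval_hours
    average_lag = crawl_interval_hours / 2
    # Deployments that pile up between two consecutive crawls.
    changes_between_crawls = deploys_per_day * crawl_interval_hours / 24
    return {
        "worst_case_lag_hours": worst_case_lag,
        "average_lag_hours": average_lag,
        "changes_between_crawls": changes_between_crawls,
    }


# The ranges quoted above: 6-24 hour crawls, 10-50 deployments per day.
for interval in (6, 24):
    for deploys in (10, 50):
        print(interval, deploys, crawl_staleness(interval, deploys))
```

Under these assumptions, a daily crawl on a team deploying 50 times a day can leave up to 50 changes unreflected before the next scan, which is the gap that erodes trust in the catalog.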

How does Informatica’s IPU pricing model create budget unpredictability?

Informatica uses a consumption-based pricing model built around Informatica Processing Units (IPUs). IPU consumption scales with the volume of metadata processed, the number of connectors active and the frequency of catalog operations. If your metadata volumes are growing (which describes nearly every team expanding its cloud data stack), predicting quarterly IPU consumption is difficult. G2 reviews indicate that 40% of Informatica customers cite pricing unpredictability as a top concern. Customers on complex multi-source deployments frequently report that actual spend diverges from initial estimates as metadata volumes grow. This makes budget forecasting unreliable and creates friction with finance teams accustomed to predictable software licensing costs.

Why does Informatica EDC struggle with adoption beyond data stewards?

Informatica EDC was designed primarily for data stewards and catalog administrators. Its interface reflects that audience: powerful for specialist users, but requiring meaningful training for business analysts, data engineers and other stakeholders. When catalog adoption is limited to a small group of specialists (often fewer than one in five team members), you don’t realize the full value of your metadata investment. The catalog becomes a tool maintained by a few rather than a shared resource used by many.

HelloFresh experienced this pattern directly. After switching to Atlan, HelloFresh grew from roughly 10% catalog adoption to over 500 monthly active users within three months. That adoption shift turned their catalog from a governance checkbox into an operational resource used across analytics, engineering and business teams.

How does the Salesforce acquisition affect Informatica EDC’s roadmap neutrality?

Salesforce completed its $8 billion acquisition of Informatica on November 18, 2025. For the data community, this raises an immediate concern: Informatica built its enterprise reputation on being the “Switzerland of data,” a multi-cloud, neutral integration platform that worked equally well with AWS, Azure, Google Cloud, Snowflake, and Databricks. Under Salesforce ownership, that neutrality is in question. Salesforce also owns MuleSoft, creating significant product overlap in the integration space. If your ecosystem isn’t built around Salesforce, you’re right to evaluate whether Informatica’s roadmap will continue to serve your interests.

What should you look for in an Informatica EDC alternative?

Prioritize six capabilities: automated column-level lineage, active metadata monitoring, predictable pricing, native integrations with your cloud stack (Snowflake, Databricks, dbt), self-serve discovery for non-technical users, and open APIs for extensibility. Your weighting should reflect your primary pain point, because not all six matter equally.

Six capabilities consistently separate modern catalogs from legacy platforms. Map the ones below to the gaps creating the most operational friction today.

| Capability | Why It Matters |
|---|---|
| Automated column-level lineage | Full data flow visibility without manual setup per source |
| Active metadata monitoring | Near-real-time reflection of schema and pipeline changes |
| Predictable pricing | Cost trajectory tied to assets cataloged, not consumption spikes |
| Native cloud integrations | Deep connectors for Snowflake, Databricks, dbt, not generic JDBC |
| Self-serve discovery | Interface usable by analysts and engineers, not just catalog admins |
| Open APIs | Extensibility for custom integrations and programmatic access |

If you’re running 80–90% of workloads on Databricks, weight native platform integration more heavily than if you’re operating a hybrid on-prem and cloud stack with diverse data sources. Similarly, if your team faces BCBS 239 compliance deadlines, you’ll prioritize formal governance workflow automation over self-serve discovery features. There’s no universally correct weighting. Map the criteria above to your actual environment and rank by the gaps that create the most operational friction today.
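One way to make that weighting explicit is a simple weighted scoring matrix. The sketch below is illustrative only: the capability keys mirror the six rows in the table, while the weights and the two sets of ratings are hypothetical placeholders that you would replace with your own priorities and proof-of-concept findings.

```python
CAPABILITIES = [
    "column_lineage",
    "active_metadata",
    "predictable_pricing",
    "native_integrations",
    "self_serve_discovery",
    "open_apis",
]


def weighted_score(weights: dict, ratings: dict) -> float:
    """Return the weighted average of 0-5 capability ratings.

    weights: relative importance per capability (any non-negative scale)
    ratings: your own 0-5 assessment of one candidate per capability
    """
    total_weight = sum(weights[c] for c in CAPABILITIES)
    if total_weight == 0:
        raise ValueError("at least one weight must be non-zero")
    return sum(weights[c] * ratings[c] for c in CAPABILITIES) / total_weight


# Hypothetical weighting for a Databricks-heavy team: integrations dominate.
weights = {
    "column_lineage": 3,
    "active_metadata": 2,
    "predictable_pricing": 2,
    "native_integrations": 5,
    "self_serve_discovery": 1,
    "open_apis": 1,
}

# Placeholder ratings for two unnamed candidates (not real vendor scores).
candidate_a = dict(zip(CAPABILITIES, [4, 4, 3, 5, 3, 4]))
candidate_b = dict(zip(CAPABILITIES, [5, 3, 4, 2, 5, 3]))

for name, ratings in (("A", candidate_a), ("B", candidate_b)):
    print(name, round(weighted_score(weights, ratings), 2))
```

Changing the weights, for example raising self-serve discovery for an adoption-driven evaluation, can reverse the ranking, which is the point of scoring against your own environment rather than a generic feature matrix.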

The 9 best Informatica EDC alternatives

The strongest Informatica EDC alternatives in 2026 are Atlan (cloud-native stacks), Alation (BI-heavy enterprises), Collibra (regulated industries), Microsoft Purview (Azure-first environments), Databricks Unity Catalog (Databricks-primary teams), and DataHub (engineering-led open-source). Options 7–9 cover specialist needs: legacy Hadoop infrastructure, modeling tool integration, and fast lineage without a full governance engine.

1. Atlan

Best for: Cloud-native data stacks on Snowflake, Databricks, dbt or Fivetran

Atlan’s core architectural difference from Informatica EDC is its active metadata approach. Where EDC relies on scheduled crawls that scan data sources at configured intervals, Atlan monitors data systems continuously across 200+ connectors and propagates metadata changes in near-real time. When a dbt model changes, a Snowflake schema evolves or a Fivetran pipeline updates, Atlan reflects that change without waiting for the next crawl cycle. If your team deploys multiple times per day, this eliminates the metadata lag that erodes catalog trust.

Column-level lineage in Atlan is automated across more than 200 connectors without manual configuration. Informatica EDC offers column-level lineage, but it typically requires setup and tuning for each data source. Atlan’s approach reduces the implementation burden, particularly for organizations with diverse data stacks spanning cloud warehouses, BI tools and transformation layers. This lineage depth is directly relevant for GDPR, CCPA and emerging AI governance requirements where regulators expect traceable data flows from source to consumption.

Atlan’s pricing model charges per data asset rather than through consumption-based IPUs. For a growing team, this creates more predictable cost trajectories. As metadata volumes expand (which they will in any active data environment), per-asset pricing scales linearly rather than producing the variable consumption spikes that IPU models can generate. This makes budget forecasting straightforward for finance teams reviewing annual software spend.
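The difference between the two pricing shapes can be sketched with a toy model. Everything numeric here is an assumption made for illustration: the per-asset price, the unit price and the consumption formula are hypothetical and do not represent Atlan’s or Informatica’s actual rate cards.

```python
def per_asset_cost(assets: int, price_per_asset: float) -> float:
    """Linear model: the bill depends only on the number of cataloged assets."""
    return assets * price_per_asset


def consumption_cost(
    assets: int,
    scans_per_month: int,
    connectors: int,
    assets_per_unit: int = 1000,    # hypothetical: asset scans covered by one unit
    units_per_connector: int = 10,  # hypothetical: monthly overhead per connector
    price_per_unit: float = 10.0,   # hypothetical unit price
) -> float:
    """Toy consumption model, NOT a vendor formula.

    Units grow with metadata volume, operation frequency and the number
    of active connectors.
    """
    units = assets * scans_per_month / assets_per_unit
    units += connectors * units_per_connector
    return units * price_per_unit


# Same 100,000 assets; scan frequency doubles from 30 to 60 runs per month.
for scans in (30, 60):
    print(
        scans,
        per_asset_cost(100_000, 0.5),
        consumption_cost(100_000, scans, connectors=20),
    )
```

In this toy model, doubling scan frequency nearly doubles the consumption bill while the per-asset bill stays flat. Real contracts will differ, so treat the numbers as placeholders for your own quotes.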

When to choose Atlan: Organizations migrating from on-premises infrastructure to cloud-native stacks, or teams where low catalog adoption is the primary pain point. Atlan’s self-serve interface is designed for business analysts, data engineers and non-technical stakeholders, not just catalog administrators. It’s not the strongest fit if you have heavy mainframe or legacy Hadoop metadata that requires specialist connectors Informatica has developed over decades.

HelloFresh: From 10% catalog adoption to 500+ monthly active users in 3 months

"With Informatica, adoption was extremely low despite significant manual effort from our team. Within the first three months of using Atlan, we achieved over 500 monthly active users—more than 3x the adoption we saw with previous tools."

Natalie Hallak, Director of Global Data Management

HelloFresh

2. Alation

Best for: Large enterprises with mature BI environments and high governance maturity

Alation’s catalog is built around search-driven discovery and behavioral analytics. It tracks how analysts query and interact with data assets, then surfaces recommendations based on usage patterns. This approach works well in organizations with large Tableau, MicroStrategy or Looker deployments where analysts rely heavily on SQL-based exploration. Alation’s connector depth for legacy BI tools is among the strongest in the category, which matters for enterprises with long-standing reporting infrastructure that predates cloud migration.

Alation has an established presence in regulated industries, with hundreds of enterprise customers including a substantial Fortune 500 footprint. Its catalog maturity reflects years of iteration on enterprise requirements: trust scoring, data quality flags and collaborative documentation features are well-developed. If your primary use case is helping analysts find and trust the right dataset for reporting, Alation’s search-first design is a genuine strength.

When to choose Alation: Organizations with large Tableau or MicroStrategy deployments and heavy reliance on SQL-based discovery patterns. Consider Alation if business analyst adoption is a primary driver and the team is accustomed to a search-first catalog interface.

Key consideration: Alation’s governance automation is less deep than Collibra’s for policy enforcement workflows. Pricing is per-user rather than per-asset, which can scale unpredictably as catalog adoption grows.

3. Collibra

Best for: Regulated industries (financial services, healthcare, insurance) requiring formal governance workflows

Collibra is built around policy management, data stewardship workflows and audit trails, serving over 700 enterprise customers globally. For organizations subject to GDPR, CCPA, BCBS 239 or similar regulatory frameworks, Collibra’s formal workflow engine is a genuine differentiator. It supports configurable approval chains, stewardship assignments and compliance reporting out of the box. In heavily regulated environments where governance is not optional but mandated, Collibra’s depth of workflow automation reduces the manual coordination that compliance teams typically absorb.

Collibra’s governance operating model is comprehensive, but that comprehensiveness comes with implementation cost. Deployments typically require dedicated professional services engagement, 12–24 week timelines and organizational change management. The platform’s value compounds over time as governance workflows mature, but teams expecting fast time-to-value or self-serve adoption out of the box will find the ramp-up significant. Collibra is not a “start small and expand” tool. It is an enterprise governance platform that delivers its full value when deployed at scale.

When to choose Collibra: If your data governance is driven by a formal compliance mandate and the budget supports a multi-month implementation. Not typically a fit for teams wanting quick time-to-value or lightweight, self-serve adoption.

4. Microsoft Purview

Best for: Organizations running primarily on Azure, Microsoft 365 or Microsoft Fabric

Microsoft Purview’s deepest value is in environments already standardized on Microsoft infrastructure. It scans Azure Data Lake, Azure SQL, Synapse and Microsoft 365 data sources across 25+ native connectors with no additional licensing beyond what the organization already pays for its Microsoft cloud services. For Azure-first organizations, this eliminates a separate procurement process and reduces integration complexity. Purview’s lineage capabilities within the Microsoft ecosystem are solid and improving with each release cycle.

If you’re running a hybrid or multi-cloud environment with significant non-Microsoft data sources, Purview’s connector depth is more limited. Snowflake, Databricks and non-Microsoft BI tools are supported, but the integration depth does not match what purpose-built catalog platforms offer for those ecosystems. If you’re running a mixed stack, evaluate whether Purview’s connectors cover your critical data sources with sufficient depth, particularly for lineage tracking across non-Microsoft transformation layers.

When to choose Microsoft Purview: Azure-first organizations that want catalog and governance consolidated into their existing Microsoft licensing. Consider alternatives if your stack is primarily Snowflake or Databricks and you need deep native integration with those platforms.

5. Databricks Unity Catalog

Best for: Teams running primarily on Databricks Lakehouse Platform

Unity Catalog provides governance, lineage and discovery natively within Databricks. There is no separate catalog tool to procure, deploy or integrate. If Databricks is your primary analytics platform, Unity Catalog eliminates the integration complexity of connecting an external catalog to the lakehouse. Lineage is captured at zero latency within Databricks workloads, and access controls are enforced at the catalog level rather than through external policy engines. This tight coupling between compute and governance is a genuine architectural advantage.

Unity Catalog’s scope is bounded by what lives in Databricks. Data assets in Snowflake, Azure SQL, external BI tools or other systems outside the Databricks environment require additional configuration or fall outside Unity Catalog’s coverage entirely. If 80% or more of your data processing runs through Databricks (Databricks reports over 10,000 customers as of 2025), this limitation is manageable. If you have significant data assets outside Databricks, Unity Catalog functions as one component of a broader governance architecture rather than a standalone enterprise catalog.

When to choose Unity Catalog: Teams where the majority of data processing runs through Databricks and the goal is governance within that platform. Not a fit as a standalone enterprise catalog for organizations with significant data assets across multiple platforms.

6. DataHub (open source)

Best for: Engineering-led data teams with capacity to self-host and customize

DataHub is an open-source metadata platform originally developed at LinkedIn, now maintained by Acryl Data with over 11,000 GitHub stars and 2,500+ community contributors. It provides data discovery, lineage and observability with no licensing cost. The API-first architecture supports high customization: teams can build custom ingestion pipelines, extend the metadata model and integrate DataHub into existing developer workflows. If your platform engineering team wants full control over catalog infrastructure, DataHub provides that flexibility.

Self-hosting DataHub requires meaningful engineering investment, typically 2–3 months for initial production deployment. Deployment, infrastructure management, upgrades and integration work all fall on the internal team. The total cost of ownership includes engineering time rather than licensing fees, which shifts the cost structure but does not eliminate it. Evaluate whether your platform team has the capacity to maintain a production metadata platform alongside its other responsibilities. For teams with that capacity, DataHub offers a level of architectural control that commercial platforms do not.

When to choose DataHub: Organizations with strong platform engineering teams that want full control over their catalog infrastructure and are prepared to invest engineering capacity in deployment and maintenance. Not a fit for teams without dedicated platform engineering resources.

7. Apache Atlas

Best for: Organizations with existing Hadoop/HDP infrastructure and established Atlas investment

Apache Atlas was designed for the Hadoop ecosystem. If you’ve already built governance workflows on top of Atlas in Hortonworks Data Platform (HDP) or Cloudera Data Platform (CDP) environments, it remains a functional catalog for on-premises HDFS and HBase assets. Atlas provides metadata classification, lineage tracking and access control within that ecosystem. For greenfield deployments or cloud-native stacks, Atlas is not a common choice and its development pace has slowed relative to commercial alternatives and DataHub.

When to choose Apache Atlas: Existing Hadoop shops that need to maintain catalog coverage for on-prem HDFS and HBase assets without adding new tooling. Not recommended as a primary catalog for modern cloud-native data stacks.

8. erwin Data Intelligence

Best for: Organizations with existing investments in erwin’s data modeling suite

erwin Data Intelligence combines data catalog, governance and data modeling capabilities in a single platform. If your team relies heavily on erwin’s modeling tools for database design and enterprise architecture documentation, the integration between the model and the catalog reduces manual synchronization. Changes in the data model flow into the catalog automatically, which keeps technical metadata aligned with design-time documentation. This matters most if data modeling is a core governance function.

When to choose erwin: Organizations where data modeling is a core function and the team wants catalog and modeling in one system. Not a common choice for organizations without existing erwin investments.

9. Octopai

Best for: Mid-market teams needing fast automated lineage without a long implementation

Octopai focuses on automated metadata discovery and lineage using an agentless approach. It scans BI tools and databases without requiring agent installation on source systems, which reduces deployment time to 2–4 weeks compared to 12–24 weeks for heavier enterprise platforms. If you need lineage visibility quickly and don’t require a full governance workflow engine, Octopai provides a focused solution with faster time-to-value than platforms like Collibra or Informatica EDC.

When to choose Octopai: Mid-size organizations where lineage coverage is the primary need and speed of deployment matters more than governance workflow depth. Not typically a fit for organizations needing comprehensive policy management or stewardship automation.

When Informatica EDC is still the right choice

Switching platforms carries real cost. Informatica EDC remains the right choice if you rely on its legacy system connectors (mainframe, AS/400, COBOL), have active governance workflows built on CLAIRE AI, carry bundled enterprise contracts that make unbundling financially disadvantageous, or are in the middle of an active compliance audit cycle. Here are the specific scenarios where staying on Informatica is the stronger call.

Your organization runs mainframe or legacy system metadata at scale with existing Informatica connectors, and those sources are not migrating to cloud. Informatica’s connector library for legacy systems (mainframe, AS/400, COBOL copybooks) covers 100+ enterprise sources and is broader than any alternative on this list.

You have a significant existing investment in Informatica’s CLAIRE AI metadata engine and active governance workflows built on top of it. Rebuilding those workflows in a new platform carries real migration cost and operational risk.

Your contracts bundle EDC with Informatica’s broader platform (PowerCenter, IICS, MDM) and unbundling creates more friction than switching delivers value. Enterprise license agreements often make it financially disadvantageous to remove individual products.

You are in a heavily regulated industry with audit trails already embedded in Informatica’s governance workflow and a compliance certification cycle is approaching. Switching catalogs during an active audit period introduces unnecessary risk.

Your metadata volumes are very large and on-premises, and cloud migration is not on the roadmap within 24 months. Informatica EDC’s on-premises deployment model is mature and proven at scale in these environments.

If none of these conditions apply to your situation, evaluating alternatives is a reasonable step.

How do you choose between Informatica EDC and its alternatives?

Choosing the right alternative depends on two primary factors: your data stack composition and your organizational profile. Most successful evaluations narrow the field to 2–3 finalists within 2 weeks, then run a 4-week proof of concept against real data sources. The frameworks below provide a starting point for that narrowing process.

By data stack:

- Primarily Snowflake, Databricks or dbt: Consider Atlan or Databricks Unity Catalog first. Both offer native integration depth with these platforms that Informatica EDC does not match.

- Primarily Azure/Microsoft: Consider Microsoft Purview first. Native licensing and integration advantages reduce total cost of ownership.

- Mixed cloud with legacy BI (Tableau, MicroStrategy): Consider Alation or Atlan. Both handle hybrid environments with strong BI connector depth.

- Primarily on-prem with Hadoop: Consider Apache Atlas or evaluate full cloud migration first. Investing in a new on-prem catalog may not be the highest-value decision.

- Need formal governance workflow automation: Consider Collibra alongside Atlan. Both offer governance workflows, with Collibra providing deeper policy enforcement and Atlan providing faster time-to-value.

By company profile:

- Mid-market (50–500 employees, cloud-native stack): Atlan, Purview or DataHub. These platforms offer the fastest path to value without requiring multi-month enterprise implementations.

- Enterprise (500+ employees, mixed environments): Atlan, Alation, Collibra or Purview based on primary pain point. Run a POC against your actual data sources rather than relying on feature matrices alone.

- Enterprise (regulated industry): Collibra or Atlan with governance package. Prioritize audit trail depth and policy workflow automation in your evaluation.

- Engineering-led platform team: DataHub or Atlan. DataHub if you want full architectural control and can absorb maintenance cost; Atlan if you want a managed platform with strong API extensibility.
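The two lists above can be combined into a small lookup that produces a starting shortlist. This is a sketch of one possible way to encode them: the dictionary keys and the `shortlist` function are hypothetical names, and the ordering rule (candidates that appear in both lists come first) is an assumption made for the example, not a recommendation from any vendor.

```python
SHORTLIST_BY_STACK = {
    "snowflake_databricks_dbt": ["Atlan", "Databricks Unity Catalog"],
    "azure_microsoft": ["Microsoft Purview"],
    "mixed_cloud_legacy_bi": ["Alation", "Atlan"],
    "on_prem_hadoop": ["Apache Atlas"],
    "formal_governance": ["Collibra", "Atlan"],
}

SHORTLIST_BY_PROFILE = {
    "mid_market": ["Atlan", "Microsoft Purview", "DataHub"],
    "enterprise_mixed": ["Atlan", "Alation", "Collibra", "Microsoft Purview"],
    "enterprise_regulated": ["Collibra", "Atlan"],
    "engineering_led": ["DataHub", "Atlan"],
}


def shortlist(stack: str, profile: str, limit: int = 3) -> list[str]:
    """Return up to `limit` candidates for a stack and company profile.

    Candidates suggested by both lists come first, then stack-only
    suggestions, then profile-only suggestions.
    """
    by_stack = SHORTLIST_BY_STACK[stack]
    by_profile = SHORTLIST_BY_PROFILE[profile]
    both = [v for v in by_stack if v in by_profile]
    stack_only = [v for v in by_stack if v not in by_profile]
    profile_only = [v for v in by_profile if v not in by_stack]
    return (both + stack_only + profile_only)[:limit]


print(shortlist("snowflake_databricks_dbt", "engineering_led"))
print(shortlist("azure_microsoft", "enterprise_regulated"))
```

The default `limit` of three matches the 2–3 finalists suggested above. The output is a starting point for a proof of concept, not a substitute for one.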

What does migrating away from Informatica EDC involve?

Migration from Informatica EDC is a structured process with well-understood phases that typically spans 8–16 weeks for mid-market deployments. The technical metadata transfer is typically not the bottleneck, accounting for roughly 20% of total project time. Governance workflow remapping and user training consume the remaining 80% of calendar time.
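As a rough planning aid, the 20/80 split can be turned into week counts. The sketch below only restates the proportions quoted above; the function name and dictionary keys are hypothetical, and your own split may differ.

```python
def split_timeline(total_weeks: float, technical_share: float = 0.20) -> dict:
    """Split a migration timeline into technical and non-technical weeks.

    technical_share defaults to the roughly 20% figure quoted in the text.
    """
    if not 0 <= technical_share <= 1:
        raise ValueError("technical_share must be between 0 and 1")
    technical = round(total_weeks * technical_share, 1)
    return {
        "technical_transfer_weeks": technical,
        "governance_and_training_weeks": round(total_weeks - technical, 1),
    }


# The 8-16 week range quoted for mid-market deployments.
for weeks in (8, 16):
    print(weeks, split_timeline(weeks))
```

For the 8–16 week range, that works out to roughly 1.6 to 3.2 weeks of technical transfer and 6.4 to 12.8 weeks of governance remapping and training.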

Metadata export: Informatica EDC supports metadata export via its REST API. The primary asset types (technical metadata, business glossary terms, custom attributes and lineage relationships) can be exported in structured formats. You’ll typically export tens of thousands to hundreds of thousands of metadata assets during this phase. Most target platforms provide import utilities or professional services to facilitate the transfer.

Connector remapping: Each data source connected to Informatica EDC needs to be reconnected in the target catalog. If you have 50 or more sources, this is typically the most time-consuming technical phase. Prioritize critical sources first and migrate secondary sources in subsequent waves.

User migration and training: Business glossary structures and stewardship assignments often need manual review rather than automated migration. Terminology mappings, ownership assignments and approval workflows rarely transfer one-to-one between platforms. Plan for 2–4 week review cycles with business stakeholders.

Parallel running period: Running both systems simultaneously for 4–8 weeks lets you validate metadata coverage, lineage accuracy and governance workflow behavior before cutting over. This overlap period is where you’ll catch most gaps that would otherwise surface in production.
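A simple coverage check during the parallel run is to compare the set of assets each catalog knows about. The sketch below assumes you have already exported asset identifiers from both systems and normalized them to fully qualified names; the sample names and the `coverage_report` function are hypothetical.

```python
def coverage_report(legacy_assets: set, target_assets: set) -> dict:
    """Compare asset identifiers exported from the old and new catalogs."""
    matched = legacy_assets & target_assets
    # With nothing in the legacy catalog there is nothing to miss.
    coverage = len(matched) / len(legacy_assets) if legacy_assets else 1.0
    return {
        "coverage": coverage,
        "missing_in_target": sorted(legacy_assets - target_assets),
        "new_in_target": sorted(target_assets - legacy_assets),
    }


# Hypothetical exports, normalized to schema.table names.
legacy = {"sales.orders", "sales.customers", "finance.ledger", "hr.employees"}
target = {"sales.orders", "sales.customers", "finance.ledger", "marketing.campaigns"}

report = coverage_report(legacy, target)
print(report)
```

Anything listed under `missing_in_target` is a candidate for a connector that was not remapped or a source that was scoped out. The same pattern extends to lineage edges if you export them as source and target pairs.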

Typical mid-market migrations (50–200 data sources) take 8–16 weeks end to end, with the longest timelines driven by governance workflow remapping rather than technical metadata transfer.

Is it time to move on from Informatica EDC?

Informatica EDC remains a strong choice if you have large on-premises metadata estates, mainframe connectors or existing Informatica license bundles. Its limitations surface most clearly in cloud-native environments where scheduled crawls, IPU pricing and specialist-oriented interfaces create friction that compounds over time.

The shift to Snowflake, Databricks and dbt has exposed specific gaps where purpose-built alternatives are a better fit. Atlan addresses cloud-native adoption and active metadata needs. Collibra serves regulated industries requiring formal governance automation. Microsoft Purview consolidates catalog within Azure. DataHub and Apache Atlas offer open-source flexibility for engineering-led teams.

Rather than relying on feature matrices alone, run a 4–6 week POC with two to three finalists against your actual data sources and user personas. The right tool is the one that solves your specific pain points, not the one with the longest feature list.

FAQs about Informatica EDC alternatives

What is the difference between Informatica EDC and Informatica PowerCenter?

Informatica EDC (Enterprise Data Catalog) is a metadata management and data governance product that indexes, classifies and tracks data assets across your organization. Informatica PowerCenter is a data integration and ETL tool that extracts, transform and loads data between systems. They serve fundamentally different purposes. EDC helps you find and govern data; PowerCenter moves and transforms it. Replacing one does not replace the other.

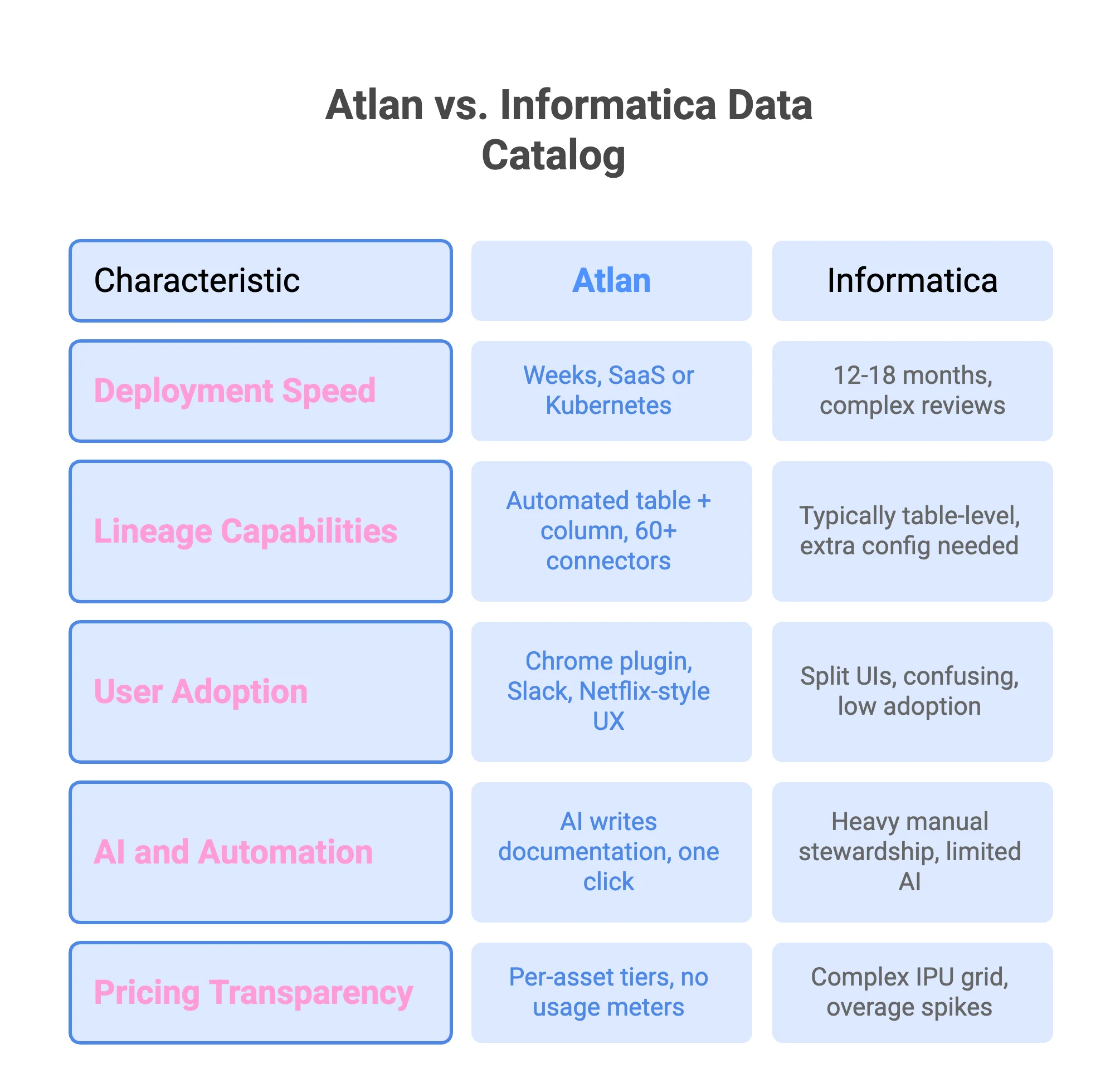

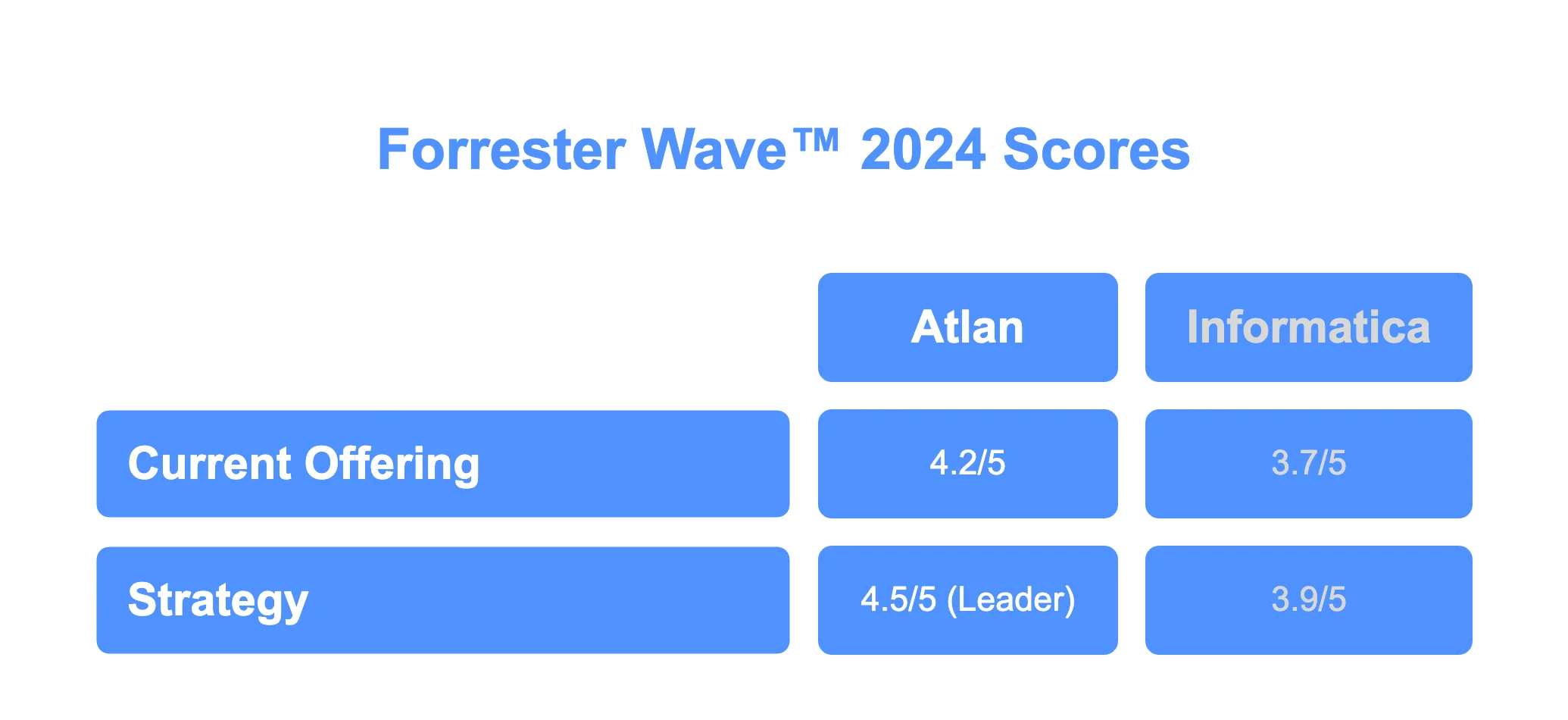

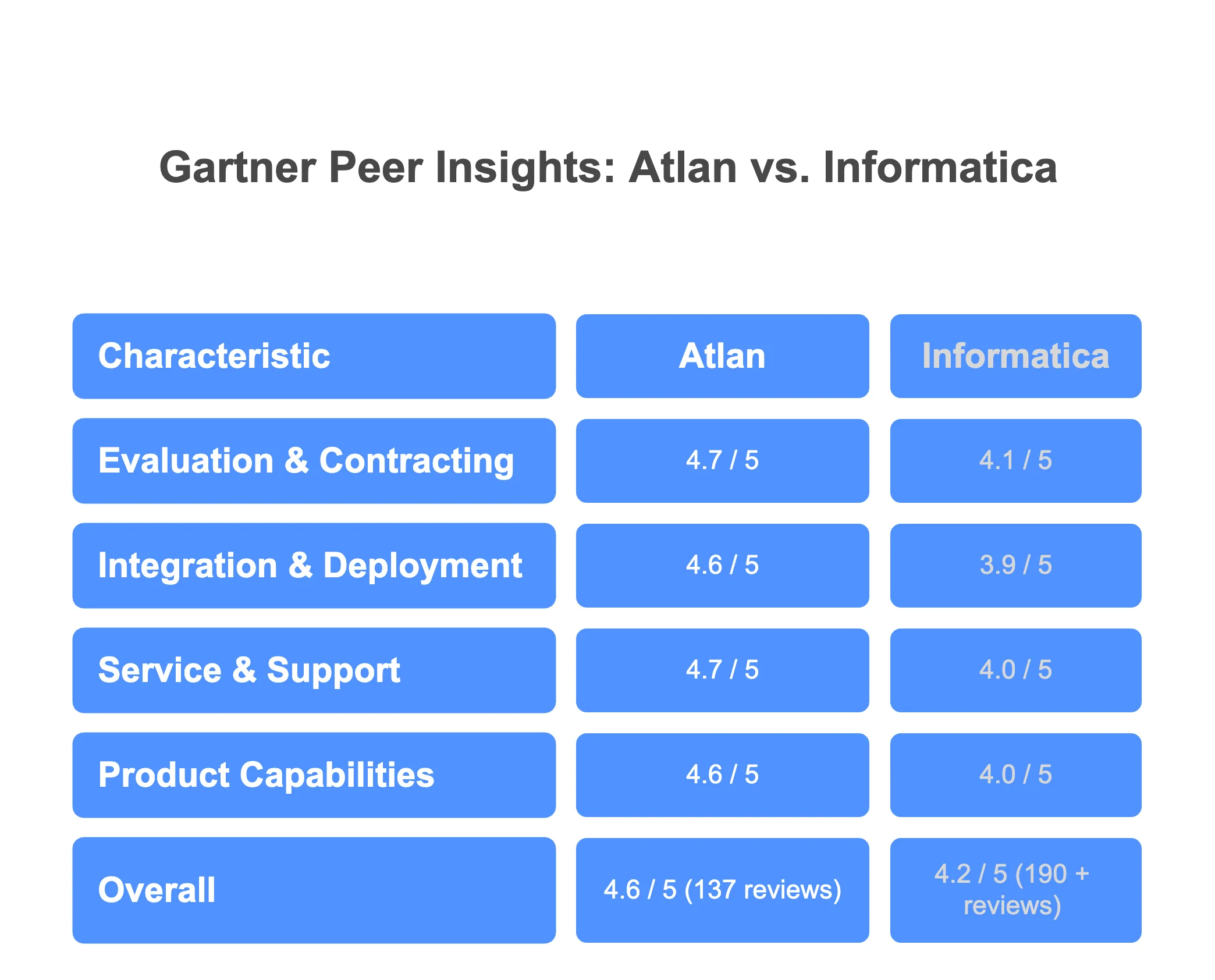

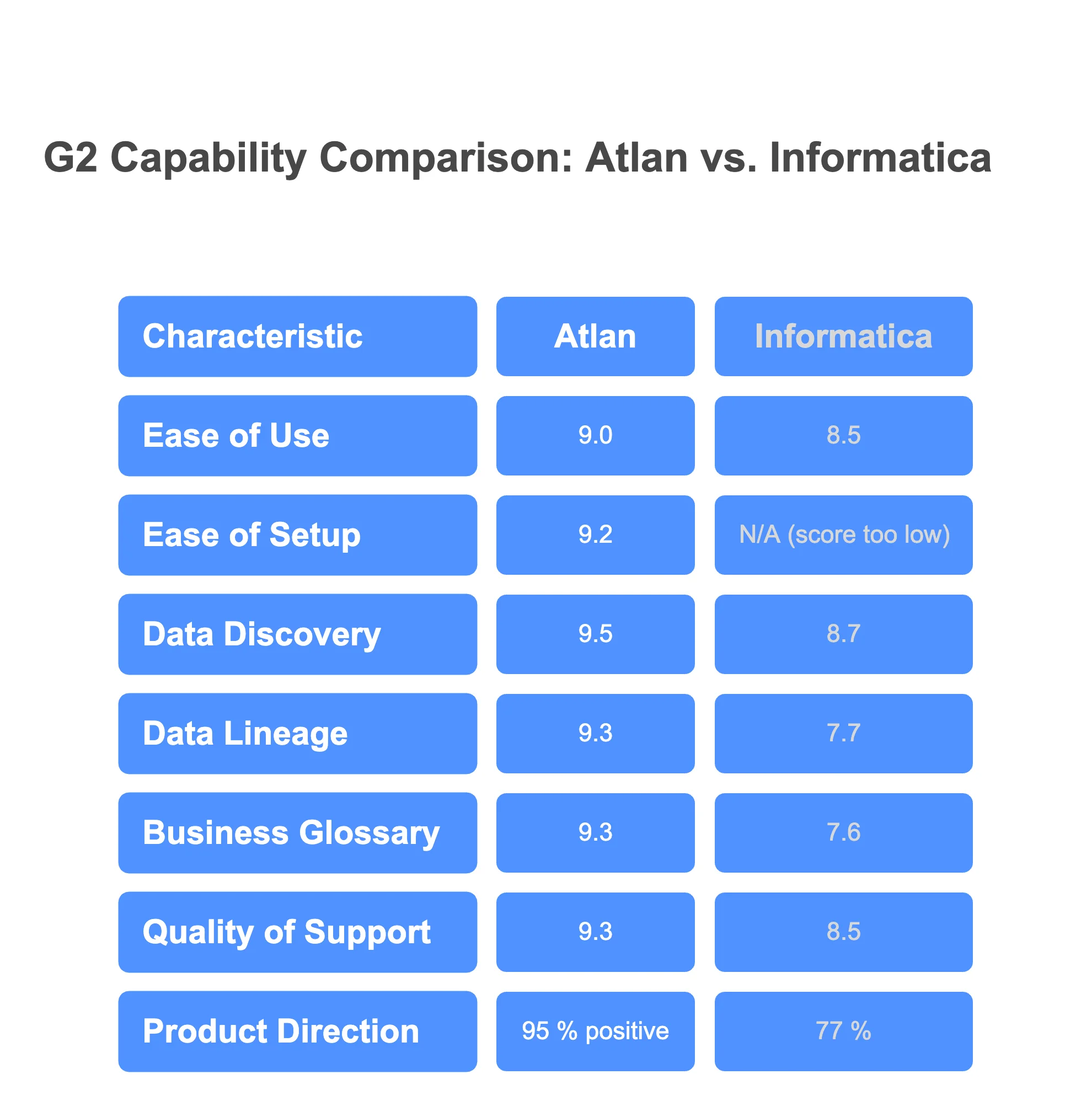

How does Atlan compare to Informatica EDC?

Atlan differs from Informatica EDC primarily in architecture and pricing. Atlan uses active metadata monitoring that reflects changes continuously, while EDC relies on scheduled crawls. Atlan provides automated column-level lineage across 200+ connectors without manual configuration. Pricing is per data asset rather than IPU-based consumption. Informatica EDC has broader legacy system connector coverage, particularly for mainframe environments. Atlan is stronger for cloud-native stacks on Snowflake, Databricks and dbt.

Is there a free or open-source alternative to Informatica EDC?

DataHub and Apache Atlas are both open-source metadata platforms available at no licensing cost. DataHub, originally built at LinkedIn, offers data discovery, lineage and observability with an active community. Apache Atlas is designed for Hadoop environments. Both require engineering capacity to deploy and maintain. The total cost of ownership includes infrastructure and engineering time rather than software licensing, so “free” does not mean zero cost.

How long does it take to migrate from Informatica EDC to a modern data catalog?

Typical migrations for mid-market organizations with 50–200 data sources take 8–16 weeks. The technical metadata export via Informatica’s REST API is straightforward. Connector remapping (reconnecting each data source in the new platform) is usually the longest phase. Governance workflow remapping and user training add further calendar time. Running both systems in parallel for 4–8 weeks before cutover is standard practice.
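During the parallel run, one simple check is to compare the asset inventory of the legacy catalog against the new one. The following is a minimal sketch, assuming both catalogs can produce a list of fully qualified asset names. The function name `reconcile` and the sample names are hypothetical and are not part of any vendor's tooling.

```python
def reconcile(legacy_assets, new_assets):
    """Compare asset identifiers from two catalogs during a parallel run."""
    # Normalize so that case and stray whitespace do not cause false mismatches.
    legacy = {name.strip().lower() for name in legacy_assets}
    new = {name.strip().lower() for name in new_assets}
    matched = legacy & new
    return {
        # Assets the legacy catalog knows about but the new one does not.
        "missing_in_new": sorted(legacy - new),
        # Assets that only the new catalog has discovered.
        "only_in_new": sorted(new - legacy),
        # Share of legacy assets already present in the new catalog.
        "coverage": len(matched) / len(legacy) if legacy else 1.0,
    }


# Hypothetical sample inventories for illustration only.
report = reconcile(
    ["SALES.PUBLIC.ORDERS", "sales.public.customers", "hr.core.employees"],
    ["sales.public.orders", "sales.public.customers", "finance.gl.journal"],
)
print(report)
```

Tracking `coverage` week over week gives a rough signal for when connector remapping is complete enough to schedule cutover.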

Which Informatica EDC alternative is best for regulated industries?

Collibra and Atlan are the most commonly evaluated alternatives for regulated industries. Collibra offers the deepest formal governance workflow automation, including configurable approval chains, stewardship assignments and compliance reporting for frameworks like GDPR, CCPA and BCBS 239. Atlan provides governance capabilities with faster implementation timelines. The right choice depends on whether the priority is workflow depth (Collibra) or time-to-value with strong governance (Atlan).

Does Atlan support the same lineage depth as Informatica EDC?

Atlan provides automated column-level lineage across more than 200 connectors, including Snowflake, Databricks, dbt, Tableau, Looker and Power BI. Informatica EDC also supports column-level lineage but typically requires configuration per data source. For cloud-native data stacks, Atlan’s lineage coverage is comparable to or stronger than EDC’s. For legacy systems like mainframes and COBOL-based environments, Informatica EDC’s lineage connectors are more mature.

How does Informatica’s IPU pricing compare to alternatives?

Informatica’s IPU (Informatica Processing Unit) model is consumption-based, scaling with metadata volume and connector activity. This makes costs variable and harder to forecast. Atlan uses per-asset pricing, which scales linearly with data assets cataloged. Microsoft Purview uses Azure consumption-based pricing. Collibra and Alation use enterprise contract and per-user models respectively. DataHub and Apache Atlas are open-source with no licensing cost but require engineering investment to operate.
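The forecasting difference can be illustrated with a toy model. This is a sketch under stated assumptions, not either vendor's actual pricing formula: every rate below is a made-up placeholder, and the function names and the two-driver consumption formula are hypothetical simplifications of "metadata volume plus connector activity".

```python
def per_asset_cost(asset_count, rate_per_asset):
    """Linear model: cost depends on one driver, the number of cataloged assets."""
    return asset_count * rate_per_asset


def consumption_cost(metadata_units, connector_runs, rate_per_unit, rate_per_run):
    """Consumption model: cost depends on two drivers that can move independently."""
    return metadata_units * rate_per_unit + connector_runs * rate_per_run


# Placeholder rates for illustration only. These are NOT vendor prices.
RATE_PER_ASSET = 2
RATE_PER_UNIT = 1
RATE_PER_RUN = 5

assets = 10_000
linear = per_asset_cost(assets, RATE_PER_ASSET)

# Same asset count, but connector activity is uncertain, so the forecast is a range.
low = consumption_cost(assets, 500, RATE_PER_UNIT, RATE_PER_RUN)
high = consumption_cost(assets, 4_000, RATE_PER_UNIT, RATE_PER_RUN)

print("per-asset forecast:", linear)
print("consumption forecast range:", low, "to", high)
```

With a single driver, the per-asset forecast is one number for a given asset count. With two drivers, the same asset count produces a range that widens as scan frequency becomes less predictable.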

Which Informatica alternatives integrate natively with Snowflake and Databricks?

Atlan and Databricks Unity Catalog offer the deepest native integrations, though with different scope. Atlan provides active metadata monitoring and automated lineage for both Snowflake and Databricks as part of its 200+ connectors. Unity Catalog provides native governance within Databricks but does not extend to Snowflake. Microsoft Purview, Alation and Collibra also support Snowflake and Databricks, but through standard connectors rather than native platform integration.

Can I export my existing Informatica EDC metadata when switching?

Yes. Informatica EDC supports metadata export through its REST API. Technical metadata, business glossary terms, custom attributes and lineage relationships can be extracted in structured formats. Most modern catalog platforms (Atlan, Collibra, Alation) provide import utilities or professional services to facilitate migration. Plan for manual review of governance workflow mappings and stewardship assignments, as these rarely transfer automatically between platforms.
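An export of this kind usually comes down to paging through an API and writing each record to a structured file. The sketch below shows that loop in isolation. It assumes an offset-and-limit style of pagination and deliberately does not call Informatica's API: `fetch_page`, `export_all`, `write_jsonl` and the fake catalog are all hypothetical names, and the real endpoint, authentication and response shape should be taken from Informatica's REST API documentation.

```python
import io
import json
from typing import Callable, Iterable, Iterator


def export_all(fetch_page: Callable[[int, int], list], page_size: int = 100) -> Iterator[dict]:
    """Yield every record by requesting pages until a short or empty page arrives."""
    offset = 0
    while True:
        page = fetch_page(offset, page_size)
        if not page:
            break
        yield from page
        if len(page) < page_size:
            break  # A short page means there is nothing left to fetch.
        offset += len(page)


def write_jsonl(records: Iterable[dict], handle) -> int:
    """Write one JSON object per line and return the number of records written."""
    count = 0
    for record in records:
        handle.write(json.dumps(record, ensure_ascii=False) + "\n")
        count += 1
    return count


# Stand-in for the real API call: slices an in-memory list instead of using HTTP.
fake_catalog = [{"id": f"asset-{i}", "type": "table"} for i in range(250)]


def fake_fetch(offset: int, limit: int) -> list:
    return fake_catalog[offset:offset + limit]


buffer = io.StringIO()
exported = write_jsonl(export_all(fake_fetch, page_size=100), buffer)
print("records exported:", exported)
```

Passing the fetcher in as a function keeps the paging logic testable without network access. In a real migration, `fake_fetch` would be replaced by a function that wraps an authenticated HTTP request.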

What is Informatica CDGC and how does it relate to EDC?

Informatica CDGC (Cloud Data Governance and Catalog) is the cloud-native successor to Informatica EDC. It runs on Informatica’s Intelligent Data Management Cloud (IDMC) rather than on-premises infrastructure. CDGC offers similar catalog and governance capabilities to EDC but with a cloud deployment model. Organizations currently on EDC are being encouraged to migrate to CDGC as Informatica consolidates its cloud strategy. Both products are in the data catalog and governance category, distinct from the PowerCenter and IICS integration tools.
