What are the key characteristics of agentic AI?

“You can define agentic AI with one word: proactiveness. It refers to AI systems and models that can act autonomously to achieve goals without the need for constant human guidance. The agentic AI system understands what the goal or vision of the user is and the context to the problem they are trying to solve.”

— Enver Cetin, AI expert at Ciklum

That proactiveness is what separates agentic AI from every prior generation of AI tooling. The five characteristics below define what it looks like in practice.

1. Autonomy

Agents act without waiting for human approval at every step. This is what makes them valuable at scale, and what makes AI governance non-negotiable. Autonomous agents operating without access controls, audit trails, or decision boundaries create liability.

2. Tool use

Tool use is what connects agents to enterprise systems. An agent that can query a data warehouse, trigger a pipeline, update a metadata record, or route an access request is genuinely useful. But tool access requires the same access governance that applies to human users, and in most enterprises, that governance infrastructure does not yet extend to agentic AI.
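To make the governance point concrete, here is a minimal sketch of a tool registry that checks an agent's role before running a tool. The `ToolRegistry` class, the tool names, and the role names are all hypothetical and chosen for illustration; a real deployment would typically delegate this check to an existing access-control system.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Set


@dataclass
class ToolRegistry:
    """Hypothetical registry that checks an agent's role before running a tool."""
    tools: Dict[str, Callable[..., object]] = field(default_factory=dict)
    allowed_roles: Dict[str, Set[str]] = field(default_factory=dict)

    def register(self, name: str, func: Callable[..., object], roles: Set[str]) -> None:
        # Store the callable together with the roles permitted to invoke it.
        self.tools[name] = func
        self.allowed_roles[name] = set(roles)

    def call(self, agent_role: str, name: str, **kwargs: object) -> object:
        if name not in self.tools:
            raise KeyError(f"unknown tool: {name}")
        # Same rule a human user would face: no permission, no call.
        if agent_role not in self.allowed_roles[name]:
            raise PermissionError(f"role {agent_role!r} may not call {name!r}")
        return self.tools[name](**kwargs)


# Illustrative tools only; the lambdas stand in for real system calls.
registry = ToolRegistry()
registry.register("query_warehouse", lambda sql: f"rows for: {sql}", roles={"analyst_agent"})
registry.register("update_metadata", lambda asset, owner: {asset: owner}, roles={"steward_agent"})
```

In this sketch an `analyst_agent` can call `query_warehouse`, but a call to `update_metadata` raises `PermissionError`.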

3. Memory and persistence

Agents maintain short-term context within a task and long-term memory across sessions, stored in vector databases, knowledge graphs, or structured logs. This persistence is what allows agents to accumulate institutional knowledge over time rather than starting from scratch on every request.

The quality of that accumulated knowledge depends entirely on the quality of the underlying metadata.
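The split between short-term and long-term memory can be sketched as follows. This is an illustrative toy, not a production design: a bounded buffer stands in for task context, and a plain dictionary stands in for the vector database, knowledge graph, or structured log. The `AgentMemory` class and its method names are assumptions.

```python
from collections import deque
from typing import Deque, Dict, List


class AgentMemory:
    """Illustrative memory: bounded short-term buffer plus a persistent topic store."""

    def __init__(self, short_term_size: int = 3) -> None:
        # Short-term context: only the most recent observations are kept.
        self.short_term: Deque[str] = deque(maxlen=short_term_size)
        # Long-term memory: a dict standing in for a vector DB or knowledge graph.
        self.long_term: Dict[str, List[str]] = {}

    def observe(self, note: str) -> None:
        self.short_term.append(note)

    def remember(self, topic: str, fact: str) -> None:
        self.long_term.setdefault(topic, []).append(fact)

    def end_session(self) -> None:
        # Task context is discarded; long-term memory survives the session.
        self.short_term.clear()

    def recall(self, topic: str) -> List[str]:
        return list(self.long_term.get(topic, []))
```

Whatever the agent stores through `remember` is only as useful as the definitions behind it, which is why the quality of the underlying metadata matters.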

4. Proactive planning and reasoning

Agents don’t wait to be told what to do next. Using chain-of-thought reasoning, tree search, or task decomposition, they break complex goals into steps, evaluate options, and select actions proactively.

But their reasoning is only as sound as the context that’s available to them. An agent that reasons correctly over wrong definitions produces confident yet wrong answers.

5. Orchestration

Orchestration in multi-agent systems requires coordination mechanisms: which agent handles which task, how context is handed off between them, and how conflicts are resolved when agents disagree.

This coordination layer is where most enterprise multi-agent deployments currently break down — not because the orchestration frameworks are immature, but because there is no shared context layer for agents to coordinate around.
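As a rough illustration of that coordination, the sketch below routes tasks to specialist agents and hands every one of them the same shared context. The agent functions, the `ROUTES` table, and the sample definition are hypothetical; real orchestration frameworks add conflict resolution and richer hand-off semantics.

```python
from typing import Callable, Dict, List, Tuple

# Shared context every agent reads from (hypothetical definition).
SHARED_CONTEXT: Dict[str, str] = {
    "active_customer": "customer with an order in the last 90 days",
}

Specialist = Callable[[str, Dict[str, str]], str]


def sql_agent(task: str, context: Dict[str, str]) -> str:
    return f"sql_agent handled {task!r} using {len(context)} shared definition(s)"


def docs_agent(task: str, context: Dict[str, str]) -> str:
    return f"docs_agent handled {task!r} using {len(context)} shared definition(s)"


# Which agent handles which kind of task.
ROUTES: Dict[str, Specialist] = {"query": sql_agent, "document": docs_agent}


def orchestrate(tasks: List[Tuple[str, str]]) -> List[str]:
    """Route each (kind, task) pair to its specialist, passing the same context."""
    results = []
    for kind, task in tasks:
        specialist = ROUTES.get(kind)
        if specialist is None:
            # No owner for this task kind: surface it instead of guessing.
            results.append(f"unrouted: {task!r}")
            continue
        results.append(specialist(task, SHARED_CONTEXT))
    return results
```

Because both specialists receive `SHARED_CONTEXT`, they cannot disagree about what an active customer is, which is the role a shared context layer plays.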

What are the core components of agentic AI systems?

EY defines agentic AI systems with four key components:

- Planning and reasoning: Agents use frameworks like “chain of thought” or “tree of thoughts” to create plans based on context and input data, apply reasoning through reflection loops, and iterate on actions until goals are met.

- Tools: Agents interact with external systems using tools to execute planned activities, utilizing APIs or GUI-based task capabilities.

- Memory: Agents have both short-term memory for specific transactions and long-term memory for enterprise data, enabling Retrieval Augmented Generation (RAG). Long-term memory is typically stored in vector databases, knowledge graphs, or structured logs.

- Guardrails: Manage the risks that come with agent autonomy, operating as constraint layers that define permitted tools, data access boundaries, approval thresholds, and logging requirements.

Caption: Core components of agentic AI. Source: EY
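The guardrails component can be pictured as a constraint layer checked before every action. The sketch below is an assumption-laden illustration: the `Guardrails` class, its field names, and the cost-based approval rule are hypothetical, chosen only to show permitted tools, data access boundaries, approval thresholds, and logging in one place.

```python
import logging
from dataclasses import dataclass
from typing import FrozenSet

logger = logging.getLogger("agent.guardrails")


@dataclass(frozen=True)
class Guardrails:
    permitted_tools: FrozenSet[str]
    readable_domains: FrozenSet[str]
    approval_threshold: float  # actions costing more than this need a human

    def check(self, tool: str, domain: str, cost: float) -> str:
        """Return 'allow', 'needs_approval', or 'deny', and log the decision."""
        if tool not in self.permitted_tools or domain not in self.readable_domains:
            decision = "deny"
        elif cost > self.approval_threshold:
            decision = "needs_approval"
        else:
            decision = "allow"
        # Logging requirement: every decision leaves a record.
        logger.info("tool=%s domain=%s cost=%.2f decision=%s", tool, domain, cost, decision)
        return decision


# Hypothetical policy for a single agent.
rails = Guardrails(frozenset({"run_query"}), frozenset({"sales"}), approval_threshold=100.0)
```

With this policy, a cheap `run_query` on the `sales` domain is allowed, an expensive one needs approval, and anything outside the permitted tools or domains is denied.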

How does agentic AI work?

“The benefit of agentic AI systems is they can complete an entire workflow with multiple steps and execute actions.”

— Kate Kellogg, MIT Sloan professor

Agentic AI systems follow a perceive-reason-act loop that repeats until a goal is achieved or a stopping condition is met.

Step 1: Perceive

The agent begins by perceiving its environment: reading inputs from connected systems, querying available tools, retrieving relevant context from memory, and assessing the current state against its goal.

This perception stage is where context quality matters most. An agent that perceives incomplete or inconsistent information will plan and act on that flawed foundation through every subsequent step.

Step 2: Reason and plan

It then reasons over what it has perceived, breaking the goal into subtasks, evaluating options, and selecting an action. This is where reasoning methods like chain-of-thought, tree search, and task decomposition are applied. In practice, an LLM acts as the reasoning engine, often grounded by retrieval techniques like RAG.

Step 3: Act

The agent executes the action: calling a tool, querying a database, updating a record, triggering a workflow, or delegating a subtask to another agent. In multi-agent systems, an orchestrator agent assigns component tasks to specialist agents, each of which runs its own perceive-reason-act loop in parallel.

Step 4: Evaluate and adapt

The agent observes the result of its action, compares it against the goal, and determines whether to continue, adjust the plan, or escalate to a human. This feedback loop is what makes agentic systems adaptive, but it also means errors compound if the evaluation step operates on poor context.

Caption: How agentic AI works. Source: Nvidia
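The four steps can be sketched as a single loop. This is a deliberately tiny toy under stated assumptions: the "environment" is one integer, the "reasoning" is a comparison rather than an LLM call, and `run_agent` and `increment` are hypothetical names. It shows only the control flow: perceive, reason, act, evaluate, and stop on success or a step limit.

```python
from typing import Callable, Dict, List


def run_agent(goal: int, state: Dict[str, int],
              act: Callable[[Dict[str, int], int], None],
              max_steps: int = 10) -> List[str]:
    """Toy loop: move state['value'] toward goal, stopping on success or step limit."""
    trace: List[str] = []
    for step in range(max_steps):
        observed = state["value"]                  # perceive the current state
        if observed == goal:                       # evaluate against the goal
            trace.append(f"step {step}: goal reached")
            return trace
        delta = 1 if observed < goal else -1       # reason and plan the next action
        act(state, delta)                          # act on the environment
        trace.append(f"step {step}: saw {observed}, applied {delta:+d}")
    # Stopping condition: give up and hand over rather than loop forever.
    trace.append("stopped: step limit reached, escalate to a human")
    return trace


def increment(state: Dict[str, int], delta: int) -> None:
    """Stand-in for a tool call that changes the environment."""
    state["value"] += delta
```

Calling `run_agent(3, {"value": 0}, increment)` takes three actions and then reports that the goal is reached; with a `max_steps` that is too small, the loop ends with the escalation message instead.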

In enterprise deployments, four additional requirements surround this loop:

- Governed tool access: Agents only reach systems and data they are authorized to use.

- Observable execution: Human operators can audit what an agent did, in what order, and why.

- Reliable context: Agents reason from consistent, governed definitions rather than reconstructing meaning from raw data on each request.

- Escalation paths: Agents know when to act autonomously and when to defer to a human.
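Two of these requirements, observable execution and escalation paths, can be illustrated together. The sketch below assumes a hypothetical `AuditedAgent` that records what it decided, in what order, and why, and that defers to a human when its confidence falls below a floor. The confidence score and the 0.8 default are illustrative assumptions, not recommended values.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AuditedAgent:
    """Records every decision and defers to a human below a confidence floor."""
    confidence_floor: float = 0.8
    audit_log: List[dict] = field(default_factory=list)

    def decide(self, action: str, confidence: float, reason: str) -> str:
        # Escalation path: act autonomously only when confident enough.
        outcome = "executed" if confidence >= self.confidence_floor else "escalated"
        # Observable execution: what was done, in what order, and why.
        self.audit_log.append({
            "order": len(self.audit_log) + 1,
            "action": action,
            "reason": reason,
            "confidence": confidence,
            "outcome": outcome,
        })
        return outcome
```

An operator reviewing `audit_log` can replay the sequence of decisions, including the ones the agent chose not to make on its own.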

What role does MCP play in agentic AI?

The Model Context Protocol (MCP) is an open standard that defines how AI agents discover and consume context from external systems at inference time. Before MCP, connecting an AI agent to an enterprise system required custom integration work for each combination of agent framework and data source. MCP standardizes this interface so that any MCP-compatible agent can query any MCP-compatible server using the same protocol.

For enterprise agentic AI, MCP matters because it decouples the agent from the data source, makes context portable, and enables governance at the context layer.
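To show what "the same protocol" means on the wire, here is a small sketch of the messages involved. MCP exchanges JSON-RPC 2.0 messages, and `tools/list` and `tools/call` are methods defined by the specification. The helper `mcp_request`, the tool name `search_assets`, and its arguments are hypothetical, and the transport (stdio or HTTP) plus the initialization handshake are omitted.

```python
import json
from typing import Any, Dict


def mcp_request(request_id: int, method: str, params: Dict[str, Any]) -> str:
    """Serialize a JSON-RPC 2.0 request, the message format MCP is built on."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": method,
        "params": params,
    })


# Discover which tools the server offers.
list_tools = mcp_request(1, "tools/list", {})

# Invoke one tool by name with structured arguments.
call_tool = mcp_request(2, "tools/call", {
    "name": "search_assets",            # hypothetical tool name
    "arguments": {"query": "revenue"},  # hypothetical arguments
})
```

Because every server answers the same two methods, the agent code that builds these requests does not change when the data source behind the server does.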

Agentic AI vs. Generative AI: What’s the difference?

Generative AI and agentic AI are related but distinct. Generative AI generates content based on patterns in training data. Agentic AI is generative AI with goals, tools, memory, and orchestration added on top.

“Rather than simply answering a question, models are trained to take some kind of action.”

— Dave Kerr, McKinsey partner

Practical implication for enterprise leaders: Generative AI is a capability. Agentic AI is a system. Deploying agentic AI requires a governed context layer that gives agents a shared understanding of the enterprise.

| Aspect | Generative AI | Agentic AI |
|---|---|---|
| Primary function | Generates content from prompts | Pursues goals through multi-step action |
| State | Typically stateless | Maintains short- and long-term memory |
| Tool use | Requires external scaffolding | Native tool calling and API interaction |
| Supervision | Human-in-the-loop for each output | Autonomous execution with defined guardrails |
| Enterprise requirement | Good prompts, reliable model | Governed context, observable execution, metadata infrastructure |

What are the top use cases of agentic AI?

Agentic AI is being deployed across industries and functions. The use cases where it consistently delivers value involve multi-step workflows that draw information from multiple systems, apply organizational context, and take action based on the result.

Gartner predicts that 40% of enterprise applications will be integrated with task-specific AI agents by the end of 2026, up from less than 5% in mid-2025.

Caption: Examples of agentic AI embedded within enterprise applications. Source: Gartner

Data management and governance

Agents monitor data pipelines for failures, enrich metadata automatically, classify sensitive data, and enforce governance policies at runtime. What previously required teams of data stewards working manually can be partially automated through agents that operate continuously at machine speed.

Data engineering

Agents can handle the operational complexity of modern data pipelines end-to-end: monitoring for schema changes, diagnosing root causes, tracing issues back through lineage to their origin, writing and testing transformation code, and updating documentation when assets change.
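Tracing an issue back through lineage is, at its core, a graph walk. The sketch below assumes lineage is available as a simple mapping from each asset to its direct upstream assets; the asset names and the `trace_to_sources` function are hypothetical, and a real agent would read lineage from a metadata system rather than a hard-coded dictionary.

```python
from collections import deque
from typing import Dict, List

# Hypothetical lineage: each asset maps to the assets it is built from.
UPSTREAM: Dict[str, List[str]] = {
    "revenue_dashboard": ["revenue_mart"],
    "revenue_mart": ["orders_clean", "fx_rates"],
    "orders_clean": ["orders_raw"],
}


def trace_to_sources(asset: str, upstream: Dict[str, List[str]]) -> List[str]:
    """Breadth-first walk upstream; return assets with no parents (the origins)."""
    seen = {asset}
    queue = deque([asset])
    sources: List[str] = []
    while queue:
        current = queue.popleft()
        parents = upstream.get(current, [])
        if not parents:
            # Nothing feeds this asset, so it is a candidate origin of the issue.
            sources.append(current)
        for parent in parents:
            if parent not in seen:
                seen.add(parent)
                queue.append(parent)
    return sources
```

Starting from `revenue_dashboard`, the walk ends at `fx_rates` and `orders_raw`, the two places a diagnosing agent would inspect first.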

AI and ML operations

Agents monitor model performance, detect data drift, trace features back through lineage to source systems, and flag compliance issues before they reach production. As AI deployments scale, the operational complexity of managing them exceeds what human teams can handle manually.

Business operations

Agents can automate the coordination work that sits between enterprise systems: routing approvals, reconciling data across platforms, generating reports, monitoring KPIs against targets, and escalating exceptions to the right human at the right time.

Software development

Agents can write code, review pull requests, run tests, diagnose failures, and update documentation. Engineering organizations using agentic coding assistants report meaningful reductions in the time from requirement to deployed feature.

Financial analysis and reporting

Agents pull data from multiple systems, apply defined calculation logic, reconcile discrepancies, and generate reports on schedule. A BCG report found that using agentic AI in financial analysis has reduced risk events by 60% in pilot environments.

Compliance and risk management

Regulation-specific agents monitor policy adherence, flag violations, and produce audit documentation continuously rather than at point-in-time assessment cycles. For regulated industries, this is the use case with the most immediate ROI and the highest governance stakes simultaneously.

Cybersecurity

Agents can monitor enterprise environments continuously for anomalous behavior, correlate signals across systems, and respond to threats faster than human security teams. They can classify sensitive data as it is created or ingested, enforce access policies at runtime, and flag policy violations before they become incidents.

What are the biggest challenges in embracing and scaling agentic AI?

According to Gartner, 63% of organizations either do not have or are unsure if they have the right data management practices for AI.

The barriers to enterprise agentic AI are consistent across organizations and industries. Rather than being model problems, these challenges mostly relate to data management — the underlying infrastructure, governance, and context.

The pilot-to-production gap

Bain’s latest AI readiness survey finds that 80% of generative AI use cases met or exceeded expectations, yet only 23% of companies can tie initiatives to measurable revenue gains or cost reductions.

The gap lies in the architecture. While most companies have launched pilots, very few have scaled them into safe, reliable operations. Agents depend on high-quality data delivered in real time, with clear lineage, standardized semantic models, and fine-grained access controls.

Fragmented context and metadata silos

The typical enterprise stores context across multiple disconnected systems: data catalogs, wikis, BI semantic layers, lineage tools, data quality platforms, GRC systems, and tribal knowledge held by individuals. Agents operating across this landscape cannot reconcile basic concepts when each system defines them differently.
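A small sketch makes the reconciliation problem visible. It assumes, purely for illustration, that each system's glossary can be exported as a mapping from term to definition; the system names, terms, and definitions below are invented, and `conflicting_terms` is a hypothetical helper.

```python
from typing import Dict, Set

# Hypothetical definitions of the same terms, as held by different systems.
SOURCES: Dict[str, Dict[str, str]] = {
    "data_catalog": {
        "active_customer": "ordered in last 90 days",
        "churn": "no order in 180 days",
    },
    "bi_semantic_layer": {
        "active_customer": "logged in within 30 days",
        "churn": "no order in 180 days",
    },
    "wiki": {"active_customer": "ordered in last 90 days"},
}


def conflicting_terms(sources: Dict[str, Dict[str, str]]) -> Dict[str, Set[str]]:
    """Return each term that has more than one distinct definition across systems."""
    definitions: Dict[str, Set[str]] = {}
    for terms in sources.values():
        for term, text in terms.items():
            definitions.setdefault(term, set()).add(text)
    return {term: texts for term, texts in definitions.items() if len(texts) > 1}
```

Here `active_customer` is flagged because two systems disagree, while `churn` is not. An agent with no such check would simply pick whichever definition it happened to retrieve.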

The cold start, testing hell, and scaling wall pattern

For each new AI initiative, teams spend two to three weeks manually documenting field definitions, business rules, and context for prompts and test cases. This work is often stale before the pilot reaches production. When the pilot works, reproducing it for a second use case requires the same manual effort from scratch.

Governance that has not kept pace with autonomy

“As you move agency from humans to machines, there’s a real increase in the importance of governance and infrastructure to control and support agentic systems.”

— Kate Kellogg, MIT Sloan professor

Traditional data governance was designed for human access patterns. When agents begin executing workflows autonomously, existing governance frameworks have no mechanism to track, audit, or constrain what those agents are doing.

Keeping context current at agent speed

Agents operate continuously. Data estates change continuously. A context layer updated manually or on slow schedules is always stale by the time an agent queries it. Agents acting on outdated definitions, ownership records, or quality signals compound errors downstream before any human notices.
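One defensive pattern is for the agent to check the age of a context record before relying on it. The sketch below is a minimal illustration under assumptions: records are plain dictionaries with hypothetical `term`, `definition`, and `updated_at` fields, and the acceptable age is a policy choice that is not prescribed here.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def is_stale(updated_at: datetime, max_age: timedelta,
             now: Optional[datetime] = None) -> bool:
    """True when a context record is older than the allowed age."""
    now = now or datetime.now(timezone.utc)
    return now - updated_at > max_age


def definition_for_agent(record: dict, max_age: timedelta,
                         now: Optional[datetime] = None) -> str:
    """Return the definition, or raise so the agent refreshes or escalates."""
    if is_stale(record["updated_at"], max_age, now):
        # Refusing is safer than reasoning over an outdated definition.
        raise LookupError(f"context for {record['term']!r} is stale")
    return record["definition"]
```

A check like this does not keep context current; it only stops the agent from acting on context that is known to be old, which limits how far an error can travel downstream.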

The economics of DIY context infrastructure

Organizations building context infrastructure without a platform spend approximately three times more than those using dedicated platforms, according to Gartner projections. AI data readiness spend is expected to grow seven times between 2025 and 2029.

Caption: Worldwide AI Spending by Market, 2025-2027. Source: Gartner

The organizations that get this right early will have a compounding advantage. Those that rebuild context from scratch for each new use case will face an accelerating cost disadvantage.

How does Atlan help enterprises with agentic AI?

Atlan provides the metadata and governance infrastructure that gives agents the shared, governed understanding of the enterprise they need to operate reliably. Atlan’s platform is built around four interconnected engines that together constitute the context layer agentic AI requires.

Metadata lakehouse as the open context store

An Apache Iceberg-based metadata lakehouse stores all technical, business, and operational metadata as queryable tables. Schemas, lineage, data quality signals, usage logs, glossary terms, policies, and event logs are stored as first-class data: open, queryable by any Iceberg-compatible engine, and analytics-ready.

This addresses the fragmentation problem at its root: agents query one authoritative source rather than assembling context from disconnected systems.

Enterprise data graph and knowledge graph

A context graph connects assets, business concepts, people, policies, and events into a unified representation of the enterprise. It captures semantic relationships, operational lineage, governance applicability, decision traces, and temporal validity. This graph serves as the runtime context for AI agents, enforcing access control and surfacing constraints during reasoning rather than after the fact.

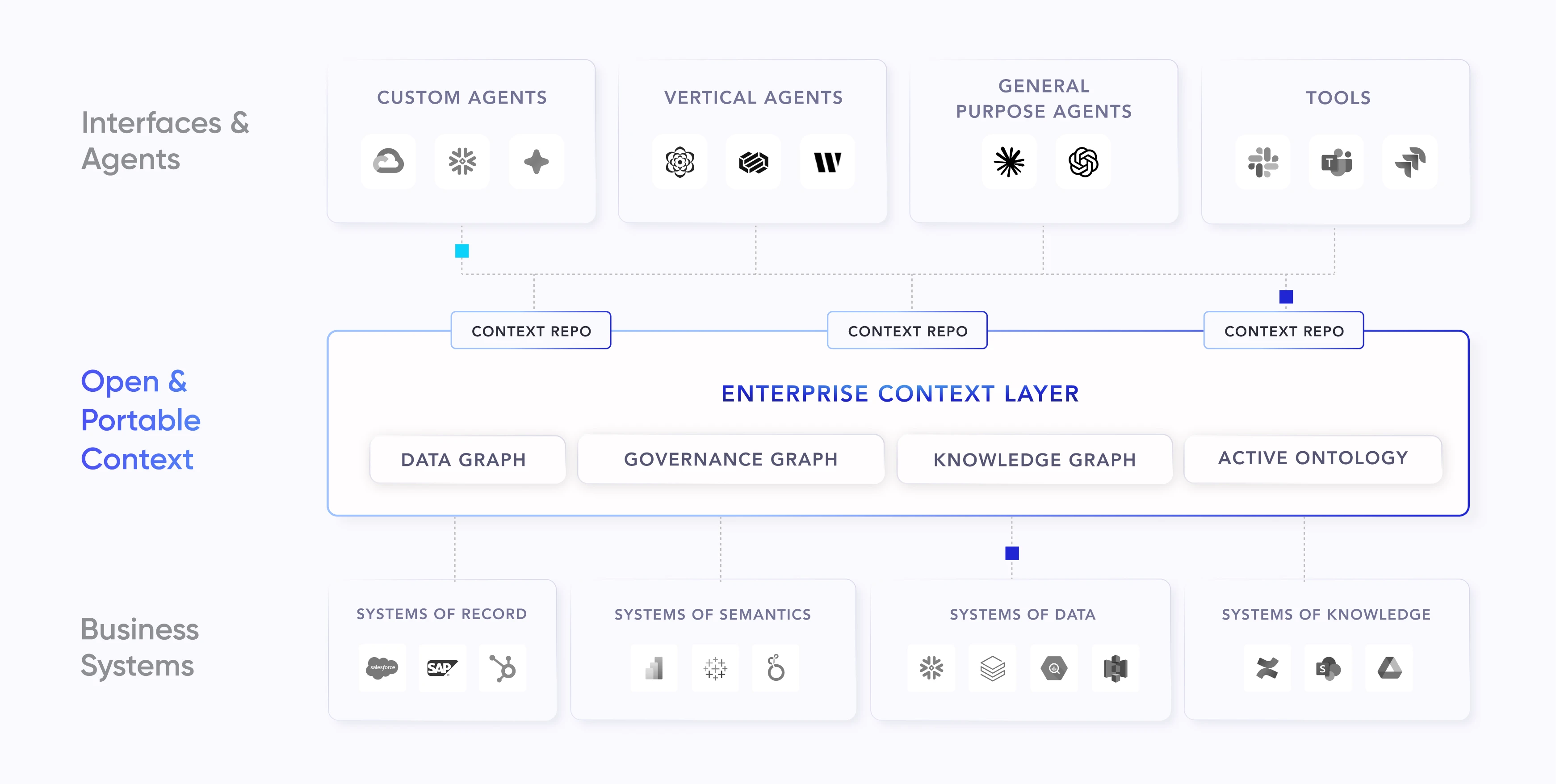

Enterprise context layer for agentic AI

Atlan’s open and portable enterprise context layer weaves the data and knowledge graphs together with a governance graph and active ontology. It’s equipped with a Context Studio to build, test, and deploy context-aware agents. Its model-agnostic Context Repos package pre-assembled governed context for immediate agent consumption.

MCP server and App Framework

Atlan’s MCP server exposes the context layer to any MCP-compatible agent. Agents can search and discover assets, traverse lineage, access glossary definitions, read governance policies, and, where permitted, update metadata. This interface works with general-purpose agents like Claude and ChatGPT, platform agents like Snowflake Cortex and Databricks Genie, and custom agents built on internal frameworks.

Atlan’s App Framework lets organizations build context-aware applications and agent workflows on top of the metadata lakehouse, reusing Atlan’s security and lineage infrastructure.

Automation engine and AI governance

Atlan’s automation engine orchestrates metadata ingestion, classification, quality checks, policy enforcement, and approval workflows continuously. Atlan’s AI Governance auto-discovers AI models and agents deployed across the enterprise, classifies them against governance frameworks, and maintains auditable records of every agent action: what it queried, what it changed, and under what policy it acted.

Real stories from real customers building an enterprise context layer for agentic AI

"AI initiatives require more context than ever. Atlan's metadata lakehouse is configurable, intuitive, and able to scale to hundreds of millions of assets. As we're doing this, we're making life easier for data scientists and speeding up innovation."

— Andrew Reiskind, Chief Data Officer, Mastercard

"With Atlan, we cataloged over 18 million data assets and 1,300+ glossary terms in our first year, so teams can trust and reuse context across the exchange."

— Kiran Panja, Managing Director, CME Group

Moving forward with scaling agentic AI in your enterprise

The bottleneck in enterprise agentic AI is context, semantics, and governance. Organizations that recognize this early and invest in the metadata infrastructure that gives agents a shared, governed understanding of the business will scale agentic AI successfully.

Those that treat context as a secondary concern and focus primarily on model selection and agent frameworks will encounter the cold start, testing hell, and scaling wall pattern on every new initiative.

The path forward is consistent: unify metadata first, build the context layer second, then expose that context to every agent through open standards like MCP. This architecture determines whether agentic AI delivers production value or remains confined to controlled demonstrations.

FAQs about agentic AI

1. What is the concept of agentic AI?

Agentic AI refers to AI systems built from one or more autonomous or semi-autonomous agents that can perceive their environment, reason over goals, and take multi-step actions using tools, memory, and orchestration.

The key distinction from generative AI is autonomy: agentic systems pursue objectives across multiple steps without requiring human instruction at each one. For enterprises, the additional critical requirement is grounding — agents must operate on governed organizational context, not just raw data, to produce outputs that are reliable and explainable.

2. What is the difference between agentic AI and generative AI?

Generative AI generates content in response to prompts. It is typically stateless, does not call tools natively, and does not manage multi-step workflows without external scaffolding. Agentic AI is generative AI equipped with goals, tools, memory, and orchestration. It doesn’t merely respond to prompts; it pursues objectives. Deploying generative AI requires good prompts and a reliable model. Deploying agentic AI requires governed tool access, reliable context infrastructure, and observable execution.

3. What is agentic AI vs LLM?

A large language model (LLM) is the reasoning engine inside an agentic system. It handles language understanding, generation, and chain-of-thought reasoning. Agentic AI is the broader system built around an LLM: the goal definition, tool access, memory management, orchestration, and governance layer that allows the LLM to pursue objectives rather than just respond to prompts.

4. Is ChatGPT agentic AI?

ChatGPT in its standard form is generative AI: it responds to prompts and is stateless beyond the current conversation. ChatGPT with tools enabled (web search, code execution, file access) begins to exhibit agentic characteristics. OpenAI’s dedicated agent products are explicitly agentic: they pursue goals, call tools, and manage multi-step workflows. The distinction is the architecture surrounding the model, not the model itself.

5. What is an example of an agentic AI?

A data governance agent that continuously monitors a data estate for policy violations, classifies newly discovered sensitive data, routes remediation tasks to the appropriate data owners, and produces a compliance report, all without human instruction for each step, is agentic AI. Other examples include coding agents that diagnose and fix pipeline failures, financial agents that reconcile reporting discrepancies, and customer service agents that resolve issues end-to-end.

6. Does agentic AI deliver tangible business benefits?

Yes, with an important condition: the context layer underneath the agents must be governed and reliable. The gap between proof of concept and production at scale consistently traces to context and governance readiness, not model capability. Organizations that have invested in unified metadata infrastructure before scaling agentic AI report meaningful reductions in manual data management effort, faster time to insight, and governance coverage that scales with AI deployment rather than lagging behind it.

This guide is part of the Enterprise Context Layer Hub — 44+ resources on building, governing, and scaling context infrastructure for AI.
