Context management software spans four distinct categories of tools that deliver governed context to AI agents and LLMs at inference time: context engineering frameworks, RAG and retrieval infrastructure, AI agent platforms, and enterprise context platforms. When enterprise AI pilots stall, the cause is rarely the model. More often it is buying the wrong category first.

This software category gives AI agents the right context at the right moment. The category is not one product type. It is four, each operating at a different layer of the AI stack. Choosing the right one depends on whether you are building a single agent in production or governing context across an organization.

- Context engineering frameworks for application-layer memory and context-window curation

- RAG and retrieval infrastructure for indexing enterprise documents and serving relevant chunks

- AI agent platforms for orchestrating multi-step agent workflows in production

- Enterprise context platforms for governing what context exists across the organization

Context engineering has emerged as a distinct discipline in the past year, with engineering teams at JetBrains publishing dedicated research on efficient context management for LLM agents and Anthropic codifying it as a separate practice from prompt engineering. Below is the quick-reference table.

| Category | What it does | Example software | Who buys it |
|---|---|---|---|
| Context engineering frameworks | Manages what enters an LLM’s context window at inference time | Zep, LangChain, LlamaIndex, Mem0, CrewAI memory | Application developers building a single agent |
| RAG and retrieval infrastructure | Indexes and serves enterprise documents to AI | Pinecone, Weaviate, Chroma | ML and AI engineering teams |
| AI agent platforms | Orchestrates multi-step agent workflows in production | AWS Bedrock Agents, Relevance AI, Langflow | Application teams deploying agents |
| Enterprise context platforms | Governs what context exists across the organization | Atlan, DataHub | Data platform and AI platform leaders |

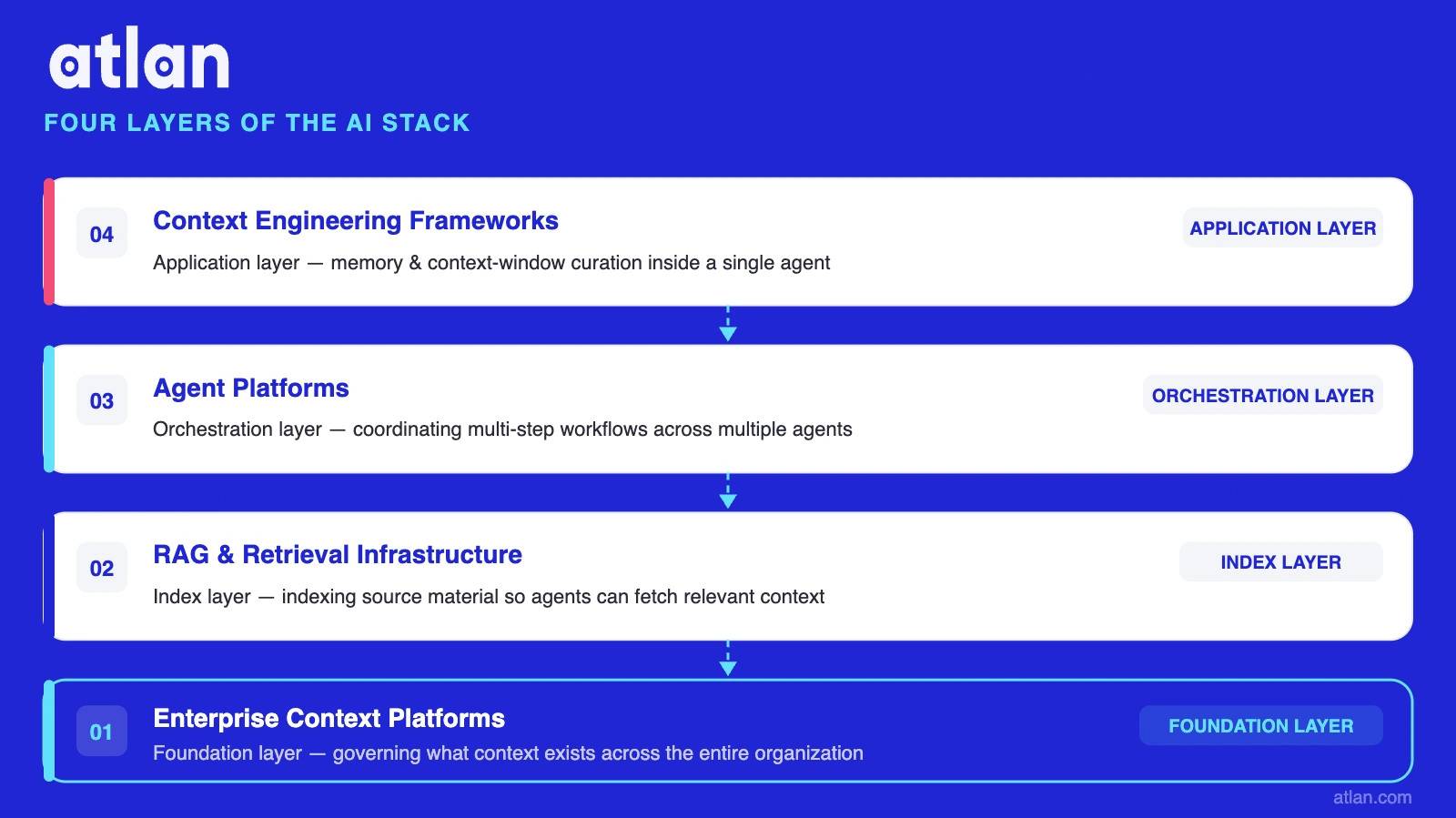

Where each context management category sits in the AI stack. Image by Atlan.

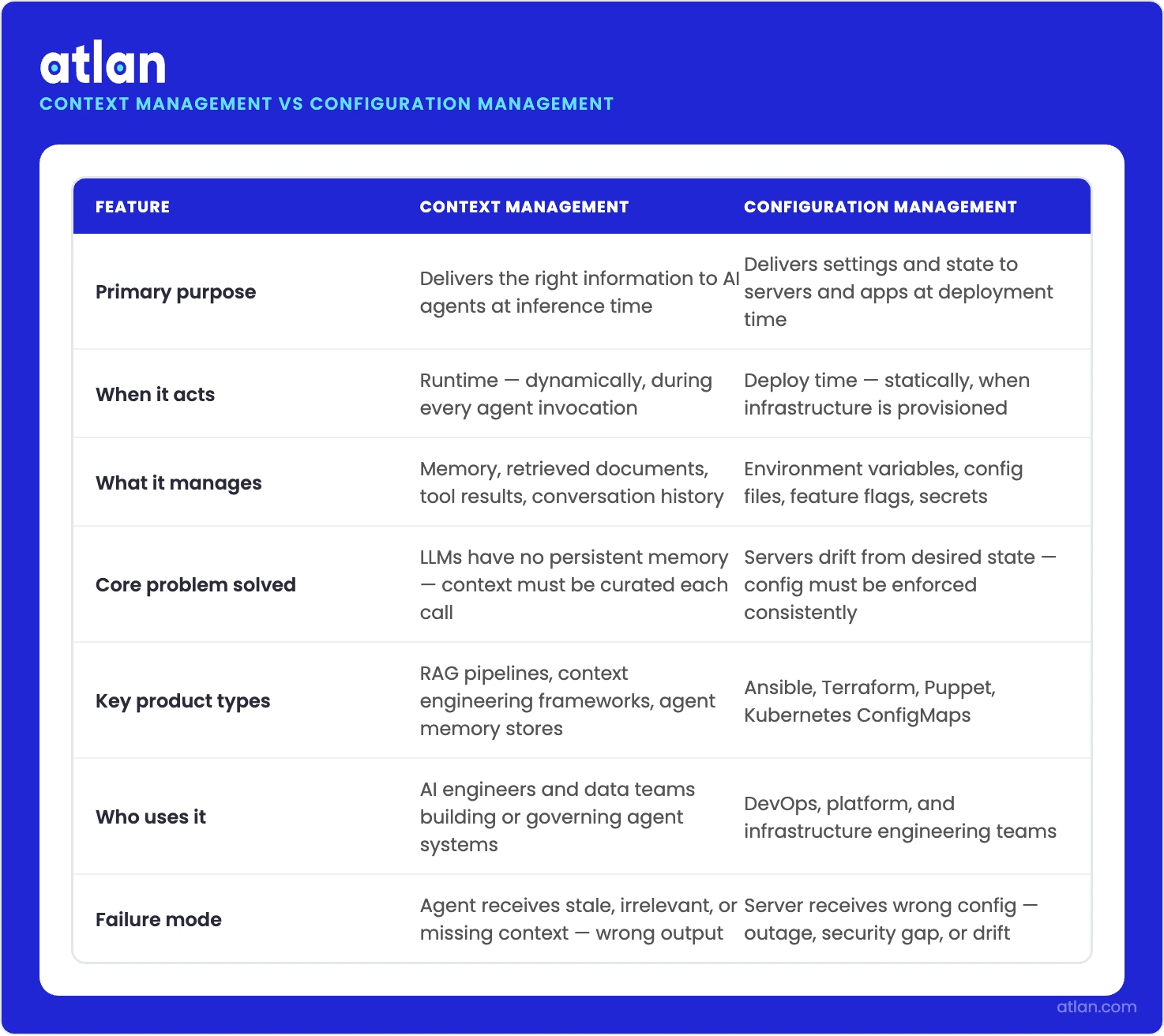

How is context management software different from configuration management software?

Context management software delivers information to AI agents at inference time. Configuration management software delivers settings to servers and applications at deployment time. The two share three syllables and nothing else. Context management is an AI-stack discipline rooted in how LLMs treat context as a finite resource. Configuration management is a DevOps discipline rooted in infrastructure state. Buying the second when you need the first is a common, expensive mistake.

| Dimension | Context management software | Configuration management software |
|---|---|---|
| Audience | AI agents and LLMs | Servers, applications, infrastructure |
| Trigger | Inference time | Deployment time |
| Examples | Zep, Pinecone, Atlan, DataHub | Ansible, Puppet, Chef, ServiceNow CMDB |
| What changes | The information delivered to a model | The state of a system |

Two software categories that share three syllables and nothing else. Image by Atlan.

The four categories of context management software

The four categories differ by where they sit in the AI stack. Frameworks sit at the application layer, inside one agent. Retrieval infrastructure sits below, indexing source material. Agent platforms sit above, coordinating multi-step workflows. Enterprise context platforms sit underneath all three, governing what context exists in the first place.

Context engineering frameworks

Context engineering frameworks manage what goes into an LLM’s context window at inference time. They handle conversation history, memory, retrieval calls, and tool outputs. They live inside a single application and serve a single agent’s needs. Thoughtworks’ Birgitta Böckeler has written about this layer in the specific case of context engineering for coding agents, and LangChain’s documentation on context engineering is a useful starting point for framework-tier work.

- Examples: Zep, LangChain, LlamaIndex, Mem0, CrewAI memory

- Solves: context window curation, conversation state, token economics

- Does not solve: cross-application governance, organization-wide policy, audit trail

- Buy if: you are building one agent and need it to behave consistently across long sessions

For analyst-facing teams using these frameworks inside Atlan-governed pipelines, see how the layers connect in context engineering for AI analysts.
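To make context-window curation concrete, here is a minimal sketch of the kind of trimming a framework in this tier performs. It is not taken from any of the products named above. The `Message` type, the `curate_window` function, and the one-token-per-word estimate are all assumptions made for illustration; a real framework would use the model's tokenizer and usually summarizes older turns rather than dropping them.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str  # "system", "user", or "assistant"
    text: str


def estimate_tokens(text: str) -> int:
    # Assumption: roughly one token per word. Real tokenizers differ.
    return len(text.split())


def curate_window(messages: list[Message], budget: int) -> list[Message]:
    """Keep every system message, then the newest turns that fit the budget."""
    system = [m for m in messages if m.role == "system"]
    remaining = budget - sum(estimate_tokens(m.text) for m in system)

    kept: list[Message] = []
    # Walk backwards so the most recent turns are preferred.
    for m in reversed([m for m in messages if m.role != "system"]):
        cost = estimate_tokens(m.text)
        if cost > remaining:
            break
        kept.append(m)
        remaining -= cost

    # Restore chronological order before handing the window to the model.
    return system + list(reversed(kept))


history = [
    Message("system", "You are a support agent."),
    Message("user", "My invoice from March is wrong."),
    Message("assistant", "Which line item looks wrong?"),
    Message("user", "The storage charge."),
]
window = curate_window(history, budget=13)
for m in window:
    print(f"{m.role}: {m.text}")
```

With a budget of 13 estimated tokens, the oldest user turn is dropped while the system message and the two newest turns survive. That trade-off between recency and budget is the "token economics" this tier exists to manage.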

RAG and retrieval infrastructure

RAG and retrieval infrastructure ingests enterprise documents, indexes them in a vector store, and serves the most relevant chunks back to AI agents at query time. It is the storage and retrieval substrate that feeds an agent’s context window.

- Examples: Pinecone, Weaviate, Chroma

- Solves: turning unstructured documents into machine-retrievable knowledge

- Does not solve: governance of what should be indexed, policy on who can retrieve what

- Buy if: you have a defined corpus and need agents to retrieve from it

Retrieval works best when it draws on a governed semantic layer rather than free-form embedding indexes alone. For a vendor-neutral primer on this tier, Pinecone’s RAG learning center covers the retrieval mechanics in depth.
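The ingest, index, and serve loop described in this section can be sketched in a few lines. This is a toy under stated assumptions, not how Pinecone, Weaviate, or Chroma work internally: `ToyIndex` is a hypothetical class, and the "embedding" is a plain word-count vector standing in for a real embedding model.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    # Assumption: lowercase word counts stand in for a learned embedding.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class ToyIndex:
    """Hypothetical in-memory index: stores chunks and ranks them by cosine similarity."""

    def __init__(self) -> None:
        self.chunks: list[tuple[str, Counter]] = []

    def add(self, chunk: str) -> None:
        self.chunks.append((chunk, embed(chunk)))

    def search(self, query: str, k: int = 2) -> list[str]:
        q = embed(query)
        ranked = sorted(self.chunks, key=lambda c: cosine(q, c[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


index = ToyIndex()
index.add("refund policy: refunds are issued within 14 days")
index.add("shipping policy: orders ship in 2 business days")
index.add("security policy: rotate api keys every 90 days")

top = index.search("how long do refunds take", k=1)
print(top)
```

Note what the sketch does not do: nothing decides whether a chunk should have been indexed, or whether the caller is allowed to read it. That gap is the "does not solve" line in the list above.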

AI agent platforms

AI agent platforms orchestrate multi-step agent workflows in production. They handle tool calling, retries, state, evaluation, and deployment. They consume context. Some agent platforms now ship eval and guardrail features, but governance there is per-deployment, not cross-organization, and the semantic and lineage layer those features draw on still has to come from somewhere else.

- Examples: AWS Bedrock Agents, Relevance AI, Langflow

- Solves: production deployment, orchestration, evaluation loops

- Does not solve: where the context comes from, whether it is correct, who governs it

- Buy if: you have an agent that works on a laptop and needs to run in production

Many teams hit a ceiling when the same context gets re-derived inconsistently across agents. The pattern is documented in context management across multi-agent systems. For the agent-runtime specifics, AWS Bedrock Agents documentation is a representative reference for this tier.
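As a rough illustration of the tool calling, retries, and state this tier handles, the sketch below wraps a flaky tool in a retry loop and records each workflow step. All names (`call_with_retries`, `make_flaky_lookup`, `run_workflow`) are hypothetical and do not correspond to any platform's API. Production platforms add much more, such as timeouts, tracing, and evaluation hooks.

```python
import time
from typing import Callable, Optional


def call_with_retries(tool: Callable[[str], str], arg: str,
                      attempts: int = 3, backoff_s: float = 0.0) -> str:
    """Call a tool, retrying on failure with linear backoff."""
    last_error: Optional[Exception] = None
    for attempt in range(1, attempts + 1):
        try:
            return tool(arg)
        except Exception as exc:  # a real platform would catch narrower errors
            last_error = exc
            time.sleep(backoff_s * attempt)
    raise RuntimeError(f"tool failed after {attempts} attempts") from last_error


def make_flaky_lookup(fail_times: int) -> Callable[[str], str]:
    """Build a fake order-lookup tool that fails the first `fail_times` calls."""
    calls = {"n": 0}

    def lookup(order_id: str) -> str:
        calls["n"] += 1
        if calls["n"] <= fail_times:
            raise TimeoutError("upstream timed out")
        return f"order {order_id}: shipped"

    return lookup


def run_workflow(order_id: str) -> dict:
    # Workflow state: inputs plus an ordered record of each completed step.
    state = {"order_id": order_id, "steps": []}
    status = call_with_retries(make_flaky_lookup(fail_times=2), order_id)
    state["steps"].append(("lookup_order", status))
    state["answer"] = f"Status for {order_id}: {status.split(': ')[1]}"
    state["steps"].append(("draft_answer", state["answer"]))
    return state


result = run_workflow("A-1001")
print(result["answer"])
```

The orchestration is reliable, but the workflow still trusts whatever the lookup tool returns. Whether that context is correct or governed is outside this tier's job.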

Enterprise context platforms

Enterprise context platforms govern what context exists across the organization in the first place: definitions, lineage, policies, semantic meaning. They are infrastructure under the other three categories, not a substitute for them. This is the category Atlan and DataHub compete in. Everything else in this article is software your team will run alongside it, not in place of it.

- Examples: Atlan, DataHub

- Solves: cross-system governance, semantic layer, organization-wide audit trail, MCP-based activation

- Does not solve: per-agent memory management, vector indexing, agent orchestration

- Buy if: you have multiple agents in flight and the same business term gets re-derived (and re-broken) in each one

For the deeper architecture, see the agent context layer architecture and the core components of a context layer.
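The core idea of this tier, one governed definition per business term plus policy and an audit trail, can be sketched as a small registry. This is an illustrative model only and does not reflect how Atlan or DataHub are implemented. `TermDefinition`, `ContextRegistry`, the roles, and the sample definition of "customer revenue" are all assumptions.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class TermDefinition:
    name: str
    definition: str
    owner: str
    allowed_roles: frozenset[str]


@dataclass
class ContextRegistry:
    """Hypothetical registry: one definition per term, role checks, audit log."""
    _terms: dict[str, TermDefinition] = field(default_factory=dict)
    audit_log: list[tuple[str, str, bool]] = field(default_factory=list)

    def register(self, term: TermDefinition) -> None:
        key = term.name.lower()
        if key in self._terms:
            # Refuse a second, competing definition of the same term.
            raise ValueError(f"'{term.name}' already has a governed definition")
        self._terms[key] = term

    def resolve(self, name: str, agent: str, role: str) -> str:
        term = self._terms[name.lower()]
        allowed = role in term.allowed_roles
        # Record every access attempt, including denied ones.
        self.audit_log.append((agent, term.name, allowed))
        if not allowed:
            raise PermissionError(f"role '{role}' may not read '{term.name}'")
        return term.definition


registry = ContextRegistry()
registry.register(TermDefinition(
    name="customer revenue",
    definition="Recognized revenue net of refunds, per customer account",  # made-up example
    owner="finance",
    allowed_roles=frozenset({"analyst", "finance"}),
))

sales_view = registry.resolve("Customer Revenue", agent="sales-agent", role="analyst")
support_view = registry.resolve("customer revenue", agent="support-agent", role="analyst")
print(sales_view == support_view)
```

Both agents receive the same definition because neither one derives it locally. That is the property the other three tiers cannot provide on their own.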

Why most enterprises buy the wrong category first

A common pattern in pilots that stall: an enterprise buys a context engineering framework first because the first agent is a small-team prototype. The framework works. Then the team tries to scale to ten agents and discovers the context is different in every one. The same business term has three definitions. The same source table has two lineage trees. The category the platform team actually needed was an enterprise context platform: the layer underneath the framework, not a competitor to it.

This pattern matches the structural picture Anthropic published about context as a finite resource with diminishing marginal returns. Frameworks optimize the context window for one agent. They cannot, by design, govern what context exists for the next agent. Retrieval also degrades as token counts grow, a behavior the same Anthropic Engineering post names as “context rot,” where recall accuracy falls as the window fills. The fix is not a bigger context window. The fix is governing what enters it.

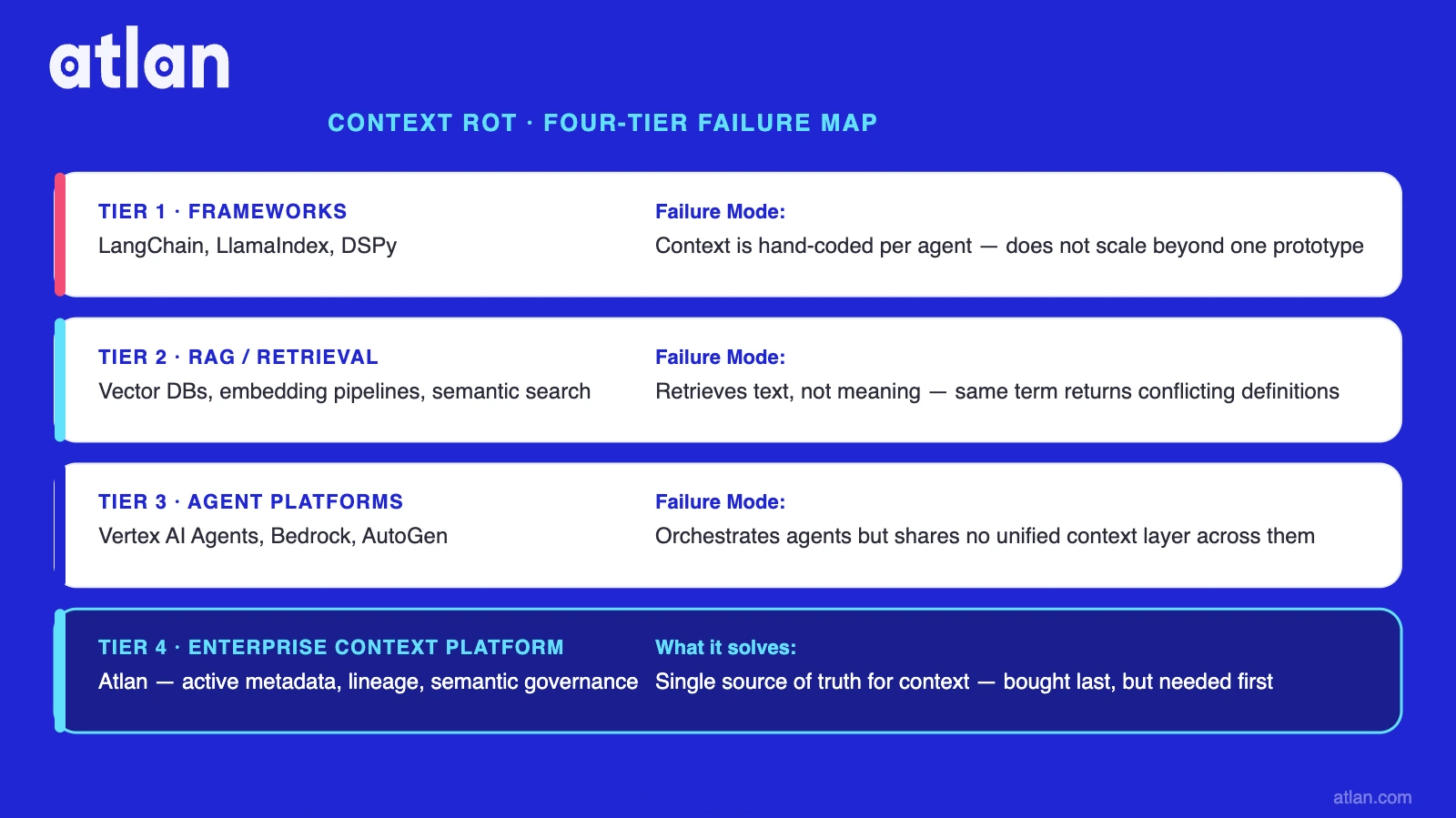

Context rot looks different at each tier of the stack, which is part of why one piece of software cannot solve all four:

- Tier 1 (frameworks): the conversation window forgets earlier turns even though they technically still fit

- Tier 2 (RAG and retrieval): the same query returns different chunks across two consecutive runs, and the relevance ranking drifts as the index grows

- Tier 3 (agent platforms): two agents in the same workflow give inconsistent answers because each one assembled its own context from scratch

- Tier 4 (enterprise context platforms): “customer revenue” has three definitions across departments, and every tier above propagates a different version of it
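The tier 2 symptom above, where the same query returns different chunks on consecutive runs, is straightforward to measure. The sketch below compares two result sets with Jaccard overlap. The chunk IDs and the 0.5 threshold are invented for illustration, and a suitable threshold would depend on the corpus and the retrieval settings.

```python
def jaccard(a: list[str], b: list[str]) -> float:
    """Share of chunk IDs the two result sets have in common (1.0 = identical sets)."""
    sa, sb = set(a), set(b)
    if not sa and not sb:
        return 1.0
    return len(sa & sb) / len(sa | sb)


def is_drifting(run_a: list[str], run_b: list[str], threshold: float = 0.5) -> bool:
    # Assumption: overlap below the threshold counts as drift worth investigating.
    return jaccard(run_a, run_b) < threshold


# Hypothetical chunk IDs returned for the same query on two runs.
monday = ["chunk-12", "chunk-40", "chunk-7"]
tuesday = ["chunk-12", "chunk-88", "chunk-91"]

overlap = jaccard(monday, tuesday)
print(f"overlap={overlap:.2f} drifting={is_drifting(monday, tuesday)}")
```

Here only one of five distinct chunks appears in both runs, so the overlap is 0.2 and the check flags drift.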

DataHub frames this picture as four layers and argues that most organizations have the top layers covered and the bottom layer missing. The four-layer diagnosis is a useful map. The conclusion that one platform is the only answer for the bottom layer is the kind of vendor-narrowing this guide refuses. A small piece of evidence that the category boundaries are real: LlamaIndex sells both a memory module in tier one and a retrieval product in tier two, under two product names, for two different buyers. The remaining question is whether enterprises need a context layer between data and AI at all, and what to do once they conclude they do.

Each tier has a distinct failure mode no single tool can solve. Image by Atlan.

How do you tell which category you actually need?

The right category depends on the failure mode you are trying to fix. If one agent forgets its own conversation, you need a framework. If retrieval is returning irrelevant chunks, you need better retrieval infrastructure. If agents will not deploy, you need an agent platform. If different agents use different definitions of the same business term, you need an enterprise context platform.

| Your problem | Category you need | Atlan competes here? | Where to go next |
|---|---|---|---|
| One agent forgets its own conversation history | Context engineering frameworks | No | External category |
| Retrieval returns irrelevant or inconsistent chunks | RAG and retrieval infrastructure | No | External category |
| Agents work in dev but fail in production | AI agent platforms | No | External category |
| Different agents use different definitions of the same term | Enterprise context platforms | Yes | See “Where Atlan fits” below |
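The diagnosis table can also be expressed as a lookup, which is convenient if a platform team wants to triage incident reports by failure mode. This is only a sketch of the mapping in the table. The failure-mode keys and the `recommend` function are hypothetical names.

```python
# Mapping taken from the table above; the short keys are invented for illustration.
FAILURE_MODE_TO_CATEGORY = {
    "agent_forgets_conversation": "Context engineering frameworks",
    "retrieval_irrelevant_or_inconsistent": "RAG and retrieval infrastructure",
    "works_in_dev_fails_in_production": "AI agent platforms",
    "inconsistent_term_definitions": "Enterprise context platforms",
}


def recommend(failure_modes: list[str]) -> list[str]:
    """Return the categories to evaluate, de-duplicated, in the order reported."""
    categories: list[str] = []
    for mode in failure_modes:
        if mode not in FAILURE_MODE_TO_CATEGORY:
            raise KeyError(f"unknown failure mode: {mode}")
        category = FAILURE_MODE_TO_CATEGORY[mode]
        if category not in categories:
            categories.append(category)
    return categories


reported = ["inconsistent_term_definitions", "agent_forgets_conversation",
            "inconsistent_term_definitions"]
print(recommend(reported))
```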

If your need is in category four, three deeper Atlan resources address the next questions:

- For comparison shopping, see 10 context management tools compared side-by-side

- For implementation, see 5 strategies for enterprise context management

- For build-vs-buy across the full stack, see context engineering platforms compared

Where Atlan fits in the category map

Atlan is an enterprise context platform: category four. It is the governance and activation layer that sits under the other three categories. Frameworks, retrieval infrastructure, and agent platforms still belong in the stack alongside it. The two Atlan products that touch the AI agent path directly are Atlan Context Studio and the Atlan MCP server.

Atlan Context Studio bootstraps agent context from existing BI dashboards, reports, SQL queries, and metadata. Domain experts review the AI-generated context in a human-in-the-loop workflow before release. Context Studio also generates evaluation suites from real business questions and stores context in a versioned Context Repo that propagates to Snowflake Cortex, Claude, and custom agents.

The Atlan MCP server is the activation layer. It is available as a hosted per-tenant deployment and as a local server you can run with Docker or uv. Per Atlan’s MCP product documentation, supported clients today include Claude, Cursor, Windsurf, and Microsoft Copilot Studio. The MCP server is an activation surface, not an agent runtime. It does not orchestrate multi-step workflows. It exposes search, lineage, metadata updates, governance actions, glossary creation, and data quality enforcement, and inherits the permissions already configured in Atlan.

For the head-to-head with the most-cited alternative in this tier, see Atlan vs DataHub.

How to use this category map for your business

Use the four-category map to stop buying overlapping tools. Diagnose the failure mode first. Match it to a category second. Read the deeper resource for that category third. Most enterprises need fewer tools than vendors imply, deployed in a different order.

A practical next step by category:

- Comparison shopping in tier four: compare 10 context management tools side-by-side

- Implementing tier four context infrastructure: 5 strategies for enterprise context management

- Composing across all four tiers: context engineering platforms compared

For the question underneath all four categories — what a context layer actually is and why enterprises are missing it — see what is a context layer and the context layer resource page.

FAQs about context management software

What is context management software?

Context management software is the set of tools enterprises use to deliver governed context to AI agents and LLMs at inference time. It is not one product type. The category covers four sub-categories: context engineering frameworks, RAG and retrieval infrastructure, AI agent platforms, and enterprise context platforms. They operate at different layers of the AI stack and solve different problems.

What is the difference between context management software and context engineering platforms?

Context engineering is a per-application discipline. A developer uses it to curate what enters one agent’s context window. Context management is an organization-wide capability. A platform team uses it to govern what context exists across the enterprise. Context engineering platforms are one of the four categories inside the broader context management software market.

Is context management software the same as configuration management software?

No. Context management software delivers information to AI agents at inference time. Configuration management software delivers settings to servers and applications at deployment time. The two share three syllables and nothing else. Context management lives in the AI stack. Configuration management lives in the DevOps stack. They are bought by different teams for different problems.

What are the four categories of context management software?

The four categories are context engineering frameworks (Zep, LangChain, LlamaIndex, Mem0), RAG and retrieval infrastructure (Pinecone, Weaviate, Chroma), AI agent platforms (AWS Bedrock Agents, Relevance AI, Langflow), and enterprise context platforms (Atlan, DataHub). Each category solves a problem at a different layer of the AI stack. Most enterprises end up running two to four of them in parallel.

Do enterprises need standalone context management software, or can existing tools handle it?

Enterprises need standalone software for at least one category, usually enterprise context platforms, because existing data catalogs, prompt management tools, and observability stacks do not deliver governed context to AI agents at inference time. The other three categories are sometimes covered by adjacent investments. Enterprise context governance is the layer most often missing.

How do you choose context management software?

Start with the failure mode your team is hitting now. One agent forgetting its own conversation points to a framework. Bad retrieval points to retrieval infrastructure. Deployment failure points to an agent platform. Inconsistent definitions across agents point to an enterprise context platform. Buy the category that matches the failure mode, not the category with the loudest marketing.