Why is context engineering important in 2026?

In mid-2025, we entered the era of intelligence abundance. The flagship AI models released by OpenAI, Anthropic, and Google could solve complex math problems and write production-ready code.

Around the same time, AI experts and organizations recognized the limitations of prompt engineering and began recommending that organizations shift to context engineering as part of their enterprise AI strategy. Why?

While prompt engineering focuses on crafting better instructions for LLMs, context engineering addresses something deeper. It focuses on delivering the right information to AI agents, in the right format, at the right time, so they have everything they need to accomplish a multi-step workflow. This process of dynamic context optimization helps organizations reduce context rot and build a unified institutional memory, which lays the foundation for agentic systems to deliver consistent outcomes.

Industry analysts endorsed the shift as well. In July 2025, Gartner declared that “context engineering is in, and prompt engineering is out,” urging AI leaders to prioritize context-aware architectures over clever prompting.

Looking back, four converging realizations led to this shift.

1. The failure of enterprise AI pilots

According to MIT’s 2025 State of AI in Business study, 95% of enterprise AI pilots delivered zero measurable ROI. The root cause is not the models or their intelligence. It’s the lack of context.

Consider a simple request: “Give me a list of our top 10 customers.” Sounds straightforward. But what does “top” mean? For sales teams, it’s the accounts with the highest contract value. For product teams, it’s the customers with the strongest adoption and usage. For customer success, it’s the ones most likely to renew. Each team has a different definition of “top.”

So, when asked this question, an AI agent needs to know who is asking, which definition of “top” applies, what time period to use (quarter or year), and where the canonical data lives. Without understanding the context, an AI agent might misinterpret the question and deliver an incorrect answer.

How an agent understands context - Image by Atlan.
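
The "top 10 customers" example can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed implementation: the `Customer` fields, the team names, and the `TOP_DEFINITIONS` mapping are all hypothetical stand-ins for definitions that would live in a governed glossary.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Customer:
    name: str
    contract_value: float       # annual contract value
    weekly_active_users: int    # adoption and usage signal
    renewal_likelihood: float   # 0.0 to 1.0


# Hypothetical mapping from the asking team to the metric that defines "top".
TOP_DEFINITIONS = {
    "sales": lambda c: c.contract_value,
    "product": lambda c: c.weekly_active_users,
    "customer_success": lambda c: c.renewal_likelihood,
}


def top_customers(customers, team, n=10):
    """Rank customers using the definition of "top" that applies to the asking team."""
    if team not in TOP_DEFINITIONS:
        # Refuse to guess: a missing definition is a context gap, not a default.
        raise KeyError(f"No definition of 'top' registered for team {team!r}")
    return sorted(customers, key=TOP_DEFINITIONS[team], reverse=True)[:n]


customers = [
    Customer("Acme", 900_000, 120, 0.55),
    Customer("Globex", 300_000, 950, 0.70),
    Customer("Initech", 150_000, 400, 0.95),
]
top_for_sales = top_customers(customers, "sales", n=1)
top_for_product = top_customers(customers, "product", n=1)
```

The same question returns a different customer for each team, which is why the agent needs to know who is asking before it answers.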

2. The performance of AI agents relied on context

The “missing context” problem is not new. It has existed since the earliest chatbots, but the consequences were smaller.

When a chatbot fetches the price of a particular product, the action is often one-dimensional. It refers to the internal knowledge repository and provides sales or support agents with all information about the product’s price. The sales or support agent interprets the available information and makes a final decision.

That is not the case with agents. AI agents are autonomous. They take action: they approve discounts, escalate tickets, and generate contracts. When an agent acts on incomplete context, the damage can be significant.

When you ask an AI agent about the pricing of a product, the AI agent pulls together:

- Details of the customer to understand if it’s a new deal or a renewal

- Contract terms and approval chains to know what’s already been agreed for a particular customer

- Regulatory and compliance constraints to avoid violations

- Cross-system decision history to stay consistent with past commitments

Look closely and you can see that each of these pieces of information lives in a different system, owned by a different team.

So, context is crucial for an AI agent to make sense of the data, review similar past requests, and make a decision.

3. Context windows created architectural constraints

As models expanded from 4K to 100K+ tokens, organizations found that flooding them with irrelevant information degraded performance. The challenge wasn’t token limits but context precision: getting the right information, in the right format, at the right time.

Gartner’s 2025 research emphasizes that “strategically managing context for each step of an agentic workflow” is critical for accuracy and reliability. This shift recognizes that AI success depends less on clever prompting and more on robust information infrastructure.

Organizations that treat context engineering as core infrastructure rather than an afterthought report dramatically different outcomes. Research from Anthropic found that enterprises with effective context management successfully deploy sophisticated AI systems, while those with fragmented context struggle even with simple use cases.

4. Context became the competitive moat for organizations

When every company has access to the same foundation models, the differentiator is no longer which model you use. It’s what your model knows about your business.

As one panelist put it at Atlan’s Great Data Debate: “If my model only knew what my company knows, we’d be 100x more productive.”

The organizations pulling ahead in 2026 aren’t the ones with the biggest AI budgets. They’re the ones that have turned their institutional knowledge — definitions, workflows, decision history, exception logic — into machine-readable context that any AI system can use.

The emergence of semantic layers, context graphs, and active metadata platforms reflects enterprise demand for context infrastructure. Organizations are investing in systems that capture, govern, and serve context rather than manually engineering it for each AI application.

Prompt engineering vs. context engineering

Understanding this shift is easier with a direct comparison. Prompt engineering hasn’t become irrelevant; it’s become one layer inside a much larger system.

| | Prompt engineering | Context engineering |
|---|---|---|
| What it is | Crafting the right instructions for a single model interaction | Designing systems that deliver the right information, tools, and memory to AI agents |
| How context is provided | Manually written into the prompt by a developer | Dynamically assembled at runtime from databases, APIs, documents, and decision logs |
| What happens at scale | Each new use case requires a new, hand-tuned prompt | Feedback loops give rise to institutional memory that provides accurate context for AI agents |
| Who owns it | Individual developers or prompt specialists | Cross-functional teams: data, AI, governance, and domain experts |
| Institutional memory | None. Every interaction starts from scratch | Decision traces accumulate over time, so agents learn from past outcomes |
| Risks | Poorly worded prompt leads to a bad answer | Missing or stale context leads to a confidently wrong action |

Prompt engineering still matters. It’s one input into the context window. But in 2026, it’s the context layer that wraps around it that determines whether enterprise AI actually works.

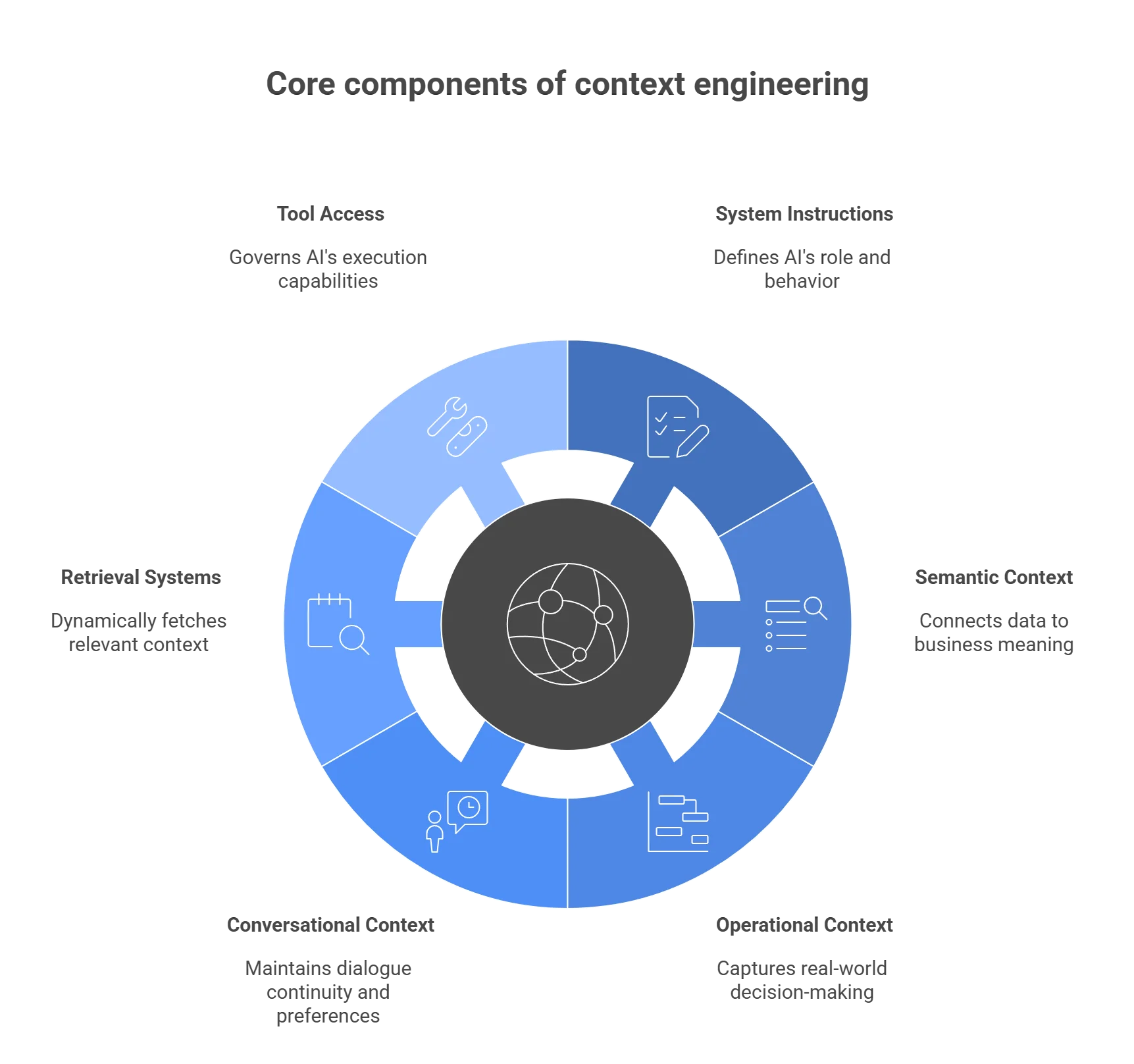

What are the core components of context engineering?

Effective context engineering operates through multiple interconnected layers, each contributing essential information that AI systems need to reason accurately. Understanding these components helps organizations build a comprehensive context infrastructure rather than fragmented solutions.

The core components of context engineering - Image by Atlan.

1. System instructions and role definitions

System instructions tell an AI agent what it is, what it can do, and where its boundaries are.

- Role and domain scope: “You are a financial analyst for EMEA revenue reporting,” not a generic assistant

- Behavioral constraints: What the agent must never do (expose PII, bypass approval chains, make assumptions about missing data)

- Decision frameworks: Which rules apply first? For example, regulatory constraints override business preferences, and company policy overrides individual requests

- Organizational hierarchy: Who can approve exceptions, which team owns which definitions, and where to escalate in case of ambiguity

The difference between a useful AI agent and a sloppy one often comes down to how precisely these instructions encode your organization’s actual operating rules — not generic best practices.
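
A decision framework of this kind can be encoded as an explicit precedence order rather than left to the model's judgment. The sketch below is illustrative only: the `Rule` type and the single total ordering in `PRECEDENCE` are assumptions (the text above only states that regulatory constraints beat business preferences and that company policy beats individual requests).

```python
from dataclasses import dataclass

# Lower number means higher precedence. The full ordering is an assumption
# made for illustration; an organization would define its own.
PRECEDENCE = {
    "regulatory": 0,
    "company_policy": 1,
    "business_preference": 2,
    "individual_request": 3,
}


@dataclass(frozen=True)
class Rule:
    source: str          # one of the PRECEDENCE keys
    max_discount: float  # largest discount this rule allows, as a fraction


def effective_rule(rules):
    """Return the rule that comes from the highest-precedence source."""
    return min(rules, key=lambda r: PRECEDENCE[r.source])


rules = [
    Rule("individual_request", 0.40),
    Rule("company_policy", 0.20),
    Rule("regulatory", 0.15),
]
winner = effective_rule(rules)
```

Keeping the ordering in data means it can be reviewed and versioned by the team that owns it, instead of being buried in prompt wording.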

2. Semantic and structural context

Semantic context bridges technical data structures with business meaning.

Semantic layers standardize definitions across systems: what “customer” means, how “revenue” is calculated, and which fields represent “churn.”

This layer also answers other foundational questions, like:

- Which table contains customer data?

- How are customers, accounts, and contracts connected?

- What constitutes an “active” user?

Without semantic context, AI systems make incorrect assumptions about the meaning of data.

Structural context adds relationships and lineage. Column-level lineage shows how individual data fields are connected across various internal services and pipelines. Business glossaries define canonical terms. Data quality metrics indicate trustworthiness. Together, these elements help AI systems navigate complex data landscapes with confidence.

3. Operational context and procedural memory

This is the layer most enterprises are missing. And it’s the one that separates AI demos from AI that actually works.

Operational context captures how your organization makes decisions. This includes:

- Decision traces: Why a discount was approved, which policy was applied, who signed off on the deal, and when

- Exception logic and precedents: The kind of institutional knowledge that no database captures today

- Standard operating procedures: Escalation paths, approval workflows, compliance checklists encoded in machine-readable formats

- Cross-system synthesis: A single customer renewal decision pulls context from 6+ systems, including CRM, support tickets, usage data, Slack approvals, and billing

Context graphs store this procedural memory as queryable traces that agents can learn from.
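
To make "queryable traces" concrete, here is a minimal sketch of a decision trace as a plain record plus a precedent lookup. The `DecisionTrace` fields and the `precedents` helper are hypothetical; a real context graph would store these as nodes and edges rather than an in-memory list.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class DecisionTrace:
    customer: str
    decision: str      # e.g. "discount_approved"
    policy: str        # which policy was applied
    approved_by: str   # who signed off
    decided_on: date   # when
    rationale: str     # why


def precedents(traces, customer, decision):
    """Return matching past decisions for a customer, most recent first."""
    matches = [t for t in traces if t.customer == customer and t.decision == decision]
    return sorted(matches, key=lambda t: t.decided_on, reverse=True)


traces = [
    DecisionTrace("Acme", "discount_approved", "renewal-discount-policy",
                  "vp_sales", date(2025, 3, 1), "multi-year renewal"),
    DecisionTrace("Acme", "discount_approved", "renewal-discount-policy",
                  "cfo", date(2025, 11, 12), "volume expansion"),
    DecisionTrace("Globex", "discount_denied", "renewal-discount-policy",
                  "vp_sales", date(2025, 6, 5), "below margin floor"),
]
recent = precedents(traces, "Acme", "discount_approved")
```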

4. Conversational and temporal context

Conversational context maintains dialogue history and user preferences through two kinds of memory:

- Short-term memory: Tracks current conversation flow, prior questions in this session, user’s stated intent

- Long-term memory: Stores patterns across sessions, such as how this user prefers to receive information, which questions they ask repeatedly, and what exceptions they typically request

Temporal context tracks the freshness of data for the agents:

- Temporal awareness: When the data was last refreshed, whether a policy is current or deprecated, and which metrics are seasonal

- Change signals: Schema changes, definition updates, and ownership transfers, all events that invalidate previously reliable context

This prevents AI systems from citing outdated policies or using stale data in decision-making.

5. Retrieval and integration systems

The retrieval layer is where context engineering becomes a live system rather than a static configuration. Instead of pre-loading everything into the prompt, modern systems fetch what’s needed at the moment of decision.

- RAG (Retrieval-Augmented Generation): Querying vector databases, document stores, and knowledge bases to ground responses in source material

- Live data integration: Real-time connections to CRMs, warehouses, BI tools, and collaboration platforms, pulling current state rather than cached snapshots

- Semantic search: Matching user intent to relevant context, not just keyword matches

- Context delivery protocols: Standards like MCP (Model Context Protocol) that let agents query the metadata lakehouse, lineage, and quality signals through governed interfaces

The best systems retrieve just enough context to be accurate without overwhelming the model’s attention.
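
One simple way to retrieve "just enough" is to rank candidate chunks by relevance and keep only what fits a token budget. The sketch below assumes the relevance scores and token counts come from an upstream retriever or reranker; `select_context`, the threshold, and the sample chunks are hypothetical.

```python
def select_context(chunks, budget_tokens, min_score=0.5):
    """Greedily keep the most relevant chunks that fit within the token budget.

    `chunks` is a list of (text, relevance_score, token_count) tuples.
    """
    selected, used = [], 0
    for text, score, tokens in sorted(chunks, key=lambda c: c[1], reverse=True):
        if score < min_score:
            break  # every remaining chunk is even less relevant
        if used + tokens <= budget_tokens:
            selected.append(text)
            used += tokens
    return selected


chunks = [
    ("revenue definition", 0.92, 120),
    ("Q3 board deck", 0.35, 900),
    ("renewal policy", 0.81, 300),
    ("pricing history", 0.77, 500),
]
context = select_context(chunks, budget_tokens=500)
```

A greedy cut like this is deliberately crude. It trades some recall for a predictable prompt size, which is usually the safer default when attention dilution is the concern.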

6. Tool access and action capabilities

AI agents need to know which tools they can use, what each tool does, and when to use which one.

- Tool discovery: What databases, APIs, calculators, and workflows are available to this agent in this context

- Capability boundaries: Which actions require human approval, which can be executed autonomously, which are read-only vs. write-enabled

- Execution governance: Audit trails for every tool invocation, covering what was called, with what parameters, and what changed

- Inter-agent coordination: When multiple agents operate in the same enterprise, they need shared information to avoid conflicting actions. Standards like MCP and A2A (Agent2Agent Protocol) are emerging to address this

Together, these layers create the comprehensive understanding AI agents need to perform reliably in enterprise environments.
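
Capability boundaries and execution governance can be illustrated with a small gateway that every tool call passes through. This is a sketch under stated assumptions: `Tool`, `ToolGateway`, and the two example tools are hypothetical and do not represent MCP, A2A, or any vendor API.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Tool:
    name: str
    run: Callable[..., object]
    writes: bool = False          # read-only vs. write-enabled
    needs_approval: bool = False  # requires human sign-off before running


@dataclass
class ToolGateway:
    tools: dict = field(default_factory=dict)
    audit_log: list = field(default_factory=list)

    def register(self, tool):
        self.tools[tool.name] = tool

    def invoke(self, agent, name, approved=False, **params):
        tool = self.tools[name]
        allowed = approved or not tool.needs_approval
        # Record every attempt, including blocked ones.
        self.audit_log.append({
            "agent": agent, "tool": name, "params": params,
            "writes": tool.writes, "allowed": allowed,
        })
        if not allowed:
            raise PermissionError(f"{name} requires human approval")
        return tool.run(**params)


gateway = ToolGateway()
gateway.register(Tool("lookup_price", lambda sku: {"sku": sku, "price": 100}))
gateway.register(Tool("apply_discount", lambda sku, pct: f"{pct}% off {sku}",
                      writes=True, needs_approval=True))
quote = gateway.invoke("pricing-agent", "lookup_price", sku="A1")
```

Calling `apply_discount` without `approved=True` raises `PermissionError`, and the blocked attempt still appears in `audit_log`.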

How do you strategically implement context engineering?

Successful context engineering requires organizations to build the right system architecture to establish a unified institutional memory and context layer. Organizations that treat context as a strategic capability rather than a tactical prompt-engineering problem achieve dramatically better AI outcomes.

Start with high-value, contained domains

Context engineering is complex, so it is better not to boil the ocean. Atlan’s approach to context engineering recommends starting with one of three critical areas: customer analytics, financial reporting, or supply chain operations.

Choose a domain where context gaps are causing measurable pain and where existing metadata is strongest.

Here are a few signals to help you get started:

- Proven demand: If someone built a dashboard for it, there’s a question worth answering with context engineering

- 80% accuracy threshold: Below 80%, business users reject the system; above 80%, the adoption flywheel begins

- Internal proof points: One successful domain unlocks budget and buy-in for the next

Build from existing metadata and semantic models

Most enterprises already have more context than they realize. But it’s scattered across BI tools, dbt models, glossaries, and documentation. The fastest path to building a working context layer starts by harvesting what already exists.

- Ingest semantic layers from dbt, Looker, or Tableau, which already encode metric definitions and business logic, rather than recreating them from scratch

- Harvest business glossaries that contain term definitions, ownership, and domain boundaries, and make them machine-readable

- Extract relationships from data lineage to understand data flows

- Capture usage patterns and quality signals from active metadata

The best way to get started is by implementing Atlan’s metadata lakehouse, which unifies these disparate sources into a queryable context layer.

Design for continuous refinement

Context engineering isn’t a one-time project. The system that wins is the one that improves its context delivery over time.

- Feedback loops: Accuracy creates trust, trust drives adoption, adoption generates corrections, corrections improve accuracy

- Version context like code: When definitions change, dependent AI systems need to know; active metadata captures these change signals in real time

- Systematic testing: Treat AI context validation like QA, not manual spot-checking

- Track performance metrics: Monitor completeness, accuracy, staleness indicators, conflict rates, and retrieval relevance

Federate ownership while centralizing infrastructure

No single team can define all context for an enterprise. Context engineering fails when data teams try to centrally document everything, or when AI teams build fragmented context stores for each use case. The answer is federated ownership on shared infrastructure.

- Data platform teams provide infrastructure, connectors, and governance frameworks

- Domain experts own definitions, rules, and quality standards for their areas

- AI teams consume context through standard interfaces and flag what’s missing

- Governance teams ensure consistency, compliance, and auditability across domains

This model scales because context creation happens where knowledge lives, while consumption remains centralized and consistent. See context layer ownership for a deeper breakdown.

Choose composable infrastructure over monoliths

Context must survive the inevitable churn of AI tools, cloud strategies, and business pivots. To keep the context infrastructure open and portable, organizations should use:

- Semantic layers for metric definitions and business logic

- Graph databases for relationships and lineage

- Vector stores for document search and semantic retrieval

- Metadata platforms for governance, integration, and context delivery

Platforms like Atlan serve as the connective tissue, ingesting semantic models from existing tools, capturing lineage across systems, and exposing unified context through standard protocols like MCP.

What context failures cause AI systems to break?

Most AI failures aren’t model failures. They’re context failures. Understanding the different types of context failures will help organizations diagnose problems and design more resilient systems.

Missing context: The information gap

This is the most fundamental failure. An agent asked to analyze regional sales performance without proper definitions produces irrelevant results.

How it manifests:

- Hallucinated answers filling gaps with plausible-sounding fiction

- Generic responses that can’t address organization-specific situations

- Decisions made without considering relevant constraints or precedents

- Repeated questions about information already provided earlier in the conversation

How to address it:

- Map what information exists, where it lives, and how to retrieve it before deploying agents

- Start with Atlan’s approach, which involves inventorying data assets, capturing metadata, and building retrieval paths before deploying AI systems

Stale context: The freshness problem

Context that was accurate yesterday becomes misleading today when business rules change, data refreshes, or policies update.

Why it happens:

- Policy documents that change but aren’t versioned or dated

- Metric definitions that evolve without clear change management

- Seasonal business rules that apply only during specific periods

- Agents treating last quarter’s data with the same weight as this morning’s refresh

How to address it:

- Version context like code: Every definition change triggers downstream notifications

- Use active metadata to capture change signals in real time

- Set staleness thresholds: Flag context that hasn’t been reviewed within a defined window
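
A staleness threshold can be as simple as comparing each item's last review date against a maximum age. The sketch below is illustrative: the item names, the 30-day window, and the `stale_items` helper are assumptions, and a production system would read review timestamps from its metadata store.

```python
from datetime import datetime, timedelta


def stale_items(last_reviewed, max_age, now):
    """Return names of context items not reviewed within `max_age`, oldest first."""
    overdue = [(ts, name) for name, ts in last_reviewed.items() if now - ts > max_age]
    return [name for ts, name in sorted(overdue)]


last_reviewed = {
    "revenue_definition": datetime(2026, 1, 10),
    "discount_policy": datetime(2025, 9, 1),
    "churn_definition": datetime(2025, 11, 20),
}
flagged = stale_items(last_reviewed, max_age=timedelta(days=30), now=datetime(2026, 1, 15))
```

Passing `now` explicitly keeps the check deterministic and easy to test. Flagged items can then be down-weighted or withheld until an owner reviews them.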

Conflicting context: The consistency crisis

When different sources provide contradictory information, AI systems arbitrarily pick one interpretation. Finance defines “customer” differently than Sales. Production data conflicts with the semantic layer.

How it manifests:

- Inconsistent answers to the same question, depending on which source the agent retrieves first

- Different results when querying equivalent data through different paths

- AI systems choosing arbitrary definitions when multiple definitions exist for the same term

How to address it:

- Establish canonical definitions in a governed business glossary that agents reference as the single source of truth

- Use column-level lineage to trace which path each metric follows, and flag when paths diverge

- Set conflict-detection rules that surface when agents encounter multiple definitions for a term
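
A basic conflict-detection rule groups definitions by term and flags any term that different sources define differently. The sketch below compares normalized text only, which is an assumption made for brevity; the `find_conflicts` helper and the sample definitions are hypothetical.

```python
from collections import defaultdict


def find_conflicts(definitions):
    """Return terms that have more than one distinct definition across sources.

    `definitions` is a list of (term, source, definition) tuples. Text is
    compared after trimming whitespace and lowercasing.
    """
    by_term = defaultdict(dict)
    for term, source, definition in definitions:
        by_term[term.lower()][source] = definition.strip().lower()
    return {
        term: sources
        for term, sources in by_term.items()
        if len(set(sources.values())) > 1
    }


definitions = [
    ("Customer", "finance", "An account with a paid invoice"),
    ("Customer", "sales", "Any account with a signed contract"),
    ("Churn", "finance", "No renewal within 30 days of expiry"),
    ("churn", "customer_success", "No renewal within 30 days of expiry "),
]
conflicts = find_conflicts(definitions)
```

Here only "customer" is flagged, because the two "churn" entries agree once whitespace and case are normalized. Exact-text matching will miss definitions that are worded differently but mean the same thing, so a real rule set would likely need human review of flagged terms.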

Irrelevant context: The noise problem

Context overload degrades performance as much as context scarcity. Flooding AI systems with marginally relevant information dilutes attention, increases latency, and introduces spurious correlations.

How it manifests:

- Slower response times as agents process unnecessary information

- Lower accuracy as the model’s attention spreads across irrelevant tokens

- Spurious correlations that lead to confident but wrong conclusions

How to address it:

- Prioritize retrieval precision over volume

- Use semantic search and reranking to filter context by relevance, not just keyword match

- Design context products scoped to specific domains and use cases

Permission-violated context: The security breach

AI agents that surface information users shouldn’t see create compliance risks and destroy trust.

How it manifests:

- Agents surfacing restricted data that bypasses row- or column-level access controls

- Sensitive information leaking through inference

- Compliance violations when AI outputs cross regulatory boundaries (GDPR, HIPAA, etc.)

How to address it:

Adopt security-aware context engineering:

- Embed policy enforcement and access controls directly into the context layer

- Apply the same row- and column-level security to AI retrieval that exists for human query access

- Maintain audit trails showing which context was accessed, by which agent, for what purpose
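
Row- and column-level security for retrieval means filtering before context reaches the agent, not after the model has seen it. The sketch below is a minimal illustration with hypothetical data: in practice the allowed columns and row predicate would be derived from the requesting user's existing access policies.

```python
def authorized_view(rows, allowed_columns, row_filter):
    """Apply row-level, then column-level access rules to retrieved records."""
    return [
        {col: value for col, value in row.items() if col in allowed_columns}
        for row in rows
        if row_filter(row)
    ]


rows = [
    {"customer": "Acme", "region": "EMEA", "arr": 900_000, "contact_email": "a@acme.example"},
    {"customer": "Globex", "region": "APAC", "arr": 300_000, "contact_email": "g@globex.example"},
]
# Hypothetical policy: an EMEA analyst may see EMEA rows, without contact details.
view = authorized_view(
    rows,
    allowed_columns={"customer", "region", "arr"},
    row_filter=lambda row: row["region"] == "EMEA",
)
```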

How does Atlan power context engineering for AI systems?

Atlan positions itself as the missing context layer between enterprise data and AI, providing the infrastructure organizations need to reliably move AI from pilots to production at scale.

Modern AI systems require more than data access — they need a comprehensive understanding of what data means, how it connects, which information is trustworthy, and what rules govern its use. Atlan’s metadata lakehouse serves as this foundation, capturing and activating context that makes AI systems effective.

Core capabilities of Atlan that enable context engineering at scale

- Unified semantic and context layers: Atlan ingests semantic definitions from dbt, BI tools, and warehouse-native layers, then enriches them with lineage, quality, ownership, and policies to create comprehensive context

- Column-level lineage as operational memory: Automated lineage traces how data transforms across systems, capturing not just technical dependencies but also the business logic and decision precedents embedded in pipelines

- Active metadata for dynamic context: Real-time metadata collection captures usage patterns, quality signals, and collaboration trails that reveal how organizations actually work

- Governance-aware context delivery: Policy enforcement and access controls ensure AI systems respect the same security and compliance constraints that protect human users

- MCP server for agent integration: Atlan’s Model Context Protocol implementation exposes metadata, lineage, and quality context through standard interfaces, enabling AI agents to query and reason about data assets safely

The architectural advantage is subtle but critical. Instead of each team stitching together context for their specific AI project, Atlan provides a federated, governed context infrastructure that every agent and application can draw from.

| Company | Outcome |
|---|---|
| Workday | 5x improvement in AI response accuracy through Atlan’s MCP server |
| Porto Insurance | 40% reduction in governance workload |
| Tide | 50 days of manual PII tagging compressed to 5 hours |
| Nasdaq | Data discovery time cut by one-third |

Context engineering in action: Real stories from real customers

"All of the work that we did to get to a shared language amongst people at Workday can be leveraged by AI via Atlan's MCP server. As part of Atlan's AI labs, we are co-building the semantic layers that AI needs with new constructs like context products."

Joe DosSantos, VP Enterprise Data & Analytics

Workday

"We have moved from privacy by design to data by design to now context by design. Atlan's metadata lakehouse is configurable across all tools and flexible enough to get us to a future state where AI agents can access lineage context through the Model Context Protocol."

Andrew Reiskind, Chief Data Officer

Mastercard

"One of the main issues we were facing was the lack of consistency when providing context around data, making context the missing layer in our data stack. The clearest outcome is that everyone is finally talking about the same numbers, which is helping us rebuild trust in our data."

Prudhvi Vasa, Analytics Leader

Postman

Wrapping up

Context engineering has emerged as the defining capability for reliable AI systems in 2026. The models are powerful enough. What’s missing is a unified context layer that delivers the right information, definitions, decision history, and governance rules to AI agents when they need them.

Successful implementations start focused, build from existing knowledge, design for continuous refinement, and federate ownership while centralizing infrastructure. They systematically address context failures — missing, stale, conflicting, irrelevant, or permission-violating information — through governance, monitoring, and feedback loops.

The path forward requires investing in context infrastructure that serves all AI applications rather than fragmenting context across isolated use cases. Modern platforms like Atlan provide the unified semantic layers, active metadata, and governance capabilities that transform scattered information into an operational context that AI systems can trust.

FAQs about context engineering

1. How is context engineering different from prompt engineering?

Prompt engineering is about crafting better instructions for a single AI interaction. Context engineering is about designing systems that automatically gather the right information, tools, and memory so the AI can answer correctly in the first place. A prompt tells the model how to respond. A context engineering system ensures the model has what it needs to respond accurately by making sense of multiple signals, such as business definitions, decision history, live data, and governance rules.

2. Do I need a context layer if I already have a semantic layer?

Yes. They solve different problems and work best together. A semantic layer standardizes metric definitions and business logic: what “revenue” or “active user” means across your organization. A context layer adds everything else AI agents need to act reliably: decision precedents, approval workflows, trust signals, temporal awareness, and governance policies. Think of the semantic layer as “what does this data mean?” and the context layer as “how should we use it, under which rules, based on which past decisions?”

3. What’s the difference between RAG and context engineering?

RAG (Retrieval-Augmented Generation) is a technique within context engineering, not a replacement for it. RAG retrieves documents from a knowledge base to ground an AI model’s responses in source material. Context engineering is the broader discipline that manages all information flowing into an AI system: structured data, semantic definitions, operational memory, tool access, conversation history, and governance rules.

4. How do you measure context engineering effectiveness?

Start with AI output quality: response accuracy, hallucination rate, task completion rate, and user trust score. Then track context health metrics: how complete your context coverage is, how fresh your definitions are, how often conflicting information surfaces, and how relevant retrieved context is to the actual query. Operational metrics matter too: how quickly context refreshes when business rules change, whether governance policies are consistently enforced, and how fast agents can retrieve the context they need.

5. Should data teams or AI teams own context engineering?

Both. Context engineering requires federated ownership. Data teams provide the platform infrastructure, connectors, and governance frameworks. AI teams drive consumption patterns and surface gaps in the available context. Domain experts (finance, sales, operations) own the definitions, business rules, and exception logic for their areas. Governance teams ensure consistency, compliance, and auditability. This collaborative model works because context creation happens where knowledge actually lives, while context consumption stays unified across all AI applications.

6. How long does it take to implement production-ready context engineering?

Initial context layers for focused domains typically take weeks to a few months to deploy. The fastest path is bootstrapping from what you already have: business glossaries, semantic models in dbt or BI tools, existing data lineage, and documentation. Organizations that start with a single high-value domain, such as customer analytics or financial reporting, can quickly reach a minimum viable context. Enterprise-wide deployment scales over quarters as you expand to adjacent domains, harden governance, and build feedback loops. The key is to start small and prove value before scaling.
