How AI analytics agents deploy: from chat to autonomous multi-agent systems. Image by Atlan.

Quick facts

| Attribute | Detail |
|---|---|
| What they are | Autonomous software systems that query, analyze, and report on business data without waiting for a human prompt |
| How they differ from BI | BI answers questions on demand. Agents pursue goals, monitor continuously, and surface findings on their own |
| Most common failure mode | No governed metadata context, so agents guess at definitions and pick the wrong sources |
| Production readiness gap | Only 11% of organizations have agents in production, per Deloitte’s Tech Trends 2026 |
| Critical prerequisite | A context layer that exposes lineage, semantic definitions, quality scores, and policies to the agent |
| Where Atlan fits | Provides the governed context layer that agents query before generating an answer |

How AI agents for data analytics differ from traditional BI tools

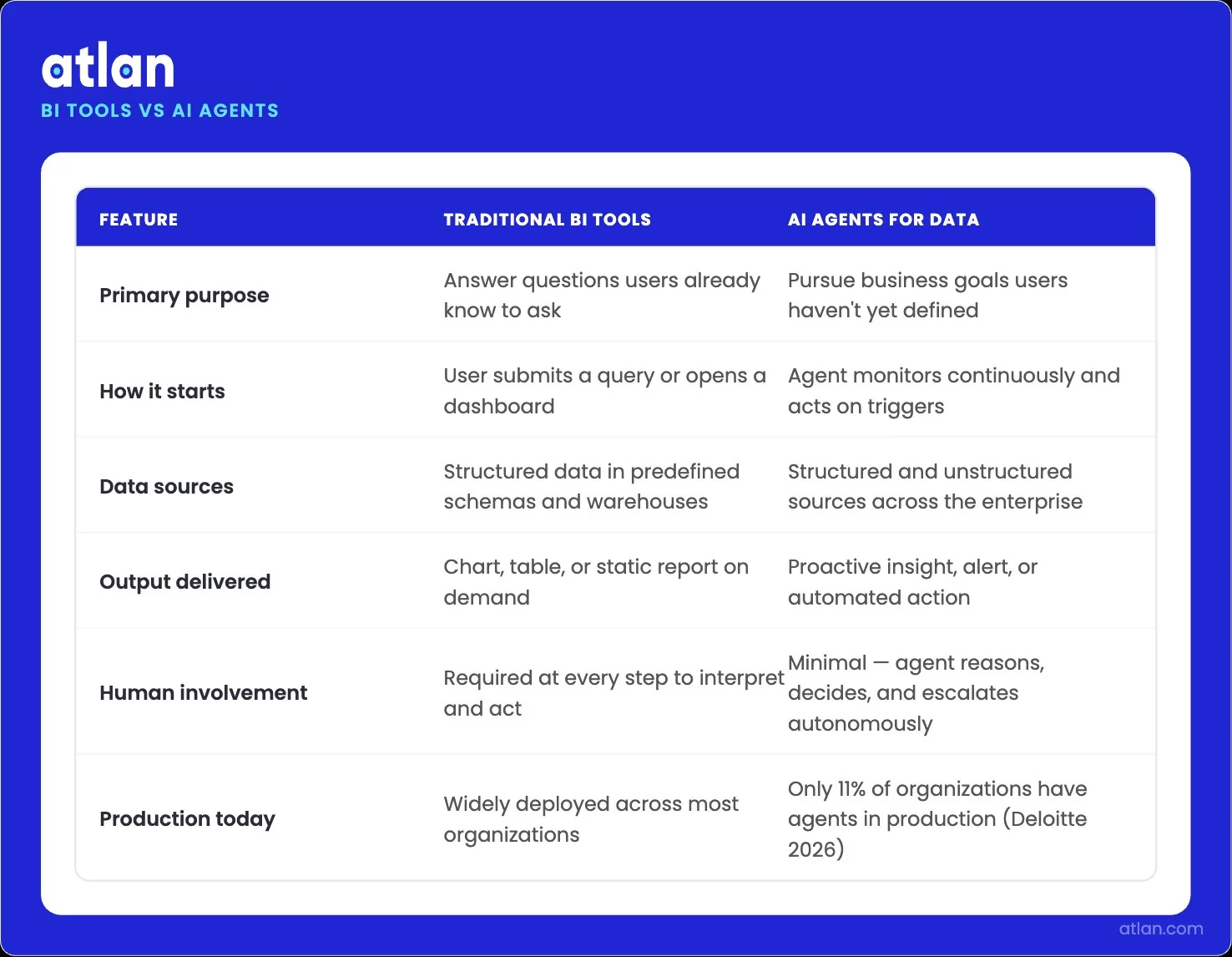

Traditional BI is reactive. A user asks a question, the system runs a query, and a chart appears. AI agents flip that order. They start with a goal, continuously monitor data, and decide on their own when to investigate or escalate. The shift is from “answer this query” to “watch this metric and tell me when something matters.”

The mechanics under the hood differ just as much. Dashboards depend on pre-built schemas and predefined logic. Agents need richer context: business definitions, lineage, quality signals, and access policies, all available at query time. Without that context, an agent that can write SQL still cannot reliably answer “what is our revenue?” because revenue can mean five different things across finance, sales, and product.

Traditional BI vs. AI agents for analytics

| Dimension | Traditional BI | AI agents for analytics |
|---|---|---|
| Query initiation | Human asks | Agent monitors and acts on goals |
| Data freshness | Refreshes on schedule | Streams and reasons in real time |
| Output format | Charts and tables | Insights, recommendations, and actions |
| Human role | Build queries, interpret results | Define goals, review decisions, correct errors |
| Failure mode | Stale dashboards | Confidently wrong answers |
| Governance requirement | Access controls and report sharing | Lineage, semantic definitions, policy enforcement at runtime |

The honest framing is that agents do not replace BI. They sit above it and inside it. A revenue dashboard still exists. The agent watches it, notices a drop, traces the cause through lineage, and pings the right person before the next executive meeting. The workflow only holds together if the agent can read the same governed definitions the dashboard uses.

How traditional BI and autonomous AI agents differ across key dimensions. Image by Atlan.

Why analytics AI agents fail without governed context

Per Gartner’s 2026 CIO and Technology Executive Survey, only a small share of organizations have deployed AI agents, even though more than 60% plan to do so within two years. Similarly, McKinsey found that only 23% of enterprises are scaling agentic AI in any business function, and no single function exceeds 10% scaled adoption. Most failures trace to the same root cause. The model and the agent framework are fine. What breaks is the context the agent has to work with.

What happens when an agent queries ungoverned columns?

The agent reads schema names and guesses at meaning. If your warehouse has revenue_v2, gross_revenue, and recognized_revenue sitting in three different tables, the agent picks one. Often the wrong one.

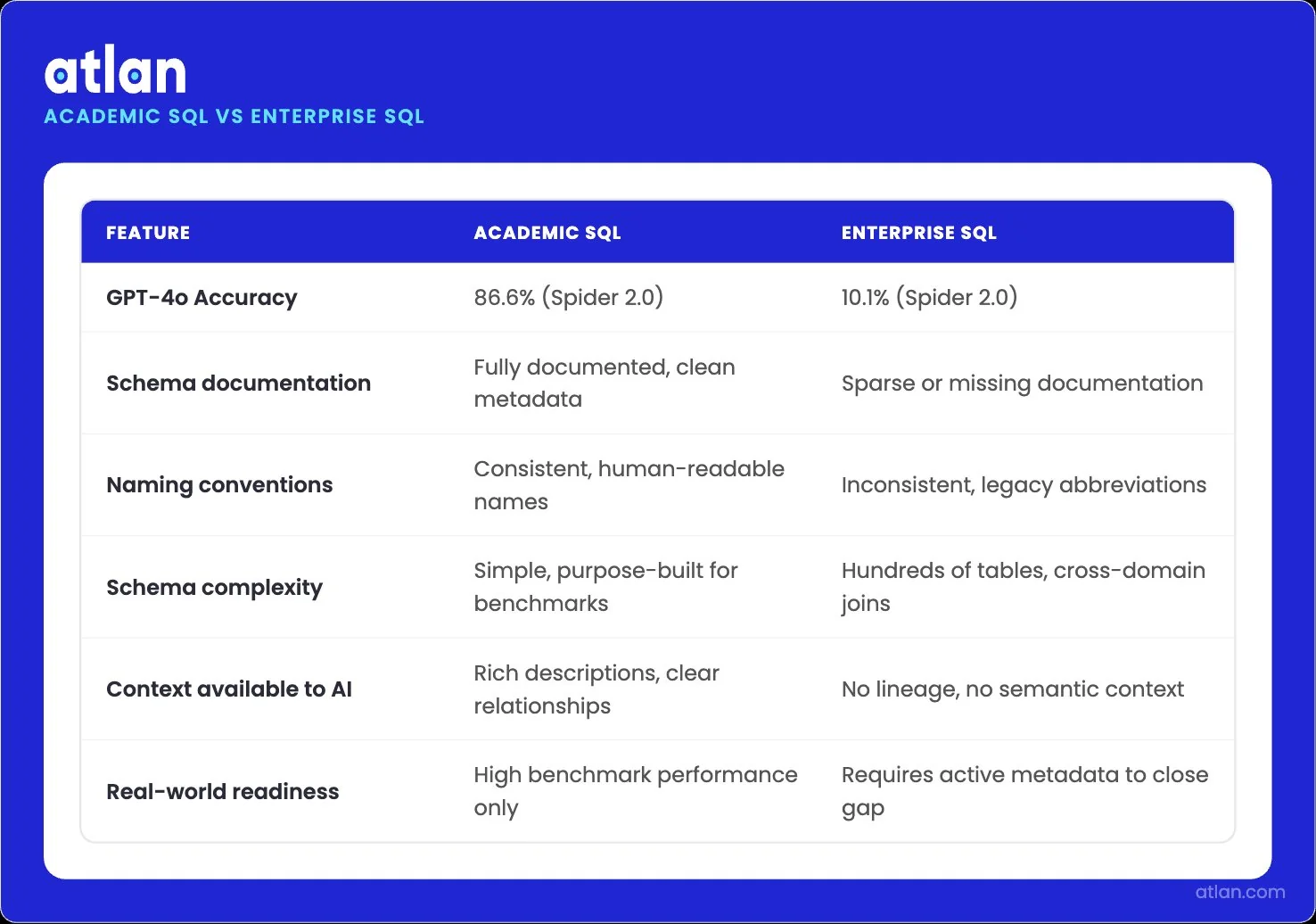

The peer-reviewed Spider 2.0 benchmark, presented as an oral paper at ICLR 2025, made this concrete: GPT-4o achieves 86.6% accuracy on academic text-to-SQL tasks but drops to 10.1% on enterprise schemas. The same model succeeds on the academic tasks because their schemas are well documented, consistently named, and free of legacy noise.

GPT-4o scores 86.6% on academic benchmarks but only 10.1% on real enterprise schemas. Image by Atlan.

Why conflicting metric definitions break NL-to-SQL agents

Revenue rarely has a single definition. Finance recognizes it on a schedule. Sales reports bookings. Product tracks usage-weighted revenue. When a business user asks an agent for “Q4 revenue,” the agent has no way to choose between them. Whatever it picks will be wrong for someone.

Research from data.world’s AI Lab quantified the impact. When LLMs query enterprise SQL databases directly, accuracy is just 16.7%. When the same models query through a knowledge graph and ontology, accuracy reaches 54.2%. The model did not improve. The context did.

This is why a semantic layer sits at the center of any working agent stack. It is also why the choice of semantic layer tool for analytics agents determines whether your agents can reliably cite their answers.

How missing lineage creates audit risk in automated reporting

If an agent generates a number, somebody downstream eventually has to explain where it came from. Without column-level lineage, that conversation goes badly. In an audit-relevant scenario where the trail shows an LLM produced or altered a figure, who is accountable? Most organizations do not have an answer.

Lineage solves this. When the agent reports a revenue figure, lineage shows which source tables fed it, which transformations applied, which quality checks passed, and who certified the upstream definitions. Without lineage, every agent’s answer is a black box, and black boxes do not survive audit committees.

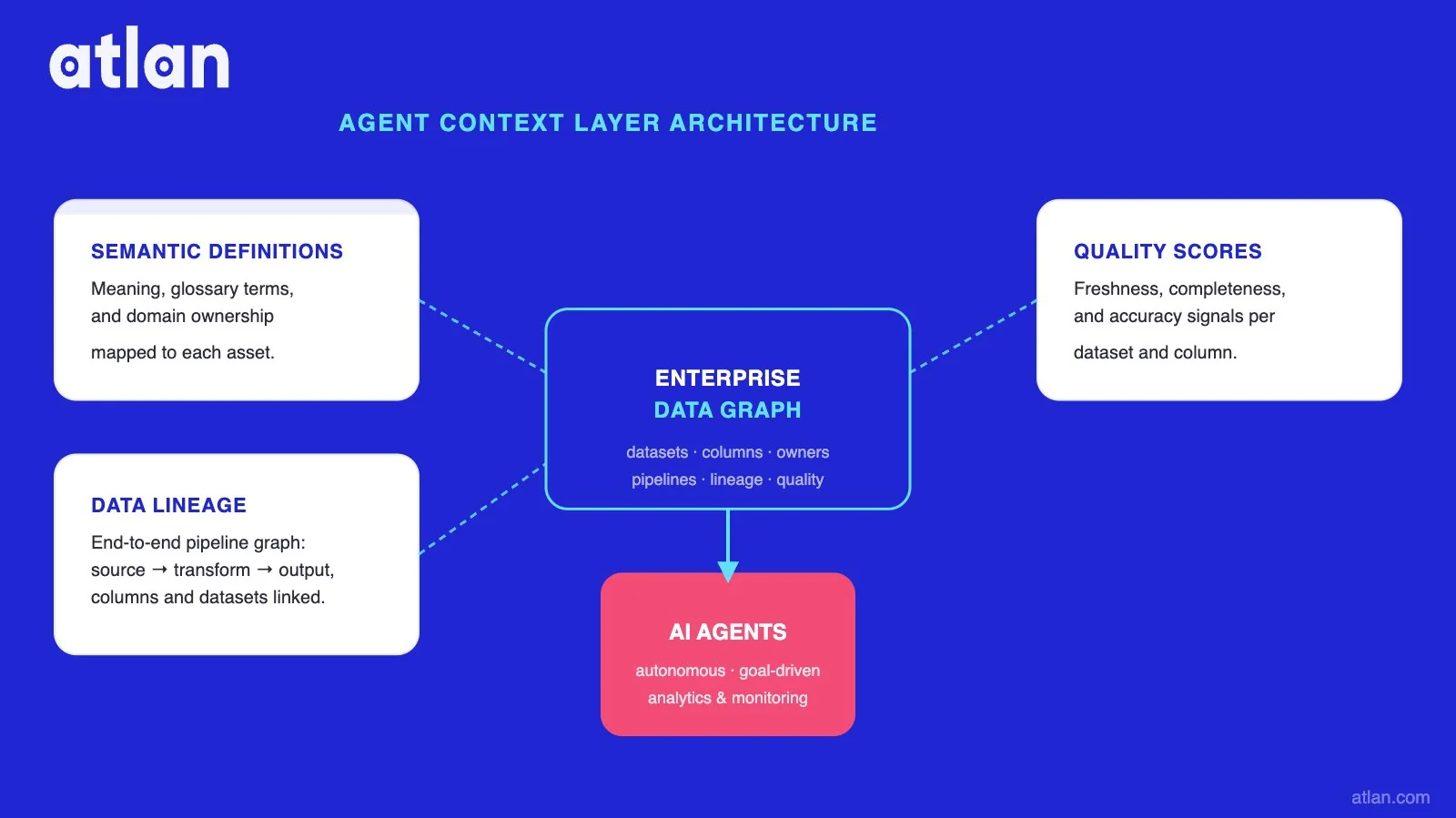

What the context layer gives analytics agents

The fix is not a smarter model. It is a context layer that the agent can query before it generates an answer. Atlan’s Enterprise Data Graph connects datasets, columns, pipelines, owners, quality scores, and lineage into a single live structure. Agents read from it through the Atlan MCP Server. The server uses the Model Context Protocol (MCP) to give AI tools such as Claude, ChatGPT, Cursor, and Copilot Studio a standard way to access governed metadata.

Three pieces of that infrastructure carry most of the weight.

Semantic definitions that resolve metric conflicts

The context layer holds one canonical definition of “revenue,” with the SQL logic, the owning team, and the certification status attached. When an agent queries the layer, it gets back a structured answer: the definition, its SQL, its owner, and whether it is certified. It does not get a list of similarly named columns to guess between.
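The sketch below shows that lookup. It assumes a hypothetical in-memory registry and a hypothetical `MetricDefinition` type; this is not Atlan's API, and the table names are made up for illustration. The agent resolves a business term to exactly one certified definition, and it raises an error instead of guessing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetricDefinition:
    """One governed definition of a business metric (hypothetical schema)."""
    term: str
    domain: str
    sql: str
    owner: str
    certified: bool


# Hypothetical registry: three teams define "revenue" differently,
# but only finance's definition is certified as canonical.
REGISTRY = [
    MetricDefinition("revenue", "finance",
                     "SELECT SUM(amount) FROM recognized_revenue", "finance-data", True),
    MetricDefinition("revenue", "sales",
                     "SELECT SUM(amount) FROM bookings", "sales-ops", False),
    MetricDefinition("revenue", "product",
                     "SELECT SUM(weighted_amount) FROM usage_revenue", "product-analytics", False),
]


def resolve_metric(term: str, registry: list[MetricDefinition]) -> MetricDefinition:
    """Return the single certified definition for a term, or raise instead of guessing."""
    certified = [d for d in registry if d.term == term.lower() and d.certified]
    if len(certified) != 1:
        # Zero or several certified candidates: surface the ambiguity to a human.
        raise LookupError(f"{term!r} has {len(certified)} certified definitions")
    return certified[0]


definition = resolve_metric("Revenue", REGISTRY)
print(definition.sql, "| owner:", definition.owner)
```

The key design choice is to raise on ambiguity rather than return the first match. An agent that silently picks one of three definitions is exactly the failure described earlier.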

Joint Atlan and Snowflake research using BirdBench, an open NL-to-SQL benchmark, tested this directly. Compared with a bare-schema baseline, context-enriched access improved accuracy 3x on talk-to-data queries. That access included column descriptions, glossary terms, and lineage.

Lineage that makes agent outputs traceable

Column-level data lineage lets an agent trace any metric back to its source. When the agent answers “Q4 revenue is $84M,” the response can include the lineage path: this number came from these tables, was processed by these dbt models, and was certified by this team. Auditors get what they need without anyone having to reconstruct the trail by hand.

This also fixes a second-order problem. When upstream data changes, lineage identifies which of the agent’s earlier answers depended on it, so those answers can be flagged as stale. That signal is the difference between an agent that confidently repeats a wrong number and one that flags itself before a human asks.
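Both behaviors above reduce to walking a lineage graph upstream: tracing a metric to its sources, and flagging an earlier answer as stale. The sketch below assumes a hypothetical adjacency map and hypothetical last-modified timestamps. It does not reflect Atlan's data model, and it uses tables instead of columns for brevity.

```python
from collections import deque
from datetime import datetime

# Hypothetical lineage: each asset maps to its direct upstream inputs.
# Table-level for brevity; column-level lineage has the same graph shape.
UPSTREAM = {
    "dashboard.q4_revenue": ["dbt.fct_revenue"],
    "dbt.fct_revenue": ["dbt.stg_invoices", "dbt.stg_refunds"],
    "dbt.stg_invoices": ["raw.invoices"],
    "dbt.stg_refunds": ["raw.refunds"],
    "raw.invoices": [],
    "raw.refunds": [],
}

# Hypothetical last-modified timestamps for each asset.
LAST_MODIFIED = {
    "dbt.fct_revenue": datetime(2026, 1, 5, 2, 0),
    "dbt.stg_invoices": datetime(2026, 1, 5, 1, 0),
    "dbt.stg_refunds": datetime(2026, 1, 5, 1, 0),
    "raw.invoices": datetime(2026, 1, 5, 0, 30),
    "raw.refunds": datetime(2026, 1, 6, 9, 15),  # changed after the answer was given
}


def upstream_path(asset: str) -> list[str]:
    """Breadth-first walk returning every upstream asset that feeds `asset`."""
    seen, order, queue = {asset}, [], deque([asset])
    while queue:
        for parent in UPSTREAM.get(queue.popleft(), []):
            if parent not in seen:
                seen.add(parent)
                order.append(parent)
                queue.append(parent)
    return order


def stale_inputs(asset: str, answered_at: datetime) -> list[str]:
    """Upstream assets modified after an answer was produced: reasons to re-check it."""
    return [a for a in upstream_path(asset)
            if LAST_MODIFIED.get(a, datetime.min) > answered_at]


answered_at = datetime(2026, 1, 5, 8, 0)
print("lineage:", upstream_path("dashboard.q4_revenue"))
print("stale because of:", stale_inputs("dashboard.q4_revenue", answered_at))
```

The same traversal serves both purposes. Its output is the audit trail, and comparing it against change timestamps is the staleness check.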

Quality scores agents use before querying

Active metadata updates continuously as data changes. Quality scores, ownership flags, and last-modified timestamps stay current. Before an agent queries a table, it can check whether the table is certified, whether quality checks have passed in the last 24 hours, and whether the data is fit for purpose.

Stale or broken data gets flagged before the agent runs the query, not after the user spots a wrong number.
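Below is a sketch of such a pre-query gate. The metadata fields (`certified`, `last_check_passed_at`, `open_failures`) are hypothetical and do not come from any specific catalog's schema. The 24-hour window is the one mentioned above.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class TableMetadata:
    """Hypothetical trust signals an agent reads before querying a table."""
    name: str
    certified: bool
    last_check_passed_at: Optional[datetime]  # None if no check has ever passed
    open_failures: int  # quality checks currently failing


def fit_to_query(meta: TableMetadata, now: datetime,
                 max_age: timedelta = timedelta(hours=24)) -> tuple[bool, str]:
    """Return (ok, reason); the agent runs its query only when ok is True."""
    if not meta.certified:
        return False, f"{meta.name} is not certified"
    if meta.open_failures > 0:
        return False, f"{meta.name} has {meta.open_failures} failing quality checks"
    if meta.last_check_passed_at is None or now - meta.last_check_passed_at > max_age:
        hours = max_age.total_seconds() / 3600
        return False, f"{meta.name} has no passing check in the last {hours:.0f}h"
    return True, "ok"


now = datetime(2026, 1, 6, 9, 0)
tables = [
    TableMetadata("analytics.orders", True, now - timedelta(hours=3), 0),
    TableMetadata("sandbox.orders_tmp", False, None, 0),
    TableMetadata("analytics.refunds", True, now - timedelta(hours=30), 0),
]
for table in tables:
    print(fit_to_query(table, now))
```

The gate returns a reason string, not just a boolean. That lets the agent tell the user why it declined to answer, rather than failing silently.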

How semantic definitions, lineage, and quality scores power AI agents on the Enterprise Data Graph. Image by Atlan.

AI agents for data analytics: Use cases that work in production

Not every use case is ready for production. The ones below have shipped at named companies and have supporting evidence. They share a common pattern: each works because the agent has bounded context, governed definitions, and a clear feedback loop.

Revenue metric discrepancy detection and resolution

A finance dashboard says revenue is $84M, but the sales report says $86M. An agent watches both and traces the lineage. It identifies the gap as sales bookings that finance has not yet recognized as revenue, and presents the explanation before the next leadership meeting. This works only when the agent can query certified definitions and their lineage.
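The arithmetic behind that explanation is a reconciliation. Both totals are computed from the same booking records, and the gap is attributed to bookings that sales counts but finance has not yet recognized. The records below are hypothetical, chosen to reproduce the $84M versus $86M example. A real reconciliation would join two systems on a shared key; a single list keeps the sketch short.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Booking:
    """Hypothetical booking record; `recognized` means finance has booked it as revenue."""
    deal_id: str
    amount_musd: float
    recognized: bool


BOOKINGS = [
    Booking("D-101", 50.0, True),
    Booking("D-102", 34.0, True),
    Booking("D-103", 1.5, False),  # signed in Q4, recognition scheduled later
    Booking("D-104", 0.5, False),
]


def reconcile(bookings: list[Booking]) -> dict:
    """Compare the sales view (all bookings) with the finance view (recognized only)."""
    sales_total = sum(b.amount_musd for b in bookings)
    finance_total = sum(b.amount_musd for b in bookings if b.recognized)
    unrecognized = [b.deal_id for b in bookings if not b.recognized]
    return {
        "sales": sales_total,
        "finance": finance_total,
        "gap": sales_total - finance_total,
        "explained_by": unrecognized,
    }


print(reconcile(BOOKINGS))
```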

Automated dashboard generation with certified data

The agent generates dashboards on demand from natural-language requests, but only against tables flagged as certified in the data catalog. Uncertified data is filtered out. The agent’s output inherits the trust signals from the underlying assets, so business users see whether they are looking at production-ready numbers or someone’s experimental table.

NL-to-SQL query agents on governed schemas

This is the bounded-domain use case. Meta’s Analytics Agent team found that 88% of analyst queries use only tables queried in the prior 90 days. When the agent’s scope is constrained to a known set of governed tables, accuracy holds. The Snowflake Intelligence and Atlan partnership operationalizes this pattern through a shared semantic context.
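One way to apply that finding is to derive the agent's allowed tables from the warehouse query log. The sketch assumes a hypothetical list of `(table, queried_at)` log entries and a hypothetical governed-table set. It is an illustration, not Meta's implementation. The 90-day window comes from the finding above.

```python
from datetime import datetime, timedelta


def recent_governed_scope(query_log: list[tuple[str, datetime]],
                          governed: set[str],
                          now: datetime,
                          window: timedelta = timedelta(days=90)) -> set[str]:
    """Tables that were queried within the window AND are governed."""
    cutoff = now - window
    recent = {table for table, queried_at in query_log if queried_at >= cutoff}
    return recent & governed


now = datetime(2026, 3, 31)
log = [
    ("finance.fct_revenue", datetime(2026, 3, 20)),
    ("sales.bookings", datetime(2026, 2, 1)),
    ("sandbox.revenue_v2", datetime(2026, 3, 30)),   # recent but not governed
    ("legacy.gross_revenue", datetime(2025, 6, 1)),  # governed but not used recently
]
governed = {"finance.fct_revenue", "sales.bookings", "legacy.gross_revenue"}
print(sorted(recent_governed_scope(log, governed, now)))
```

Both filters matter. Recency alone would admit a sandbox table, and governance alone would admit legacy tables nobody uses anymore.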

Anomaly detection with root cause investigation

An agent monitors a metric, detects a deviation, and walks the lineage to find the cause. For example, a drop in renewals gets traced to a billing policy change referenced in internal documents. The agent does not just flag the anomaly; it explains it. This requires both governed metric definitions (so the anomaly is real, not a definition mismatch) and lineage (so the root-cause investigation has a graph to traverse).
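The sketch below shows two steps: detecting the deviation, then walking lineage for a recent upstream change. The z-score test, the threshold of 3, and the change-log structure are illustrative assumptions, not a description of any particular product. For simplicity, the billing policy is modeled as a lineage node rather than as evidence pulled from documents.

```python
from statistics import mean, stdev

# Hypothetical lineage (asset -> direct upstream inputs) and recorded change events.
UPSTREAM = {
    "metric.renewal_rate": ["dbt.fct_renewals"],
    "dbt.fct_renewals": ["dbt.stg_subscriptions", "policy.billing_rules"],
    "dbt.stg_subscriptions": [],
    "policy.billing_rules": [],
}
CHANGES = {"policy.billing_rules": "Billing policy updated: annual plans invoiced upfront"}


def is_anomaly(history: list[float], latest: float, threshold: float = 3.0) -> bool:
    """Flag `latest` if it sits more than `threshold` standard deviations from history."""
    sigma = stdev(history)
    return sigma > 0 and abs(latest - mean(history)) / sigma > threshold


def root_cause_candidates(metric: str) -> list[tuple[str, str]]:
    """Depth-first walk upstream, collecting nodes that have a recorded change."""
    found, stack, seen = [], [metric], set()
    while stack:
        node = stack.pop()
        if node in seen:
            continue
        seen.add(node)
        if node in CHANGES:
            found.append((node, CHANGES[node]))
        stack.extend(UPSTREAM.get(node, []))
    return found


weekly_renewal_rate = [0.91, 0.92, 0.90, 0.91, 0.92, 0.91]
latest = 0.78
if is_anomaly(weekly_renewal_rate, latest):
    print(root_cause_candidates("metric.renewal_rate"))
```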

Ad-hoc analytics with self-service guardrails

Business users ask questions in natural language. The agent generates SQL, but only against schemas the user is authorized to query, with policies enforced at runtime. Tag synchronization between Atlan and Snowflake keeps PII and sensitivity tags consistent, so the agent cannot expose data that the user should not see.
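The sketch below shows that runtime check. It assumes the agent emits a structured plan listing the tables and columns it intends to read; parsing arbitrary SQL is out of scope here. The grant and tag structures are also hypothetical, not Snowflake's or Atlan's actual policy objects.

```python
from dataclasses import dataclass, field

# Hypothetical sensitivity tags, as they might look after catalog-to-warehouse tag sync.
COLUMN_TAGS = {
    ("crm.customers", "email"): {"PII"},
    ("crm.customers", "region"): set(),
    ("finance.fct_revenue", "amount"): set(),
}


@dataclass
class User:
    name: str
    allowed_tables: set[str]
    may_see_pii: bool = False


@dataclass
class QueryPlan:
    """Columns the generated SQL would read, as (table, column) pairs."""
    columns: list[tuple[str, str]] = field(default_factory=list)


def violations(plan: QueryPlan, user: User) -> list[str]:
    """Return every policy violation; the agent runs the query only if this is empty."""
    problems = []
    for table, column in plan.columns:
        if table not in user.allowed_tables:
            problems.append(f"{user.name} may not query {table}")
        # Untagged columns are treated as non-sensitive here; a stricter
        # policy would deny by default.
        elif "PII" in COLUMN_TAGS.get((table, column), set()) and not user.may_see_pii:
            problems.append(f"{table}.{column} is tagged PII")
    return problems


analyst = User("analyst", {"crm.customers", "finance.fct_revenue"})
plan = QueryPlan([("crm.customers", "region"), ("crm.customers", "email")])
print(violations(plan, analyst))
```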

Data quality monitoring across analytics pipelines

The agent runs continuous checks against governed quality rules, identifies which tables have failing tests, and notifies owners. Atlan’s Data Quality Studio, running natively on Snowflake’s Data Metric Functions, executes these checks inside the warehouse where the data lives. The agent reads the trust signals and uses them to decide what to query next.
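The monitoring loop reduces to evaluating rules per table and routing failures to owners. The rules and table statistics below are hypothetical. The sketch runs in plain Python and does not use Snowflake Data Metric Functions, which execute such checks inside the warehouse.

```python
from collections import defaultdict

# Hypothetical per-table statistics, e.g. as produced by warehouse-side checks.
TABLE_STATS = {
    "finance.fct_revenue": {"null_rate": 0.00, "hours_since_load": 2, "owner": "finance-data"},
    "sales.bookings": {"null_rate": 0.07, "hours_since_load": 3, "owner": "sales-ops"},
    "crm.customers": {"null_rate": 0.01, "hours_since_load": 49, "owner": "sales-ops"},
}

# Hypothetical governed rules: name -> predicate that must hold for a table to pass.
RULES = {
    "null_rate <= 5%": lambda s: s["null_rate"] <= 0.05,
    "loaded within 24h": lambda s: s["hours_since_load"] <= 24,
}


def failures_by_owner(stats: dict) -> dict[str, list[str]]:
    """Evaluate every rule on every table and group failing checks by owner."""
    report = defaultdict(list)
    for table, s in stats.items():
        for rule_name, passes in RULES.items():
            if not passes(s):
                report[s["owner"]].append(f"{table}: {rule_name}")
    return dict(report)


for owner, failed in failures_by_owner(TABLE_STATS).items():
    print(f"notify {owner}: {failed}")
```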

Single-agent vs. multi-agent architectures for analytics

Single-agent setups are simpler to build, monitor, and govern. They suit well-scoped tasks with stable inputs and outputs, such as a weekly KPI brief, a nightly data quality check, or a monthly sales pack. Most teams should start here.

Multi-agent systems coordinate specialized agents through an orchestrator. For example, analytical agents detect anomalies, knowledge agents pull context from documents and Slack, and workflow agents trigger actions. The pattern is similar to what plays out in AI agents for data management, where specialized agents handle ingestion, quality, and lineage as separate jobs. Gartner predicts that by 2027, one-third of agentic AI implementations will combine agents with different skills to manage complex tasks.

The tradeoff is governance complexity. Each additional agent expands the surface area for context drift, policy violations, and inconsistent metric definitions. Multi-agent systems work when every agent reads from the same governed context layer. They fail when each agent maintains its own private context, because eventually two agents disagree on what “active customer” means, and the system loses coherence.
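That failure mode is easy to demonstrate. The sketch below uses hypothetical agent names and definitions to check whether the agents in a system resolve the same term to the same logic. With a single shared context layer, the check passes by construction.

```python
def conflicting_terms(agent_contexts: dict[str, dict[str, str]]) -> dict[str, set[str]]:
    """Return terms that different agents define differently, with the competing definitions."""
    definitions: dict[str, set[str]] = {}
    for context in agent_contexts.values():
        for term, logic in context.items():
            definitions.setdefault(term, set()).add(logic)
    return {term: logics for term, logics in definitions.items() if len(logics) > 1}


# Private contexts: each agent carries its own definition of "active customer".
private = {
    "anomaly_agent": {"active customer": "login in last 30 days"},
    "workflow_agent": {"active customer": "paid invoice in last 90 days"},
}

# Shared context: every agent reads the same governed definition.
SHARED = {"active customer": "paid invoice in last 90 days"}
shared = {"anomaly_agent": SHARED, "workflow_agent": SHARED}

print(conflicting_terms(private))
print(conflicting_terms(shared))
```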

How to measure the ROI of AI agents in data analytics

ROI for analytics agents has three components: direct productivity, decision quality, and failure cost. Most teams measure the first and miss the other two. Direct productivity is measured as the analyst time the agent saves.

PwC’s AI Agent Survey of 300 senior US executives found that 66% of adopters report measurable productivity value from AI agents. Productivity is the easiest component to measure and the most often cited.

The second is decision quality. Are decisions made on agent outputs better, worse, or the same as decisions made on traditional BI? This requires tracking outcomes over time, comparing forecast accuracy, churn predictions, or anomaly detection precision against the prior baseline. Few teams do this rigorously, which is why so many agent investments produce ambiguous returns.

The third is failure cost. Wrong answers from a confidently wrong agent can cause downstream damage that erases the productivity gains. A failed agent project can cost in the range of a few hundred thousand dollars, before counting opportunity costs.

A useful ROI framework tracks four signals:

- Time saved per analyst per week.
- Reduction in metric discrepancies surfaced by business users.
- Forecast and anomaly detection accuracy versus baseline.
- Agent decision audit rate, meaning how often agent outputs require manual correction.

Measure these signals in production with AI agent observability tooling rather than estimating them in slide decks.
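The sketch below computes those four signals from a log of agent outputs. The record format is hypothetical, and the inputs are illustrative numbers, not benchmarks.

```python
from dataclasses import dataclass


@dataclass
class AgentOutput:
    """Hypothetical log record for one agent answer."""
    minutes_saved: float       # analyst time the answer replaced
    manually_corrected: bool   # True if a human had to fix the output


def roi_signals(outputs: list[AgentOutput], analysts: int, weeks: int,
                discrepancies_before: int, discrepancies_after: int,
                precision_baseline: float, precision_agent: float) -> dict[str, float]:
    """Compute the four tracked signals for one measurement period."""
    hours_saved = sum(o.minutes_saved for o in outputs) / 60
    corrected = sum(o.manually_corrected for o in outputs)
    return {
        "hours_saved_per_analyst_per_week": hours_saved / (analysts * weeks),
        "discrepancy_reduction": 1 - discrepancies_after / discrepancies_before,
        "precision_vs_baseline": precision_agent - precision_baseline,
        "audit_rate": corrected / len(outputs),
    }


# Illustrative inputs only: 40 answers over two weeks for five analysts.
outputs = [AgentOutput(30, False) for _ in range(36)] + [AgentOutput(30, True) for _ in range(4)]
signals = roi_signals(outputs, analysts=5, weeks=2,
                      discrepancies_before=20, discrepancies_after=15,
                      precision_baseline=0.60, precision_agent=0.72)
for name, value in signals.items():
    print(f"{name}: {value:.2f}")
```

The first signal tracks productivity, the middle two track decision quality, and the audit rate is a rough proxy for failure cost. Together they cover all three ROI components rather than only the easiest one.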

How Atlan helps in getting AI agents for data analytics to production

Infrastructure is the factor that separates organizations running agents in production today from the ones for whom production agents remain a next-quarter ambition. Jay Sobel, a software engineer at Ramp who shipped a working agent in production, framed it directly: “A universal Analyst Agent can’t be built because the problem isn’t the agent, it’s the rest of the implementation; the messy data and the need for high-quality hand-written context.”

That is the gap Atlan was built to close. The Enterprise Data Graph captures the business definitions, lineage, quality signals, and policies that agents need at runtime. The MCP Server makes that context available to any MCP-compatible AI tool, including Claude, ChatGPT, Snowflake Intelligence, Databricks Genie, or a custom in-house agent.

Customer evidence backs the architecture. CME Group cataloged 18 million assets and 1,300 glossary terms in year one. Workday co-created its semantic layer with Atlan AI Labs and exposed it to AI analysts via the MCP server. Joe DosSantos, VP of Enterprise Data and Analytics at Workday, summarized the shift: “All of the work that we did with Atlan to get to a shared language amongst people at Workday can be leveraged by AI via Atlan’s MCP server.”

Book a demo to see how Atlan’s context layer makes AI agents for data analytics work in production.

FAQs about AI agents for data analytics

What are AI agents for data analytics?

AI agents for data analytics are autonomous software systems that monitor live data, investigate anomalies, generate insights, and trigger actions toward stated business goals. They combine LLMs with governed metadata, lineage, and semantic context to query enterprise data without waiting for a human prompt. Production reliability depends on access to a context layer that resolves business definitions, enforces policies, and traces decisions back to their sources.

Can AI agents replace BI dashboards entirely?

No, and most teams that try this struggle. Dashboards remain useful for recurring views, executive reporting, and shared reference points. Agents work alongside dashboards by monitoring the same metrics, investigating anomalies, and surfacing findings between scheduled reviews. The realistic pattern is agents reading from the same governed semantic layer that powers the dashboards, so both stay in sync. Replacing dashboards entirely removes the shared reference that everyone in the company relies on.

What are the best AI agents for data analysis?

The right agent depends on your data stack and use case. Snowflake Intelligence and Cortex Analyst work for Snowflake-native NL-to-SQL. Databricks Genie covers Databricks Lakehouse environments.

How do you build an AI agent for data analytics?

Start with a bounded use case, such as a weekly KPI brief or anomaly monitoring for a single revenue metric. Connect the agent to a governed context layer that supplies semantic definitions, lineage, and quality signals. Use an MCP-compatible interface so the agent can query metadata without custom plumbing. Test extensively before moving to production.

What is agentic analytics?

Agentic analytics is the application of AI agents to the full data-to-insight workflow, from sensing data changes through analysis, explanation, recommendation, and action. The category extends augmented analytics by adding autonomous reasoning and continuous monitoring rather than only assisting human-driven queries.

What are the prerequisites for deploying AI agents in analytics?

Four prerequisites matter most. Governed metadata that supplies business definitions and certifications. Column-level lineage so agent outputs are traceable. Active quality signals so agents avoid stale or broken data. Runtime policy enforcement so agents cannot bypass access controls.

How do AI agents handle data quality problems?

Well-designed agents query active metadata for quality scores before running their own logic. If a table has failed quality checks or its lineage shows upstream issues, the agent flags the problem rather than producing a confident wrong answer. Atlan’s Data Quality Studio runs checks natively in Snowflake and surfaces trust signals to agents through the MCP server, so quality is a runtime input rather than an after-the-fact discovery.

What is a realistic 90-day ROI target for an analytics agent?

A defensible 90-day target focuses on a single bounded use case rather than on blanket productivity claims. Teams that ship cleanly aim for a measurable reduction in metric discrepancy escalations on a single revenue or KPI workflow. Anomaly-detection precision relative to the prior baseline is another tracked signal in the first quarter. Productivity gains compound later, but early wins come from accuracy and trust in a narrow domain. Avoid setting cross-functional ROI targets in the first 90 days. The agents that survive are the ones that earn trust in one workflow first.

The analytics infrastructure your agents actually need

The vendor that ships you an agent does not solve the agent problem. The agent problem is what the agent reads when it runs. Definitions, lineage, quality, and policies have to live somewhere governed, current, and accessible to whatever model you choose. This layer is the deciding factor between agents that demo well and agents that hold up under a quarterly close.

Atlan provides the context layer that any analytics agent can query, regardless of which warehouse you run, which BI tool you use, or which model your team prefers. The agents change. The context endures.

Sources

- The State of AI in 2026, McKinsey & Company

- Gartner Predicts 40% of Enterprise Apps Will Feature Task-Specific AI Agents by 2026, Gartner Newsroom

- Building an Analyst Agent with dbt, Jay Sobel (Substack)

- 2025 Was Supposed to Be the Year of the Agent — It Never Arrived, Reworked (referencing Deloitte Tech Trends 2026)

- AI Agent Survey, PwC

- Text-to-SQL accuracy with grounded context (arXiv:2405.11706)

- Hype Cycle for Agentic AI, Gartner

- Spider 2.0 Benchmark for Enterprise Text-to-SQL (arXiv:2411.07763)