Why do you need context architecture for AI agents?

Context is not just a prompt. For an AI agent, it includes the tools available to it, the instructions it was given, the background knowledge it holds, and the patterns learned from prior interactions. Without a deliberate architecture governing all of this, agents act on incomplete or conflicting information, and the outputs reflect that.

Context architecture determines how all these information points are structured, prioritized, and retrieved. As Anthropic notes on building effective AI agents: the challenge is thoughtfully curating what information enters the model’s limited attention budget at each step.

Larger context windows help, but they don’t solve the problem. Research from Stanford’s “Lost in the Middle” study found that language models perform worse when relevant information sits in the middle of a long context than when it sits at the beginning or end, even in models designed for long contexts. The goal is high-signal, low-noise context at every step.
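One mitigation that follows from this finding is to order retrieved passages so the strongest ones sit at the edges of the prompt. The sketch below is illustrative only: the function name `place_at_edges` and the sample scores are assumptions, and the relevance scores are presumed to come from whatever retriever or reranker is already in use.

```python
from typing import List, Tuple


def place_at_edges(ranked: List[Tuple[str, float]]) -> List[str]:
    """Order passages so the highest-scoring ones sit at the start and end.

    `ranked` holds (passage, relevance_score) pairs. The scoring itself is
    out of scope here and assumed to exist already.
    """
    by_score = sorted(ranked, key=lambda pair: pair[1], reverse=True)
    front: List[str] = []
    back: List[str] = []
    # Alternate placement: best to the front, second best to the back, and
    # so on, which pushes the weakest passages toward the middle.
    for i, (passage, _score) in enumerate(by_score):
        if i % 2 == 0:
            front.append(passage)
        else:
            back.append(passage)
    return front + back[::-1]


# Hypothetical passages labelled A (most relevant) to E (least relevant).
passages = [("A", 0.9), ("B", 0.8), ("C", 0.5), ("D", 0.3), ("E", 0.1)]
ordered = place_at_edges(passages)
print(ordered)  # ['A', 'C', 'E', 'D', 'B']
```

Reordering does not replace curation. It only reduces the chance that the most relevant passage lands where the model attends to it least.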

Memory types in context architecture

Agents draw on different memory tiers depending on the scope and duration of the task:

- Short-term memory: Holds the current conversation or task thread. Session-scoped and discarded once the interaction ends.

- Working memory: Spans a multi-step task or workflow. Carries intermediate results and sub-task state forward across steps.

- Long-term memory: Persists across sessions. Stored in vector databases, knowledge graphs, or structured logs, it gives agents access to institutional knowledge built over time.
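The three tiers can be pictured as one object with different lifetimes for each field. This is a minimal sketch under stated assumptions: the class `AgentMemory` and its method names are hypothetical, and plain in-memory dictionaries stand in for the vector databases, knowledge graphs, or structured logs that would back long-term memory in practice.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class AgentMemory:
    # Short-term: the current conversation or task thread.
    short_term: List[str] = field(default_factory=list)
    # Working: intermediate results carried across steps of one task.
    working: Dict[str, str] = field(default_factory=dict)
    # Long-term: persists across sessions (a dict stands in for real storage).
    long_term: Dict[str, str] = field(default_factory=dict)

    def end_task(self) -> None:
        """Drop intermediate state once a multi-step task finishes."""
        self.working.clear()

    def end_session(self, keep: Dict[str, str]) -> None:
        """Discard session-scoped state and promote chosen facts to long-term."""
        self.long_term.update(keep)
        self.short_term.clear()
        self.working.clear()


mem = AgentMemory()
mem.short_term.append("user: which table holds revenue?")
mem.working["step_1"] = "candidate table: mart.revenue"
mem.end_session(keep={"revenue_table": "mart.revenue"})
```

What gets promoted at the end of a session is a design decision. Here the caller passes it in explicitly rather than the object deciding for itself.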

What are the core components of context architecture for AI agents?

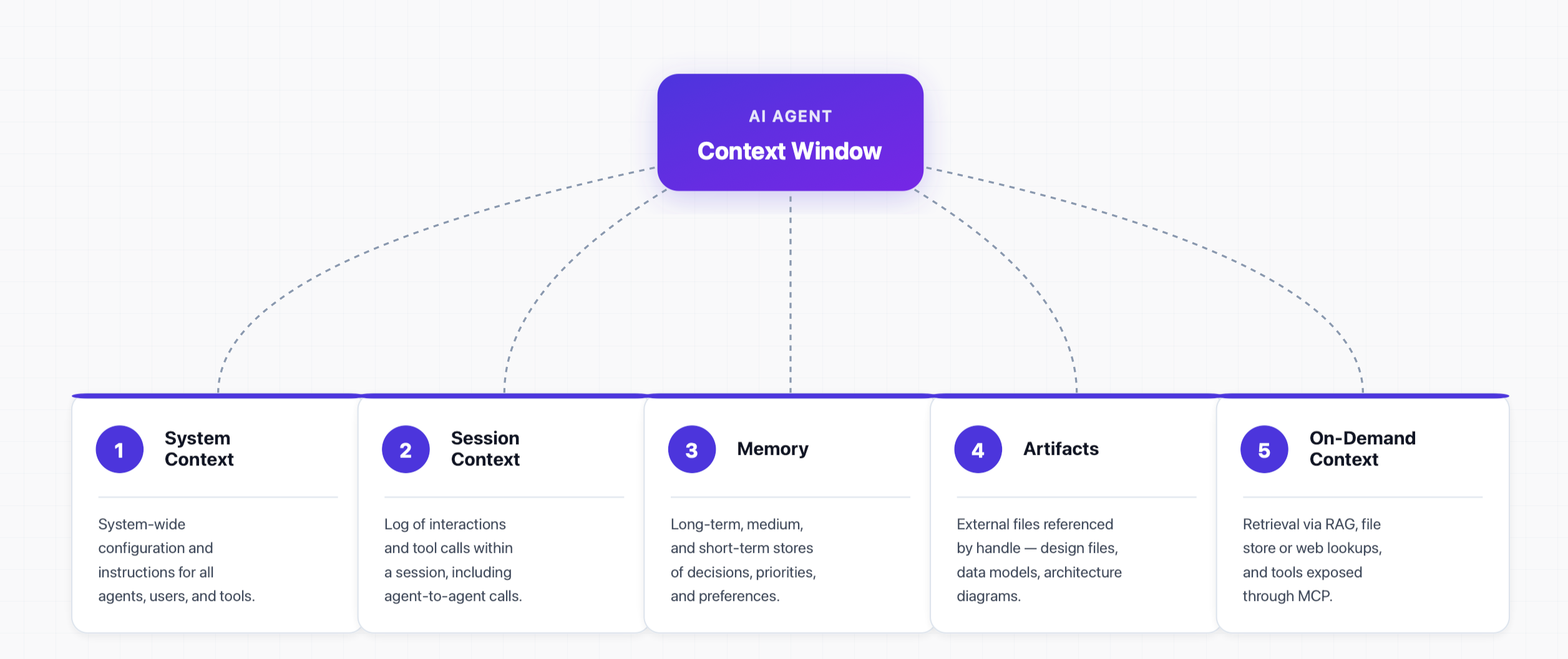

Context engineering and context-aware AI agents emerged when practitioners found that prompt quality alone could not account for the full complexity of multi-step agent tasks. Context architecture is a stack of distinct layers, each serving a specific function in the agent’s decision-making process.

1. System context

System-wide configuration and instructions that apply to all agents, human users, and tools. This layer is loaded first, before any task begins, and typically lives in the agentic platform’s configuration. Changes here propagate across every interaction the agent handles.

2. Session context

The log of interactions and tool calls within a session of work, typically exchanged over an agent-to-agent communication protocol. The agentic tool’s internal database handles session-level context, keeping a running record of what has been established within the current working session.

3. Memory

The persistence layer that carries knowledge such as decisions, priorities, preferences, and idiosyncrasies across sessions. This is mostly long-term memory, but it can also include working (medium-term) and short-term memory depending on the use case. Different memory tiers require different storage infrastructure, from vector databases to knowledge graphs to structured logs.

4. Artifacts

External files and objects that can’t be copied directly into the prompt but are essential for context, such as design files, data models, and architecture diagrams. Artifacts are referenced at runtime rather than copied, so they must be reliably accessible when the agent needs them.

5. On-demand context

Data pulled in at runtime through Retrieval-Augmented Generation (RAG), lookups against an internal file store or the web, or other tools exposed via the Model Context Protocol (MCP). Learning how to evaluate RAG systems is key to optimizing this layer. On-demand context keeps agent outputs current without inflating the context window.

Core components of context architecture for AI agents.
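The five layers can be sketched as inputs to a single assembly step. Everything named here is hypothetical (`ContextBundle`, `build_context`, the section titles, and the sample values), and the ordering after the system layer is an assumption for illustration. The source only states that system context is loaded first.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class ContextBundle:
    system: str                                               # 1. system context
    session: List[str] = field(default_factory=list)          # 2. session context
    memory: List[str] = field(default_factory=list)           # 3. memory
    artifacts: Dict[str, str] = field(default_factory=dict)   # 4. name -> reference


def build_context(
    bundle: ContextBundle,
    task: str,
    retrieve: Callable[[str], List[str]],                     # 5. on-demand context
) -> str:
    """Assemble one prompt string from the five layers plus the task."""
    sections = [
        ("SYSTEM", [bundle.system]),
        ("MEMORY", bundle.memory),
        # Artifacts are passed by reference (path or URI), not copied in.
        ("ARTIFACTS", [f"{name}: {ref}" for name, ref in bundle.artifacts.items()]),
        ("RETRIEVED", retrieve(task)),
        ("SESSION", bundle.session),
        ("TASK", [task]),
    ]
    parts = []
    for title, lines in sections:
        if lines:  # skip empty layers so they add no noise
            parts.append(f"## {title}\n" + "\n".join(lines))
    return "\n\n".join(parts)


bundle = ContextBundle(
    system="Answer using certified data assets only.",
    session=["user: which table holds revenue?"],
    artifacts={"data_model": "docs/revenue_model.png"},
)
prompt = build_context(
    bundle,
    "Summarize revenue by region",
    lambda task: ["mart.revenue: certified revenue table"],
)
```

Keeping each layer a separate field makes it possible to budget, cache, or audit the layers independently before they are merged.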

How can you optimize context architecture for AI agents?

Filling the context window with as much information as possible might seem like a good idea, but it isn’t. Models are easily distracted and overwhelmed by irrelevant information. This is where context optimization comes in.

Key techniques to optimize context architecture for AI agents:

- Context compression: Trims and summarizes older context while keeping the relevant parts. Simple to apply, but carries a risk of losing important information.

- Memory curation: Manages the various types and scopes of memory, which sometimes conflict with each other. Long-term memory matters most here because it persists across sessions.

- Prompt caching: Caches stable parts of the prompt (system configurations, broad task types, and common details) so they aren’t reprocessed on every run.

- Summary artifacts: When a large artifact would consume the whole context window, gives the agent a summary of the object instead of the full object.

- Utilizing RAG: Transforms organizational or domain-specific knowledge and stores it in vector databases, enabling semantic search for AI agents.

- Context filtering: Uses structured metadata such as tags, certification, ownership, and quality to narrow what the agent sees. For many use cases in the data domain, this is a high-impact filter.
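Two of these techniques, context filtering and context compression, are easy to show in miniature. The sketch below is a simplification under loud assumptions: `Asset`, `filter_assets`, `compress_history`, and `rough_tokens` are hypothetical names, token counts are approximated by whitespace-separated words, and the summarizer is passed in as a plain function rather than a model call.

```python
from dataclasses import dataclass
from typing import Callable, FrozenSet, List


@dataclass(frozen=True)
class Asset:
    name: str
    certified: bool
    tags: FrozenSet[str]


def filter_assets(assets: List[Asset], required_tag: str) -> List[Asset]:
    """Context filtering: keep only certified assets carrying the tag."""
    return [a for a in assets if a.certified and required_tag in a.tags]


def rough_tokens(text: str) -> int:
    # Assumption: word count stands in for a real tokenizer.
    return len(text.split())


def compress_history(
    turns: List[str],
    budget: int,
    summarize: Callable[[List[str]], str],
) -> List[str]:
    """Context compression: keep the newest turns verbatim within `budget`
    and replace everything older with a single summary line.

    The summary itself is not counted against the budget in this sketch.
    """
    kept: List[str] = []
    used = 0
    for turn in reversed(turns):          # walk from newest to oldest
        cost = rough_tokens(turn)
        if used + cost > budget:
            break
        kept.append(turn)
        used += cost
    kept.reverse()                        # restore chronological order
    older = turns[: len(turns) - len(kept)]
    return ([summarize(older)] if older else []) + kept


history = ["a b c", "d e", "f g h i", "j"]
compressed = compress_history(
    history, budget=5, summarize=lambda old: f"[summary of {len(old)} earlier turns]"
)
print(compressed)  # ['[summary of 2 earlier turns]', 'f g h i', 'j']
```

The risk named above is visible here: whatever the summarizer omits from the older turns is gone for the rest of the session.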

What are the challenges in optimizing context for AI agents?

While context is king, it is one of the hardest things to solve for. Data systems are siloed and fragmented, each a universe of its own, with broken governance and half-implemented or missing components. Even if you can connect to individual tools, you won’t get a coherent picture.

The key difficulties include:

- The disconnect between the technical schema layer and the business semantic layer.

- Missing data quality, lineage, and governance metadata that LLMs can use.

- Siloed and fragmented metadata that is hard to search, discover, and work with.

- Metadata that doesn’t propagate automatically through lineage and hierarchies.

- The lack of a common interface for accessing context from various data systems.

These issues prevent context from being accumulated and consolidated in a form that AI agents can use coherently and consistently. Before context optimization can be applied, you first need a sovereign context layer.
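To make the propagation gap concrete, here is a minimal sketch of what automatic propagation through lineage could look like: tags set on an upstream asset flow to everything downstream of it. The function name, the adjacency-list representation of lineage, and the asset names are all assumptions for illustration and do not describe any particular product’s implementation.

```python
from collections import deque
from typing import Dict, List, Set


def propagate_tags(
    lineage: Dict[str, List[str]],   # asset -> directly downstream assets
    tags: Dict[str, Set[str]],       # asset -> tags set explicitly on it
) -> Dict[str, Set[str]]:
    """Copy each asset's explicit tags to every asset downstream of it."""
    result: Dict[str, Set[str]] = {asset: set(t) for asset, t in tags.items()}
    for source, source_tags in tags.items():
        queue = deque(lineage.get(source, []))
        seen: Set[str] = set()
        while queue:                 # breadth-first walk of the lineage graph
            node = queue.popleft()
            if node in seen:         # guards against cycles and diamonds
                continue
            seen.add(node)
            result.setdefault(node, set()).update(source_tags)
            queue.extend(lineage.get(node, []))
    return result


# Hypothetical three-hop lineage with one tag set at the source.
lineage = {
    "raw.orders": ["staging.orders"],
    "staging.orders": ["mart.revenue"],
}
effective = propagate_tags(lineage, {"raw.orders": {"pii"}})
```

Without a step like this, an agent reading `mart.revenue` has no way to know that it derives from a table marked as sensitive.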

How Atlan provides the sovereign enterprise context layer for AI agents

AI agents need optimized context. That context is served from a context layer, which in turn depends on a healthy metadata layer fed by the data systems across your organization.

Atlan is architected on the foundation of a solid metadata layer that powers the enterprise context layer, organizing context using ontologies and knowledge graphs:

- Atlan’s enterprise context layer is built on the Enterprise Data Graph — unifying metadata from 80+ sources — with knowledge graphs and an active ontology layered on top to capture lineage, quality, semantics, and business meaning in one interconnected graph.

- Atlan AI automatically generates and enriches context based on your existing business glossary, concepts, and semantics, and allows you to get context from other systems via the Atlan MCP server.

- Atlan’s features around classification, tag propagation, lineage, and data quality for LLMs turn every production interaction, correction, and feedback loop into enterprise-wide memory that AI agents can draw on and improve over time.

- Atlan is ahead of the curve with a Context Engineering Studio that allows you to create context repositories, simulate evaluations, deploy them via agents, and then continuously monitor, observe, and improve workflows.

Real stories from real customers building enterprise context layers

"Atlan captures Workday's shared language to be leveraged by AI via its MCP server. As part of Atlan's AI labs, we're co-building the semantic layer that AI needs."

Joe DosSantos, VP Enterprise Data & Analytics

Workday

Workday: Context as Culture

"Atlan is our context operating system to cover every type of context in every system including our operational systems. For the first time we have a single source of truth for context."

Sridher Arumugham, Chief Data Analytics Officer

DigiKey

DigiKey: Context Operating System

Moving forward with building context architecture for AI agents

Context architecture is an evolving practice that aims to optimize how context is written, stored, organized, and consumed by AI agents. The five core components (system context, session context, memory, artifacts, and on-demand context) each serve a specific purpose.

Many optimization techniques help you use context more effectively. But you can only use context better when you have it deliberately designed in the first place. This is where a lot of organizations are stuck — the lack of context due to siloed metadata, disjointed governance, and no common thread to connect context across all systems. That’s where Atlan comes in.

Atlan’s enterprise context layer is built on the Enterprise Data Graph, Active Ontology, Context Engineering Studio, and Atlan AI — all of which operate through Atlan’s MCP server, APIs, and SDK.

FAQs about context architecture for AI agents

1. What is context architecture for AI agents?

Context architecture is the deliberate design and organization of the system instructions, interactions, memories, artifacts, and retrieved data that an AI agent needs to see during a completion. It matters because well-organized context reduces the time and cost of each completion.

2. What is the difference between context architecture and context engineering?

Context architecture is a structural question: how are the layers that supply context to an AI agent designed, organized, and connected? Context engineering is a content and quality question: what goes into that architecture, and how is it kept accurate? Good architecture serving stale content produces confident, wrong answers. Good content in a poorly designed architecture never reaches the agent reliably.

3. What is the difference between prompt and context engineering?

Prompt engineering relates to the prompt you write as an instruction for a specific task. Context engineering involves many additional aspects, such as memory management, on-demand retrieval, rules, and session state. Context is broader in scope than a prompt, as a prompt is usually one of many components of the context.

4. What is on-demand context for AI agents?

On-demand context is information that’s pulled into the agent’s context window at runtime rather than being pre-loaded. It’s typically retrieved through RAG over a vector database, lookups against an internal file store or the web, or tools exposed through the Model Context Protocol (MCP). This is how agents access fresh, domain-specific knowledge without bloating every prompt.
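A toy version of runtime retrieval shows the shape of the idea. This is a sketch only: `embed`, `cosine`, and `retrieve` are hypothetical helpers, word counts stand in for learned embeddings, and a Python list stands in for a vector database.

```python
import math
from collections import Counter
from typing import List


def embed(text: str) -> Counter:
    # Assumption: a bag-of-words count is a stand-in for a real embedding.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[term] * b[term] for term in a if term in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query: str, docs: List[str], k: int = 2) -> List[str]:
    """Return the k documents most similar to the query."""
    q = embed(query)
    return sorted(docs, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]


# Hypothetical knowledge snippets.
docs = [
    "orders table holds one row per customer order",
    "revenue is recognized when an order ships",
    "the office closes at six",
]
top = retrieve("how is revenue recognized", docs, k=1)
print(top)  # ['revenue is recognized when an order ships']
```

Only the top-ranked snippet enters the prompt. The other documents stay in the store until a later query needs them.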

5. Why is a context layer important for AI agents?

A context layer is the foundation for AI agents that work with data. It is built on a metadata foundation that provides both structured and unstructured information about the data you’re dealing with. All this metadata accumulates and consolidates in the context layer, which the AI agent then uses to work with data.

6. How does Atlan serve AI agents?

An enterprise context layer is at the heart of how Atlan serves and activates AI agents. Agents access lineage, quality, governance, reliability, and semantics using the context layer irrespective of the data systems. Atlan also has a Context Engineering Studio that allows you to create versioned Context Repos that AI agents can read via Atlan’s MCP server.

7. What role does memory play in context engineering?

Memory plays a crucial role in ensuring best practices, preferences, and guardrails are implemented across multiple sessions and completions by an AI agent. Depending on the task, an AI agent can invoke short-term, medium-term, or long-term memory. In complex scenarios, it is important to curate memories and flush out irrelevant ones to achieve the best results.