Organizations are racing to deliver AI value. They’re investing in better models, cleaner data, and stronger infrastructure. Leadership is asking the same questions in every boardroom: Do we have the right data? The right models? Can we deploy at scale?

Yet despite all this investment and urgency, a stark reality persists: 84% of organizations say they’re betting big on AI, while only 17% are actually seeing meaningful results.

What’s causing this 67-point execution gap?

In the scramble to solve technical problems, leaders are overlooking the most critical component of AI success: the humans who make it work. Specifically, the data teams, who are uniquely equipped to solve AI’s hardest problems yet are being sidelined, exhausted, or scared into paralysis.

We surveyed 550+ data leaders and spoke one-on-one with dozens more to understand what this looks like in practice and how it affects organizations. Here’s what we found – and how to fix it.

Gap #1: Treating context as a technical problem

Walk into any organization implementing AI and you’ll hear familiar complaints: “The AI keeps giving wrong answers.” “The recommendations don’t make business sense.” “We can’t trust the outputs enough to use them at scale.”

These sound like technical problems. They’re not. As a data professional we interviewed told us: “Context is the root problem.”

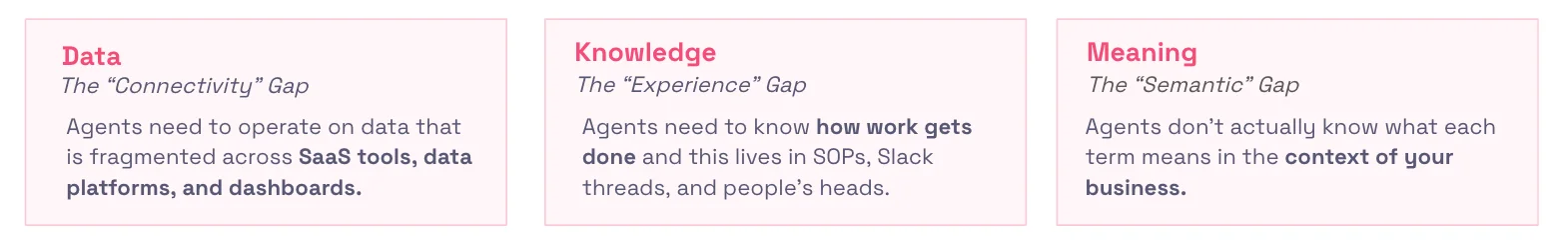

AI systems need context to function. Without shared definitions, quality data, semantic understanding, and governance frameworks, even the most sophisticated models will fail. Research shows that 97% of organizations struggle with context gaps, and 56% face three or more simultaneously. These gaps manifest in three ways:

More than a quarter of data leaders report concern about inaccurate AI responses stemming from lack of business context, while 45% say hallucinations undermine their confidence in LLMs. When you can’t trust AI, you won’t use it.

“It destroys trust,” one engineer told us. “If the agent uses the wrong source or gives inconsistent answers, users lose confidence and adoption collapses.”

Context is a human problem – but who’s responsible?

Creating and maintaining context isn’t a technical problem that can be solved with better tools or bigger budgets. It’s fundamentally a human problem.

Someone has to make sense of messy reality and build consensus around definitions. Someone has to adjudicate when “revenue” means different things to Finance versus Sales versus Product. Someone has to notice when an AI’s conclusion doesn’t seem right and provide the feedback that makes it better. Someone has to maintain the semantic layer that helps AI understand not just what data exists, but what it means in the context of your business.

That someone is the data team.

Without data teams actively leading context work, context gaps don’t get closed—they get papered over with workarounds that break the moment you try to scale AI beyond a pilot. Building context infrastructure requires putting the right humans in charge of it.

Gap #2: Leaving data teams out of the conversation

Data professionals possess a superpower that most organizations don’t recognize: they’re the only people who truly understand how to work in non-deterministic environments.

Unlike software engineers, whose work is fundamentally binary—a function either passes or fails, code either compiles or it doesn’t—data teams have spent their entire careers navigating ambiguity and uncertainty:

- Two analysts can examine the same dataset and reach different, equally valid conclusions

- Two data scientists can start from identical inputs and build entirely different models, each optimized for different trade-offs

- Every analysis requires iteration, experimentation, and judgment calls where experience matters more than formulas

This expertise is built through years of operating in gray zones where there is no single “right answer.”

When we look at organizations that have successfully deployed most or nearly all of their AI projects, the leadership patterns are striking:

- 52% have an executive sponsor (CxO) driving the initiative, and 87% of those organizations report they’ve operationalized AI or are scaling it with traction

- 30% have AI/ML teams or business unit leaders driving initiatives, and 89% of those report success

The gap shows up in who actually leads AI today: only 16% of organizations have AI/ML teams leading AI initiatives, and 8% have no clear owner at all. Most programs are led by IT teams, who know how data flows but may lack the context needed to make AI work.

The pattern is clear: when people who own context drive AI, it works. When they’re sidelined, it doesn’t.

Gap #3: Ignoring the human crisis

There’s a human crisis happening beneath the surface of AI implementation that most organizations aren’t addressing: the people who should be leading AI initiatives are often the most uncertain about their future.

- 29% of professionals are wary of AI and hesitant to adopt it

- 10% explicitly worry about job losses

The fear is rational. ChatGPT Enterprise has seen an 8x increase in usage, primarily for early-career tasks. Junior analysts who spend most of their time pulling reports can see AI replacing them. Yet organizations aren’t helping teams understand how their roles are evolving: senior judgment, context engineering, and the ability to spot when AI gets it wrong are more valuable than ever.

But fear isn’t the only problem. Many data teams are simply exhausted.

They’re maintaining undocumented pipelines, fielding dashboard discrepancy tickets, and, increasingly, figuring out how to make AI work at scale. According to leaders we spoke with, data teams spend 60-70% of their time on manual troubleshooting.

“Without alignment, everything slows down,” one person told us. “Internal politics around who gets to ‘lead AI’ creates friction that stalls progress.”

It’s not that data teams lack skills—they lack capacity. You can’t ask people to simultaneously be firefighters and architects.

When the experts who understand context best are operating from fear or exhaustion, they can’t provide the confident leadership AI initiatives need. When they’re unclear about their role, they can’t close context gaps. When they’re underwater with tactical work, they can’t step back to do strategic work.

How to close the gap without burning out

The 67-point gap between AI investment and AI value isn’t a technical problem; it’s an organizational one. Here’s how to start closing it:

1. Run the self-awareness test

Before investing more in AI infrastructure, assess your organizational readiness with these five questions (adapted from Gaurav Ramesh’s recent analysis in Metadata Weekly):

- The Ownership Test: Can you name who owns each AI initiative end-to-end?

- The Urgency Test: Are you optimizing for appearance or results?

- The Context Test: Do teams share common definitions? Who maintains them?

- The Reality Test: Are decisions based on actual capabilities or aspirational ones?

- The Learning Test: When experiments fail, do you extract signals or add them to the “innovation portfolio” slide?

If you can’t answer these clearly, your context gaps won’t close and your AI investments won’t scale.

2. Clarify ownership and success metrics before executing

Eliminate ambiguity about who owns what:

- Who owns AI strategy end-to-end? Not just who builds it, but who’s accountable for outcomes.

- Who owns context creation and maintenance? Think about the semantic layer, business glossary, and shared definitions.

- Who makes the call when AI outputs don’t pass the smell test? You need curious people who are willing to question the machine.

- Establish success metrics from day one. Don’t wait until after launch to figure out if something’s working. Define the KPIs upfront so you have a baseline to measure against.

Organizations with executive sponsors paired with data/AI teams see success rates around 88%. Make ownership explicit and public.

3. Have the honest conversations

Stop pretending everything will stay the same as AI continues to get smarter. Address these areas directly with your teams:

Skills: Which tasks are being automated (e.g. low-judgment, repetitive work)? Which skills are mission-critical (e.g. context engineering, semantic training, judgment in ambiguous situations)?

Capacity: How do we create space for architecture work when teams are firefighting? You can’t scale AI with teams in survival mode. Address root causes of technical debt, not just symptoms.

Expectations: What does “good enough” look like for AI in our organization? A data leader we spoke with put it best: “We align upfront on exit criteria. We’re not aiming for perfection; we’re aiming for safe performance with continuous iteration. If you wait for perfection, you’ll delay transformation and leave value on the table.”

4. Position data teams to lead

Give data teams authority to make decisions, not just implement them. This may look like giving them:

- A say in which use cases to pursue and when something isn’t ready for production

- Capacity to do thoughtful design work, by investing in quality infrastructure and reducing manual tasks

- Recognition that their role is evolving from execution to judgment—from pipeline builders to context engineers

5. Start where you have alignment

Don’t wait for perfect organizational readiness. According to a practitioner we interviewed: “The goal is to show an early win that builds trust and sets realistic expectations.”

But make sure you have:

- Executive sponsorship (not just permission, but active advocacy)

- Clear ownership (one person accountable for outcomes)

- Data teams involved from day one (not brought in to execute someone else’s vision)

Choose your first use cases strategically. Pick ones where you have good data, clear ownership, and a realistic shot at a quick win — not the flashiest idea, but the most winnable one.

The bottom line

Technical readiness is table stakes. Human readiness is a competitive advantage.

You can have the best models, the cleanest pipelines, and the most advanced infrastructure, but if the people who understand context are sidelined, scared, or exhausted, none of it will scale.

The humans who are best equipped to make AI work—your data teams—need clarity about their role, confidence that they’re being positioned to lead, and a seat at the table where strategy gets made.

The path to AI value doesn’t run through better models. It runs through better support for the people who make those models meaningful with context. Start there, and the 67-point execution gap starts closing.
