Data mesh exists so context can be both distributed and governed, which makes it the architecture pattern AI agents need to operate across a federated enterprise. Setting one up is how you put that pattern into practice across your domains. A successful data mesh setup transforms data management within an organization by treating data as a product, clearly defining domain ownership, creating a self-serve infrastructure, and implementing federated governance.


This approach fosters collaboration and innovation by letting the teams closest to the data put it to work effectively.

How to Set Up a Data Mesh

Setting up the data mesh architecture requires four primary steps:

- Treat your data as a product

- Map the distribution of domain ownership clearly

- Build a self-serve data infrastructure

- Ensure federated governance

Data mesh is a modern analytics architecture targeted at mid-sized and large organizations. Organizations are keen on implementing the data mesh architecture to move away from a service-oriented view of data.

Instead, they seek to empower business teams to fully own their data and the pipelines enabling data flow across the data ecosystem.

Here we will explore each of these steps at length to understand how to build and implement the data mesh architecture at scale. But first, let’s quickly recap the principles behind the data mesh.


The data mesh architecture

The term “data mesh” was coined by Zhamak Dehghani in 2019 in her article titled “How to Move Beyond a Monolithic Data Lake to a Distributed Data Mesh.”

Traditionally, organizations have maintained a service-oriented view of data, in which central data teams often become bottlenecks for decision-makers.

With the data mesh architecture, Dehghani puts the onus of data management on business teams. Since they manage their data end to end, they can address the most pressing business questions autonomously, without waiting on a central data team.

Reasons for adopting the data mesh

Why implement a data mesh in 2024? Here are some of the most common reasons.

Organizations transition to the data mesh architecture when centralized data ownership has produced a big, monolithic data platform.

The monolith creates silos. Meanwhile, data platform engineers are expected to provide access to the right data without understanding the business domains or use cases.

The data mesh breaks these silos with a decentralized approach.

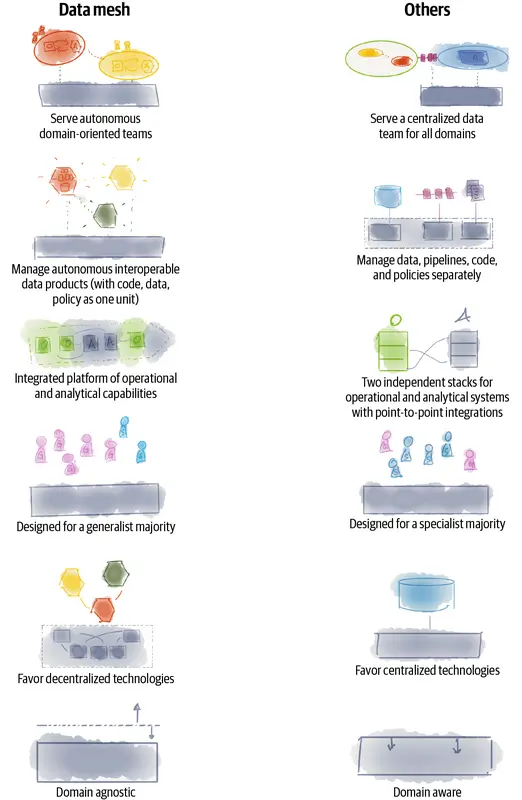

How data mesh compares with traditional data warehousing/lake principles. Source: Data Mesh, Zhamak Dehghani, O'Reilly

According to Dehghani, the data mesh will:

- Enable autonomous teams to extract value from data

- Support value exchange between independent, yet interoperable data products

- Scale data sharing across domains

- Encourage a culture of embedded innovation, where data is easy to find, insights are easy to capture, and data can be used for ML model development

Data mesh principles

Four fundamental principles shape the data mesh architecture:

- Data domains: Data domains contain the data products that belong to a part of the business, or domain. Each domain is owned by a department or team that can create these data products on its own.

- Data products: Every table or dashboard can be viewed as a data product. Like any other product an organization offers, a data product should have the properties its customers expect, being:

  - Discoverable

  - Addressable

  - Trustworthy

  - Self-describing

  - Interoperable

  - Secure

- Self-serve infrastructure: Because business teams are fully responsible for their data domains, they need a shared platform that lets them create and maintain their own data products without depending on a central data team.

- Federated governance: For the data mesh to work, the data domains, platform, and products must be interoperable and governed by standardized conventions.


How can you set up the data mesh?

Going from a centralized data model to a distributed one is primarily a mindset shift. So, the steps to set up a data mesh successfully are more about adapting your mindset than choosing the right technology.

In the end, you need the various organizational units to follow similar data governance practices to create interoperable and shareable data assets. For this purpose, here’s what you must do.

1. Treat your data as a product

The first step towards reaching your goal is treating your data as a product. This helps you set a standard for documenting datasets and dashboards while ensuring that they’re interoperable.

To do so, catalog your data so that it is credible and trustworthy. The data catalog must ensure the discoverability, addressability, interoperability, security, and integrity of data, and provide adequate context for it.
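To make “treat data as a product” concrete, here is a minimal sketch of the record a catalog might keep for each data product. It assumes a simple in-house catalog rather than any particular tool: the `DataProduct` fields, the `missing_properties` check, and the `orders_daily` example are all hypothetical. Each check maps one of the product properties listed earlier (discoverable, addressable, self-describing, trustworthy, secure) to a field that can be verified before a dataset is published.

```python
from dataclasses import dataclass, field


@dataclass
class DataProduct:
    """Hypothetical catalog entry describing one dataset or dashboard as a product."""
    name: str                        # discoverable: searchable name
    domain: str                      # owning domain, e.g. "Orders"
    owner: str                       # accountable team or person
    address: str                     # addressable: stable location consumers read from
    description: str = ""            # context for consumers
    schema: dict[str, str] = field(default_factory=dict)  # self-describing: column -> type
    quality_checks_passing: bool = False  # trustworthy / integrity signal
    access_policy: str = ""          # secure: who may read it


def missing_properties(product: DataProduct) -> list[str]:
    """Return the product properties this catalog entry does not yet satisfy."""
    gaps = []
    if not product.name or not product.description:
        gaps.append("discoverable")
    if not product.address:
        gaps.append("addressable")
    if not product.schema:
        gaps.append("self-describing")
    if not product.quality_checks_passing:
        gaps.append("trustworthy")
    if not product.access_policy:
        gaps.append("secure")
    return gaps


orders = DataProduct(
    name="orders_daily",
    domain="Orders",
    owner="orders-team",
    address="warehouse://orders/orders_daily",  # hypothetical address scheme
    description="One row per order, refreshed daily.",
    schema={"order_id": "string", "user_id": "string", "amount": "decimal"},
)
print(missing_properties(orders))  # ['trustworthy', 'secure']
```

A real catalog would track much more (lineage, ownership history, usage), but even a check this small gives every domain the same definition of when a dataset is ready to be called a product.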


2. Map the distribution of domain ownership clearly

Once your datasets are treated as products, the next step is to decide how their ownership is distributed. Use Domain-Driven Design (DDD) techniques to group your datasets into domains.

For example, if you’re in e-commerce, you could split your datasets into domains such as Users, Traffic, Orders, and so on.

This is much easier when your business is already split by domain. If each department already controls a part of the business, make it the owner of the datasets and dashboards that belong to that part.

Unless your data platform’s users agree on who owns which datasets and domains, you cannot successfully transition to the mesh architecture.
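Ownership alignment can also be checked mechanically. The sketch below assumes a hypothetical ownership map for the e-commerce example (domains Users, Traffic, and Orders, with made-up team and dataset names) and flags the two problems that block a clean transition: datasets that no domain owns, and datasets that more than one domain claims.

```python
from collections import defaultdict

# Hypothetical ownership map: domain -> owning team and the datasets it claims.
OWNERSHIP = {
    "Users": {"team": "customer-team", "datasets": ["users", "user_addresses"]},
    "Traffic": {"team": "marketing-team", "datasets": ["sessions", "page_views"]},
    "Orders": {"team": "orders-team", "datasets": ["orders", "order_items", "sessions"]},
}


def ownership_issues(ownership: dict, all_datasets: set[str]) -> dict[str, list[str]]:
    """Flag datasets with no owning domain, or claimed by more than one domain."""
    claims = defaultdict(list)  # dataset -> domains that claim it
    for domain, spec in ownership.items():
        for dataset in spec["datasets"]:
            claims[dataset].append(domain)
    return {
        "unowned": sorted(all_datasets - set(claims)),
        "contested": sorted(d for d, domains in claims.items() if len(domains) > 1),
    }


# Hypothetical inventory of everything currently on the central platform.
platform_datasets = {"users", "user_addresses", "sessions", "page_views",
                     "orders", "order_items", "refunds"}
print(ownership_issues(OWNERSHIP, platform_datasets))
# {'unowned': ['refunds'], 'contested': ['sessions']}
```

Both lists should be empty before a domain is considered fully migrated; each remaining entry is a conversation between teams, not a technical fix.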


3. Build a self-serve data infrastructure

Once the first data products are available and the domain teams have started managing them, it’s time to focus on your data infrastructure.

Once even a handful of departments (domains) are working on their own datasets, shared infrastructure needs will emerge. That’s when you must take a product-centric approach to building the data infrastructure platform.

So, all data product owners and domains must align on the technology being used and its purpose. That means using the same underlying technology (cloud providers, programming languages, job scheduling tools) to build and handle datasets. As a result, you’ll have the level of technical governance your data mesh implementation needs to succeed.
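One lightweight way to keep that technical alignment visible is to write the agreed stack down and check each domain’s pipeline configuration against it. This is a sketch under assumptions: `APPROVED_STACK`, its example entries (AWS, Python and SQL, Airflow), and `stack_deviations` are hypothetical choices for illustration, not recommendations.

```python
# Hypothetical platform-wide technology choices agreed on by all domains.
APPROVED_STACK = {
    "cloud": {"aws"},
    "language": {"python", "sql"},
    "scheduler": {"airflow"},
}


def stack_deviations(domain: str, pipeline_config: dict[str, str]) -> list[str]:
    """List the parts of a domain's pipeline config that fall outside the agreed stack."""
    deviations = []
    for category, allowed in APPROVED_STACK.items():
        choice = pipeline_config.get(category)
        if choice is None:
            deviations.append(f"{domain}: no {category} declared")
        elif choice not in allowed:
            deviations.append(f"{domain}: {category}={choice!r} is not in {sorted(allowed)}")
    return deviations


print(stack_deviations("Orders", {"cloud": "aws", "language": "scala", "scheduler": "airflow"}))
# ["Orders: language='scala' is not in ['python', 'sql']"]
```

Keeping the agreed stack as data rather than tribal knowledge means a deviation becomes a visible request to extend the platform, instead of a silent fork.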

Are there data mesh tools that help set up the architecture?

As mentioned earlier, data mesh is a paradigm shift, rather than a technological setup. So, there is no out-of-the-box tool to help set up a data mesh architecture. However, engineering the right data culture can help substantially.

4. Ensure federated governance

This step is about establishing best practices and conventions, such as working agreements and a shared nomenclature between domains.

The best way forward is to use the learnings from early adopter domains to document the rules for naming fields and tables, publishing and updating documentation, fixing quality issues, and more.

Governance only succeeds when it is collaborative, involving all the domain and data platform stakeholders. That’s why it’s called federated, and not centralized, governance.
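Conventions agreed through federated governance are most useful when every domain can check them automatically. The sketch below assumes a hypothetical naming convention (tables named `<domain>__<entity>` in lower snake_case, columns in lower snake_case) and reports violations for a single table. The convention is illustrative only; a federation would substitute the rules its early adopter domains documented.

```python
import re

# Hypothetical shared conventions:
#   tables:  <domain>__<entity>, lower snake_case (e.g. "orders__order_items")
#   columns: lower snake_case
TABLE_PATTERN = re.compile(r"^[a-z][a-z0-9]*__[a-z][a-z0-9_]*$")
COLUMN_PATTERN = re.compile(r"^[a-z][a-z0-9_]*$")


def naming_violations(domain: str, table: str, columns: list[str]) -> list[str]:
    """Check one table against the shared naming rules; return readable violations."""
    violations = []
    if not TABLE_PATTERN.match(table):
        violations.append(f"table {table!r} does not match <domain>__<entity> snake_case")
    elif table.split("__", 1)[0] != domain.lower():
        violations.append(f"table {table!r} is not prefixed with its domain {domain.lower()!r}")
    for column in columns:
        if not COLUMN_PATTERN.match(column):
            violations.append(f"column {column!r} in {table!r} is not snake_case")
    return violations


print(naming_violations("Orders", "orders__order_items", ["order_id", "UnitPrice"]))
# ["column 'UnitPrice' in 'orders__order_items' is not snake_case"]
```

Because each domain runs the same check, the rules stay shared while enforcement stays with the teams that own the data, which is the federated balance described above.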


How organizations are making the most of their data using Atlan

The recently published Forrester Wave report compared all the major enterprise data catalogs and positioned Atlan as the market leader ahead of all others. The comparison was based on 24 different aspects of cataloging, broadly across the following three criteria:

- Automatic cataloging of the entire technology, data, and AI ecosystem

- Making the data ecosystem AI- and automation-first

- Prioritizing data democratization and self-service

These criteria made Atlan the ideal choice for a major audio content platform, where the data ecosystem was centered around Snowflake. The platform sought a “one-stop shop for governance and discovery,” and Atlan played a crucial role in ensuring their data was “understandable, reliable, high-quality, and discoverable.”

For another organization, Aliaxis, which also uses Snowflake as their core data platform, Atlan served as “a bridge” between various tools and technologies across the data ecosystem. With its organization-wide business glossary, Atlan became the go-to platform for finding, accessing, and using data. It also significantly reduced the time spent by data engineers and analysts on pipeline debugging and troubleshooting.

A key goal of Atlan is to help organizations maximize the use of their data for AI use cases. As generative AI capabilities have advanced in recent years, organizations can now do more with both structured and unstructured data—provided it is discoverable and trustworthy, or in other words, AI-ready.

Tide’s Story of GDPR Compliance: Embedding Privacy into Automated Processes

- Tide, a UK-based digital bank with nearly 500,000 small business customers, sought to improve their compliance with GDPR’s Right to Erasure, commonly known as the “Right to be forgotten”.

- After adopting Atlan as their metadata platform, Tide’s data and legal teams collaborated to define personally identifiable information in order to propagate those definitions and tags across their data estate.

- Tide used Atlan Playbooks (rule-based bulk automations) to automatically identify, tag, and secure personal data, turning a 50-day manual process into mere hours of work.
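The following is not Atlan’s Playbooks API. It is a generic, hypothetical sketch of the idea behind rule-based bulk tagging: rules agreed by the data and legal teams (here, simple column-name patterns) are applied to every column in an inventory, and matching columns receive a PII tag. The `PII_RULES` patterns, tag names, and table names are all assumptions.

```python
import re

# Hypothetical rules agreed by data and legal teams: tag -> column-name pattern.
PII_RULES = {
    "PII:email": re.compile(r"e_?mail", re.IGNORECASE),
    "PII:phone": re.compile(r"phone|mobile", re.IGNORECASE),
    "PII:name": re.compile(r"(first|last|full)_?name", re.IGNORECASE),
}


def tag_columns(inventory: dict[str, list[str]]) -> dict[str, dict[str, list[str]]]:
    """Apply every rule to every column of every table; return table -> column -> tags."""
    tags: dict[str, dict[str, list[str]]] = {}
    for table, columns in inventory.items():
        for column in columns:
            matched = [tag for tag, pattern in PII_RULES.items() if pattern.search(column)]
            if matched:
                tags.setdefault(table, {})[column] = matched
    return tags


# Hypothetical inventory: table -> column names.
inventory = {
    "users__customers": ["customer_id", "email", "first_name", "mobile_number"],
    "orders__orders": ["order_id", "customer_id", "amount"],
}
print(tag_columns(inventory))
# {'users__customers': {'email': ['PII:email'], 'first_name': ['PII:name'], 'mobile_number': ['PII:phone']}}
```

Name-based rules like these miss personal data stored under generic column names, so they would be one input to classification rather than the whole of it.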

Book your personalized demo today to find out how Atlan can help your organization establish and scale data governance programs.

Rounding up on Data Mesh Setup

The problem with centralized data repositories such as data lakes is that it is hard to extract value from them quickly. The data mesh addresses this with a decentralized approach that distributes data ownership among domain experts while following a shared governance framework.

Implementing the data mesh architecture requires following the four steps described above. They are modeled on the underlying principles of the data mesh and therefore play a decisive role in the success of your implementation.

As Dehghani summarizes it, “the approach is a mesh of data that is organized around domains, and owned by cross-functional teams, and managed by centralized governance to allow interoperability, and served by a self-serve infrastructure.”

Written by Xavier Gumara Rigol

FAQs about Data Mesh Setup

1. How to set up a data mesh?

Setting up a data mesh involves four key steps: treating data as a product, mapping domain ownership, building a self-serve data infrastructure, and ensuring federated governance. Each step is crucial for empowering teams and enhancing data accessibility.

2. What is a data mesh setup?

A data mesh setup is a decentralized approach to data architecture that emphasizes domain-oriented ownership, treating data as a product, and enabling self-serve data infrastructure. This model enhances collaboration and data governance across organizations.

3. What are the 4 pillars of data mesh?

The four pillars of data mesh are: domain-oriented decentralized data ownership, data as a product, self-serve data infrastructure, and federated computational governance. These principles guide organizations in implementing effective data management strategies.

4. How does a data mesh setup differ from traditional data architectures?

Unlike traditional data architectures, which often centralize data management, a data mesh setup decentralizes ownership and federates governance. This approach empowers individual teams to manage their data, improving accessibility and responsiveness to business needs.

5. What challenges might I face when transitioning to a data mesh setup?

Challenges in transitioning to a data mesh setup include managing decentralized governance, ensuring consistent data standards, and aligning organizational goals with local autonomy. Addressing these challenges requires careful planning and stakeholder engagement.