What are the core principles of data mesh?

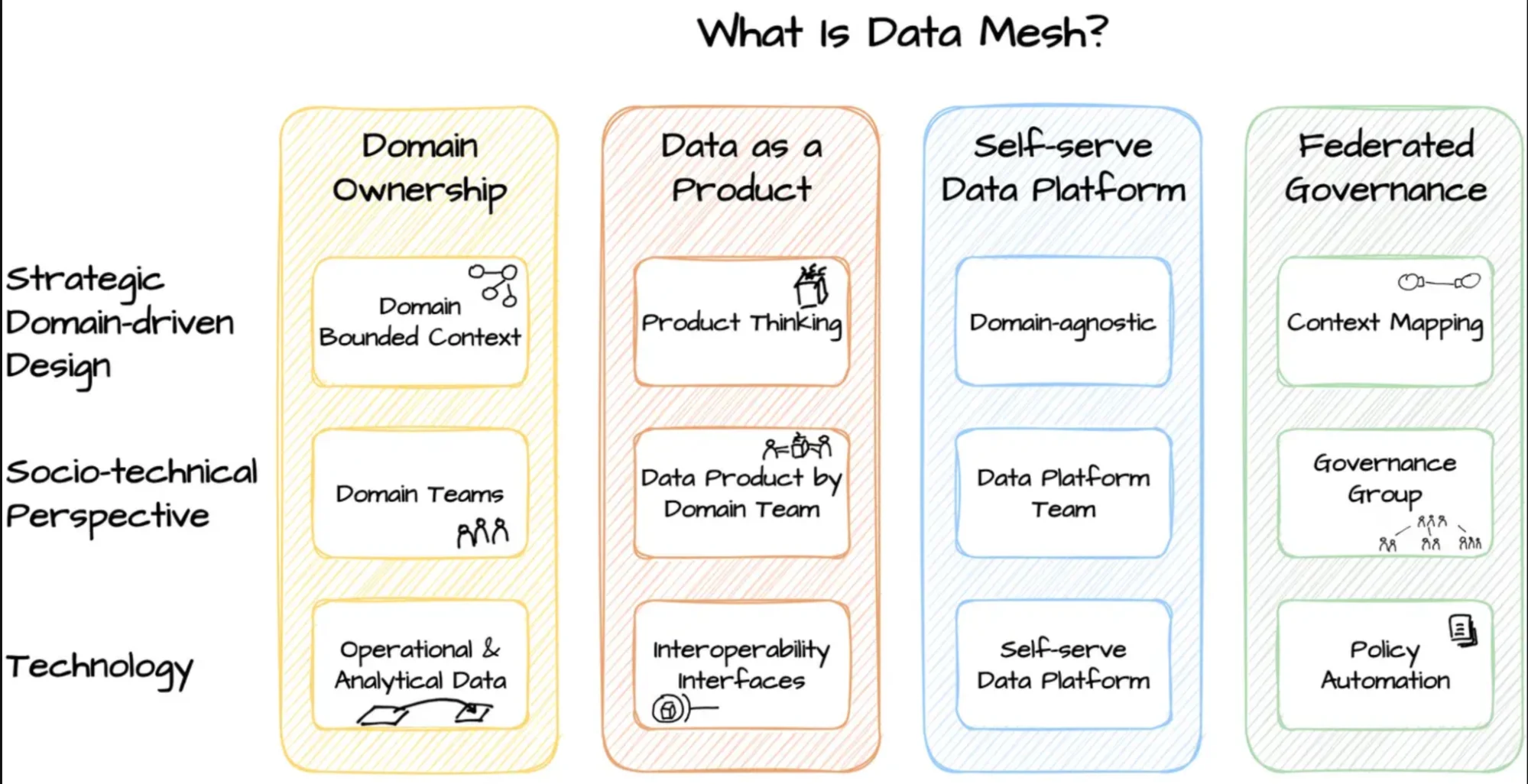

Data mesh operates on four foundational principles that distinguish it from centralized data architectures: domain ownership, data as a product, a self-serve data platform, and federated governance.

The four fundamental principles of the data mesh approach. Source: Data mesh Architecture

Organizations implementing data mesh must embrace all four principles to realize the full benefits of this architectural approach.

1. Domain-oriented decentralized data ownership

Each domain is responsible for creating, managing, storing, and sharing the data it creates without relying on a central data team. This principle aligns data ownership with domain expertise, ensuring those closest to the data maintain its quality and context.

For instance, marketing owns marketing data, sales owns sales data, and each domain manages its data products from creation through delivery.

2. Data as a product

Domains treat their data outputs like products with defined consumers, quality standards, and lifecycle management.

Data products include not just the data itself but also metadata, documentation, access mechanisms, and quality metrics. This mindset shift means domains invest in making their data discoverable, understandable, and trustworthy for downstream consumers.

Note: As a best practice, make sure a single data product isn’t owned by multiple domains.
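To make the "data plus metadata, documentation, access mechanisms, and quality metrics" idea concrete, here is a minimal Python sketch. The `DataProduct` class, its field names, and `find_ownership_conflicts` are hypothetical names chosen for illustration, not part of any specific platform. The helper flags products claimed by more than one domain, following the note above.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class DataProduct:
    """Hypothetical descriptor: the data's supporting parts travel with it."""
    name: str
    owner_domain: str       # exactly one owning domain
    description: str        # documentation for downstream consumers
    access_endpoint: str    # how consumers reach the data (illustrative value)
    schema: dict[str, str] = field(default_factory=dict)             # column -> type
    quality_metrics: dict[str, float] = field(default_factory=dict)  # metric -> value


def find_ownership_conflicts(products: list[DataProduct]) -> dict[str, set[str]]:
    """Return product names that more than one domain claims to own."""
    owners: dict[str, set[str]] = {}
    for product in products:
        owners.setdefault(product.name, set()).add(product.owner_domain)
    return {name: domains for name, domains in owners.items() if len(domains) > 1}
```

Running a check like this whenever a product is registered is one way to catch shared ownership early, assuming product names are unique across the mesh.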

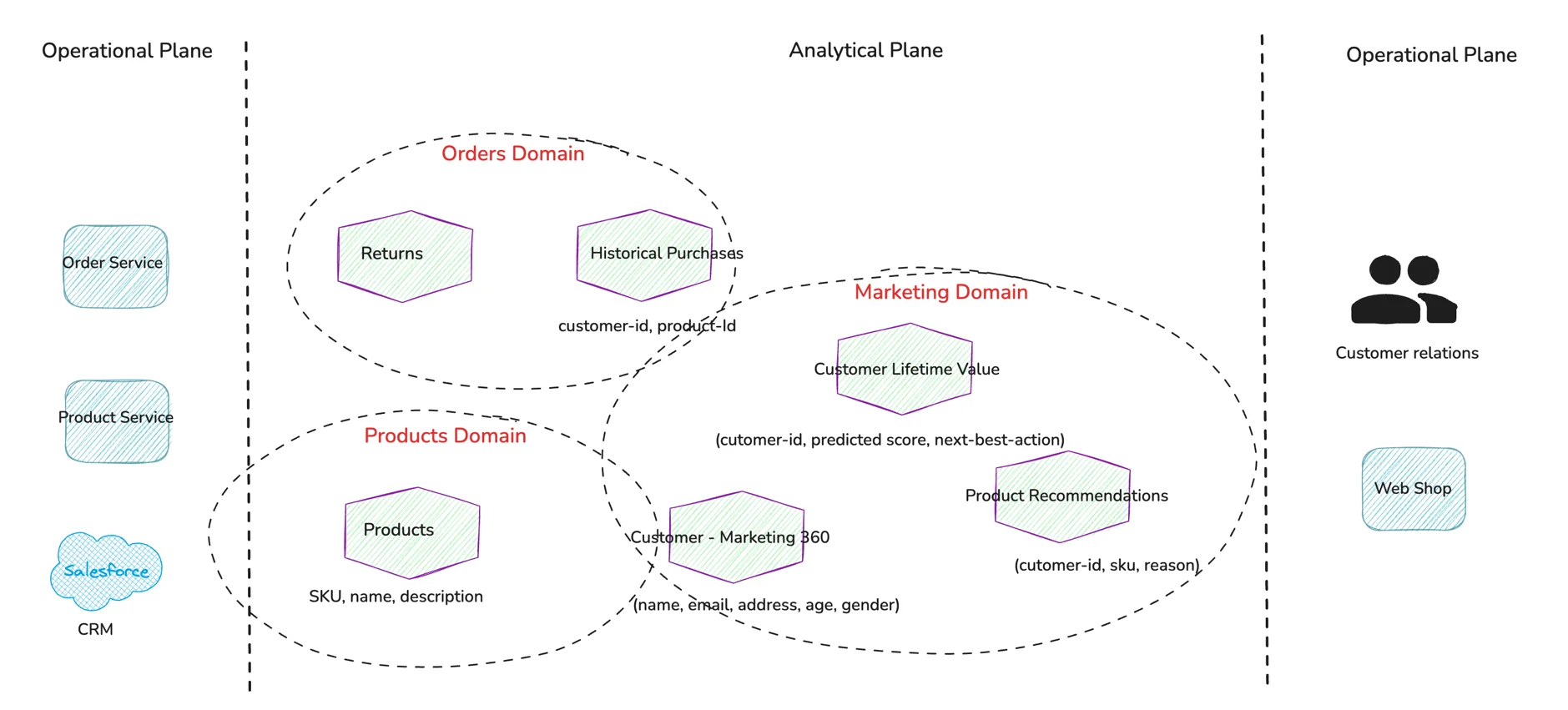

Mapping data products to domains in a data mesh architecture. Source: Martin Fowler

3. Self-serve data infrastructure as a platform

Domain teams access platform capabilities that abstract away infrastructure complexity. This includes automated data pipeline deployment, standardized data storage, monitoring tools, and security controls.

Modern data catalogs enable these principles through automated metadata management and domain workspaces that provide unified views of domain assets.

4. Federated computational governance

A cross-domain governance group establishes global policies while domains retain local decision-making authority. This federation defines standards for data contracts, security protocols, and interoperability requirements.

According to research on domain-driven design, this balance between autonomy and standardization enables organizations to scale analytical capabilities without creating chaos.
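The "computational" part of federated governance can be pictured as policies expressed as code. The sketch below is one possible arrangement, not a prescribed design: the policy names, metadata keys, and the `evaluate` function are all assumptions made for illustration. Global policies come from the governance group, domains add their own, and a domain cannot override a global policy by reusing its name.

```python
from typing import Callable

# A policy takes a product's metadata and returns True when it is compliant.
Policy = Callable[[dict], bool]

# Global policies set by the cross-domain governance group (illustrative).
GLOBAL_POLICIES: dict[str, Policy] = {
    "has_owner": lambda m: bool(m.get("owner_domain")),
    "has_classification": lambda m: m.get("classification")
    in {"public", "internal", "restricted"},
}


def evaluate(metadata: dict, domain_policies: dict[str, Policy]) -> list[str]:
    """Return the sorted names of failed policies for one data product."""
    # Global entries are merged last, so they win on any name clash.
    combined = {**domain_policies, **GLOBAL_POLICIES}
    return sorted(name for name, check in combined.items() if not check(metadata))
```

A domain would pass its own rules, for example a retention requirement, alongside the product metadata and act on whatever names come back.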

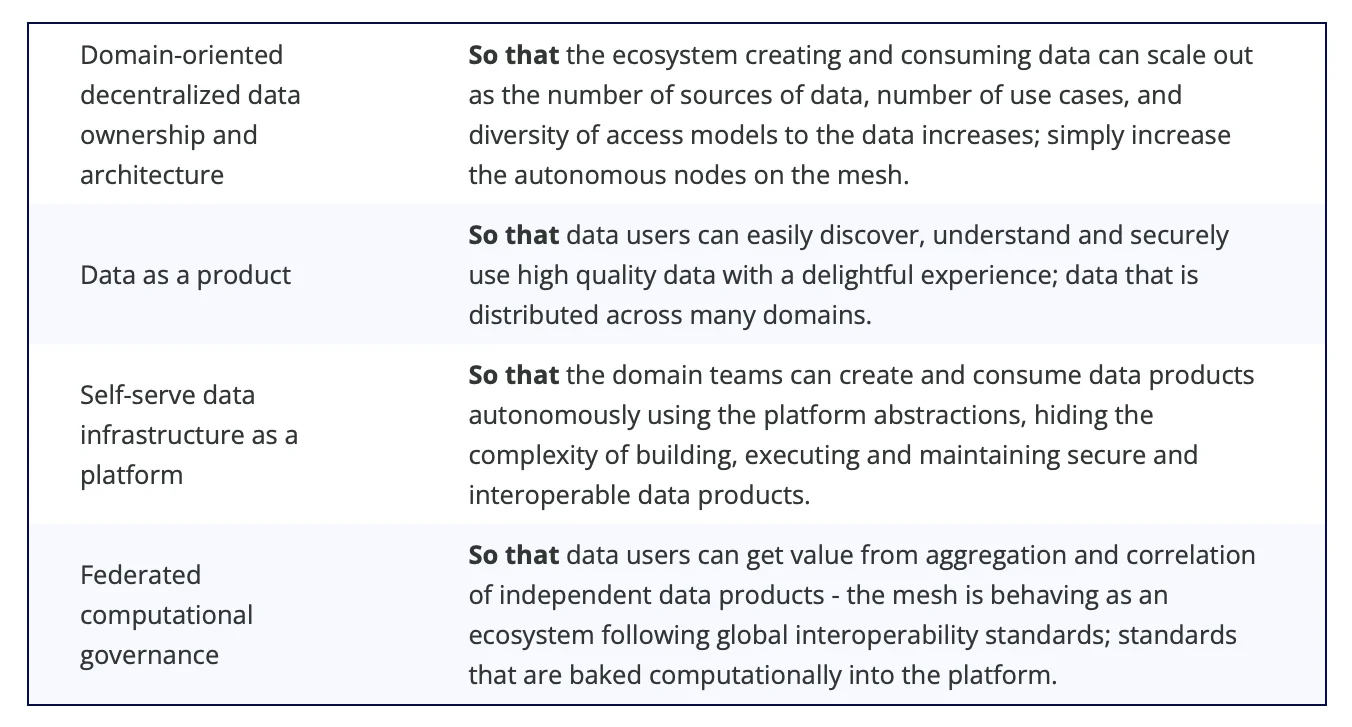

A summary of the four data mesh principles. Source: Martin Fowler

Why do organizations adopt data mesh?

Centralized data architectures create bottlenecks as organizations scale their data operations. A single central team managing all data pipelines, quality, and access becomes overwhelmed with requests, forcing business teams to wait weeks or months for data they need today. This dependency throttles innovation and slows decision-making across the organization.

Key benefits driving data mesh adoption:

- Eliminates central team bottlenecks: Domain teams can iterate on their data products independently without waiting for central infrastructure teams, reducing time-to-insight from months to days.

- Faster data processing through distribution: Distributing data pipelines across domains reduces technical strain on monolithic systems and enables parallel processing at scale.

- Better data quality: Domain experts who generate and use data daily are best positioned to ensure its accuracy, completeness, and relevance rather than distant central teams.

- Enhanced scalability and autonomy: Organizations can add new domains and data products without redesigning central architecture or overwhelming core infrastructure teams.

- Improved compliance through domain-level control: Domain teams apply appropriate security and privacy controls based on their specific regulatory requirements and data sensitivity.

Platforms with active metadata can accelerate these benefits by automating discovery and enforcing governance policies across federated domains.

For teams implementing active data governance, the mesh model provides a framework where governance becomes embedded in domain operations rather than imposed from above.

What are the fundamental data mesh architecture components?

Data mesh architecture differs from monolithic systems by distributing data management across multiple interconnected components. Rather than funneling all data through a single warehouse or lake, the mesh creates an ecosystem of specialized data products that communicate through standardized interfaces.

This distribution mirrors microservices architecture principles applied to analytical data.

1. Data products

Data products are the fundamental building blocks containing operational and analytical data for specific domains.

Each product includes the data itself, metadata describing its structure and lineage, code for transformation logic, and infrastructure dependencies. Domain teams package these elements together and expose them through well-defined interfaces.

2. Domain-oriented data pipelines

Each domain operates its own pipelines for ingesting source data, applying transformations, and serving outputs. These pipelines run independently, allowing domains to choose technologies and schedules that fit their specific needs.

Pipeline standardization happens through shared platform services rather than central coordination.
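As a rough illustration of standardization through a shared platform service, the sketch below assumes a hypothetical `run_pipeline` function that the platform team provides, while each domain supplies its own transformation steps. The step functions and the shape of the run report are invented for this example.

```python
from typing import Callable, Iterable

Row = dict
Step = Callable[[Iterable[Row]], Iterable[Row]]


def run_pipeline(domain: str, source: Iterable[Row], steps: list[Step]) -> dict:
    """Shared platform runner: applies a domain's steps and returns a uniform report."""
    rows = list(source)
    rows_in = len(rows)
    for step in steps:
        rows = list(step(rows))
    # Every domain gets the same report shape, whatever its steps do.
    return {"domain": domain, "rows_in": rows_in, "rows_out": len(rows), "output": rows}


# Domain-owned logic (sales, in this example).
def drop_test_orders(rows: Iterable[Row]) -> Iterable[Row]:
    return (r for r in rows if not r.get("is_test"))


def add_total(rows: Iterable[Row]) -> Iterable[Row]:
    return ({**r, "total": r["qty"] * r["unit_price"]} for r in rows)
```

The sales domain would call `run_pipeline("sales", source_rows, [drop_test_orders, add_total])`. The platform owns the runner and the report format, and the domain owns the steps and decides when to run them.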

3. Data infrastructure platform

The platform layer provides self-service capabilities that domain teams use to build and deploy data products. This includes automated deployment pipelines, monitoring and alerting, data storage provisioned on demand, and security controls.

Modern implementations use metadata platforms to connect these components and provide unified discovery across domains.

4. Governance layer

A federated governance structure defines standards for data contracts, security policies, quality metrics, and cataloging practices. This layer ensures data products remain interoperable and discoverable while domains retain control over implementation details.
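A data contract can be as simple as an agreed list of fields and types that every record must satisfy. The following sketch assumes a contract expressed as a plain Python dictionary. `validate_contract` and the example field names are hypothetical, and real contracts usually cover more, such as nullability, freshness, and semantics.

```python
def validate_contract(record: dict, contract: dict[str, type]) -> list[str]:
    """Check one record against a field -> type contract and list any violations."""
    errors = []
    for field_name, expected in contract.items():
        if field_name not in record:
            errors.append(f"missing field: {field_name}")
        elif not isinstance(record[field_name], expected):
            actual = type(record[field_name]).__name__
            errors.append(f"{field_name}: expected {expected.__name__}, got {actual}")
    return errors


# Illustrative contract a sales domain might publish for its orders product.
ORDER_CONTRACT = {"order_id": str, "amount": float}
```

An empty list means the record honors the contract. A producing domain could run this check before publishing, and a consuming domain could run it on ingest.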

How to implement data mesh in 6 steps

Implementing data mesh requires both organizational and technical changes that unfold over months or years.

Organizations should resist the temptation to transform everything simultaneously. Instead, successful implementations follow an iterative approach that builds capability progressively while learning from each iteration.

Six essential implementation steps

- Define data product criteria: Establish what qualifies as a data product in your organization, including required characteristics like discoverability, addressability, understandability, and trustworthiness. Document expected quality standards and SLA commitments.

- Map domain ownership: Identify natural business domains aligned with organizational structure, such as customer service, manufacturing, or marketing. Assign clear ownership and ensure domains have necessary resources including data engineers and product managers.

- Build self-serve infrastructure: Create platform capabilities that let domain teams deploy pipelines, provision storage, and publish data products without depending on central teams for routine operations.

- Migrate existing data: Transition ownership of data currently in centralized repositories to appropriate domain teams. This involves not just moving data but transferring responsibility for quality, documentation, and access management.

- Establish federated governance: Form a cross-domain governance group that defines global standards while respecting domain autonomy. Start with critical policies around security, privacy, and interoperability before expanding to other areas.

- Start with MVPs: Select one or two pilot domains to implement first, validate the approach, and refine processes before scaling organization-wide. This reduces risk and builds organizational capability gradually.
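The first step above, defining data product criteria, can be turned into an executable checklist. This is a minimal sketch under stated assumptions: the metadata keys (`catalog_entry`, `address`, `sla_hours`, and so on) and the rule behind each criterion are placeholders that an organization would replace with its own definitions.

```python
# Each criterion maps to a simple check on a product's metadata (all keys are illustrative).
CRITERIA = {
    "discoverable": lambda p: bool(p.get("catalog_entry")),
    "addressable": lambda p: bool(p.get("address")),
    "understandable": lambda p: bool(p.get("description")) and bool(p.get("schema")),
    "trustworthy": lambda p: p.get("sla_hours") is not None
    and bool(p.get("quality_checks")),
}


def assess(product: dict) -> dict[str, bool]:
    """Report which criteria a candidate data product meets."""
    return {name: check(product) for name, check in CRITERIA.items()}


def qualifies(product: dict) -> bool:
    """A candidate qualifies only if it meets every criterion."""
    return all(assess(product).values())
```

Pilot domains could run `assess` on each candidate to see which characteristics are still missing before a product is published.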

Research on agile methodology applied to data shows that iterative implementation with rapid feedback cycles produces better outcomes than waterfall approaches.

Organizations using data catalogs designed for mesh can accelerate time-to-value by providing ready-made capabilities for discovery and governance.

Data mesh vs. centralized architectures: What’s the difference?

Choosing between centralizing data management or distributing it across domains depends on various factors, such as control, scalability, and operational complexity. Understanding these differences helps leaders make informed architectural decisions based on their organization’s maturity, culture, and strategic objectives.

Key architectural differences

| Factor | Centralized architectures | Data mesh |
|---|---|---|
| Ownership model | Control sits with dedicated data teams that manage pipelines and infrastructure for all business units. | Ownership is distributed to domain teams, which manage their data the way product owners manage customer relationships. |
| Scalability approach | Scales vertically by adding headcount and infrastructure capacity, which creates bottlenecks as data volume grows. | Scales horizontally as each domain manages its own capacity independently. |
| Governance structure | Top-down policies are enforced uniformly across all data. | Federated governance establishes global standards while allowing domain-specific implementation that reflects local requirements and context. |
| Innovation speed | Central approval processes slow experimentation because teams must justify requests and wait for implementation. | Domain autonomy lets teams iterate rapidly on data products without waiting on central coordination. |
| Ideal for | Small to mid-sized organizations, or those with strict regulatory needs and limited data maturity. | Large organizations with diverse data needs that are willing to trade some central control for agility. |

What are the common implementation challenges with the data mesh architecture?

Data mesh introduces organizational and technical complexity that teams must navigate carefully. While the architecture solves centralization problems, it creates new challenges around coordination, skill distribution, and technology standardization.

Organizations should anticipate these difficulties and plan mitigation strategies before beginning implementation.

Five critical challenges with data mesh implementations

- Talent gap: Domain teams need data engineering skills to build and maintain their data products. Most organizations lack distributed data expertise, creating competition for scarce talent. Mitigation: Invest in training programs and platform abstractions that reduce technical burden.

- Ownership boundary conflicts: Data often spans multiple domains, making ownership ambiguous. For instance, customer data serves marketing, sales, and support simultaneously. Mitigation: Establish clear ownership models and coordination protocols for shared entities.

- Technology standardization: Domains need autonomy to choose appropriate tools, but excessive variation creates interoperability problems and maintenance burden. Mitigation: Define approved technology stacks while allowing justified exceptions.

- Cultural shift: Moving from central control to distributed ownership challenges existing power structures and requires new working patterns. Mitigation: Executive sponsorship, change management programs, and celebration of early wins build momentum.

- Maintaining interoperability: Independent domain teams may drift apart in data formats, semantics, and quality standards without coordination. Mitigation: Federated governance groups establish and enforce standards that ensure mesh cohesion.
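Drift in data formats, the last challenge above, is one of the easier problems to detect automatically. The sketch below assumes each data product publishes a simple field-to-type schema. `find_type_drift` and the product names are hypothetical. It reports fields that different products declare with different types, which a governance group could then review.

```python
from collections import defaultdict


def find_type_drift(schemas: dict[str, dict[str, str]]) -> dict[str, dict[str, str]]:
    """Given product -> {field: type}, return fields declared with conflicting types."""
    seen: dict[str, dict[str, str]] = defaultdict(dict)  # field -> {product: type}
    for product, fields in schemas.items():
        for field_name, type_name in fields.items():
            seen[field_name][product] = type_name
    return {
        field_name: by_product
        for field_name, by_product in seen.items()
        if len(set(by_product.values())) > 1
    }
```

A shared field such as a customer identifier declared as a string in one domain and an integer in another would show up here, along with the products involved. Matching on field name alone is a simplification, since semantic drift can hide behind identical names and types.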

Research on organizational change management is often cited as showing that about 70% of transformation initiatives fail, largely because of people issues rather than technology.

Automated metadata platforms reduce technical burden on domain teams by handling discovery, lineage tracking, and policy enforcement. Understanding what metadata platforms provide helps organizations select tools that enable rather than hinder mesh implementation.

How do modern platforms enable data mesh?

Running a data mesh without unified tooling creates significant overhead for domain teams. Teams spend hours manually documenting data products, tracking lineage across domains, and coordinating governance policies through email threads and spreadsheets.

This administrative burden pulls focus from building valuable data products and slows the organization’s ability to scale analytics operations.

Modern data catalog platforms address these challenges through automation and centralization of mesh operations.

Atlan’s active metadata approach automatically discovers data products and maps lineage based on actual system usage rather than requiring manual documentation. Domain teams access real-time visibility into how their data products are consumed across the organization.

The platform’s collaboration features enable policy discussions to happen in context, with relevant stakeholders automatically notified when changes affect their domains.

Data products in Atlan are scored based on data mesh principles such as discoverability, interoperability, and trust, providing organizations with insights into their mesh maturity.

Automated lineage tracking across domains gives business users a clear understanding of data flows and dependencies without requiring technical expertise. Federated governance capabilities allow the central governance group to create and track policies while domains maintain autonomy over implementation within their products.

Organizations using this approach report faster policy approval cycles and higher participation rates from business stakeholders. Domain teams shift their time from administrative coordination to strategic work on data product development and improvement.

For example, Autodesk implemented their data mesh strategy and now has 60 domain teams with full visibility into data product consumption, using a self-service interface that makes discovery and trust-building effortless for consumers.

Real stories from real customers: How enterprises activate their data mesh

Why Autodesk chose Atlan to go from centralized bottlenecks to 60 autonomous domains

"We had a number of false starts looking at data catalog technology. To better support data mesh, we selected Atlan. Atlan is the layer that brings a lot of the metadata that publishers provide to the consumers, and it's where consumers can discover and use the data they need."

Mark Kidwell, Chief Data Architect

Autodesk

🎧 Listen to podcast: Why Autodesk chose Atlan to activate its Snowflake data mesh

Porto: 40% governance cost reduction through efficient scaling

"While traditional data catalogs and open-source solutions may have been capable of a metrics catalog, the deeper level of cross-functional collaboration needed to execute Data Mesh meant that Atlan became Porto's partner of choice going forward."

Danrlei Alves, Senior Data Governance Analyst

Porto

🎧 Listen to podcast: Porto: 40% governance cost reduction through efficient scaling

Moving forward with data mesh

Implementing data mesh requires careful planning, the right organizational structure, and platform capabilities that reduce coordination overhead.

Start by identifying pilot domains where the benefits of autonomy outweigh the costs of new infrastructure. Build your governance federation with representatives who can make decisions rather than just provide input. The tools and processes you choose will determine whether your mesh becomes a strategic asset or an administrative burden.

Modern platforms can automate much of the discovery, lineage tracking, and policy enforcement that would otherwise consume domain team capacity. As organizations mature their mesh implementations, they shift focus from infrastructure concerns to strategic questions about data product development and cross-domain analytics that deliver business value.

Atlan supports your data mesh journey with automated metadata management and native mesh capabilities.

FAQs about data mesh

1. What is the difference between data mesh and data lake?

A data mesh is a decentralized architecture where domain teams own and manage their data as products. A data lake is a centralized storage repository managed by a central team.

Data mesh emphasizes organizational structure and ownership, while data lakes focus on storage technology. Organizations can implement data mesh using data lakes as the underlying storage layer for domain data products.

2. Who should own data products in a data mesh?

Data products should be owned by domain teams closest to the data’s origin and primary use cases. For example, the sales team owns sales data products, marketing owns marketing data, and customer service owns support interaction data.

Each domain team includes a data product manager responsible for quality, security, and user satisfaction, supported by data engineers who build and maintain the products.

3. How does federated governance work in practice?

Federated governance establishes a cross-domain committee with representatives from each domain plus platform teams. This group defines global standards for security, data contracts, quality metrics, and interoperability while domains control implementation details.

Policies cover critical areas like data classification, access protocols, and documentation requirements. The governance group meets regularly to review standards and address coordination issues.

4. What skills do domain teams need for data mesh?

Domain teams need data product managers who understand both business requirements and data capabilities. Data engineers build pipelines and maintain infrastructure. Analysts ensure data products serve consumer needs effectively.

Domain teams should include or have access to platform engineers who manage underlying infrastructure and security specialists who implement access controls and compliance requirements.

5. How long does data mesh implementation typically take?

Pilot implementations with one or two domains typically take 3-6 months to validate the approach and establish patterns. Organization-wide rollouts span 12-24 months or longer depending on size and complexity. Implementation proceeds iteratively, with new domains joining progressively as the platform matures and organizational capabilities develop. Rushing implementation without a proper foundation leads to fragmentation and governance failures.