Magda is a federated, open-source data catalog that helps you organize and manage large data assets from various sources.

In this explainer article, we’ll explore the architecture, capabilities, use cases, and setup for the Magda data catalog.


Magda data catalog explained

Magda is an open-source, federated data catalog. It offers a centralized hub to catalog, enrich, search, monitor, and manage all of your organization’s data from diverse sources.

Unlike traditional data catalogs, a federated data catalog like Magda provides “a single view across all data of interest to a user, regardless of where the data is stored or where it was sourced from. Federating over many data sources of any format is at the core of how Magda works.”

An introduction to Magda, a federated data catalog - Source: YouTube.

Federation in Magda

Because federation is central to its design, Magda can ingest metadata from existing Excel- or CSV-based data inventories and from existing metadata APIs such as CKAN or data.json. Other tools in your data stack can also push metadata to it through its REST API.

You can look up any data asset (that you have access to) using Magda’s search functionality. You can track the history of each asset and get webhook notifications when metadata records are changed.
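To make the idea of federating a spreadsheet-style inventory more concrete, here is a minimal sketch of how rows from a CSV inventory might be turned into record-like entries, with the source's metadata kept in an aspect. The column names (`id`, `title`, `format`, `owner`), the `source-inventory` aspect ID, and the function name are all hypothetical; real Magda connectors are separate services with their own mappings.

```python
import csv
import io
from typing import Any


def inventory_to_records(csv_text: str, source_name: str) -> list[dict[str, Any]]:
    """Map each inventory row to a record-like dict (hypothetical column names)."""
    records = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        records.append(
            {
                # Prefix IDs with the source so records from different
                # inventories cannot collide in one federated catalog.
                "id": f"{source_name}-{row['id']}",
                "name": row["title"],
                "aspects": {
                    # Hypothetical aspect holding the inventory's own metadata.
                    "source-inventory": {
                        "source": source_name,
                        "format": row["format"],
                        "owner": row["owner"],
                    }
                },
            }
        )
    return records


if __name__ == "__main__":
    sample = "id,title,format,owner\n42,Rainfall 2020,CSV,BoM\n"
    for record in inventory_to_records(sample, "agency-a"):
        print(record["id"], record["name"])
```

Keeping source-specific details in an aspect, rather than in top-level fields, lets records from very different sources share the same basic shape.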

We’ll explore these capabilities further in the subsequent sections, but first, let’s take a quick look at Magda’s history.

Magda data catalog: Origins

Magda is a project started by the Commonwealth Scientific and Industrial Research Organisation (CSIRO) and the government of Australia. The Magda team credits CSIRO’s Data61, the Digital Transformation Agency, the Department of Agriculture, the Department of the Environment and Energy, and CSIRO Land and Water with its development.

Since January 2019, Magda has been powering the Australian government’s federal open data portal — data.gov.au. This portal is a single place for Australia’s citizens, scientists, journalists and businesses to discover and access 80,000+ datasets — everything from data APIs to Excel files.

According to the CSIRO, “data.gov.au now makes over 89,000 datasets drawn from sources all over the Australian government available for search, and makes these available to 10,000-20,000 individual users per week.”

Magda data catalog: Architecture

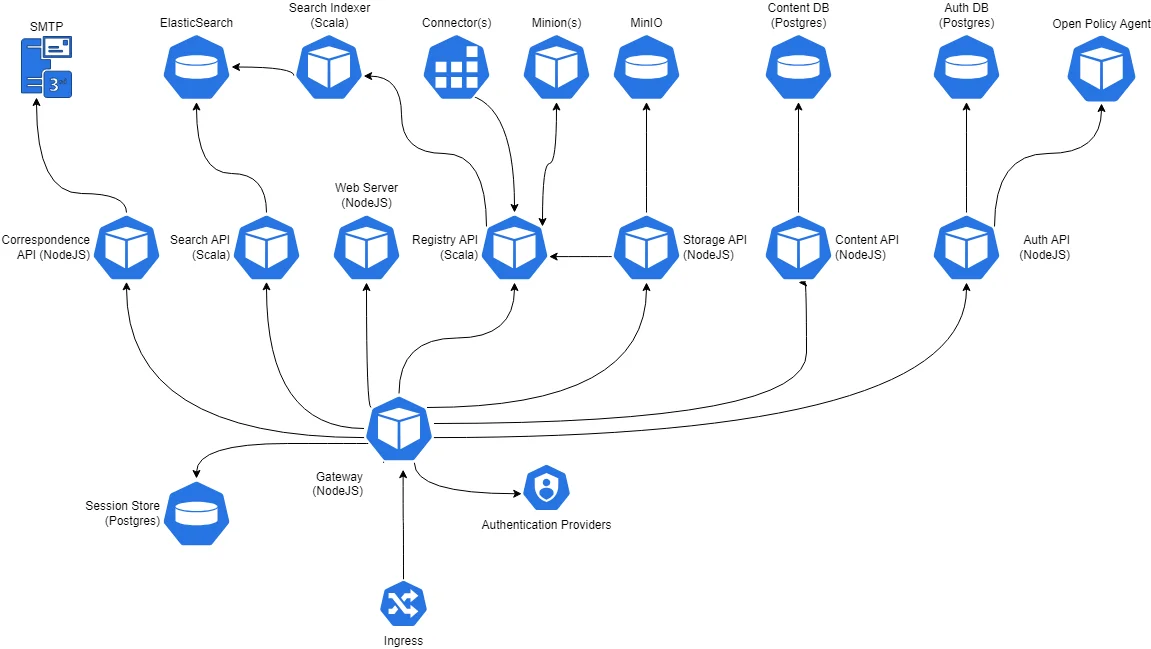

Magda employs an open-source, modular architecture. It consists of a set of microservices that make it easy to extend the catalog to collect data from various sources or to enrich metadata with more context.

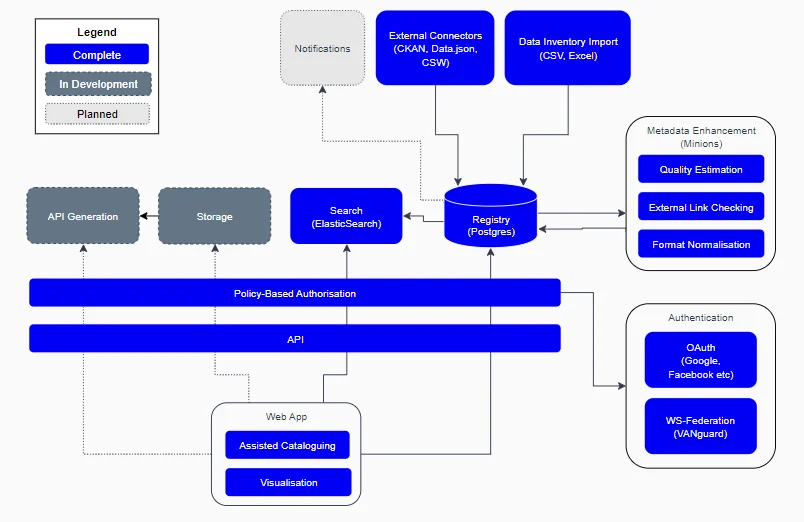

Magda data catalog architecture - Source: Magda.

It has multiple core components (or microservices) that are packaged as Helm Charts.

Architectural components of the Magda data catalog - Source: GitHub.

These components include:

- Connectors: These modules establish the link between Magda and an array of data sources. They extract metadata related to data assets from diverse sources, including files, databases, and APIs.

- Authorization API: This API handles users, roles, permissions, API keys, and more within Magda. It’s important to note that the Authorization API is not responsible for authentication. That’s handled by one of the Authentication Plugins or by the Gateway’s built-in API key authentication mechanism.

- Content API: This API manages Magda’s own content. Magda refers to it as a very light headless CMS for holding text and files, such as content displayed in the web UI.

- Correspondence API: This API handles communication between users and data custodians, for example sending an email when a user submits a question or feedback about a dataset.

- Gateway: Serving as the main point of entry, the gateway routes requests to various components within the Magda system. It’s also responsible for managing Authentication Plugins, coordinating the authentication process, and managing security-related HTTP headers via Helmet.

- Minions: These are background services that listen for new or changed records in the registry (via webhooks), perform an operation such as checking links or enriching metadata, and write the results back to the registry. Magda runs all minions as external plugins.

- Registry: The registry is Magda’s central metadata store. It holds records and their aspects, along with the event history and webhook subscriptions, so that connectors, minions, and other Magda components can locate and update the metadata they need.

- Records: These are the fundamental units of data within Magda’s registry. They represent individual data assets (like files and database tables) and include metadata such as location, format, schema, and usage details.

- Aspects: Aspects are pieces of JSON data attached to records. They add context that describes what a record’s data means and help track its lineage.

- Events: Magda emits events whenever the registry changes, for example when a new record is created or an aspect is updated. These events offer insight into Magda’s activities and can trigger subsequent processes.

- Webhooks: These are URLs that Magda triggers when vital events occur, facilitating the integration of Magda with other systems like notification services and data pipelines. Webhooks notify services outside the registry about changes within the registry in real-time.

- Search API: This API aids in record search within Magda, enabling users to find records based on their name, description, or other criteria.

- Search Indexer: This process is responsible for indexing records in Magda, supporting the search API in finding the desired records quickly and efficiently.

- Storage API: This API handles file storage within Magda, for example storing data files uploaded to the catalog so they can later be retrieved or downloaded.

- Web Server: Hosting the Magda user interface (UI), the web server is tasked with presenting records and enabling user interactions with Magda.
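The registry’s core data model is small: records carry an ID, a name, and a set of aspects, and each aspect is a piece of JSON data whose expected shape can be described by a schema. The sketch below models that idea in memory. It is a simplification under stated assumptions: the class and method names are hypothetical, and `validate` only checks `required` keys and top-level property types rather than implementing full JSON Schema.

```python
from dataclasses import dataclass, field
from typing import Any

# Simplified mapping from JSON Schema type names to Python types.
JSON_TYPES = {"string": str, "boolean": bool, "object": dict, "array": list}


def matches_type(value: Any, type_name: str) -> bool:
    if type_name == "number":
        # bool is a subclass of int in Python, so exclude it explicitly.
        return isinstance(value, (int, float)) and not isinstance(value, bool)
    expected = JSON_TYPES.get(type_name)
    return expected is None or isinstance(value, expected)


@dataclass
class AspectDefinition:
    id: str
    name: str
    json_schema: dict[str, Any]

    def validate(self, data: dict[str, Any]) -> list[str]:
        """Return problems found; only 'required' and property 'type' are checked."""
        problems = [
            f"missing required property '{key}'"
            for key in self.json_schema.get("required", [])
            if key not in data
        ]
        for key, spec in self.json_schema.get("properties", {}).items():
            if key in data and not matches_type(data[key], spec.get("type", "")):
                problems.append(f"property '{key}' should be {spec['type']}")
        return problems


@dataclass
class Record:
    id: str
    name: str
    aspects: dict[str, dict[str, Any]] = field(default_factory=dict)


class Registry:
    """Hypothetical in-memory stand-in for the registry's record store."""

    def __init__(self) -> None:
        self.aspect_definitions: dict[str, AspectDefinition] = {}
        self.records: dict[str, Record] = {}

    def add_aspect_definition(self, definition: AspectDefinition) -> None:
        self.aspect_definitions[definition.id] = definition

    def add_record(self, record: Record) -> None:
        self.records[record.id] = record

    def put_aspect(self, record_id: str, aspect_id: str, data: dict[str, Any]) -> None:
        """Attach aspect data to a record after checking it against its definition."""
        problems = self.aspect_definitions[aspect_id].validate(data)
        if problems:
            raise ValueError("; ".join(problems))
        self.records[record_id].aspects[aspect_id] = data
```

Because schemas live on aspect definitions rather than on records, different sources can attach different kinds of metadata to the same record without changing a global table layout.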

Authentication (authn) in Magda data catalog

Requests between Magda’s internal services are authenticated with a JWT, which usually contains at least the user ID.

For external authentication requests, Magda offers two options:

- Authentication Plugins-Based Authentication

- API Key-Based Authentication

As of now, Magda’s authentication system can integrate with:

- CKAN

- AAF

- VANguard (WSFed)

- ESRI Portal

- Okta
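The internal JWT pattern works roughly like this: once a user has been authenticated externally, a signed token carrying the user ID is forwarded, and downstream services verify the signature instead of asking the user to log in again. The sketch below shows that pattern as a minimal HS256 JWT using only the Python standard library. The `userId` claim name, the shared secret, and the helper names are assumptions for illustration; Magda’s services use their own libraries and configuration.

```python
import base64
import hashlib
import hmac
import json


def _b64url(data: bytes) -> str:
    # JWTs use unpadded base64url encoding.
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def _b64url_decode(text: str) -> bytes:
    # Restore the padding that _b64url stripped before decoding.
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def _sign(signing_input: bytes, secret: bytes) -> str:
    return _b64url(hmac.new(secret, signing_input, hashlib.sha256).digest())


def sign_session(user_id: str, secret: bytes) -> str:
    """Create an HS256 JWT carrying the user ID (claim name is hypothetical)."""
    header = _b64url(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    payload = _b64url(json.dumps({"userId": user_id}).encode())
    signing_input = f"{header}.{payload}".encode("ascii")
    return f"{header}.{payload}.{_sign(signing_input, secret)}"


def verify_session(token: str, secret: bytes) -> str:
    """Return the user ID if the signature is valid, else raise ValueError."""
    try:
        header, payload, signature = token.split(".")
    except ValueError:
        raise ValueError("malformed token") from None
    expected = _sign(f"{header}.{payload}".encode("ascii"), secret)
    # Constant-time comparison avoids leaking signature bytes via timing.
    if not hmac.compare_digest(signature, expected):
        raise ValueError("invalid signature")
    return json.loads(_b64url_decode(payload))["userId"]
```

A production setup would also add and check an expiry claim and keep the secret out of source code; those details are omitted to keep the sketch short.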

Read more → Federated authentication (authn) in Magda

Authorization (authz) in Magda data catalog

Magda’s role-based access control system is powered by the policy engine Open Policy Agent (OPA).

Here’s how it works:

- Access to datasets is restricted based on established access-control frameworks (e.g. role-based), or custom policies that you specify

- Because authorization is federated, Magda can pull in data from an external source and keep the access policies configured in that source

- Search results only include data that you have access to
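In Magda, these decisions are delegated to OPA policies written in Rego. As a language-neutral illustration of the effect on search, here is a Python sketch that filters results using a role-to-permission mapping. The role names, permission strings, the `accessLevel` field, and the function names are all hypothetical; this is not how Magda evaluates its policies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class User:
    id: str
    roles: frozenset[str]


# Hypothetical role -> permissions mapping; in Magda, roles and permissions
# are managed by the Authorization API and evaluated through OPA policies.
ROLE_PERMISSIONS = {
    "anonymous": {"read:public"},
    "analyst": {"read:public", "read:internal"},
    "data-steward": {"read:public", "read:internal", "read:restricted"},
}


def permissions_for(user: User) -> set[str]:
    """Union of the permissions granted by all of the user's roles."""
    granted: set[str] = set()
    for role in user.roles:
        granted |= ROLE_PERMISSIONS.get(role, set())
    return granted


def can_read(user: User, record: dict) -> bool:
    # Each record carries a hypothetical access level such as "internal".
    return f"read:{record['accessLevel']}" in permissions_for(user)


def filter_search_results(user: User, results: list[dict]) -> list[dict]:
    """Drop any result the user is not allowed to see."""
    return [r for r in results if can_read(user, r)]
```

Filtering after the fact is the simplest model to read; in practice it is better to push the access condition into the search query itself so that pagination and result counts stay correct.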

Read more → Federated authorization (authz) in Magda

How do Magda’s architectural components interact with each other?

Imagine you’re working on a project that requires you to find, access, and integrate climate change data from several sources into your data processing pipeline.

Firstly, you engage with Connectors in Magda, setting them up to gather metadata about relevant datasets from diverse external sources such as open data portals, databases, and file systems. These connectors pull all relevant metadata and integrate it into the Magda catalog.

Next, this metadata is stored in the Registry. Each individual dataset, distribution, organization, or other resource forms a Record within the Registry.

Aspects, which define the schema for different types of metadata, are associated with each of these Records. For your climate change datasets, “Aspects” could include critical information such as the data source, time range, geographic coverage, and format.

While the Registry and Records are getting populated, Minions work in the background, reacting to registry changes and enriching records, for example by checking links or adding metadata. Meanwhile, the Search Indexer indexes newly added and updated datasets so that they become searchable.

When you’re ready to search for specific datasets, you interact with Magda’s Search API, which queries the search index built by the Search Indexer rather than reading the Registry directly, so results come back quickly. This result set is then presented back to you via the Magda web interface, served by the Web Server.

Before you can download or access the data, the Authorization API may need to verify your permissions, especially if the dataset has access restrictions. The Storage API then manages the retrieval or download of the chosen data asset, ensuring you get the data in the required format.

Throughout your interaction, the Content API manages any static content you might encounter, such as images, documents, and so on. Should you have any feedback or queries, the Correspondence API is there to manage your communications.

Meanwhile, an Events system underpins all these operations, maintaining a record of all changes to the Registry. This ensures data consistency and can trigger follow-up actions, such as reindexing by the Search Indexer or processing by Minions, when changes occur. It’s like a reliable notification service keeping all components in sync.

Throughout this process, the Gateway acts as the middleman, ensuring seamless communication. It is the single entry point for all your requests to Magda’s APIs, simplifying and streamlining your interactions with the system.
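The event-and-webhook flow in this walkthrough is essentially publish/subscribe. The sketch below models it in memory: every registry change is appended to an event history, and subscribers register for the aspects they care about, much as a minion or the Search Indexer would register a webhook. The class and method names, the aspect IDs, and the event type strings are illustrative assumptions; real webhooks are HTTP callbacks with delivery tracking, not Python functions.

```python
from dataclasses import dataclass
from typing import Any, Callable, Optional


@dataclass
class Event:
    event_id: int
    event_type: str  # illustrative, e.g. "CreateRecord" or "PatchRecordAspect"
    record_id: str
    aspect_id: Optional[str]
    data: dict[str, Any]


@dataclass
class Webhook:
    name: str
    aspects: set[str]  # deliver aspect events only for these aspect IDs
    callback: Callable[[Event], None]
    last_event_id: int = 0  # highest event delivered, so a subscriber could resume


class EventLog:
    """Hypothetical in-memory stand-in for registry events plus webhook delivery."""

    def __init__(self) -> None:
        self.events: list[Event] = []
        self.webhooks: list[Webhook] = []

    def subscribe(self, hook: Webhook) -> None:
        self.webhooks.append(hook)

    def record(
        self,
        event_type: str,
        record_id: str,
        aspect_id: Optional[str] = None,
        data: Optional[dict[str, Any]] = None,
    ) -> Event:
        """Append an event to the history, then notify matching subscribers."""
        event = Event(len(self.events) + 1, event_type, record_id, aspect_id, data or {})
        self.events.append(event)
        for hook in self.webhooks:
            # Record-level events (no aspect) go to every subscriber.
            if aspect_id is None or aspect_id in hook.aspects:
                hook.callback(event)
                hook.last_event_id = event.event_id
        return event


if __name__ == "__main__":
    indexed: list[str] = []
    log = EventLog()
    log.subscribe(Webhook("search-indexer", {"climate-coverage"}, lambda e: indexed.append(e.record_id)))
    log.record("CreateRecord", "ds-1")
    log.record("PatchRecordAspect", "ds-1", "climate-coverage", {"region": "NSW"})
    log.record("PatchRecordAspect", "ds-1", "link-status", {"active": True})
    print(indexed)  # the link-status change is not delivered to this subscriber
```

Keeping the full event history, rather than only the latest state, is what lets a subscriber that was offline catch up by replaying events after its last delivered ID.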

Magda data catalog: Capabilities

Magda offers a range of features to address diverse needs, such as:

- Handling diverse data

- Broad source adaptability

- Task automation

- Data access regulation

- Metadata management efficiency

- Data governance, data discovery, and metadata management

Let’s explore each capability further.

Handling diverse data

Magda can catalog datasets of very different sizes, from big data to small datasets. It can also accommodate a variety of data types, including structured sources (e.g., SQL databases and CSV files) and unstructured forms (e.g., text files and images).

Broad source adaptability

Magda’s capabilities extend to assimilating data from multiple internal and external sources. With its capacity to interface with numerous data APIs, it demonstrates high adaptability to varying data environments.

Task automation

One of Magda’s strengths is its ability to automate key tasks such as indexing and crawling, which are vital for maintaining a current and searchable data catalog. This automated functionality streamlines data management, freeing up resources to concentrate on data analysis.

Data access regulation

Magda supports several authentication methods, and once users are authenticated, it regulates their access to specific data resources. This helps keep sensitive data protected.

Metadata management efficiency

Magda stores extensive metadata about datasets, distributions, organizations, and other resources. This metadata adds valuable context to your data assets, making them easier to search and categorize.

The ability to customize the categorization of datasets further augments the efficiency of Magda’s metadata management.

Data governance, data discovery, and metadata management in Magda

Data governance

Data governance is a key aspect of Magda’s capabilities. As discussed earlier, its Authorization API is critical in managing access control to data, ensuring that only authorized users can access the necessary resources.

Its role-based access control ensures that users have permissions appropriate to their roles in the organization.

However, there may be room for improvement when it comes to fine-tuning access controls based on more complex policies.

Data discovery

For data discovery, Magda’s Search API and Search Indexer play crucial roles. These tools enable users to find and retrieve the data they need from the catalog quickly and efficiently.

The Search API’s performance and accuracy, however, can be influenced by the amount and complexity of the data, potentially slowing down the search process in large-scale environments.
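To see why a separate indexing step matters for search speed, here is a minimal inverted index: the indexer tokenizes each record’s metadata once, and a search only looks up tokens instead of scanning every record. Magda’s actual search stack is built on Elasticsearch; this Python sketch, with hypothetical names, only illustrates the indexing idea, not Magda’s ranking or query syntax.

```python
import re
from collections import defaultdict


def tokenize(text: str) -> list[str]:
    # Lowercase and split on anything that is not a letter or digit.
    return re.findall(r"[a-z0-9]+", text.lower())


class InvertedIndex:
    def __init__(self) -> None:
        # token -> IDs of the records whose metadata contains that token
        self.postings: dict[str, set[str]] = defaultdict(set)

    def index_record(self, record_id: str, name: str, description: str) -> None:
        """Indexer side: map every token to the records that contain it."""
        for token in tokenize(f"{name} {description}"):
            self.postings[token].add(record_id)

    def search(self, query: str) -> list[str]:
        """Search side: return IDs of records containing all query tokens."""
        tokens = tokenize(query)
        if not tokens:
            return []
        # .get() avoids inserting empty entries into the defaultdict.
        matches = set.intersection(*(self.postings.get(t, set()) for t in tokens))
        return sorted(matches)
```

Query cost here depends on the size of the posting sets for the query terms rather than on the total number of records, which is the property that keeps search responsive as a catalog grows.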

Metadata management

Metadata management in Magda is primarily handled through the Registry, Records, and Aspects. As mentioned earlier, the Registry stores metadata about various resources, represented as Records. Aspects, defining schemas for different metadata types, can be attached to these Records for detailed categorization.

Yet, complex metadata schemas may pose challenges to effective management and categorization, particularly when dealing with unstructured data or rapidly evolving data structures.

To address these potential limitations, you should consider integrating complementary tools into your Magda setup. Here are some examples:

- Incorporating advanced access management solutions could provide more granular control over data governance.

- Using specialized search solutions could enhance data discovery in large-scale data environments.

- For metadata management, schema management tools can help cope with complex or evolving data structures.

Alternatively, you can use an off-the-shelf active metadata management and cataloging platform like Atlan to overcome the limitations of an open-source tool like Magda.

For instance, Atlan’s UI is more intuitive and user-centric. It also supports AI-powered data discovery, documentation, and querying. Moreover, Atlan is equipped with collaboration features for all data practitioners, reducing data chaos and fostering a culture of data-driven decisions.

Read more → Modern data catalog 101 and evaluation guide

Magda data catalog: Setup process

Setting up Magda requires awareness of its prerequisites and potential pitfalls. The following guide gives a brief overview of the setup:

- Prerequisites

- Trying it out locally

- Building and running the frontend

- Building and running the backend

- Set up Helm

- Install a local Kube registry

- Install Kubernetes-replicator

- Build local Docker images

- Build Connector and Minion local Docker images

- Deploy Magda with Helm

Let’s explore each step at length.

1. Prerequisites

Before proceeding with the installation, ensure that you have the following prerequisites installed:

- Node.js (Version 14)

- Java 8 JDK

- sbt

- yarn

- GNU tar (for macOS users)

- gcloud (for using the kubectl tool)

- Helm 3 (package manager for Kubernetes)

- Docker

- Kubernetes cluster (can be minikube, Docker Desktop, or a cloud cluster)
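Before moving on, you can quickly confirm that the command-line tools are on your PATH. This Python sketch only checks that each executable can be found; it does not verify versions (such as Node.js 14, Java 8, or Helm 3) and cannot check for a running Kubernetes cluster. The executable names are assumptions about a typical install, for example `java` for the JDK and `gtar` for GNU tar installed on macOS through Homebrew.

```python
import shutil
import sys

# Executable names are assumptions about a typical install; kubectl is
# included because the later setup steps call it directly.
REQUIRED_TOOLS = ["node", "java", "sbt", "yarn", "gcloud", "kubectl", "helm", "docker"]


def check_tools(tools: list[str]) -> dict[str, bool]:
    """Map each tool name to whether an executable by that name is on PATH."""
    return {tool: shutil.which(tool) is not None for tool in tools}


if __name__ == "__main__":
    tools = list(REQUIRED_TOOLS)
    if sys.platform == "darwin":
        tools.append("gtar")  # GNU tar, as installed by Homebrew's gnu-tar formula
    missing = [tool for tool, found in check_tools(tools).items() if not found]
    print("Missing: " + ", ".join(missing) if missing else "All tools found on PATH")
```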

2. Trying it out locally without building source code

If you want to try Magda without modifying anything, you can install either minikube or Docker Desktop and follow the instructions at existing-k8s.md.

3. Building and running the frontend

If you only want to work on the UI, you can follow these steps:

- Clone the Magda repository.

- Run the following commands:

```bash
cd magda-web-client
yarn install
yarn run dev
```

This will build and run a local version of the Magda web client that connects to the API at https://dev.magda.io/api. If you want to connect to a different Magda API, you can modify the `config.ts` file in the client.

4. Building and running the backend

To build and run the backend components, follow these steps:

- Clone the Magda repository and navigate to the root directory.

- Install dependencies and set up component links:

```bash
yarn install
```

- Build Magda by running the following command:

```bash
yarn lerna run build --stream --concurrency=1 --include-dependencies
```

You can also build individual components by running `yarn build` in the component’s directory (e.g., `magda-whatever/`).

5. Set up Helm

Helm is used as the package manager for Kubernetes. Follow these steps to set it up:

- Install Helm 3 by following the instructions at helm.sh/docs/intro/install.

- Add the Magda Helm chart repo and other relevant Helm chart repos:

```bash
helm repo add magda-io https://charts.magda.io
helm repo add twuni https://helm.twun.io
helm repo update
```

6. Install a local kube registry

This step allows you to use a local Docker registry to upload your built images. Use the following commands:

```bash
helm install docker-registry -f deploy/helm/docker-registry.yml twuni/docker-registry
helm install kube-registry-proxy -f deploy/helm/kube-registry-proxy.yml magda-io/kube-registry-proxy
```

7. Install Kubernetes-replicator

Kubernetes-replicator automates copying the required secrets from the main deployment namespace to the workload (OpenFaaS function) namespace. Follow these steps:

- Add the Kubernetes-replicator Helm chart repo:

```bash
helm repo add mittwald https://helm.mittwald.de
```

- Update the Helm chart repos:

```bash
helm repo update
```

- Create a namespace for Kubernetes-replicator:

```bash
kubectl create namespace kubernetes-replicator
```

- Install Kubernetes-replicator:

```bash
helm upgrade --namespace kubernetes-replicator --install kubernetes-replicator mittwald/kubernetes-replicator
```

8. Build local Docker images

Build the Docker containers locally using the following commands:

```bash
eval $(minikube docker-env) # (If you're running in minikube and haven't run this already)
yarn lerna run build --stream --concurrency=1 --include-dependencies # (if you haven't run this already)
yarn lerna run docker-build-local --stream --concurrency=1 --include-dependencies
```

9. Build Connector and minion local Docker images

If you want to use locally built connector and minion Docker images for testing and development purposes, follow these steps:

- Clone the relevant connector or minion repository.

- Build and push the Docker image to a local Docker registry. Run the following commands from the cloned folder:

```bash
yarn install
yarn run build
eval $(minikube docker-env)
yarn run docker-build-local
```

- Modify `minikube-dev.yml` to remove the `global.connectors.image` and `global.minions.image` sections.

10. Deploy Magda with Helm

Follow these steps to deploy Magda using Helm:

- Update the Magda Helm repo:

```bash
helm repo update
```

- Update Magda chart dependencies:

```bash
yarn update-all-charts
```

- Deploy Magda using the local deployment chart in `deploy/helm/local-deployment`:

```bash
helm upgrade --install --timeout 9999s -f deploy/helm/minikube-dev.yml magda deploy/helm/local-deployment
```

If you need HTTPS access to your local dev cluster, follow the extra setup steps in the Magda documentation.

This process may take some time as it involves downloading all the Docker images, starting them up, and running database migration jobs. You can monitor the progress by running kubectl get pods -w in another terminal.
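If you would rather poll than watch, a small script can summarize pod phases from `kubectl get pods -o json`. This sketch assumes `kubectl` is on your PATH and pointed at the namespace you deployed to; the function names are hypothetical, and the "settled" rule (every pod `Running` or `Succeeded`, the latter covering finished jobs such as database migrations) is a simplifying assumption. Pod phase is coarse: a `Running` pod may still have containers that are not ready or are restarting.

```python
import json
import shutil
import subprocess
from collections import Counter


def summarize_pods(pod_list: dict) -> Counter:
    """Count pods by status.phase in the output of `kubectl get pods -o json`."""
    return Counter(
        item.get("status", {}).get("phase", "Unknown") for item in pod_list.get("items", [])
    )


def deployment_settled(phases: Counter) -> bool:
    # Settled means at least one pod exists and every pod is Running or Succeeded.
    # Succeeded covers one-off jobs that finished, such as database migrations.
    return sum(phases.values()) > 0 and set(phases) <= {"Running", "Succeeded"}


def fetch_pods() -> dict:
    """Ask kubectl for the pods in the current namespace as JSON."""
    result = subprocess.run(
        ["kubectl", "get", "pods", "-o", "json"],
        check=True,
        capture_output=True,
        text=True,
    )
    return json.loads(result.stdout)


if __name__ == "__main__":
    if shutil.which("kubectl") is None:
        print("kubectl not found on PATH")
    else:
        try:
            phases = summarize_pods(fetch_pods())
        except subprocess.CalledProcessError as err:
            print("kubectl failed:", err.stderr.strip())
        else:
            state = "settled" if deployment_settled(phases) else "still starting"
            print(dict(phases), state)
```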

These steps cover the basic setup and deployment process of Magda. Refer to the provided documentation for additional details and advanced configuration options.

Note

You may encounter roadblocks during the setup. Because Magda depends on Kubernetes, familiarity with Kubernetes is essential, and if you are new to it, expect a learning curve. Additionally, configuring the connectors and the Authorization API may require a detailed understanding of your data sources and access control policies.

To overcome these hurdles, a few strategies can prove useful:

- Having a comprehensive understanding of Kubernetes basics can be advantageous during the setup process.

- A thorough comprehension of your data environment can also facilitate the configuration of connectors and the Authorization API.

- Lastly, do not hesitate to consult the comprehensive documentation available on the GitHub page of Magda, which offers detailed guides and problem-solving tips.

Final thoughts

Magda is an open-source data cataloging and management solution underpinned by a federated architecture. It aims to build a single view for all of your data assets, irrespective of their origins or formats.

By accumulating metadata from various sources into a single system, it promises effective data governance, discovery, and metadata management. The microservice architecture ensures that you can extend the catalog as per your requirements.

However, it’s an open-source platform that’s still under development, and as such it falls short in areas such as user-friendliness, collaboration, and actionable, cross-system lineage. So, you might have to integrate supplementary tools to fully meet your cataloging requirements.