Connectors are how an AI agent reaches every source of context. Without them, the agent sees only a slice of the business and answers from a partial view. Deploying Snowflake on Azure correctly is how you wire those sources together for analytics and AI. Snowflake is a data cloud platform that supports modern data architectures such as warehouses, lakes, and lakehouses on your preferred cloud provider. Azure is one of the three major cloud platforms Snowflake supports, and it integrates closely with Microsoft tools such as Power BI and Microsoft 365. This article explores how to deploy and operate Snowflake on Azure, including:

- Region availability and pricing

- Loading and unloading data in Azure containers

- Using Snowpipe and Azure Functions

- Connecting Snowflake to Power BI

- Common FAQs about deploying Snowflake on Azure

Is Snowflake available on Azure?

Snowflake has been generally available on Azure since mid-2018. As of May 2025, Snowflake is available in 16 Azure regions, including a few SnowGov regions in the US.

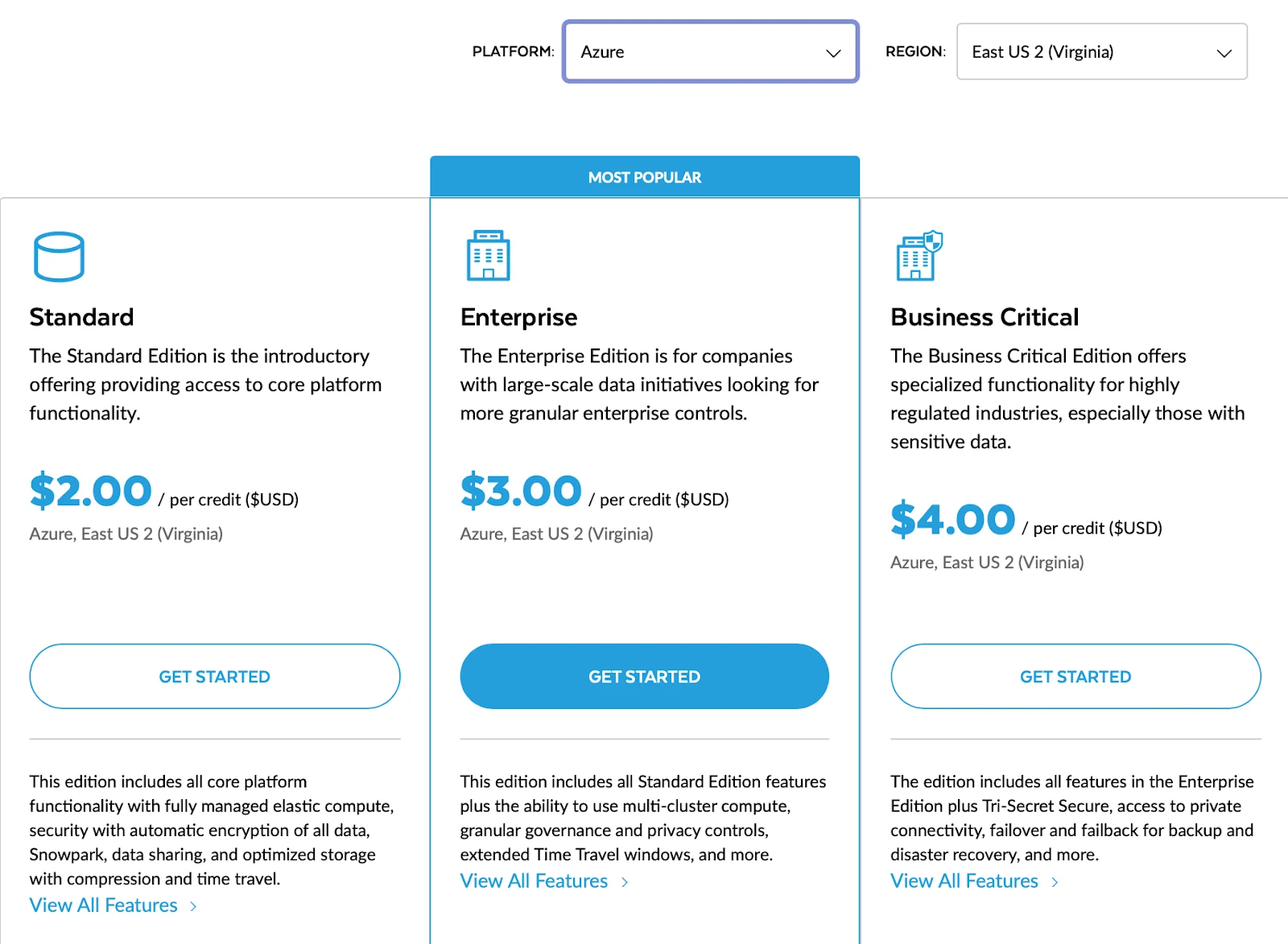

Snowflake pricing depends on the Snowflake Edition and the region where you want to deploy it. Here’s what the Snowflake pricing page looks like in Azure’s Virginia region.

Snowflake credit pricing for Azure's Virginia region - Source: Snowflake.

Before choosing a region, note that not all regions support all Snowflake features. This matters most when your business requires specific security and compliance capabilities, such as private networking and data residency. Weigh these factors when deploying Snowflake on Azure.
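If you already have a Snowflake account, a quick way to check regions is to query them from a worksheet. The sketch below assumes that Snowflake region names for Azure start with `AZURE_`; the rows returned depend on your account and region group.

```sql
-- Show the region of the account you are connected to.
SELECT CURRENT_REGION();

-- List the Snowflake regions whose name starts with AZURE_.
SHOW REGIONS LIKE 'AZURE%';
```

Feature availability per region is not part of this output, so you still need to confirm it against Snowflake's documentation.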

How can you deploy Snowflake on Azure?

You can deploy Snowflake on Azure by:

- Choosing a supported Azure region and Snowflake Edition

- Using Azure Blob Storage or Data Lake Storage Gen2 as external stages for data loading/unloading

- Creating storage integrations to securely connect Snowflake with Azure containers

- Defining external tables for querying staged data directly from Azure

- Setting up Snowpipe with Azure Event Grid for continuous, event-driven data ingestion

- Using Azure Functions and API Management for serverless external functions

- Enabling native SSO connectivity to Power BI with a security integration using Azure Active Directory

Let’s explore the specifics.

Snowflake support for Azure Containers

Snowflake can bulk load data from your existing Azure Blob Storage containers and folder paths.

Snowflake supports the following types of storage accounts:

- Blob storage

- Data Lake Storage Gen2

- General-purpose v1

- General-purpose v2

It’s important to note that Snowflake does not support Data Lake Storage Gen1.

Before proceeding, let’s quickly understand Azure blob storage containers.

What are Azure blob storage containers?

Azure’s object storage service is Azure Blob Storage, which organizes data into containers. Containers are like buckets in Amazon S3 or Google Cloud Storage.

If you already have existing data infrastructure on Azure, you might want to move data into Snowflake for more structured consumption use cases, such as enterprise reporting and data analytics.

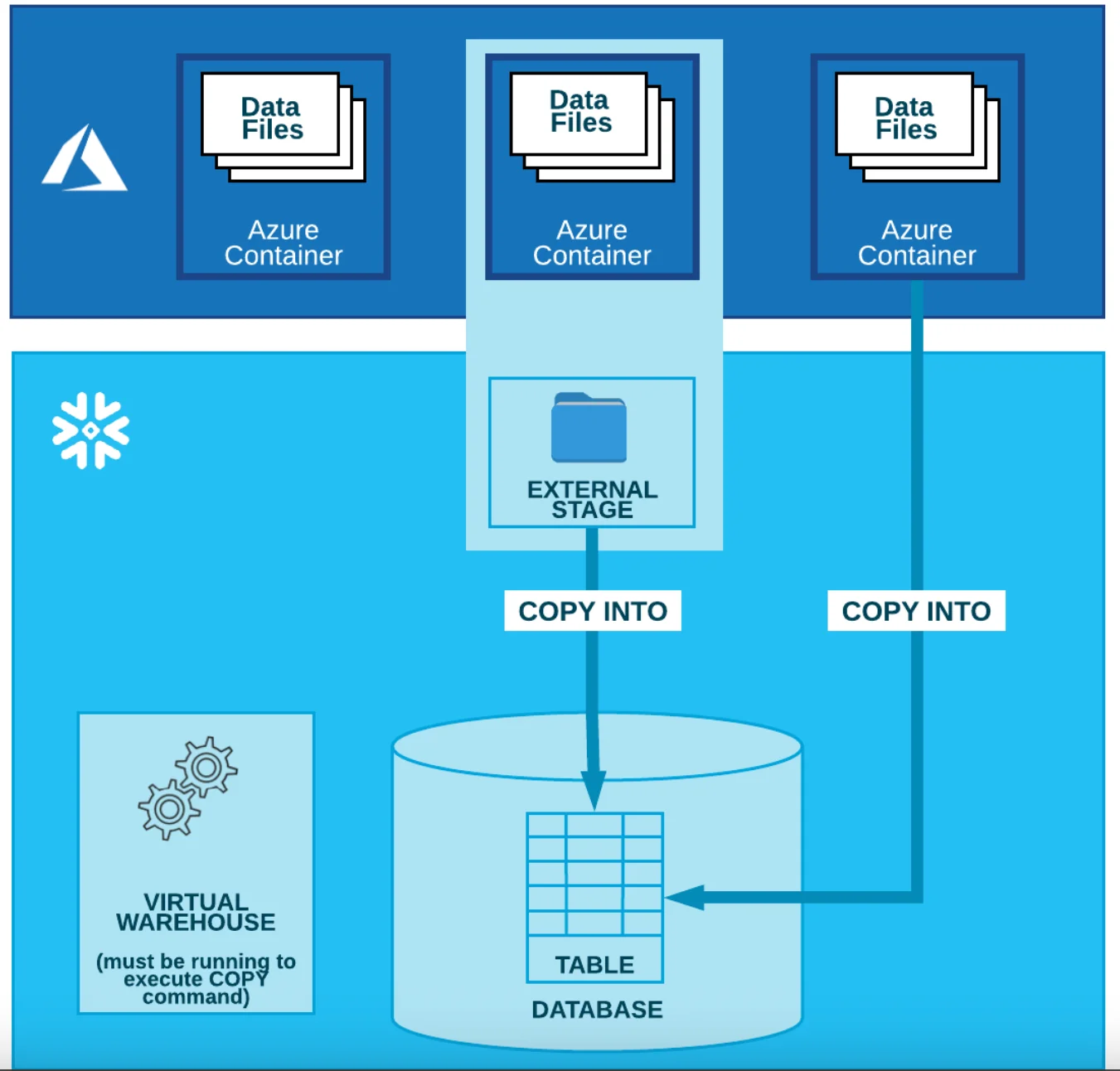

You load and unload data through Snowflake stages. Internal stages are managed by Snowflake and are typically used to load data into a Snowflake table from a local file system. External stages point to object storage, such as Azure Containers, and let you load data from there.

The unloading process also involves the same steps but in reverse.

Staging data in Azure Containers

If your data is in Azure Containers, you can create an external stage for your container to load data into Snowflake. You must create a storage integration with your Azure credentials. You can create a storage integration using the following SQL statement in Snowflake:

CREATE STORAGE INTEGRATION <storage_integration_name>

TYPE = EXTERNAL_STAGE

STORAGE_PROVIDER = 'AZURE'

ENABLED = TRUE

AZURE_TENANT_ID = '<azure_tenant_id>'

STORAGE_ALLOWED_LOCATIONS = ('azure://<account>.blob.core.windows.net/<container>/<path>/', 'azure://<account>.blob.core.windows.net/<container>/<path>/')

[ STORAGE_BLOCKED_LOCATIONS = ('azure://<account>.blob.core.windows.net/<container>/<path>/', 'azure://<account>.blob.core.windows.net/<container>/<path>/') ]
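After the integration exists, Snowflake still needs permission on the Azure side. A minimal sketch of the follow-up steps, assuming a hypothetical role placeholder `<role_name>`: describe the integration to find the consent URL and the name of the Snowflake application, then grant usage to the role that will create stages.

```sql
-- Inspect the integration. In the output, look for the AZURE_CONSENT_URL
-- and AZURE_MULTI_TENANT_APP_NAME properties.
DESC STORAGE INTEGRATION <storage_integration_name>;

-- Let a role create stages that use this integration.
-- <role_name> is a placeholder, not a name from this article.
GRANT USAGE ON INTEGRATION <storage_integration_name> TO ROLE <role_name>;
```

You would typically open the consent URL as an Azure administrator and then assign the Snowflake application a storage role on the storage account in the Azure portal. Which role to assign depends on whether you only load data or also unload it.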

Using the storage integration you just created, you can create a stage by specifying the Azure Container path and the format of the files you want to stage into Snowflake using the following SQL statement:

CREATE STAGE <stage_name>

STORAGE_INTEGRATION = <storage_integration_name>

URL = 'azure://myaccount.blob.core.windows.net/mycontainer/load/files/'

FILE_FORMAT = my_csv_format;
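The stage definition above refers to a named file format, which has to exist before the stage is created. The following sketch shows one possible definition; the delimiter, header, and quoting options are assumptions about the files, not settings taken from this article.

```sql
-- Hypothetical CSV format matching the name used in the stage definition.
CREATE FILE FORMAT my_csv_format
  TYPE = CSV
  FIELD_DELIMITER = ','
  SKIP_HEADER = 1
  FIELD_OPTIONALLY_ENCLOSED_BY = '"';

-- After creating the stage, confirm that Snowflake can see the files behind it.
LIST @<stage_name>;
```

If `LIST` returns an error or no rows, the usual suspects are the storage integration's allowed locations and the permissions granted in Azure.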

Once that’s done, you can load data from and unload data into Azure Containers.

Loading and unloading data in Azure Containers

Once the Azure Container external stage is created, you can use the COPY INTO command for bulk-loading the data into a table, as shown in the SQL statement below:

COPY INTO <table_name>

FROM @<stage_name>

PATTERN = '.*address.*[.]csv';

The pattern is a regular expression that matches every file whose path contains address and ends in .csv, such as address.part001.csv, address.part002.csv, and so on. Snowflake loads the matching files in parallel into the specified table.
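Before running a large load, it can help to validate the files first. The sketch below reuses the placeholders from the statement above and shows two standard COPY options: `VALIDATION_MODE` for a dry run and `ON_ERROR` to control what happens when a file has bad records. The choice of `SKIP_FILE` is an assumption; pick the behavior that suits your data.

```sql
-- Dry run: report parsing errors without loading any rows.
COPY INTO <table_name>
  FROM @<stage_name>
  PATTERN = '.*address.*[.]csv'
  VALIDATION_MODE = RETURN_ERRORS;

-- Actual load: skip any file that contains bad records.
COPY INTO <table_name>
  FROM @<stage_name>
  PATTERN = '.*address.*[.]csv'
  ON_ERROR = 'SKIP_FILE';
```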

You don’t necessarily have to create a separate stage for unloading data. Still, it’s considered best practice to create an unload directory to unload the data from a table, as shown in the SQL statement below:

COPY INTO @<stage_name>/unload/ FROM address;

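After this, you can download the unloaded files. Note that the GET statement works only with internal stages. For an Azure external stage, the files are already in your container, so you download them with Azure tools such as the Azure portal or AzCopy. For an internal stage, you can run GET to copy the files into a local directory (here, a hypothetical /tmp/unload/):

GET @mystage/unload/address.csv.gz file:///tmp/unload/;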
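Unloads are easier to consume when the output format is explicit. The following sketch assumes the same `address` table and writes gzip-compressed CSV files with a header row; the `address_` file prefix is a hypothetical choice.

```sql
-- Unload the address table as gzip-compressed CSV files with a header row.
COPY INTO @<stage_name>/unload/address_
  FROM address
  FILE_FORMAT = (TYPE = CSV COMPRESSION = GZIP)
  HEADER = TRUE
  OVERWRITE = TRUE;

-- List what was written to the unload directory.
LIST @<stage_name>/unload/;
```

Snowflake may split the output into several files, so downstream consumers should read the whole prefix rather than a single file name.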

Loading the contents of staged files into Snowflake - Source: Snowflake.

The Snowflake + Azure storage integration also allows you to run queries on an external stage without getting the data into Snowflake. To do this, you’ll need to create external tables in Snowflake, which collect table-level metadata and store that in Snowflake’s data catalog.

When you create external tables that refer to an Azure Container external stage, you can set that external table to refresh automatically using Azure Event Grid events from your Azure Container.
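As an illustration, here is a minimal sketch of an external table over CSV files in the stage. The table name `ext_address` and the columns `street` and `city` are hypothetical. Auto-refresh is turned off so the example does not depend on Event Grid being configured; the table is refreshed manually instead.

```sql
-- External table over staged CSV files. For CSV, each row is exposed as
-- VALUE with fields c1, c2, ... that you cast into named columns.
CREATE OR REPLACE EXTERNAL TABLE ext_address (
  street VARCHAR AS (VALUE:c1::VARCHAR),
  city   VARCHAR AS (VALUE:c2::VARCHAR)
)
  WITH LOCATION = @<stage_name>/
  AUTO_REFRESH = FALSE
  PATTERN = '.*address.*[.]csv'
  FILE_FORMAT = (TYPE = CSV SKIP_HEADER = 1);

-- Pick up new or removed files manually.
ALTER EXTERNAL TABLE ext_address REFRESH;

-- Query the files in place, without loading them into a Snowflake table.
SELECT street, city FROM ext_address LIMIT 10;
```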

Can you use Snowpipe with Azure Containers?

Snowpipe is a managed micro-batching data loading service offered by Snowflake. As with bulk loading from Azure Containers, you can load data in micro-batches using Snowpipe. The Snowpipe + Azure integration also builds on the storage integration and external stage constructs in Snowflake.

To trigger Snowpipe data loads, you can use Azure Event Grid messages for Blob Storage events. An additional step here is to create a notification integration using the following SQL statement:

CREATE NOTIFICATION INTEGRATION <notification_integration_name>

TYPE = QUEUE

NOTIFICATION_PROVIDER = AZURE_STORAGE_QUEUE

AZURE_STORAGE_QUEUE_PRIMARY_URI = '<queue_URL>'

AZURE_TENANT_ID = '<directory_ID>'

ENABLED = TRUE;

Next, create a pipe that references the notification integration, using the following SQL statement:

CREATE PIPE <pipe_name>

AUTO_INGEST = TRUE

INTEGRATION = '<notification_integration_name>'

AS

<copy_statement>;
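To make the template concrete, the sketch below fills in a COPY statement and adds two commands for checking the pipe. The pipe name `address_pipe` is hypothetical, and the integration name is written in uppercase inside the quotes.

```sql
-- Pipe that loads new address files as Event Grid notifications arrive.
CREATE PIPE address_pipe
  AUTO_INGEST = TRUE
  INTEGRATION = '<NOTIFICATION_INTEGRATION_NAME>'
  AS
  COPY INTO <table_name>
    FROM @<stage_name>
    PATTERN = '.*address.*[.]csv';

-- Check that the pipe is running and how many files are pending.
SELECT SYSTEM$PIPE_STATUS('address_pipe');

-- Queue files that were staged before the pipe existed.
-- This only considers files staged within the last 7 days.
ALTER PIPE address_pipe REFRESH;
```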

Can you use Azure Functions with Snowflake?

In addition to external tables where you don’t have to move your data into Snowflake, you can also use external functions where you don’t have to move your business logic into Snowflake.

An external function calls a remote service, which lets you offload certain parts of your data platform to services in Azure. The most prominent of these is Azure Functions, the serverless compute option in Azure.

To integrate Snowflake with Azure Functions, you have to create an API integration, as shown in the SQL statement below:

CREATE OR REPLACE API INTEGRATION <api_integration_name>

API_PROVIDER = azure_api_management

AZURE_TENANT_ID = '<azure_tenant_id>'

AZURE_AD_APPLICATION_ID = '<azure_application_id>'

API_ALLOWED_PREFIXES = ('https://')

ENABLED = TRUE;

Snowflake uses Azure API Management service as a proxy service to send requests for Azure Function invocation, which is why you have the API_PROVIDER listed as azure_api_management.
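With the API integration in place, the external function itself is a thin SQL wrapper around the API Management URL. The following is a minimal sketch: the function name `tokenize_value`, the `customers` table, and the URL placeholders are all hypothetical, and the URL has to fall under one of the prefixes allowed by the integration.

```sql
-- External function that forwards each value to an Azure Function
-- through Azure API Management.
CREATE OR REPLACE EXTERNAL FUNCTION tokenize_value(v VARCHAR)
  RETURNS VARIANT
  API_INTEGRATION = <api_integration_name>
  AS 'https://<apim_service_name>.azure-api.net/<url_suffix>';

-- Call it like any other scalar function.
SELECT tokenize_value(email) FROM customers LIMIT 10;
```

Snowflake sends rows to the remote service in batches, so the Azure Function needs to accept and return a batch of rows instead of a single value.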

Several use cases exist for external functions to enrich, clean, protect, and mask data. You can call other third-party APIs from these external functions or write your code within the external function.

A typical example of external functions in Snowflake is external tokenization for data masking, where you can use Azure Functions as the tokenizer.
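A rough sketch of how this can look in SQL: a masking policy calls an external function to detokenize a column for privileged roles only. It assumes an external function named `detokenize_email` already exists and returns a VARIANT; the role name `ANALYST` and the `customers.email` column are hypothetical. Masking policies require an edition of Snowflake that supports them.

```sql
-- Return the detokenized value only to the ANALYST role;
-- everyone else sees the stored token.
-- detokenize_email is a hypothetical external function backed by Azure Functions.
CREATE MASKING POLICY email_detokenize AS (val STRING) RETURNS STRING ->
  CASE
    WHEN CURRENT_ROLE() IN ('ANALYST') THEN detokenize_email(val)::STRING
    ELSE val
  END;

-- Attach the policy to the tokenized column.
ALTER TABLE customers MODIFY COLUMN email
  SET MASKING POLICY email_detokenize;
```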

How to connect Snowflake to Power BI Desktop for visualization

Microsoft Power BI is one of the most widely used enterprise BI tools. Chances are, if you are already using Azure, you’ll be using Power BI too. The great thing about Snowflake’s integration with Power BI is that it provides native OAuth 2.0 SSO connectivity.

So, you can log into Snowflake directly from Power BI without the need for an on-prem Power BI Gateway, which simplifies the connectivity a great deal without compromising on security.

The Snowflake + Power BI integration relies upon a security integration, which you can set up using the following SQL statement:

CREATE SECURITY INTEGRATION <security_integration_name>

TYPE = external_oauth

EXTERNAL_OAUTH_TYPE = azure

EXTERNAL_OAUTH_ISSUER = '<azure_ad_issuer>'

EXTERNAL_OAUTH_JWS_KEYS_URL = 'https://login.windows.net/common/discovery/keys'

EXTERNAL_OAUTH_AUDIENCE_LIST = ('https://analysis.windows.net/powerbi/connector/Snowflake')

EXTERNAL_OAUTH_TOKEN_USER_MAPPING_CLAIM = 'upn'

EXTERNAL_OAUTH_SNOWFLAKE_USER_MAPPING_ATTRIBUTE = 'login_name'

ENABLED = TRUE;
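Because the integration maps the `upn` claim to `login_name`, SSO only works when each Snowflake user's login name matches the user principal name in Azure. A minimal sketch for checking and aligning this, using a hypothetical user and address:

```sql
-- Review the integration's settings.
DESC SECURITY INTEGRATION <security_integration_name>;

-- Make the Snowflake login name match the user's UPN in Azure.
-- The address below is a made-up example.
ALTER USER <user_name> SET LOGIN_NAME = 'jane.doe@example.com';
```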

Summary

Snowflake has been available on Azure since 2018 and offers a powerful option for teams already invested in the Microsoft ecosystem. With support for Azure Blob Storage, Event Grid, Azure Functions, and native Power BI connectivity, you can efficiently stage, load, process, and visualize data across platforms.

By combining Snowflake’s governance features with services like Azure Data Catalog and Microsoft Purview, you can build a metadata-rich data stack. This metadata can then be integrated into Atlan, a metadata control plane for data, metadata, and AI, to improve discovery, governance, and collaboration across your enterprise.

You can learn more about the Snowflake + Azure partnership from Snowflake’s official website and the Snowflake + Atlan partnership here.

Snowflake on Azure: Frequently asked questions (FAQs)

1. Is Snowflake available on Microsoft Azure?

Yes. Snowflake has been generally available on Azure since mid-2018 and now operates in 16 Azure regions, including select SnowGov regions in the US.

2. How do you deploy Snowflake on Azure?

To deploy Snowflake on Azure, you simply select Azure as your cloud provider when creating your Snowflake account. From there, you can configure your region, connect Azure Blob Storage, set up external stages, and use services like Snowpipe or Azure Functions depending on your workload needs.

3. Does Snowflake support Azure Blob Storage for data loading?

Yes. You can use Azure Blob Storage (including Data Lake Storage Gen2) to stage, load, and unload data in Snowflake via external stages and COPY INTO commands.

4. Can Snowflake automatically ingest data from Azure Containers?

Yes. You can use Snowpipe with Azure Event Grid to set up automated, micro-batch ingestion of files as they land in Azure Blob Storage.

5. What types of Azure services integrate with Snowflake?

Snowflake integrates with Azure services such as Azure Functions (for external business logic), Event Grid (for Snowpipe triggers), and Azure Active Directory (for OAuth-based authentication).

6. How do I visualize Snowflake data in Power BI?

You can connect Snowflake to Power BI Desktop using OAuth 2.0 SSO, without requiring a Power BI Gateway, making it secure and easy to use in Azure-native environments.

7. Does Snowflake support external tables with Azure Containers?

Yes. You can query data stored in Azure Blob Storage using external tables, allowing you to analyze data without physically moving it into Snowflake.

8. Can I use Snowflake’s Business Critical Edition on Azure?

Yes. If your business requires the highest level of data security and protection in the cloud, you should use Snowflake’s Business Critical Edition. This edition comes compliance-ready for regulations like HIPAA and HITRUST CSF.

Moreover, you can use the Azure Private Link service to enable private networking between Snowflake and the rest of your Azure infrastructure.