Data profiling is the process of analyzing data sets systematically to evaluate their quality, structure, and content. It identifies anomalies, missing values, and patterns, ensuring data accuracy and consistency.

See How Atlan Automates Data Quality at Scale ➜

This process provides insights into metadata, validates data integrity, and supports data governance.

Organizations use data profiling to enhance decision-making and improve business analytics. It also facilitates data standardization and compliance with industry regulations.

By automating data profiling, companies can efficiently handle large datasets and maintain data quality across systems.

Data profiling explained

Permalink to “Data profiling explained”Data profiling is the systematic process of determining and recording the characteristics of data sets. We can also think of it as building a metadata catalog that summarizes the essential characteristics.

Watch Atlan in Action: Data Quality at Scale ➜

According to Gartner, this involves analyzing data sources and collecting metadata on the condition of data, so that the data steward can investigate the origin of data errors. As a result, you can discover and investigate data quality issues, such as duplication, lack of consistency, and lack of accuracy and completeness.

Kirk Borne from DataPrime says, Data profiling is like “going on a first date with your data.”

You sit across from your computer screen, gazing at your data tooling, wondering if this new dataset is compatible with your project goals and whether there will be any red flags.

That’s where data profiling can help. We start our relationship with a new dataset by profiling it — dedicating some time to get to know it.

According to Gartner D&A and AI predictions through 2030, 80% of organizations will initiate data profiling activities to improve the quality of their data assets.

There are many tools available to profile data. However, it’s important to remember the end goal — build a useful overview of your dataset with the information you need to understand its origins and trajectory through your systems.

Data profiling is often confused with data mining or data cleansing. So, before we proceed, let’s explore the differences.

Data profiling vs. data mining

Permalink to “Data profiling vs. data mining”Although data profiling has some overlaps with data mining, the end goals are different.

Gartner defines data mining as the process of discovering meaningful correlations, patterns and trends by analyzing data. Meanwhile, data profiling helps in the understanding of data and its characteristics to ensure its completeness.

An MIT survey on data profiling highlights the difference between data profiling and data mining as follows:

Data profiling produces a summary of data characteristics, whereas data mining aims to discover useful but non-obvious insights from the data. So, while data profiling supports or enables the use of the data, data mining is the use of the data.

Data profiling vs. data cleansing

Permalink to “Data profiling vs. data cleansing”Data cleansing is the process of finding and dealing with problematic data points within a data set. It can include:

- Revisiting the original data sources for clarification

- Removing dubious records

- Deciding how to handle missing values

However, data cleansing is useful when you know which data must be checked.

Watch Context Studio Demo

According to Experian, bad data lurks in our databases undetected and unaddressed until we shine a spotlight on that data using a specific SQL query. That’s where data profiling comes in handy, as it can analyze vast quantities of data for completeness, inconsistencies, errors, anomalies, and more.

So, a thorough data profiling process usually reveals some aspects of our data that need fixing. That’s why you can think of data cleansing as a use case of data profiling.

Data profiling: Benefits & use cases

Permalink to “Data profiling: Benefits & use cases”According to a SIGMOD research paper, in addition to data cleansing, data profiling has several use cases, such as:

- Query optimization

- Data integration

- Scientific data management

- Data analytics

- Project management

- Data discovery

Let’s explore each use case.

Query optimization

Permalink to “Query optimization”Data profiling provides information on the characteristics of a database, such as rows, columns, average values, and more. Statistics about each database can help you estimate the query design, considerations, and implementation plan. As a result, you can optimize your queries for better performance.

Data integration

Permalink to “Data integration”To integrate multiple datasets, we first need to understand the datasets and their relationships. This is crucial to understand how to link datasets, what’s the best way to link them, do you need to take into account different conventions such as name or unit of measurement, and so on.

Scientific data management

Permalink to “Scientific data management”Before importing raw data into your databases, it’s important to understand the nature of that data. That’s where data profiling can help. After profiling these datasets, you can develop a plan to extract that data and adopt the appropriate schema.

Data analytics

Permalink to “Data analytics”Any analysis or data mining starts with data profiling. Data profiling gives an initial high-level understanding of the dataset and its characteristics so that you can choose the right algorithms.

For example, clustering algorithms might need values in numerical columns to fall in similar number ranges. You can use the statistical summary from profiling to check which columns must be transformed.

Project management

Permalink to “Project management”Taking data-driven decisions requires a solid understanding of the data you have, and the information you need for the project. With data profiling, you can take stock of your data, its quality, completeness, and credibility. You can also determine whether you have all the data you need to make your project work.

Data discovery

Permalink to “Data discovery”Having data available to be used broadly across an organization requires that data be easily accessible, searchable, and understandable. Data profiling can help by enabling you to compile the metadata needed, along with descriptive summaries and metrics for better context.

The 2024 Thales Data Threat Report indicates that 28% of organizations experienced a cyber attack, with those having robust compliance processes being less likely to suffer breaches.

Data profiling in action: An example from the healthcare industry

Permalink to “Data profiling in action: An example from the healthcare industry”Data profiling plays a critical role in ensuring data quality. In healthcare, data profiling can help improve the quality and accuracy of patient data. Bad data can lead to inefficiencies, patient frustration, and even patient mistreatment.

According to The Office of the National Coordinator for Health IT, healthcare organizations should profile their data to:

- Finalize the design of a master data store

- Assess the state of data

- Develop critical metrics and standards for data accuracy, credibility, and use

- Set up a new system for EHR, patient registration, billing, and more

- Connect to a health information exchange

How can you do data profiling: Techniques & approach

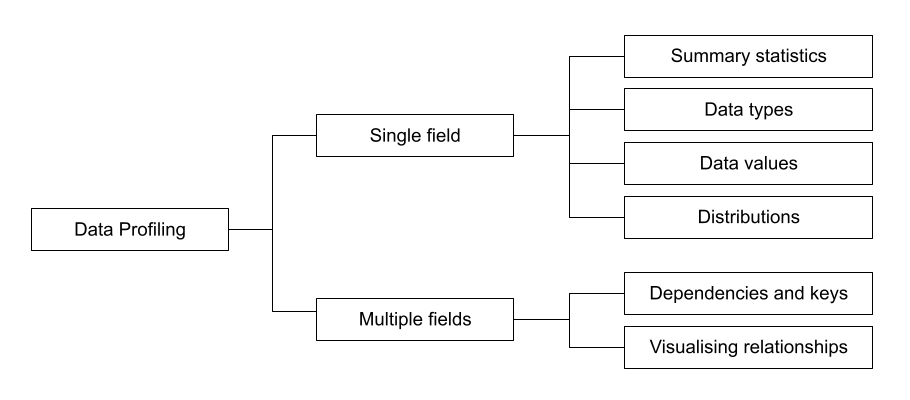

Permalink to “How can you do data profiling: Techniques & approach”According to Felix Naumann’s “An Introduction to Data Profiling”, data profiling can be done using single or multiple fields.

Data profiling techniques

Single field profiling is the most basic form of profiling that assumes all fields are of the same type and share common properties. This type of profiling helps you discover:

- Summary statistics: This includes count of data and mathematical aggregations such as maximum, minimum, and mean values.

- Data types: This involves determining whether the data is categorical, continuous, and exhibits any patterns. Simple data types include strings, numbers, and timestamps, whereas more complex types include XML and JSON.

- Data values: This means identifying the characteristics and patterns in data values. Examples include address fields, cities, ID strings, and more. Profiling data values also helps you assess your data against known business rules. For instance, if you know that only students from South America can apply for a specific category of funding, you can check for that condition in your data.

- Distributions: Visualizing data distribution is useful in spotting outliers. For categorical data, you can see counts per category. Meanwhile, for numerical data, you can plot histograms and note characteristics like skewness, the number of modes, and presence of outliers.

Meanwhile, multi-field profiling explores the relationship between fields to discover:

- Inclusion dependencies, keys, and functional dependencies: With profiling, you can find out if the values in one field are a subset of values in other fields. This helps you add dimensions to your data set.

- Visualize numerical relationships: Profiling helps explore the relationships between numerical fields using pair plots, cross-correlation heat maps, or tables of correlations between fields. These visualizations provide a quick overview of the relationships each data set has with other assets.

When should data profiling happen?

Permalink to “When should data profiling happen?”Ralph Kimball argues that data profiling should happen right at the beginning of a data project. This can help you evaluate whether the project is viable. You get an early view of the data and a taste of the problems that may occur further into a project.

It also helps you find dirty data and analyze data quality so that you set up proper processes at the beginning. Catching problems early can lead to significant savings in time and more robust data projects.

Here are some of the other benefits of profiling data at the beginning:

- Spot and map data transformations and quality improvement

- Identify unexpected behavior that needs addressing at a business process or ETL system design level

- Predict potential ETL issues and set up contingencies if needed

Kimball also highlights how “data profiling makes the implementation team look like they know what they’re doing. By correctly anticipating the difficult data quality issues of a project upfront, the team avoids the embarrassing surprise of discovering big problems near the end of the project.”

How organizations making the most out of their data using Atlan

Permalink to “How organizations making the most out of their data using Atlan”The recently published Forrester Wave report compared all the major enterprise data catalogs and positioned Atlan as the market leader ahead of all others. The comparison was based on 24 different aspects of cataloging, broadly across the following three criteria:

- Automatic cataloging of the entire technology, data, and AI ecosystem

- Enabling the data ecosystem AI and automation first

- Prioritizing data democratization and self-service

These criteria made Atlan the ideal choice for a major audio content platform, where the data ecosystem was centered around Snowflake. The platform sought a “one-stop shop for governance and discovery,” and Atlan played a crucial role in ensuring their data was “understandable, reliable, high-quality, and discoverable.”

For another organization, Aliaxis, which also uses Snowflake as their core data platform, Atlan served as “a bridge” between various tools and technologies across the data ecosystem. With its organization-wide business glossary, Atlan became the go-to platform for finding, accessing, and using data. It also significantly reduced the time spent by data engineers and analysts on pipeline debugging and troubleshooting.

A key goal of Atlan is to help organizations maximize the use of their data for AI use cases. As generative AI capabilities have advanced in recent years, organizations can now do more with both structured and unstructured data—provided it is discoverable and trustworthy, or in other words, AI-ready.

Tide’s Story of GDPR Compliance: Embedding Privacy into Automated Processes

Permalink to “Tide’s Story of GDPR Compliance: Embedding Privacy into Automated Processes”- Tide, a UK-based digital bank with nearly 500,000 small business customers, sought to improve their compliance with GDPR’s Right to Erasure, commonly known as the “Right to be forgotten”.

- After adopting Atlan as their metadata platform, Tide’s data and legal teams collaborated to define personally identifiable information in order to propagate those definitions and tags across their data estate.

- Tide used Atlan Playbooks (rule-based bulk automations) to automatically identify, tag, and secure personal data, turning a 50-day manual process into mere hours of work.

Book your personalized demo today to find out how Atlan can help your organization in establishing and scaling data governance programs.

Conclusion

Permalink to “Conclusion”Data profiling is a critically important step in any data management or analytics project. So, it should come at the beginning so that you can provide an accurate project timeline estimate, ensure the availability of high-quality data, and enable data-driven decisions.

As Kirk Borne highlights, data profiling is the best path to “knowing thy data”. To know more about data management, check out this article on the four key things to grasp.

FAQs about Data Profiling

Permalink to “FAQs about Data Profiling”1. What is data profiling?

Permalink to “1. What is data profiling?”Data profiling is the systematic process of analyzing and summarizing data sets to understand their structure, content, and quality. It helps identify trends, patterns, and anomalies, providing valuable insights into data for effective decision-making.

2. How does data profiling improve data quality?

Permalink to “2. How does data profiling improve data quality?”By analyzing data for inconsistencies, missing values, and duplications, data profiling helps maintain high data quality. This process ensures that datasets are reliable, consistent, and fit for their intended purpose.

3. How can I automate data profiling?

Permalink to “3. How can I automate data profiling?”Automation in data profiling can be achieved through advanced tools that support scheduled data scans, AI-driven insights, and integration with data pipelines. This reduces manual effort and ensures real-time monitoring of data quality.

4. What are the benefits of data profiling?

Permalink to “4. What are the benefits of data profiling?”Data profiling improves data quality, enhances data governance, and supports better decision-making. It helps organizations maintain regulatory compliance, optimize business processes, and improve analytics outcomes.

5. How does data profiling fit within data governance?

Permalink to “5. How does data profiling fit within data governance?”Data profiling plays a crucial role in data governance by providing accurate insights into data assets. It supports the creation of policies and standards for data usage, ensuring consistency, compliance, and security across the organization.